Learning From Observations

Learning From Observations. “In which we describe agents that can improve their behavior through diligent study of their own experiences.” - Artificial Intelligence: A Modern Approach. Prepared by: San Chua, Natalie Weber, Henry Kwong.

Presentation Transcript

Learning From Observations “In which we describe agents that can improve their behavior through diligent study of their own experiences.” -Artificial Intelligence: A Modern Approach Prepared by: San Chua, Natalie Weber, Henry Kwong

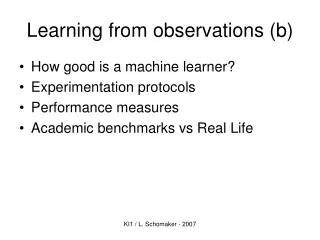

Outline • Learning agents • Inductive learning • Learning decision trees • Example of a decision tree • Decision-tree-learning algorithm • Assessing the performance • Learning general logical descriptions • Current-best hypothesis search algorithm • Version space learning algorithm • Computational learning theory • Summary

Learning Agent • Four Components • Performance Element: collection of knowledge and procedures to decide on the next action. E.g. walking, turning, drawing, etc. • Learning Element: takes in feedback from the critic and modifies the performance element accordingly.

Learning Agent (cont’d) • Critic: provides the learning element with information on how well the agent is doing, based on a fixed performance standard. E.g. the audience • Problem Generator: provides the performance element with suggestions on new actions to take.

Designing a Learning Element • Depends on the design of the performance element • Four major issues • Which components of the performance element to improve • The representation of those components • Available feedback • Prior knowledge

Components of the Performance Element • A direct mapping from conditions on the current state to actions • Information about the way the world evolves • Information about the results of possible actions the agent can take • Utility information indicating the desirability of world states

Representation • A component may be represented using different representation schemes • Details of the learning algorithm will differ depending on the representation, but the general idea is the same • Functions are used to describe a component

Feedback & Prior Knowledge • Supervised learning: inputs and outputs available • Reinforcement learning: evaluation of action • Unsupervised learning: no hint of correct outcome • Background knowledge is a tremendous help in learning

Inductive Learning • Key idea: • To use specific examples to reach general conclusions • Given a set of examples, the system tries to approximate the evaluation function. • Also called Pure Inductive Inference

Recognizing Handwritten Digits • [Figure: a learning agent and its training examples]

Recognizing Handwritten Digits Different variations of handwritten 3’s

Bias • Bias: any preference for one hypothesis over another, beyond mere consistency with the examples. • Since there are almost always a large number of possible consistent hypotheses, all learning algorithms exhibit some sort of bias.

Example of Bias Is this a 7 or a 1? Some may be more biased toward 7 and others more biased toward 1.

Formal Definitions • Example: a pair (x, f(x)), where • x is the input, • f(x) is the output of the function applied to x. • Hypothesis: a function h that approximates f, given a set of examples.

Task of Induction • The task of induction: Given a set of examples, find a function h that approximates the true evaluation function f.
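A minimal sketch of the induction task, assuming a tiny hand-picked hypothesis space (the four candidate functions and the example pairs below are illustrative, not from the slides). It shows that a small set of examples can leave several consistent hypotheses, which is where bias comes in, and that more examples narrow the choice:

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[int, int]  # a pair (x, f(x))

# Hypothetical hypothesis space: a few simple functions of one integer.
HYPOTHESES: Dict[str, Callable[[int], int]] = {
    "h(x) = x": lambda x: x,
    "h(x) = x^2": lambda x: x * x,
    "h(x) = 2x": lambda x: 2 * x,
    "h(x) = x^3": lambda x: x ** 3,
}


def consistent_hypotheses(examples: List[Example]) -> List[str]:
    """Return the names of all hypotheses that agree with every example."""
    return [
        name
        for name, h in HYPOTHESES.items()
        if all(h(x) == fx for x, fx in examples)
    ]


few = [(0, 0), (1, 1)]   # two examples of the unknown f
more = few + [(2, 4)]    # one additional example

print(consistent_hypotheses(few))   # several candidates remain
print(consistent_hypotheses(more))  # only one candidate remains
```

With only the first two examples, three of the four candidates are still consistent, so any learner that returns a single h must prefer one of them for some reason other than the data.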

Decision Tree Example • Goal predicate: Will wait for a table? • [Figure: decision tree with tests Patrons? (none, some, full), WaitEst? (0-10, 10-30, 30-60, >60), Alternate?, Hungry?, Reservation? and Fri/Sat?, ending in Yes/No leaves] http://www.cs.washington.edu/education/courses/473/99wi/

Logical Representation of a Path • [Figure: the path Patrons? = full, WaitEst? = 10-30, Hungry? = yes, ending in the leaf Yes] • ∀r [Patrons(r, full) ∧ Wait_Estimate(r, 10-30) ∧ Hungry(r, yes)] ⇒ Will_Wait(r)
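The path above reads as a conjunction of attribute tests that implies the goal predicate. A small sketch, assuming a restaurant is represented as a dictionary (the key names and the function name `path_applies` are hypothetical):

```python
from typing import Dict


def path_applies(restaurant: Dict[str, str]) -> bool:
    """True when every test on the path holds, so the path concludes Will_Wait."""
    return (
        restaurant["Patrons"] == "full"
        and restaurant["WaitEstimate"] == "10-30"
        and restaurant["Hungry"] == "yes"
    )


r = {"Patrons": "full", "WaitEstimate": "10-30", "Hungry": "yes"}
print(path_applies(r))
```

The implication runs one way only: when the function returns False, this path says nothing, and some other path of the tree decides the answer.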

Expressiveness of Decision Trees • Any Boolean function can be written as a decision tree • Limitations • Can only describe one object at a time. • Some functions require an exponentially large decision tree. • E.g. Parity function, majority function • Decision trees are good for some kinds of functions, and bad for others. • There is no one efficient representation for all kinds of functions.

Principle Behind the Decision-Tree-Learning Algorithm • Uses a general principle of inductive learning often called Ockham’s razor: “The most likely hypothesis is the simplest one that is consistent with all observations.”

Decision-Tree-Learning Algorithm • Goal: Find a relatively small decision tree that is consistent with all training examples and is likely to classify new examples correctly. • Finding the smallest decision tree is an intractable problem, so the decision-tree-learning algorithm uses simple heuristics to find a “smallish” one.

Getting Started • Come up with a set of attributes to describe the object or situation. • Collect a complete set of examples (training set) from which the decision tree can derive a hypothesis to define (answer) the goal predicate.

The Restaurant Domain Will we wait, or not? http://www.cs.washington.edu/education/courses/473/99wi/

Splitting Examples by Testing on Attributes + X1, X3, X4, X6, X8, X12 (Positive examples) - X2, X5, X7, X9, X10, X11 (Negative examples)

Splitting Examples by Testing on Attributes (cont’d) + X1, X3, X4, X6, X8, X12 (Positive examples) - X2, X5, X7, X9, X10, X11 (Negative examples) Split on Patrons? • none: + (none), - X7, X11 • some: + X1, X3, X6, X8, - (none) • full: + X4, X12, - X2, X5, X9, X10

Splitting Examples by Testing on Attributes (cont’d) + X1, X3, X4, X6, X8, X12 (Positive examples) - X2, X5, X7, X9, X10, X11 (Negative examples) Split on Patrons? • none: + (none), - X7, X11, so the leaf is No • some: + X1, X3, X6, X8, - (none), so the leaf is Yes • full: + X4, X12, - X2, X5, X9, X10

Splitting Examples by Testing on Attributes (cont’d) + X1, X3, X4, X6, X8, X12 (Positive examples) - X2, X5, X7, X9, X10, X11 (Negative examples) Split on Patrons? • none: + (none), - X7, X11, so the leaf is No • some: + X1, X3, X6, X8, - (none), so the leaf is Yes • full: + X4, X12, - X2, X5, X9, X10, split again on Hungry? • Hungry? = yes: + X4, X12, - X2, X10 • Hungry? = no: + (none), - X5, X9

What Makes a Good Attribute? • Better attribute: Patrons? • none: + (none), - X7, X11 • some: + X1, X3, X6, X8, - (none) • full: + X4, X12, - X2, X5, X9, X10 • Not as good an attribute: Type? • French: + X1, - X5 • Italian: + X6, - X10 • Thai: + X4, X8, - X2, X11 • Burger: + X3, X12, - X7, X9 http://www.cs.washington.edu/education/courses/473/99wi/
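One way to make "better attribute" concrete is information gain, the measure used in Russell and Norvig's version of the algorithm. The slides do not name the heuristic, so treat this as an assumed choice; the function names are illustrative. The counts are the positive and negative examples in each branch of the two splits above:

```python
from math import log2
from typing import Dict, Tuple


def entropy(pos: int, neg: int) -> float:
    """Entropy in bits of a set with pos positive and neg negative examples."""
    total = pos + neg
    result = 0.0
    for count in (pos, neg):
        if count:  # treat 0 * log2(0) as 0
            p = count / total
            result -= p * log2(p)
    return result


def information_gain(split: Dict[str, Tuple[int, int]]) -> float:
    """Entropy before the split minus the weighted entropy after it."""
    pos = sum(p for p, _ in split.values())
    neg = sum(n for _, n in split.values())
    total = pos + neg
    remainder = sum((p + n) / total * entropy(p, n) for p, n in split.values())
    return entropy(pos, neg) - remainder


# (positive count, negative count) per branch, read off the slide.
patrons = {"none": (0, 2), "some": (4, 0), "full": (2, 4)}
food_type = {"French": (1, 1), "Italian": (1, 1), "Thai": (2, 2), "Burger": (2, 2)}

print(f"Patrons gain: {information_gain(patrons):.3f} bits")
print(f"Type gain: {abs(information_gain(food_type)):.3f} bits")
```

Patrons scores roughly 0.541 bits because two of its branches are pure, while Type scores essentially zero because every branch keeps the same even mix of positive and negative examples as the full set.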

Final Decision Tree
Patrons?
  none: No
  some: Yes
  full: Hungry?
    no: No
    yes: Type?
      French: Yes
      Italian: No
      Thai: Fri/Sat?
        no: No
        yes: Yes
      Burger: Yes
http://www.cs.washington.edu/education/courses/473/99wi/
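A sketch of how such a tree can be stored and used to classify an example. The branch structure below is assumed to follow the learned restaurant tree as given in Russell and Norvig; the nested-tuple encoding and the names `FINAL_TREE` and `classify` are illustrative choices:

```python
from typing import Dict, Tuple, Union

# A tree is either a leaf label ("Yes"/"No") or (attribute, {value: subtree}).
Tree = Union[str, Tuple[str, Dict[str, "Tree"]]]

# Assumed structure of the learned tree for the restaurant domain.
FINAL_TREE: Tree = ("Patrons", {
    "none": "No",
    "some": "Yes",
    "full": ("Hungry", {
        "no": "No",
        "yes": ("Type", {
            "French": "Yes",
            "Italian": "No",
            "Thai": ("Fri/Sat", {"no": "No", "yes": "Yes"}),
            "Burger": "Yes",
        }),
    }),
})


def classify(tree: Tree, example: Dict[str, str]) -> str:
    """Follow the branch matching each tested attribute until a leaf is reached."""
    while not isinstance(tree, str):
        attribute, branches = tree
        tree = branches[example[attribute]]
    return tree


example = {"Patrons": "full", "Hungry": "yes", "Type": "Thai", "Fri/Sat": "yes"}
print(classify(FINAL_TREE, example))
```

Only the attributes on the chosen path are consulted, so an example with Patrons = some is classified without looking at any other attribute.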

Original Decision Tree Example • Goal predicate: Will wait for a table? • [Figure: the original decision tree from the earlier example, with tests Patrons?, WaitEst?, Alternate?, Hungry?, Reservation? and Fri/Sat?, repeated for comparison with the final tree] http://www.cs.washington.edu/education/courses/473/99wi/

Assessing the Performance of the Learning Algorithm • A learning algorithm is good if it produces hypotheses that do a good job of predicting the classifications of unseen examples. • Test the algorithm’s prediction performance on a set of new examples, called a test set.

Methodology in Assessing Performance 1. Collect a large set of examples. 2. Divide it into two disjoint sets: the training set and the test set. It is very important that these two sets are separate so that the algorithm doesn’t cheat. Usually this division of examples is done randomly. 3. Use the learning algorithm with the training set as examples to generate a hypothesis H.

Methodology (cont’d) 4. Measure the percentage of examples in the test set that are correctly classified by H. 5. Repeat steps 1 to 4 for different sizes of training sets and different randomly selected training sets of each size.
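The steps above can be sketched as a small evaluation loop. This is a minimal sketch under stated assumptions: the example data is synthetic, `majority_learner` is a deliberately trivial stand-in for a real learning algorithm, and all names are hypothetical:

```python
import random
from collections import Counter
from typing import Callable, Dict, List, Sequence, Tuple

Example = Tuple[Tuple[int, ...], bool]          # (input, classification)
Hypothesis = Callable[[Tuple[int, ...]], bool]


def majority_learner(training_set: List[Example]) -> Hypothesis:
    """Placeholder learner: always predict the most common training label."""
    most_common = Counter(label for _, label in training_set).most_common(1)[0][0]
    return lambda x: most_common


def accuracy(h: Hypothesis, test_set: List[Example]) -> float:
    """Step 4: fraction of test examples that h classifies correctly."""
    return sum(h(x) == y for x, y in test_set) / len(test_set)


def learning_curve(
    examples: List[Example],
    learn: Callable[[List[Example]], Hypothesis],
    sizes: Sequence[int],
    trials: int = 20,
    seed: int = 0,
) -> Dict[int, float]:
    """Step 5: average test accuracy for each training-set size."""
    rng = random.Random(seed)
    curve: Dict[int, float] = {}
    for size in sizes:
        if not 0 < size < len(examples):
            raise ValueError("each size must leave a non-empty training and test set")
        scores = []
        for _ in range(trials):
            shuffled = examples[:]
            rng.shuffle(shuffled)                  # step 2: random split
            training_set, test_set = shuffled[:size], shuffled[size:]
            h = learn(training_set)                # step 3: generate a hypothesis
            scores.append(accuracy(h, test_set))   # step 4: score on unseen data
        curve[size] = sum(scores) / trials
    return curve


# Synthetic data: 100 examples, three quarters of them positive.
examples = [((i,), i % 4 != 0) for i in range(100)]
curve = learning_curve(examples, majority_learner, sizes=[10, 50, 90])
print(curve)
```

Because the training and test slices come from the same shuffled list, they are disjoint by construction, which is the "no cheating" requirement in step 2. Plotting `curve` for a real learner gives the learning curve.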

Analyzing the Results • Learning curve for the decision tree algorithm on examples in the restaurant domain (a “happy graph”). • [Figure: % correct on test set, from 0.4 to 1.0, plotted against training set size, from 0 to 100] Source: “Artificial Intelligence: A Modern Approach”, Stuart Russell and Peter Norvig

Overfitting • Overfitting is what happens when a learning algorithm finds meaningless “regularity” in the data. • Caused by irrelevant attributes. • Solution: decision tree pruning. • The resulting decision tree is: • Smaller. • More tolerant to noise. • More accurate in its predictions.

Practical Uses of Decision Tree Learning • Designing oil platform equipment. • Learning to fly a plane. • Diagnosing heart attacks.

Learning General Logical Descriptions • Key idea: • Look at inductive learning generally • Find a logical description that is equivalent to the (unknown) evaluation function • Make our hypothesis more or less specific to match the evaluation function.

Current-best-hypothesis Search • Key idea: • Maintain a single hypothesis throughout. • Update the hypothesis to maintain consistency as a new example comes in.

Definitions • Positive example: an example for which the goal predicate is true • Negative example: an example for which the goal predicate is false • False negative example: the hypothesis predicts it should be a negative example but it is in fact positive • False positive example: the hypothesis predicts it should be a positive example but it is in fact negative.

Current-best-hypothesis Search Algorithm 1. Pick a random example to define the initial hypothesis 2. For each example, • In case of a false negative: • Generalize the hypothesis to include it • In case of a false positive: • Specialize the hypothesis to exclude it 3. Return the hypothesis

How to Generalize • Replacing Constants with Variables: Object(Animal,Bird) → Object(X,Bird) • Dropping Conjuncts: Object(Animal,Bird) & Feature(Animal,Wings) → Object(Animal,Bird) • Adding Disjuncts: Feature(Animal,Feathers) → Feature(Animal,Feathers) v Feature(Animal,Fly) • Generalizing Terms: Feature(Bird,Wings) → Feature(Bird,Primary-Feature) http://www.pitt.edu/~suthers/infsci1054/8.html

How to Specialize • Replacing Variables with Constants: Object(X,Bird) → Object(Animal,Bird) • Adding Conjuncts: Object(Animal,Bird) → Object(Animal,Bird) & Feature(Animal,Wings) • Dropping Disjuncts: Feature(Animal,Feathers) v Feature(Animal,Fly) → Feature(Animal,Fly) • Specializing Terms: Feature(Bird,Primary-Feature) → Feature(Bird,Wings) http://www.pitt.edu/~suthers/infsci1054/8.html

What do all these mean? • Let’s look at some examples...

Generalize and Specialize • Must be consistent with all other examples • Non-deterministic • At any point there may be several possible specializations or generalizations that can be applied.

Potential Problems of Current-best-hypothesis Search • The extensions made do not necessarily lead to the simplest hypothesis. • May lead to an unrecoverable situation where no simple modification of the hypothesis is consistent with all of the examples. • In that case the program must backtrack to a previous choice point.
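A simplified sketch of current-best-hypothesis search, under several assumptions that are not in the slides: hypotheses are restricted to conjunctions of attribute = value tests, generalization only drops conjuncts, specialization only adds one conjunct, the first example must be positive, and the non-deterministic choice is resolved by taking the first repair that works. Instead of backtracking, the sketch raises an error at the point where backtracking would be needed. All names and the example data are hypothetical:

```python
from typing import Dict, List, Optional, Tuple

Instance = Dict[str, str]
Example = Tuple[Instance, bool]   # (attribute values, is it a positive example?)
Hypothesis = Dict[str, str]       # conjunction of attribute == value tests


def predicts_positive(h: Hypothesis, x: Instance) -> bool:
    return all(x[a] == v for a, v in h.items())


def consistent(h: Hypothesis, examples: List[Example]) -> bool:
    return all(predicts_positive(h, x) == label for x, label in examples)


def generalize(h: Hypothesis, x: Instance, seen: List[Example]) -> Optional[Hypothesis]:
    """Drop every conjunct that the false negative x violates."""
    candidate = {a: v for a, v in h.items() if x[a] == v}
    return candidate if consistent(candidate, seen) else None


def specialize(h: Hypothesis, positive: Instance, seen: List[Example]) -> Optional[Hypothesis]:
    """Add one conjunct, taken from a known positive, that restores consistency."""
    for a, v in positive.items():
        if a not in h:
            candidate = {**h, a: v}
            if consistent(candidate, seen):
                return candidate
    return None


def current_best_hypothesis(examples: List[Example]) -> Hypothesis:
    first, first_label = examples[0]
    if not first_label:
        raise ValueError("this sketch assumes the first example is positive")
    attribute = next(iter(first))
    h: Hypothesis = {attribute: first[attribute]}   # initial one-conjunct guess
    seen: List[Example] = []
    for x, label in examples:
        seen.append((x, label))
        if predicts_positive(h, x) == label:
            continue                                 # already consistent
        repaired = generalize(h, x, seen) if label else specialize(h, first, seen)
        if repaired is None:
            raise RuntimeError("no consistent repair found; backtracking would be needed")
        h = repaired
    return h


examples = [
    ({"Alternate": "yes", "Patrons": "some", "Hungry": "yes"}, True),
    ({"Alternate": "yes", "Patrons": "full", "Hungry": "no"}, False),
    ({"Alternate": "no", "Patrons": "some", "Hungry": "no"}, True),
    ({"Alternate": "yes", "Patrons": "full", "Hungry": "yes"}, False),
]
print(current_best_hypothesis(examples))
```

On this data the hypothesis starts as Alternate = yes, is specialized to Alternate = yes and Patrons = some after the false positive, and is then generalized to Patrons = some after the false negative. Every repair is checked against all examples seen so far, which is the consistency requirement stated earlier.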