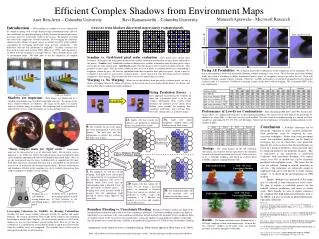

Efficient Complex Shadows from Environment Maps

Figure panel labels: scanline, no warping (SN); scanline, warping (SW); grid, no warping (GN); grid, warping (GW); fully shadow-traced; no cast shadows; with cast shadows.



Presentation Transcript

Shadows are important – Both images are illuminated by a sampled environment map (400 directional light sources). The image on the left is rendered without cast shadows. The image on the right is rendered accurately with cast shadows using our method. The rendering time to add shadows is reduced from 120 sec (with the full shadow-ray casting method) to 19 sec.

Figure labels: uncertainty (U); boundary (B); grid, no warp; uncertainty flooding; fully shadow-traced.

Figure caption (blockers a and b, scene points x1 and x2): Visibility for x1 is calculated by tracing shadow rays and finding points a and b. Visibility for x2 is predicted by warping blockers a and b to their positions in the light-space relative to x2.

Leveraging Coherence in Visibility by Reusing Calculations – Visibility for most scenes exhibits coherence in both the angular and spatial domains. By reusing calculations from nearby pixels (spatial) and combining knowledge of nearby lights (angular), we can accurately predict over 90% of the shadow rays in a ray-tracer without actually casting them. Here we see how the visibility for x2 is predicted by reusing blocker points a and b, discovered earlier when the visibility for x1 was computed. The visibility for x2 will be used in subsequent predictions at other locations.

“Many samples make for ‘light’ work.” – Environment maps can be represented as a set of directional lights. Recent work, such as [Agarwal et al. 2003], has shown that high dynamic-range environment maps can be faithfully approximated with several hundred directional lights. Here we see the environment map for Grace Cathedral (left) as sampled into 82 lights. Each light represents a principal direction and is surrounded by the directions nearest to it, as defined by the Voronoi diagram (right).
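
The Voronoi partition described above assigns every direction to the light nearest to it. A minimal sketch of that assignment follows, assuming unit-length light directions and using the largest dot product as the nearness test. The names `normalize` and `nearest_light`, and the three toy lights, are illustrative and do not come from the poster.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def nearest_light(direction, lights):
    """Index of the light whose direction is angularly closest to `direction`.

    For unit vectors, the smallest angle corresponds to the largest dot product,
    so this returns the Voronoi cell (on the sphere) that contains `direction`.
    """
    d = normalize(direction)
    return max(range(len(lights)),
               key=lambda i: sum(a * b for a, b in zip(d, lights[i])))

# Three toy lights along the coordinate axes (hypothetical sampling).
lights = [normalize(v) for v in [(0, 0, 1), (1, 0, 0), (0, 1, 0)]]
samples = [(0.2, 0.1, 0.9), (0.9, 0.1, 0.2), (0.1, 0.8, 0.3)]
cells = [nearest_light(d, lights) for d in samples]
print(cells)  # [0, 1, 2]
```

A real sampling would contain tens to hundreds of lights, and a spatial index could replace the linear scan.
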
Because of the density and abundance of lights in this sampling, we can use visibility information from a light’s neighbors to predict its own visibility.

Results – The bunny and plant scenes illuminated by a 200-light sampling of their environment maps. Even in the low-coherence visibility of the plant scene, our method performs accurately, though less efficiently.

Efficient Complex Shadows from Environment Maps
Maneesh Agrawala – Microsoft Research; Aner Ben-Artzi – Columbia University; Ravi Ramamoorthi – Columbia University

Introduction – When adding cast shadows to scenes illuminated by complex lighting such as high dynamic-range environment maps, most of the calculations are spent determining visibility between the many light-source directions and every scene point visible to the camera. We propose a method that selectively samples the visibility function. By leveraging the coherence inherent to the visibility of typical scenes, we predict over 90% of the visibility calculations from the results of previous calculations. The difference from the full calculations is negligible. Visibility coherence has been used in works such as [Guo 1998], [Hart et al. 1999], and [Agrawala et al. 2000], but only for point or area light sources. Those methods do not scale to environment maps. We show how to use the complexity of sampled environment maps to our advantage.

4 ways to reuse blockers discovered in previously evaluated pixels

Scanline vs. Grid-based pixel order evaluation – Grey pixels have already been evaluated. Blue pixels are being predicted based on visibility information contained in the grey pixels indicated by the arrows.

Scanline (left): Traditional scanline evaluation uses visibility information from the three pixels above and to the left of the current pixel.

Grid-Based (right): First the image is fully evaluated at regular intervals. This produces a coarse grid.
Next, the center (shown in blue) of every 4 pixels is evaluated based on its surrounding 4 neighbors (shown in grey). All four are equidistant from their respective finer-grid-level pixel. Again, a pixel is evaluated at the center of 4 previously evaluated pixels. At the end of this step, the image has been regularly sampled at a finer spacing. This becomes the new coarse level, and the process repeats.

Warping vs. No Warping – When we use the blockers from previously evaluated pixels, we have a choice of warping them to the frame of reference of the current pixel, or assuming that the blockers are distant and leaving their relative angular direction unchanged.

Trying All Possibilities – We examined all possible combinations of the components of our algorithm. We see that scanline produces errors with uncertainty flooding, whether or not warping is used. This is because uncertainty flooding works best when it assimilates visibility information from a variety of viewpoints, not just up and to the left. Even with boundary flooding, scanline requires warping. Otherwise, visibility information is erroneously propagated too far along the scanline before it is corrected. Grid-based evaluation actually works better without warping. We recommend GNU (grid-based, no warping, uncertainty flooding) as the best combination.

Fixing Prediction Errors – A naïve approach to predicting the visibility of lights for a particular pixel is to let the visibility information from nearby pixels determine the visibility at the current pixel. Without some method for marking low-confidence predictions and verifying them, the results contain errors, as in the left image.

Figure label: fix predictions

Performance of Low-Error Combinations – After eliminating SNB, SNU, and SWU based on the images above, we compared the efficiency of the remaining algorithms. The entries for the table indicate the percentage of shadow rays where N·L > 0 that were traced by each method.
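
The warping choice described above can be illustrated with a small sketch. It assumes that each reused blocker is stored as a 3D point together with the light cell it occupied at the pixel where it was found, and that light cells are the nearest-direction regions of the sampled lights. The function `predict_cell` and the toy scene are hypothetical, not the authors' code.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def nearest_light(direction, lights):
    """Index of the unit light direction closest in angle to `direction`."""
    d = normalize(direction)
    return max(range(len(lights)),
               key=lambda i: sum(a * b for a, b in zip(d, lights[i])))

def predict_cell(blocker_point, source_cell, pixel_point, lights, warp):
    """Light cell that a reused blocker occupies as seen from `pixel_point`.

    Without warping, the blocker is assumed distant and keeps the cell it had
    at the pixel where it was discovered. With warping, its direction is
    recomputed relative to the current scene point.
    """
    if not warp:
        return source_cell
    offset = tuple(b - p for b, p in zip(blocker_point, pixel_point))
    return nearest_light(offset, lights)

# Toy lights: straight up, tilted toward +x, tilted toward -x.
lights = [normalize(v) for v in [(0, 0, 1), (1, 0, 1), (-1, 0, 1)]]
x2 = (-1.0, 0.0, 0.0)  # current scene point; the blocker was found from the origin

near = predict_cell((0.0, 0.0, 1.0), 0, x2, lights, warp=True)     # nearby blocker moves to cell 1
far = predict_cell((0.0, 0.0, 1000.0), 0, x2, lights, warp=True)   # distant blocker stays in cell 0
unwarped = predict_cell((0.0, 0.0, 1.0), 0, x2, lights, warp=False)
print(near, far, unwarped)  # 1 0 0
```

The distant blocker lands in the same cell with or without warping, which is the assumption the no-warp variant relies on.
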
For each scene an environment map was chosen, and then sampled as approximately 50, 100, 200, and 400 lights. Notice that the performance of boundary flooding depends on the number of lights.

0. The visibility for all of the lights has been determined at 3 pixels (not shown). We will now use that information to predict the visibility for lights at a new pixel.

2A. Light cells that contain any blocker(s) are predicted as blocked. Others are predicted as visible.

3A. Light cells whose neighbors’ visibility differs are shadow-traced (blue center).

Conclusion – Visibility coherence can be efficiently exploited to make accurate predictions. Such predictions must be tempered by error-correction techniques. Rather than using perceptual errors, low-confidence predictions can be found by leveraging the way in which they were generated. Specifically, we have shown that when predictions are based on a group of predictors, error-correction must be employed whenever the predictors disagree. This is manifested in our framework as grid-based evaluation with uncertainty flooding. For typical scenes, over 90% of shadow-rays can be accurately predicted with negligible errors. The errors that do exist are coherent, leading to smooth animations in our tests. Our techniques are technically simple to implement and can be used directly to render realistic images, or to speed up the precomputation in PRT methods. Higher speedups than presented in this paper may be achieved if some tolerance for errors exists. We plan to explore a controlled process for the tradeoff between predictions and errors in future work. More broadly, we want to explore coherence for sampling other high-dimensional functions such as the BRDF, and more generally in global illumination, especially animations.

Figure label: Flood and Shadow-trace predictions

Timings – The actual timings for the full rendering, shown here, are related to, but not equivalent to, the reduction in work as measured in shadow-rays traced.
Each scene was lit by a 200-light sampling, and timed on an Intel Xeon 3.06GHz computer running Windows XP.

Figure labels: Path A: Boundary Flooding; Path B: Uncertainty Flooding; Predictions & uncertainty tracing; Flood and Shadow-trace.

1. For simplicity, we will not consider warping. Each light in our current pixel (as represented by its hexagonal cell in the Voronoi diagram) will receive a blocker (black dots) every time the corresponding light is blocked in one of the previously evaluated pixels. If warping were used, blockers would be warped onto the appropriate light, instead of always being added to the same light.

2B. Light cells that contain all 3 blockers (since we are using 3 previous pixels) are predicted as blocked. Those that contain no blockers are predicted as visible. Light cells containing 1 or 2 blockers are marked as uncertain and shadow-traced (blue center).

3B. The neighboring light cells of all uncertain cells are shadow-traced (blue center).

References:

Agarwal, S., Ramamoorthi, R., Belongie, S., and Jensen, H. W. Structured Importance Sampling of Environment Maps. In Proceedings of SIGGRAPH 2003, pages 605–612.

Agrawala, M., Ramamoorthi, R., Heirich, A., and Moll, L. Efficient Image-Based Methods for Rendering Soft Shadows. In Proceedings of SIGGRAPH 2000, pages 375–384.

Ben-Artzi, A., Ramamoorthi, R., and Agrawala, M. Efficient Shadows from Sampled Environment Maps. Columbia University Tech Report CUCS-025-04, 2004.

Guo, B. Progressive Radiance Evaluation Using Directional Coherence Maps. In Proceedings of SIGGRAPH 1998, pages 255–266.

Hart, D., Dutre, P., and Greenberg, D. Direct Illumination with Lazy Visibility Evaluation. In Proceedings of SIGGRAPH 1999, pages 147–154.

Boundary Flooding vs. Uncertainty Flooding – Boundary flooding considers any light on the boundary between blocked and visible to be a low-confidence prediction. Uncertainty flooding considers any light for which there is no consensus to be a low-confidence prediction.
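
The two rules for choosing which lights to shadow-trace (steps 2A/3A and 2B/3B) can be sketched as follows. This is a sketch under stated assumptions: `counts[i]` is the number of blockers accumulated in light cell `i` from the previously evaluated pixels, and `neighbors[i]` lists the adjacent light cells. The function names and the five-light chain adjacency are invented for illustration; a real adjacency would come from the Voronoi diagram of the sampled lights.

```python
def boundary_plan(counts, neighbors):
    """Path A: predict from any blocker, then trace cells on a blocked/visible boundary."""
    # 2A: a cell holding at least one blocker is predicted blocked (True).
    predicted = [c > 0 for c in counts]
    # 3A: trace every cell that has a neighbor with a different prediction.
    to_trace = {i for i, nbrs in enumerate(neighbors)
                if any(predicted[j] != predicted[i] for j in nbrs)}
    return predicted, to_trace

def uncertainty_plan(counts, neighbors, num_predictors):
    """Path B: predict only on consensus, then trace uncertain cells and their neighbors."""
    # 2B: all predictors agree on blocked -> True, none blocked -> False, else None.
    predicted = [True if c == num_predictors else False if c == 0 else None
                 for c in counts]
    uncertain = {i for i, p in enumerate(predicted) if p is None}
    # 3B: the neighbors of uncertain cells are traced as well.
    to_trace = set(uncertain)
    for i in uncertain:
        to_trace.update(neighbors[i])
    return predicted, to_trace

# Five lights arranged in a chain (hypothetical adjacency), three predictor pixels.
neighbors = [[1], [0, 2], [1, 3], [2, 4], [3]]
counts = [3, 3, 1, 0, 0]

pred_a, trace_a = boundary_plan(counts, neighbors)
pred_b, trace_b = uncertainty_plan(counts, neighbors, num_predictors=3)
print(sorted(trace_a))  # [2, 3]
print(sorted(trace_b))  # [1, 2, 3]
```

In this toy case boundary flooding traces the two cells that straddle the blocked/visible edge, while uncertainty flooding traces the one cell without consensus plus its two neighbors.
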
In both methods, the neighbors of low-confidence lights are shadow-traced. If the trace reveals that a prediction is wrong, the shadow-tracing floods to the neighbors of that light, until all shadow-traces return the same visibility as the prediction. [Agrawala et al. 2000] use this for boundary flooding.

Animations can be found at www.cs.columbia.edu/cg. More details appear in [Ben-Artzi et al. 2004].

Note: All shadows have been enhanced to make them more visible. Actual renderings would contain softer shadows.
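
The flooding correction described above, where tracing spreads outward from any light whose prediction turns out to be wrong, can be sketched as a breadth-first traversal. The sketch assumes boolean predictions (True means blocked), a hypothetical `trace` callback that stands in for casting one shadow ray, and a made-up chain adjacency between lights. It is an illustration of the idea rather than the authors' implementation.

```python
from collections import deque

def flood_correct(predicted, to_trace, neighbors, trace):
    """Shadow-trace the low-confidence lights and flood outward from wrong predictions.

    Returns the corrected visibility list and the set of lights actually traced.
    """
    visibility = list(predicted)
    traced = set()
    queue = deque(sorted(to_trace))
    while queue:
        i = queue.popleft()
        if i in traced:
            continue
        traced.add(i)
        actual = trace(i)  # one shadow ray toward light i
        if actual != predicted[i]:
            # The prediction was wrong, so its neighbors are no longer trusted.
            queue.extend(j for j in neighbors[i] if j not in traced)
        visibility[i] = actual
    return visibility, traced

# Toy example: five lights in a chain; the true blocked region is one light
# wider than predicted.
neighbors = [[1], [0, 2], [1, 3], [2, 4], [3]]
truth = [True, True, True, True, False]
predicted = [True, True, True, False, False]

visibility, traced = flood_correct(predicted, {2, 3}, neighbors, lambda i: truth[i])
print(visibility == truth, sorted(traced))  # True [2, 3, 4]
```

Here three of the five lights are traced: the two low-confidence lights, plus one more reached by flooding after light 3 was found to be mispredicted.
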