The AIM-F Algorithm review

This presentation reviews the AIM-F algorithm for mining all frequent itemsets in a dataset. It states the frequent itemset problem, lists earlier approaches (Apriori, sampling, SLPMiner, FP-tree), and names AIM-F's main features: DFS generate-and-test, a compressed vertical database, diffsets, PEP, dynamic reordering, vector projection, and optimized initialization. It then shows how support is computed from diffsets (slides marked Amir Epstein), argues that each optimization should be enabled dynamically, only when it pays off, and describes a compressed vertical bit vector that supports diffsets directly and is reported as faster than tid-lists on dense datasets and competitive on sparse ones.


Presentation Transcript

The AIM-F Algorithm review Presented by Sagi Shporer

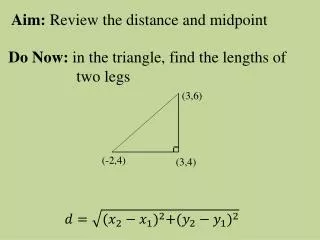

Frequent Itemset Problem • Let I={i1,i2,…,im} be a set of items. • Let T ⊆ I be a transaction. • Let D be a dataset of n transactions. • Task: Find all X ⊆ I s.t. support(X) ≥ minsupport (i.e. there are at least minsupport transactions T in D for which X ⊆ T).

Example: Frequent Itemsets • Dataset (id: item set): 1: a b c d e; 2: a b c d; 3: b c d; 4: b e; 5: c d e • MinSup=2 • What itemsets are frequent itemsets (FI)? a, b, c, d, e, ab, ac, ad, bc, bd, be, cd, ce, de, abc, abd, acd, bcd, cde, abcd
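The list on this slide can be checked by brute force. The sketch below is not AIM-F; it simply tests every non-empty subset of the items against the slide's dataset. The names `DATASET`, `MIN_SUP`, `support` and `frequent_itemsets` are illustrative and do not come from the presentation.

```python
from itertools import combinations

# Example dataset from the slide: transaction id -> set of items.
DATASET = {
    1: set("abcde"),
    2: set("abcd"),
    3: set("bcd"),
    4: set("be"),
    5: set("cde"),
}
MIN_SUP = 2


def support(itemset, dataset):
    """Count the transactions that contain every item of `itemset`."""
    return sum(1 for items in dataset.values() if set(itemset) <= items)


def frequent_itemsets(dataset, min_sup):
    """Brute force: test every non-empty subset of the item universe."""
    universe = sorted(set().union(*dataset.values()))
    result = {}
    for size in range(1, len(universe) + 1):
        for candidate in combinations(universe, size):
            count = support(candidate, dataset)
            if count >= min_sup:
                result["".join(candidate)] = count
    return result


FREQUENT = frequent_itemsets(DATASET, MIN_SUP)
# Print shortest itemsets first, alphabetically within each size.
print(sorted(FREQUENT, key=lambda s: (len(s), s)))
```

Running it yields the same 20 itemsets as the slide; `ae` is absent because only transaction 1 contains both items.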

Previous research work • Candidate set generate-and-test approach: Apriori, VLDB 94, R. Agrawal • Sampling technique: H. Toivonen • Adaptive support: SLPMiner, ICDM 2002, M. Seno & G. Karypis • Data transform: FP-tree, SIGMOD 2000, J. Han

General • Goal: Mining Frequent Itemsets • Main features: DFS generate-and-test; compressed vertical database; diffsets; PEP; dynamic reordering; vector projection; optimized initialization

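Enumeration tree (each node written as head:tail) • Level 0: :abcde • Level 1: a:bcde b:cde c:de d:e e: • Level 2: ab:cde ac:de ad:e ae: bc:de bd:e be: cd:e ce: de: • Level 3: abc:de abd:e abe: acd:e ace: ade: bcd:e bce: bde: cde: • Level 4: abcd:e abce: abde: acde: bcde: • Level 5: abcde: • The figure also marks a Cut across the tree.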
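A depth-first walk of this tree can be sketched as follows. This is a plain DFS generate-and-test under simple assumptions (horizontal support counting, no reordering, no diffsets), not the AIM-F implementation; the function names are illustrative. Each node is a head plus a tail of items that may still extend it, and a branch is abandoned as soon as its head is infrequent.

```python
DATASET = [set("abcde"), set("abcd"), set("bcd"), set("be"), set("cde")]
MIN_SUP = 2


def support(itemset, dataset):
    """Number of transactions that contain every item of `itemset`."""
    return sum(1 for transaction in dataset if itemset <= transaction)


def dfs(head, tail, dataset, min_sup, out):
    """Visit node head:tail and try to extend the head by each tail item."""
    for i, item in enumerate(tail):
        new_head = head | {item}
        count = support(new_head, dataset)
        if count < min_sup:
            # Cut: no superset of an infrequent head can be frequent.
            continue
        out["".join(sorted(new_head))] = count
        # The child's tail holds only the items that come after `item`.
        dfs(new_head, tail[i + 1:], dataset, min_sup, out)


def mine(dataset, min_sup):
    """Start from the root node with an empty head and all items as tail."""
    items = sorted(set().union(*dataset))
    out = {}
    dfs(frozenset(), items, dataset, min_sup, out)
    return out


FOUND = mine(DATASET, MIN_SUP)
print(len(FOUND), "frequent itemsets")
```

Because `ae` fails the support test, the subtree below it is never generated, which is the effect the cut in the figure illustrates.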

An Example (Illustration only) • Figure: a trace of the search on the example dataset (|D|=5, MinSup=2), showing the projected datasets |Da|=2, |De|=3, |Deb|=2 and |Dec|=2 with their item counts, and the FI labels abcd, eb and ecd.

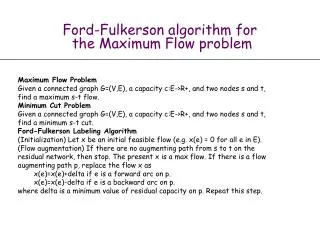

Diffsets • Let t(P) be the set of transactions (TIDs) supporting P. • Define diffset d(PX)=t(P)\t(X) • Then support(PX)=support(P)-|d(PX)| Amir Epstein
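A small check of this identity on the example dataset, as a sketch with illustrative helper names (`tidset`, `diffset`) that are not from the presentation. Here P = b and X = c.

```python
DATASET = {1: "abcde", 2: "abcd", 3: "bcd", 4: "be", 5: "cde"}


def tidset(itemset):
    """t(P): ids of the transactions that contain every item of `itemset`."""
    return {tid for tid, items in DATASET.items() if set(itemset) <= set(items)}


def diffset(prefix, item):
    """d(PX): transactions that support the prefix P but not the item X."""
    return tidset(prefix) - tidset(item)


P, X = "b", "c"
d_PX = diffset(P, X)
support_P = len(tidset(P))
support_PX = support_P - len(d_PX)
print(d_PX, support_PX)  # {4} 3
```

Only transaction 4 contains b without c, so the diffset has one element and support(bc) = 4 - 1 = 3, matching a direct count of t(bc).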

Diffsets • How to calculate support(PXY) using d(PX) and d(PY)? • support(PXY)=support(PX)-|d(PXY)| • d(PXY)=d(PY)\d(PX)
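The second identity follows from the definition of a diffset. The derivation below applies that definition with PX as the prefix and Y as the extension, and uses the standard fact that t(PX) = t(P) ∩ t(X).

```latex
\begin{align*}
d(PX) &= t(P) \setminus t(X), \qquad d(PY) = t(P) \setminus t(Y) \\
d(PXY) &= t(PX) \setminus t(Y) = \bigl(t(P) \cap t(X)\bigr) \setminus t(Y) \\
d(PY) \setminus d(PX) &= \bigl(t(P) \setminus t(Y)\bigr) \setminus \bigl(t(P) \setminus t(X)\bigr) \\
&= \bigl(t(P) \cap t(X)\bigr) \setminus t(Y) = d(PXY)
\end{align*}
```

On the example dataset with P = b, X = c, Y = e: d(bc) = {4} and d(be) = {2,3}, so d(bce) = {2,3} \ {4} = {2,3} and support(bce) = support(bc) - |d(bce)| = 3 - 2 = 1, which agrees with bce not being frequent at MinSup=2.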

Example • Figure: diagram of the sets t(P), t(X) and t(Y), marking the regions d(PX), d(PY), d(PXY) and t(PXY).

Contributions • Dynamic use of various itemset mining optimizations (specifically diffsets). • Use of a compressed vertical bit vector with diffsets.

Dynamic Optimization Usage • Every optimization has strengths and weaknesses. • Optimizations should be used only when they give some benefit.

Dynamic Optimization Usage Cont. • Diffsets – Start using diffsets only when |d(PX)| < |t(PX)| • Optimized Initialization – Use only for sparse datasets (when the number of ‘1’s reaches a threshold)
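The diffset rule can be sketched as a per-node choice between the two representations. This is an illustration of the stated condition only, using Python sets instead of bit vectors; the function name `extend` and its return shape are assumptions. Since |d(PX)| = |t(P)| - |t(PX)|, the test can be made before the diffset is built.

```python
def extend(t_P, t_X):
    """Extend prefix P by item X and keep the smaller representation.

    Returns (kind, tids, support), where kind is "diffset" or "tidset".
    """
    t_PX = t_P & t_X
    d_size = len(t_P) - len(t_PX)  # |d(PX)| without materializing d(PX)
    if d_size < len(t_PX):
        # Dense case: few transactions drop out, so the diffset is shorter.
        return "diffset", t_P - t_X, len(t_PX)
    # Sparse case: most transactions drop out, so the tidset is shorter.
    return "tidset", t_PX, len(t_PX)


dense = extend(set(range(1, 11)), set(range(1, 10)))
sparse = extend({1, 2, 3, 4}, {1, 9})
print(dense)
print(sparse)
```

In the dense call only transaction 10 is lost, so a one-element diffset stands in for a nine-element tidset; in the sparse call the tidset {1} is kept instead of a three-element diffset.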

Compressed Bit Vector • Sparse Vertical Bit Vector – Hold only the needed cells in the vertical bit vector

Compressed Bit Vector Cont. • Use of diffsets directly from the compressed form • Faster than tid-list for dense datasets. • Competitive with tid-list for sparse datasets
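One way to picture a sparse vertical bit vector is sketched below. The slides do not specify the layout, so this assumes "needed cells" means the machine words that contain at least one set bit, stored by word index; `WORD_BITS`, `compress`, `and_not`, `count` and `tids_of` are hypothetical names. The diffset d(PX) is then an AND-NOT computed directly on the compressed form.

```python
WORD_BITS = 64  # assumed word size for this sketch


def compress(tids):
    """Map word index -> word, keeping only words with at least one set bit."""
    words = {}
    for tid in tids:
        idx, bit = divmod(tid, WORD_BITS)
        words[idx] = words.get(idx, 0) | (1 << bit)
    return words


def and_not(a, b):
    """Bits set in `a` but not in `b`, without decompressing either vector."""
    out = {}
    for idx, word in a.items():
        rest = word & ~b.get(idx, 0)
        if rest:  # drop words that became empty
            out[idx] = rest
    return out


def count(vec):
    """Number of set bits, i.e. the size of the tid set."""
    return sum(bin(word).count("1") for word in vec.values())


def tids_of(vec):
    """Decode the vector back into a sorted list of transaction ids."""
    return sorted(
        idx * WORD_BITS + bit
        for idx, word in vec.items()
        for bit in range(WORD_BITS)
        if (word >> bit) & 1
    )


t_P = compress({1, 2, 3, 4, 1000})
t_X = compress({1, 2, 3, 5})
d_PX = and_not(t_P, t_X)             # d(PX) = t(P) \ t(X)
support_PX = count(t_P) - count(d_PX)
print(tids_of(d_PX), support_PX)
```

Here t(P) spans ids 1 to 1000 but occupies only two stored words, and the diffset is obtained word by word from the stored cells alone.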

Questions & Comments • Thank you!