Data preprocessing

Data preprocessing. Dr. Yan Liu Department of Biomedical, Industrial and Human Factors Engineering Wright State University. Why Data Preprocessing. “Dirty” Real-World Data Noisy: contain errors or outliers e.g. salary = “-10”


Presentation Transcript

Data preprocessing Dr. Yan Liu Department of Biomedical, Industrial and Human Factors Engineering Wright State University

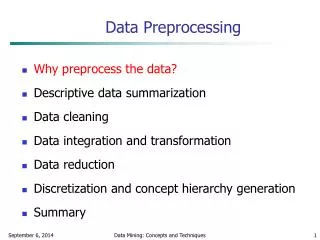

Why Data Preprocessing • “Dirty” Real-World Data • Noisy: contain errors or outliers • e.g. salary = “-10” • Missing: lack attribute values or certain attributes of interest • e.g., occupation= BLANK • Inconsistent: contain discrepancies in codes or names • e.g. Age=“42” and Birthday=“03/07/1997”; was rating “1,2,3”, now rating “A, B, C”; discrepancy between duplicate records • “Garbage-in-Garbage-out” • No quality data, no quality DM results

Why Are Data Dirty • Noisy Data Result from • Faulty data collection instruments • Data entry problems • Data transmission problems • Missing Data Result from • N/A data value collected • Different considerations between the time when the data were collected and when they are analyzed • Human/hardware/software problems • Inconsistent Data Result from • Different data sources • Functional dependency violation • A functional dependency occurs when one attribute in a relation of a database uniquely determines another attribute • e.g. SSN → Name

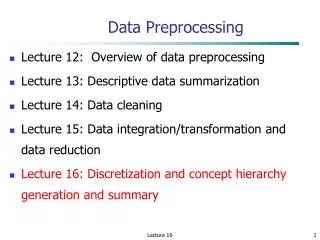

Major Tasks in Data Preprocessing • Data Cleaning • Fill in missing values, smooth noisy data, identify or remove outliers, and resolve inconsistencies • Data Integration • Integration of multiple files, databases, or data cubes • Data Transformation • Normalization and aggregation • Data Reduction • Obtains a reduced representation of data that is much smaller in volume but produces the same or similar analytical results • Data Discretization • Part of data reduction, especially useful for numerical data

Data Cleaning: Missing Values • Ignore Record • Usually done when class label is missing (in a classification task) • Not effective when the missing values per attribute vary considerably • Fill in Missing Value Manually • Time-consuming and tedious • Sometimes infeasible • Fill in Automatically with • A global constant (e.g. “unknown”) • Can lead to mistakes in DM results • Attribute mean • e.g. the average income is $56,000, then use this value to replace missing values of income • Attribute mean for all samples belonging to the same class (smarter) • e.g. the average income is $56,000 for customers with “good credit”, then use this value to replace missing values of income for those with “good credit” • The most probable value • Regression • Inference-based (e.g. Bayesian formula or decision tree induction) • Uses the most information from the data to predict missing values
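The class-conditional mean strategy above can be sketched in a few lines of Python. This is only an illustration: the function name `impute_class_mean`, the record layout (a list of dicts), and the sample values are assumptions, chosen so that the "good credit" mean comes out at the $56,000 used on the slide.

```python
from collections import defaultdict

def impute_class_mean(records, attr, class_attr):
    """Fill missing (None) values of `attr` with the mean of `attr`
    over the records that share the same `class_attr` value."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for r in records:
        if r[attr] is not None:
            sums[r[class_attr]] += r[attr]
            counts[r[class_attr]] += 1
    filled = []
    for r in records:
        r = dict(r)  # copy, so the input records are left untouched
        if r[attr] is None and counts[r[class_attr]] > 0:
            r[attr] = sums[r[class_attr]] / counts[r[class_attr]]
        filled.append(r)
    return filled

# Hypothetical customer records; None marks a missing income.
customers = [
    {"credit": "good", "income": 50000},
    {"credit": "good", "income": 62000},
    {"credit": "good", "income": None},
    {"credit": "poor", "income": 30000},
    {"credit": "poor", "income": None},
]
filled = impute_class_mean(customers, "income", "credit")
print(filled[2]["income"])  # mean income of the "good" class
```

Replacing the per-class sums with a single global sum gives the plain attribute-mean strategy.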

Data Cleaning: Noisy Data • Noise • Random error or variance in a measured variable • Smoothing Techniques • Binning (example) • First sort data and partition into (usually equal-frequency) bins • Data in each bin are smoothed by bin means, bin median, or bin boundaries (the minimum and maximum values) • Regression (example) • Smooth by fitting the data into regression functions • Clustering (example) • Detect and remove outliers • Similar values are organized into clusters and thus values that fall outside the clusters may be outliers
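As a rough sketch of the binning technique, the code below sorts the data, partitions it into equal-frequency bins, and smooths by bin means or by bin boundaries. The function names and the `prices` values are hypothetical, and the code assumes the number of values divides evenly by the number of bins.

```python
def equal_frequency_bins(values, n_bins):
    """Sort the values and split them into n_bins bins of equal size.
    Assumes len(values) is divisible by n_bins."""
    ordered = sorted(values)
    size = len(ordered) // n_bins
    return [ordered[i * size:(i + 1) * size] for i in range(n_bins)]

def smooth_by_bin_means(values, n_bins):
    """Replace every value by the mean of its bin."""
    smoothed = []
    for bin_values in equal_frequency_bins(values, n_bins):
        mean = sum(bin_values) / len(bin_values)
        smoothed.extend([mean] * len(bin_values))
    return smoothed

def smooth_by_bin_boundaries(values, n_bins):
    """Replace every value by the closer of its bin's minimum and maximum."""
    smoothed = []
    for bin_values in equal_frequency_bins(values, n_bins):
        low, high = bin_values[0], bin_values[-1]
        smoothed.extend(low if v - low <= high - v else high for v in bin_values)
    return smoothed

prices = [4, 8, 15, 21, 21, 24, 25, 28, 34]  # hypothetical data
print(smooth_by_bin_means(prices, 3))
print(smooth_by_bin_boundaries(prices, 3))
```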

Figure: Linear Regression (fitted line y = x + 1; the observed value Y1 at X1 is smoothed to the fitted value Y1’)

Clustering Method to Detect Outliers (The three red dots may be outliers)

Data Integration • Purpose • Combines data from multiple sources into a coherent store • Schema Integration and Object Matching • Entity identification problem: how to match equivalent real-world entities from multiple data sources • e.g., A.customer-id ≡ B.customer-no • Metadata: information about data • Metadata for each attribute include the name, meaning, data type, and range of values permitted for the attribute • Redundancy • An attribute may be redundant if it can be derived from another attribute or a set of attributes • Inconsistencies in attribute or dimension naming can also cause redundancies • Correlation analysis • Measures how strongly two attributes correlate with each other • Correlation coefficient (e.g. Pearson’s product moment coefficient) for numerical attributes • Chi-square (χ²) test for categorical attributes

Pearson’s Product Moment Coefficient of Numerical Attributes A and B

$$r_{A,B} = \frac{\sum_{i=1}^{N} (a_i - \bar{A})(b_i - \bar{B})}{N \sigma_A \sigma_B} \quad \text{(Eq. 1)}$$

N: number of records in each attribute
a_i, b_i (i = 1, 2, …, N): the ith value of attributes A and B, respectively
σ_A, σ_B: the standard deviations of attributes A and B, respectively
Ā, B̄: the average values of attributes A and B, respectively
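A minimal sketch of the coefficient, assuming Eq. 1 has the usual form (sum of products of deviations from the means, divided by N·σA·σB, with population standard deviations). The function name `pearson` and the sample lists are illustrative only.

```python
import math

def pearson(a, b):
    """Pearson's product moment coefficient of two equal-length lists."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    # Population standard deviations (divide by N), to match the N in Eq. 1.
    sd_a = math.sqrt(sum((x - mean_a) ** 2 for x in a) / n)
    sd_b = math.sqrt(sum((y - mean_b) ** 2 for y in b) / n)
    dev_products = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    return dev_products / (n * sd_a * sd_b)

# Hypothetical attributes: B is an exact multiple of A, C decreases as A grows.
A = [1, 2, 3, 4]
B = [2, 4, 6, 8]
C = [8, 6, 4, 2]
print(pearson(A, B), pearson(A, C))
```

A value near +1 or -1 suggests that one of the two attributes may be redundant; a value near 0 suggests no linear relationship.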

Chi-Square Test of Categorical Attributes A and B

Cell (A_i, B_j) represents the joint event that A = A_i and B = B_j

$$\chi^2 = \sum_{i=1}^{p} \sum_{j=1}^{q} \frac{(o_{ij} - e_{ij})^2}{e_{ij}} \quad \text{(Eq. 2)}$$

χ²: test statistic for the hypothesis that A and B are independent
o_ij (i = 1, 2, …, p; j = 1, 2, …, q): the observed frequency (i.e. actual count) of the joint event (A_i, B_j)
e_ij (i = 1, 2, …, p; j = 1, 2, …, q): the expected frequency of the joint event (A_i, B_j)

$$e_{ij} = \frac{Count(A = A_i) \times Count(B = B_j)}{N} \quad \text{(Eq. 3)}$$

N: number of records in each attribute
Count(A = A_i): the observed frequency of the event A = A_i
Count(B = B_j): the observed frequency of the event B = B_j

χ² = 284.44 + 121.90 + 71.11 + 30.48 = 507.93
dof = (2-1)*(2-1) = 1
P < 0.001
Conclusion: Gender and Preferred_Reading are strongly correlated!
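The contingency table behind this calculation is not reproduced in the transcript. The sketch below therefore uses assumed counts, chosen only because they reproduce the four terms above (284.44, 121.90, 71.11, 30.48); treat the table and the function name `chi_square` as illustrative.

```python
def chi_square(observed):
    """Chi-square statistic and degrees of freedom for a contingency table
    given as a list of rows of observed counts."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(observed):
        for j, o in enumerate(row):
            e = row_totals[i] * col_totals[j] / n  # expected frequency
            chi2 += (o - e) ** 2 / e
    dof = (len(row_totals) - 1) * (len(col_totals) - 1)
    return chi2, dof

# Assumed 2x2 table (rows: reading preference, columns: gender).
observed = [[250, 200],
            [50, 1000]]
chi2, dof = chi_square(observed)
print(round(chi2, 2), dof)
```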

Data Integration (Cont.) • Detection and Resolution of Data Value Conflicts • Attribute values from different sources may be different • Possible reasons • Different representations • e.g. “age” and “birth date” • Different scaling • e.g., metric vs. British units

Data Transformation • Purpose • Data are transformed into forms appropriate for mining • Strategies • Smoothing • Remove noise from data • Aggregation • Summary or aggregation operations are applied to the data • e.g. Daily sales data may be aggregated to compute monthly and annual total amounts • Generalization • Low-level data are replaced by higher-level concepts through the use of concept hierarchies • e.g. age can be generalized to “youth, middle-aged, or senior” • Normalization • Scaled to fall within a small, specified range (e.g. 0 – 1) • Attribute/Feature Construction • New attributes are constructed from the given ones

Data Normalization • Min-Max Normalization

Suppose that minA and maxA are the minimum and maximum values of an attribute A. Min-max normalization maps a value, v, of A to v’ in the new range [new_minA, new_maxA] by computing

$$v' = \frac{v - min_A}{max_A - min_A}\,(new\_max_A - new\_min_A) + new\_min_A \quad \text{(Eq. 4)}$$

Suppose the minimum and maximum values for the attribute income are $12,000 and $98,000, respectively. We would like to map income to the range [0.0, 1.0]. By min-max normalization, a value of $73,600 for income will be transformed to

$$\frac{73{,}600 - 12{,}000}{98{,}000 - 12{,}000}\,(1.0 - 0.0) + 0.0 = 0.716$$

Data Normalization (Cont.) • Z-Score Normalization • The values of attribute A are normalized based on the mean and standard deviation of A

A value, v, of A is normalized to v’ by computing

$$v' = \frac{v - \bar{A}}{\sigma_A} \quad \text{(Eq. 5)}$$

Ā: the mean of A
σ_A: the standard deviation of A

Suppose the mean and standard deviation of the values of the attribute income are $54,000 and $16,000, respectively. With z-score normalization, a value of $73,600 for income is transformed to

$$\frac{73{,}600 - 54{,}000}{16{,}000} = 1.225$$

Data Normalization (Cont.) • Normalization by Decimal Scaling • Normalize by moving the decimal point of values of attribute A

A value, v, of A is normalized to v’ by computing

$$v' = \frac{v}{10^{j}} \quad \text{(Eq. 6)}$$

j: the smallest integer such that max(|v’|) < 1

Suppose that the recorded values of A range from -986 to 917. The maximum absolute value of A is 986. To normalize by decimal scaling, we divide each value by 1,000 (j = 3). As a result, the normalized values of A range from -0.986 to 0.917.
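The three normalization methods can be checked against the slide examples with a short sketch. The function names are illustrative, and the formulas assumed are the standard ones: (v - min)/(max - min) rescaled to the new range, (v - mean)/σ, and v/10^j.

```python
def min_max(v, min_a, max_a, new_min=0.0, new_max=1.0):
    """Min-max normalization of v into [new_min, new_max]."""
    return (v - min_a) / (max_a - min_a) * (new_max - new_min) + new_min

def z_score(v, mean_a, std_a):
    """Z-score normalization of v."""
    return (v - mean_a) / std_a

def decimal_scaling(values):
    """Divide by 10**j, with j the smallest integer giving max(|v'|) < 1.
    Returns the scaled values and j."""
    max_abs = max(abs(v) for v in values)
    j = 0
    while max_abs / 10 ** j >= 1:
        j += 1
    return [v / 10 ** j for v in values], j

print(round(min_max(73600, 12000, 98000), 3))   # income example, range [0, 1]
print(z_score(73600, 54000, 16000))             # income example
print(decimal_scaling([-986, 917]))             # values from -986 to 917
```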

Data Reduction • Purpose • Obtain a reduced representation of the dataset that is much smaller in volume, yet closely maintains the integrity of the original data • Strategies • Data cube aggregation • Aggregation operations are applied to construct a data cube • Attribute subset selection • Irrelevant, weakly relevant, or redundant attributes are detected and removed • Data compression • Data encoding or transformations are applied so as to obtain a reduced or “compressed” representation of the original data • Numerosity reduction • Data are replaced or estimated by alternative, smaller data representations (e.g. models) • Discretization and concept hierarchy generation • Attribute values are replaced by ranges or higher conceptual levels

Attribute/Feature Selection • Purpose • Select a minimum set of attributes (features) such that the probability distribution of different classes given the values for those attributes is as close as possible to the original distribution given the values of all features • Reduce the number of attributes appearing in the discovered patterns, helping to make the patterns easier to understand • Heuristic Methods • An exhaustive search for the optimal subset of attributes can be prohibitively expensive (2^n possible subsets for n attributes) • Stepwise forward selection • Stepwise backward elimination • Combination of forward selection and backward elimination • Decision-tree induction

Stepwise Selection • Stepwise Forward (Example) • Start with an empty reduced set • The best attribute is selected first and added to the reduced set • At each subsequent step, the best of the remaining attributes is selected and added to the reduced set (conditioning on the attributes that are already in the set) • Stepwise Backward (Example) • Start with the full set of attributes • At each step, the worst of the attributes in the set is removed • Combination of Forward and Backward • At each step, the procedure selects the best attribute and adds it to the set, and removes the worst attribute from the set • Some attributes were good in initial selections but may not be good anymore after other attributes have been included in the set
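Stepwise forward selection can be sketched as a greedy loop. The slides do not say how "best" is measured or when to stop, so both are assumptions here: `score` is any caller-supplied function that rates a subset (higher is better), and the loop stops when no remaining attribute improves the score. The toy relevance values are made up for illustration.

```python
def forward_selection(attributes, score, max_size):
    """Greedy stepwise forward selection.
    `score(subset)` is a user-supplied quality measure (an assumption here)."""
    selected = []
    remaining = list(attributes)
    best_score = score(selected)
    while remaining and len(selected) < max_size:
        # Score each remaining attribute together with those already selected.
        candidates = [(score(selected + [a]), a) for a in remaining]
        top_score, top_attr = max(candidates, key=lambda t: t[0])
        if top_score <= best_score:
            break  # assumed stopping rule: no candidate improves the score
        selected.append(top_attr)
        remaining.remove(top_attr)
        best_score = top_score
    return selected

# Toy score: summed (made-up) relevance minus a penalty per attribute.
RELEVANCE = {"A1": 0.5, "A2": 0.1, "A3": 0.0, "A4": 0.3}

def toy_score(subset):
    return sum(RELEVANCE[a] for a in subset) - 0.15 * len(subset)

reduced = forward_selection(list(RELEVANCE), toy_score, max_size=4)
print(reduced)
```

Backward elimination follows the same pattern in reverse: start from the full set and repeatedly drop the attribute whose removal gives the best score.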

Decision Tree Induction • Decision Tree • A model in the form of a tree structure • Decision nodes • Each denotes a test on the corresponding attribute, which is the “best” attribute to partition data in terms of class distributions at that point • Each branch corresponds to an outcome of the test • Leaf nodes • Each denotes a class prediction • Can be used for attribute selection

Data Compression • Purpose • Apply data encoding or transformations to obtain a reduced or “compressed” representation of the original data • Lossless Compression • The original data can be reconstructed from the compressed data without any loss of information • e.g. some well-tuned algorithms for string compression • Lossy Compression • Only an approximation of the original data can be constructed from the compressed data • e.g. wavelet transforms and principal component analysis (PCA)

Principal Component Analysis • Overview • Suppose a dataset has n attributes. PCA searches for k (k<n) n-D orthogonal vectors that can best be used to represent the data • Works for numeric data only • Used when the number of attributes is large • PCA can reveal relationships that were not previously expected

Principal Component Analysis (Cont.) • Basic Procedure • For each attribute, subtract its mean from its values, so that the mean of each attribute is 0 • PCA computes k orthogonal vectors (called principal components) that provide a basis for the normalized input data. • The k principal components are sorted in terms of decreasing “significance” (the importance in showing variance among data) • The size of the data can be reduced by eliminating the weaker components
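The slides do not name an algorithm for computing the components. As one possible illustration, the sketch below centers the data, builds the covariance matrix, and finds only the first principal component by power iteration (a technique chosen here for brevity, not taken from the slides). The function name and the sample points are hypothetical.

```python
import math

def first_principal_component(rows, iterations=200):
    """Return (unit vector, projections) for the first principal component.
    Uses power iteration on the covariance matrix of the centered data."""
    n, d = len(rows), len(rows[0])
    # Step 1: subtract each attribute's mean so every attribute has mean 0.
    means = [sum(r[k] for r in rows) / n for k in range(d)]
    centered = [[r[k] - means[k] for k in range(d)] for r in rows]
    # Step 2: sample covariance matrix of the centered data.
    cov = [[sum(c[p] * c[q] for c in centered) / (n - 1) for q in range(d)]
           for p in range(d)]
    # Step 3: power iteration converges to the direction of largest variance.
    # (It would fail if the start vector were orthogonal to that direction.)
    vec = [1.0] * d
    for _ in range(iterations):
        nxt = [sum(cov[p][q] * vec[q] for q in range(d)) for p in range(d)]
        norm = math.sqrt(sum(x * x for x in nxt))
        vec = [x / norm for x in nxt]
    # Step 4: reduce each record to its coordinate along the component.
    projections = [sum(c[k] * vec[k] for k in range(d)) for c in centered]
    return vec, projections

points = [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0), (4.0, 4.0)]  # hypothetical 2-D data
component, reduced = first_principal_component(points)
print(component, reduced)
```

Here the two attributes vary together, so a single component along the diagonal captures all of the variance and each 2-D record is reduced to one number.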

Numerosity Reduction • Purpose • Reduce data volume by choosing alternative, smaller data representations • Parametric Methods • A model is used to fit data, store only the model parameters not original data (except possible outliers) • e.g. Regression models and Log-linear models • Non-Parametric Methods • Do not use models to fit data • Histograms • Use binning to approximate data distributions • A histogram of attribute A partitions the data distribution of A into disjoint subsets, or buckets • Clustering • Use cluster representation of the data to replace the actual data • Sampling • Represent the original data by a much smaller sample (subset) of the data

Histogram • Equal-Width (Example) • The width of each bucket range is the same • Equal-Frequency (Equal-Depth) (Example) • The number of data samples in each bucket is (about) the same • V-Optimal (Example) • Bucket boundaries are placed in a way that minimizes the cumulative weighted variance of the buckets • The weight of a bucket is proportional to the number of samples in the bucket • MaxDiff (Example) • A MaxDiff histogram of size h is obtained by putting a boundary between two adjacent attribute values v_i and v_{i+1} of V if the difference between f(v_{i+1})·σ_{i+1} and f(v_i)·σ_i is one of the (h − 1) largest such differences (where σ_i denotes the spread of v_i, σ_i = v_{i+1} − v_i). The product f(v_i)·σ_i is called the area of v_i • V-Optimal and MaxDiff tend to be more accurate and practical than equal-width and equal-frequency histograms

Suppose we have the following data: 1, 3, 4, 7, 2, 8, 3, 6, 3, 6, 8, 2, 1, 6, 3, 5, 3, 4, 7, 2, 6, 7, 2, 9 Create a three-bucket histogram Sorted (24 data samples in total) 1 1 2 2 2 2 3 3 3 3 3 4 4 5 6 6 6 6 7 7 7 8 8 9 Equal-Width Histogram
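The bucket counts for this dataset can be reproduced with a short sketch. It assumes three buckets of equal width over the observed range [1, 9] for the equal-width case and three buckets of eight samples each for the equal-frequency case; the function names are illustrative.

```python
data = [1, 3, 4, 7, 2, 8, 3, 6, 3, 6, 8, 2, 1, 6, 3, 5, 3, 4, 7, 2, 6, 7, 2, 9]

def equal_width_counts(values, n_buckets):
    """Number of samples per bucket when the range is cut into equal widths."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_buckets
    counts = [0] * n_buckets
    for v in values:
        # The maximum value would land one past the end, so clamp it.
        idx = min(int((v - lo) / width), n_buckets - 1)
        counts[idx] += 1
    return counts

def equal_frequency_buckets(values, n_buckets):
    """Sorted samples split into buckets of equal size.
    Assumes len(values) is divisible by n_buckets."""
    ordered = sorted(values)
    size = len(ordered) // n_buckets
    return [ordered[i * size:(i + 1) * size] for i in range(n_buckets)]

print(equal_width_counts(data, 3))       # samples with values 1-3, 4-6, 7-9
print(equal_frequency_buckets(data, 3))  # eight samples per bucket
```

Note that in the equal-frequency split, identical values (the 3s and the 6s) can end up on both sides of a boundary.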

V-Optimal Histogram

For the ith bucket, its weighted variance is

$$SSE_i = \sum_{j=lb_i}^{ub_i} \left( f(j) - avg_i \right)^2$$

ub_i: the max. value in the ith bucket
lb_i: the min. value in the ith bucket
f(j) (j = lb_i, …, ub_i): the frequency of the jth value in the ith bucket
avg_i: the average frequency in the ith bucket

Accumulated weighted variance:

$$SSE = \sum_{i} SSE_i$$

Suppose the three buckets are [1,2], [3,5], and [6,9]
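The formula itself did not survive in the transcript. The sketch below assumes that a bucket's weighted variance is the sum of squared deviations of its value frequencies from the bucket's average frequency, which matches the symbol definitions given (f(j), avg_i, lb_i, ub_i). It uses the 24-sample dataset from the histogram example; the function name is illustrative.

```python
from collections import Counter

data = [1, 3, 4, 7, 2, 8, 3, 6, 3, 6, 8, 2, 1, 6, 3, 5, 3, 4, 7, 2, 6, 7, 2, 9]
freq = Counter(data)  # f(j): how often each value j occurs

def bucket_sse(freq, lb, ub):
    """Assumed definition: sum over j in [lb, ub] of (f(j) - avg)^2,
    where avg is the average frequency in the bucket."""
    fs = [freq.get(j, 0) for j in range(lb, ub + 1)]
    avg = sum(fs) / len(fs)
    return sum((f - avg) ** 2 for f in fs)

buckets = [(1, 2), (3, 5), (6, 9)]
sse_per_bucket = [bucket_sse(freq, lb, ub) for lb, ub in buckets]
total_sse = sum(sse_per_bucket)
print(sse_per_bucket, total_sse)
```

A V-Optimal histogram would compare this total across all candidate boundary placements and keep the placement with the smallest value.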

Sampling • Simple Random Sampling without Replacement (SRSWOR) • Draw s of the N records from dataset D (s<N), where no record can be drawn more than once • Simple Random Sampling with Replacement (SRSWR) • Each time a record is drawn from D, it is recorded and then placed back in D, so it may be drawn more than once • Cluster Sampling • Records in D are first divided into groups or clusters, and a random sample of these clusters is then selected (all records in the selected clusters are included in the sample) • Stratified Sampling • Records in D are divided into subgroups (or strata), and random sampling techniques are then used to select sample members from each stratum
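The four schemes map closely onto Python's standard `random` module. The sketch below is illustrative: the function names, the toy records, and the choice of proportional allocation for stratified sampling (the same fraction from every stratum) are assumptions, not something the slides specify.

```python
import random

def srswor(records, s, rng):
    """Simple random sample of s records without replacement."""
    return rng.sample(records, s)

def srswr(records, s, rng):
    """Simple random sample of s records with replacement."""
    return rng.choices(records, k=s)

def cluster_sample(clusters, n_clusters, rng):
    """Pick n_clusters whole clusters at random and keep all their records."""
    chosen = rng.sample(clusters, n_clusters)
    return [record for cluster in chosen for record in cluster]

def stratified_sample(strata, fraction, rng):
    """Draw the same fraction (at least one record) from every stratum."""
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample

rng = random.Random(0)                      # seeded for repeatability
D = list(range(30))                         # hypothetical record ids
clusters = [D[i:i + 5] for i in range(0, 30, 5)]
strata = {"youth": D[:20], "senior": D[20:]}

without = srswor(D, 6, rng)
with_repl = srswr(D, 6, rng)
by_cluster = cluster_sample(clusters, 2, rng)
by_stratum = stratified_sample(strata, 0.2, rng)
```

Stratified sampling guarantees that small strata are represented, which a plain random sample of the same size may not do.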

Figure: Raw data, with stratified sampling and cluster sampling of the same data

Discretization and Concept Hierarchy • Discretization • Reduce the number of values for a given continuous attribute by dividing the range of the attribute into intervals • Interval labels can then be used to replace actual data values • Supervised versus unsupervised • Supervised discretization process uses class information • Unsupervised discretization process does not use class information • Techniques • Binning (unsupervised) • Histogram (unsupervised) • Clustering (unsupervised) • Entropy-based discretization (supervised) • Concept Hierarchies • Reduce the data by collecting and replacing low level concepts by higher level concepts

Entropy-Based Discretization • Entropy (“Self-Information”) • A measure of the uncertainty associated with a random variable in information theory

Given m classes, c1, c2, …, cm, in dataset D, the entropy of D is

$$H(D) = -\sum_{i=1}^{m} p_i \log_2 p_i$$

p_i: the probability of class c_i in D, calculated as p_i = (# of records of c_i) / |D|
|D|: # of records in D

A dataset has 64 records, among which 16 records belong to c1 and 48 records belong to c2 p(c1) =16/64 =0.25 p(c2) = 48/64 = 0.75 H(D) = -[0.25·log2(0.25) + 0.75·log2(0.75)] = 0.811
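The calculation above can be verified with a few lines of Python. The function name `entropy` is illustrative; it takes the per-class record counts and applies H(D) = -Σ p_i·log2(p_i).

```python
import math

def entropy(class_counts):
    """Entropy (in bits) of a dataset given the record count of each class."""
    total = sum(class_counts)
    h = 0.0
    for count in class_counts:
        if count > 0:  # a class with no records contributes nothing
            p = count / total
            h -= p * math.log2(p)
    return h

h_d = entropy([16, 48])  # 16 records of c1, 48 records of c2
print(round(h_d, 3))
```

Entropy is 0 when all records belong to one class and reaches its maximum (1 bit for two classes) when the classes are equally likely.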

Entropy-Based Discretization (Cont.)

Given a dataset D, if D is discretized into two intervals D1 and D2 using boundary T, the entropy after partitioning is

$$H(D,T) = \frac{|D_1|}{|D|} H(D_1) + \frac{|D_2|}{|D|} H(D_2)$$

|D1|, |D2|: # of records in D1 and D2, respectively
H(D1), H(D2): entropy of D1 and D2, respectively

• The boundary that minimizes the entropy function over all possible boundaries is selected as a binary discretization. • The process is recursively applied to the partitions obtained until some stopping criterion is met • e.g. H(D) – H(D,T) < δ

A dataset D has 64 records, among which 16 records belong to c1 and 48 records belong to c2
(1) D is divided into two intervals: D1 has 45 records (2 belonging to c1 and 43 belonging to c2) and D2 has 19 records (14 belonging to c1 and 5 belonging to c2)
(2) D is divided into two intervals: D3 has 40 records (10 belonging to c1 and 30 belonging to c2) and D4 has 24 records (6 belonging to c1 and 18 belonging to c2)

Split (1):
H(D1) = -[2/45*log2(2/45) + 43/45*log2(43/45)] = 0.2623
H(D2) = -[14/19*log2(14/19) + 5/19*log2(5/19)] = 0.8315
H(D,T1) = (45/64)*0.2623 + (19/64)*0.8315 = 0.4313

Split (2):
H(D3) = -[10/40*log2(10/40) + 30/40*log2(30/40)] = 0.8113
H(D4) = -[6/24*log2(6/24) + 18/24*log2(18/24)] = 0.8113
H(D,T2) = (40/64)*0.8113 + (24/64)*0.8113 = 0.8113

H(D,T1) < H(D,T2), so T1 is better than T2
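The comparison of the two candidate boundaries can be reproduced as follows. This is a minimal sketch: the function names are illustrative, and each interval is described by its per-class record counts (c1, c2) taken from the example.

```python
import math

def entropy(class_counts):
    """Entropy (in bits) from per-class record counts."""
    total = sum(class_counts)
    return -sum(c / total * math.log2(c / total) for c in class_counts if c > 0)

def split_entropy(intervals):
    """Entropy after partitioning: size-weighted average of interval entropies."""
    total = sum(sum(counts) for counts in intervals)
    return sum(sum(counts) / total * entropy(counts) for counts in intervals)

# Each interval is (records of c1, records of c2).
split_t1 = [(2, 43), (14, 5)]    # D1 and D2
split_t2 = [(10, 30), (6, 18)]   # D3 and D4

h_t1 = split_entropy(split_t1)
h_t2 = split_entropy(split_t2)
best = "T1" if h_t1 < h_t2 else "T2"
print(round(h_t1, 4), round(h_t2, 4), best)
```

Split (2) leaves the class proportions in both intervals identical to those of D, so it removes no uncertainty and its entropy equals H(D).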