DirectCompute Accelerated Separable Filtering

Learn how DirectCompute accelerates separable filters in this talk from AMD's Favorite Effects. It covers tips for optimizing kernels, thread groups, and shared memory usage, along with bilinear HW filtering and packing in TGSM. Two case studies follow: high definition ambient occlusion (HDAO) computed at half resolution and repaired with a bilateral dilate & blur, and depth of field. Each compares DirectCompute against the Pixel Shader.

Presentation Transcript

DirectCompute Accelerated Separable Filtering
AMD's Favorite Effects

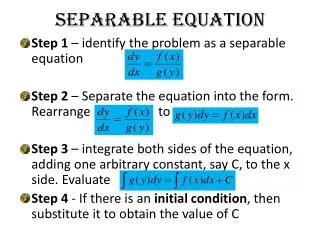

Separable Filters
• Much faster than executing a full 2D box filter
• Classically performed by the Pixel Shader
• Consists of a horizontal and a vertical pass
• Source image over-sampling increases with kernel size
• Shader is usually TEX instruction limited
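The saving from splitting a filter into two passes can be checked on the CPU. The following is a minimal sketch in Python, not the shader from the talk: it assumes a box filter with clamped borders, and all function names are hypothetical. It compares a horizontal plus vertical pass against the full 2D filter and counts fetches per pixel.

```python
def clamp(i, n):
    """Clamp an index to [0, n - 1], like a clamped texture address mode."""
    return min(max(i, 0), n - 1)

def blur_row(row, radius):
    """1D box blur of one row."""
    n = len(row)
    diameter = 2 * radius + 1
    return [sum(row[clamp(x + k, n)] for k in range(-radius, radius + 1)) / diameter
            for x in range(n)]

def transpose(image):
    return [list(column) for column in zip(*image)]

def separable_box_blur(image, radius):
    """Horizontal pass into an intermediate image, then a vertical pass."""
    horizontal = [blur_row(row, radius) for row in image]
    vertical = [blur_row(column, radius) for column in transpose(horizontal)]
    return transpose(vertical)

def full_box_blur(image, radius):
    """Reference: every pixel reads its whole 2D neighbourhood."""
    height, width = len(image), len(image[0])
    area = (2 * radius + 1) ** 2
    return [[sum(image[clamp(y + dy, height)][clamp(x + dx, width)]
                 for dy in range(-radius, radius + 1)
                 for dx in range(-radius, radius + 1)) / area
             for x in range(width)]
            for y in range(height)]

def fetches_per_pixel(radius):
    """(full 2D fetches, separable fetches) per output pixel."""
    diameter = 2 * radius + 1
    return diameter * diameter, 2 * diameter
```

For a radius of 3 the full filter reads 49 texels per pixel while the two passes read 14 in total, and the gap widens as the kernel grows.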

Separable? – Who Cares
• In many cases developers use this technique even though the filter may not actually be separable
• Results are often still acceptable
• Much faster than performing a real box filter
• Accelerates many bilateral cases

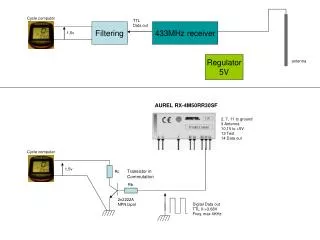

Typical Pipeline Steps
Source RT → Horizontal Pass → Intermediate RT → Vertical Pass → Destination RT

Use Bilinear HW filtering?
• Bilinear filter HW can halve the number of ALU and TEX instructions
• Just need to compute the correct sampling offsets
• Not possible with more advanced filters
• Usually because weighting is a dynamic operation
• Think about bilateral cases...
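One common way to compute the sampling offsets is to merge each pair of adjacent taps into a single bilinear fetch placed between the two texels. The sketch below assumes a 1D Gaussian kernel with static weights and an even radius; `sample_linear` stands in for the hardware bilinear filter, and all names are hypothetical.

```python
import math

def gaussian_weights(radius, sigma):
    """Normalized weights for offsets -radius..radius (index = offset + radius)."""
    raw = [math.exp(-(i * i) / (2.0 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(raw)
    return [w / total for w in raw]

def bilinear_taps(radius, sigma):
    """Merge taps (1,2), (3,4), ... on each side into one (offset, weight) tap."""
    if radius % 2 != 0:
        raise ValueError("this sketch pairs side taps, so radius must be even")
    w = gaussian_weights(radius, sigma)
    taps = [(0.0, w[radius])]  # centre tap stays a plain fetch
    for i in range(1, radius, 2):
        w1, w2 = w[radius + i], w[radius + i + 1]
        combined = w1 + w2
        # Position between texel i and i + 1 that reproduces the w1 : w2 ratio.
        offset = (i * w1 + (i + 1) * w2) / combined
        taps.append((offset, combined))
        taps.append((-offset, combined))
    return taps

def sample_linear(row, pos):
    """Linear interpolation between neighbouring texels (emulates bilinear HW in 1D)."""
    pos = min(max(pos, 0.0), len(row) - 1.0)
    i0 = int(math.floor(pos))
    i1 = min(i0 + 1, len(row) - 1)
    t = pos - i0
    return row[i0] * (1.0 - t) + row[i1] * t
```

With a radius of 4 this gives 5 fetches instead of 9. The trick relies on the weights being known up front, which is why it stops working once the weights depend on the sampled data, as in the bilateral case.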

Where to start with DirectCompute
• Is the Pixel Shader version TEX or ALU limited?
• You need to know what to optimize for!
• Use IHV tools to establish this
• Achieving peak performance is not easy – so write a highly configurable kernel
• Will allow you to easily experiment and fine tune

Thread Group Shared Memory (TGSM)
• TGSM can be used to reduce TEX ops
• TGSM can also be used to cache results
• Thus saving ALU ops too
• Load a sensible run length – base this on HW wavefront/warp size (AMD = 64, NVIDIA = 32)
• Choose a good common multiple (multiples of 64)
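The reduction in TEX ops can be estimated with simple arithmetic. This sketch assumes one 1D pass, one texture fetch per tap in the Pixel Shader, and a thread group that loads its run plus a border of one kernel radius on each side into TGSM. The function names are hypothetical.

```python
def pixel_shader_fetches_per_pixel(radius):
    """Each pixel fetches every tap of the kernel itself."""
    return 2 * radius + 1

def tgsm_fetches_per_pixel(radius, run_length=128):
    """The group fetches run_length + 2 * radius texels once and shares them."""
    return (run_length + 2 * radius) / run_length
```

For a radius of 8 and a run length of 128 this is 17 fetches per pixel against 1.125, which is why a TEX limited Pixel Shader is a good candidate for this approach.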

Kernel #1
• 128 threads load 128 texels
• 128 – ( Kernel Radius * 2 ) threads compute results
• Redundant compute threads

Avoid Redundant Threads
• Should ensure that all threads in a group have useful work to do – wherever possible
• Redundant threads will not be reassigned work from another group
• This would involve a lot of redundancy for a large kernel diameter
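The cost of the redundant threads in Kernel #1 is easy to quantify. A small sketch, assuming the 128 thread layout described for Kernel #1 (the function name is hypothetical):

```python
def kernel1_utilization(radius, group_size=128):
    """Return (compute threads, idle threads, useful fraction) for Kernel #1."""
    compute_threads = group_size - 2 * radius
    if compute_threads <= 0:
        raise ValueError("kernel diameter does not fit in the thread group")
    idle_threads = group_size - compute_threads
    return compute_threads, idle_threads, compute_threads / group_size
```

With a radius of 16, a quarter of the group sits idle during the compute phase, and the share grows linearly with the radius.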

Kernel #2
• 128 threads load 128 texels
• Kernel Radius * 2 threads load 1 extra texel each
• 128 threads compute results
• No redundant compute threads
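The Kernel #2 layout can be emulated on the CPU to check the indexing. This is a sketch only: the Python list `shared` stands in for TGSM, the loop over `tid` stands in for the threads of one group, and the function name is hypothetical. It assumes the kernel diameter is smaller than the group size.

```python
def run_thread_group(row, group_start, radius, weights, group_size=128):
    """Filter group_size pixels of one line, starting at pixel group_start."""
    n = len(row)
    shared = [0.0] * (group_size + 2 * radius)  # stands in for TGSM

    # Load phase: shared[j] holds pixel (group_start - radius + j).
    for tid in range(group_size):
        src = group_start - radius + tid
        shared[tid] = row[min(max(src, 0), n - 1)]
        if tid < 2 * radius:  # the first 2 * radius threads load one extra texel
            extra = src + group_size
            shared[group_size + tid] = row[min(max(extra, 0), n - 1)]

    # In HLSL a GroupMemoryBarrierWithGroupSync() would go here.

    # Compute phase: every thread produces one result, none are idle.
    return [sum(weights[k] * shared[tid + k] for k in range(2 * radius + 1))
            for tid in range(group_size)]
```

Every thread both loads and computes, so the group does the same work as Kernel #1 without leaving any threads idle.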

Multiple Pixels per Thread
• Allows for natural vectorization
• 4 works well on AMD HW
• Doesn't hurt performance on scalar HW
• Possible to cache TGSM reads in General Purpose Registers (GPRs)
• Quartering TGSM reads - absolute winner!!

Kernel #3
• 32 threads load 128 texels
• Kernel Radius * 2 threads load 1 extra texel each
• 32 threads compute 128 results
• Compute threads not a multiple of 64
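The saving in TGSM reads comes from neighbouring pixels sharing most of their taps. In the sketch below a thread reads its window from TGSM once into a local list (standing in for GPRs) and reuses it for all of its pixels. The function name and the read counter are hypothetical, added for illustration.

```python
def compute_pixels(shared, first_pixel, pixels_per_thread, weights):
    """Return (results, number of TGSM reads) for one thread."""
    radius = len(weights) // 2
    # One read per TGSM entry; adjacent pixels overlap in all but one tap.
    window = shared[first_pixel:first_pixel + pixels_per_thread + 2 * radius]
    tgsm_reads = len(window)
    results = [sum(w * window[p + k] for k, w in enumerate(weights))
               for p in range(pixels_per_thread)]
    return results, tgsm_reads
```

With a radius of 8, four pixels handled by one thread need 20 reads, where four single-pixel threads would need 68 between them. The ratio approaches the factor of four mentioned on the slide as the radius shrinks relative to the pixel count, and the factor of the kernel diameter as it grows.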

Multiple Lines per Thread Group
• Process multiple lines per thread group
• Better than one long line
• 2 or 4 works well
• Improved texture cache efficiency
• Compute threads back to a multiple of 64

Kernel #4
• 64 threads load 256 texels
• Kernel Radius * 4 threads load 1 extra texel each
• 64 threads compute 256 results
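The numbers on the Kernel #4 slide follow from three parameters: the run length per line, the pixels per thread, and the lines per thread group. The helper below is a hypothetical sketch of such a configurable layout, not the configuration code from the talk.

```python
def kernel_config(radius, run_length=128, pixels_per_thread=4, lines_per_group=2):
    """Derive thread and TGSM counts for one thread group."""
    threads = (run_length // pixels_per_thread) * lines_per_group
    main_texels = run_length * lines_per_group
    extra_texels = 2 * radius * lines_per_group  # border on both sides of every line
    return {
        "threads": threads,
        "main_texels": main_texels,
        "extra_loader_threads": extra_texels,  # one extra texel each
        "tgsm_entries": main_texels + extra_texels,
        "results": main_texels,
        "multiple_of_64": threads % 64 == 0,
    }
```

With the defaults, `kernel_config(8)` gives 64 threads, 256 texels, and 32 extra loads (Kernel Radius * 4). Note that one extra texel per thread only works while the extra load count does not exceed the thread count, which here means a radius of at most 16.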

Kernel Diameter
• Kernel diameter needs to be > 7 to see a DirectCompute win
• Otherwise the overhead cancels out the advantage
• The larger the kernel diameter the greater the win

Use Packing in TGSM
• Use packing to reduce storage space required in TGSM
• Only have 32 KB per SIMD
• Reduces reads/writes from TGSM
• Often a uint is sufficient for color filtering
• Use SM5.0 instructions f32tof16(), f16tof32()
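The packing idea can be tried out on the CPU. This sketch emulates storing two half-precision values in one 32-bit uint, using Python's `struct` half-float format in place of `f32tof16()` and `f16tof32()`. The helper names are hypothetical, and rounding may differ from what the GPU instructions do.

```python
import struct

def f32_to_f16_bits(value):
    """Bit pattern of value converted to a 16-bit float."""
    return struct.unpack("<H", struct.pack("<e", value))[0]

def f16_bits_to_f32(bits):
    """Float value of a 16-bit float bit pattern."""
    return struct.unpack("<e", struct.pack("<H", bits))[0]

def pack_half2(a, b):
    """Store a in the low 16 bits and b in the high 16 bits of one uint."""
    return f32_to_f16_bits(a) | (f32_to_f16_bits(b) << 16)

def unpack_half2(packed):
    return f16_bits_to_f32(packed & 0xFFFF), f16_bits_to_f32(packed >> 16)
```

Stored this way, a four-channel color takes two uints instead of four floats, halving both the TGSM footprint and the number of TGSM accesses.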

High Definition Ambient Occlusion
Depth + Normals = HDAO buffer
Original Scene * HDAO buffer = Final Scene

Perform at Half Resolution
• HDAO at full resolution is expensive
• Running at half resolution captures more occlusion – and is obviously much faster
• Problem: Artifacts are introduced when combined with the full resolution scene

Bilateral Dilate & Blur
• HDAO buffer doesn't match with scene
• A bilateral dilate & blur fixes the issue
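The slides do not spell out the dilate & blur kernel, so the following is only a generic sketch of the bilateral idea in 1D: a neighbour contributes to the blur only if its depth is close to the depth of the centre pixel. The hard depth threshold and the function name are assumptions, and the dilate step is not modelled.

```python
def bilateral_blur_1d(ao, depth, radius, depth_threshold):
    """Blur ao, skipping neighbours whose depth differs too much from the centre."""
    n = len(ao)
    out = []
    for x in range(n):
        total = 0.0
        weight_sum = 0.0
        for k in range(-radius, radius + 1):
            i = min(max(x + k, 0), n - 1)
            # The weight depends on sampled data, so it must be computed per tap.
            if abs(depth[i] - depth[x]) <= depth_threshold:
                total += ao[i]
                weight_sum += 1.0
        out.append(total / weight_sum)  # the centre tap always passes, so weight_sum >= 1
    return out
```

Because the weights depend on the sampled depth, the bilinear HW trick does not apply here, but caching the taps in TGSM still does.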

New Pipeline...
½ Res → Horizontal Pass → Intermediate UAV → Vertical Pass → Dilated & Blurred → Bilinear Upsample
Still much faster than performing at full res!

Pixel Shader vs DirectCompute
Tested on a range of AMD and NVIDIA DX11 HW, DirectCompute is between ~2.53x and ~3.17x faster than the Pixel Shader

Depth of Field
• Many techniques exist to solve this problem
• A common technique is to figure out how blurry a pixel should be
• Often called the Circle of Confusion (CoC)
• A Gaussian blur weighted by CoC is a pretty efficient way to implement this effect
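The slides do not give the exact weighting, so this is one possible reading of "a Gaussian blur weighted by CoC", sketched in 1D. Each neighbour's Gaussian weight is scaled by that neighbour's CoC (assumed to lie in the range 0 to 1), while the centre tap always keeps its full weight. The function name and the `sigma` parameter are hypothetical.

```python
import math

def coc_weighted_blur_1d(color, coc, radius, sigma):
    """Gaussian blur where each neighbour's weight is scaled by its CoC."""
    n = len(color)
    out = []
    for x in range(n):
        total = color[x]  # centre tap, Gaussian weight exp(0) = 1
        weight_sum = 1.0
        for k in range(-radius, radius + 1):
            if k == 0:
                continue
            i = min(max(x + k, 0), n - 1)
            weight = math.exp(-(k * k) / (2.0 * sigma * sigma)) * coc[i]
            total += weight * color[i]
            weight_sum += weight
        out.append(total / weight_sum)
    return out
```

Where every CoC is 0 the image passes through unchanged, and where every CoC is 1 the result is an ordinary normalized Gaussian blur.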

The Pipeline...
Horizontal Pass → Intermediate UAV → Vertical Pass (weighted by CoC)

Shogun 2: DoF OFF

Shogun 2: DoF ON

Pixel Shader vs DirectCompute
Tested on a range of AMD and NVIDIA DX11 HW, DirectCompute is between ~1.48x and ~1.86x faster than the Pixel Shader

Summary
• DirectCompute greatly accelerates larger kernel diameter filters
• Allows for filtering at full resolution
• For access to source code:
• HDAO11: jon.story@amd.com
• DoF11: nicolas.thibieroz@amd.com

Questions?
takahiro.harada@amd.com
holger.gruen@amd.com
jon.story@amd.com
Please fill in the feedback forms!