Protein Homology Detection Using String Alignment Kernels

Jean-Phillippe Vert, Tatsuya Akutsu. Protein Homology Detection Using String Alignment Kernels. Learning Sequence Based Protein Classification. Problem: classification of protein sequence data into families and superfamilies

Protein Homology Detection Using String Alignment Kernels

E N D

Presentation Transcript

Jean-Phillippe Vert, Tatsuya Akutsu Protein Homology Detection Using String Alignment Kernels

Learning Sequence Based Protein Classification • Problem: classification of protein sequence data into families and superfamilies • Motivation: Many proteins have been sequenced, but often structure/function remains unknown • Motivation: infer structure/function from sequence-based classification

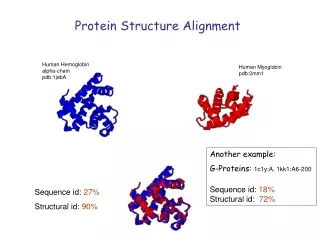

Sequence Data Versus Structure and function Sequences for four chains of human hemoglobin Tertiary Structure >1A3N:A HEMOGLOBIN VLSPADKTNVKAAWGKVGAHAGEYGAEALERMFLSFPTTKTYFPHFDLSHGSAQVKGHGK KVADALTNAVAHVDDMPNALSALSDLHAHKLRVDPVNFKLLSHCLLVTLAAHLPAEFTPA VHASLDKFLASVSTVLTSKYR >1A3N:B HEMOGLOBIN VHLTPEEKSAVTALWGKVNVDEVGGEALGRLLVVYPWTQRFFESFGDLSTPDAVMGNPKV KAHGKKVLGAFSDGLAHLDNLKGTFATLSELHCDKLHVDPENFRLLGNVLVCVLAHHFGK EFTPPVQAAYQKVVAGVANALAHKYH >1A3N:C HEMOGLOBIN VLSPADKTNVKAAWGKVGAHAGEYGAEALERMFLSFPTTKTYFPHFDLSHGSAQVKGHGK KVADALTNAVAHVDDMPNALSALSDLHAHKLRVDPVNFKLLSHCLLVTLAAHLPAEFTPA VHASLDKFLASVSTVLTSKYR >1A3N:D HEMOGLOBIN VHLTPEEKSAVTALWGKVNVDEVGGEALGRLLVVYPWTQRFFESFGDLSTPDAVMGNPKV KAHGKKVLGAFSDGLAHLDNLKGTFATLSELHCDKLHVDPENFRLLGNVLVCVLAHHFGK EFTPPVQAAYQKVVAGVANALAHKYH Function: oxygen transport

Structural Hierarchy • SCOP: Structural Classification of Proteins • Interested in superfamily-level homology – remoteevolutionary relationship Difficult !!

Learning Problem • Reduce to binary classification problem: positive (+) if example belongs to a family (e.g. G proteins) or superfamily (e.g. nucleoside triphosphate hydrolases), negative (-) otherwise • Focus on remote homology detection • Use supervised learning approach to train a classifier Labeled Training Sequences Classification Rule Learning Algorithm

Two supervised learning approaches to classification • Generative model approach • Build a generativemodel for a single protein family; classify each candidate sequence based on itsfitto the model • Only uses positive training sequences • Discriminative approach • Learning algorithm tries to learn decision boundary between positive and negative examples • Uses both positive and negative training sequences

Class Secondary Structure Prediction Fold Threading Super Family HMM, PSI-BLAST, SVM Family SW, BLAST, FASTA Targets of the current methods

Discriminative Learning Discriminative approach Train on both positive and negative examples to learn classifier Modern computational learning theory • Goal: learn a classifier that generalizes well to new examples • Do not use training data to estimate parameters of probability distribution – “curse of dimensionality”

SVM for protein classification • Want to define feature map from space of protein sequences to vector space • Goals: • Computational efficiency • Competitive performance with known methods • No reliance on generative model – general method for sequence-based classification problems

Summary of the current kernel methods • Feature vector from HMM • Fisher kernel(Jaakkola et al., 2000) • Marginalized kernel (Tsuda et al., 2002) • Feature vector from sequence • Spectrum kernel (Leslie et al., 2002) • Mismatch kernel (Leslie et al., 2003) • Feature vector from other score • SVM pairwise (Liao & Noble, 2002)

String Alignment Kernels • Observation: SW alignment score provides measure of similarity with biological knowledge on protein evolution. • It can not be used as kernel because of lack of positive definiteness. • A family of local alignment (LA) kernels that mimic SW score are presented .

LA Kernels Choose Feature Vector representation Get Kernel by inner product of vectors Other Kernels LA Kernel Measure similarity Get valid kernel

LA Kernels • Pair score Kaβ(x,y) • Gap kernel Kgβ(x,y) for penalty gap model Β>=0, s is a symmetric similarity score. with d is gap opening and e is extension costs

LA Kernels • Kernel convolution: • For n>=1, the string kernel can be expressed as K0=1 K0 is initial part, succession of n aligned residues Ka β with n-1 possible gap Kgβ and a terminal part K0.

LA Kernels It is convergent for any x and y because of finite number of non-null terms. It is a point-wise limit of Mercer Kernels

LA with SW score • π:local alignment • p(x,y,π): score of local alignment π over x,y. • Π:set of all possible local alignment over x,y.

Why SW can not be kernel • 1. SW only keep the best alignment instead of sum of alignment of x,y. • 2. Logrithm can destroy the property of being postive definite.

Example LA Kernel SW score

SVM-pairwise LA kernel x y SW Score y x Pair HMM (0.2, 0.3, 0.1, 0.01) (0.9, 0.05, 0.3, 0.2) Inner Product 0.253 0.227

Diagonal Dominant Issue • It is the fact that K(x,x) is easily orders of magnitude larger than K(x,y) of similar sequence which bias the performance of SVM.

Diagonal Dominant Issue (1) The eigen kernel LA-eig : a. By subtracting from the diagonal the smallest negative eigenvalue of the training Gram matrix, if there are negative eigenvalues. b. LA-eig, is equal to except eventually on the diagonal. (2) The empirical kernel map LA-ekm

Methods • Implementation • The computation of the kernel [and therefore of ] with a complexity in O(|x| · |y|), Using dynamic programming by a slight modification of the SW algorithm. • Normaliztion • Dataset • 4352 sequences extracted from the Astral database (www.cs.columbia.edu/compbio/svmpairwise), grouped into families and superfamilies.