Parameter estimate in IBM Models:



Parameter estimate in IBM Models:

E N D

Presentation Transcript

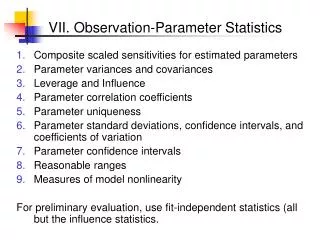

Parameter estimate in IBM Models: Ling 572 Fei Xia Week ??

Outline • IBM Model 1 Review: (from LING571) • Word alignment • Modeling • Training: formula • Formulae

IBM Model Basics
• Classic paper: Brown et al. (1993)
• Translation: F → E (or Fr → Eng)
• Resource required:
  • Parallel data (a set of “sentence” pairs)
• Main concepts:
  • Source channel model
  • Hidden word alignment
  • EM training

Intuition
• Sentence pairs (assume the word mapping is one-to-one):
  • (1) S: a b c d e   T: l m n o p
  • (2) S: c a e   T: p n m
  • (3) S: d a c   T: n p l
• From these pairs alone we can infer (b, o), (d, l), (e, m), and either (a, p), (c, n) or (a, n), (c, p).
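The inference above can be checked mechanically. This is a brute-force illustration of the intuition only, not part of the IBM models; the name `consistent_mappings` is hypothetical. It tries every one-to-one mapping from source words to target words and keeps those under which each source sentence maps exactly onto the word set of its target sentence.

```python
from itertools import permutations

# The three toy sentence pairs from the slide: (source words, target words).
PAIRS = [
    ("a b c d e".split(), "l m n o p".split()),
    ("c a e".split(), "p n m".split()),
    ("d a c".split(), "n p l".split()),
]


def consistent_mappings(pairs):
    """Return every one-to-one source->target mapping that is consistent
    with all pairs, i.e. maps each source sentence onto its target's words."""
    src_vocab = sorted({w for s, _ in pairs for w in s})
    tgt_vocab = sorted({w for _, t in pairs for w in t})
    results = []
    for perm in permutations(tgt_vocab):
        mapping = dict(zip(src_vocab, perm))
        if all({mapping[w] for w in s} == set(t) for s, t in pairs):
            results.append(mapping)
    return results


for m in consistent_mappings(PAIRS):
    print(sorted(m.items()))
```

Exactly two mappings survive, matching the two readings on the slide: the overlap of pairs (2) and (3) pins {a, c} to {n, p} but cannot decide which goes where.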

Source channel model for MT
• An Eng sent E, generated with prob P(E), passes through a noisy channel P(F | E) and comes out as a Fr sent F.
• Two types of parameters:
  • Language model: P(E)
  • Translation model: P(F | E)
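The noisy-channel view leads to the standard decoding rule. This derivation is added for illustration and is not spelled out on the slide: by Bayes' rule, and because P(F) does not depend on E, it drops out of the argmax.

```latex
\hat{E} = \arg\max_{E} P(E \mid F)
        = \arg\max_{E} \frac{P(E)\, P(F \mid E)}{P(F)}
        = \arg\max_{E} P(E)\, P(F \mid E)
```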

Word alignment
• a(j) = i, also written $a_j = i$
• $a = (a_1, \ldots, a_m)$
• Ex:
  • F: $f_1\ f_2\ f_3\ f_4\ f_5$
  • E: $e_1\ e_2\ e_3\ e_4$
  • $a_4 = 3$
  • a = (0, 1, 1, 3, 2), so $f_1$ is generated by the NULL word $e_0$
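One concrete way to hold such an alignment is a plain list with the NULL word stored at index 0 of the English sentence. This is a minimal sketch; the variable names and the `links` helper are hypothetical.

```python
# The slide's example: m = 5 Fr words, l = 4 Eng words, a = (0, 1, 1, 3, 2).
F = ["f1", "f2", "f3", "f4", "f5"]
E = ["NULL", "e1", "e2", "e3", "e4"]  # index 0 holds the NULL word e0
a = [0, 1, 1, 3, 2]                    # a[j - 1] stores a_j (Python is 0-based)


def links(F, E, a):
    """Return the (f_j, e_{a_j}) pairs encoded by alignment a."""
    assert len(a) == len(F), "exactly one Eng position per Fr word"
    assert all(0 <= i < len(E) for i in a), "each a_j must lie in 0..l"
    return [(F[j], E[a[j]]) for j in range(len(F))]


print(links(F, E, a))
```

Because each Fr position stores exactly one Eng index, the "generated by exactly one Eng word" constraint is built into the representation.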

Word alignment
• An alignment a is a function from Fr word positions to Eng word positions: a(j) = i means that $f_j$ is generated by $e_i$.
• The constraint: each Fr word is generated by exactly one Eng word (including the NULL word $e_0$):
  $a_j \in \{0, 1, \ldots, l\}$ for $j = 1, \ldots, m$

Notation
• E: the Eng sentence, $E = e_1 \ldots e_l$
• $e_i$: the i-th Eng word
• F: the Fr sentence, $F = f_1 \ldots f_m$
• $f_j$: the j-th Fr word
• $e_0$: the Eng NULL word
• $f_0$: the Fr NULL word
• $a_j$: the position of the Eng word that generates $f_j$

Notation (cont)
• l: Eng sentence length
• m: Fr sentence length
• i: Eng word position
• j: Fr word position
• e: an Eng word
• f: a Fr word

Generative process
• To generate F from E:
  • Pick a length m for F, with prob P(m | l).
  • Choose an alignment a, with prob P(a | E, m).
  • Generate the Fr sent given the Eng sent and the alignment, with prob P(F | E, a, m).
• Another way to look at it:
  • Pick a length m for F, with prob P(m | l).
  • For j = 1 to m:
    • Pick an Eng word index $a_j$, with prob $P(a_j \mid j, m, l)$.
    • Pick a Fr word $f_j$ according to the Eng word $e_i$, where $a_j = i$, with prob $P(f_j \mid e_i)$.
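The second view of the generative process translates almost line by line into a sampler. This is a minimal sketch under stated assumptions: the function names, the dict layouts, and the toy probabilities are all hypothetical, and the alignment distribution is passed in as a function so that Model 1's uniform choice is just one option.

```python
import random


def sample_french(E, length_prob, align_prob, trans_prob, rng):
    """Sample a Fr sentence F and alignment a from E, step by step.

    E           -- Eng words with E[0] = "NULL", so l = len(E) - 1
    length_prob -- dict m -> P(m | l) for this l
    align_prob  -- function (i, j, m, l) -> P(a_j = i | j, m, l)
    trans_prob  -- dict e -> {f: P(f | e)}
    rng         -- a random.Random instance (seed it for reproducibility)
    """
    l = len(E) - 1
    # Pick a length m for F with prob P(m | l).
    lengths = list(length_prob)
    m = rng.choices(lengths, weights=[length_prob[k] for k in lengths])[0]
    F, a = [], []
    positions = list(range(l + 1))  # position 0 is the NULL word
    for j in range(1, m + 1):
        # Pick an Eng word index a_j with prob P(a_j | j, m, l).
        weights = [align_prob(i, j, m, l) for i in positions]
        i = rng.choices(positions, weights=weights)[0]
        # Pick a Fr word f_j according to e_i with prob P(f_j | e_i).
        candidates = list(trans_prob[E[i]])
        f = rng.choices(candidates, weights=[trans_prob[E[i]][w] for w in candidates])[0]
        a.append(i)
        F.append(f)
    return F, a


def uniform_alignment(i, j, m, l):
    """Model 1's choice: every position 0..l is equally likely."""
    return 1.0 / (l + 1)


# Hypothetical toy parameters for l = 2, with F always of length 2.
E = ["NULL", "the", "house"]
t = {"NULL": {"la": 0.5, "de": 0.5},
     "the": {"la": 0.9, "le": 0.1},
     "house": {"maison": 1.0}}
F, a = sample_french(E, {2: 1.0}, uniform_alignment, t, random.Random(0))
print(F, a)
```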

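Approximation
• Fr sent length depends only on Eng sent length: $P(m \mid E) = P(m \mid l)$
• Fr word depends only on the Eng word that generates it: $P(F \mid E, a, m) = \prod_{j=1}^{m} P(f_j \mid e_{a_j})$
• Estimating P(a | E, m): all alignments are equally likely: $P(a \mid E, m) = \frac{1}{(l+1)^m}$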
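Plugging the three approximations into the first view of the generative process (chain rule over length, alignment, and Fr words) gives the joint probability of a Fr sentence and one alignment:

```latex
\begin{aligned}
P(F, a \mid E) &= P(m \mid E)\; P(a \mid E, m)\; P(F \mid E, a, m) \\
               &= P(m \mid l) \cdot \frac{1}{(l+1)^m} \cdot \prod_{j=1}^{m} P(f_j \mid e_{a_j})
\end{aligned}
```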

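Final formula and parameters for Model 1
$$P(F \mid E) = \sum_{a} P(F, a \mid E) = \frac{P(m \mid l)}{(l+1)^m} \prod_{j=1}^{m} \sum_{i=0}^{l} t(f_j \mid e_i)$$
• Two types of parameters:
  • Length prob: P(m | l)
  • Translation prob: $P(f_j \mid e_i)$, or $t(f_j \mid e_i)$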
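The step from a sum over all $(l+1)^m$ alignments to a product of m sums is what makes Model 1 cheap to evaluate. The sketch below, with hypothetical function names and made-up translation probabilities stored as a dict keyed by (f, e), computes P(F | E) both ways so the two can be compared.

```python
from itertools import product
from math import prod


def p_f_given_e_bruteforce(F, E, t, p_len):
    """Sum P(F, a | E) over all (l+1)^m alignments; E[0] is the NULL word."""
    l, m = len(E) - 1, len(F)
    total = 0.0
    for a in product(range(l + 1), repeat=m):
        total += prod(t[(F[j], E[a[j]])] for j in range(m))
    return p_len * total / (l + 1) ** m


def p_f_given_e_fast(F, E, t, p_len):
    """Model 1 closed form: P(m|l) / (l+1)^m * prod_j sum_i t(f_j | e_i)."""
    l, m = len(E) - 1, len(F)
    return p_len / (l + 1) ** m * prod(sum(t[(f, e)] for e in E) for f in F)


# Hypothetical toy values; P(m | l) is set to 1 for simplicity.
E = ["NULL", "the", "house"]
F = ["la", "maison"]
t = {("la", "NULL"): 0.3, ("maison", "NULL"): 0.1,
     ("la", "the"): 0.7, ("maison", "the"): 0.2,
     ("la", "house"): 0.2, ("maison", "house"): 0.6}
print(p_f_given_e_bruteforce(F, E, t, 1.0), p_f_given_e_fast(F, E, t, 1.0))
```

The brute-force version costs on the order of $m (l+1)^m$ operations, the closed form on the order of $m (l+1)$.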

Training
• Mathematically motivated:
  • Having an objective function to optimize (the likelihood of the parallel training data)
  • Using several clever tricks
• The resulting formulae:
  • are intuitively expected
  • can be calculated efficiently
• EM algorithm:
  • Hill climbing: each iteration is guaranteed not to decrease the objective function.
  • It is not guaranteed to reach the global optimum.

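Training: Fractional counts
• Let Ct(f, e) be the fractional count of the (f, e) pair in the training data, given the alignment prob P:
$$Ct(f, e) = \sum_{(E, F)} \sum_{a} P(a \mid E, F) \cdot \mathrm{count}(f, e; a, E, F)$$
  • P(a | E, F): the alignment prob
  • count(f, e; a, E, F): the actual count of times e and f are linked in (E, F) by alignment a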

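Estimating P(a | E, F)
• We could list all the alignments and estimate P(a | E, F) from them:
$$P(a \mid E, F) = \frac{P(F, a \mid E)}{P(F \mid E)} = \frac{P(F, a \mid E)}{\sum_{a'} P(F, a' \mid E)}$$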

Formulae so far
• New estimate for t(f | e):
$$t(f \mid e) = \frac{Ct(f, e)}{\sum_{f'} Ct(f', e)}$$
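Taken literally, these formulae give one EM iteration: list every alignment, compute P(a | E, F), accumulate Ct(f, e), then normalize. This is a minimal sketch; the function name and the dict-keyed-by-(f, e) layout are hypothetical, and each E is assumed to carry "NULL" at position 0. Under Model 1 the factor $P(m \mid l)/(l+1)^m$ is the same for every alignment of a given pair, so it cancels in P(a | E, F) and is left out.

```python
from collections import defaultdict
from itertools import product
from math import prod


def em_step_bruteforce(corpus, t):
    """One Model 1 EM iteration by enumerating all alignments.

    corpus -- list of (E, F) word lists, each E starting with "NULL"
    t      -- dict (f, e) -> current t(f | e) for every co-occurring pair
    """
    counts = defaultdict(float)  # fractional counts Ct(f, e)
    for E, F in corpus:
        alignments = list(product(range(len(E)), repeat=len(F)))
        # P(F, a | E) up to the constant P(m|l) / (l+1)^m, which cancels.
        scores = [prod(t[(f, E[i])] for f, i in zip(F, a)) for a in alignments]
        z = sum(scores)
        for a, s in zip(alignments, scores):
            p_a = s / z  # P(a | E, F)
            for f, i in zip(F, a):
                counts[(f, E[i])] += p_a  # alignment a links f and E[i] here
    # New estimate: t(f | e) = Ct(f, e) / sum over f' of Ct(f', e).
    totals = defaultdict(float)
    for (f, e), c in counts.items():
        totals[e] += c
    return {(f, e): c / totals[e] for (f, e), c in counts.items()}


# Hypothetical two-pair corpus and a uniform starting point.
corpus = [(["NULL", "the", "house"], ["la", "maison"]),
          (["NULL", "the"], ["la"])]
t0 = {(f, e): 0.5 for f in ["la", "maison"] for e in ["NULL", "the", "house"]}
t1 = em_step_bruteforce(corpus, t0)
print(t1[("la", "the")])
```

After one step t(la | the) rises from 0.5 to 5/7, because "the" co-occurs with "la" in both pairs. The cost per pair grows as $(l+1)^m$, which is why enumeration only works on toy data.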

The algorithm
1. Start with an initial estimate of t(f | e): e.g., a uniform distribution.
2. Calculate P(a | F, E).
3. Calculate Ct(f, e), then normalize to get t(f | e).
4. Repeat Steps 2-3 until the “improvement” falls below a threshold.

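No need to enumerate all word alignments
• Luckily, for Model 1, there is a way to calculate Ct(f, e) efficiently. Because each $a_j$ is chosen independently, the posterior that $f_j$ is generated by $e_i$ is $t(f_j \mid e_i) / \sum_{i'=0}^{l} t(f_j \mid e_{i'})$, so
$$Ct(f, e) = \sum_{(E, F)} \sum_{j=1}^{m} \sum_{i=0}^{l} \delta(f, f_j)\, \delta(e, e_i)\, \frac{t(f \mid e)}{\sum_{i'=0}^{l} t(f \mid e_{i'})}$$
  where δ(x, y) = 1 if x = y and 0 otherwise.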
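Putting the efficient count together with the loop from the algorithm slide gives a compact Model 1 trainer. This is a minimal sketch under stated assumptions, not a reference implementation: the function name is hypothetical, each E is assumed to start with "NULL", no sentence is empty, t starts uniform over the Fr vocabulary, and a fixed iteration count stands in for the "improvement" threshold. It also records the log-likelihood (dropping the constant term $\log P(m \mid l)$) so the EM non-decrease property can be observed.

```python
from collections import defaultdict
from math import log


def em_model1(corpus, iterations=10):
    """Train Model 1 translation probs t(f | e) with EM, no enumeration.

    corpus -- list of (E, F) word lists; each E starts with "NULL"
    Returns (t, history): t maps (f, e) -> t(f | e); history holds the
    log-likelihood of the corpus under the parameters at each iteration's start.
    """
    f_vocab = {f for _, F in corpus for f in F}
    e_vocab = {e for E, _ in corpus for e in E}
    # Step 1: uniform initial estimate t(f | e) = 1 / |Fr vocabulary|.
    t = {(f, e): 1.0 / len(f_vocab) for f in f_vocab for e in e_vocab}
    history = []
    for _ in range(iterations):
        counts = defaultdict(float)  # Ct(f, e)
        total = defaultdict(float)   # sum over f of Ct(f, e)
        ll = 0.0
        for E, F in corpus:
            for f in F:
                z = sum(t[(f, e)] for e in E)
                ll += log(z / len(E))  # log of (1/(l+1)) * sum_i t(f_j | e_i)
                for e in E:
                    c = t[(f, e)] / z  # posterior that this f_j came from e
                    counts[(f, e)] += c
                    total[e] += c
        history.append(ll)
        # Normalize the fractional counts to get the new t(f | e).
        t = {(f, e): counts[(f, e)] / total[e] for (f, e) in t}
    return t, history


# Hypothetical two-pair corpus.
corpus = [(["NULL", "the", "house"], ["la", "maison"]),
          (["NULL", "the"], ["la"])]
t5, ll = em_model1(corpus, iterations=5)
print(round(t5[("maison", "house")], 3), [round(x, 4) for x in ll])
```

The first iteration reproduces the brute-force estimate exactly (t(la | the) = 5/7 on this corpus), because the per-word posterior is just the marginal of P(a | E, F).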


Summary of Model 1
• Modeling:
  • Pick the length of F with prob P(m | l).
  • For each position j:
    • Pick an Eng word position $a_j$, with prob $P(a_j \mid j, m, l)$.
    • Pick a Fr word $f_j$ according to the Eng word $e_i$, with prob $t(f_j \mid e_i)$, where $i = a_j$.
  • The resulting formula can be calculated efficiently.
• Training: EM algorithm. The update can be done efficiently.
• Finding the best alignment: can be done easily, because each $a_j$ is chosen independently: $\hat{a}_j = \arg\max_{i} t(f_j \mid e_i)$.
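Since the alignment prior is uniform and the word probabilities factorize over j, the best alignment is a per-word argmax. A minimal sketch, with a hypothetical function name and made-up probabilities; ties go to the smallest position because Python's `max` returns the first maximal element.

```python
def best_alignment(E, F, t):
    """Most probable Model 1 alignment: a_j = argmax_i t(f_j | e_i).

    E[0] is assumed to be "NULL"; t maps (f, e) -> t(f | e), and a missing
    pair is treated as probability 0.
    """
    return [max(range(len(E)), key=lambda i: t.get((f, E[i]), 0.0)) for f in F]


# Hypothetical trained probabilities.
t = {("la", "NULL"): 0.1, ("la", "the"): 0.8, ("la", "house"): 0.1,
     ("maison", "NULL"): 0.1, ("maison", "the"): 0.1, ("maison", "house"): 0.8}
print(best_alignment(["NULL", "the", "house"], ["la", "maison"], t))
```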

EM algorithm
• EM: expectation maximization
• In a model with hidden states (e.g., word alignment), how can we estimate model parameters?
• EM does the following:
  • E-step: Take the current model parameters (an initial estimate on the first iteration) and calculate the expected values of the hidden data.
  • M-step: Use the expected values to re-estimate the parameters so as to maximize the likelihood of the training data.

Training Summary
• Mathematically motivated:
  • Having an objective function to optimize (the likelihood of the parallel training data)
  • Using several clever tricks
• The resulting formulae:
  • are intuitively expected
  • can be calculated efficiently
• EM algorithm:
  • Hill climbing: each iteration is guaranteed not to decrease the objective function.
  • It is not guaranteed to reach the global optimum.