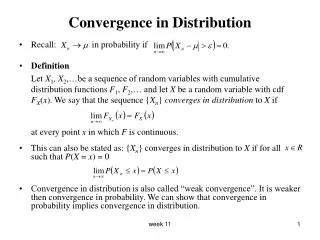

Convergence in Distribution



Convergence in Distribution
• Recall: $X_n \to X$ in probability if, for every $\varepsilon > 0$, $P(|X_n - X| > \varepsilon) \to 0$ as $n \to \infty$.
• Definition: Let $X_1, X_2, \ldots$ be a sequence of random variables with cumulative distribution functions $F_1, F_2, \ldots$ and let $X$ be a random variable with cdf $F_X(x)$. We say that the sequence $\{X_n\}$ converges in distribution to $X$ if $\lim_{n \to \infty} F_n(x) = F_X(x)$ at every point $x$ at which $F_X$ is continuous.
• This can also be stated as: $\{X_n\}$ converges in distribution to $X$ if $\lim_{n \to \infty} P(X_n \le x) = P(X \le x)$ for all $x$ such that $P(X = x) = 0$.
• Convergence in distribution is also called "weak convergence". It is weaker than convergence in probability: convergence in probability implies convergence in distribution.
week 11
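A standard illustration (not taken from the slides) of why the definition only requires convergence at continuity points of the limiting cdf. The degenerate variables $X_n = 1/n$ and $X = 0$ are chosen here purely for illustration:

```latex
Let $P(X_n = 1/n) = 1$ and $P(X = 0) = 1$. Then
\[
F_n(x) =
\begin{cases}
0, & x < 1/n, \\
1, & x \ge 1/n,
\end{cases}
\qquad
F_X(x) =
\begin{cases}
0, & x < 0, \\
1, & x \ge 0.
\end{cases}
\]
For $x < 0$: $F_n(x) = 0 = F_X(x)$ for every $n$.
For $x > 0$: $F_n(x) = 1 = F_X(x)$ as soon as $n \ge 1/x$.
At $x = 0$: $F_n(0) = 0$ for every $n$, while $F_X(0) = 1$.
```

The only point where $F_n$ fails to converge to $F_X$ is $x = 0$, which is a discontinuity point of $F_X$ (since $P(X = 0) = 1 > 0$), so $\{X_n\}$ still converges in distribution to $X$.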

Simple Example
• Assume $n$ is a positive integer. Further, suppose that the probability mass function of $X_n$ is: [formula missing from the transcript]. Note that this is a valid p.m.f. for $n \ge 2$.
• The sequence $\{X_n\}_{n \ge 2}$ converges in distribution to $X$, which has p.m.f. $P(X = 0) = P(X = 1) = \tfrac{1}{2}$, i.e. $X \sim \text{Bernoulli}(1/2)$.
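The slide's formula for the p.m.f. of $X_n$ did not survive in this transcript. The sketch below therefore uses a hypothetical p.m.f., $P(X_n = 0) = 1/2 + 1/n$ and $P(X_n = 1) = 1/2 - 1/n$, chosen only because it is valid exactly when $n \ge 2$ and has the stated Bernoulli(1/2) limit. It may not be the p.m.f. the slide used. The function names are likewise illustrative.

```python
from fractions import Fraction


def cdf_xn(x, n):
    """CDF of the ASSUMED X_n: P(X_n=0) = 1/2 + 1/n, P(X_n=1) = 1/2 - 1/n."""
    if n < 2:
        raise ValueError("the assumed p.m.f. is only valid for n >= 2")
    if x < 0:
        return Fraction(0)
    if x < 1:
        return Fraction(1, 2) + Fraction(1, n)
    return Fraction(1)


def cdf_x(x):
    """CDF of the limit X ~ Bernoulli(1/2)."""
    if x < 0:
        return Fraction(0)
    if x < 1:
        return Fraction(1, 2)
    return Fraction(1)


# x = 1/2 is a continuity point of the limiting cdf (P(X = 1/2) = 0).
x = Fraction(1, 2)
ns = (2, 10, 100, 1000)
gaps = [abs(cdf_xn(x, n) - cdf_x(x)) for n in ns]

for n, gap in zip(ns, gaps):
    print(f"n = {n:5d}   |F_n(1/2) - F(1/2)| = {gap}")
```

Under this assumed p.m.f. the gap at any continuity point in $(0, 1)$ is exactly $1/n$, and it is zero elsewhere, so $F_n(x) \to F_X(x)$ at every continuity point.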

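Example
• $X_1, X_2, \ldots$ is a sequence of i.i.d. random variables with $E(X_i) = \mu < \infty$.
• Let $\bar{X}_n = \frac{1}{n} \sum_{i=1}^{n} X_i$. Then, by the WLLN, for any $a > 0$, $P(|\bar{X}_n - \mu| > a) \to 0$ as $n \to \infty$.
• So…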

Continuity Theorem for MGFs
• Let $X$ be a random variable such that for some $t_0 > 0$ we have $m_X(t) < \infty$ for $t \in (-t_0, t_0)$. Further, if $X_1, X_2, \ldots$ is a sequence of random variables with $m_{X_n}(t) < \infty$ and $\lim_{n \to \infty} m_{X_n}(t) = m_X(t)$ for all $t \in (-t_0, t_0)$, then $\{X_n\}$ converges in distribution to $X$.
• This theorem can also be stated as follows: Let $F_n$ be a sequence of cdfs with corresponding mgfs $m_n$. Let $F$ be a cdf with mgf $m$. If $m_n(t) \to m(t)$ for all $t$ in an open interval containing zero, then $F_n(x) \to F(x)$ at all continuity points of $F$.
• Example: the Poisson distribution can be approximated by a Normal distribution for large $\lambda$.

Example to illustrate the Continuity Theorem
• Let $\lambda_1, \lambda_2, \ldots$ be an increasing sequence with $\lambda_n \to \infty$ as $n \to \infty$ and let $\{X_n\}$ be a sequence of Poisson random variables with the corresponding parameters. We know that $E(X_n) = \lambda_n = V(X_n)$.
• Let $Z_n = (X_n - \lambda_n)/\sqrt{\lambda_n}$; then we have that $E(Z_n) = 0$, $V(Z_n) = 1$.
• We can show that the mgf of $Z_n$ converges to the mgf of a standard Normal random variable.
• Hence, by the continuity theorem, $Z_n$ converges in distribution to $Z \sim N(0,1)$.
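The mgf computation behind this example can be sketched as follows. It uses the standard Poisson mgf $m_{X_n}(t) = \exp\{\lambda_n(e^t - 1)\}$ and the standard Normal mgf $e^{t^2/2}$, neither of which is written out on the slide:

```latex
\begin{align*}
m_{Z_n}(t)
  &= E\!\left[e^{t (X_n - \lambda_n)/\sqrt{\lambda_n}}\right]
   = e^{-t\sqrt{\lambda_n}}\, m_{X_n}\!\left(\frac{t}{\sqrt{\lambda_n}}\right) \\
  &= \exp\!\left\{-t\sqrt{\lambda_n}
       + \lambda_n\left(e^{t/\sqrt{\lambda_n}} - 1\right)\right\} \\
  &= \exp\!\left\{-t\sqrt{\lambda_n}
       + \lambda_n\left(\frac{t}{\sqrt{\lambda_n}}
       + \frac{t^2}{2\lambda_n}
       + \frac{t^3}{6\lambda_n^{3/2}} + \cdots\right)\right\} \\
  &= \exp\!\left\{\frac{t^2}{2} + \frac{t^3}{6\sqrt{\lambda_n}} + \cdots\right\}
   \;\longrightarrow\; e^{t^2/2}
   \quad \text{as } \lambda_n \to \infty .
\end{align*}
```

The limit $e^{t^2/2}$ is the mgf of $N(0,1)$ and is finite for every $t$, so the conditions of the continuity theorem hold on any interval around zero.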

Example
• Suppose $X$ is a Poisson(900) random variable. Find $P(X > 950)$.
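One way to work this example numerically, as a sketch: standardize with mean 900 and standard deviation $\sqrt{900} = 30$, apply the Normal approximation, and compare with the exact Poisson tail. The continuity-corrected variant and the helper names `normal_cdf` and `poisson_cdf` are additions for illustration and are not part of the slides.

```python
import math


def normal_cdf(z):
    """Standard normal cdf, written in terms of the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def poisson_cdf(k, lam):
    """Exact P(X <= k) for X ~ Poisson(lam); terms are built in log space."""
    log_terms = (
        -lam + i * math.log(lam) - math.lgamma(i + 1) for i in range(k + 1)
    )
    return math.fsum(math.exp(t) for t in log_terms)


lam = 900
sd = math.sqrt(lam)  # 30

# Plain normal approximation: P(X > 950) ~ 1 - Phi((950 - 900) / 30)
z = (950 - lam) / sd
normal_tail = 1.0 - normal_cdf(z)

# Continuity-corrected variant (an optional refinement, not from the slides)
z_cc = (950.5 - lam) / sd
normal_tail_cc = 1.0 - normal_cdf(z_cc)

# Exact Poisson tail for comparison
exact_tail = 1.0 - poisson_cdf(950, lam)

print(f"z = {z:.4f}")
print(f"normal approximation       : {normal_tail:.4f}")
print(f"with continuity correction : {normal_tail_cc:.4f}")
print(f"exact Poisson tail         : {exact_tail:.4f}")
```

The standardized value is $z = 50/30 \approx 1.67$, so the Normal approximation gives a tail probability a little under 5%.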

Central Limit Theorem
• The central limit theorem is concerned with the limiting property of sums of random variables.
• If $X_1, X_2, \ldots$ is a sequence of i.i.d. random variables with mean $\mu$ and variance $\sigma^2$ and $S_n = \sum_{i=1}^{n} X_i$, then by the WLLN we have that $S_n/n \to \mu$ in probability.
• The CLT is concerned not just with the fact of convergence but with how $S_n/n$ fluctuates around $\mu$.
• Note that $E(S_n) = n\mu$ and $V(S_n) = n\sigma^2$. The standardized version of $S_n$ is $Z_n = (S_n - n\mu)/(\sigma\sqrt{n})$ and we have that $E(Z_n) = 0$, $V(Z_n) = 1$.

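The Central Limit Theorem
• Let $X_1, X_2, \ldots$ be a sequence of i.i.d. random variables with $E(X_i) = \mu < \infty$ and $\text{Var}(X_i) = \sigma^2 < \infty$. Suppose the common distribution function $F_X(x)$ and the common moment generating function $m_X(t)$ are defined in a neighborhood of 0. Let $S_n = \sum_{i=1}^{n} X_i$. Then, for $-\infty < x < \infty$, $\lim_{n \to \infty} P\left(\frac{S_n - n\mu}{\sigma\sqrt{n}} \le x\right) = \Phi(x)$, where $\Phi(x)$ is the cdf of the standard normal distribution.
• This is equivalent to saying that $Z_n = (S_n - n\mu)/(\sigma\sqrt{n})$ converges in distribution to $Z \sim N(0,1)$.
• Also, with $\bar{X}_n = S_n/n$, $\lim_{n \to \infty} P\left(\frac{\bar{X}_n - \mu}{\sigma/\sqrt{n}} \le x\right) = \Phi(x)$, i.e. $\sqrt{n}\,(\bar{X}_n - \mu)/\sigma$ converges in distribution to $Z \sim N(0,1)$.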

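Example
• Suppose $X_1, X_2, \ldots$ are i.i.d. random variables and each has the Poisson(3) distribution. So $E(X_i) = V(X_i) = 3$.
• The CLT says that $(X_1 + \cdots + X_n - 3n)/\sqrt{3n}$ converges in distribution to $Z \sim N(0,1)$ as $n \to \infty$.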
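A small numerical check of this statement, as a sketch. It relies on the standard fact (not stated on the slide) that a sum of $n$ independent Poisson(3) variables is Poisson($3n$), so the cdf of the sum can be computed exactly and compared with $\Phi$ after standardizing. The function `max_cdf_gap` and the choice of $n$ values are illustrative.

```python
import math


def normal_cdf(z):
    """Standard normal cdf, written in terms of the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def max_cdf_gap(n, rate=3.0):
    """Largest |P(S_n <= k) - Phi((k - 3n)/sqrt(3n))| over integer points k.

    Uses S_n ~ Poisson(rate * n) for a sum of n i.i.d. Poisson(rate) variables.
    """
    lam = rate * n
    sd = math.sqrt(lam)
    upper = int(lam + 10 * sd)  # mass beyond this point is negligible
    cdf = 0.0
    worst = 0.0
    for k in range(upper + 1):
        # Poisson pmf at k, computed in log space to avoid overflow
        cdf += math.exp(-lam + k * math.log(lam) - math.lgamma(k + 1))
        worst = max(worst, abs(cdf - normal_cdf((k - lam) / sd)))
    return worst


ns = (5, 50, 500)
cdf_gaps = [max_cdf_gap(n) for n in ns]

for n, gap in zip(ns, cdf_gaps):
    print(f"n = {n:4d}   max gap at integer points = {gap:.4f}")
```

The gap shrinks as $n$ grows, which is what convergence in distribution to $N(0,1)$ predicts at every $x$, since $\Phi$ is continuous everywhere.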

Examples
• A very common application of the CLT is the Normal approximation to the Binomial distribution.
• Suppose $X_1, X_2, \ldots$ are i.i.d. random variables and each has the Bernoulli($p$) distribution. So $E(X_i) = p$ and $V(X_i) = p(1 - p)$.
• The CLT says that $(X_1 + \cdots + X_n - np)/\sqrt{np(1-p)}$ converges in distribution to $Z \sim N(0,1)$ as $n \to \infty$.
• Let $Y_n = X_1 + \cdots + X_n$; then $Y_n$ has a Binomial($n$, $p$) distribution. So for large $n$, $P(Y_n \le y) \approx \Phi\left(\frac{y - np}{\sqrt{np(1-p)}}\right)$.
• Suppose we flip a biased coin 1000 times and the probability of heads on any one toss is 0.6. Find the probability of getting at least 550 heads.
• Suppose we toss a coin 100 times and observe 60 heads. Is the coin fair?
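The two coin questions above can be sketched numerically as follows. The Normal tail uses a continuity correction (replacing $k$ by $k - 0.5$), which is a common refinement and an assumption here rather than something the slides specify. The exact Binomial tail is included only as a check, and the helper names are illustrative.

```python
import math


def normal_cdf(z):
    """Standard normal cdf, written in terms of the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def binom_upper_tail(k, n, p):
    """Exact P(Y >= k) for Y ~ Binomial(n, p); terms are built in log space."""
    def log_pmf(j):
        return (
            math.lgamma(n + 1) - math.lgamma(j + 1) - math.lgamma(n - j + 1)
            + j * math.log(p) + (n - j) * math.log(1.0 - p)
        )
    return math.fsum(math.exp(log_pmf(j)) for j in range(k, n + 1))


def normal_upper_tail(k, n, p):
    """Normal approximation to P(Y >= k), with continuity correction."""
    mean = n * p
    sd = math.sqrt(n * p * (1.0 - p))
    return 1.0 - normal_cdf((k - 0.5 - mean) / sd)


# Biased coin: n = 1000, p = 0.6, at least 550 heads
biased_normal = normal_upper_tail(550, 1000, 0.6)
biased_exact = binom_upper_tail(550, 1000, 0.6)

# Fair-coin check: n = 100, p = 0.5, at least 60 heads
fair_normal = normal_upper_tail(60, 100, 0.5)
fair_exact = binom_upper_tail(60, 100, 0.5)

print(f"P(at least 550 heads): normal {biased_normal:.4f}, exact {biased_exact:.4f}")
print(f"P(at least 60 heads | fair coin): normal {fair_normal:.4f}, exact {fair_exact:.4f}")
```

For the second question, a fair coin would produce 60 or more heads in 100 tosses with probability just under 3%. Whether that counts as evidence against fairness depends on the significance level and on whether a one-sided or two-sided comparison is intended, neither of which the slide states.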