Learning kernel matrix by matrix exponential update

Learning kernel matrix by matrix exponential update. Jun Liao. Problem. Given a square matrix that shows the similarities between examples. could be asymmetric could have missing values

Learning kernel matrix by matrix exponential update

E N D

Presentation Transcript

Learning kernel matrix by matrix exponential update Jun Liao

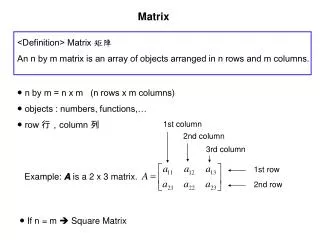

Problem • Given a square matrix that shows the similarities between examples. • could be asymmetric • could have missing values • Want to learn a kernel matrix from this similarity matrix so that we can use various kernel learning algorithms. • Two ways to do this: • Naïve approach • matrix exponential update algorithm [Koji etc 2004] ).

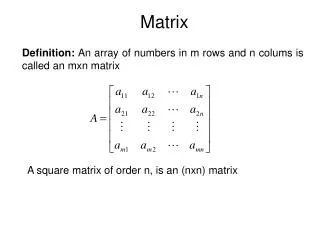

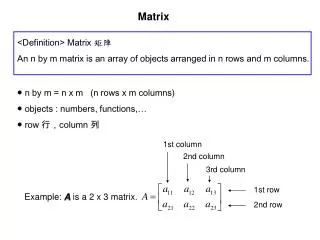

Kernel Matrix • What kind of square matrix can be used as a kernel matrix? symmetric, semi positive definite and no missing values. • Naïve approach to construct a kernel matrix from a similarity matrix? • Symmetric: A=(A+A’)/2; • Semi Positive Definite: A=A+I λ • No missing values: put 0 Called “Diag Kernel”

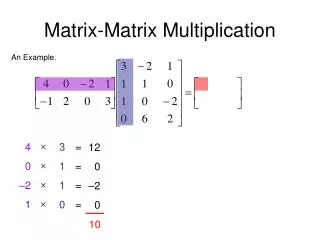

Matrix Exponential Update • Extension of EG: the parameter to learn is a symmetric positive definite(SymPD) matrix W instead of a vector w. • Bregman Divergence and the update

Matrix Exponential Update (Cont.) When we use von neumann divergence, Matrix exponential translate a symmetric matrix into a SymPD matrix. As long as W0 is SymPD and Xt is symmetric, Wt is always SymPD. Thus keep the kernel matrix properties.

Kernel matrix learning • Back to the case of learning kernel from similarity matrix: W is the learned kernel matrix. Each element in the similarity matrix is an instance for the update: . only has nonzero value 0.5 at (i,j) and (j,i) positions (learning one element of similarity per iteration) • “batch” version (learning all the elements of similarity per iteration) (MExp Kernel ) Here, is for all the none-zero elements in the similarity matrix.

Experiment • Data Subsample of a drug discovery dataset CDK2. 37 positives and 37 negatives. Similarity matrix is created by FLEXS. It does 3D alignment of two molecules. The similarity value is asymmetric. It distinguishes the reference molecule and the test molecule. • Test Setup First learn the kernel matrix using the whole similarity matrix by the two approaches. Then do 10 random 50%(training)-50%(testing) split of the dataset. Use SVM classification. The average of 10 runs’ test error rate is reported.

Kernel matrix learned by naïve approach (1) A=(A+A’)/2; (2) A=A+I λ

Kernel matrix learned by matrix exponential update Original matrix is made to be symmetric before using algorithm. W0 is the identity matrix.The displayed matrix is learned matrix after 80 iterations.

Generalization error of the learned kernel matrix. Matrix Exponential Update is significantly better than the naïve approach.

Conclusion and future problems • Matrix exponential update is a better way to learn kernel matrix from a similarity matrix with missing values. • Future problems • Numerical issues: Avoid (exp,log). Only use exp. • Learn a Semi positive definite matrix: Matrix Exponential Update always produces a positive definite matrix. • Large scale: exp(W) needs • Rectangle similarity matrix • Asymmetric kernel? Data are inherently asymmetric.