4.3 Diagnostic Checks

4.3 Diagnostic Checks. VO 107.425 - Verallgemeinerte lineare Regressionsmodelle. Diagnostic Checks. Goodness-of-fit tests provide only global measures of the fit of a model. Regression diagnostics aims at identifying reasons for a bad fit.


4.3 Diagnostic Checks VO 107.425 - Verallgemeinerte lineare Regressionsmodelle

Diagnostic Checks
• Goodness-of-fit tests provide only global measures of the fit of a model.
• Regression diagnostics aim at identifying the reasons for a bad fit.
• Diagnostic measures should in particular identify observations
  • that are not well explained by the model,
  • that are influential for some aspect of it.

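4.3.1 Residuals
Residuals measure the agreement between single observations and their fitted values and help to identify poorly fitting observations that may have a strong impact on the overall fit of the model. For scaled binomial data the Pearson residual has the form

$$r_P(y_i, \hat\pi_i) = \frac{y_i - \hat\pi_i}{\sqrt{\hat\pi_i(1-\hat\pi_i)/n_i}},$$

with
• $\sqrt{\hat\pi_i(1-\hat\pi_i)/n_i}$ as the estimated standard deviation of $y_i$,
• $\hat\pi_i$ as the probability for the fitted model,
• $n_i$ as the number of observations underlying the relative frequency $y_i$.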
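As a minimal sketch of the Pearson residual for scaled binomial data, the following Python snippet evaluates the formula directly. The function name `pearson_residuals` and the three data vectors are hypothetical and serve only as illustration; they are not taken from the lecture.

```python
import math

def pearson_residuals(y, pi_hat, n):
    """Pearson residuals for scaled binomial data (y_i are relative frequencies)."""
    residuals = []
    for y_i, pi_i, n_i in zip(y, pi_hat, n):
        # Estimated standard deviation of the relative frequency y_i.
        sd_i = math.sqrt(pi_i * (1.0 - pi_i) / n_i)
        residuals.append((y_i - pi_i) / sd_i)
    return residuals

# Hypothetical grouped data: observed proportions, fitted probabilities, group sizes.
y = [0.20, 0.50, 0.90]
pi_hat = [0.25, 0.50, 0.80]
n = [20, 10, 40]

r_p = pearson_residuals(y, pi_hat, n)
print([round(r, 3) for r in r_p])
```

An observation whose relative frequency equals its fitted probability gets residual zero; the sign shows whether the model over- or underestimates the observed proportion.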

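For small $n_i$ the distribution of $r_P(y_i, \hat\pi_i)$ is rather skewed, an effect that is ameliorated by transforming to Anscombe residuals. These compare the transformed values $t(y_i)$ and $t(\hat\pi_i)$, where $t(u) = \int_0^u s^{-1/3}(1-s)^{-1/3}\,ds$. Anscombe residuals rest on approximations to the mean and variance of $t(y_i)$ obtained by the delta method, which for the variance yields $\mathrm{var}(t(y_i)) \approx t'(\pi_i)^2\,\pi_i(1-\pi_i)/n_i = (\pi_i(1-\pi_i))^{1/3}/n_i$.

The Pearson residuals cannot be expected to have unit variance because the variance of the residual itself has not been taken into account. The standardization just uses the estimated standard deviation of $y_i$.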

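When looking for ill-fitting observations, one prefers the standardized Pearson residuals

$$r_{P,s}(y_i, \hat\pi_i) = \frac{y_i - \hat\pi_i}{\sqrt{(1-h_{ii})\,\hat\pi_i(1-\hat\pi_i)/n_i}},$$

where $h_{ii}$ denotes the i-th diagonal element of the hat matrix $H$. The standardized Pearson residuals are simply the Pearson residuals divided by $\sqrt{1-h_{ii}}$. As shown in Section 3.10, the variance of the residual vector may be approximated by

$$\mathrm{cov}(y - \hat\mu) \approx \Sigma^{1/2}(I - H)\,\Sigma^{1/2},$$

where the matrix $H$ is given by $H = W^{1/2} X (X^T W X)^{-1} X^T W^{1/2}$.

Alternative residuals derive from the deviance $D = \sum_i r_D(y_i, \hat\pi_i)^2$, where

$$r_D(y_i, \hat\pi_i) = \mathrm{sign}(y_i - \hat\pi_i)\,\sqrt{2 n_i\left[y_i \log\frac{y_i}{\hat\pi_i} + (1-y_i)\log\frac{1-y_i}{1-\hat\pi_i}\right]}.$$

Special case for $n_i = 1$: $r_D(y_i, \hat\pi_i) = \sqrt{-2\log\hat\pi_i}$ when $y_i = 1$, and $r_D(y_i, \hat\pi_i) = -\sqrt{-2\log(1-\hat\pi_i)}$ when $y_i = 0$.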
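The deviance residual and the division by $\sqrt{1-h_{ii}}$ can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names `deviance_residual` and `standardize`, the fitted probability 0.8, and the leverage 0.25 are all hypothetical, and the convention $0 \cdot \log 0 = 0$ is used for observations at the boundary.

```python
import math

def deviance_residual(y, pi_hat, n=1):
    """Deviance residual for a relative frequency y based on n trials."""
    def xlog(a, b):
        # a * log(a / b), with the convention 0 * log(0) = 0
        return 0.0 if a == 0 else a * math.log(a / b)

    d = 2.0 * n * (xlog(y, pi_hat) + xlog(1.0 - y, 1.0 - pi_hat))
    # The sign is taken from the raw residual y - pi_hat.
    return math.copysign(math.sqrt(max(d, 0.0)), y - pi_hat)

def standardize(residual, h_ii):
    """Divide a Pearson or deviance residual by sqrt(1 - h_ii)."""
    return residual / math.sqrt(1.0 - h_ii)

# Binary case n_i = 1 with a hypothetical fitted probability of 0.8.
r_one = deviance_residual(1, 0.8)    # equals  sqrt(-2 log 0.8)
r_zero = deviance_residual(0, 0.8)   # equals -sqrt(-2 log 0.2)
r_std = standardize(r_one, 0.25)     # hypothetical leverage h_ii = 0.25
print(r_one, r_zero, r_std)
```

For binary data the residual depends only on how much probability the fit assigned to the observed outcome, so an observation with $y_i = 0$ and a high fitted probability gets a large negative value.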

A transformation that yields a better approximation to the normal distribution is given by the adjusted residuals

$$r_D^{a}(y_i, \hat\pi_i) = r_D(y_i, \hat\pi_i) + \frac{1 - 2\hat\pi_i}{6\sqrt{n_i\,\hat\pi_i(1-\hat\pi_i)}}.$$

Standardized deviance residuals are obtained by dividing by $\sqrt{1-h_{ii}}$:

$$r_{D,s}(y_i, \hat\pi_i) = \frac{r_D(y_i, \hat\pi_i)}{\sqrt{1-h_{ii}}}.$$

Residuals are typically visualized in a graph:
• residuals against observation number, which shows which observations have large values and may be considered outliers,
• residuals against the fitted linear predictor, to find systematic deviations from the model,

• residuals against ordered fitted values, if one suspects that particular values should be transformed before being included in the linear predictor,
• residuals against the corresponding quantiles of a normal distribution. This plot compares the ordered standardized residuals to the order statistics of an N(0,1) sample. If the model is correct and the residuals can be expected to be approximately normally distributed, the plot should show approximately a straight line as long as outliers are absent.
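The coordinates for the plot against normal quantiles can be computed with the Python standard library alone, as in the sketch below. The plotting positions $(i - 0.5)/n$ are one common convention and an assumption here, as are the function name `normal_qq_points` and the residual values.

```python
from statistics import NormalDist

def normal_qq_points(residuals):
    """Pair ordered residuals with standard normal quantiles."""
    n = len(residuals)
    ordered = sorted(residuals)
    std_normal = NormalDist()  # N(0, 1)
    # Plotting positions (i - 0.5) / n: an assumed, commonly used convention.
    theoretical = [std_normal.inv_cdf((i - 0.5) / n) for i in range(1, n + 1)]
    return list(zip(theoretical, ordered))

# Hypothetical standardized residuals; the last one is suspiciously large.
residuals = [0.3, -1.2, 0.8, -0.1, 2.9]
points = normal_qq_points(residuals)
for q, r in points:
    print(f"{q:6.3f}  {r:6.3f}")
```

Plotting the second coordinate against the first gives the quantile plot; points far off the straight line through the bulk of the data are candidates for outliers.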

Example 4.3: Unemployment
In a study on the duration of unemployment with sample size n = 982 we distinguish between short-term unemployment (≤ 6 months) and long-term unemployment (> 6 months). It is shown that for older unemployed persons the fitted values tend to be larger than the observed values.

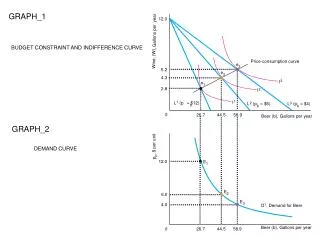

Example 4.4: Food-Stamp Data
The food-stamp data from Künsch et al. (1989) consist of n = 150 persons, 24 of whom participated in the federal food-stamp program. The response indicates participation. The predictor variables are
• the binary variable tenancy (TEN),
• the binary variable supplemental income (SUP),
• the log-transformed monthly income, log(monthly income + 1) (LMI).

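4.3.2 Hat Matrix and Influential Observations
The iteratively reweighted least-squares fitting that can be used to compute the maximum likelihood (ML) estimate has the form

$$\hat\beta^{(k+1)} = \left(X^T W(\hat\beta^{(k)}) X\right)^{-1} X^T W(\hat\beta^{(k)})\,\tilde\eta(\hat\beta^{(k)}),$$

with the adjusted variable

$$\tilde\eta(\hat\beta) = X\hat\beta + D(\hat\beta)^{-1}\left(y - \mu(\hat\beta)\right),$$

where $\mu(\hat\beta)$ is the vector of fitted means, and the diagonal matrices are $D(\hat\beta) = \mathrm{diag}\left(\partial h(\hat\eta_i)/\partial\eta\right)$ and $W(\hat\beta) = \mathrm{diag}\left((\partial h(\hat\eta_i)/\partial\eta)^2/\sigma_i^2\right)$. At convergence one obtains

$$\hat\beta = (X^T W X)^{-1} X^T W\,\tilde\eta(\hat\beta),$$

which may be seen as the least-squares solution of the linear model

$$W^{1/2}\tilde\eta = W^{1/2} X\beta + \tilde\varepsilon,$$

where the dependence on $\hat\beta$ is suppressed.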
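A minimal NumPy sketch of the iteratively reweighted least-squares update for a binary logit model follows. It assumes the logit link, for which $\partial h/\partial\eta = \mu(1-\mu)$ equals the variance, so that $W = \mathrm{diag}(\mu_i(1-\mu_i))$. The function name `irls_logit`, the stopping rule, and the toy data are hypothetical, and no safeguards against separation or step-halving are included.

```python
import numpy as np

def irls_logit(X, y, n_iter=50, tol=1e-10):
    """IRLS for a binary logit model (illustrative sketch, no safeguards)."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        eta = X @ beta
        mu = 1.0 / (1.0 + np.exp(-eta))
        d = mu * (1.0 - mu)             # diagonal of D: dh/deta for the logit link
        w = d                           # diagonal of W: d^2 / var(y_i) = mu (1 - mu)
        eta_adj = eta + (y - mu) / d    # adjusted variable
        XtW = X.T * w                   # X^T W without forming the diagonal matrix
        beta_new = np.linalg.solve(XtW @ X, XtW @ eta_adj)
        if np.max(np.abs(beta_new - beta)) < tol:
            return beta_new
        beta = beta_new
    return beta

# Hypothetical toy data: intercept plus one covariate, binary response.
x = np.arange(10.0)
X = np.column_stack([np.ones_like(x), x])
y = np.array([0, 0, 0, 0, 1, 0, 1, 1, 1, 0], dtype=float)

beta_hat = irls_logit(X, y)
print(beta_hat)
```

At convergence the score equations $X^T(y - \hat\mu) = 0$ hold, which gives a simple check that the weighted least-squares fixed point coincides with the ML estimate.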

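The corresponding hat matrix has the form

$$H = W^{1/2} X (X^T W X)^{-1} X^T W^{1/2}.$$

Since the matrix $H$ is idempotent and symmetric, it may be seen as a projection matrix for which tr($H$) = rank($H$) holds. The equation

$$W^{1/2}\hat\eta = H\,W^{1/2}\tilde\eta(\hat\beta)$$

shows how the matrix $H$ maps the adjusted variable $W^{1/2}\tilde\eta(\hat\beta)$ into the fitted values $W^{1/2}\hat\eta$. It may be shown that approximately

$$\Sigma^{-1/2}(\hat\mu - \mu) \approx H\,\Sigma^{-1/2}(y - \mu)$$

holds, where $H = (h_{ij})$ may be seen as a measure of the influence of $y$ on $\hat\mu$ in standardized units of changes.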

In summary, large values of $h_{ii}$ should be useful in detecting influential observations. But it should be noted that, in contrast to the normal regression model, the hat matrix depends on $\hat\beta$ because $W = W(\hat\beta)$. The essential difference from ordinary linear regression is that the hat matrix depends not only on the design but also on the fit.
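The dependence of the hat matrix on the fit can be illustrated with a short NumPy sketch. It assumes a binary logit model with $W = \mathrm{diag}(\mu_i(1-\mu_i))$; the function name `hat_matrix`, the design, and the two coefficient vectors are hypothetical and chosen only to show that the same design gives different leverages for different parameter values.

```python
import numpy as np

def hat_matrix(X, beta):
    """H = W^{1/2} X (X^T W X)^{-1} X^T W^{1/2} for a binary logit model."""
    mu = 1.0 / (1.0 + np.exp(-(X @ beta)))
    w = mu * (1.0 - mu)                  # diagonal of W at the given beta
    Xw = X * np.sqrt(w)[:, None]         # W^{1/2} X
    return Xw @ np.linalg.solve(Xw.T @ Xw, Xw.T)

# Hypothetical design: intercept plus one covariate.
x = np.arange(8.0)
X = np.column_stack([np.ones_like(x), x])

H_flat = hat_matrix(X, np.array([0.0, 0.0]))    # constant weights, as in least squares
H_fit = hat_matrix(X, np.array([-2.0, 0.8]))    # weights vary with the fitted values

print(np.round(np.diag(H_flat), 3))
print(np.round(np.diag(H_fit), 3))
print(np.trace(H_fit))                           # equals the number of parameters
```

Both matrices are symmetric and idempotent with trace equal to the number of columns of $X$, but their diagonals differ: observations with fitted probabilities close to 0 or 1 receive small weights and therefore lower leverage than the design alone would suggest.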

4.3.3 Case Deletion
A strategy to investigate the effect of single observations on the parameter estimates is to compare the estimate $\hat\beta$ with the estimate $\hat\beta_{(i)}$, obtained from fitting the model to the data without the i-th observation. Cook's distance for observation i has the form

$$c_i = (\hat\beta_{(i)} - \hat\beta)^T\,\mathrm{cov}(\hat\beta)^{-1}\,(\hat\beta_{(i)} - \hat\beta).$$

To obtain Cook's distance without refitting, some replacements and approximations have to be made, such as a second-order Taylor approximation and a single Fisher scoring step. Large values of Cook's measure $c_i$ or its approximation indicate that the i-th observation is influential: its presence determines the value of the parameter vector. A useful way of presenting this measure of influence is an index plot.
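The following NumPy sketch contrasts Cook's distance computed by actually refitting without each observation with a one-step approximation of the form $r_{P,i}^2\,h_{ii}/(1-h_{ii})^2$. Several points are assumptions rather than the lecture's definitions: the binary logit model, the use of $X^T W X$ at the full-data fit as the inverse of $\mathrm{cov}(\hat\beta)$, the particular one-step formula, the function names, and the toy data, in which the last observation was deliberately placed against the trend.

```python
import numpy as np

def fit_logit(X, y, n_iter=50, tol=1e-10):
    """Fisher scoring for a binary logit model (illustrative sketch)."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        mu = 1.0 / (1.0 + np.exp(-(X @ beta)))
        w = mu * (1.0 - mu)
        step = np.linalg.solve((X.T * w) @ X, X.T @ (y - mu))
        beta = beta + step
        if np.max(np.abs(step)) < tol:
            break
    return beta

def cooks_distances(X, y):
    """Return (exact case-deletion values, one-step approximation)."""
    n = X.shape[0]
    beta = fit_logit(X, y)
    mu = 1.0 / (1.0 + np.exp(-(X @ beta)))
    w = mu * (1.0 - mu)
    info = (X.T * w) @ X                 # X^T W X, used as inverse of cov(beta_hat)

    exact = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i         # drop the i-th observation and refit
        diff = fit_logit(X[keep], y[keep]) - beta
        exact[i] = diff @ info @ diff

    # Leverages h_ii = w_i * x_i^T (X^T W X)^{-1} x_i and squared Pearson residuals.
    h = w * np.einsum("ij,ij->i", X @ np.linalg.inv(info), X)
    r_p_sq = (y - mu) ** 2 / w
    approx = r_p_sq * h / (1.0 - h) ** 2
    return exact, approx

# Hypothetical toy data; the last observation (x = 9, y = 0) contradicts the trend.
x = np.arange(10.0)
X = np.column_stack([np.ones_like(x), x])
y = np.array([0, 0, 0, 0, 1, 0, 1, 1, 1, 0], dtype=float)

cook_exact, cook_approx = cooks_distances(X, y)
for i, (ce, ca) in enumerate(zip(cook_exact, cook_approx)):
    print(i, round(ce, 3), round(ca, 3))
```

Printing or plotting the values against the observation index gives the index plot; the approximation avoids the n refits but can understate the effect of observations whose removal changes the fit strongly.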

Example 4.5: Unemployment
Cook's distances for the unemployment data show that observations 33, 38, and 44, which correspond to ages 48, 53, and 59, are influential. All three observations are rather far from the fit.

Example 4.6: Exposure to Dust (Non-Smokers)
Observed covariates:
• mean dust concentration at working place in mg/m³ (dust)
• duration of exposure in years (years)
• smoking (1: yes; 0: no)
Binary response:
• bronchitis (1: present; 0: not present)
Sample size:
• n = 1246

Table 4.4 shows the estimated coefficients for the main effects model. Table 4.5 shows the fit without the observation (15.04, 27). It can be seen that the coefficient for the concentration of dust has changed distinctly.

As seen in Figure 4.7, the observations with large values of Cook's distance, given with their (dust, years) values, are: 730 (1.63, 8); 1175 (8, 32); 1210 (8, 13). All three observations correspond to persons with bronchitis. They are NOT extreme in the range of years, which is the influential variable. The variable dust shows no significant effect, so it is only consistent that the Cook's distance is small.

Example 4.7: Exposure to Dust
In this example the full exposure data, including smokers, is used.
• In the full dataset all three covariates are significantly influential: concentration of dust, years of exposure, and smoking.
• One observation is positioned very extremely in the observation space.

By excluding the extreme value
• the coefficient estimates for the variables years and smoking remain similar to the estimates for the full dataset,
• the coefficient for the variable concentration of dust differs by about 8%.
Since observation 1246 is far away from the rest of the data, and the mean exposure is a variable that is not easy to measure, it should be considered an outlier and omitted.