Overview of LCG: Achievements, Challenges, and Future Perspectives

This overview highlights the first year of the LCG (LHC Computing Grid) and its collaborative efforts among CERN divisions, regional centers, and experiments like ATLAS, CMS, and LHCb. It discusses key developments in application areas, grid deployment, and the importance of establishing a coherent software environment. Notable successes and ongoing challenges are presented, emphasizing the constructive interactions among different approaches. The document outlines the critical next steps required for successful data challenges and the establishment of LCG as a robust production service.

Presentation Transcript

CMS ATLAS LHCb

Overview • The first year of LCG • Experiment perspectives on the LCG management bodies • The Experiment Plans for Data Challenges • Developing the Computing TDRs • Summary • Successes • Outstanding questions

Opening Comments • The LCG process has started reasonably well • The Experiments, the CERN Divisions, the Regional Centers and the LCG Project itself are all working together to achieve the common goals • Differences of approach and emphasis exist between all parties, but are much less significant than the commonalities • Most importantly, these differences are not destructive to the process; they are probably constructive

LCG Area 1: Applications Area (AA) • Through the SC2/RTAG process we attempt to identify common requirements of our applications and pull out common projects wherever appropriate • AA projects or contributions in: • Persistency service (POOL) • Basic Services (SEAL) • ROOT • Math Libraries • Simulation Services (including G4) • Physicist Interface (PI) • Infrastructure (SPI) • ATLAS, CMS and LHCb have baseline plans to use the POOL software this summer. • There is little scope for accepting delays. The next 2-3 months will be critical if the AA projects are to be successful • Important deliverables in the next few months

The LCG Application Blueprint • ALICE expects to build most of its application base on ROOT (and AliEn) • They have a well established program • The creation of the EP/SFT ROOT group, the CERN commitment to ROOT and the addition of LCG manpower are solid contributions to ROOT development and support. • ATLAS, CMS and LHCb expect ROOT to be a major component, but have worked towards an architecture in which it can and will be used without being the unique underpinning • These three experiments also have well established programs • The support for ROOT is also at their request • They work with AA to develop new packages (such as POOL) and to bring their separate work to coherence (as in SEAL) • The differences between the approaches of ALICE and ATLAS-CMS-LHCb cannot be denied. • But they have the potential to be constructive • The project and the experiments have developed working models to cope with these differences and to avoid stalemate situations

AA Resources • All parties contribute extensively to AA • Existing products, and manpower to extend and support them: ROOT, SCRAM • Experienced manpower in design and implementation for POOL • Sharing of existing products and development of a coherent base in SEAL • Numerically about 2-4 FTEs from each experiment in LCG/AA projects • Qualitatively very important, as these are typically our best people • We have the impression that the redirection of experiment and CERN resources has been more effective in this area than the addition of new resources. Time will tell • The Software Process and Infrastructure group has made an excellent start • Useful tools deployed (Savannah…) • Extension of SCRAM to Windows underway • Support of existing experiment projects and the new LCG projects • The real test comes when we start to use the new products (POOL, SEAL, ...) • Experiments hope to benefit from significant help from LCG project members in this crucial phase (coming in the next few months)

LCG Area 2: Grid Deployment Area (GDA) • Grid Deployment Board (GDB) • Strong participation from all experiments and centers • Getting to grips with deploying an LCG1 service • There is no stable common middleware • Experiments and Grid projects have learned a lot and made many improvements in “stress tests”. • Success of LCG1 will depend on the ability to respond to change • We are building a production system on a base that is still largely prototypal • LCG1 must be capable of rapidly assimilating developments • Requires Development, Testing, Hardening and Production environments, and continuous migration of code • There is a temptation to believe too much in the ability of the current round of middleware • “This is a production service so it must be stable” (but it can’t be...) • Difficult balance between forcing a limited set of initial conditions and allowing for the actual wide diversity in regional centers’ modes of operation • GDA is establishing a solid base of expertise in LCG
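The Development, Testing, Hardening and Production chain on this slide can be pictured as a promotion pipeline. The following is a minimal Python sketch, assuming (this is not stated on the slide) that a middleware release advances exactly one environment per migration cycle and never skips a stage; the `Stage` enum, `promote`, `migrate` and the release names are hypothetical illustrations, not LCG tooling.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Hypothetical ordering of the four environments named on the slide."""
    DEVELOPMENT = 0
    TESTING = 1
    HARDENING = 2
    PRODUCTION = 3


def promote(stage: Stage) -> Stage:
    """Move a release one environment closer to production (no skipping)."""
    if stage is Stage.PRODUCTION:
        raise ValueError("release is already in production")
    return Stage(stage + 1)


def migrate(releases: dict[str, Stage]) -> dict[str, Stage]:
    """One migration cycle: every release not yet in production advances one stage.

    Releases already in production stay put, so several versions can be
    in flight at once (continuous migration rather than a single freeze).
    """
    return {
        name: stage if stage is Stage.PRODUCTION else promote(stage)
        for name, stage in releases.items()
    }


if __name__ == "__main__":
    # Hypothetical release names, for illustration only.
    releases = {
        "mw-1.0": Stage.PRODUCTION,
        "mw-1.1": Stage.HARDENING,
        "mw-1.2": Stage.DEVELOPMENT,
    }
    for name, stage in migrate(releases).items():
        print(name, stage.name)
```

Because releases already in production are left in place while newer ones keep advancing, several versions coexist; that is one way to read "continuous migration of code" on a base that cannot yet be frozen.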

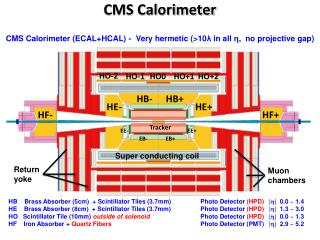

LCG1 • LCG1 must develop over this year into a usable production service • Data Challenges will depend on its functionality, even for some user use-cases in early 2004. • By 2004 it must have a lot of resources! • For example, CMS estimates 600 CPUs this year, approaching 2000 in 2004 • Portability of the software is becoming critical • Large farms of 64-bit processors are being built, but so far they are outside LCG1’s possibilities • The experiments go to great lengths to be able to run on multiple platforms, and we get better software from that investment • Security policies: rapid convergence is required, but not trivial • Can enhance security (we want!) • And/or put obstacles in the path of legitimate use (we don’t want!)

Grid Applications Group (GAG) • This impressively acronymed group was recently established by SC2 • Follow-on of the work done in the HEPCAL RTAG (HEP Common Application Area), describing the important use cases for the experiments • Track the match of deployed solutions with the HEPCAL use cases; extend HEPCAL. • Observation from recent GDB • GAG is the experiments’ way to maintain focus on some key issues such as middleware portability (operating systems, etc.) • Develop and promulgate a common understanding of as yet barely addressed issues such as “Virtual Data” • Great progress has been made with EDG and VDT software in the experiment stress tests • As with most progress, it has been acquired mostly through mistakes

LCG Area 3: Grid Technology Area (GTA) • Only recently has a coherent understanding of this activity started to be discussed • The important work of specifying the LCG1 middleware has been undertaken in the GDB, but we expect this activity to migrate to the GTA • A project plan has been presented, but it is still rather unclear who will do the work (David Foster is doing a great job; who will work with him?) • Experiments have developed different models of working in a grid environment • ATLAS works with EDG, US projects and NorduGrid • ALICE uses AliEn (standalone and interfaced to EDG/VDT) • CMS has run extensive tests with US and EDG products • LHCb was an early tester of EDG; new tests in real Monte Carlo production (DC03) are foreseen this month • Room for collaboration on grid/user portals; an RTAG is being planned

LCG Area 4: Fabric Technology Area • Good collaboration between experiments and IT • How can this group be made more relevant for regional centers? • Tape IO • Obviously an issue for ALICE (1.25 GB/s) in heavy-ion (HI) mode • (Plus: ATLAS and CMS plan to write data at least at their pp rate during HI running) • Even pp running can require this IO rate • Current tests are at ~300 MB/s. • Tape IO is a vital component of the CERN T0 • We appreciate the budget difficulties and the proposals to keep the overrun as low as possible. • Tape IO tests must be able to track a reasonable ramp-up to build expertise and confidence
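The two tape IO figures on this slide can be put side by side with a little arithmetic. The sketch below assumes decimal units (1 GB/s = 1000 MB/s, 1 TB = 10^6 MB) and continuous writing at the full rate, so the daily volume is an upper bound rather than a planning number; the function names are illustrative only.

```python
SECONDS_PER_DAY = 86_400


def throughput_gap(target_mb_s: float, current_mb_s: float) -> float:
    """Factor by which current tape throughput must grow to reach the target rate."""
    return target_mb_s / current_mb_s


def daily_volume_tb(rate_mb_s: float) -> float:
    """Volume written in one day at a constant rate, in TB (decimal: 1 TB = 1e6 MB)."""
    return rate_mb_s * SECONDS_PER_DAY / 1e6


if __name__ == "__main__":
    alice_hi_mb_s = 1250.0  # 1.25 GB/s, ALICE heavy-ion mode (from the slide)
    current_mb_s = 300.0    # ~300 MB/s, current tests (from the slide)
    print(f"gap: x{throughput_gap(alice_hi_mb_s, current_mb_s):.1f}")   # gap: x4.2
    print(f"{daily_volume_tb(alice_hi_mb_s):.0f} TB/day at full rate")  # 108 TB/day at full rate
```

With these inputs the current tests would need to grow by roughly a factor of 4 to reach the ALICE heavy-ion rate, and a day of continuous running at that rate writes about 108 TB; a realistic duty cycle would lower the daily figure.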

LCG Management Bodies • SC2 is working quite well • Most emphasis (too much?) on Applications • Dominated by experiment reps (because the work is mostly Applications) • Regional Center participants not very vocal so far • Perceived difficulty of overview • A detailed WBS has been produced • Necessarily complex • Verification of milestones and their relative meaning is problematic • PEB • There has been some confusion, on both the project and experiment sides, about the role of experiment representatives • Some discussion between LCG management and experiment management may be required to ensure this does not become a long-term problem.

[Figure: CMS Data Challenges timeline. Milestones: DAQ TDR, DC04 (Physics TDR), DC05 (LCG TDR), DC06 (Readiness), then LHC at 2E33 and 1E34 luminosity. Resources shift from time-shared to dedicated CMS resources; average slope ×2.5/year.]
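The chart's average slope of ×2.5/year can be turned into a rough time-to-scale estimate. As a worked example, assuming (for illustration only) that this compound rate applies to the factor-of-100 gap between current tests and startup conditions quoted for the Data Challenges, closing the gap takes log(100)/log(2.5) ≈ 5 years. The function name is hypothetical.

```python
import math


def years_to_close(gap: float, growth_per_year: float) -> float:
    """Years of compound growth at `growth_per_year` needed to multiply capacity by `gap`."""
    if gap < 1 or growth_per_year <= 1:
        raise ValueError("need gap >= 1 and growth_per_year > 1")
    return math.log(gap) / math.log(growth_per_year)


if __name__ == "__main__":
    # Assumption: the chart's x2.5/year slope applied to the factor-of-100 gap.
    years = years_to_close(100, 2.5)
    print(f"{years:.1f} years")    # 5.0 years
    # Cross-check: capacity multiplier after that many years of growth.
    print(f"x{2.5 ** years:.0f}")  # x100
```

The same function shows why a late switch from shared to dedicated systems sharpens the slope: any delay in starting the ramp must be recovered with a higher yearly growth factor.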

Data Challenges • All experiments have detailed DC plans • Motivated by the need to test scaling of solutions (Hardware, Middleware and Experiment Software) • At CERN we count on continued support for MSS, in particular CASTOR • Current tests are still a factor of 100 away from startup conditions (measured in kSI2k·months) • CERN has a special ramping difficulty going from shared systems in 2005/6 to separate systems in 2007. • This introduces an even sharper slope change between Phase I and II • Data Challenges moving to Analysis Challenges • Data Challenges will typically have two components • A production phase that is onerous but not part of the challenge per se • It should not be allowed to fail, as it also provides physics data for the experiment • A true challenge phase • That may fail and lead to revision of the Computing Model or some of its components • Global scheduling is required for the two components • Productions can run in parallel, but the true challenge periods typically cannot
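The scheduling rule at the end of this slide (productions may overlap, true challenge periods typically may not) can be expressed as a simple overlap check. This is a hypothetical sketch: the `Period` record, the week-number time axis, the half-open intervals and the example bookings are assumptions made for illustration, not real DC dates or part of any LCG scheduling tool.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Period:
    """Hypothetical booking of shared resources by one experiment."""
    experiment: str
    kind: str   # "production" or "challenge"
    start: int  # first week of the period (hypothetical time axis)
    end: int    # week after the last one (half-open interval)

    def overlaps(self, other: "Period") -> bool:
        # Half-open intervals [start, end) overlap iff each starts before the other ends.
        return self.start < other.end and other.start < self.end


def challenge_clashes(schedule: list[Period]) -> list[tuple[Period, Period]]:
    """Return every pair of true-challenge periods that overlap in time.

    Production periods may run in parallel with anything, so they are ignored.
    """
    challenges = [p for p in schedule if p.kind == "challenge"]
    return [(a, b) for a, b in combinations(challenges, 2) if a.overlaps(b)]


if __name__ == "__main__":
    schedule = [
        Period("CMS", "production", 0, 8),
        Period("ATLAS", "production", 2, 10),  # overlaps CMS production: allowed
        Period("CMS", "challenge", 8, 12),
        Period("LHCb", "challenge", 10, 14),   # overlaps the CMS challenge: flagged
    ]
    for a, b in challenge_clashes(schedule):
        print(f"clash: {a.experiment} and {b.experiment}")  # clash: CMS and LHCb
```

Only challenge periods are compared, which mirrors the slide's distinction: a failed or delayed production hurts the physics programme, but overlapping challenges would make it impossible to attribute a failure to one experiment's Computing Model.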

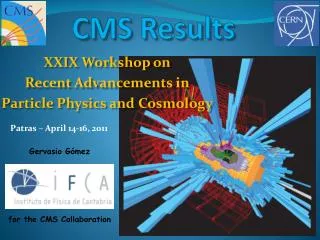

[Figure: CMS LCG-1 “pilot” diagram (D. Stickland, Feb 03, POB). Components shown: MCRunJob + EDG plug-in, EDG UI / VDT Client, EDG Workload Management System, EDG CE / VDT server, EDG SE / VDT Server, EDG Replica Manager, RLS or Replica Catalogue, MDS (GLUE schema), EDG L&B, BOSS-DB, BOSS&R-GMA?, PACMAN? / PACMAN DB?, LDAP?, EDT Monitor. Flows include dataset definition and new dataset requests, job creation (JDL + scripts), job submission and assignment to resources, software download and installation, data management operations, publishing and retrieving resource status, and job, resource and production monitoring.]

Computing TDRs • Initial LHC Computing to be defined by four experiment CTDRs and an LCG TDR • Respective roles becoming clear (discussed in last week’s SC2) • LCG TDR depends on the CTDR “Computing Model”, but not on all details • Computing Models are a joint effort: the Experiments match their Physics Model, and LCG expertise matches solutions. • Timescale of the LCG TDR set for mid-2005, (just) in time for MOUs and purchasing • Timescales for experiment CTDRs ~6 months earlier • (at least the Computing Model components) • Experiment CTDRs expect to contain non-binding capacity estimates from regional centers to set appropriate “budget targets”. • Could also seed MOU deliberations • Close connection/collaboration required between the LCG TDR and experiment CTDRs

Computing Model (CMS Example) • The current LCG funding shortfall (3.8 MCHF) permits either the T0 or the T1 at 20% scale, but not both

Computing Manpower in Experiments • Remains a problem in the short term • Experiments have willingly contributed many of their most experienced developers to establishing the LCG projects. • In the medium to long term we count on this moderating our manpower requirements • In the short term this exacerbates our manpower shortfalls and puts our milestones at risk. • This even leads to duplicated effort to cover both interim and final solutions • Manpower shortfalls of ~20 people exist across the experiments • We assume we will fill the agreed six experiment-related LCG posts • Finding ways to tackle the remaining shortfall is critical, and we will be looking to cooperate with the project leadership in finding solutions as soon as possible

Use of Outside Resources • Concern that projects are focused at CERN, possibly disenfranchising some of the worldwide software developer effort • Probably an inevitable result of the necessary initial concentration on vital new developments • Strongly encourage use of all available tools to enable global participation • CERN video meeting resources are totally overstressed; there are not enough suitably equipped rooms at key times of the day • Tools and networks are much improved; this can be done effectively if the participants plan carefully and thoughtfully • Geographic sharing of projects in R&D and early production phases is very hard. • Most of our work requires detailed collaboration still at the project definition level • Similar problem for internal experiment developments and for LCG developments

Summary • This talk has concentrated on the difficulties • But the overall assessment is one of positive motion • Establishing stable middleware and ensuring its support is crucial. • Building a solid LCG1, while making allowance for flexibility, will be critical • The full scale of the CERN and Regional Phase I capabilities is still not assured, and is vital to success. • CERN T0/T1 Phase I needs the additional 3.8 MCHF • We require both T0 and T1 at scale to gain confidence that this will all work • Tape IO scale testing may still be at risk even with the 3.8 MCHF • The entire acronym space is fully overloaded; we can’t establish any new committees…