Sequential Pattern Mining

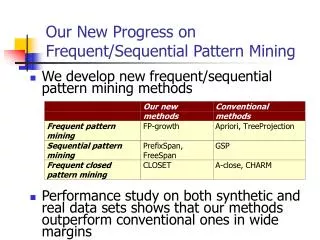

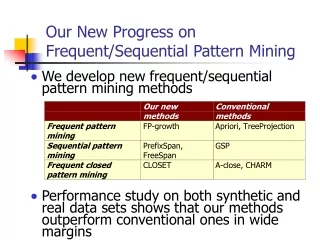

Sequential Pattern Mining. COMP 790-90 Seminar BCB 713 Module Spring 2011. Sequential Pattern Mining. Why sequential pattern mining? GSP algorithm FreeSpan and PrefixSpan Boarder Collapsing Constraints and extensions. Sequence Databases and Sequential Pattern Analysis.

Sequential Pattern Mining

E N D

Presentation Transcript

Sequential Pattern Mining COMP 790-90 Seminar BCB 713 Module Spring 2011

Sequential Pattern Mining
• Why sequential pattern mining?
• GSP algorithm
• FreeSpan and PrefixSpan
• Border collapsing
• Constraints and extensions

Sequence Databases and Sequential Pattern Analysis
• (Temporal) order is important in many situations
• Time-series databases and sequence databases
• From frequent patterns to (frequent) sequential patterns
• Applications of sequential pattern mining:
  • Customer shopping sequences: first buy a computer, then a CD-ROM, and then a digital camera, within 3 months.
  • Medical treatment, natural disasters (e.g., earthquakes), science and engineering processes, stocks and markets, telephone calling patterns, Weblog click streams, DNA sequences and gene structures

What Is Sequential Pattern Mining?
• Given a set of sequences, find the complete set of frequent subsequences.
• A sequence: <(ef)(ab)(df)cb>
• A sequence database: a set of such sequences (example table not reproduced here)
• An element may contain a set of items. Items within an element are unordered, and we list them alphabetically.
• <a(bc)dc> is a subsequence of <a(abc)(ac)d(cf)>: each element of the first is contained in a distinct element of the second, in the same order.
• Given support threshold min_sup = 2, <(ab)c> is a sequential pattern.
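The subsequence test can be made concrete with a short sketch. It assumes the slide notation (single-character items, parentheses for multi-item elements); `parse` and `is_subsequence` are hypothetical helper names, not part of any library.

```python
def parse(s):
    """Parse slide notation such as 'a(abc)(ac)d(cf)' into a tuple of
    elements, each element a sorted tuple of single-character items."""
    elems, i = [], 0
    while i < len(s):
        if s[i] == "(":
            j = s.index(")", i)
            elems.append(tuple(sorted(s[i + 1:j])))
            i = j + 1
        else:
            elems.append((s[i],))
            i += 1
    return tuple(elems)


def is_subsequence(sub, seq):
    """True if every element of `sub` is a subset of a distinct element of
    `seq`, in order. Greedy earliest matching suffices: taking the earliest
    possible element never rules out a later match."""
    seq, pos = parse(seq), 0
    for elem in parse(sub):
        while pos < len(seq) and not set(elem) <= set(seq[pos]):
            pos += 1
        if pos == len(seq):
            return False
        pos += 1  # the next element of `sub` must match strictly later
    return True


print(is_subsequence("a(bc)dc", "a(abc)(ac)d(cf)"))  # True
print(is_subsequence("(ad)", "a(abc)(ac)d(cf)"))     # False: no element holds both a and d
```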

Challenges in Sequential Pattern Mining
• A huge number of possible sequential patterns are hidden in databases.
• A mining algorithm should:
  • find the complete set of patterns satisfying the minimum support (frequency) threshold;
  • be highly efficient and scalable, involving only a small number of database scans;
  • be able to incorporate various kinds of user-specific constraints.

A Basic Property of Sequential Patterns: Apriori

Seq. ID   Sequence
10        <(bd)cb(ac)>
20        <(bf)(ce)b(fg)>
30        <(ah)(bf)abf>
40        <(be)(ce)d>
50        <a(bd)bcb(ade)>

Given support threshold min_sup = 2:
• A basic property: Apriori (Agrawal & Srikant '94)
  • If a sequence S is not frequent,
  • then none of the super-sequences of S is frequent.
  • E.g., <hb> is infrequent (h occurs only in sequence 30), so are <hab> and <(ah)b>.
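A quick way to check the example is to count supports directly. The sketch below uses hypothetical helper names (`parse`, `contains`, `support`) and the five-sequence table above; it shows that <hb> and its super-sequences <hab> and <(ah)b> each occur only in sequence 30, so all fall below min_sup = 2.

```python
def parse(s):
    """'(bd)cb(ac)' -> (('b', 'd'), ('c',), ('b',), ('a', 'c'))"""
    elems, i = [], 0
    while i < len(s):
        if s[i] == "(":
            j = s.index(")", i)
            elems.append(tuple(sorted(s[i + 1:j])))
            i = j + 1
        else:
            elems.append((s[i],))
            i += 1
    return tuple(elems)


def contains(seq, pat):
    """Greedy earliest-element test for 'pat is a subsequence of seq'."""
    pos = 0
    for elem in pat:
        while pos < len(seq) and not set(elem) <= set(seq[pos]):
            pos += 1
        if pos == len(seq):
            return False
        pos += 1
    return True


# The example database from the table above (Seq. ID -> sequence).
DB = {10: "(bd)cb(ac)", 20: "(bf)(ce)b(fg)", 30: "(ah)(bf)abf",
      40: "(be)(ce)d", 50: "a(bd)bcb(ade)"}


def support(pattern):
    """Number of sequences in DB that contain `pattern`."""
    pat = parse(pattern)
    return sum(contains(parse(s), pat) for s in DB.values())


for p in ["hb", "hab", "(ah)b"]:
    print(p, support(p))  # a super-sequence never has higher support than <hb>
```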

Basic Algorithm: Breadth-First Search (GSP)
• L = 1
• While (Result_L != NULL):
  • Candidate generation
  • Prune
  • Test
  • L = L + 1
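The loop above can be sketched in Python as follows. This is a minimal illustration, not a reference GSP implementation: sequences are tuples of sorted item tuples, `parse`, `contains`, `drop`, `join` and `gsp` are hypothetical names, and pruning checks every subsequence obtained by dropping one item (a valid Apriori check when there are no time constraints) rather than GSP's contiguous-subsequence rule. It runs on the example database from the Apriori slide with min_sup = 2.

```python
def parse(s):
    """'(bd)cb(ac)' -> (('b', 'd'), ('c',), ('b',), ('a', 'c'))"""
    elems, i = [], 0
    while i < len(s):
        if s[i] == "(":
            j = s.index(")", i)
            elems.append(tuple(sorted(s[i + 1:j])))
            i = j + 1
        else:
            elems.append((s[i],))
            i += 1
    return tuple(elems)


def contains(seq, pat):
    """Greedy earliest-element test for 'pat is a subsequence of seq'."""
    pos = 0
    for elem in pat:
        while pos < len(seq) and not set(elem) <= set(seq[pos]):
            pos += 1
        if pos == len(seq):
            return False
        pos += 1
    return True


def drop(seq, e, k):
    """Remove item k of element e, deleting the element if it becomes empty."""
    elem = seq[e][:k] + seq[e][k + 1:]
    return seq[:e] + ((elem,) if elem else ()) + seq[e + 1:]


def join(s1, s2):
    """GSP join: s1 without its first item must equal s2 without its last item."""
    if drop(s1, 0, 0) != drop(s2, len(s2) - 1, len(s2[-1]) - 1):
        return None
    item = s2[-1][-1]
    if len(s2[-1]) == 1:                    # s2's last item is its own element
        return s1 + ((item,),)
    return s1[:-1] + (s1[-1] + (item,),)    # otherwise it joins s1's last element


def gsp(db, min_sup):
    """Level-wise (breadth-first) mining; returns {pattern: support}."""
    candidates = sorted({((x,),) for s in db for e in s for x in e})
    result, L = {}, 1
    while candidates:
        # Test: one database scan counts every length-L candidate.
        frequent = {}
        for c in candidates:
            sup = sum(contains(s, c) for s in db)
            if sup >= min_sup:
                frequent[c] = sup
        result.update(frequent)
        # Candidate generation for length L + 1.
        F = sorted(frequent)
        if L == 1:
            xs = [c[0][0] for c in F]
            new = [((x,), (y,)) for x in xs for y in xs]
            new += [((x, y),) for x in xs for y in xs if x < y]
        else:
            new = [join(s1, s2) for s1 in F for s2 in F]
            new = [c for c in new if c is not None]
        # Prune (Apriori): dropping any single item must leave a frequent sequence.
        candidates = sorted(
            c for c in set(new)
            if all(drop(c, e, k) in frequent
                   for e in range(len(c)) for k in range(len(c[e])))
        )
        L += 1
    return result


DB = [parse(s) for s in ["(bd)cb(ac)", "(bf)(ce)b(fg)", "(ah)(bf)abf",
                         "(be)(ce)d", "a(bd)bcb(ade)"]]
patterns = gsp(DB, min_sup=2)
print(patterns[parse("(bd)cba")])  # 2
```

Each pass of the `while` loop corresponds to one database scan in the mining-process trace; on this database the sketch finds the 19 length-2 patterns reported there, as well as <(bd)cba>.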

Finding Length-1 Sequential Patterns (min_sup = 2, on the example database above)
• Initial candidates: all singleton sequences
  • <a>, <b>, <c>, <d>, <e>, <f>, <g>, <h>
• Scan the database once and count the support of each candidate: <a>:3, <b>:5, <c>:4, <d>:3, <e>:3, <f>:2, <g>:1, <h>:1, so <g> and <h> are dropped.

The Mining Process (min_sup = 2, on the same example database)
• 1st scan: 8 candidates, 6 length-1 sequential patterns. Candidates: <a> <b> <c> <d> <e> <f> <g> <h>
• 2nd scan: 51 candidates, 19 length-2 sequential patterns; 10 candidates not in the DB at all. Candidates: <aa> <ab> … <af> <ba> <bb> … <ff> <(ab)> … <(ef)>
• 3rd scan: 46 candidates, 19 length-3 sequential patterns; 20 candidates not in the DB at all. Candidates include <abb> <aab> <aba> <baa> <bab> …
• 4th scan: 8 candidates, 6 length-4 sequential patterns. Candidates include <abba> <(bd)bc> …
• 5th scan: 1 candidate, 1 length-5 sequential pattern: <(bd)cba>
(The figure legend distinguishes candidates that cannot pass the support threshold from candidates not in the DB at all.)

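Generating Length-2 Candidates
• 51 length-2 candidates, built from the 6 frequent items: 6 × 6 = 36 of the form <xy> plus 6 × 5 / 2 = 15 of the form <(xy)>
• Without the Apriori property, all 8 items would be used: 8 × 8 + 8 × 7 / 2 = 92 candidates
• Apriori therefore prunes (92 − 51) / 92 ≈ 44.57% of the candidates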

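Pattern Growth (PrefixSpan)
• Prefix and suffix (projection)
• Given sequence <a(abc)(ac)d(cf)>:
  • <a>, <aa>, <a(ab)> and <a(abc)> are prefixes of it
  • the suffix is what remains after a prefix; e.g., the suffix w.r.t. prefix <aa> is <(_bc)(ac)d(cf)>, where "_" marks that the prefix's last item fell inside that same element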
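To make projection concrete, here is a small Python sketch (the `parse`, `fmt` and `suffix` names are hypothetical) that computes the suffix of a sequence with respect to a non-empty prefix by matching each prefix element at the earliest possible position; the items left over in the last matched element, i.e. those after the prefix's last item, are written with the "_" notation.

```python
def parse(s):
    """'a(abc)(ac)d(cf)' -> (('a',), ('a', 'b', 'c'), ('a', 'c'), ('d',), ('c', 'f'))"""
    elems, i = [], 0
    while i < len(s):
        if s[i] == "(":
            j = s.index(")", i)
            elems.append(tuple(sorted(s[i + 1:j])))
            i = j + 1
        else:
            elems.append((s[i],))
            i += 1
    return tuple(elems)


def fmt(elems):
    """Inverse of parse: single-item elements are written without parentheses."""
    return "".join(e[0] if len(e) == 1 else "(" + "".join(e) + ")" for e in elems)


def suffix(seq_str, prefix_str):
    """Suffix of a sequence w.r.t. a non-empty prefix, or None if it is absent."""
    seq, prefix = parse(seq_str), parse(prefix_str)
    pos = -1
    for elem in prefix:            # match each prefix element as early as possible
        pos += 1
        while pos < len(seq) and not set(elem) <= set(seq[pos]):
            pos += 1
        if pos == len(seq):
            return None
    last = max(prefix[-1])         # the prefix's last item
    rest = "".join(x for x in seq[pos] if x > last)
    head = "(_" + rest + ")" if rest else ""
    return head + fmt(seq[pos + 1:])


for p in ["a", "aa", "ab", "a(abc)"]:
    print(p, suffix("a(abc)(ac)d(cf)", p))
```

The `aa` case yields <(_bc)(ac)d(cf)>, the same sequence that appears in the <aa>-projected database of the PrefixSpan example.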

An Example (min_sup = 2):

PrefixSpan (the example, to be continued)
• Step 1: Find length-1 sequential patterns (pattern:support): <a>:4, <b>:4, <c>:4, <d>:3, <e>:3, <f>:3
• Step 2: Divide the search space into six subsets according to the six prefixes.
• Step 3: Find the subsets of sequential patterns by constructing the corresponding projected databases and mining each recursively.

Example
• Find sequential patterns having prefix <a>:
  • Scan sequence database S once. Sequences in S containing <a> are projected w.r.t. <a> to form the <a>-projected database.
  • Scan the <a>-projected database once to count its frequent items (item:support): <a>:2, <b>:4, <(_b)>:2, <c>:4, <d>:2, <f>:2
  • These give six length-2 sequential patterns having prefix <a>: <aa>:2, <ab>:4, <(ab)>:2, <ac>:4, <ad>:2, <af>:2
• Recursively, all sequential patterns having prefix <a> can be further partitioned into 6 subsets. Construct the respective projected databases and mine each.
  • E.g., the <aa>-projected database has two sequences: <(_bc)(ac)d(cf)> and <(_e)>.

PrefixSpan Algorithm
Main idea: use frequent prefixes to divide the search space and to project sequence databases, so that only the relevant sequences are searched.
PrefixSpan(α, i, S|α)
• Scan S|α once and find the set of frequent items b such that
  • b can be assembled to the last element of α to form a sequential pattern; or
  • <b> can be appended to α to form a sequential pattern.
• For each frequent item b, append it to α to form a sequential pattern α', and output α'.
• For each α', construct the α'-projected database S|α' and call PrefixSpan(α', i+1, S|α').
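Below is a minimal PrefixSpan sketch in Python. It runs on the five-sequence database from the Apriori slide (the table for the PrefixSpan example is not in this transcript), so its output can be compared with the GSP trace. Instead of copying suffixes, each projected entry is a pointer (sequence, element, item) to where the earliest match of the prefix ends, a pseudo-projection style; `prefixspan` and `mine` are hypothetical names, and an item b counts toward "assemble to the last element" either inside the partial element (_…) or in a later element that contains the whole last element plus b.

```python
from collections import defaultdict


def parse(s):
    """'(bd)cb(ac)' -> (('b', 'd'), ('c',), ('b',), ('a', 'c'))"""
    elems, i = [], 0
    while i < len(s):
        if s[i] == "(":
            j = s.index(")", i)
            elems.append(tuple(sorted(s[i + 1:j])))
            i = j + 1
        else:
            elems.append((s[i],))
            i += 1
    return tuple(elems)


def prefixspan(db, min_sup):
    """Return {pattern: support}; a pattern is a tuple of sorted item tuples."""
    result = {}

    def mine(alpha, projected):
        # Each entry (seq, e, i): the earliest match of alpha in seq ends at
        # item i of element e, so the suffix starts right after it.
        last = set(alpha[-1]) if alpha else set()
        set_ext, seq_ext = defaultdict(set), defaultdict(set)
        for k, (seq, e, i) in enumerate(projected):      # one scan of S|alpha
            if alpha:
                for b in seq[e][i + 1:]:                 # (_b) in the same element
                    set_ext[b].add(k)
            for elem in seq[e + 1:]:
                for b in elem:
                    seq_ext[b].add(k)                    # <b> appended as a new element
                    if alpha and last <= set(elem) and b > max(last):
                        set_ext[b].add(k)                # later element holds last + b
        for kind, counts in (("set", set_ext), ("seq", seq_ext)):
            for b, ks in sorted(counts.items()):
                if len(ks) < min_sup:
                    continue
                if kind == "set":                        # assemble b to the last element
                    new, need = alpha[:-1] + (alpha[-1] + (b,),), last | {b}
                else:                                    # append <b>
                    new, need = alpha + ((b,),), {b}
                result[new] = len(ks)                    # output alpha'
                new_proj = []                            # build S|alpha'
                for k in sorted(ks):
                    seq, e, i = projected[k]
                    if kind == "set" and b in seq[e][i + 1:]:
                        new_proj.append((seq, e, seq[e].index(b)))
                    else:
                        e2 = next(j for j in range(e + 1, len(seq)) if need <= set(seq[j]))
                        new_proj.append((seq, e2, seq[e2].index(b)))
                mine(new, new_proj)                      # recurse on alpha'

    mine((), [(seq, -1, -1) for seq in db])
    return result


DB = [parse(s) for s in ["(bd)cb(ac)", "(bf)(ce)b(fg)", "(ah)(bf)abf",
                         "(be)(ce)d", "a(bd)bcb(ade)"]]
patterns = prefixspan(DB, min_sup=2)
print(patterns[parse("(bd)cba")])  # 2
```

Because the pointer always marks the earliest match, every later occurrence of an item is still visible in the suffix, so no candidate generation is needed: only items that actually occur in S|α are ever counted.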

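Approximate Match: Compatibility Matrix
• The compatibility matrix $C(d_i, d_j)$ gives the probability that the true symbol is $d_i$ when $d_j$ is observed
• When you observe d1, spread its count as d1: 90%, d2: 5%, d3: 5%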

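Match
• The degree to which pattern P is retained/reflected in sequence S
• When $l_S = l_P$: $M(P,S) = \Pr(P \mid S) = \prod_{i=1}^{l_P} C(p_i, s_i)$
• When $l_S > l_P$: $M(P,S) = \max_{S'} M(P,S')$, the maximum over all possible length-$l_P$ subsequences $S'$ of $S$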

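Calculating the Max over All Subsequences
• Dynamic programming:
  $M(p_1 \dots p_i,\ s_1 \dots s_j) = \max\{\, M(p_1 \dots p_{i-1},\ s_1 \dots s_{j-1}) \cdot C(p_i, s_j),\ \ M(p_1 \dots p_i,\ s_1 \dots s_{j-1}) \,\}$
  with base cases $M(\emptyset, \cdot) = 1$ and $M(p_1 \dots p_i, \emptyset) = 0$ for $i \ge 1$
• Time: $O(l_P \cdot l_S)$
• When the compatibility matrix is sparse: $O(l_S)$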
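A direct Python transcription of the recurrence is sketched below, assuming symbols are strings and the compatibility matrix is a dict keyed by (true symbol, observed symbol) with missing entries treated as 0. The 3-symbol matrix is hypothetical: only the d1 column (90% / 5% / 5%) comes from the compatibility-matrix slide, and the d2 and d3 columns are assumed to follow the same pattern.

```python
def match(P, S, C):
    """M(P, S) by dynamic programming.

    M[i][j] is the match of p1..pi in s1..sj. C[(p, s)] is the compatibility
    of true symbol p with observed symbol s; missing pairs count as 0.
    """
    lp, ls = len(P), len(S)
    # Base cases: the empty pattern matches with value 1; a non-empty
    # pattern cannot match an empty sequence (value 0).
    M = [[1.0] * (ls + 1)] + [[0.0] * (ls + 1) for _ in range(lp)]
    for i in range(1, lp + 1):
        for j in range(1, ls + 1):
            M[i][j] = max(M[i - 1][j - 1] * C.get((P[i - 1], S[j - 1]), 0.0),  # align p_i with s_j
                          M[i][j - 1])                                          # skip s_j
    return M[lp][ls]


symbols = ["d1", "d2", "d3"]
# Hypothetical matrix: 0.9 on the diagonal, 0.05 elsewhere.
C = {(p, s): 0.9 if p == s else 0.05 for p in symbols for s in symbols}

print(match(["d1", "d2"], ["d1", "d2"], C))  # 0.9 * 0.9
print(match(["d1"], ["d2", "d1"], C))        # best alignment uses the second symbol
```

The table has (l_P + 1) × (l_S + 1) cells, each filled in constant time, which is where the O(l_P · l_S) bound comes from.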

Match in D
• Average over all sequences in D: $M(P, D) = \frac{1}{|D|} \sum_{S \in D} M(P, S)$

Spread of Match
• If the compatibility matrix is the identity matrix (no noise), the match of P in D equals its support, expressed as a fraction of |D|
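The identity-matrix case can be checked numerically. This sketch re-declares the dynamic-programming `match` so it stands alone, averages it over a small hypothetical database of symbol strings, and uses an identity compatibility matrix; `match_in_db` is an illustrative name.

```python
def match(P, S, C):
    """M(P, S) via the DP recurrence; missing C entries count as 0."""
    lp, ls = len(P), len(S)
    M = [[1.0] * (ls + 1)] + [[0.0] * (ls + 1) for _ in range(lp)]
    for i in range(1, lp + 1):
        for j in range(1, ls + 1):
            M[i][j] = max(M[i - 1][j - 1] * C.get((P[i - 1], S[j - 1]), 0.0),
                          M[i][j - 1])
    return M[lp][ls]


def match_in_db(P, D, C):
    """M(P, D): the average of M(P, S) over all sequences S in D."""
    return sum(match(P, S, C) for S in D) / len(D)


D = ["abc", "ac", "bc"]                       # hypothetical symbol sequences
identity = {(x, x): 1.0 for x in "abc"}       # no noise: C is the identity

# With the identity matrix, M(P, S) is 1 if P is a subsequence of S, else 0,
# so the average is the fraction of sequences containing P.
print(match_in_db("ac", D, identity))         # 2 of the 3 sequences contain <ac>
```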

Anti-Monotone
• The match of a pattern P in a symbol sequence S is less than or equal to the match of any subpattern of P in S.
• The match of a pattern P in a sequence database D is less than or equal to the match of any subpattern of P in D.
• Hence any support-based algorithm can be used.
• More patterns match than under exact support, so an efficient solution is required:
  • Sample-based algorithms
  • Border collapsing of ambiguous patterns

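Chernoff Bound
• Given sample size n and range R of a random variable, with probability $1 - \delta$ the true value $\mu$ lies within $\epsilon$ of the value $\hat{\mu}$ observed in the sample, where
  $\epsilon = \sqrt{\dfrac{R^2 \ln(1/\delta)}{2n}}$
• Distribution-free
• More conservative
• Sample size: chosen so that the sample fits in memory
• Restricted spread: for pattern $P = p_1 p_2 \dots p_L$, $R = \min_{1 \le i \le L} \mathrm{match}[p_i]$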

Algorithm
• Scan DB: $O(N \cdot \min(L_S \cdot m,\ L_S + m^2))$
  • Find the match of each individual symbol
  • Take a random sample of sequences
• Identify borders that embrace the set of ambiguous patterns: $O(m^{L_P} \cdot |S| \cdot L_P \cdot n)$
  • Min_match
  • Existing methods for association rule mining
• Locate the border of frequent patterns in the entire DB via border collapsing
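The slide does not spell out how a sampled pattern is labelled ambiguous. A common reading, assumed here, is to compare its sample match against min_match with the Chernoff margin ε: clearly above means frequent, clearly below means infrequent, and anything inside the ±ε band is ambiguous and has to be resolved on the full DB. The function name and the sample values are hypothetical.

```python
def classify(sample_match, min_match, eps):
    """Label a pattern from its match in the sample (assumed +/- eps rule)."""
    if sample_match > min_match + eps:
        return "frequent"
    if sample_match < min_match - eps:
        return "infrequent"
    return "ambiguous"  # cannot be decided from the sample alone


# Hypothetical sample matches for three patterns
sample = {"P1": 0.50, "P2": 0.10, "P3": 0.35}
labels = {p: classify(m, min_match=0.3, eps=0.1) for p, m in sample.items()}
print(labels)
```

Under this reading, the two borders mentioned above enclose exactly the patterns labelled "ambiguous".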

Border Collapsing
• If memory cannot hold the counters of all ambiguous patterns:
  • Probe-and-collapse: binary search
  • Probe the patterns with the highest collapsing power until memory is filled
• If memory can hold all patterns up to the 1/x layer (where x is a power of 2), the space of ambiguous patterns can be narrowed to at most 1/x of the original one
• If a level-wise search takes y scans of the DB, only $O(\log_x y)$ scans are necessary when the border collapsing technique is employed
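The slide gives no pseudocode for probe-and-collapse, so the following is a deliberately simplified sketch: it assumes the ambiguous patterns form a single chain of levels (a real implementation works on a lattice of patterns) and that each DB scan can hold counters for x − 1 probes. It only illustrates where the O(log_x y) scan count comes from; `collapse_border` and `is_frequent` are hypothetical names.

```python
def collapse_border(lo, hi, is_frequent, x):
    """x-ary probe-and-collapse along one chain of levels.

    Assumes level `lo` is known frequent, the border lies in [lo, hi], and
    frequency is anti-monotone (every level below the border is frequent).
    Each loop iteration stands for one DB scan that counts up to x - 1 probes.
    Returns (border, scans).
    """
    scans = 0
    while lo < hi:
        width = hi - lo
        # x - 1 evenly spaced probe levels in (lo, hi]
        probes = sorted({lo + (k * width + x - 1) // x for k in range(1, x)})
        results = {p: is_frequent(p) for p in probes}
        scans += 1
        # Collapse: keep only the gap between the highest frequent probe
        # and the lowest infrequent probe.
        lo = max([p for p in probes if results[p]], default=lo)
        hi = min([p for p in probes if not results[p]], default=hi + 1) - 1
    return lo, scans


border_at_11 = lambda level: level <= 11   # hypothetical ground truth
print(collapse_border(0, 16, border_at_11, x=2))  # binary probing
print(collapse_border(0, 16, border_at_11, x=4))  # more probes per scan
```

With 16 ambiguous levels, binary probing (x = 2) locates the border in 4 scans and x = 4 needs 2, whereas a level-wise search in this chain spends one scan per level.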

Periodic Pattern
• Full periodic pattern
  • ABCABCABC
• Partial periodic pattern
  • ABC ADC ACC ABC
• Pattern hierarchy
  • ABC ABC ABC DE DE DE DE ABC ABC ABC DE DE DE DE ABC ABC ABC DE DE DE DE
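A small sketch of how the partial periodic example can be checked, assuming the series is cut into consecutive segments whose length equals the period and that "*" marks a "don't care" position. This encoding, the `periodic_hits` name and the example patterns "A*C" and "AB*" are assumptions for illustration.

```python
def periodic_hits(series, pattern):
    """Count period-length segments of `series` matching `pattern`,
    where '*' matches any symbol; the period is len(pattern).
    Returns (matching segments, total segments)."""
    p = len(pattern)
    segments = [series[k:k + p] for k in range(0, len(series) - p + 1, p)]
    hits = sum(all(c == "*" or c == s for c, s in zip(pattern, seg))
               for seg in segments)
    return hits, len(segments)


print(periodic_hits("ABCABCABC", "ABC"))     # full periodic: every segment matches
print(periodic_hits("ABCADCACCABC", "A*C"))  # partial: middle position varies
print(periodic_hits("ABCADCACCABC", "AB*"))  # holds in only some segments
```

In "ABC ADC ACC ABC" no full pattern repeats in every segment, but "A*C" does, which is what makes it a partial periodic pattern.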

Periodic Pattern
• Recent achievements:
  • Partial periodic pattern
  • Asynchronous periodic pattern
  • Meta pattern
  • InfoMiner/InfoMiner+/STAMP

Clustering Sequential Data
• CLUSEQ
• ApproxMAP