Web Site Example

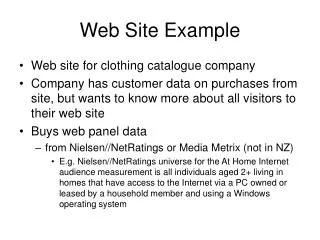


Web Site Example • Web site for clothing catalogue company • Company has customer data on purchases from site, but wants to know more about all visitors to their web site • Buys web panel data from Nielsen//NetRatings or Media Metrix (not in NZ) • E.g. the Nielsen//NetRatings universe for At Home Internet audience measurement is all individuals aged 2+ living in homes that have access to the Internet via a PC owned or leased by a household member and using a Windows operating system

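Fit Poisson Model • R Code:

# Numbers of people making 0, 1, ..., 9 and 10 or more visits
visit.dist <- c(2046,318,129,66,38,30,16,11,9,10,55)
# Log-likelihood of the simple Poisson model; the last cell is right-censored at 10+
lpois <- function(lambda,data) {
  visits <- 0:9
  sum(data[1:10]*log(dpois(visits,lambda))) +
    data[11]*log(ppois(9,lambda,lower.tail=FALSE))
}
# Maximise by minimising the negative log-likelihood over 0 < lambda < 10
optimise(function(param){-lpois(param,visit.dist)},c(0,10))

• Result: maximum value of log-likelihood is achieved at λ=0.72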
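To see how well the fitted Poisson model describes the data, the observed counts can be set beside the counts the model expects. The following is a minimal sketch that plugs in the rounded estimate λ=0.72 from the slide; the names lambda.hat, p.fit and comparison are introduced here for illustration only.

```r
# Observed numbers of people making 0, 1, ..., 9 and 10+ visits (from the slide)
visit.dist <- c(2046,318,129,66,38,30,16,11,9,10,55)
lambda.hat <- 0.72          # rounded estimate reported on the slide
n <- sum(visit.dist)

# Fitted probabilities for 0..9 visits, plus the 10+ tail
p.fit <- c(dpois(0:9, lambda.hat), ppois(9, lambda.hat, lower.tail = FALSE))
expected <- n * p.fit

comparison <- data.frame(visits   = c(0:9, "10+"),
                         observed = visit.dist,
                         expected = round(expected, 1))
print(comparison)
```

The fitted model expects far fewer zero-visit people, and far fewer people with 10 or more visits, than were observed, which is the usual sign of heterogeneity in visiting rates.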

Nature of Heterogeneity • Unobserved (or random) heterogeneity • The visiting rate λ is assumed to vary across the population according to some distribution • No attempt is made to explain why people differ in their visiting rates • Observed (or deterministic) heterogeneity • Explanatory variables are observed for each person • We explicitly link the value of λ for each person to their values of the explanatory variables • E.g. Poisson regression model

Poisson Regression Model • Let Yi be the number of times that individual i visits the web site • Assume Yi is distributed as a Poisson random variable with mean λi • Suppose each individual’s mean λi is related to their observed explanatory characteristics xi by λi = exp(β0 + β′xi) • Take logs of household income and age first • R code, using glm function for Poisson regression:

# Poisson regression with the default log link
glm.siteVisits <- glm(Visits ~ logHouseholdIncome + Sex + logAge + HH.Size,
                      family=poisson(), data=siteVisits)
summary(glm.siteVisits)

Poisson Regression Estimates • Can also fit model using maximum likelihood as for simple Poisson model, but this does not give standard errors directly
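One common way to get approximate standard errors from a hand-coded likelihood is to ask optim for the Hessian of the negative log-likelihood at the optimum and invert it. The sketch below does this for the simple Poisson model rather than the regression, to keep it short; the function name negll and the choice of the L-BFGS-B method with bounds are assumptions made here, not part of the original slides.

```r
# Observed numbers of people making 0, 1, ..., 9 and 10+ visits (from the slides)
visit.dist <- c(2046,318,129,66,38,30,16,11,9,10,55)

# Negative log-likelihood of the simple Poisson model with a censored 10+ cell
negll <- function(lambda) {
  -(sum(visit.dist[1:10] * dpois(0:9, lambda, log = TRUE)) +
      visit.dist[11] * ppois(9, lambda, lower.tail = FALSE, log.p = TRUE))
}

# Bounded optimisation keeps lambda positive; hessian = TRUE returns the
# numerically differentiated Hessian at the optimum
fit <- optim(1, negll, method = "L-BFGS-B", lower = 0.01, upper = 10,
             hessian = TRUE)

# Asymptotic standard error: square root of the inverse Hessian's diagonal
se <- sqrt(diag(solve(fit$hessian)))
print(c(estimate = fit$par, std.error = se))
```

The same idea carries over to models with several parameters, where solve(fit$hessian) gives an approximate covariance matrix for all of them.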

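Poisson vs Poisson Regression • The simple Poisson model (model B) is nested within the Poisson regression model (model A) • So we can use a likelihood ratio test to see whether model A fits the data better • Compute the test statistic 2(LLA − LLB), where LLA and LLB are the maximised log-likelihoods of the two models, and reject the null hypothesis of no difference if it exceeds the chi-squared critical value with degrees of freedom equal to the number of extra parameters in model A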
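The likelihood ratio test can be sketched in R as follows. The visits.csv data are not available here, so the data frame siteVisits.sim is simulated purely for illustration: only its column names follow the slides, and the coefficients used to generate it are made up.

```r
set.seed(1)
n <- 500

# Hypothetical data: column names follow the slides, values are simulated
siteVisits.sim <- data.frame(
  logHouseholdIncome = log(runif(n, 20000, 120000)),
  Sex                = rbinom(n, 1, 0.5),
  logAge             = log(runif(n, 18, 70)),
  HH.Size            = sample(1:6, n, replace = TRUE)
)
siteVisits.sim$Visits <- rpois(
  n, exp(0.5 + 0.1 * siteVisits.sim$HH.Size - 0.3 * siteVisits.sim$Sex))

# Model B: simple Poisson (intercept only); model A: Poisson regression
model.B <- glm(Visits ~ 1, family = poisson(), data = siteVisits.sim)
model.A <- glm(Visits ~ logHouseholdIncome + Sex + logAge + HH.Size,
               family = poisson(), data = siteVisits.sim)

# Likelihood ratio statistic and its chi-squared reference distribution
lr.stat  <- as.numeric(2 * (logLik(model.A) - logLik(model.B)))
extra.df <- length(coef(model.A)) - length(coef(model.B))
crit     <- qchisq(0.95, df = extra.df)   # 5% level, chosen for illustration
p.value  <- pchisq(lr.stat, df = extra.df, lower.tail = FALSE)

print(c(statistic = lr.stat, critical = crit, p.value = p.value))
```

For two nested glm fits, anova(model.B, model.A, test = "Chisq") reports the same statistic as a difference in deviances.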

Expected Number of Visits • So person 2 should visit the site less often than person 1

Poisson Regression Fit • Poisson regression model improves fit over simple Poisson model • But not by much • Try introducing random heterogeneity instead of, or as well as, observed heterogeneity • Possibilities include: • Zero-inflated Poisson model • Zero-inflated Poisson regression • Negative binomial distribution • Negative binomial regression

Zero-inflated Poisson Regression • Assume that a proportion π of people never visit the site • However other people visit according to Poisson model • Probability distribution: P(Y=0) = π + (1−π)exp(−λ); P(Y=y) = (1−π) λ^y exp(−λ)/y! for y = 1, 2, …

Zero-inflated Poisson Model • Note that Poisson model predicts too few zeros • Assume that a proportion π of people never visit the site • Remaining people visit according to Poisson distribution • No deterministic component • R code:

# Log-likelihood of the zero-inflated Poisson model; the last cell is 10+ visits
lzipois <- function(pi,lambda,data) {
  visits <- 1:9
  # Zeros come from never-visitors (pi) or from Poisson visitors with no visits
  data[1]*log(pi + (1-pi)*dpois(0,lambda)) +
    sum(data[2:10]*log((1-pi)*dpois(visits,lambda))) +
    data[11]*log((1-pi)*ppois(9,lambda,lower.tail=FALSE))
}
# param[1] is pi, param[2] is lambda
optim(c(0.5,1),function(param){-lzipois(param[1],param[2],visit.dist)})

• Likelihood maximised at π=0.73, λ=2.71

Zero-inflated Poisson Regression • Can add deterministic heterogeneity to zero-inflated Poisson (ZIP) model • Again assume that a proportion π of people never visit the site • However other people visit according to Poisson regression model • Probability distribution:

Fit ZIP Regression Model • R code:

siteVisits <- read.csv("visits.csv")
# Log-likelihood of the ZIP regression model; column 2 of data holds the
# visit counts and columns 3 to 6 hold the covariates
lzipreg <- function(param,data) {
  zpi <- param[1]
  # param[2] is the intercept, param[3:6] are the covariate coefficients
  lambda <- exp(param[2] + data[,3:6] %*% param[3:6])
  sum(log(ifelse(data[,2] == 0,zpi,0) + (1-zpi)*dpois(data[,2],lambda)))
}
optim(c(.7,2,0,-0.1,0.1,0),
      function(param){-lzipreg(param,as.matrix(siteVisits))},
      control=list(maxit=1000))

• Likelihood maximised at π=0.74, β=(1.90,-0.09,-0.13, 0.11,0.02)

Simple NBD Model • Recall the negative binomial distribution • The number of visits Y made by each individual has a Poisson distribution with rate λ • λ has a Gamma distribution across the population • At the population level, the number of visits has a negative binomial distribution

Fitting NBD Model • R code:

# Log-likelihood of the NBD model: Gamma mixing distribution with shape alpha
# and rate beta, so dnbinom has size alpha and prob beta/(beta+1)
lnbd2 <- function(alpha,beta,data) {
  visits <- 0:9
  prob <- beta/(beta+1)
  sum(data[1:10]*log(dnbinom(visits,alpha,prob))) +
    data[11]*log(1-pnbinom(9,alpha,prob))
}
optim(c(1,1),function(param) {-lnbd2(param[1],param[2],visit.dist)})

• Likelihood maximised for α=0.157 and β=0.197
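The three models without covariates can be compared on the same footing by evaluating each log-likelihood on the visit counts. The sketch below plugs in the rounded estimates reported on the slides, so the values are approximate; the helper loglik and the use of AIC as a summary are choices made here for illustration.

```r
# Observed numbers of people making 0, 1, ..., 9 and 10+ visits (from the slides)
visit.dist <- c(2046,318,129,66,38,30,16,11,9,10,55)

# Log-likelihood of the grouped counts given the 11 cell probabilities
loglik <- function(p) sum(visit.dist * log(p))

# Simple Poisson, lambda = 0.72
p.pois <- c(dpois(0:9, 0.72), ppois(9, 0.72, lower.tail = FALSE))

# Zero-inflated Poisson, pi = 0.73 and lambda = 2.71
pi.hat <- 0.73
lam    <- 2.71
p.zip  <- (1 - pi.hat) * c(dpois(0:9, lam), ppois(9, lam, lower.tail = FALSE))
p.zip[1] <- p.zip[1] + pi.hat      # never-visitors add to the zero cell

# NBD, alpha = 0.157 and beta = 0.197
alpha.hat <- 0.157
prob.hat  <- 0.197 / (0.197 + 1)
p.nbd <- c(dnbinom(0:9, alpha.hat, prob.hat),
           pnbinom(9, alpha.hat, prob.hat, lower.tail = FALSE))

ll    <- c(Poisson = loglik(p.pois), ZIP = loglik(p.zip), NBD = loglik(p.nbd))
n.par <- c(Poisson = 1, ZIP = 2, NBD = 2)
print(cbind(logLik = ll, parameters = n.par, AIC = -2 * ll + 2 * n.par))
```

Both heterogeneity models give a much higher log-likelihood than the simple Poisson model for the cost of one extra parameter.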

NBD Regression • Can also add deterministic heterogeneity to NBD model • Individuals visit according to an NBD regression model, in which the covariates scale each person's Gamma-distributed visiting rate by exp(γ′xi) • Probability distribution: P(Yi=y) = Γ(α+y)/(Γ(α) y!) × (β/(β+exp(γ′xi)))^α × (exp(γ′xi)/(β+exp(γ′xi)))^y • Reduces to simple NBD model when γ=0

NBD Regression Estimates • Can also fit model using maximum likelihood, but this does not give standard errors directly

Covariates In General • Choose a probability distribution that fits the individual-level outcome variable • This has parameters (a.k.a. latent traits) θi • Think of the individual-level latent traits θi as a function of covariates x • Incorporate a mixing distribution to capture the remaining heterogeneity in the θi • The variation in θi not explained by x • Fit this model (e.g. using maximum likelihood)

New Concepts • How to incorporate covariates in probability models • Poisson, zero-inflated Poisson and NBD regression models for count data • However, getting the outcome variable distribution right was more crucial here than introducing covariates • Importance of covariates is often exaggerated

Reach and Frequency Models • Advertising is a major industry • NZ Ad expenditure reached $1.5bn in 2000 • Many companies spend millions each year • Crucial to understand the effects of this expenditure • Major outcomes include how many people are reached by an ad campaign, and how many times • Known as reach and frequency (R&F) • Typically analysis is limited to calculating media exposure, not advertising exposure

Reach and Frequency Models • Data on TV viewing, newspaper and magazine readership, radio listening etc is routinely gathered • Ratings and readership figures determine the price of space in these media • However this data typically does not enable detailed reach and frequency analysis • E.g. readership questions ask about the last issue read, and how many of an average four issues are read • Longitudinal data is collected on TV viewing, but item non-response causes problems with direct analysis • Models are needed to derive complete reach and frequency analyses from the collected data

Beta-Binomial Model for R&F • If an advertiser has placed an ad in each of 10 issues of a magazine, the beta-binomial model assumes that: • Each person has a probability p of reading each issue • These probabilities follow a beta distribution • Each issue is read independently, within and across individuals • Distribution of # issues read for each person is binomial • The resulting aggregate exposure distribution is the beta-binomial • Applied to R&F analysis by Metheringham (1964) • Still widely used • But not very accurate
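The beta-binomial exposure distribution, and the reach and average frequency derived from it, can be sketched as follows. The beta parameters a and b are hypothetical values chosen only for illustration, and dbetabinom is a helper defined here rather than a base R function.

```r
# Beta-binomial probability of reading exactly k of n issues, when each
# person's reading probability p follows a Beta(a, b) distribution
dbetabinom <- function(k, n, a, b) {
  choose(n, k) * beta(k + a, n - k + b) / beta(a, b)
}

n.issues <- 10      # ad placed in each of 10 issues, as on the slide
a <- 0.3            # hypothetical beta parameters, for illustration only
b <- 1.2

# Exposure (frequency) distribution: P(0 issues read), ..., P(10 issues read)
freq.dist <- dbetabinom(0:n.issues, n.issues, a, b)

reach    <- 1 - freq.dist[1]                          # exposed at least once
avg.freq <- sum((0:n.issues) * freq.dist) / reach     # mean exposures if reached

print(round(freq.dist, 4))
print(c(reach = reach, average.frequency = avg.freq))
```

In practice a and b would be estimated from readership data rather than chosen by hand.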

Modified BBM • One problem with the beta-binomial model is that it does not model loyal viewers/readers/listeners well • By adding a point mass at 1 to the beta distribution of exposure probabilities, the BBM can be modified to accommodate loyal readers etc • Derived by Chandon (1976); improved by Danaher (1988), Austral. J. Statist.

Multiple Media Vehicles • The BBM (and modified BBM) focus on exposure to one media vehicle (e.g. one magazine) over the course of an ad campaign • Need to extend to multiple vehicles • Model both reading choice and times read, in one combined model • Could assume independence • E.g. Dirichlet-multinomial model • Assumes independence of irrelevant alternatives (IIA) • But there are known to be correlations between different media vehicles • E.g. women’s magazines, business papers, programmes on TV1 vs TV3

Multiple Media Vehicles • Models need to take correlations between media vehicles into account • Log-linear models have been used • But these are computationally intensive for moderately large advertising schedules • Canonical expansion model (Danaher 1992) • Uses Goodhardt and Ehrenberg’s “duplication of viewing” law to minimise need for multivariate correlations • Data on pairwise correlations used, but higher order joint probabilities are derived using this law • Higher order interactions are assumed to be zero • Canonical expansions are used for the joint probabilities to minimise computations

FMCG Sales/Purchasing • Retail sales figures for fast moving consumer goods • Have good aggregate weekly sales figures • Data available down to SKU level • Data collected at store level • Know when total sales are changing over time • Can also investigate overall response to promotions • Using store level data can give more accurate results, and even allow some segmentation by chain or region • However sales figures cannot show us who is buying more when sales increase, or who is affected by promotions • Heavy buyers? Light buyers? New buyers? • Households with kids? Retired couples? Flatters? • Even when overall sales are flat, there may be hidden changes • Marketing activities could be made more effective using this sort of information, so how can we find out about this?

Household Purchasing Data • Data about FMCG purchases collected from a panel of households • Can be collected through diaries • Or even weekly interviews, based on recall • Best method is currently to equip panel with scanners • These are used by each household member to record all items bought • ACNielsen (NZ) runs a scanner panel of over 1000 households • Data includes amount purchased, price, date, product details down to SKU level • Also have demographic characteristics of household

Common Research Questions • Who buys my product? • Perhaps better answered by U&A (usage and attitudes) study • How much do they buy? • How often? • Who are my heavy buyers? Light buyers? Frequent buyers? • How many are repeat buyers? • How does this compare to my other brands? How about my competitors? • Are my results normal? • How do they compare to similar products in other categories?

Observations • Usually there will be a wide range of purchasing intensity among buyers of each brand • Also a proportion who do not buy the brand • Instead of a whole brand, we can also look at a brand/package size combination • Similar findings apply at both levels

Another Example • Data gathered from a panel of 983 households • Purchases of Lux Flakes over a 12 week period • Various summary measures shown below

Example (continued) • Low penetration overall • 22 buyers, about 2% of panel • More than half the purchases were by “new” buyers • The cumulative purchasing distribution looks similar to the cumulative reach distributions from the last lecture

Negative Binomial Model • Fit NBD model – assumes Poisson process for purchase occasions, with Gamma heterogeneity • R code:

# Numbers of households making 0, 1, 2 and 3 purchases
purchase.dist <- c(961,17,3,2)
# Log-likelihood of the NBD model (Gamma shape alpha, rate beta)
lnbd3 <- function(alpha,beta,data) {
  visits <- 0:3
  prob <- beta/(beta+1)
  sum(data[1:4]*log(dnbinom(visits,alpha,prob)))
}
optim(c(1,1),function(param) {-lnbd3(param[1],param[2],purchase.dist)})

• Likelihood maximised for α=0.045 and β=1.514

Negative Binomial Model • Can also fit the model based on the observed values of two quantities • The proportion of people p0 making no purchases during the study period • The mean number of purchases made m (assuming that only one item is purchased at each purchase occasion) • Then solve for α and β numerically
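This fit from p0 and m can be sketched for the Lux Flakes counts. Under the parameterisation used in the fitting code (size α, prob β/(β+1)), the model implies p0 = (β/(β+1))^α and m = α/β, so substituting α = mβ leaves one equation in β. The function f and the search interval passed to uniroot are choices made here for illustration.

```r
# Numbers of households making 0, 1, 2 and 3 purchases (from the slides)
purchase.dist <- c(961,17,3,2)
n  <- sum(purchase.dist)
p0 <- purchase.dist[1] / n                 # observed proportion of non-buyers
m  <- sum((0:3) * purchase.dist) / n       # observed mean purchases per household

# With alpha = m * beta, solve log(p0) = m * beta * log(beta / (beta + 1))
f <- function(beta) m * beta * log(beta / (beta + 1)) - log(p0)
beta.hat  <- uniroot(f, interval = c(1e-6, 1000), tol = 1e-10)$root
alpha.hat <- m * beta.hat

print(c(alpha = alpha.hat, beta = beta.hat))
```

The resulting estimates can be set beside the maximum likelihood values α=0.045 and β=1.514 reported earlier.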

Multivariate NBD • Generalise to multiple time periods with durations Ti, i=1,…,t • Various partitionings of the Ti lead to variables that are also NBD • E.g. divide into the first s time periods and the remaining t - s • The values for the latter t - s periods, conditional on those for the first s, are multivariate NBD • α is incremented by the total purchases from the first s periods, and the mean is updated as a weighted average of the original mean and the observed mean. • So can easily apply empirical Bayes techniques using this model
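The empirical Bayes update described above can be illustrated in the simplest case of one observed period followed by one future period of the same length. This sketch assumes the Gamma rate β is expressed per observation period and plugs in the rounded Lux Flakes estimates; the variable names are introduced here for illustration.

```r
alpha.hat <- 0.045     # rounded estimates from the Lux Flakes fit
beta.hat  <- 1.514
T.obs     <- 1         # length of the observed period, in 12-week units

x <- 0:3               # purchases observed in the first period

# Gamma posterior for a household's purchase rate after observing x purchases
post.shape <- alpha.hat + x
post.rate  <- beta.hat + T.obs

# Expected purchases in the next period of the same length
cond.mean <- post.shape / post.rate

# The same quantity as a weighted average of the prior mean and the observed mean
weight <- beta.hat / (beta.hat + T.obs)
w.avg  <- weight * (alpha.hat / beta.hat) + (1 - weight) * (x / T.obs)

print(data.frame(observed = x, expected.next = cond.mean, weighted.avg = w.avg))
```

The two columns agree, which is the weighted-average property stated above.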

NBD Model for Longer Periods • Another property of the NBD is that purchases over a longer time period are also NBD (assuming that the purchasing process remains the same) • The mean number of purchases increases in proportion to the length of the period • But the parameter α remains fixed
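As a sketch of this scaling property, the Lux Flakes fit for one 12-week period can be projected to longer periods by keeping α fixed and scaling the mean. It assumes the purchasing process is unchanged and uses the rounded estimates from the earlier slide; T.len and the other names are introduced here.

```r
alpha.hat <- 0.045            # rounded estimates for one 12-week period
beta.hat  <- 1.514
T.len     <- c(1, 2, 4)       # period lengths in units of 12 weeks

# For a period of length T the NBD keeps size alpha, with prob = beta / (beta + T)
prob.T <- beta.hat / (beta.hat + T.len)
mean.T <- alpha.hat * T.len / beta.hat        # mean grows in proportion to T

# Penetration: proportion of households buying at least once in the period
penetration <- 1 - dnbinom(0, size = alpha.hat, prob = prob.T)

print(data.frame(weeks = 12 * T.len,
                 mean.purchases = mean.T,
                 penetration = penetration))
```

Penetration grows with the length of the period, but less than proportionally, because some of the extra purchases come from households that have already bought.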

NBD Model • The NBD model has been applied to products in a wide range of categories • It generally fits very well • The main exception (for diary data) is when the recording period is too short compared to the purchase frequency • Often people record shopping once in each period, rather than multiple times • Can cause problems if many people are expected to purchase once or more each period

α is Usually Constant • Typically α will be relatively constant across different products in the same category • This means that the heterogeneity in purchasing rates is similar across products • However β will vary to reflect the penetrations of the different products