Omphalos Session: Design and Results of the GI Competition

Join us for the Omphalos Session, featuring insightful presentations by Alexander Clark and Georgios Petasis, and an open discussion on the Omphalos and GI competition. Explore the competition's design, complexity measures for GI algorithms, and the results showcasing the state-of-the-art in grammar induction. Learn about the challenges in creating effective training data, evaluation methods, and task complexity. Engage with the community to enhance research and innovation in grammar induction algorithms.

Presentation Transcript

Omphalos Session Programme • Design & Results 25 mins • Award Ceremony 5 mins • Presentation by Alexander Clark 25 mins • Presentation by Georgios Petasis 10 mins • Open Discussion on Omphalos and GI competition 20 mins

Omphalos: Design and Results • Brad Starkie, François Coste, Menno van Zaanen

Contents • Design of the Competition • A complexity measure for GI • Results • Conclusions

Aims • Promote new and better grammatical inference (GI) algorithms • Provide a forum to compare GI algorithms • Provide an indicative measure of the current state of the art

Design Issues • Format of training data • Method of evaluation • Complexity of tasks

Training Data • Options: plain text or structured data; bracketed, partially bracketed, labelled or unlabelled; (+ve and –ve data) or (+ve data only) • Chosen: plain text, with both (+ve and –ve) and (+ve only) tasks • Similar to Abbadingo • Placed the fewest restrictions on competitors

Method of Evaluation • Options: classification of unseen examples; precision and recall; comparison of derivation trees • Chosen: classification of unseen examples • Similar to Abbadingo • Placed the fewest restrictions on competitors

Complexity of the Competition Tasks • The learning tasks should be sufficiently difficult • Outside the current state of the art, but not too difficult • Ideally, it should be provable that the training sentences are sufficient to identify the target language

Three axes of difficulty • Complexity of the underlying grammar • +ve and –ve data, or +ve data only • Similarity between –ve and +ve examples

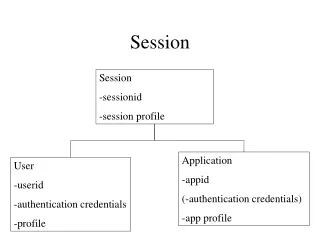

Complexity Measure for GI • Created a model of GI based on a brute-force search (non-polynomial) • Complexity measure = size of the hypothesis space created when the model is presented with a characteristic set

Hypothesis Space for GI • All CFGs can be converted to Chomsky Normal Form (CNF), for languages without the empty string • Given CNF, any sentence has a finite number of unlabelled derivations • Hence, given a maximum number of nterms, there is a finite number of labelled derivation trees • The grammar can be reconstructed given a sufficient number of derivation trees • All possible labelled derivation trees correspond to all possible CNF grammars, given the maximum number of nterms • Solution: calculate the maximum number of nterms and create all possible grammars
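To make the counting argument concrete, here is a minimal sketch. It assumes that the unlabelled CNF derivations of an n-word sentence are exactly its binary bracketings (counted by the Catalan number C(n-1)) and that every nonterminal node except the root may carry any of k labels. The function names are hypothetical, and the counts are per sentence; they are not the Omphalos complexity measure itself.

```python
from math import comb


def catalan(m: int) -> int:
    """m-th Catalan number: the number of full binary trees with m internal nodes."""
    return comb(2 * m, m) // (m + 1)


def unlabelled_cnf_trees(n: int) -> int:
    """Number of unlabelled binary derivation shapes for an n-word sentence (n >= 1)."""
    return catalan(n - 1)


def labelled_cnf_trees(n: int, k: int) -> int:
    """Number of labelled CNF derivation trees for an n-word sentence with k nonterminals.

    A CNF tree over n words has n - 1 binary nodes (A -> B C) and n preterminal
    nodes (A -> a), i.e. 2n - 1 nonterminal nodes. The root is fixed to the start
    symbol; each of the other 2n - 2 nodes may carry any of the k labels.
    """
    return unlabelled_cnf_trees(n) * k ** (2 * n - 2)


if __name__ == "__main__":
    # Growth of both counts with sentence length, for k = 3 nonterminals.
    for n in range(1, 7):
        print(n, unlabelled_cnf_trees(n), labelled_cnf_trees(n, k=3))
```

Even for short sentences the labelled count grows exponentially in both n and k, which is why a learner that enumerates these trees is non-polynomial.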

BruteForceLearner • Given the positive examples, construct all possible grammars • Discard any grammars that generate any negative sentences • Randomly select a grammar from the hypothesis set
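A toy version of the BruteForceLearner, offered only as a sketch. It swaps in a simpler enumeration than the slide describes: rather than building grammars from the derivation trees of the positive examples, it enumerates every subset of CNF rules over k nonterminals (a hypothetical tuple encoding, with nonterminal 0 as the start symbol). It keeps the grammars that derive every positive sentence and no negative sentence, checked with CYK, and then picks one at random. This is feasible only for tiny k and alphabets.

```python
import itertools
import random
from typing import FrozenSet, List, Optional, Sequence, Tuple

# Hypothetical encoding: ("bin", A, B, C) means A -> B C and ("lex", A, a) means A -> a.
# Nonterminals are the integers 0..k-1, and 0 is the start symbol.
Rule = Tuple
Grammar = FrozenSet[Rule]


def cyk_accepts(rules: Grammar, sentence: str, start: int = 0) -> bool:
    """CYK membership test for a CNF grammar; the empty string is never accepted."""
    n = len(sentence)
    if n == 0:
        return False
    # table[i][j] holds the nonterminals that derive sentence[i : i + j + 1]
    table = [[set() for _ in range(n)] for _ in range(n)]
    for i, ch in enumerate(sentence):
        table[i][0] = {r[1] for r in rules if r[0] == "lex" and r[2] == ch}
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            for split in range(1, length):
                left = table[i][split - 1]
                right = table[i + split][length - split - 1]
                for r in rules:
                    if r[0] == "bin" and r[2] in left and r[3] in right:
                        table[i][length - 1].add(r[1])
    return start in table[0][n - 1]


def all_rules(k: int, alphabet: str) -> List[Rule]:
    """Every CNF rule over k nonterminals and the given terminal alphabet."""
    nts = range(k)
    binary = [("bin", a, b, c) for a in nts for b in nts for c in nts]
    lexical = [("lex", a, t) for a in nts for t in alphabet]
    return binary + lexical


def brute_force_learner(positives: Sequence[str], negatives: Sequence[str],
                        k: int, alphabet: str,
                        seed: int = 0) -> Tuple[Optional[Grammar], int]:
    """Enumerate every rule subset, keep the consistent grammars, pick one at random.

    Returns (chosen grammar or None, number of consistent grammars).
    Exponential: there are 2 ** (k**3 + k * len(alphabet)) candidate grammars.
    """
    candidates = all_rules(k, alphabet)
    consistent = []
    for size in range(len(candidates) + 1):
        for subset in itertools.combinations(candidates, size):
            g = frozenset(subset)
            if all(cyk_accepts(g, s) for s in positives) and not any(
                    cyk_accepts(g, s) for s in negatives):
                consistent.append(g)
    if not consistent:
        return None, 0
    return random.Random(seed).choice(consistent), len(consistent)
```

For example, `brute_force_learner(["ab"], ["ba"], k=2, alphabet="ab")` searches 2^12 = 4096 candidate grammars. The second return value, the number of consistent grammars, loosely mirrors the hypothesis-space size used as a complexity measure.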

Characteristic Set of Positive Sentences • Put the grammar into minimal CNF form: if any single rule is removed, one or more sentences can no longer be derived • For each rule, add a sentence that can only be derived using that rule • Such a sentence exists if G is in minimal form • When presented with this set, one of the hypothesis grammars is correct
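One way to search for the per-rule sentences is sketched below. It assumes that the grammar is already in minimal CNF (a hypothetical tuple encoding with start symbol "S"), that sentences are strings of single-character terminals, and that a bounded exhaustive search up to `max_len` is acceptable. For each rule it returns the shortest sentence that G derives but G without that rule does not.

```python
import itertools
from typing import Dict, FrozenSet, Iterator, Optional, Tuple

# Hypothetical encoding: ("bin", A, B, C) is A -> B C and ("lex", A, a) is A -> a.
Rule = Tuple
Grammar = FrozenSet[Rule]


def cyk_accepts(rules: Grammar, sentence: str, start: str = "S") -> bool:
    """CYK membership test for a CNF grammar; the empty string is never accepted."""
    n = len(sentence)
    if n == 0:
        return False
    # table[i][j] holds the nonterminals that derive sentence[i : i + j + 1]
    table = [[set() for _ in range(n)] for _ in range(n)]
    for i, ch in enumerate(sentence):
        table[i][0] = {r[1] for r in rules if r[0] == "lex" and r[2] == ch}
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            for split in range(1, length):
                left = table[i][split - 1]
                right = table[i + split][length - split - 1]
                for r in rules:
                    if r[0] == "bin" and r[2] in left and r[3] in right:
                        table[i][length - 1].add(r[1])
    return start in table[0][n - 1]


def strings_up_to(alphabet: str, max_len: int) -> Iterator[str]:
    """All non-empty strings over the alphabet, shortest first."""
    for n in range(1, max_len + 1):
        for chars in itertools.product(alphabet, repeat=n):
            yield "".join(chars)


def characteristic_positives(rules: Grammar, alphabet: str,
                             max_len: int) -> Dict[Rule, Optional[str]]:
    """Map each rule to the shortest sentence that G derives but G minus that rule
    does not; None means no such sentence was found up to max_len."""
    witnesses: Dict[Rule, Optional[str]] = {}
    for rule in rules:
        reduced = rules - {rule}
        witnesses[rule] = next(
            (s for s in strings_up_to(alphabet, max_len)
             if cyk_accepts(rules, s) and not cyk_accepts(reduced, s)),
            None)
    return witnesses


# Minimal CNF grammar for {a^n b^n : n >= 1}.
ANBN: Grammar = frozenset({
    ("bin", "S", "A", "B"),  # S -> A B
    ("bin", "S", "A", "T"),  # S -> A T
    ("bin", "T", "S", "B"),  # T -> S B
    ("lex", "A", "a"),       # A -> a
    ("lex", "B", "b"),       # B -> b
})

if __name__ == "__main__":
    for rule, sentence in characteristic_positives(ANBN, "ab", max_len=4).items():
        print(rule, sentence)
```

For this a^n b^n grammar the witnesses are "ab" (for S → A B, A → a and B → b) and "aabb" (for S → A T and T → S B), so the characteristic set is {ab, aabb}. A `None` entry would signal either a non-minimal grammar or a `max_len` that is too small.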

Characteristic Set of Negative Sentences • Given G, calculate the characteristic set of positive sentences • Construct the hypothesis set • For each hypothesis $H \neq G$ with $L(G) \not\subseteq L(H)$, add a +ve sentence $s$ such that $s \in L(G)$ but $s \notin L(H)$ • For each hypothesis $H \neq G$ with $L(H) \not\subseteq L(G)$, add a –ve sentence $s$ such that $s \in L(H)$ but $s \notin L(G)$ • Generating –ve data with this technique requires exponential time, so it could not be used to generate the –ve data in Omphalos.
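The two per-hypothesis steps can be sketched as a bounded search. The sketch assumes a small, explicit list of hypothesis grammars (a hypothetical tuple encoding with start symbol "S") and considers only strings no longer than `max_len`. The exponential cost noted on the slide comes from the size of the real hypothesis set; this toy example sidesteps it by listing two alternatives by hand.

```python
import itertools
from typing import FrozenSet, Iterator, List, Set, Tuple

# Hypothetical encoding: ("bin", A, B, C) is A -> B C and ("lex", A, a) is A -> a.
Grammar = FrozenSet[Tuple]


def cyk_accepts(rules: Grammar, sentence: str, start: str = "S") -> bool:
    """CYK membership test for a CNF grammar; the empty string is never accepted."""
    n = len(sentence)
    if n == 0:
        return False
    # table[i][j] holds the nonterminals that derive sentence[i : i + j + 1]
    table = [[set() for _ in range(n)] for _ in range(n)]
    for i, ch in enumerate(sentence):
        table[i][0] = {r[1] for r in rules if r[0] == "lex" and r[2] == ch}
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            for split in range(1, length):
                left = table[i][split - 1]
                right = table[i + split][length - split - 1]
                for r in rules:
                    if r[0] == "bin" and r[2] in left and r[3] in right:
                        table[i][length - 1].add(r[1])
    return start in table[0][n - 1]


def strings_up_to(alphabet: str, max_len: int) -> Iterator[str]:
    """All non-empty strings over the alphabet, shortest first."""
    for n in range(1, max_len + 1):
        for chars in itertools.product(alphabet, repeat=n):
            yield "".join(chars)


def distinguishing_examples(target: Grammar, hypotheses: List[Grammar],
                            alphabet: str, max_len: int) -> Tuple[Set[str], Set[str]]:
    """For each hypothesis H != target: the shortest s in L(target) but not in L(H)
    becomes a positive example, and the shortest s in L(H) but not in L(target)
    becomes a negative example (only strings up to max_len are searched)."""
    candidates = list(strings_up_to(alphabet, max_len))
    positives: Set[str] = set()
    negatives: Set[str] = set()
    for h in hypotheses:
        if h == target:
            continue
        pos = next((s for s in candidates
                    if cyk_accepts(target, s) and not cyk_accepts(h, s)), None)
        neg = next((s for s in candidates
                    if cyk_accepts(h, s) and not cyk_accepts(target, s)), None)
        if pos is not None:
            positives.add(pos)
        if neg is not None:
            negatives.add(neg)
    return positives, negatives


# Target {a^n b^n}, an overgeneral hypothesis a+ b+, and an undergeneral one {ab}.
TARGET: Grammar = frozenset({("bin", "S", "A", "B"), ("bin", "S", "A", "T"),
                             ("bin", "T", "S", "B"), ("lex", "A", "a"), ("lex", "B", "b")})
OVERGENERAL: Grammar = frozenset({("bin", "S", "X", "Y"), ("bin", "X", "X", "A"),
                                  ("lex", "X", "a"), ("bin", "Y", "Y", "B"),
                                  ("lex", "Y", "b"), ("lex", "A", "a"), ("lex", "B", "b")})
UNDERGENERAL: Grammar = frozenset({("bin", "S", "A", "B"), ("lex", "A", "a"), ("lex", "B", "b")})
```

Against the a^n b^n target, the overgeneral a+ b+ hypothesis contributes the –ve sentence "aab", and the undergeneral {ab} hypothesis contributes the +ve sentence "aabb".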

Creation of the Target Grammars • Benchmark problems identified in the literature: Stolcke-93, Nakamura-02, Cook-76, Hopcroft-01 • The number of nterms, terms and rules was selected • Grammars were randomly generated, useless rules removed, and CF constructs (center recursion) added • A characteristic set of sentences was generated and the complexity measured • To test whether a grammar was deterministic, LR(1) tables were created using Bison • For non-deterministic grammars, non-deterministic constructs were added
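Removing useless rules is a standard CFG clean-up step, and the slide does not say which procedure was used. The following is a minimal sketch of the textbook version: it first drops non-generating nonterminals and then drops unreachable ones, and the order matters. The production encoding and the convention that an uppercase initial marks a nonterminal are assumptions of this sketch.

```python
from typing import List, Set, Tuple

# A production is (lhs, rhs). Symbols starting with an uppercase letter are
# treated as nonterminals and everything else as terminals (sketch convention).
Production = Tuple[str, Tuple[str, ...]]


def is_nonterminal(symbol: str) -> bool:
    return symbol[:1].isupper()


def remove_useless(productions: List[Production], start: str) -> List[Production]:
    """Remove non-generating and then unreachable nonterminals (in that order)."""
    # Step 1: fixpoint of nonterminals that derive at least one terminal string.
    generating: Set[str] = set()
    changed = True
    while changed:
        changed = False
        for lhs, rhs in productions:
            if lhs not in generating and all(
                    not is_nonterminal(s) or s in generating for s in rhs):
                generating.add(lhs)
                changed = True
    kept = [(lhs, rhs) for lhs, rhs in productions
            if lhs in generating
            and all(not is_nonterminal(s) or s in generating for s in rhs)]

    # Step 2: keep only nonterminals reachable from the start symbol.
    reachable = {start}
    stack = [start]
    while stack:
        current = stack.pop()
        for lhs, rhs in kept:
            if lhs == current:
                for s in rhs:
                    if is_nonterminal(s) and s not in reachable:
                        reachable.add(s)
                        stack.append(s)
    return [(lhs, rhs) for lhs, rhs in kept if lhs in reachable]


EXAMPLE: List[Production] = [
    ("S", ("A", "B")),
    ("S", ("a",)),
    ("A", ("a",)),
    ("B", ("B", "b")),  # B never terminates: non-generating
    ("C", ("c",)),      # C is never used: unreachable
]
```

In the example, B never derives a terminal string, so S → A B is dropped. Only then does A become unreachable, which leaves just S → a. Reversing the two steps would wrongly keep A → a.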

Creation of Positive Data • Characteristic set generated from the grammar • Additional training examples added • Size of training set: 10 to 20 times the size of the characteristic set • The longest training example was shorter than the longest test example

Creation of Negative Data • Not guaranteed to be sufficient • Originally created randomly (a bad idea) • For problems 6a–10, regular equivalents of the grammars were constructed (Nederhof-00), and negative data could be generated from the regular equivalent of each CFG • Center recursion expanded to a finite depth vs. true center recursion • Equal numbers of positive and negative examples in the test sets
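The following hedged sketch illustrates why the method of generating negative data matters, using the toy language a^n b^n (true center recursion). It draws near-miss negatives from the regular superset a+ b+, which stands in for the regular equivalents mentioned on the slide; it is not a reconstruction of the Nederhof-00 method. The near-miss negatives are then contrasted with naive random strings. All names are hypothetical.

```python
import random
from typing import List


def in_anbn(s: str) -> bool:
    """Membership in the context-free language {a^n b^n : n >= 1}."""
    n = len(s) // 2
    return n >= 1 and s == "a" * n + "b" * n


def sample_regular_superset(rng: random.Random, max_run: int) -> str:
    """Sample from the regular language a+ b+, with run lengths capped at max_run."""
    return "a" * rng.randint(1, max_run) + "b" * rng.randint(1, max_run)


def near_miss_negatives(count: int, max_run: int = 6, seed: int = 0) -> List[str]:
    """Distinct strings from the regular superset a+ b+ that are not in a^n b^n."""
    available = max_run * max_run - max_run  # run-length pairs (i, j) with i != j
    if count > available:
        raise ValueError(f"only {available} distinct near-miss strings exist")
    rng = random.Random(seed)
    negatives = set()
    while len(negatives) < count:
        s = sample_regular_superset(rng, max_run)
        if not in_anbn(s):
            negatives.add(s)
    return sorted(negatives, key=lambda x: (len(x), x))


def random_negatives(count: int, max_len: int = 12, seed: int = 0) -> List[str]:
    """Naive alternative: random strings over {a, b} that are not in a^n b^n."""
    rng = random.Random(seed)
    out: List[str] = []
    while len(out) < count:
        s = "".join(rng.choice("ab") for _ in range(rng.randint(1, max_len)))
        if not in_anbn(s):
            out.append(s)
    return out
```

With these settings, most random strings contain "ba" and can be rejected by a trivial pattern check. By contrast, every near-miss negative has the same a+ b+ shape as the positive data, so rejecting it requires tracking the counts.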

Participation • Omphalos first page: ~1000 hits from 270 domains • Attempted to discard hits from crawlers and bots • All continents except 2 • Data sets: downloaded by 70 different domains • Oracle: 139 label submissions by 8 contestants (4) • Short test sets: 76 submissions • Large test sets: 63 submissions

Techniques Used • Prob 1: solved by hand • Probs 3, 4, 5 and 6: pattern matching using n-grams; the entry generated its own negative data, assuming that the majority of randomly generated strings would not be contained within the language • Probs 2, 6.2 and 6.4: distributional clustering and ABL (Alignment-Based Learning)
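The slide names the n-gram technique only at a high level. This minimal sketch shows one way n-gram pattern matching can classify strings; it is an illustration and not the competitor's actual method. It assumes single-character symbols and boundary markers `^` and `$`. A string is accepted if and only if every n-gram it contains also occurred in the positive training data. Random strings serve as pseudo-negative data for estimating how often the model rejects.

```python
import random
from typing import Iterable, List, Set

BOUNDARY_START, BOUNDARY_END = "^", "$"  # assumed not to occur in the alphabet


def ngrams(sentence: str, n: int) -> Set[str]:
    """Character n-grams of the sentence, padded with boundary markers (n >= 2)."""
    padded = BOUNDARY_START * (n - 1) + sentence + BOUNDARY_END * (n - 1)
    return {padded[i:i + n] for i in range(len(padded) - n + 1)}


def train(positives: Iterable[str], n: int) -> Set[str]:
    """Collect every n-gram that occurs in the positive training data."""
    seen: Set[str] = set()
    for s in positives:
        seen |= ngrams(s, n)
    return seen


def accepts(seen: Set[str], sentence: str, n: int) -> bool:
    """Classify as positive iff every n-gram of the sentence was seen in training."""
    return ngrams(sentence, n) <= seen


def random_pseudo_negatives(alphabet: str, count: int, max_len: int,
                            seed: int = 0) -> List[str]:
    """Random strings treated as negative data, assuming most lie outside the language."""
    rng = random.Random(seed)
    return ["".join(rng.choice(alphabet) for _ in range(rng.randint(1, max_len)))
            for _ in range(count)]


if __name__ == "__main__":
    model = train(["ab", "aabb", "aaabbb"], n=2)
    pseudo = random_pseudo_negatives("ab", count=1000, max_len=10)
    rate = sum(not accepts(model, s, 2) for s in pseudo) / len(pseudo)
    print(f"pseudo-negatives rejected: {rate:.2%}")
```

With bigrams trained on ab, aabb and aaabbb, the acceptor rejects ba but also accepts aab. In effect it has learned the regular language a+ b+, which illustrates why the nature of the negative test data matters when scoring such an entry.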

Conclusions • The way in which negative data is created is crucial to judging the performance of competitors' entries

Review of Aims • Promote the development of new and better GI algorithms: partially achieved • A forum to compare different GI algorithms: achieved • Provide an indicative measure of the state of the art: achieved