Scaling Data Stream Processing Systems on Multicore Architectures

Explore the importance of data stream processing on multicore architectures, focusing on scalability and common design aspects of modern DSP systems like Twitter Heron and Apache Storm. Discover challenges and key findings to optimize performance.

Presentation Transcript

Scaling Data Stream Processing Systems on Multicore Architectures. Shuhao Zhang, Shuhao.zhang@comp.nus.edu.sg

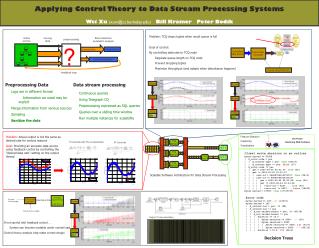

Importance of Data Stream Processing • Data stream processing (DSP) has attracted much attention for real-time analysis applications. • Many DSP systems have been proposed recently, e.g., Twitter Heron, Apache Storm, Apache Flink, …

DSPS on Modern Hardware • DSPSs are mostly built for scale-out. • Multicore architectures are an attractive platform for DSPSs. • However, fully exploiting their computation power can be challenging. • Related systems: Saber (SIGMOD’16), CPU+GPU; StreamBox (ATC’17), out-of-order arrival; … • This thesis: multicore-awareness.

Agenda • Revisiting the Design of Data Stream Processing Systems on Multi-Core Processors, ICDE’17 • Shuhao Zhang, Bingsheng He, Daniel Dahlmeier, Amelie Chi Zhou, Thomas Heinze

Common Designs of Recent DSP Systems • Existing systems mainly focus on scaling out using a cluster of commodity machines. • Three common design aspects: (1) pipelined processing with message passing; (2) on-demand data parallelism; (3) JVM-based implementation. • It turns out that the JVM (e.g., GC) incurs only a minor overhead during stream processing.

Design Aspect 1: Pipelined Processing with Message Passing • Word-count application: input tuples flow through Data Source → Split → Count → Sink. • Aim: achieve low processing latency.

Design Aspect 2: On-demand Data Parallelism • Modern DSP systems such as Storm and Flink are also designed to support data parallelism. • Word-count application: Data Source → Split → Count → Sink. • Aim: achieve high throughput.
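The two design aspects can be illustrated together with a minimal sketch. It is not how Storm or Flink are implemented: it uses Python threads and in-process queues as a stand-in for message passing, and the names `run_word_count`, `n_split`, `n_count`, and `STOP` are hypothetical. Each operator runs as one or more replicas, and a word is always routed to the same Count replica.

```python
import queue
import threading
from collections import Counter

STOP = object()  # hypothetical end-of-stream marker


def run_word_count(sentences, n_split=2, n_count=2):
    """Data Source -> Split replicas -> Count replicas -> Sink, linked by queues."""
    split_in = queue.Queue()
    count_in = [queue.Queue() for _ in range(n_count)]
    sink_in = queue.Queue()

    def split():
        # Pipelined processing: consume a tuple, emit words downstream.
        while (sentence := split_in.get()) is not STOP:
            for word in sentence.split():
                # The same word always reaches the same Count replica.
                count_in[hash(word) % n_count].put(word)

    def count(inbox):
        local = Counter()
        while (word := inbox.get()) is not STOP:
            local[word] += 1
        # Simplification: counts are emitted once at end of stream.
        sink_in.put(local)

    splitters = [threading.Thread(target=split) for _ in range(n_split)]
    counters = [threading.Thread(target=count, args=(q,)) for q in count_in]
    for t in splitters + counters:
        t.start()

    for sentence in sentences:  # data source
        split_in.put(sentence)
    for _ in splitters:
        split_in.put(STOP)
    for t in splitters:
        t.join()
    for q in count_in:
        q.put(STOP)
    for t in counters:
        t.join()

    total = Counter()  # sink merges the per-replica results
    for _ in counters:
        total.update(sink_in.get())
    return dict(total)


print(run_word_count(["a b a", "b c"]))
```

Raising `n_split` or `n_count` adds replicas of one operator without touching the others, which is the sense in which data parallelism is applied on demand.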

Can DSP Systems Perform Well on Scale-up Architectures? • Scale-up: a single large machine with 100s or 1000s of cores. • Scale-out: a cluster of commodity machines. Image source: http://www.tweaktown.com/news/41273/sgi-demonstrates-30-million-iops-beast-with-intel-p3700-s-at-sc14/index.html

Scale-up Architecture is Complex • Non-uniform memory access (NUMA) brings performance issues. • Complex memory subsystem and deep execution pipelines. • There is a lack of detailed studies on profiling the aforementioned common design aspects of DSP systems on scale-up architectures. [Figure: Socket 0 and Socket 1 (8 cores each) with DRAM (128 GB) and links labeled 51.2 GB/s and 16 GB/s; a processor pipeline diagram showing the front end (instruction fetch units, instruction length decoder (ILD), L1-I cache, ITLB, instruction queue (IQ), instruction decoders, 1.5k D-ICache, instruction decode queue (IDQ)), the memory hierarchy (L1-D cache, DTLB, L2 cache, LLC cache), and the back end (renamer, scheduler, execution core, retirement).]

Benchmark Design • There has been no standard benchmark for DSP systems, especially on scale-up architectures. • We design our benchmark according to the four criteria proposed by Jim Gray [1]. [1] J. Gray, Benchmark Handbook: For Database and Transaction Processing Systems. San Francisco, CA, USA: Morgan Kaufmann Publishers Inc., 1992.

Scalability on Varying Number of Cores/Sockets • Figure panels: (a) Storm, (b) Flink, with the 2-socket and 4-socket points marked. • Both scale well on a single socket. • Both scale poorly on multiple sockets: overhead > additional resource benefits.

Are there any Problems when Running on a Single Socket? • Processor stalls: (a) Storm, (b) Flink. • 70% of the execution time is spent in processor stalls.

Are there any Problems when Running on a Single Socket? • Front-end stalls: (a) Storm, (b) Flink. • Front-end stalls are a major bottleneck.

Instruction Footprint • L1-ICache: 32KB; L2 cache: 256KB. • (i) The common range of their instruction footprints is 1KB to 10MB and 1KB to 1MB, respectively. • (ii) 30~50% and 20~40% of the instruction footprints, respectively, are larger than the L1-ICache. • Mainly caused by the pipelined-processing design.

Are there any Problems when Running on Multiple Sockets? • Operators may be scheduled on different CPU sockets. • Table: LLC miss stalls when running Storm with four CPU sockets. • Up to 24% of the total execution time is wasted due to remote memory access. • Mainly caused by the message-passing design.

Key Findings (recap) • Unmanaged massive pipelined processing: a large instruction footprint between two consecutive invocations of the same function, causing significant L1-ICache misses. • NUMA-oblivious message-passing design: further performance degradation due to significant remote memory access overhead.

Agenda • BriskStream: Scaling Data Stream Processing on Shared-Memory Multicore Architectures, SIGMOD’19 • Shuhao Zhang*, Jiong He, Amelie Chi Zhou, Bingsheng He. *Work done while a research trainee at SAP Singapore.

Outline • Motivation • Performance Model • Algorithm Design • Experimental Results

NUMA Servers • (a) Server A: HUAWEI KunLun, cores (w/o HT) @ 1.2GHz. • (b) Server B: HP ProLiant DL980, cores (w/o HT) @ 2.27 GHz. • The two servers have different NUMA topologies.

Word Count (WC) as an Example • Each operator can be scaled to multiple instances (called replicas). • Each replica can be independently scheduled. • Figure: (a) logical view of WC; (b) one example execution plan of WC, using three CPU sockets. • Given a NUMA machine (limited HW resources), what is the optimal deployment plan? • This talk focuses on placement optimization.

Zoom into the System Design • Producer (e.g., Splitter) and consumer (e.g., Counter). • The relative location of the two affects the processing behavior of the consumer. • Each operator (except Spout) is a consumer of its upstream operator. [Figure: steps 1 to 4 of handing a tuple from producer to consumer through a queue and memory, with "pass value" and "pass reference" labels, across Socket 0 and Socket x.]

The Performance Model • Topology: … → Bolt → Bolt → Sink. • Input rate of an operator: depends on the output rates of its upstream operators. • Output rate of an operator: depends on its processing speed and on the output rates of its upstream operators. • The model tries to estimate the throughput, which is the Sink’s output rate.

Estimating the Output Rate of an Operator • It can be estimated as: number of tuples processed / time needed to process them. • Consider an arbitrary observation time t. • The numerator is the total aggregated input tuples that arrived during t. • The denominator is the aggregated time spent on processing all of those tuples (assume ). • The per-tuple term stands for the average time spent on handling each tuple under an execution plan.

Estimating Time Spent on Each Tuple • Execution time: the actual function execution time, assuming the operator already has the input data. • Fetch time: the time required to fetch the input data (locally or remotely) from its producers. • The fetch time varies under different plans, which is why the time spent on each tuple varies under different execution plans.
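The dependence of throughput on placement can be sketched with a toy calculation. This is an illustrative reading of the model, not the formula from the talk: it assumes one output tuple per input tuple, a single constant `remote_fetch` penalty whenever an operator and any of its producers sit on different sockets, and an output rate capped by both the input rate and the processing capacity. All names and numbers (`Op`, `OPS`, `per_tuple_time`, `output_rate`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Op:
    exec_time: float       # time per tuple when the input is already local
    producers: tuple = ()  # names of upstream operators


def per_tuple_time(name, ops, plan, remote_fetch):
    """Execution time plus fetch time; fetch is assumed zero when collocated."""
    op = ops[name]
    remote = any(plan[p] != plan[name] for p in op.producers)
    return op.exec_time + (remote_fetch if remote else 0.0)


def output_rate(name, ops, plan, source_rate, remote_fetch):
    """Output rate = min(input rate, processing capacity), assuming selectivity 1."""
    op = ops[name]
    if op.producers:
        input_rate = sum(
            output_rate(p, ops, plan, source_rate, remote_fetch)
            for p in op.producers
        )
    else:
        input_rate = source_rate  # the spout reads from the external source
    capacity = 1.0 / per_tuple_time(name, ops, plan, remote_fetch)
    return min(input_rate, capacity)


# Hypothetical three-operator chain with made-up costs (arbitrary time units).
OPS = {
    "spout": Op(0.25),
    "bolt": Op(0.5, ("spout",)),
    "sink": Op(0.25, ("bolt",)),
}

collocated = {"spout": 0, "bolt": 0, "sink": 0}
split = {"spout": 0, "bolt": 1, "sink": 0}
print(output_rate("sink", OPS, collocated, source_rate=2.0, remote_fetch=0.5))
print(output_rate("sink", OPS, split, source_rate=2.0, remote_fetch=0.5))
```

Moving the bolt to another socket adds the fetch penalty to its per-tuple time, halves its capacity, and the lower rate propagates to the sink.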

NUMA-aware Placement is Tricky • Stochasticity is introduced into the problem: the objective value (e.g., throughput) or weight (e.g., resource demand) of each operator is no longer constant. • The placement decisions may conflict with each other, so ordering is introduced into the problem. • We apply the branch-and-bound technique.

Algorithm Running Example • Sockets: S0 and S1. • Allocate four operators to two sockets (choices). • Three operators cannot be allocated to the same socket due to the resource constraint.
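A branch-and-bound search over such a placement can be sketched as follows. This is a toy version under stated assumptions, not the algorithm from the talk: the objective is swapped for a simpler one (minimize the number of producer-consumer edges that cross sockets, as a stand-in for remote-access cost), every operator has unit resource demand, and each socket holds at most `capacity` operators. The function name `place` and the chain A → B → C → D are hypothetical.

```python
def place(operators, edges, n_sockets, capacity):
    """Assign operators to sockets, minimizing the number of cross-socket edges."""
    best = {"cost": float("inf"), "plan": None}

    def cost(plan):
        # Count only edges whose two endpoints are both already placed.
        return sum(
            1 for u, v in edges
            if u in plan and v in plan and plan[u] != plan[v]
        )

    def branch(i, plan, load):
        partial = cost(plan)
        # Bound: the cost can only grow as more operators are placed,
        # so a partial plan that is no better than the best one is pruned.
        if partial >= best["cost"]:
            return
        if i == len(operators):
            best["cost"], best["plan"] = partial, dict(plan)
            return
        for socket in range(n_sockets):
            if load[socket] < capacity:  # resource constraint
                plan[operators[i]] = socket
                load[socket] += 1
                branch(i + 1, plan, load)
                load[socket] -= 1
                del plan[operators[i]]

    branch(0, {}, [0] * n_sockets)
    return best["cost"], best["plan"]


chain = [("A", "B"), ("B", "C"), ("C", "D")]
print(place(["A", "B", "C", "D"], chain, n_sockets=2, capacity=2))
```

With a capacity of two operators per socket, three operators can never share a socket, so at least one edge of the chain must cross sockets.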

Outline • Motivation • Performance Model • Algorithm Design • Experimental Results (144 cores, w/o HT)

Experimental Evaluation • Applications: WC: word-count; FD: fault-detection; SD: spike-detection; LR: linear road benchmark. • Result: much higher throughput.

Evaluation of Scalability • (1) Scales much better (144 cores). • (2) Unable to scale up linearly. • Stream compression? [TerseCades, ATC’18] • Figure labels: Linear Road Benchmark; Tray 1; Tray 2.

Recap • BriskStream scales stream computation towards hundreds of cores under NUMA effects, even without a tedious tuning process. • We demonstrated that relative-location awareness (or varying-processing-capability awareness) is the key to addressing NUMA effects when optimizing stream computation on modern multicore architectures.

Agenda • Scaling Consistent Stateful Stream Processing on Shared-Memory Multicore Architectures • Shuhao Zhang, Yingjun Wu, Feng Zhang, Bingsheng He

Outline • Motivation • Our System Designs • Experimental Results

Data Stream Processing Systems • Recent efforts have demonstrated ultra-fast stream processing on large-scale multicore architectures. • However, a potential weakness is the inadequate support for consistent stateful stream processing.

Linear Road Benchmark as an Example • Road Speed and Vehicle Cnt maintain and update the road congestion status. • Toll Notification computes the "toll" of each vehicle depending on the road congestion status. • The road congestion status is application state shared among streaming operators.

The Current System Design • Recall: pipelined processing with message passing; on-demand data parallelism. • (1) Concurrency control: key-based stream partition; lock.

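Key-based Stream Partition • Cannot handle the general case. [Figure: events and states for AYE are assigned to Instance 1 and those for PIE to Instance 2 ("No conflict!"); the rule "Pay extra penalty if AYE is empty!" on the PIE side needs a copy of AYE at Instance 2.]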
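Why key-based partitioning breaks down in the general case can be shown with a small sketch. The partitioning function `owner` and the helper `route` are hypothetical, and the byte-sum hash is chosen only to keep the example deterministic. An event is easy to route when every state it touches has the same owner; an event touching states owned by different instances has no single home.

```python
def owner(key, n_instances):
    """Hypothetical, deterministic partitioning function: state key -> instance."""
    return sum(key.encode()) % n_instances


def route(event_keys, n_instances):
    """Return the owning instance if all touched keys share one owner, else None."""
    owners = {owner(key, n_instances) for key in event_keys}
    return owners.pop() if len(owners) == 1 else None


print(route(["AYE"], 2))         # touches one state: routable
print(route(["PIE"], 2))         # touches one state: routable
print(route(["AYE", "PIE"], 2))  # touches states on two instances: not routable
```

Handling the last case requires copying state between instances or some other form of coordination, which is what partitioning was meant to avoid.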

Lock-based State Sharing • Poor performance. [Figure: Instance 1 and Instance 2 both access the shared AYE and PIE states.]
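A minimal sketch of lock-based sharing, assuming a single global lock around a shared dictionary (real systems may lock at a finer granularity). The name `run_lock_based` is hypothetical. Every instance may touch every state, so the result is correct for the general case, but each access is serialized.

```python
import threading


def run_lock_based(events, n_instances=2):
    """Apply (key, delta) events from several instances to one shared state."""
    state = {}
    lock = threading.Lock()

    def instance(chunk):
        for key, delta in chunk:
            with lock:  # every state access waits for the same lock
                state[key] = state.get(key, 0) + delta

    # Round-robin the events over the instances.
    chunks = [events[i::n_instances] for i in range(n_instances)]
    threads = [threading.Thread(target=instance, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return state


print(run_lock_based([("AYE", 1), ("PIE", 2)] * 100))
```

The lock makes the updates safe, but it also means that adding instances does not add parallelism for the state-access part of the work.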

The Current System Design • Recall: pipelined processing with message passing; on-demand data parallelism. • (1) Concurrency control: key-based stream partition (cannot handle the general case); lock-based (poor performance). • (2) Access ordering: buffer and sort (performs poorly).

Consistent Stateful Stream Processing • Maintain the consistency of shared states by employing transactional semantics. • State transaction: the set of state accesses triggered by the processing of a single input event at one executor. • Consistency property: the state transaction schedule must be conflict-equivalent to …

Existing Solutions Revisited • Limited parallelism opportunities. • Can we explore more parallelism opportunities? • Large synchronization overhead for processing every event. • Can we reduce such overhead?

System Overview • To explore more parallelism opportunities: three-step procedure abstraction; punctuation signal slicing scheduling. • To reduce state access synchronization overhead: fine-grained parallel state access. • Up to 6.8 times higher throughput with similar or even lower processing latency!

Three-step Procedure • Process step • State access step • Post-process step
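One way to read the three steps is sketched below. The slide names only the steps, so everything else here is an assumption: events are `(timestamp, key, delta)` tuples with unique timestamps, a batch is the set of events between two punctuations, each event touches a single state, and the function name `process_batch` is hypothetical. State accesses are postponed, grouped per state, and applied in timestamp order, so groups on different states are independent of each other.

```python
from collections import defaultdict


def process_batch(events, state):
    """Handle one batch of (timestamp, key, delta) events in three steps."""
    # Step 1, process: turn each event into a pending state access
    # instead of touching the shared state immediately.
    pending = defaultdict(list)
    for ts, key, delta in events:
        pending[key].append((ts, delta))

    # Step 2, state access: apply the accesses of each state in timestamp
    # order. Chains on different keys are disjoint, so they could be run
    # by different threads without locking (done sequentially here).
    results = {}
    for key, chain in pending.items():
        for ts, delta in sorted(chain):
            state[key] = state.get(key, 0) + delta
            results[ts] = state[key]

    # Step 3, post-process: finish each event using the value it observed.
    return [(ts, results[ts]) for ts, _, _ in sorted(events)]


shared = {}
print(process_batch([(2, "AYE", 1), (1, "AYE", 5), (3, "PIE", 2)], shared))
print(shared)
```

Because ordering is enforced once per batch and per state, events no longer need to synchronize one at a time.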