CLEF Interactive Track Overview





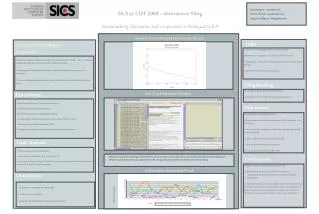

iCLEF
Douglas W. Oard, UMD, USA
Julio Gonzalo, UNED, Spain
CLEF 2003

Outline
• Goals
• Track design
• Participating teams
• Results

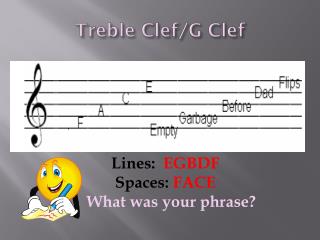

Figure: CLEF pipeline (Query → Search → Ranked List) vs. iCLEF pipeline (Query Formulation → Query Translation → Translated Query → Search → Ranked List → Selection → Document Examination → Document Use), with a Query Reformulation loop.

iCLEF Goals
• Track design
  • Component evaluation (since 2001)
  • End-to-end evaluation (since 2002)
• System evaluation
  • Support for interactive document selection
  • Support for query creation
  • Support for iterative refinement

Document Selection Experiments
Topic Description → Standard Ranked List → Interactive Selection
Measure: $F_{\alpha=0.8}$

End-to-End Experiments
Topic Description → Query Formulation → Automatic Retrieval → Interactive Selection
Measures: Average Precision (query formulation), $F_{\alpha=0.8}$ (interactive selection)

Topics
• Eight “broad” (multifaceted) topics
  • 1: The Ames espionage case (C100)
  • 2: European car industry (C106)
  • 3: Computer security (C109)
  • 4: Computer animation (C111)
  • 5: Economic policies of Édouard Balladur (C120)
  • 6: Marriage Jackson-Presley (C123)
  • 7: German armed forces out-of-area (C133)
  • 8: EU fishing quotas (C139)
• Selected from the CLEF 2002 topic set
  • Not too easy (e.g., proper name not perfectly predictive)
  • Not too hard (e.g., requiring specialized expertise)
  • (Relevant documents in every collection?)

Test Collection
• Any CLEF-2002 language collection
• Systran baseline translations
  • Spanish to English, English to Spanish
• Augmented relevance judgments
  • Start with CLEF-2002 judgments
  • Enrich pools with:
    • Top 20 documents from every iteration
    • Every document judged by a user
  • Judge all additions to the pools
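The pool-enrichment step can be sketched as set arithmetic. This is a minimal illustration, not the track's actual tooling: the function name `enrich_pool`, its parameters, and the representation of documents as string IDs are all hypothetical. It collects the top 20 documents from each iteration's ranked list plus every user-judged document, then keeps only those not already covered by the CLEF-2002 judgments, since those are the additions that need new assessment.

```python
from typing import Iterable, List, Set


def enrich_pool(
    clef_judged: Set[str],
    iteration_rankings: Iterable[List[str]],
    user_judged: Iterable[str],
    depth: int = 20,
) -> Set[str]:
    """Hypothetical helper: return pool additions that still need assessor judgments."""
    additions: Set[str] = set()
    # Top `depth` documents from every query iteration's ranked list.
    for ranking in iteration_rankings:
        additions.update(ranking[:depth])
    # Every document on which a user recorded a relevance judgment.
    additions.update(user_judged)
    # Documents already judged for CLEF-2002 do not need to be judged again.
    return additions - clef_judged


if __name__ == "__main__":
    judged = {"d1", "d2"}
    rankings = [["d1", "d3", "d4"], ["d4", "d5"]]
    print(sorted(enrich_pool(judged, rankings, ["d2", "d6"], depth=2)))
```

Using sets makes the union across iterations and users deduplicate automatically, which matches the intent that each added document is judged once.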

Measures of Effectiveness
• Query Formulation: Uninterpolated Average Precision
  • Expected value of precision [over relevant document positions]
  • Interpreted based on query content at each iteration
• Document Selection: Unbalanced F-Measure, $F_\alpha = \frac{1}{\alpha/P + (1-\alpha)/R}$
  • P = precision
  • R = recall
  • α = 0.8 favors precision
  • Models a setting where each selected document undergoes expensive human translation
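Both measures are short to compute. The sketch below is illustrative only (function names and the set-of-IDs representation are assumptions): `average_precision` averages precision at the rank of each relevant document, counting unretrieved relevant documents as zero, and `f_alpha` computes $F_\alpha = 1/(\alpha/P + (1-\alpha)/R)$ with α = 0.8 by default, returning 0 when no relevant document was selected.

```python
from typing import List, Set


def average_precision(ranking: List[str], relevant: Set[str]) -> float:
    """Uninterpolated AP: mean precision at each relevant document's rank.

    Relevant documents never retrieved contribute precision 0.
    """
    if not relevant:
        return 0.0
    hits = 0
    total = 0.0
    for rank, doc in enumerate(ranking, start=1):
        if doc in relevant:
            hits += 1
            total += hits / rank  # precision at this relevant position
    return total / len(relevant)


def f_alpha(selected: Set[str], relevant: Set[str], alpha: float = 0.8) -> float:
    """Van Rijsbergen's F_alpha = 1 / (alpha/P + (1 - alpha)/R)."""
    tp = len(selected & relevant)
    if tp == 0:
        return 0.0  # P or R is zero, so F is taken as zero
    p = tp / len(selected)
    r = tp / len(relevant)
    return 1.0 / (alpha / p + (1.0 - alpha) / r)


if __name__ == "__main__":
    print(average_precision(["a", "x", "b", "y"], {"a", "b", "c"}))
    print(f_alpha({"a", "b", "x"}, {"a", "b", "c", "d"}))
```

With α = 0.8, a selection with P = 2/3 and R = 1/2 scores 0.625, while swapping those values (P = 1/2, R = 2/3) scores lower, which is the precision preference the slide describes.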

Variation in Automatic Measures
• System
  • What we seek to measure
• Topic
  • Sample topic space, compute expected value
• Topic+System
  • Pair by topic and compute statistical significance
• Collection
  • Repeat the experiment using several collections
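The slide does not name the significance test used for the topic-paired comparison. As one possible choice, the sketch below applies an exact two-sided sign test to per-topic scores of two systems; the function name and the dict-of-scores input are hypothetical, and the track's own analysis may have used a different test.

```python
from math import comb
from typing import Dict, Tuple


def paired_sign_test(
    scores_a: Dict[str, float], scores_b: Dict[str, float]
) -> Tuple[int, int, float]:
    """Exact two-sided sign test over topics scored by both systems.

    Returns (wins_a, wins_b, p_value); tied topics are dropped.
    """
    topics = scores_a.keys() & scores_b.keys()  # pair by topic
    wins_a = sum(1 for t in topics if scores_a[t] > scores_b[t])
    wins_b = sum(1 for t in topics if scores_b[t] > scores_a[t])
    n = wins_a + wins_b
    if n == 0:
        return wins_a, wins_b, 1.0
    k = min(wins_a, wins_b)
    # P(X <= k) under Binomial(n, 0.5), doubled for two sides, capped at 1.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return wins_a, wins_b, min(1.0, 2 * tail)


if __name__ == "__main__":
    a = {f"C{i}": 0.6 for i in range(8)}
    b = {f"C{i}": 0.4 for i in range(8)}
    print(paired_sign_test(a, b))
```

The sign test only uses the direction of each per-topic difference, which suits small topic samples such as eight topics; a test that also uses magnitudes would be more powerful if its assumptions hold.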

Additional Effects in iCLEF
• Learning
  • Vary topic presentation order
• Fatigue
  • Vary system presentation order
• Topic+User (Expertise)
  • Ask about prior knowledge of each topic
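Varying topic and system order across users is a counterbalancing design. The sketch below is a hypothetical plan, not the official iCLEF 2003 matrix: each user sees every topic once, the topic order rotates by one position per user (a cyclic Latin square), and which of the two systems is used for the first half of the session alternates between users. The function name and parameters are illustrative assumptions.

```python
from typing import List, Tuple


def counterbalanced_plan(
    num_users: int, topics: List[str], systems: Tuple[str, str]
) -> List[List[Tuple[str, str]]]:
    """Hypothetical plan: per user, a list of (topic, system) in search order."""
    n = len(topics)
    half = n // 2
    plan = []
    for user in range(num_users):
        shift = user % n
        # Rotate topic order per user to spread learning effects across topics.
        order = topics[shift:] + topics[:shift]
        # Alternate which system comes first to spread fatigue effects.
        first, second = systems if user % 2 == 0 else systems[::-1]
        plan.append([(t, first if i < half else second) for i, t in enumerate(order)])
    return plan


if __name__ == "__main__":
    for row in counterbalanced_plan(4, ["T1", "T2", "T3", "T4"], ("A", "B")):
        print(row)
```

Topic+User effects are not handled by ordering; the slide's approach of asking about prior knowledge of each topic would be recorded separately and used during analysis.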

iCLEF 2003 Research Questions
• SICS (Sweden)
  • What happens when Swedes read English?
• Alicante (Spain)
  • Can NLP-based summaries beat passages?
• BBN/Maryland (USA)
  • Can NLP-based summaries beat passages?
• Maryland (USA)
  • Is user-assisted translation helpful?
• UNED (Spain)
  • Is searching summaries as good as full text?
Experiment types: Document Selection, End-to-End

2003 Results