The POPPI 1 Example: Statistical Comments

Thomas A. Louis, PhD, Department of Biostatistics, Johns Hopkins Bloomberg SPH, tlouis@jhsph.edu


Presentation Transcript

The POPPI 1 Example: Statistical Comments
Thomas A. Louis, PhD
Department of Biostatistics, Johns Hopkins Bloomberg SPH
tlouis@jhsph.edu
TrialNet Workshop March 7, 2005

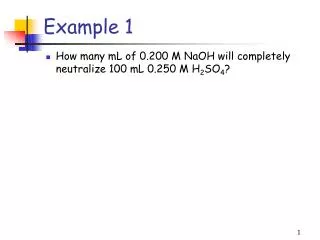

Little nits regarding the testing setup
...transformed as log(mean C-peptide + 1).
• Why “1”?
• A formal Bayesian approach would automatically (and implicitly) move away from log(0)
...Shapiro-Wilk test for normality of the residuals and the White test for homoscedasticity
• How to choose the level?
• These tests have low power
• The alternative is always true
• Properties of testitests can be poor
Either go with the Wilcoxon or use a bootstrap-based test (direct test or BCa CI-based)
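As a rough illustration of the "direct" bootstrap test mentioned on this slide, the sketch below compares two group means by resampling under the null hypothesis. It is not the POPPI analysis plan: the function name `bootstrap_mean_diff_test`, the data values, and the choice of a plain difference in means (rather than a studentized statistic or a BCa interval) are all assumptions made for brevity.

```python
import random
from statistics import mean


def bootstrap_mean_diff_test(x, y, n_resamples=2000, seed=0):
    """Two-sided bootstrap test of equal means for two independent samples.

    Hypothetical helper for illustration; returns (observed difference, p-value).
    """
    rng = random.Random(seed)
    mean_x, mean_y = mean(x), mean(y)
    observed = mean_x - mean_y
    # Impose the null hypothesis: shift each sample to the pooled mean,
    # leaving each group's own shape and spread untouched.
    pooled_mean = mean(list(x) + list(y))
    x0 = [v - mean_x + pooled_mean for v in x]
    y0 = [v - mean_y + pooled_mean for v in y]
    extreme = 0
    for _ in range(n_resamples):
        xb = rng.choices(x0, k=len(x0))  # resample with replacement
        yb = rng.choices(y0, k=len(y0))
        if abs(mean(xb) - mean(yb)) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the p-value strictly positive.
    return observed, (extreme + 1) / (n_resamples + 1)


# Made-up log(mean C-peptide + 1) values, for illustration only.
control = [0.21, 0.35, 0.18, 0.40, 0.27, 0.30, 0.22, 0.33]
treated = [0.38, 0.52, 0.41, 0.47, 0.36, 0.55, 0.44, 0.49]
diff, p_value = bootstrap_mean_diff_test(treated, control)
print(f"difference in means = {diff:.3f}, bootstrap p-value = {p_value:.4f}")
```

Because the null distribution is built from the data, this approach needs no preliminary normality or homoscedasticity test. A BCa confidence interval would additionally require a bias correction and a jackknife-based acceleration term, which this sketch omits.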

Bigger issues with the design

Have you missed an important contrast?
• Size and power were determined for three pair-wise group comparisons:
  • Mycophenolate mofetil (MMF) vs control
  • MMF/DZB (Daclizumab) vs control
  • MMF vs MMF/DZB
• If the contrast (MMF + MMF/DZB) − 2 × Control is statistically and clinically significant, will you regret not having included it in your framework for controlling type I error?
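To make the contrast on this slide concrete, here is a minimal sketch that estimates (MMF + MMF/DZB) − 2 × Control from group summaries and tests it with a large-sample normal approximation. The function name `contrast_z_test`, the normal approximation, and all numbers are assumptions for illustration; none come from the trial.

```python
from math import sqrt
from statistics import NormalDist


def contrast_z_test(means, sds, ns, weights):
    """Estimate a linear contrast of independent group means.

    Returns (estimate, standard error, two-sided p-value) using a
    large-sample normal approximation (an assumption of this sketch).
    """
    estimate = sum(w * m for w, m in zip(weights, means))
    # Var(sum w_g * mean_g) = sum w_g^2 * sd_g^2 / n_g for independent groups.
    std_err = sqrt(sum(w**2 * s**2 / n for w, s, n in zip(weights, sds, ns)))
    z = estimate / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return estimate, std_err, p_value


# Group order: control, MMF, MMF/DZB. All summary values are made up.
means = [0.30, 0.38, 0.41]
sds = [0.20, 0.20, 0.20]
ns = [60, 60, 60]
weights = (-2, 1, 1)  # (MMF + MMF/DZB) - 2 * Control

est, se, p = contrast_z_test(means, sds, ns, weights)
print(f"contrast = {est:.3f}, SE = {se:.3f}, p = {p:.4f}")
```

The control weight of −2 enters the variance squared, so the control group contributes four times its per-mean variance to this contrast.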

Fundamental Issue
Analysis plan should separate “evidence” (the joint likelihood for the three randomization groups) from decisions based on the evidence
Multiplicity should be addressed by an explicit loss function or a collection of loss functions

Sample Size and Power
• Sample size (and follow-up time) need to address uncertainty in the relation between the surrogate (C-peptide) and clinical endpoints
• “60 subjects per group”
  • “subjects” → “patients” (or participants)
  • Hard to imagine that, with the current state of knowledge, 60/60/60 is sufficient
  • Is equal allocation efficient (statistically and for recruiting)?
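One way to explore the equal-allocation question is to compare the variance of each pairwise difference in means under different splits of the same total. The sketch below assumes a common outcome variance across groups, so only the factor 1/n_a + 1/n_b matters; the alternative split 76/52/52 and the function name `pairwise_variance_factors` are hypothetical choices for illustration.

```python
def pairwise_variance_factors(n_control, n_mmf, n_combo):
    """Variance of each pairwise mean difference, in units of sigma^2.

    Assumes independent groups with a common variance (a simplifying
    assumption of this sketch, not a statement about the trial).
    """
    return {
        "MMF vs control": 1 / n_mmf + 1 / n_control,
        "MMF/DZB vs control": 1 / n_combo + 1 / n_control,
        "MMF vs MMF/DZB": 1 / n_mmf + 1 / n_combo,
    }


equal = pairwise_variance_factors(60, 60, 60)
control_heavy = pairwise_variance_factors(76, 52, 52)  # same total of 180

for name in equal:
    print(f"{name}: equal = {equal[name]:.4f}, control-heavy = {control_heavy[name]:.4f}")
```

Under these assumptions, shifting participants toward the shared control tightens both treatment-versus-control comparisons but loosens the MMF versus MMF/DZB comparison, so whether equal allocation is efficient depends on which comparisons matter most.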

Sample Size and Power: Accounting for uncertainties
• A series of “what ifs” (e.g., what if = 1, 2, ...) generally underestimates the necessary sample size
• Need to account for uncertainty in , base rates, dropouts, biologically plausible clinical differences and other uncertainties
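The point about "what ifs" can be sketched numerically by comparing the sample size that gives 80% power at one assumed value with the size that gives 80% power averaged over a range of plausible values. The symbol for the uncertain quantity is lost in this transcript; the sketch assumes it is the outcome standard deviation, and the effect size, the candidate values, their equal weights, and the two-sample z-test are all illustrative assumptions.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def power(n_per_group, delta, sigma, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test.

    Ignores the negligible probability of rejecting in the wrong direction.
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    return STD_NORMAL.cdf(delta / (sigma * sqrt(2 / n_per_group)) - z_crit)


def smallest_n(power_fn, target=0.80):
    """Smallest per-group n whose power reaches the target."""
    n = 2
    while power_fn(n) < target:
        n += 1
    return n


DELTA = 0.5              # hypothetical clinically important difference
SIGMAS = [0.7, 1.0, 1.3]  # hypothetical, equally weighted plausible SDs

# "What if" planning at the single central value sigma = 1.0.
n_point = smallest_n(lambda n: power(n, DELTA, 1.0))
# Planning for 80% power averaged over the uncertainty in sigma.
n_avg = smallest_n(lambda n: sum(power(n, DELTA, s) for s in SIGMAS) / len(SIGMAS))

print(f"n per group at sigma = 1.0: {n_point}")
print(f"n per group averaging over sigma: {n_avg}")
```

In this setup the averaged-power requirement calls for more participants than the single-value calculation, because the power lost at the larger standard deviation outweighs the power gained at the smaller one.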

[Figure slides not reproduced. Surviving axis labels: (CV)², and CV with ticks at 0.0, 0.8, 1.3, 1.9, 2.5]

Pre-workshop Questions
• How long should the treatment and follow-up period in trials be?
  Sufficient to address the relevant treatment comparisons. If a surrogate endpoint is not sufficiently validated, follow-up must include clinical endpoints, in part to produce meaningful comparisons and in part to gain information on the relation between the surrogate and clinical endpoints.
• Can larger numbers of treatments be compared simultaneously with aggressive curtailment for futility?
  Yes, but follow-up still must be sufficient to support dropping a treatment or declaring a winner.

Pre-workshop Questions
• Can short-term trials be grafted onto long-term trials (roll phase 2 patients into phase 3 studies)?
  Yes!!
• Should trials be powered for large effect sizes or for more moderate effect sizes with monitoring guidelines that could terminate early for an unexpected large effect?
  Definitely power for clinically important and biologically viable effect sizes. Monitoring will temper what may be large, maximal sample sizes, but if the size needs to be big, it needs to be big.
• Should trials be powered to rule out adverse effects?
  Yes, to rule out relatively high-incidence (S)AEs. Whether to power to rule out small to moderate (S)AEs depends on the integrity of post-marketing surveillance and on external information.

A culture change
Successfully addressing and implementing the foregoing requires changes in culture and bureaucracy, plus some statistical developments