Introduction to stochastic process

Dr. Nur Aini Masruroh

Outline
• Concept of probability
• Random variables
• Expected value
• Conditional probability
• Limit theorems
• Stochastic processes

Probability in Industrial Engineering
• Random arrivals of jobs
• Random service time
• Random requests for resources
• Probability of queue overflow
• Service delays
• Scheduling problems
• Flow control and routing
• Revenue management
• Risk assessment for decision analysis
• etc.

Basic probability
• Random experiment: an experiment whose outcome cannot be determined in advance
• Sample space (S): the set of all possible outcomes
• Event (E): a subset of the sample space; it occurs if the outcome of the experiment is an element of that subset
• For each event E, a number P(E) is defined that satisfies:
  • 0 ≤ P(E) ≤ 1
  • P(S) = 1
  • For any sequence of events E1, E2, … that are mutually exclusive (that is, $E_i \cap E_j = \emptyset$ for $i \neq j$),
$$P\left(\bigcup_{n=1}^{\infty} E_n\right) = \sum_{n=1}^{\infty} P(E_n)$$
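The axioms can be checked on a small finite example. The sketch below assumes two fair dice, so all 36 ordered outcomes are equally likely and P(E) = |E|/|S|. The events and helper names (`P`, `sum_is_7`, `doubles`, …) are hypothetical and chosen only for illustration. Exact fractions are used so that equality checks are not affected by rounding.

```python
from fractions import Fraction
from itertools import product

# Sample space S for rolling two dice: all 36 ordered outcomes (d1, d2).
S = set(product(range(1, 7), repeat=2))

def P(event):
    """P(E) = |E| / |S|, assuming (for illustration) equally likely outcomes."""
    return Fraction(len(event & S), len(S))

# Events are subsets of S.
sum_is_7 = {s for s in S if sum(s) == 7}
sum_is_11 = {s for s in S if sum(s) == 11}
doubles = {s for s in S if s[0] == s[1]}
events = [sum_is_7, sum_is_11, doubles]

# Axioms 1 and 2: 0 <= P(E) <= 1 for every event, and P(S) = 1.
assert all(0 <= P(E) <= 1 for E in events)
assert P(S) == 1

# Axiom 3 on a finite sequence: these events are pairwise mutually exclusive,
# so the probability of their union equals the sum of their probabilities.
assert all(not (A & B) for i, A in enumerate(events) for B in events[i + 1:])
union = set().union(*events)
print(P(union), sum(P(E) for E in events))  # 7/18 7/18
```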

Random variables
• Consider a random experiment having sample space S.
• A random variable X is a function that assigns a real value to each outcome in S.
• The distribution function F of the random variable X is defined for any real number x by
$$F(x) = P\{X \le x\}$$
• A random variable X is said to be discrete if its set of possible values is countable. For discrete random variables,
$$F(x) = \sum_{y \le x} P\{X = y\}$$
• A random variable is called continuous if there exists a function f(x), called the probability density function, such that for every set B
$$P\{X \in B\} = \int_B f(x)\,dx$$

Random variables
• Since $F(x) = \int_{-\infty}^{x} f(y)\,dy$, it follows that
$$f(x) = \frac{d}{dx}F(x)$$
• The joint distribution function F of two random variables X and Y is defined by F(x, y) = P{X ≤ x, Y ≤ y}
• The distribution functions of X and Y, $F_X(x) = P\{X \le x\}$ and $F_Y(y) = P\{Y \le y\}$, can be obtained from F(x, y) by using the continuity property of the probability operator:
$$F_X(x) = P\{X \le x\} = \lim_{y \to \infty} P\{X \le x, Y \le y\} = F(x, \infty)$$
Similarly, $F_Y(y) = F(\infty, y)$.
• X and Y are independent if and only if $F(x, y) = F_X(x)F_Y(y)$ for all x and y
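The following sketch computes a joint CDF, recovers the marginals as F(x, ∞) and F(∞, y), and tests the factorization F(x, y) = F_X(x)F_Y(y). It assumes two fair dice. X is the first die, Y is the second die, and Z is their sum. All function names are hypothetical. A grid of integer points is enough here because these CDFs only jump at integers.

```python
from fractions import Fraction
from itertools import product

# Illustrative assumption: two fair dice, all 36 outcomes equally likely.
S = list(product(range(1, 7), repeat=2))
INF = float("inf")

def joint_cdf(X, Y, x, y):
    """F(x, y) = P{X <= x, Y <= y} for random variables X, Y defined on S."""
    hits = sum(1 for s in S if X(s) <= x and Y(s) <= y)
    return Fraction(hits, len(S))

def first(s):   # X: value of the first die
    return s[0]

def second(s):  # Y: value of the second die
    return s[1]

def total(s):   # Z: sum of both dice
    return s[0] + s[1]

def independent(X, Y, xs, ys):
    """Check F(x, y) == F_X(x) * F_Y(y) on a grid, using F_X(x) = F(x, inf), F_Y(y) = F(inf, y)."""
    return all(
        joint_cdf(X, Y, x, y) == joint_cdf(X, Y, x, INF) * joint_cdf(X, Y, INF, y)
        for x in xs
        for y in ys
    )

print(joint_cdf(first, total, 3, INF))                        # marginal F_X(3) = 1/2
print(independent(first, second, range(1, 7), range(1, 7)))   # True
print(independent(first, total, range(1, 7), range(2, 13)))   # False
```

The pair (first die, sum) fails the check at (1, 2). There, F(1, 2) = 1/36, but F_X(1)F_Z(2) = 1/6 · 1/36.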

Cumulative Distribution Function (CDF)
• The CDF F of the random variable X is defined for all real numbers b, −∞ < b < ∞, by F(b) = P(X ≤ b)
• F(b) is the cumulative probability (the total probability mass) that X takes on a value less than or equal to b
• The CDF is non-decreasing: if a < b, then P(X ≤ a) ≤ P(X ≤ b), and thus F(a) ≤ F(b)
• The CDF is bounded: $\lim_{b \to \infty} F(b) = 1$ and $\lim_{b \to -\infty} F(b) = 0$, written F(∞) = 1 and F(−∞) = 0
• The CDF is right-continuous: for any b, $F(b^+) = \lim_{x \downarrow b} F(x) = F(b)$. It is right-continuous at all values but may be left-discontinuous (have a jump) at some values

Probabilities from CDF
• P(X ≤ b) = F(b)
• P(X < b) = F(b−), where $F(b^-) = \lim_{\varepsilon \downarrow 0} F(b - \varepsilon)$ is the left limit of F at b
• P(X = b) = P(X ≤ b) − P(X < b) = F(b) − F(b−)
• P(a < X ≤ b) = F(b) − F(a)
• P(a ≤ X ≤ b) = F(b) − F(a−)
• P(a ≤ X < b) = F(b−) − F(a−)
• P(a < X < b) = F(b−) − F(a)
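These identities can be verified on a staircase CDF. The sketch assumes X is the number of heads in two fair coin tosses, so p(0) = 1/4, p(1) = 1/2, p(2) = 1/4. It computes each probability from F and F(b−), then compares it with the same probability summed directly from the pmf. Because the pmf has finite support, F(b−) is computed exactly with a strict inequality rather than by evaluating F(b − ε) for a small ε. The names `F`, `F_left` and `direct` are hypothetical.

```python
from fractions import Fraction

# Illustrative assumption: X = number of heads in two fair coin tosses.
pmf = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}

def F(b):
    """CDF F(b) = P(X <= b): a right-continuous staircase."""
    return sum((p for x, p in pmf.items() if x <= b), Fraction(0))

def F_left(b):
    """Left limit F(b-) = P(X < b), computed exactly for a finite pmf."""
    return sum((p for x, p in pmf.items() if x < b), Fraction(0))

def direct(pred):
    """P(X satisfies pred), summed straight from the pmf."""
    return sum((p for x, p in pmf.items() if pred(x)), Fraction(0))

a, b = 0, 2
checks = {
    "P(X = b)":       (F(b) - F_left(b),      direct(lambda x: x == b)),
    "P(a < X <= b)":  (F(b) - F(a),           direct(lambda x: a < x <= b)),
    "P(a <= X <= b)": (F(b) - F_left(a),      direct(lambda x: a <= x <= b)),
    "P(a <= X < b)":  (F_left(b) - F_left(a), direct(lambda x: a <= x < b)),
    "P(a < X < b)":   (F_left(b) - F(a),      direct(lambda x: a < x < b)),
}
for name, (from_cdf, from_pmf) in checks.items():
    print(name, from_cdf, from_pmf)
```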

Probability Density Function (pdf)
• The pdf of a continuous random variable X is the derivative of its CDF wherever the CDF is differentiable: f(b) = dF(b)/db
• The pdf describes the density of probability mass at each point of the sample space
• The value of a pdf is not a probability: for example, if f(x) = 2x for 0 ≤ x ≤ 1 and 0 otherwise, then f(1) = 2, which is obviously not a probability
• Graphically, a probability is an area under the pdf: P(a ≤ X ≤ b) is the area under f(x) over the interval [a, b], that is, $\int_a^b f(x)\,dx$
• Note: the value of a pmf is itself a probability, whereas the value of a pdf has no such meaning on its own
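The example density f(x) = 2x on [0, 1] shows the distinction numerically. Integrating f gives F(b) = b² on [0, 1]. The area under f over [a, b] therefore equals F(b) − F(a). A central difference of F recovers f, while f(1) = 2 is plainly not a probability. The midpoint rule is used here only as an illustrative integration method, and the helper names are hypothetical.

```python
def f(x):
    """pdf from the example: f(x) = 2x on [0, 1], 0 otherwise."""
    return 2 * x if 0 <= x <= 1 else 0.0

def F(b):
    """CDF obtained by integrating f: F(b) = b^2 on [0, 1]."""
    if b < 0:
        return 0.0
    if b > 1:
        return 1.0
    return b * b

def area(a, b, n=10_000):
    """Midpoint-rule approximation of the area under f over [a, b]."""
    h = (b - a) / n
    return sum(f(a + (k + 0.5) * h) for k in range(n)) * h

a, b = 0.5, 1.0
print(area(a, b), F(b) - F(a))  # both approximately 0.75 = P(0.5 <= X <= 1)
print(f(1.0))                   # 2.0: a density value, not a probability

# A central difference of F recovers the density at an interior point.
x, h = 0.3, 1e-6
print((F(x + h) - F(x - h)) / (2 * h), f(x))
```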

Discrete random variable: summary
• A random variable X associates a number with each outcome of an experiment
• A discrete random variable takes on a finite or countably infinite number of values
• The probabilistic behavior of a discrete random variable X is described by its probability mass function (pmf), p(u), where p(u_j) = P(X = u_j)
• All the probabilistic information about the discrete random variable X is summarized in its pmf
• A discrete random variable X has a CDF F(b) which is:
  • right-continuous
  • a staircase (step) function

Continuous random variable: summary
• There is no probability mass at individual points, so no pmf can be defined: P(X = x) = 0 for every single value x
• Example: P(height of a person = 173.7897654) = 0
• However, P(147 < height of a person < 187) ≈ 1
• The set of possible values is uncountable, whereas for a discrete random variable it is finite or countably infinite
• The sample space is not a discrete set but a continuous space (or interval)
• A continuous random variable X has a CDF F(b) which is:
  • continuous at all b, −∞ < b < ∞
  • differentiable at all b, except possibly at a finite set of points

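Expected value
• The expectation of the random variable X is
$$E[X] = \begin{cases} \sum_{x} x\,P\{X = x\} & \text{if } X \text{ is discrete} \\ \int_{-\infty}^{\infty} x\,f(x)\,dx & \text{if } X \text{ is continuous} \end{cases}$$
• The variance of the random variable X is defined by
$$\operatorname{Var}(X) = E\big[(X - E[X])^2\big] = E[X^2] - (E[X])^2$$
• Two jointly distributed random variables X and Y are said to be uncorrelated if their covariance, defined by
$$\operatorname{Cov}(X, Y) = E\big[(X - E[X])(Y - E[Y])\big] = E[XY] - E[X]E[Y],$$
is zero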
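As a worked check of these definitions, the sketch below assumes X is one fair die. It computes E[X] and computes the variance both from the definition and from the shortcut E[X²] − (E[X])². It then computes a covariance from a joint pmf. The pair used there is X uniform on {−1, 0, 1} with Y = X², chosen only for illustration, and its covariance is zero. All names are hypothetical.

```python
from fractions import Fraction

# Illustrative assumption: X is the outcome of one fair die.
pmf = {x: Fraction(1, 6) for x in range(1, 7)}

def E(g, pmf):
    """E[g(X)] = sum over x of g(x) * P{X = x} for a discrete pmf."""
    return sum((g(x) * p for x, p in pmf.items()), Fraction(0))

mean = E(lambda x: x, pmf)
var_def = E(lambda x: (x - mean) ** 2, pmf)      # E[(X - E[X])^2]
var_short = E(lambda x: x * x, pmf) - mean ** 2  # E[X^2] - (E[X])^2
print(mean, var_def, var_short)  # 7/2 35/12 35/12

# Covariance from a joint pmf: X uniform on {-1, 0, 1} and Y = X**2 (illustrative).
joint = {(x, x * x): Fraction(1, 3) for x in (-1, 0, 1)}
EX = sum((x * p for (x, y), p in joint.items()), Fraction(0))
EY = sum((y * p for (x, y), p in joint.items()), Fraction(0))
EXY = sum((x * y * p for (x, y), p in joint.items()), Fraction(0))
cov = EXY - EX * EY
print(cov)  # 0
```

Here Cov(X, Y) = 0, so X and Y are uncorrelated by the definition above, even though Y is a function of X.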

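Expected value
• The expectation of a sum of random variables is equal to the sum of the expectations:
$$E\left[\sum_{i=1}^{n} X_i\right] = \sum_{i=1}^{n} E[X_i]$$
• Variances:
$$\operatorname{Var}\left(\sum_{i=1}^{n} X_i\right) = \sum_{i=1}^{n} \operatorname{Var}(X_i) + 2\sum_{i<j} \operatorname{Cov}(X_i, X_j)$$
so if the X_i are pairwise uncorrelated (in particular, if they are independent), the variance of the sum equals the sum of the variances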
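The sketch below contrasts the two rules using a deliberately correlated pair. It assumes two fair dice, with X1 = first die and X2 = sum of both dice. Linearity of expectation holds regardless of dependence. The variance of the sum, however, matches only once the covariance term is included. Function names are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Illustrative assumption: two fair dice; all 36 outcomes equally likely.
outcomes = list(product(range(1, 7), repeat=2))
p = Fraction(1, len(outcomes))

def E(g):
    """Expectation of g(outcome) over the equally likely outcomes."""
    return sum((g(s) * p for s in outcomes), Fraction(0))

def var(g):
    return E(lambda s: g(s) ** 2) - E(g) ** 2

def cov(g, h):
    return E(lambda s: g(s) * h(s)) - E(g) * E(h)

def X1(s):
    return s[0]         # first die

def X2(s):
    return s[0] + s[1]  # sum of both dice (correlated with X1)

def total(s):
    return X1(s) + X2(s)

# Linearity of expectation holds regardless of dependence.
print(E(total), E(X1) + E(X2))                          # 21/2 21/2
# The variance of a sum needs the covariance term when the terms are correlated.
print(var(total), var(X1) + var(X2) + 2 * cov(X1, X2))  # 175/12 175/12
print(var(X1) + var(X2))                                # 35/4, not Var(X1 + X2)
```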

Conditional probabilities
• In general, given information about the outcome of some events, we may revise our probabilities of other events
• We do this through the use of conditional probabilities
• The probability of an event X given specific outcomes of another event Y is called the conditional probability of X given Y
• The conditional probability of event X given event Y and other background information ξ is denoted by p(X|Y, ξ) and is given by
$$p(X \mid Y, \xi) = \frac{p(X, Y \mid \xi)}{p(Y \mid \xi)}, \quad \text{provided } p(Y \mid \xi) > 0$$
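Revising a probability after new information can be illustrated by counting. The background information ξ is taken to be "two fair dice are rolled", which is an assumption made only for this sketch. Learning that the sum is at least 10 raises the probability that the first die shows 6 from 1/6 to 1/2. The helper `P_given` is a hypothetical name.

```python
from fractions import Fraction
from itertools import product

# Background information xi (an assumption for illustration): two fair dice,
# so all 36 ordered outcomes are equally likely.
S = set(product(range(1, 7), repeat=2))

def P(event):
    return Fraction(len(event), len(S))

def P_given(X, Y):
    """Conditional probability p(X | Y, xi) = p(X, Y | xi) / p(Y | xi)."""
    if not Y:
        raise ValueError("conditioning event must have positive probability")
    return P(X & Y) / P(Y)

first_is_6 = {s for s in S if s[0] == 6}
sum_at_least_10 = {s for s in S if sum(s) >= 10}

print(P(first_is_6))                         # 1/6 before any information
print(P_given(first_is_6, sum_at_least_10))  # 1/2 after learning the sum is >= 10
```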

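Bayes’ Theorem
• Given two uncertain events X and Y, suppose the probabilities p(X|ξ) and p(Y|X, ξ) are known. Then
$$p(X \mid Y, \xi) = \frac{p(Y \mid X, \xi)\,p(X \mid \xi)}{p(Y \mid \xi)}$$
where, by the law of total probability, $p(Y \mid \xi) = \sum_{x} p(Y \mid x, \xi)\,p(x \mid \xi)$, the sum running over all possible outcomes x of X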
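Bayes’ theorem fits in a few lines once the prior and the likelihood are given as tables. In this sketch the denominator p(Y|ξ) is obtained by the law of total probability. The scenario and all numbers are hypothetical. A machine is faulty with prior probability 0.05. An inspection flags a fault with probability 0.9 if the machine is faulty and 0.1 if it is not.

```python
from fractions import Fraction

def bayes(prior, likelihood):
    """Posterior p(x | Y, xi) for each outcome x of X.

    prior:      dict x -> p(x | xi)
    likelihood: dict x -> p(Y | x, xi) for the observed Y
    """
    # Law of total probability: p(Y | xi) = sum over x of p(Y | x, xi) p(x | xi).
    evidence = sum(likelihood[x] * prior[x] for x in prior)
    return {x: likelihood[x] * prior[x] / evidence for x in prior}

# Hypothetical numbers: X = state of a machine, Y = "inspection flags a fault".
prior = {"faulty": Fraction(5, 100), "ok": Fraction(95, 100)}
flag_given_state = {"faulty": Fraction(9, 10), "ok": Fraction(1, 10)}

posterior = bayes(prior, flag_given_state)
print(posterior["faulty"])  # 9/28, about 0.32
```

Even with a fairly accurate inspection, a flag raises the probability of a fault only from 0.05 to about 0.32, because faulty machines are rare under this prior.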

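Limit theorems
• Strong Law of Large Numbers (SLLN)
  • If X1, X2, … are independent and identically distributed with mean μ, then
$$P\left\{\lim_{n \to \infty} \frac{X_1 + \cdots + X_n}{n} = \mu\right\} = 1$$
• Central Limit Theorem (CLT)
  • If X1, X2, … are independent and identically distributed with mean μ and variance σ², then
$$\lim_{n \to \infty} P\left\{\frac{X_1 + \cdots + X_n - n\mu}{\sigma\sqrt{n}} \le a\right\} = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{a} e^{-x^2/2}\,dx$$
• Thus, if we let $S_n = \sum_{i=1}^{n} X_i$, where X1, X2, … are independent and identically distributed, then the SLLN states that, with probability 1, S_n/n converges to E[X_i]. The CLT states that S_n has an asymptotically normal distribution as n → ∞ (approximately normal with mean nμ and variance nσ² for large n)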
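Both theorems can be watched in a short simulation. The sketch assumes X_i ~ Uniform(0, 1), so μ = 1/2 and σ² = 1/12. The sample size, seed and number of replications are arbitrary illustrative choices. The running average S_n/n should settle near μ. The fraction of standardized sums at or below a = 1 should be close to the standard normal value Φ(1) ≈ 0.841, computed here with `math.erf`.

```python
import math
import random

rng = random.Random(42)  # fixed seed so the run is reproducible

# Illustrative assumption: X_i ~ Uniform(0, 1), so mu = 1/2 and sigma^2 = 1/12.
mu, sigma = 0.5, math.sqrt(1 / 12)

# SLLN: the running average S_n / n settles near mu as n grows.
total = 0.0
for n in range(1, 100_001):
    total += rng.random()
    if n in (10, 1_000, 100_000):
        print(n, total / n)

def standardized_sum(n):
    """(S_n - n*mu) / (sigma * sqrt(n)) for one fresh sample of size n."""
    s = sum(rng.random() for _ in range(n))
    return (s - n * mu) / (sigma * math.sqrt(n))

def normal_cdf(a):
    """Phi(a) = (1/sqrt(2*pi)) * integral of exp(-x^2/2) from -inf to a."""
    return 0.5 * (1 + math.erf(a / math.sqrt(2)))

# CLT: compare the empirical P{standardized S_n <= a} with Phi(a).
n, reps, a = 30, 20_000, 1.0
samples = [standardized_sum(n) for _ in range(reps)]
empirical = sum(z <= a for z in samples) / reps
print(empirical, normal_cdf(a))  # the two values should be close
```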

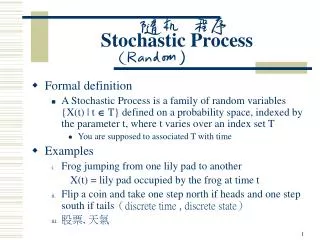

Stochastic process
• A stochastic process {X(t), t ∈ T} is a collection of random variables indexed by a set T, usually interpreted as time; X(t) is the state of the process (a measurable characteristic of interest) at time t
• The state space of a stochastic process is the set of all possible values that the random variables X(t) can assume
• When the index set T is countable, the stochastic process is a discrete-time process, denoted by {X_n, n = 0, 1, 2, …}
• When T is an interval of the real line, the stochastic process is a continuous-time process, denoted by {X(t), t ≥ 0}

Stochastic process
Hence,
• a stochastic process is a family of random variables that describes the evolution through time of some (physical) process
• usually, the random variables X(t) are dependent, which is why the analysis of stochastic processes is often very difficult
• Discrete-time Markov chains (DTMCs) are a special type of stochastic process with a very simple dependence structure among the X(t), which yields tractable results in the analysis of {X(t), t ∈ T} under very mild assumptions
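A simulated sample path makes the definition concrete. The sketch generates one realization of a discrete-time process {X_n, n = 0, 1, 2, …} with state space the integers. The choice of a simple random walk is an illustrative assumption: each step is +1 with probability p and −1 otherwise. Consecutive values are clearly dependent, since X_n always differs from X_{n−1} by exactly 1. Because the next state depends on the past only through the current state, this walk is also a simple example of a DTMC. The function name and its parameters are hypothetical.

```python
import random

def random_walk(n_steps, p=0.5, x0=0, seed=None):
    """One sample path X_0, X_1, ..., X_n of a simple random walk.

    Each step moves +1 with probability p and -1 otherwise, so X_n depends on
    the past only through X_{n-1}.
    """
    rng = random.Random(seed)
    path = [x0]
    for _ in range(n_steps):
        step = 1 if rng.random() < p else -1
        path.append(path[-1] + step)
    return path

path = random_walk(20, seed=1)
print(path)  # one realization of {X_n, n = 0, 1, ..., 20}
```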

Examples of stochastic processes
Refer to X(t) as the state of the process at time t. A stochastic process {X(t), t ∈ T} is a time-indexed collection of random variables. For example:
• X(t) might equal the total number of customers that have entered a supermarket by time t
• X(t) might equal the number of customers in the supermarket at time t
• X(t) might equal the stock price of a company at time t

Counting process
• Definition: a stochastic process {N(t), t ≥ 0} is a counting process if N(t) represents the total number of “events” that have occurred up to time t

Counting process
• Examples:
  • If N(t) equals the number of persons who have entered a particular store at or prior to time t, then {N(t), t ≥ 0} is a counting process in which an event corresponds to a person entering the store
  • If N(t) equals the number of persons in the store at time t, then {N(t), t ≥ 0} would not be a counting process. Why?
  • If N(t) equals the total number of people born by time t, then {N(t), t ≥ 0} is a counting process in which an event corresponds to a child being born
  • If N(t) equals the number of goals that Ronaldo has scored by time t, then {N(t), t ≥ 0} is a counting process in which an event occurs whenever he scores a goal

Counting process
• A counting process N(t) must satisfy:
  • N(t) ≥ 0
  • N(t) is integer-valued
  • If s ≤ t, then N(s) ≤ N(t)
  • For s < t, N(t) − N(s) equals the number of events that have occurred in the interval (s, t], that is, the increment of the counting process over (s, t]
• A counting process has:
  • independent increments if the numbers of events that occur in disjoint time intervals are independent
  • stationary increments if the distribution of the number of events that occur in any interval of time depends only on the length of the time interval
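The sketch below builds a counting process from a list of event times and checks the defining properties on a grid of time points. Here N(t) is the number of event times less than or equal to t, computed with `bisect.bisect_right` on the sorted times. The arrival times are made-up numbers for a store-entry example, and `make_counting_process` is a hypothetical helper. The final line shows that N(t) − N(s) counts exactly the events in (s, t].

```python
import bisect

def make_counting_process(event_times):
    """Return N(t) = number of events that occurred at or before time t."""
    times = sorted(event_times)

    def N(t):
        return bisect.bisect_right(times, t)  # counts event times <= t

    return N

# Hypothetical arrival times (hours) of customers entering a store.
arrivals = [0.4, 1.1, 1.1, 2.7, 3.0, 4.8]
N = make_counting_process(arrivals)

grid = [k / 10 for k in range(0, 61)]
values = [N(t) for t in grid]
assert all(v >= 0 and isinstance(v, int) for v in values)  # N(t) >= 0, integer-valued
assert all(a <= b for a, b in zip(values, values[1:]))     # s <= t implies N(s) <= N(t)

# The increment over (s, t] counts the events with s < time <= t.
s, t = 1.1, 3.0
print(N(t) - N(s), sum(1 for x in arrivals if s < x <= t))  # 2 2
```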

Independent increments
• This property says that the numbers of events in disjoint intervals are independent random variables.
• Suppose that t1 < t2 ≤ t3 < t4. Then N(t2) − N(t1), the number of events occurring in (t1, t2], is independent of N(t4) − N(t3), the number of events occurring in (t3, t4].
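Independent increments can be probed empirically, with a caveat: a near-zero sample correlation is consistent with independence but does not prove it. This sketch assumes events arrive with i.i.d. exponential interarrival times at rate 1, which is a Poisson process and is known to have independent increments. The intervals, seed and replication count are arbitrary illustrative choices. Counts over the disjoint intervals (0, 2] and (2, 5] should be essentially uncorrelated. Counts over the overlapping intervals (0, 2] and (1, 4] share events and come out clearly positively correlated. All function names are hypothetical.

```python
import math
import random

def arrival_times(rate, horizon, rng):
    """Event times in [0, horizon] with i.i.d. exponential gaps (a Poisson process)."""
    times, t = [], rng.expovariate(rate)
    while t <= horizon:
        times.append(t)
        t += rng.expovariate(rate)
    return times

def count(times, a, b):
    """Number of events in (a, b], i.e. the increment N(b) - N(a)."""
    return sum(1 for x in times if a < x <= b)

def corr(xs, ys):
    """Sample (Pearson) correlation coefficient."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(7)
t1, t2, t3, t4 = 0.0, 2.0, 2.0, 5.0  # t1 < t2 <= t3 < t4, so the intervals are disjoint
first, second, overlap = [], [], []
for _ in range(20_000):
    times = arrival_times(rate=1.0, horizon=t4, rng=rng)
    first.append(count(times, t1, t2))      # N(t2) - N(t1)
    second.append(count(times, t3, t4))     # N(t4) - N(t3)
    overlap.append(count(times, 1.0, 4.0))  # (1, 4] overlaps (t1, t2]

print(corr(first, second))   # should be close to 0 (disjoint intervals)
print(corr(first, overlap))  # clearly positive (overlapping intervals share events)
```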