Topic

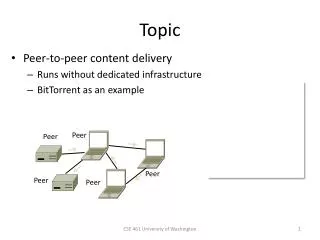

Presentation Transcript

Topic • Peer-to-peer content delivery • Runs without dedicated infrastructure • BitTorrent as an example [Figure: five peers] CSE 461 University of Washington

Context • Delivery with client/server CDNs: • Efficient, scales up for popular content • Reliable, managed for good service • … but some disadvantages too: • Need for dedicated infrastructure • Centralized control/oversight

P2P (Peer-to-Peer) • Goal is delivery without dedicated infrastructure or centralized control • Still efficient at scale, and reliable • Key idea is to have participants (or peers) help themselves • Initially Napster ’99 for music (gone) • Now BitTorrent ’01 onwards (popular!)

P2P Challenges • No servers on which to rely • Communication must be peer-to-peer and self-organizing, not client-server • Leads to several issues at scale …

P2P Challenges (2) • Limited capabilities • How can one peer deliver content to all other peers? • Participation incentives • Why will peers help each other? • Decentralization • How will peers find content?

Overcoming Limited Capabilities • A peer can send content to all other peers using a distribution tree • Typically done with replicas over time • Self-scaling capacity [Figure: distribution tree rooted at the source]
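A rough sketch of why capacity is self-scaling, under a deliberately simple model that is an assumption here and not something the slides specify: in each round, every peer that already holds a replica uploads one full copy to a peer that lacks it. The function name `rounds_to_replicate` is hypothetical.

```python
def rounds_to_replicate(num_peers: int) -> int:
    """Rounds until every peer holds the content.

    Assumed model (not from the slides): each holder uploads one
    full copy to a new peer per round, so holders double each round.
    """
    holders = 1  # only the source holds the content at the start
    rounds = 0
    while holders < num_peers:
        holders = min(num_peers, holders * 2)
        rounds += 1
    return rounds


print(rounds_to_replicate(1000))  # 10 rounds, since 2**10 >= 1000
```

Under this model the number of rounds grows with log2 of the number of peers, because every new replica adds upload capacity.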

Providing Participation Incentives • Peers play two roles: • Download to help themselves, and upload to help others

Providing Participation Incentives (2) • Couple the two roles: • I’ll upload for you if you upload for me • Encourages cooperation

Enabling Decentralization • Peers must learn where to get content • Use DHTs (Distributed Hash Tables) • DHTs are fully decentralized, efficient algorithms for a distributed index • Index is spread across all peers • Index lists peers to contact for content • Any peer can look up the index • Started as academic work in 2001

BitTorrent • Main P2P system in use today • Developed by Bram Cohen in ’01 • Very rapid growth, large transfers • Much of the Internet traffic today! • Used for legal and illegal content • Delivers data using “torrents”: • Transfers files in pieces for parallelism • Notable for treatment of incentives • Tracker or decentralized index (DHT) [Photo: Bram Cohen (1975–), by Jacob Appelbaum, CC-BY-SA-2.0, from Wikimedia Commons]

BitTorrent Protocol • Steps to download a torrent: • Start with torrent description • Contact tracker to join and get list of peers (with at least one seed peer) • Or, use DHT index for peers • Trade pieces with different peers • Favor peers that upload to you rapidly; “choke” peers that don’t by slowing your upload to them

BitTorrent Protocol (2) • All peers (except seed) retrieve torrent at the same time

BitTorrent Protocol (3) • Dividing file into pieces gives parallelism for speed
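A minimal sketch of the piece idea: a file is cut into fixed-size pieces that can be fetched from different peers in any order and then put back together by index. The helper names `split_into_pieces` and `reassemble` and the tiny piece size are illustrative assumptions, not part of the BitTorrent protocol as described in the slides.

```python
def split_into_pieces(data: bytes, piece_size: int) -> list:
    """Cut data into consecutive pieces; the last piece may be shorter."""
    return [data[i:i + piece_size] for i in range(0, len(data), piece_size)]


def reassemble(pieces_by_index: dict) -> bytes:
    """Join pieces by index, regardless of the order they arrived in."""
    return b"".join(pieces_by_index[i] for i in sorted(pieces_by_index))


data = b"abcdefghij"
pieces = split_into_pieces(data, 4)  # [b"abcd", b"efgh", b"ij"]

# Pretend the pieces arrived out of order from three different peers.
received = {2: pieces[2], 0: pieces[0], 1: pieces[1]}
print(reassemble(received) == data)  # True
```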

BitTorrent Protocol (4) • Choking unhelpful peers encourages participation
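The choking rule can be sketched as a ranking problem: serve the peers that have recently uploaded to you the fastest, and choke the rest. This is a simplified illustration; the function name `choose_unchoked`, the `slots` parameter, and the rate figures are assumptions, and real clients apply further rules that this sketch omits.

```python
from typing import Dict, Set


def choose_unchoked(upload_rate_from: Dict[str, float], slots: int = 4) -> Set[str]:
    """Pick the peers to keep uploading to.

    upload_rate_from maps a peer name to the rate at which that peer
    has recently uploaded to us (hypothetical measurement).
    Peers that uploaded nothing are never selected.
    """
    contributors = [p for p, rate in upload_rate_from.items() if rate > 0]
    contributors.sort(key=lambda p: upload_rate_from[p], reverse=True)
    return set(contributors[:slots])


rates = {"peer_a": 50.0, "peer_b": 0.0, "peer_c": 120.0, "peer_d": 10.0}
print(choose_unchoked(rates, slots=2))  # peer_c and peer_a; the others are choked
```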

BitTorrent Protocol (5) • DHT index (spread over peers) is fully decentralized

P2P Outlook • Alternative to CDN-style client-server content distribution • With potential advantages • P2P and DHT technologies finding more widespread use over time • E.g., part of Skype, Amazon • Expect hybrid systems in the future

Chord: A Scalable Peer-to-peer Lookup Service for Internet Applications • Robert Morris, Ion Stoica, David Karger, M. Frans Kaashoek, Hari Balakrishnan • MIT and Berkeley

A peer-to-peer storage problem • 1000 scattered music enthusiasts • Willing to store and serve replicas • How do you find the data?

The lookup problem [Figure: nodes N1 to N6 connected over the Internet; a publisher stores Key=“title”, Value=MP3 data…; a client issues Lookup(“title”) and must find which node holds it]

Centralized lookup (Napster) • Simple, but O(N) state and a single point of failure [Figure: the publisher at N4 holds Key=“title”, Value=MP3 data… and registers SetLoc(“title”, N4) with a central DB; the client sends Lookup(“title”) to the DB]

Flooded queries (Gnutella) • Robust, but worst case O(N) messages per lookup [Figure: the client’s Lookup(“title”) is forwarded from node to node until it reaches the publisher at N4, which holds Key=“title”, Value=MP3 data…]
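To make the message cost of flooding concrete, here is a small sketch that floods a query breadth-first over an overlay graph and counts one message per link tried. The graph, the function name `flood_lookup`, and the decision to stop as soon as the holder is reached are simplifying assumptions, not a description of Gnutella itself.

```python
from collections import deque


def flood_lookup(neighbors, start, holder):
    """Breadth-first flood from start; returns (found, messages_sent)."""
    seen = {start}
    queue = deque([start])
    messages = 0
    while queue:
        node = queue.popleft()
        if node == holder:
            return True, messages
        for peer in neighbors[node]:
            messages += 1  # one query message per overlay link tried
            if peer not in seen:
                seen.add(peer)
                queue.append(peer)
    return False, messages


# Hypothetical overlay: a simple chain N1 - N2 - N3 - N4.
overlay = {
    "N1": ["N2"],
    "N2": ["N1", "N3"],
    "N3": ["N2", "N4"],
    "N4": ["N3"],
}
print(flood_lookup(overlay, "N1", "N4"))  # (True, 5)
```

Even in this four-node chain the query costs more messages than there are nodes, which is the scaling concern the slide points at.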

Routed queries (Freenet, Chord, etc.) [Figure: the client’s Lookup(“title”) is routed hop by hop through selected nodes toward the publisher, which holds Key=“title”, Value=MP3 data…]

Routing challenges • Define a useful key nearness metric • Keep the hop count small • Keep the tables small • Stay robust despite rapid change • Freenet: emphasizes anonymity • Chord: emphasizes efficiency and simplicity

Chord properties • Efficient: O(log(N)) messages per lookup • N is the total number of servers • Scalable: O(log(N)) state per node • Robust: survives massive failures • Proofs are in paper / tech report • Assuming no malicious participants

Chord overview • Provides peer-to-peer hash lookup: • Lookup(key) → IP address • Chord does not store the data • How does Chord route lookups? • How does Chord maintain routing tables?

Chord IDs • Key identifier = SHA-1(key) • Node identifier = SHA-1(IP address) • Both are uniformly distributed • Both exist in the same ID space • How to map key IDs to node IDs?
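As a small illustration of putting keys and nodes into the same ID space, the sketch below hashes a name with SHA-1 and optionally keeps only the top `bits` bits so the result fits a small ring such as a 7-bit one. The function name `chord_id`, the truncation step, and the sample address are assumptions made for the example.

```python
import hashlib


def chord_id(name: str, bits: int = 160) -> int:
    """SHA-1 of name as an integer, truncated to the top `bits` bits.

    bits must be between 1 and 160; 160 keeps the full SHA-1 value.
    """
    digest = hashlib.sha1(name.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") >> (160 - bits)


key_id = chord_id("title", bits=7)       # a key name
node_id = chord_id("192.0.2.1", bits=7)  # a node's IP address (documentation range)
print(0 <= key_id < 128, 0 <= node_id < 128)  # True True
```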

Consistent hashing [Karger 97] • A key is stored at its successor: the node with the next higher ID [Figure: circular 7-bit ID space with nodes N32, N90, N105 and keys K5, K20, K80; “K5” denotes key 5 and “N105” denotes node 105]
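The successor rule can be sketched with a sorted list and a binary search, using the node and key IDs from this slide. The function name `successor` is hypothetical, and the sketch assumes a key whose ID equals a node ID is stored at that node.

```python
import bisect


def successor(node_ids, key_id):
    """First node at or clockwise after key_id, wrapping past the top of the ring."""
    ring = sorted(node_ids)
    i = bisect.bisect_left(ring, key_id)
    return ring[i % len(ring)]  # index len(ring) wraps to the lowest node


nodes = [32, 90, 105]
for key in (5, 20, 80, 110):
    print(f"K{key} -> N{successor(nodes, key)}")
# K5 -> N32, K20 -> N32, K80 -> N90, K110 -> N32 (wraps around)
```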

Consistent hashing [Karger 97] • Theorem: For any set of N nodes and K keys, with “high probability”: • Each node is responsible for at most (1 + ε)K/N keys • When the (N+1)th node joins or leaves the network, responsibility for O(K/N) keys changes hands
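The second claim can be seen on a small example: when a node joins, the only keys that change hands are those between the new node and its predecessor, and they all move to the new node. The sketch below checks this on a 7-bit ring; the node IDs, the joining node N60, and the function names are illustrative assumptions.

```python
import bisect


def successor(node_ids, key_id):
    """First node at or clockwise after key_id, wrapping around the ring."""
    ring = sorted(node_ids)
    return ring[bisect.bisect_left(ring, key_id) % len(ring)]


def keys_that_move(node_ids, new_node, key_ids):
    """Keys whose responsible node changes when new_node joins."""
    keys = list(key_ids)
    grown = list(node_ids) + [new_node]
    return {k for k in keys if successor(node_ids, k) != successor(grown, k)}


moved = keys_that_move([32, 90, 105], 60, range(128))
print(len(moved), min(moved), max(moved))  # 28 33 60
```

Only keys 33 through 60 move, all of them from N90 to the new N60; every other key keeps its node.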

Basic lookup [Figure: ring of nodes N10, N32, N60, N90, N105, N120 with key K80; the query “Where is key 80?” is passed around the ring and answered with “N90 has K80”]

Simple lookup algorithm

    Lookup(my-id, key-id)
      n = my successor
      if my-id < n < key-id               // compared clockwise around the ring
        call Lookup(key-id) on node n     // next hop
      else
        return my successor               // done

• Correctness depends only on successors
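A runnable sketch of the successor-only lookup, written as a loop over a simulated ring and not as real remote calls. The helper `between`, the function `simple_lookup`, and the 7-bit ring size are assumptions for illustration; the node IDs are the ones from the basic lookup figure.

```python
def between(x, a, b, size):
    """True if x lies strictly inside the clockwise interval (a, b) on the ring."""
    span = (b - a) % size or size  # (a, a) covers the whole ring except a
    return 0 < (x - a) % size < span


def simple_lookup(node_ids, start, key_id, size=128):
    """Follow successor pointers from start; returns (responsible_node, hops)."""
    ring = sorted(node_ids)
    succ = {n: ring[(i + 1) % len(ring)] for i, n in enumerate(ring)}
    node, hops = start, 0
    while between(succ[node], node, key_id, size):
        node = succ[node]  # next hop: the key lies beyond our successor
        hops += 1
    return succ[node], hops  # done: our successor is responsible for the key


print(simple_lookup([10, 32, 60, 90, 105, 120], start=10, key_id=80))  # (90, 2)
```

The hop count grows linearly with the number of nodes between the start and the key, which is what the finger table later improves on.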

“Finger table” allows log(N)-time lookups [Figure: the fingers of N80 span ½, ¼, 1/8, 1/16, 1/32, 1/64, and 1/128 of the ring]

Finger i points to successor of n + 2^i [Figure: for N80, the finger for 80 + 32 = 112 points to N120]
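The finger rule can be sketched directly from the definition, with fingers indexed from i = 0 on a 7-bit ring. The ring below is hypothetical except for N80 and N120, which come from the figure; the function names are also assumptions.

```python
import bisect


def successor(node_ids, key_id):
    """First node at or clockwise after key_id, wrapping around the ring."""
    ring = sorted(node_ids)
    return ring[bisect.bisect_left(ring, key_id) % len(ring)]


def finger_table(n, node_ids, bits=7):
    """Finger i is the successor of (n + 2**i) modulo the ring size."""
    size = 2 ** bits
    return [successor(node_ids, (n + 2 ** i) % size) for i in range(bits)]


ring = [10, 32, 60, 80, 105, 120]  # hypothetical ring containing N80 and N120
print(finger_table(80, ring))
# [105, 105, 105, 105, 105, 120, 32]; finger 5 targets 80 + 32 = 112 -> N120
```

The table has only as many entries as the ID has bits, and several of the short fingers usually point to the same node.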

Lookup with fingers

    Lookup(my-id, key-id)
      look in local finger table for
        highest node n s.t. my-id < n < key-id   // compared clockwise around the ring
      if n exists
        call Lookup(key-id) on node n            // next hop
      else
        return my successor                      // done

Lookups take O(log(N)) hops [Figure: ring of nodes N5, N10, N20, N32, N60, N80, N99, N110 with key K19; Lookup(K19) is routed along fingers]
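The finger-based lookup can be simulated on the ring from this slide. The sketch recomputes each node's finger table on the fly instead of maintaining it, and it assumes the query starts at N32, which is a guess from the label placement in the transcript. All function names are hypothetical.

```python
import bisect


def between(x, a, b, size):
    """True if x lies strictly inside the clockwise interval (a, b) on the ring."""
    span = (b - a) % size or size
    return 0 < (x - a) % size < span


def successor(ring, key_id):
    """First node at or clockwise after key_id; ring must be sorted."""
    return ring[bisect.bisect_left(ring, key_id) % len(ring)]


def finger_lookup(node_ids, start, key_id, bits=7):
    """Returns (responsible_node, nodes_visited) using finger-table routing."""
    size = 2 ** bits
    ring = sorted(node_ids)
    node, path = start, [start]
    while True:
        fingers = [successor(ring, (node + 2 ** i) % size) for i in range(bits)]
        # Farthest finger that still falls strictly before the key.
        candidates = [f for f in reversed(fingers) if between(f, node, key_id, size)]
        if not candidates:
            return fingers[0], path  # fingers[0] is the immediate successor
        node = candidates[0]
        path.append(node)


nodes = [5, 10, 20, 32, 60, 80, 99, 110]
print(finger_lookup(nodes, start=32, key_id=19))  # (20, [32, 99, 5, 10])
```

Each hop covers at least half of the remaining clockwise distance to the key, which is where the O(log(N)) hop count comes from.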

Failures might cause incorrect lookup • N80 doesn’t know correct successor, so incorrect lookup [Figure: ring of nodes N10, N80, N85, N102, N113, N120 with Lookup(90) in flight]

Solution: successor lists • Each node knows r immediate successors • After failure, will know first live successor • Correct successors guarantee correct lookups • Guarantee is with some probability
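A sketch of how a successor list is used after failures: walk the list in ring order and take the first entry that still responds. The function name `first_live_successor`, the `is_alive` callback, and the particular set of failed nodes are assumptions for illustration.

```python
def first_live_successor(successor_list, is_alive):
    """First entry of the successor list that is still alive, or None if all are dead."""
    for node in successor_list:
        if is_alive(node):
            return node
    return None  # every one of the r successors has failed


# Hypothetical scenario: N80 keeps r = 3 successors and two of them have failed.
alive = {10, 80, 113, 120}
print(first_live_successor([85, 102, 113], lambda n: n in alive))  # 113
```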

Choosing the successor list length • Assume 1/2 of nodes fail • P(successor list all dead) = (1/2)^r • I.e. P(this node breaks the Chord ring) • Depends on independent failure • P(no broken nodes) = (1 – (1/2)^r)^N • r = 2 log₂(N) makes prob. ≈ 1 – 1/N
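The last bullet follows from substituting the list length into the two probabilities above. The derivation below assumes the logarithm is base 2 and uses Bernoulli's inequality for the final step, so the result is a lower bound that is close to 1 – 1/N for large N.

```latex
\begin{align*}
\Pr[\text{successor list all dead}]
  &= \left(\tfrac{1}{2}\right)^{r}
   = \left(\tfrac{1}{2}\right)^{2\log_2 N}
   = \frac{1}{N^{2}}, \\
\Pr[\text{no broken nodes}]
  &= \left(1 - \frac{1}{N^{2}}\right)^{N}
   \;\ge\; 1 - N \cdot \frac{1}{N^{2}}
   = 1 - \frac{1}{N}.
\end{align*}
```

For example, with N = 1024 nodes, r = 20 successors per node keeps the ring intact with probability at least 1 – 1/1024 under the independent-failure assumption.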