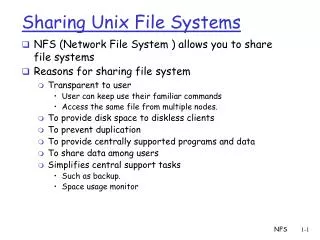

Advanced UNIX File Systems

Advanced UNIX File Systems: Berkeley Fast File System, Logging File Systems and RAID

Classical Unix File System
• The traditional UNIX file system keeps I-node information separate from the data blocks, so accessing a file involves a long seek from the I-node to the data.
• Files are grouped by directory, but the I-nodes of files in the same directory are not allocated consecutively, so reading them requires many slow, non-consecutive block reads.
• Allocation of data blocks is sub-optimal because the next sequential block is often on a different cylinder.
• Reliability is an issue because there is only one copy of the superblock and of the I-node list.
• The free-block list quickly becomes randomly ordered as files are created and destroyed.
• This randomness forces many cylinder seeks and slows down performance.
• The block size was small (512 to 1024 bytes), and there was no way to “defragment” the file system.

Berkeley Fast File System
• BSD UNIX was redesigned in the mid-1980s; the result was called the Fast File System (FFS) (McKusick, Joy, Leffler, and Fabry).
• Goals: improved disk utilization and decreased response time.
• The minimum block size of 4096 bytes allows files as large as 4 GB (2^32 bytes) to be created with only two levels of indirection.
• The disk partition is divided into one or more areas called cylinder groups.
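The 4 GB figure follows from simple arithmetic. The sketch below assumes 4-byte block pointers and counts only the blocks reachable through a double-indirect tree (real I-nodes also have direct and single-indirect pointers, which add a little more):

```python
def max_file_bytes(block_size: int, pointer_size: int = 4, levels: int = 2) -> int:
    """Bytes addressable through one indirect tree of the given depth (assumed 4-byte pointers)."""
    pointers_per_block = block_size // pointer_size
    return pointers_per_block ** levels * block_size


print(max_file_bytes(4096) == 2**32)  # True: 1024 * 1024 * 4096
print(max_file_bytes(1024) == 2**26)  # True: 256 * 256 * 1024 = 64 MiB
```

Quadrupling the block size quadruples the pointers per indirect block as well, which is why the reach of two levels grows by a factor of 64 rather than 4.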

Data and Inode Placement
• The original Unix FS had two placement problems:
  1) Data blocks are allocated randomly in aging file systems
    - Blocks for the same file are allocated sequentially when the FS is new
    - As the FS “ages” and fills, it must allocate blocks freed up when other files are deleted
    - Deleted files are essentially randomly placed
    - So blocks for new files become scattered across the disk
  2) Inodes are allocated far from blocks
    - All inodes are at the beginning of the disk, far from the data
    - Traversing file name paths and manipulating files and directories requires going back and forth between inodes and data blocks
• Both of these problems generate many long seeks
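The aging effect can be illustrated with a toy model. This is a minimal sketch under loud assumptions: the allocator (hypothetical `allocate`) simply takes blocks from the head of the free list, an aged file system is modeled crudely as a shuffled free list, and the distance between block numbers stands in for seek cost:

```python
import random


def seek_distance(blocks):
    """Total head movement, measured in block numbers, to read `blocks` in order."""
    return sum(abs(b - a) for a, b in zip(blocks, blocks[1:]))


def allocate(free_list, count):
    """Hypothetical allocator: take the first `count` blocks off the free list."""
    taken = free_list[:count]
    del free_list[:count]
    return taken


DISK_BLOCKS = 10_000
FILE_BLOCKS = 50

# New file system: the free list is still in disk order
fresh_free = list(range(DISK_BLOCKS))
fresh_file = allocate(fresh_free, FILE_BLOCKS)

# Aged file system: creates and deletes have left the free list in random order
aged_free = list(range(DISK_BLOCKS))
random.Random(42).shuffle(aged_free)
aged_file = allocate(aged_free, FILE_BLOCKS)

print("fresh:", seek_distance(fresh_file))  # fresh: 49
print("aged:", seek_distance(aged_file))
```

The fresh file is read with one-block steps, while the aged file's blocks are spread over the whole disk, so nearly every block read becomes a long seek.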

Cylinder Groups
• BSD FFS addressed these problems using the notion of a cylinder group
  - The disk is partitioned into groups of cylinders
  - Data blocks of the same file are allocated in the same cylinder group
  - Files in the same directory are allocated in the same cylinder group
  - Inodes for files are allocated in the same cylinder group as the files' data blocks
• Free space requirement
  - To allocate according to cylinder groups, the disk must have free space scattered across all cylinders
  - 10% of the disk is reserved just for this purpose
  - The reserve is usable only by root, which is why “df” can report more than 100% usage
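Why can usage exceed 100%? A minimal sketch, assuming usage is reported relative to the space ordinary users may fill (total minus the reserve), which is equivalent to used / (used + available) when "available" excludes the reserve and is allowed to go negative. This is a simplified model, not the source of any particular `df`:

```python
def df_use_percent(total_blocks: int, used_blocks: int, reserve_percent: int = 10) -> float:
    """Usage relative to the non-reserved space (simplified model of df's capacity column)."""
    reserved = total_blocks * reserve_percent // 100
    available_to_users = total_blocks - reserved
    return 100 * used_blocks / available_to_users


print(df_use_percent(1000, 900))            # 100.0: ordinary users are now out of space
print(round(df_use_percent(1000, 950), 1))  # 105.6: root has written into the reserve
```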

Cylinder Groups
• Each cylinder group is one or more consecutive cylinders on a disk.
• Each cylinder group contains a redundant copy of the superblock, plus the I-node information and free-block list pertaining to this group.
• Each group is allocated a static number of I-nodes.
• The default policy is to allocate, on average, one I-node for each 2048 bytes of space in the cylinder group.
• The idea of the cylinder group is to keep the I-nodes of files close to their data blocks to avoid long seeks, and also to keep files from the same directory together.
• BFFS places the group's bookkeeping information at a varying offset from the beginning of each group, to avoid having all crucial data on one platter.
• The space between the beginning of the group and this offset is used for data blocks.
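A toy model of static I-node allocation and directory-local placement follows. It is a sketch only: `CylinderGroup` and `place_inode` are hypothetical names, group sizes are arbitrary, and the linear fallback to the next group is a simplification (the real FFS allocator uses a more elaborate search):

```python
from dataclasses import dataclass, field

BYTES_PER_INODE = 2048  # default density from the slide: one I-node per 2048 bytes


@dataclass
class CylinderGroup:
    index: int
    size_bytes: int
    free_inodes: int = field(init=False)

    def __post_init__(self) -> None:
        # Static I-node count, fixed when the file system is created
        self.free_inodes = self.size_bytes // BYTES_PER_INODE


def place_inode(groups: list[CylinderGroup], parent_group: int) -> int:
    """Put a new file's I-node in its directory's group; fall back to the next group with space."""
    n = len(groups)
    for step in range(n):
        group = groups[(parent_group + step) % n]
        if group.free_inodes > 0:
            group.free_inodes -= 1
            return group.index
    raise OSError("no free I-nodes in any cylinder group")


groups = [CylinderGroup(i, 8 * 1024 * 1024) for i in range(4)]
print(groups[0].free_inodes)                # 4096 I-nodes in an 8 MiB group
print(place_inode(groups, parent_group=2))  # 2: same group as the directory
```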

Block Size
• As block size increases, the efficiency of a single transfer also increases, and the space taken up by I-nodes and block lists decreases.
• However, as block size increases, more space is wasted due to internal fragmentation.
• Solution:
  - Divide each block into fragments
  - Each fragment is individually addressable
  - The fragment size is specified when the file system is created
  - The lower bound on the fragment size is the disk sector size

Block Size
• Small blocks (1K) caused two problems:
  - Low bandwidth utilization
  - Small maximum file size (a function of block size)
• Fix: use a larger block (4K)
  - Very large files need only two levels of indirection for 2^32 bytes
  - Problem: internal fragmentation
  - Fix: introduce “fragments” (1K pieces of a block)
• Problem: media failures
  - Fix: replicate the master block (superblock)
• Problem: device obliviousness
  - Fix: parameterize the FS according to device characteristics

File System Integrity
• Due to hardware or power failures, the file system can enter an inconsistent state.
• Metadata updates (e.g., directory entry, I-node, and free-block list updates) should be synchronous operations, written through to disk rather than left in the cache.
• The specific order of operations also matters. We cannot guarantee that the last operation does not fail, but we want to minimize the damage so that the file system can be more easily restored to a consistent state.

File Creation
• Consider file creation:
  – the steps are: allocate an I-node, write the allocated I-node to disk, write the directory entry, and update the directory's I-node.
  – But in what order should these synchronous operations be performed to minimize the damage that failures may cause?
• The correct order is:
  1) allocate the I-node (if needed) and write it to disk;
  2) update the directory on disk;
  3) update the I-node of the directory on disk.
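The benefit of this ordering can be checked by simulating a crash after every prefix of the write sequence. In this sketch the disk state is a hypothetical dictionary, I-node number 7 and the name `new.txt` are arbitrary, and the only inconsistency counted is the harmful one: a directory entry naming an I-node that was never written:

```python
def fresh_disk():
    # Hypothetical on-disk state: written I-nodes, directory entries, directory I-node size
    return {"inodes": set(), "entries": {}, "dir_size": 0}


def write_inode(disk):
    disk["inodes"].add(7)           # step 1: allocate I-node 7 and write it to disk


def write_dir_entry(disk):
    disk["entries"]["new.txt"] = 7  # step 2: directory entry "new.txt" -> I-node 7


def update_dir_inode(disk):
    disk["dir_size"] += 1           # step 3: update the directory's own I-node


def dangling(disk):
    """Directory entries naming an I-node that was never written."""
    return [name for name, ino in disk["entries"].items() if ino not in disk["inodes"]]


def worst_crash(order):
    """Crash after every prefix of `order`; return the most dangling entries ever left."""
    worst = 0
    for k in range(len(order) + 1):
        disk = fresh_disk()
        for step in order[:k]:
            step(disk)
        worst = max(worst, len(dangling(disk)))
    return worst


correct_order = [write_inode, write_dir_entry, update_dir_inode]
entry_first = [write_dir_entry, write_inode, update_dir_inode]
print(worst_crash(correct_order), worst_crash(entry_first))  # 0 1
```

With the correct order, the worst case is an allocated but unreferenced I-node, which wastes space but does not corrupt the namespace and can be reclaimed by a consistency checker such as fsck.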

Performance Considerations
• Synchronous operations for updating the metadata:
  – must be synchronous, and thus require seeks to the I-nodes;
  – in BFFS this is not a great problem as long as files are relatively small, because the directory, the file data blocks, and the I-nodes should all be in the same cylinder group.
• Write-back of the dirty blocks:
  – this is the real problem, because the file access pattern is random: different applications use different files at the same time, and the dirty blocks are not guaranteed to be in the same cylinder group.

Logging File System
• Caching is enough for good read performance.
• Writes are the real performance bottleneck:
  - writing back cached user blocks may require many random disk accesses;
  - write-through for reliability rules out write optimizations.
• Logging solves the problem for metadata.
• The idea: everything is a log. Think of a database transaction recovery log.
• Each write, both data and control, is appended to the sequential log.
• The problem: how to locate files and data efficiently for random-access reads.
• The solution: use a floating file map.

Logging File System
• The Log-structured File System (LFS) was designed in response to two trends in workload and technology:
  1) Disk bandwidth is scaling significantly (40% a year), but latency is not
  2) Machines have large main memories
    - Large buffer caches
    - Absorb a large fraction of read requests
    - Can be used for writes as well
    - Coalesce small writes into large writes
• LFS takes advantage of both trends to increase FS performance (Rosenblum and Ousterhout, Berkeley, ’91)

Logging File System
• LFS also addresses some problems with FFS
  - Placement is improved, but there are still many small seeks
    - Possibly related files are physically separated
    - Inodes are separated from files (small seeks)
    - Directory entries are separate from inodes
• Metadata requires synchronous writes
  - With small files, most writes are to metadata (synchronous)
  - Synchronous writes are very slow

Logging File System
• Treat the disk as a single log for appending
• Collect writes in the disk cache, and write out the entire collection in one large disk request
  - Leverages disk bandwidth
  - No seeks (assuming the head is at the end of the log)
• All info written to disk is appended to the log
  - Data blocks, attributes, inodes, directories, etc.
• LFS has two challenges it must address to be practical:
  1) Locating data written to the log
    - FFS places files at a fixed location; LFS writes data “at the end”
  2) Managing free space on the disk
    - The disk is finite, so the log is finite and cannot always be appended to
    - Need to recover deleted blocks in old parts of the log
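Write coalescing is the core mechanism here. A minimal sketch, assuming a hypothetical `SegmentWriter` that buffers small block writes in memory and issues one large sequential append whenever a segment's worth has accumulated (or when explicitly flushed); the on-disk log is modeled as a Python list:

```python
class SegmentWriter:
    """Buffers block writes in memory and appends them to the log one segment at a time."""

    def __init__(self, blocks_per_segment: int):
        self.blocks_per_segment = blocks_per_segment
        self.log = []           # the on-disk log: (file_id, block_no, data) records
        self.buffer = []        # writes collected in the cache
        self.disk_requests = 0  # large sequential writes issued

    def write(self, file_id, block_no, data):
        self.buffer.append((file_id, block_no, data))
        if len(self.buffer) == self.blocks_per_segment:
            self.flush()

    def flush(self):
        if self.buffer:
            self.log.extend(self.buffer)  # one sequential append at the log tail
            self.disk_requests += 1
            self.buffer = []


writer = SegmentWriter(blocks_per_segment=16)
for i in range(100):
    writer.write(file_id=i % 5, block_no=i // 5, data=b"...")  # small writes to 5 files
writer.flush()  # e.g. on sync
print(writer.disk_requests, len(writer.log))  # 7 100
```

One hundred small writes scattered over five files become seven large requests, none of which needs a seek if the head is already at the end of the log.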

LFS: Finding Data
• FFS uses inodes to locate data blocks
  - Inodes are pre-allocated in each cylinder group
  - Directories contain the locations of inodes
• LFS appends inodes to the end of the log just like data
  - This makes them hard to find
• Approach
  - Use another level of indirection: inode maps
  - Inode maps map file numbers to inode locations
  - The location of the inode map blocks is kept in the checkpoint region
  - The checkpoint region has a fixed location
  - Cache inode maps in memory for performance
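The lookup chain (checkpoint region, then inode map, then inode, then data) can be shown with a toy store. This is a sketch with hypothetical names: log addresses are list indices, the whole inode map is written as a single record, and recovery simply ignores anything written after the last checkpoint:

```python
class TinyLFS:
    """Toy log-structured store: data, inodes and inode-map records share one append-only log."""

    def __init__(self):
        self.log = []           # append-only log; an address is an index into this list
        self.imap = {}          # in-memory cache: file number -> log address of its latest inode
        self.checkpoint = None  # stands in for the fixed-location checkpoint region

    def _append(self, record):
        self.log.append(record)
        return len(self.log) - 1

    def write_file(self, inum, blocks):
        addrs = [self._append(("data", b)) for b in blocks]
        self.imap[inum] = self._append(("inode", addrs))  # new inode supersedes the old one

    def write_checkpoint(self):
        self.checkpoint = self._append(("imap", dict(self.imap)))

    def read_file(self, inum):
        _kind, addrs = self.log[self.imap[inum]]
        return [self.log[a][1] for a in addrs]

    @classmethod
    def recover(cls, log, checkpoint):
        """After a crash: follow the checkpoint region to the inode map stored in the log."""
        fs = cls()
        fs.log, fs.checkpoint = log, checkpoint
        fs.imap = dict(log[checkpoint][1])
        return fs


fs = TinyLFS()
fs.write_file(1, [b"a", b"b"])
fs.write_file(1, [b"c"])  # overwrite: old blocks stay in the log but become dead
fs.write_checkpoint()
print(TinyLFS.recover(fs.log, fs.checkpoint).read_file(1))  # [b'c']
```

The overwritten blocks and the old inode remain in the log as garbage, which is exactly the free-space problem the cleaner must solve.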

LFS: Free Space
• The append-only LFS log quickly runs out of disk space
  - Need to recover deleted blocks
• Approach:
  - Divide the log into segments
  - Thread segments on disk
    - Segments can be anywhere
  - Reclaim space by cleaning segments
    - Read a segment
    - Copy live data to the end of the log
    - Now you have a free segment you can reuse
• Cleaning is a big problem
  - Costly overhead
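The cleaning steps above can be sketched as follows. The assumptions are loud: segments are tiny lists, a block is live only if the hypothetical `where` map still points at that exact slot, and the victim segment is chosen by the caller (real cleaners need a policy for picking victims):

```python
SEGMENT_SIZE = 4  # blocks per segment, tiny for illustration


class SegmentedLog:
    """Toy log divided into fixed-size segments that can live anywhere on disk."""

    def __init__(self, n_segments: int):
        self.segments = [[] for _ in range(n_segments)]
        self.free = list(range(n_segments))  # clean segments, in no particular disk order
        self.tail = self.free.pop(0)         # segment currently receiving appends
        self.where = {}                      # (inum, block_no) -> (segment, slot) of the live copy

    def append(self, inum, block_no, data):
        if len(self.segments[self.tail]) == SEGMENT_SIZE:
            if not self.free:
                raise OSError("log full: a segment must be cleaned first")
            self.tail = self.free.pop(0)     # thread the log on to the next free segment
        seg = self.segments[self.tail]
        seg.append((inum, block_no, data))
        self.where[(inum, block_no)] = (self.tail, len(seg) - 1)

    def clean(self, victim: int) -> int:
        """Copy live blocks out of `victim` to the log tail and free it; return blocks copied."""
        if victim == self.tail:
            raise ValueError("cannot clean the segment currently being written")
        live = [rec for slot, rec in enumerate(self.segments[victim])
                if self.where.get(rec[:2]) == (victim, slot)]
        for inum, block_no, data in live:
            self.append(inum, block_no, data)  # live data moves to the end of the log
        self.segments[victim] = []
        self.free.append(victim)
        return len(live)


log = SegmentedLog(n_segments=3)
for b in range(4):
    log.append(1, b, f"v1-{b}")  # fills segment 0
for b in range(3):
    log.append(1, b, f"v2-{b}")  # overwrites go to segment 1; only block 3 is live in segment 0
print(log.clean(0), log.free)    # 1 [2, 0]
```

The cost of cleaning is the read of the whole victim plus the rewrite of its live blocks; the fuller the victim, the less space each cleaning pass recovers.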

RAID
• Redundant Array of Inexpensive Disks (RAID)
  - A storage system, not a file system (Patterson, Katz, and Gibson, Berkeley, ’88)
  - Idea: use many disks in parallel to increase storage bandwidth and improve reliability
    - Files are striped across disks
    - Each stripe portion is read/written in parallel
    - Bandwidth increases with more disks
• Problems:
  - Small files (small writes less than a full stripe)
    - Need to read the entire stripe, update it with the small write, then write the entire stripe out to the disks
  - Reliability: more disks increase the chance of a media failure (the array's MTBF drops)
• Turn the reliability problem into a feature
  - Use one disk to store parity data: the XOR of all data blocks in the stripe
  - Can recover any data block from all the others plus the parity block (hence “redundant”)
  - Overhead
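XOR parity and single-block recovery take only a few lines. A minimal sketch assuming equal-length blocks held in memory; `xor_blocks` and `recover` are hypothetical helpers:

```python
from functools import reduce


def xor_blocks(blocks):
    """Bytewise XOR of equal-length blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def recover(surviving_blocks, parity):
    """Rebuild the single missing data block from the survivors plus the parity block."""
    return xor_blocks(list(surviving_blocks) + [parity])


stripe = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
parity = xor_blocks(stripe)                       # stored on the parity disk
rebuilt = recover([stripe[0], stripe[2]], parity)  # disk holding stripe[1] has failed
print(rebuilt == stripe[1])  # True
```

Recovery works because XOR is its own inverse: XORing every surviving block with the parity cancels them out and leaves only the missing block.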

RAID Levels
• RAID 0: Striping
  - Good for random access (no reliability)
• RAID 1: Mirroring
  - Two disks; write data to both (expensive, 1X storage overhead)
• RAID 5: Floating parity
  - Parity blocks for different stripes are written to different disks
  - No single parity disk, hence no bottleneck at that disk
• RAID “10”: Striping plus mirroring
  - Higher bandwidth, but still a large overhead
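Floating parity can be visualized by printing a stripe layout. The rotation below (parity moves one disk to the left on each successive stripe, data fills the remaining disks in order) is one simple scheme assumed for illustration; actual RAID 5 implementations offer several layouts:

```python
def parity_disk(stripe: int, n_disks: int) -> int:
    # Assumed rotation: parity moves one disk to the left on each successive stripe
    return (n_disks - 1 - stripe) % n_disks


def stripe_layout(stripe: int, n_disks: int) -> list:
    """What each disk holds for this stripe: 'P' or a logical data-block number."""
    p = parity_disk(stripe, n_disks)
    next_block = stripe * (n_disks - 1)
    layout = []
    for disk in range(n_disks):
        if disk == p:
            layout.append("P")
        else:
            layout.append(next_block)
            next_block += 1
    return layout


for s in range(4):
    print(s, stripe_layout(s, 4))
# 0 [0, 1, 2, 'P']
# 1 [3, 4, 'P', 5]
# 2 [6, 'P', 7, 8]
# 3 ['P', 9, 10, 11]
```

Over any four consecutive stripes each disk holds exactly one parity block, so parity updates are spread evenly instead of all landing on one disk.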

RAID 0 (striping)
• RAID0 implements striping, which is a way of distributing reads and writes across multiple disks for improved disk performance. Striping reduces the overall load placed on each component disk, because different segments of data can be read from or written to multiple disks simultaneously. With identically sized disks, the total amount of storage available is the sum of all component disks.
• Disks of different sizes may be used, but the size of the smallest disk limits the amount of space usable on each of the disks. RAID0 provides no data protection or fault tolerance, as none of the data is duplicated. A failure of any one of the disks renders the RAID unusable, and data will be lost. However, RAID0 arrays are sometimes used for read-only file serving of already-protected data.
• Linux users wishing to concatenate multiple disks into a single, larger virtual device should consider Logical Volume Management (LVM). LVM supports striping and allows dynamically growing or shrinking logical volumes and concatenating disks of different sizes.
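Striping is ultimately an address translation. A minimal sketch, assuming a round-robin layout with a fixed chunk size measured in blocks; `raid0_map` is a hypothetical name:

```python
def raid0_map(logical_block: int, n_disks: int, chunk_blocks: int = 1) -> tuple[int, int]:
    """Map a logical block number to (disk index, block offset on that disk)."""
    chunk, within = divmod(logical_block, chunk_blocks)
    disk = chunk % n_disks                               # chunks go round-robin across disks
    offset = (chunk // n_disks) * chunk_blocks + within  # position within that disk
    return disk, offset


print([raid0_map(b, 4) for b in range(6)])
# [(0, 0), (1, 0), (2, 0), (3, 0), (0, 1), (1, 1)]
```

Consecutive chunks land on different disks, so a large sequential request keeps every disk busy at once.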

RAID 1 (mirroring)
• RAID1 is an implementation where all written data is duplicated (or mirrored) to each constituent disk, thus providing data protection and fault tolerance. RAID1 can also improve performance, as the RAID controller has multiple disks to read from when one or more are busy.
• The total storage available to a RAID1 user, however, is equal to the smallest disk in the set, so RAID1 does not provide greater storage capacity.
• An optimal RAID1 configuration will usually have two identically sized disks. A failure of one of the disks will not result in data loss, since all of the data exists on both disks, and the RAID will continue to operate (though unprotected against a failure of the remaining disk). The faulty disk can be replaced, the data synchronized to the new disk, and the RAID1 protection restored.

RAID 2, 3
• RAID2 (bit striping): RAID2 stripes data at the bit level across disks and uses a Hamming code for parity. However, the performance of bit striping is abysmal, and RAID2 is not used in practice.
• RAID3 (byte striping): RAID3 stripes data at the byte level and dedicates an entire disk to parity. Like RAID2, RAID3 is not used in practice, for performance reasons. Because almost any read requires more than one byte of data, reads involve operations on every disk in the set. Such disk access will easily thrash a system. Additionally, loss of the parity disk leaves the system vulnerable to corrupted data.

RAID 4, 5
• RAID4 (block striping): RAID4 stripes data at the block level and dedicates an entire disk to parity. RAID4 is similar to both RAID2 and RAID3 but significantly improves performance, as any read request contained within a single block can be serviced from a single disk. RAID4 is used on a limited basis due to the storage penalty and data-corruption vulnerability of dedicating an entire disk to parity.
• RAID5 (block striping with striped parity): RAID5 implements block-level striping like RAID4, but also stripes the parity information across all disks. In this way, the total storage capacity is maximized and parity information is distributed across all disks. RAID5 also supports hot spares, which are disks that are members of the RAID but not in active use. A hot spare is activated and added to the RAID when a failed disk is detected. RAID5 is the most commonly used level, as it provides the best combination of benefits and acceptable costs.
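The capacity trade-offs described for the RAID levels can be summarized in one function. A minimal sketch, assuming every member disk contributes only as much space as the smallest disk and ignoring hot spares and metadata overhead; `usable_capacity` is a hypothetical helper:

```python
def usable_capacity(level: int, disk_sizes: list[int]) -> int:
    """Usable space of an array, assuming each member is limited to the smallest disk."""
    n, smallest = len(disk_sizes), min(disk_sizes)
    if level == 0:
        return n * smallest        # pure striping: no redundancy
    if level == 1:
        return smallest            # every disk holds a full copy
    if level in (4, 5):
        return (n - 1) * smallest  # one disk's worth of space holds parity
    raise ValueError(f"unsupported RAID level: {level}")


disks = [1000, 1000, 1000, 1000]  # sizes in GB
for level in (0, 1, 4, 5):
    print(level, usable_capacity(level, disks))
# 0 4000
# 1 1000
# 4 3000
# 5 3000
```

RAID4 and RAID5 give the same usable space; what RAID5 changes is where the parity lives, not how much of it there is.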