Particle Physics and Grid – A Virtualisation Study

Particle Physics and Grid – A Virtualisation Study Laura Gilbert

Agenda • What are we looking for? Exciting Physics!! • Overview of Particle Accelerators and the ATLAS detector. • The Computing Challenge – data taking and simulation. • Where do the Grid and virtualisation come in? • Presentation of Results – VIRTUALISATION STUDY • DEMONSTRATION

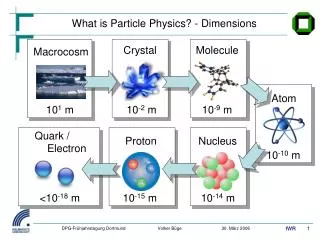

What are we looking for? The Standard Model: we have detected matter and force particles. We think we know how these interact with each other.
Generation: I, II, III
QUARKS: u, c, t and d, s, b
LEPTONS: e, μ, τ and νe, νμ, ντ
Forces: γ, g, Z, W

What are we looking for? We are looking for a “Theory of everything”. So what’s missing? • Are these particles “fundamental”? • Are there more? • How do we get mass? • What is gravity? (force particle? superstrings?) • Why is there more matter than antimatter in the universe?

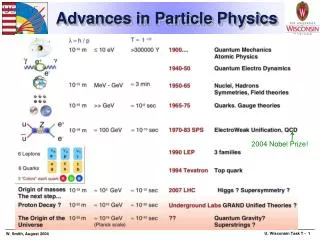

Looking for New Physics • Looking for particles we have never seen before. • If these particles exist, we haven’t seen them because we didn’t have enough energy to make them. • Mass can be created from energy (Einstein) → heavy particles can be made from lighter ones with lots of kinetic energy. • ATLAS will work at higher energies than ever before.

CERN (birthplace of the World Wide Web!)
• The Super Proton Synchrotron (SPS) accelerates protons.
• The Large Hadron Collider (LHC) accelerates them further and collides them.
• A television is an accelerator in which electrons gain around 10 keV (10 000 eV).
• The LHC will accelerate protons to around 7 TeV (7 000 000 000 000 eV).
Map labels: The SPS; 8.5km; ATLAS – proton beams collide here; the path of the LHC… 100m below ground.
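To put the two energies quoted above side by side, here is a minimal arithmetic sketch. It uses only the 10 keV and 7 TeV figures from the slide plus the standard electronvolt-to-joule conversion; the variable names are illustrative.

```python
# Energies quoted on the slide, in electronvolts.
TV_ELECTRON_EV = 10e3   # ~10 keV gained by an electron in a television
PROTON_EV = 7e12        # ~7 TeV per accelerated proton

# Standard conversion factor: 1 eV = 1.602176634e-19 J.
EV_TO_JOULE = 1.602176634e-19

# How many times more energy a proton gains than a television electron.
ratio = PROTON_EV / TV_ELECTRON_EV

# The same proton energy expressed in joules.
proton_energy_joules = PROTON_EV * EV_TO_JOULE

print(f"Energy ratio (proton / TV electron): {ratio:.1e}")
print(f"7 TeV in joules: {proton_energy_joules:.2e} J")
```

Each proton therefore carries several hundred million times the energy of a television electron, yet only about a microjoule in everyday units.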

The experiment at ATLAS • Two protons collide at very high energy…

The experiment at ATLAS
• Two protons collide at very high energy…
The structure of a proton:
• Three quarks (two “up”, one “down”)
• Held together by “gluons”
• “Sea” of virtual particles: individually variable, statistically constant (depending on energy).

The experiment at ATLAS • Two protons collide at very high energy • Exactly what happens at the point of collision is unknown, but models exist which mimic the outcome of real collisions.

ATLAS Summary
Detector diagram labels: magnets (to curve charged particle tracks); muon detectors; hadron calorimeters; electromagnetic calorimeters; 44m; 22m; physicist!
• Particle physics experiment, due to start taking data in 2007
• Higher energies than ever recorded before
• Largest collaborative effort ever attempted in physical sciences: 1850 physicists at 150 universities in 34 countries. Expected cost around $400 million.
• Computing hardware budget (CERN alone) around $20 million

The Computing Challenge Modern Particle physics experiments are highly CPU intensive. • Data-Taking • Simulation • Analysis GRID

Data Taking: Data-taking is the process of recording and refining the output from the detector once it is up and running. • ATLAS will have around 16 million readout channels. • More than 1 MB of raw data for every event recorded. Beams will collide every 25 ns. • On-line trigger reduces the rate of data recording to several hundred events a second: it selects and records only obviously useful events. • The total amount of data recorded per year will be of the order of petabytes.
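The figures above can be combined into a rough data-rate estimate. This is a back-of-envelope sketch, not the experiment's actual accounting: the 25 ns spacing and 1 MB event size come from the slide, while the trigger rate of 200 events per second (standing in for "several hundred") and the 10^7 seconds of data taking per year are assumptions made purely for illustration.

```python
# Figures taken from the slide.
BUNCH_SPACING_S = 25e-9       # beams collide every 25 ns
EVENT_SIZE_BYTES = 1e6        # "more than 1 MB" per event, used as a lower bound

# ASSUMPTIONS for illustration only (not stated in the talk).
TRIGGER_RATE_HZ = 200.0       # stands in for "several hundred a second"
LIVE_SECONDS_PER_YEAR = 1e7   # rough guess at yearly data-taking time

# Rate at which the beams cross.
crossing_rate_hz = 1.0 / BUNCH_SPACING_S

# Hypothetical raw rate if every crossing were written out in full.
untriggered_bytes_per_s = crossing_rate_hz * EVENT_SIZE_BYTES

# Rate actually written after the on-line trigger.
recorded_bytes_per_s = TRIGGER_RATE_HZ * EVENT_SIZE_BYTES

# Factor by which the trigger reduces the event rate.
rejection_factor = crossing_rate_hz / TRIGGER_RATE_HZ

# Yearly recorded volume under the assumed live time.
recorded_bytes_per_year = recorded_bytes_per_s * LIVE_SECONDS_PER_YEAR

print(f"Crossing rate:        {crossing_rate_hz:.1e} Hz")
print(f"Untriggered data:     {untriggered_bytes_per_s / 1e12:.0f} TB/s")
print(f"Recorded data:        {recorded_bytes_per_s / 1e6:.0f} MB/s")
print(f"Trigger reduction:    {rejection_factor:.1e}x")
print(f"Recorded per year:    {recorded_bytes_per_year / 1e15:.1f} PB")
```

Under these assumptions the recorded volume comes out at a few petabytes per year, consistent with the "order of petabytes" quoted on the slide.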

Simulation
Three Phases:
• Event Generation
• Detector Simulation
  • ATLFAST:
    • simple: smearing only
    • fast (~100,000 events/hr)
  • GEANT4:
    • very detailed, includes electronics response and digitisation, cooling and support structures.
    • slow (~1 event/hr)
    • simulated data equivalent in format to the “real” data.
• Event Reconstruction
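To show what the two quoted simulation speeds mean in practice, here is a small sketch. The throughput figures are the approximate ones from the slide; the sample size of one million events and the 5,000-CPU farm are hypothetical, and the function assumes jobs parallelise perfectly with no scheduling or I/O overhead.

```python
# Approximate throughputs quoted on the slide (events per CPU-hour).
ATLFAST_EVENTS_PER_HOUR = 100_000
GEANT4_EVENTS_PER_HOUR = 1


def wall_clock_hours(n_events, events_per_hour, n_cpus=1):
    """Idealised wall-clock time: total CPU-hours split evenly over n_cpus.

    ASSUMPTION: perfectly parallel jobs, no overheads of any kind.
    """
    cpu_hours = n_events / events_per_hour
    return cpu_hours / n_cpus


if __name__ == "__main__":
    n_events = 1_000_000   # hypothetical sample size
    n_cpus = 5_000         # hypothetical farm size

    fast = wall_clock_hours(n_events, ATLFAST_EVENTS_PER_HOUR)
    full_single = wall_clock_hours(n_events, GEANT4_EVENTS_PER_HOUR)
    full_farm = wall_clock_hours(n_events, GEANT4_EVENTS_PER_HOUR, n_cpus)

    print(f"ATLFAST, 1 CPU:       {fast:.0f} hours")
    print(f"GEANT4, 1 CPU:        {full_single:.0f} hours")
    print(f"GEANT4, {n_cpus} CPUs:   {full_farm:.0f} hours")
```

The gap of five orders of magnitude between the two simulators is why detailed simulation is the compute-intensive workload that benefits most from distributed resources.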

Where does Grid come in?
• On-the-fly data taking (Tier 0)
• Compute-intensive simulation programs (Tier 0 and 1)
• Analysis, IO intensive (Tier 0, 1 and 2)
• LHC Computing Grid Project (LCG) – collaboration
  • 5,000 CPUs
  • 4,000 TB of storage
  • 70 sites around the world
  • 4,000 simultaneous jobs
  • monitored via Grid Operations Centre (RAL)
• Security issues: virtualised clusters vital. Not tested on HEP applications before.

Testing at Dell, Austin
• Benchmarking of PE2650s and PE6650s with ATLAS software (GEANT simulations). PE2650 ~2x faster than PE6650.
• Cluster set up: PE6650 master node, eight PE2650 compute elements.
• Measured overhead of:
  • Hyper-threading on PE2650s (2.4±1.1%) and PE6650s (3.5±2.6%)
  • Sun Grid Engine on PE2650s and PE6650s: negligible.
  • VMware (ESX) on PE2650 (11.1±3.8% for single instance)
• Comparison of simulation speeds over cluster (batch jobs) with and without VMware: new study.
• Increased number of instances of VMware on cluster.
• Paper submitted to “19th International Parallel and Distributed Processing Symposium”
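The overheads above are quoted as a percentage with an uncertainty. The talk does not say how these were derived, so the following is only one plausible way to obtain such a figure from repeated timings: compare the mean run times and propagate the standard error of each mean. The function name and the sample timings are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def overhead_percent(native_times, virtual_times):
    """Return (overhead %, uncertainty %) of virtual relative to native runs.

    ASSUMPTION: independent repeated timings, at least two per sample,
    with first-order error propagation on the ratio of the two means.
    """
    t_native = mean(native_times)
    t_virtual = mean(virtual_times)

    # Standard error of each mean.
    err_native = stdev(native_times) / sqrt(len(native_times))
    err_virtual = stdev(virtual_times) / sqrt(len(virtual_times))

    ratio = t_virtual / t_native
    # Relative errors add in quadrature for a ratio.
    rel_err = sqrt((err_native / t_native) ** 2 + (err_virtual / t_virtual) ** 2)

    return (ratio - 1.0) * 100.0, ratio * rel_err * 100.0


if __name__ == "__main__":
    # Hypothetical timings in seconds, not measurements from the study.
    native = [100.0, 102.0, 98.0]
    virtual = [110.0, 112.0, 108.0]
    overhead, error = overhead_percent(native, virtual)
    print(f"Overhead: {overhead:.1f} ± {error:.1f} %")
```

With only a handful of runs the uncertainty can be comparable to the overhead itself, as in the hyper-threading figures above.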

Virtualising a Cluster
Comparison:
• 8 physical nodes: 2 GB RAM, 2 CPUs, 2 queues → total of 8 CEs, 16 queues.
• 16 virtual nodes: 1 GB RAM, 1 CPU, 1 queue → total of 16 CEs, 16 queues.
• 32 virtual nodes: 0.5 GB RAM, sharing one CPU between two, 1 queue → total of 32 CEs, 32 queues.
Batches of 16, 32, 64 and 92 jobs sent, total time to completion measured.
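The three configurations can be written down as a small data structure to check that they divide the same hardware differently. This sketch only restates the numbers from the slide; the class and field names are illustrative, and a node sharing one CPU between two is modelled as 0.5 CPUs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterConfig:
    """One cluster layout from the comparison (names are illustrative)."""
    name: str
    nodes: int                # number of compute elements (CEs)
    ram_gb_per_node: float
    cpus_per_node: float      # 0.5 means one CPU shared between two nodes
    queues_per_node: int

    @property
    def total_queues(self):
        return self.nodes * self.queues_per_node

    @property
    def total_ram_gb(self):
        return self.nodes * self.ram_gb_per_node

    @property
    def total_cpus(self):
        return self.nodes * self.cpus_per_node


CONFIGS = [
    ClusterConfig("8 physical", 8, 2.0, 2.0, 2),
    ClusterConfig("16 virtual", 16, 1.0, 1.0, 1),
    ClusterConfig("32 virtual", 32, 0.5, 0.5, 1),
]

if __name__ == "__main__":
    for cfg in CONFIGS:
        print(f"{cfg.name}: {cfg.nodes} CEs, {cfg.total_queues} queues, "
              f"{cfg.total_ram_gb:.0f} GB RAM, {cfg.total_cpus:.0f} CPUs")
```

All three layouts total 16 GB of RAM and 16 CPUs; only the number of compute elements and queues changes, so differences in completion time reflect the virtualisation layer and scheduling rather than extra hardware.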

Virtualising a Cluster
Results:
• 1 → 2 VMs/CPU: 5.0±2.2% increase. Not useful unless you consider MRT (7% improvement).
• 8 physical → 16 virtual nodes: <11% increase.
• Large batches: virtualised cluster out-performs physical. Not a memory issue or re-ordering of jobs. Context switching overhead?

Further Work?
• Other virtualisation software: GSX or Bochs
• VMware SMP License
• Hardware: more memory, processors etc.
• Different applications

Many thanks to the HPCC and Virtualisation teams at Dell, especially Saeed Iqbal, Ron Pepper, Garima Kochhar, Monica Kashyap, Rinku Gupta, Jenwei Hsieh, and Mark Cobban, my sponsor at Dell EMEA.