Generalization and Specialization in Reinforcement Learning


A presentation by Christopher Schofield.



Introduction • When learning a new task, it is important to be able to generalize from past tasks. A new task could instead be learned by pure trial and error, but even with a small state space this is intractable because a robot cannot test every possible action sequence. • The process is much more efficient if the robot can generalize from previous experience, prioritizing the testing of behaviours that have been successful in the past. • There is evidence that infants and children use similarity-based measures to categorize objects (Abecassis et al., 2001; Sloutsky and Fisher, 2004). These similarities are context dependent (Jones and Smith, 1993) and can be coded at different levels of similarity (Quinn et al., 2006). • The paper aimed to explore how such similarity-based generalization can be exploited during learning.

Introduction • Context, described as any piece of information that characterizes the situation, provides a way to reduce the number of possible inferences, thus making it easier to separate out the relevant facts about a given situation. • Studies on animals suggest that behaviours learned in one context are carried over into other contexts, but learned inhibition of a behaviour is unique to each context where the behaviour is extinguished (Bouton, 1991; Hall, 2002). • Most reinforcement learning algorithms learn to complete a single task in a single context, but animals apply what they learn in one context to other contexts. • One way to achieve this is to use a function approximator, usually an artificial neural network (ANN), that generalizes not only within a context but also between different contexts.

Introduction • However, the ability of ANNs to generalize and degrade gracefully also gives rise to a major weakness. • Because all the information in the network is stored in the same connection weights, the network often forgets previously learned data when new data is presented without proper consideration of the old. • The new data simply overwrites the old data (French, 1991, 1999). • Learning in a new context is likely to contradict what was learned in a previous context, so the old data is lost. • It is possible to construct a context-sensitive ANN that meets these demands, generalizing between contexts without erasing what was learned earlier (Balkenius & Winberg, 2004; Winberg, 2004). • The paper aimed to explore how well this algorithm works on the type of mazes often used in reinforcement learning studies.

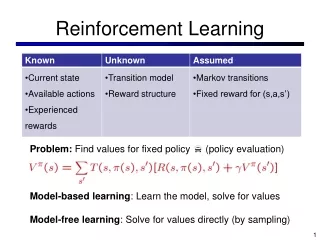

A Reinforcement Learning Framework • The framework is divided into three modules: Q, RL-CORE and SELECT. • The framework was used in all experiments and implemented in the Ikaros system (Balkenius, Morén and Johansson, 2007). • It is derived from the basic Q-learning model (Watkins and Dayan, 1992).

A Reinforcement Learning Framework • An advantage of such a framework, with separable components, is that different modules can be exchanged to build different forms of learning systems. • In addition, all timing is handled by delays on the connections between the modules. • If the world is slower at producing the reward, only the delays in the system need to be changed.

A Reinforcement Learning Framework • The Q module is responsible for calculating the expected value of each action in state s. • It has three inputs and one output. • One input-output pair is used to calculate the expected value of each possible action a in the current state s. • The other two inputs train the module on the mapping from a state delayed by two time steps to a target delayed by one time step. • Any algorithm can be used as the Q module. • Having separate inputs for training and testing means that Q can work in two different time frames concurrently without having to know about the timing of the different signals.

A Reinforcement Learning Framework • RL-CORE is the reinforcement-learning-specific component of the system. • It receives information about the current world state s, the current reinforcement R, the action selected at the previous time step, and the expected value of all actions. • This information is used to calculate the training target for Q. • SELECT performs action selection based on the input from RL-CORE. It may also have other inputs that determine the selected action, or use other heuristics. • It is also possible for the selected action to be entirely independent of RL-CORE, in which case RL-CORE learns from the actions performed by some other subsystem.
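The division of labour between Q, RL-CORE and SELECT can be sketched roughly as follows. This is a minimal illustration, not the Ikaros implementation: the names `TableQ`, `rl_core_target`, `select_greedy` and `step` are hypothetical, the Q module is a plain lookup table, the target is assumed to be the standard Q-learning target, and the connection delays are reduced to passing the previous state and action explicitly.

```python
GAMMA = 0.9  # discount value quoted for the simulations


class TableQ:
    """Hypothetical tabular stand-in for the Q module (any estimator could be used)."""

    def __init__(self, n_actions, initial_value=0.1):
        self.n_actions = n_actions
        self.initial_value = initial_value
        self.table = {}

    def values(self, state):
        # "Testing" pathway: expected value of every action in the given state.
        return self.table.setdefault(state, [self.initial_value] * self.n_actions)

    def train(self, state, action, target, learning_rate=0.2):
        # "Training" pathway: move the stored value towards the target.
        q = self.values(state)
        q[action] += learning_rate * (target - q[action])


def rl_core_target(reward, next_values, gamma=GAMMA):
    """Assumed RL-CORE computation: the standard Q-learning target."""
    return reward + gamma * max(next_values)


def select_greedy(values):
    """Simplest possible SELECT: pick the highest-valued action."""
    return max(range(len(values)), key=lambda a: values[a])


def step(q, prev_state, prev_action, reward, state):
    """One pass through the three modules for a single time step."""
    values = list(q.values(state))            # Q evaluates the current state
    target = rl_core_target(reward, values)   # RL-CORE builds the training target
    q.train(prev_state, prev_action, target)  # Q is trained on the delayed state
    return select_greedy(values)              # SELECT chooses the next action


q_module = TableQ(n_actions=2)
next_action = step(q_module, prev_state="s0", prev_action=1, reward=1.0, state="s1")
```

Because `step` only talks to the Q module through `values` and `train`, the table could be swapped for another estimator without touching the other two functions, which is the kind of exchangeability the slides describe.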

Simulations • Two algorithms were simulated: basic Q-learning and ContextQ. • The learning task was a simple 2D maze. • This was chosen because a standard reinforcement learning example has a multi-dimensional state space, which makes the problem hard to visualize. • A 2D maze is much easier to explain and visualize while still allowing the necessary simulations of the algorithms to be carried out. Each state can be represented as a physical location.

Simulations • When the state space is described as a 2D maze, the goal can be expressed as finding a path from the beginning to the end, with no initial knowledge of the state space or the layout of the maze itself. • The agent therefore has to search the maze in order to find a path to the end. • The paper describes a set of mazes, referred to as “17T4U”, that were chosen to show the strengths and weaknesses of ContextQ. • The algorithm was also tested on a large maze with many repeated structures, where the benefits of generalization were likely to be seen more clearly.

Simulations • The parameters for the Q-based learning model were set as follows: • Learning rate set to 0.2 • Discount set to 0.9 • Initial weights set to 0.1 • Epsilon-greedy was used for action selection with epsilon set to 0.05
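As a rough illustration of how these parameter values fit together, the sketch below pairs epsilon-greedy selection with the usual one-step Q-learning update. The function names `epsilon_greedy` and `q_update` are hypothetical, and the update rule is the textbook one rather than code taken from the paper.

```python
import random

LEARNING_RATE = 0.2
DISCOUNT = 0.9
EPSILON = 0.05


def epsilon_greedy(q_values, epsilon=EPSILON, rng=random):
    """With probability epsilon explore at random, otherwise take the best action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def q_update(q_sa, reward, next_q_values, alpha=LEARNING_RATE, gamma=DISCOUNT):
    """Textbook Q-learning update for a single state-action value."""
    target = reward + gamma * max(next_q_values)
    return q_sa + alpha * (target - q_sa)


# With all values initialised to 0.1 and no reward, a value shrinks slightly,
# because the discounted estimate of the next state (0.09) is below 0.1.
updated = q_update(0.1, reward=0.0, next_q_values=[0.1, 0.1, 0.1, 0.1])
```

With epsilon at 0.05 the agent takes a random action on roughly one step in twenty and follows its current estimates otherwise.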

Simulations • The parameters for ContextQ were as follows: • The learning rates were: alpha+ and beta+ set to 0.1, alpha- set to 0.0 and beta- set to 0.7. • Discount set to 0.9. • Initial weights all set to 0.1. • Boltzmann selection was used for action selection with a temperature that gradually decreased from 0.05 to 0.005.
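Boltzmann selection with a falling temperature could look something like the sketch below. The slides only say the temperature "gradually decreased", so the linear schedule here is an assumption, as are the function names `temperature`, `boltzmann_probs` and `boltzmann_select`.

```python
import math
import random


def temperature(trial, n_trials, start=0.05, end=0.005):
    """Assumed linear schedule from `start` to `end` over the trials."""
    if n_trials <= 1:
        return end
    fraction = min(max(trial / (n_trials - 1), 0.0), 1.0)
    return start + fraction * (end - start)


def boltzmann_probs(q_values, temp):
    """Softmax over action values; subtracting the max keeps exp() stable."""
    top = max(q_values)
    weights = [math.exp((q - top) / temp) for q in q_values]
    total = sum(weights)
    return [w / total for w in weights]


def boltzmann_select(q_values, temp, rng=random):
    """Sample an action index in proportion to its Boltzmann probability."""
    probs = boltzmann_probs(q_values, temp)
    return rng.choices(range(len(q_values)), weights=probs)[0]


early = boltzmann_probs([0.1, 0.2], temperature(0, 60))   # more exploratory
late = boltzmann_probs([0.1, 0.2], temperature(59, 60))   # close to greedy
```

At these low temperatures even a small difference in value translates into a strong preference, and the preference sharpens further as the temperature drops.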

Simulations • The algorithm must generalize between similar states and it is therefore necessary that the state coding reflects the similarity. • Each state was coded as a vector of 18 elements, where each element codes for the absence or presence of free space at each of nine locations around the current position of the agent. • A 1 was used to indicate free space, and a 0 was used to indicate a wall for the first 9 elements. The following elements contained the same information inverted. • The current location was always coded as a 0, and was used as contextual input.
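One way to read this coding scheme is sketched below. Several details are assumptions rather than facts from the slides: the nine locations are taken to be the 3x3 neighbourhood centred on the agent, read row by row, walls are marked with `#` in a text maze, and the inverted half is computed as 1 minus each element (so the centre becomes 1 there). The function name `encode_state` is hypothetical.

```python
def encode_state(maze, row, col):
    """Return an 18-element code for the 3x3 neighbourhood of (row, col)."""
    first_half = []
    for d_row in (-1, 0, 1):
        for d_col in (-1, 0, 1):
            if d_row == 0 and d_col == 0:
                first_half.append(0)  # the current location is always coded as 0
                continue
            r, c = row + d_row, col + d_col
            inside = 0 <= r < len(maze) and 0 <= c < len(maze[r])
            is_free = inside and maze[r][c] != "#"
            first_half.append(1 if is_free else 0)
    # Second half: the same information inverted (assumed to be 1 - value).
    return first_half + [1 - v for v in first_half]


CORRIDOR = [
    "#######",
    "#S...G#",
    "#######",
]

middle = encode_state(CORRIDOR, 1, 3)
left_of_middle = encode_state(CORRIDOR, 1, 2)
start_cell = encode_state(CORRIDOR, 1, 1)
```

Under these assumptions every interior cell of a straight corridor gets the same code, while the start cell, which has a wall on its left, gets a different one. Identical codes are what allow a learner to treat those locations as similar.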

Simulations • This coding is consistent with the idea that exceptions should be learned about particular locations in the mazes. • Each maze was tested 60 times for each of the models. • The average number of extra steps needed to reach the goal was recorded for each trial. • Note that extra steps are steps beyond the shortest path. For example, if the goal can be reached in 10 steps from the start and 12 were taken, that is 2 extra steps. • Only 30 runs were used on the very large maze.
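The performance measure is simple arithmetic, sketched here with hypothetical function names and made-up step counts purely for illustration.

```python
from statistics import mean


def extra_steps(steps_taken, shortest_path_length):
    """Steps taken beyond the shortest possible path."""
    return steps_taken - shortest_path_length


def average_extra_steps(steps_per_run, shortest_path_length):
    """Average the extra steps for one trial across independent runs."""
    return mean(extra_steps(s, shortest_path_length) for s in steps_per_run)


# The example from the slide: 12 steps taken when 10 would have sufficed.
example = extra_steps(12, 10)

# Illustrative (made-up) step counts for the same trial in three runs.
trial_average = average_extra_steps([10, 12, 14], shortest_path_length=10)
```

A value of 0 therefore means the agent followed a shortest path on that trial.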

Results • The 1-maze • Straight corridor from start to goal. • The maze demonstrates the power of generalization in the ContextQ algorithm. • On the first trial, the agent performs a random walk. • Once the agent has been rewarded, the action of moving nearer the goal is generalized to all locations of the corridor. • As a result, the agent performs perfectly from the second trial onwards. • Tabular Q-learning did not perform as well. • It only slowly learns to take the same action (choice of movement direction) at each location.
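The difference from a table can be illustrated with a plain linear value estimate over the 18-element state code. This is not the ContextQ network, only a sketch of why shared features generalize: the feature vector below is what two interior corridor cells would look like under one reading of the coding scheme, and the learning rate of 0.05 is chosen for the illustration rather than taken from the paper.

```python
ACTIONS = ("up", "right", "down", "left")

# Assumed code for an interior cell of a horizontal corridor: free space to
# the left and right only, followed by the inverted copy of the same nine bits.
INTERIOR = [0, 0, 0, 1, 0, 1, 0, 0, 0,
            1, 1, 1, 0, 1, 0, 1, 1, 1]


def q_value(weights, features, action):
    """Linear estimate: dot product of the action's weights and the features."""
    return sum(w * x for w, x in zip(weights[action], features))


def update(weights, features, action, target, learning_rate=0.05):
    """Gradient step on the squared error for one action's weights."""
    error = target - q_value(weights, features, action)
    for i, x in enumerate(features):
        weights[action][i] += learning_rate * error * x


weights = {a: [0.1] * len(INTERIOR) for a in ACTIONS}
cell_a = list(INTERIOR)  # the cell where the agent was rewarded for moving right
cell_b = list(INTERIOR)  # a different cell that looks exactly the same

before = q_value(weights, cell_b, "right")
update(weights, cell_a, "right", target=1.0)
after = q_value(weights, cell_b, "right")
```

A single update made at one cell changes the estimate at every cell with the same code, whereas a lookup table keyed by location would need a separate update for each cell.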

Results • The 7-maze • To solve this maze, the agent needs to move to the corner and then up to the goal. • To Q-learning, this maze is not much different from the first. • To ContextQ, the situation is entirely different. • The first trial is again random until the agent is rewarded and learns to move upwards. • During the second trial, this action is incorrectly generalized to the horizontal corridor, where it goes unrewarded and is extinguished. • Once this generalization is completely inhibited, the agent moves towards the vertical arm, where the generalization is still valid. • The agent then moves directly to the goal through the vertical part of the maze. • In the end, the action of moving to the right has been generalized to all locations in the horizontal part and the action of moving upward has been generalized to all locations in the vertical part.
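The interplay between a generalized value and context-specific inhibition can be mimicked with a toy model. It is emphatically not the ContextQ update rule, whose equations are not given in these slides: the class `ToyContextValue`, its two learning rates and the context labels are all hypothetical, chosen only to show how inhibition in one context can leave the shared value intact.

```python
from collections import defaultdict


class ToyContextValue:
    """Toy model: a shared action value minus a per-context inhibition term."""

    def __init__(self, lr_gain=0.1, lr_inhibit=0.7):
        self.lr_gain = lr_gain
        self.lr_inhibit = lr_inhibit
        self.shared = defaultdict(float)      # generalizes across all contexts
        self.inhibition = defaultdict(float)  # specific to (context, action)

    def value(self, action, context):
        return self.shared[action] - self.inhibition[(context, action)]

    def update(self, action, context, target):
        error = target - self.value(action, context)
        if error > 0:
            # Better than expected: strengthen the shared, generalized value.
            self.shared[action] += self.lr_gain * error
        else:
            # Worse than expected: inhibit the action in this context only.
            self.inhibition[(context, action)] += self.lr_inhibit * -error


model = ToyContextValue()
for _ in range(50):  # moving up is rewarded in the vertical arm
    model.update("up", "vertical_arm", target=1.0)
value_vertical = model.value("up", "vertical_arm")

for _ in range(20):  # moving up goes unrewarded in the horizontal arm
    model.update("up", "horizontal_arm", target=0.0)
```

After the second loop the value of moving up is close to zero in the horizontal arm but unchanged in the vertical arm, mirroring the behaviour described for the 7-maze.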

Results • The 4-maze • In the case of ContextQ, each time the agent reaches the goal, the action of moving right is reinforced. • This leads to an increasing tendency for the agent not to turn upward at the choice point, so it continues right to the dead end. • Since there is no reward at the end of the lower arm, the move right is gradually extinguished until the agent takes the upper path again. • However, once the agent reaches the goal and is rewarded again, it will again choose to move right at the choice point. • This maze is therefore very hard for ContextQ to solve, but ContextQ is still slightly faster than Q-learning on it. • The disadvantage of the continuously repeated incorrect generalization is smaller than the gain from the correct generalizations along the corridors.

Results • The U-maze • The U-maze was designed as a more realistic example with a number of dead ends where ContextQ could get stuck. Even so, ContextQ learns the maze in approximately the same time as tabular Q-learning.

Results • The large maze • The difference between ContextQ and tabular Q-learning is most clearly seen in the large maze. • The behaviour of ContextQ converges to optimal behaviour in very few trials, while tabular Q-learning shows no tendency to converge even after 400,000 steps. • Unlike the previous mazes, the large maze contains plenty of opportunities for successful generalization, and this is what gives ContextQ a great advantage in this maze.

Observations • What are the implications of the algorithm described above for epigenetic robotics? • A robot that needs to develop autonomously will have to learn a large number of behaviours in different situations. • Such learning can be made much faster if the robot generalizes from previous instances during learning. The interplay between generalization based on the current sensory information coded in the state and specialization based on contextual inhibition has a number of advantages.

Observations • Generalization is maximal. • Contextual inhibition makes it possible to unlearn an inappropriate behaviour in a particular situation without extinguishing what was already learned. • Although ContextQ is at heart a reinforcement learning algorithm, it generates efficient search paths through the state space in the case of the mazes tested in the paper. • The ContextQ algorithm performed better than tabular Q-learning in some simulations with narrow corridors, which shows that the ability to generalize can be used to great advantage. • This supports the view that it is efficient to generalize maximally from previous experiences and then gradually specialize in specific contexts.