Data Compression I

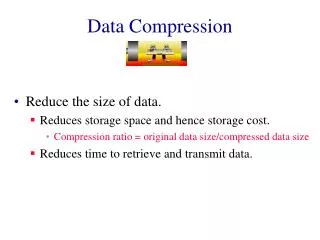

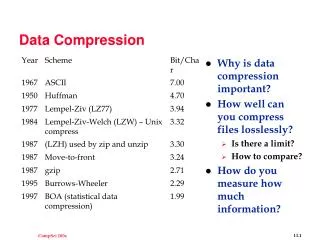

Data Compression I. Storage Space. Uncompressed graphics, audio and video use considerable space. Too much space even for today's CD and DVD technologies. The most popular compression methods are JPEG (images), MPEG (sound/video) and proprietary techniques from Microsoft and Apple.

Data Compression I

E N D

Presentation Transcript

Storage Space • Uncompressed graphics, audio and video use considerable space. Too much space even for today's CD and DVD technologies. • The most popular compression methods are JPEG (images), MPEG (sound/video) and proprietary techniques from Microsoft and Apple.

Coding Requirements • Images have higher storage requirements than text and video even more. Let's have a comparison for a 640x480 window. • Text: 2 bytes for each 8x8 pixel character. 640x480/8x8=4800 characters per page. 4800X2bytes=9600 or 9.4KByte per page.

Coding Requirements • Vector images: A typical 640x480 image has 500 lines approximately. Each line has 2 coordinates (x,y) each and an 8-bit attribute field. x requires 10 bits (log2(640)) and y requires 9 bits (log2(480)). • We need 46 bits per line (9+10+9+10+8). The storage required is 500x46/8=2875 or 2.8KByte per image.

Coding Requirements • Bitmap images: Individual pixels of a bitmap can be coded using 256 different colours, requiring 1 Byte per pixel. • 640x480x1Byte=307200 or 300KBytes per image.

Coding Requirements • Sound: Uncompressed speech of telephone quality is sampled at 8kHz and quantized using 8 bits per sample, yielding a data stream of 64 Kbits/sec. • Storage required will be (64Kbps/8)x(1sec/1024) = 8KByte for a 1 second sound.

Coding Requirements • Sound: Uncompressed stereo audio signal of CD quality is sampled at 44.1kHz and quantized using 16 bits per sample, yielding a data stream of 176,400 bytes/sec. • Storage required will be 172KByte for a 1 second sound.

Coding Requirements • Video: 25 full frames per second. Luminance and chrominance of each pixel are coded using a total of 3 bytes. • Data rate is 640x480x25x3bytes=23,040,000 bytes/sec. • Storage required will be 21.98MByte for 1 second of video.(Multiply by 5 for HDTV!)

Compression Requirements • The complexity of the technique should be minimal. • The time to decompress should be minimal (<150ms). • Fast forward and fast rewind should be available. • Random access to individual frames should be possible in less than half a second.

Compression Requirements • Decompression should be possible without interpreting all preceding data. • The technique should be independent of the frame size. • Synchronization must be possible. • Should be economical. • Should be portable.

Compression Requirements • Support for various audio and video rates. • Synchronization of audio-video streams (lip synchronization). • Compression in software implies cheaper, slower and low quality solution. • Compression in hardware implies expensive, faster and high quality solution.

Types of Compression • Entropy Coding: Lossless compression. Data prior to encoding is identical to data after encoding. • Source Coding: Takes into account the semantics of the information. Plays with frequencies and colours: Lossy compression. • Hybrid Coding: Most known techniques use a combination of the two kinds of coding.

Steps of Compression • This is the typical sequence of operations performed in the compression of still images and video and audio data streams.

Preparation (images) • Analog-to-digital conversion of the original. • Generation of the appropriate digital representation. • Image division into 8X8 blocks for example. • Fix the number of bits per pixel.

Processing (images) Why store individual pixel information for what is obviously the exact same shade of gray? • Transformation from time to frequency domain, like Discrete Cosine Transform (DCT). • The DCT helps separate the image into parts (or spectral sub-bands) of differing importance (with respect to the image's visual quality). • Motion vector computation for digital video. • Lossless process.

Quantization • Mapping real numbers to integers (reduction in precision). • Quantization techniques generally compress by compressing a range of values to a single quantum value. By reducing the number of discrete symbols in a given stream, the stream becomes more compressible. • Lossy process.

Entropy Coding • Starts with a sequential data stream of individual bits and bytes. • Different techniques are used to perform a final, lossless compression. • For example, frequently occurring long sequences of zeros can be compressed by specifying the number of occurrences followed by the zero itself.

Types of Compression • Symmetric Compression: Same time needed for decoding and encoding phases. Used for dialog mode applications • Asymmetric Compression: Compression process is performed once and enough time is available, hence compression can take longer. Decompression is performed frequently and must be done fast. Used for retrieval mode applications.

Iterative Processes • Processing and quantization steps can be repeated iteratively, such as in the case of Adaptive Differential Pulse Code Modulation (ADPCM). There can either be feedback (like delta modulation) or multiple techniques can be applied to the data one after the other (like interframe and intraframe coding in MPEG).

Basic Compression Techniques • Hybrid compression techniques are composed of several other techniques. • Simplest techniques are based on interpolation that uses the properties of the human eye or ear.

Run-Length Encoding • Content dependent coding. • Replaces the sequence of same consecutive bytes with the number of occurrences. • The number of occurrences is indicated by a special flag - “!”.

RLE Algorithm • If the same byte occurred at least 4 times then count the number of occurrences. • Write compressed data in the following format: “number of occurrences!the counted byte”.

RLE Algorithm • Example: • Uncompressed sequence - ABCCCCCCCCCDEFFFFGGG • Compressed sequence - • AB9!CDE4!FGGG (from 20 to 13 bytes)

Zero Suppression • Used to encode long binary bit strings containing mostly zeros. Each k-bit symbol tells how many 0’s occurred between consecutive 1’s. • Ex: 0000000 - 7 zeros to be encoded. • 111 (3 bit symbol) • Ex: 000100000001101 (using 3 bit symbol) • 011 111 000 001 (3-7-0-1 zeros between 1s)

Text Compression • Patterns that occur frequently can be substituted by single bytes. Like “begin”, “end”, “if”… • Algorithm: • Use an ESC byte to indicate that an encoded pattern will follow. The next byte is an index reference to one of 256 words (patterns). • Can be applied to still images, audio, video.

Diatomic Coding • Determined frequently occurring pairs of bytes. • Analysis of the English language yielded frequently used pairs: “re” “in” “th” “he”... • Replace these pairs by single bytes that do not occur anywhere in the text (like 'X'). • Reduction of more than 10% is possible.

Statistical Coding • Fixed length coding • Use equal number of bits to represent each symbol. A message of N symbols requires L >= log2(N) bits per symbol. • Good encoding for symbols with equal probability of occurrence. Not efficient if probability of each symbol is not equal.

Statistical Coding • Variable length encoding • Frequently occurring characters represented with shorter strings than seldom occurring characters. • Statistical encoding is dependent on the frequency of occurrence of a character or a sequence of data bytes.

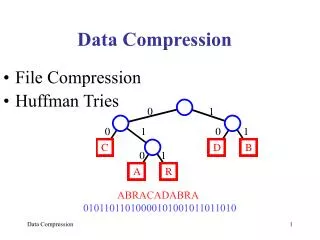

Huffman Coding • Characters are stored with their probabilities. • Number of bits of the coded characters differs. Shortest code is assigned to most frequently occurring character. • To determine Huffman code, we construct a binary tree.

Huffman Coding • Leaves are characters to be encoded. • Nodes contain occurrence probabilities of the characters belonging to the subtree. • 0 and 1 are assigned to the branches of the tree arbitrarily - therefore different Huffman codes are possible for the same data. • Huffman table is generated. • Huffman tables must be transmitted with compressed data.

Arithmetic Coding • Method for lossless data compression. Normally, a string of characters such as the words "hello there" is represented using a fixed number of bits per character, as in the ASCII code. Like Huffman coding, arithmetic coding is a form of variable-length entropy encoding that converts a string into another representation that represents frequently used characters using fewer bits and infrequently used characters using more bits, with the goal of using fewer bits in total.

Arithmetic Coding • As opposed to other entropy encoding techniques that separate the input message into its component symbols and replace each symbol with a code word, arithmetic coding encodes the entire message into a single number, a fraction n where (0.0 ≤ n < 1.0).

Differential Encoding • Consider sequences of symbols S1, S2, S3 etc. where values are not zeros but do not vary very much. • We calculate difference from previous value : S1, S2-S1, S3-S2 etc. • Differential Coding is lossy.

Differential Encoding • For still images: • Calculate difference between nearby pixels or pixel groups. • Edges characterized by large values, areas with similar luminance and chrominance are characterized by small values. • Zeros can be compressed by run-length encoding and nearby pixels with large values can be encoded as differences.

Differential Encoding • For videos: • In a newscast or video phone, the background does not change often, hence we can use run-length encoding to compress the background. • In movies, the background changes - use motion compensation. • Compare blocks of 8X8 or 16x16 in subsequent pictures. • Find areas that are similar, but shifted to the left or right. • Encode motion using a “motion vector”.

Differential Encoding (Sound) • Differential Pulse Code Modulation (DPCM). • When we use PCM, we get a sequence of PCM coded samples. • Represent first PCM sample as a whole and all the following samples as differences from the previous one.

JPEG • JPEG implementation is independent of image size and applicable to any image and pixel aspect ratio. • Image content may be of any complexity. • JPEG achieves very good compression ratio and good quality image.

JPEG • From the processing complexity of a software solution point of view: JPEG should run on as many available platforms as possible. • Sequential decoding (line-by-line) and progressive decoding (refinement of the whole image) should be possible.

JPEG Modes • Lossy Sequential DCT (Discrete Cosine Transform) based mode: Baseline process that must be supported by every JPEG implementation. • Lossless mode: Low compression ratio allows perfect reconstruction of original image. • Hierarchical mode: Accommodates images of different resolutions.

Steps of JPEG Compression • Image preparation, Picture processing, Quantization and Entropy coding.

Picture Preparation • First the compression algorithm cuts up the image in separate blocks of 8×8 pixels. • The compression algorithm is calculated for each separate block, which explains why these blocks or groups of blocks become visible when too much compression is applied.

Picture Preparation • Each image consists of components or planes. There is at least one plane and a maximum of 255. The planes can be assigned to RGB colours or YIQ or YUV signals. YUV and YIQ are preferred because luminance is more important than chrominance for human vision. • Each plane is an array of X*Y pixels.

Picture Preparation • A grey scale image has one plane, a RGB colour image three planes with the same resolution. (Y1=Y2=Y3 and X1=X2=X3) • A JPEG image using YUV or YIQ has Y1=4*Y2=4*Y3 and X1=4*X2=4*X3 (4:1:1). The 4:2:2 ratio is now the most popular ratio. • Each pixel is represented by a certain number of bits. All pixels of all components must have the same number of bits (2-12 bits).

Picture Preparation The 4:4:4 ratio

Picture Preparation The 4:2:2 ratio • X1=2X2=2X3 • Y1=Y2=Y3

Picture Preparation (DCT) • Xmax = max(Xi) • Ymax = max(Yi) Xi = Xmax * Hi / Hmax Yi = Ymax * Vi / Vmax • Hi and Vi are integers between 1 and 4. • The color values are changed by coefficients that are relative to the average of the entire matrix that is being analyzed.

DCT-Based Mode • Coefficient values are then shifted to central values (-128 to 127). • Formula is applied 64 times per unit.

DCT-Based Mode • Forward Discrete Cosine Transform. • x and y range from 0 to 7 (8x8 pixels). • 64 Svu per unit. • S00 is the base color (DC), the rest are called AC.