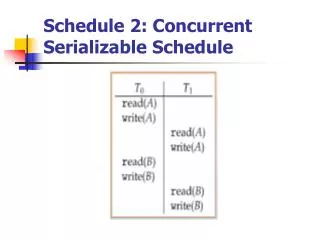

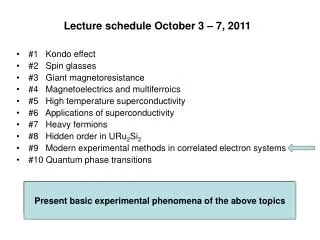

Schedule 2: Concurrent Serializable Schedule


Timestamp-based Protocols • Select an order among transactions in advance (timestamp ordering) • Each transaction Ti is assigned a timestamp TS(Ti) before Ti starts • TS(Ti) < TS(Tj) if Ti entered the system before Tj • TS can be generated from the system clock or from a logical counter incremented each time a transaction enters

Timestamp-based Protocols • Timestamps determine the serializability order • If TS(Ti) < TS(Tj), the system must ensure that the produced schedule is equivalent to a serial schedule in which Ti appears before Tj

Timestamp-based Protocols • Each data item Q gets two timestamps • W-timestamp(Q): largest timestamp of any transaction that executed write(Q) successfully • R-timestamp(Q): largest timestamp of any transaction that executed read(Q) successfully • Both are updated whenever a read(Q) or write(Q) is executed

Timestamp-based Protocols • Suppose Ti executes read(Q) • If TS(Ti) < W-timestamp(Q), Ti needs to read a value of Q that was already overwritten: the read is rejected and Ti is rolled back • If TS(Ti) ≥ W-timestamp(Q), the read is executed and R-timestamp(Q) is set to max(R-timestamp(Q), TS(Ti))

Timestamp-based Protocols • Suppose Ti executes write(Q) • If TS(Ti) < R-timestamp(Q), the value of Q that Ti is producing was needed previously, and the system assumed it would never be produced: the write is rejected and Ti is rolled back • If TS(Ti) < W-timestamp(Q), Ti is attempting to write an obsolete value of Q: the write is rejected and Ti is rolled back • Otherwise, the write is executed and W-timestamp(Q) is set to TS(Ti)

Timestamp-based Protocols • Any rolled-back transaction Ti is assigned a new timestamp and restarted • The algorithm ensures conflict serializability and freedom from deadlock
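The read and write rules above can be sketched in C as two checks against the timestamps stored with a data item. This is a minimal illustration, not a full concurrency-control implementation: the `DataItem` struct and the function names `ts_read` and `ts_write` are hypothetical, and rollback is reduced to returning `false` so the caller can restart the transaction with a new timestamp.

```c
#include <stdbool.h>

/* Hypothetical record for one data item Q; field names are illustrative. */
typedef struct {
    int  value;
    long r_ts;   /* R-timestamp(Q) */
    long w_ts;   /* W-timestamp(Q) */
} DataItem;

/* read(Q) issued by a transaction with timestamp ts.
   Returns false when the read is rejected (the caller must roll back). */
bool ts_read(DataItem *q, long ts, int *out)
{
    if (ts < q->w_ts)
        return false;          /* the value Ti needs was already overwritten */
    *out = q->value;
    if (ts > q->r_ts)
        q->r_ts = ts;          /* R-timestamp(Q) = max(R-timestamp(Q), TS(Ti)) */
    return true;
}

/* write(Q) issued by a transaction with timestamp ts.
   Returns false when the write is rejected (the caller must roll back). */
bool ts_write(DataItem *q, long ts, int value)
{
    if (ts < q->r_ts)
        return false;          /* a younger transaction already read Q */
    if (ts < q->w_ts)
        return false;          /* Ti would write an obsolete value */
    q->value = value;
    q->w_ts  = ts;             /* record the successful write */
    return true;
}
```

For example, after a transaction with timestamp 5 writes Q, a read by a transaction with timestamp 3 is rejected, while a read by a transaction with timestamp 7 succeeds and raises R-timestamp(Q) to 7.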

Exam 1 Review Bernard Chen Spring 2007

Chapter 1 Introduction • Chapter 3 Processes

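The Process • Process: a program in execution • Text section: program code • Program counter (PC): address of the next instruction to execute • Stack: temporary data such as function parameters, return addresses and local variables • Data section: global variables • Heap: memory dynamically allocated at run time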

Stack and Queue • Stack: First in, last out • Queue: First in, first out • Do: push(8) push(17) push(41) pop() push(23) push(66) pop() pop() pop()
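The exercise above can be traced with a small array-based stack. This is a sketch only: the `Stack` type, the `CAPACITY` constant and the helper `run_slide_sequence` are illustrative names, and the code does no overflow or underflow checking.

```c
#define CAPACITY 16

typedef struct {
    int data[CAPACITY];
    int top;              /* number of items currently stored */
} Stack;

void stack_init(Stack *s)  { s->top = 0; }
void push(Stack *s, int x) { s->data[s->top++] = x; }      /* no overflow check */
int  pop(Stack *s)         { return s->data[--s->top]; }   /* no underflow check */

/* Runs the sequence from the slide and stores the four popped values. */
void run_slide_sequence(Stack *s, int popped[4])
{
    stack_init(s);
    push(s, 8); push(s, 17); push(s, 41);
    popped[0] = pop(s);            /* 41: last in, first out */
    push(s, 23); push(s, 66);
    popped[1] = pop(s);            /* 66 */
    popped[2] = pop(s);            /* 23 */
    popped[3] = pop(s);            /* 17 */
}
```

The stack returns 41, 66, 23, 17 and is left holding 8. If the same operations were applied to a queue (enqueue for push, dequeue for pop), the values would come out as 8, 17, 41, 23, leaving 66.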

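Heap (Max Heap) • The maximum is always at the root, so finding it takes O(1); inserting an item or removing the maximum takes O(log N) • Example in level order: 97 53 59 26 41 58 31 16 21 36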
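A minimal array-based max heap makes the costs concrete: reading the maximum is a single array access, while insertion and removal walk one root-to-leaf path. The function names below are illustrative, the array is assumed to be stored in level order (children of index i at 2i+1 and 2i+2), and the caller is assumed to provide enough capacity.

```c
#include <stddef.h>

static void swap_int(int *a, int *b) { int t = *a; *a = *b; *b = t; }

/* O(1): the maximum of a non-empty max heap is its root. */
int heap_max(const int *h) { return h[0]; }

/* O(log N): remove the root, move the last item to the top, sift it down. */
int heap_remove_max(int *h, size_t *n)
{
    int max = h[0];
    h[0] = h[--(*n)];
    size_t i = 0;
    for (;;) {
        size_t l = 2 * i + 1, r = 2 * i + 2, big = i;
        if (l < *n && h[l] > h[big]) big = l;
        if (r < *n && h[r] > h[big]) big = r;
        if (big == i) break;           /* heap property restored */
        swap_int(&h[i], &h[big]);
        i = big;
    }
    return max;
}

/* O(log N): append the item, then sift it up past smaller parents. */
void heap_insert(int *h, size_t *n, int x)
{
    size_t i = (*n)++;
    h[i] = x;
    while (i > 0 && h[(i - 1) / 2] < h[i]) {
        swap_int(&h[(i - 1) / 2], &h[i]);
        i = (i - 1) / 2;
    }
}
```

Applied to the ten values on the slide, removing the maximum returns 97 and leaves 59 at the root.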

The Process • A program by itself is not a process; it is a passive entity • A program becomes a process when an executable (.exe) file is loaded into memory • Process: a program in execution

Schedulers • Short-term scheduler is invoked very frequently (milliseconds) ⇒(must be fast) • Long-term scheduler is invoked very infrequently (seconds, minutes) ⇒(may be slow) • The long-term scheduler controls the degree of multiprogramming

Schedulers • Processes can be described as either: • I/O-bound process: spends more time doing I/O than computation; many short CPU bursts • CPU-bound process: spends more time doing computation; few very long CPU bursts

Schedulers • On some systems, the long-term scheduler may be absent or minimal • Every new process is simply put in memory for the short-term scheduler • Stability then depends on a physical limitation or on the self-adjusting nature of human users

Schedulers • Sometimes it can be advantageous to remove a process from memory and thus decrease the degree of multiprogramming • This scheme is called swapping

Interprocess Communication (IPC) • Two fundamental models • Shared Memory • Message Passing

Shared Memory Parallelization System Example • m_set_procs(number): prepare number child processes for execution • m_fork(function): the child processes execute function • m_kill_procs(): terminate the child processes

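Real Example

    #include <stdio.h>
    /* the header that declares the m_* routines is system-specific
       and is not shown on the slide */

    void slaveproc(void);

    int main(int argc, char *argv[])
    {
        int nprocs = 9;
        m_set_procs(nprocs);  /* prepare to launch this many processes */
        m_fork(slaveproc);    /* fork out processes */
        m_kill_procs();       /* kill activated processes */
        return 0;
    }

    void slaveproc(void)
    {
        int id;
        id = m_get_myid();
        m_lock();             /* keep each process's two lines together */
        printf(" Hello world from process %d\n", id);
        printf(" 2nd line: Hello world from process %d\n", id);
        m_unlock();
    }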

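Real Example

    #define ARRAY_SIZE 1000

    int global_array[ARRAY_SIZE];
    int nprocs = 4;           /* global so that sum() can see it */

    void sum(void);

    int main(int argc, char *argv[])
    {
        m_set_procs(nprocs);  /* prepare to launch this many processes */
        m_fork(sum);          /* fork out processes */
        m_kill_procs();       /* kill activated processes */
        return 0;
    }

    void sum(void)
    {
        int id = m_get_myid();
        int chunk = ARRAY_SIZE / nprocs;
        int start = id * chunk;
        int i;
        /* accumulate this process's chunk into its first element;
           start at start + 1 so that element is not added to itself */
        for (i = start + 1; i < start + chunk; i++)
            global_array[start] += global_array[i];
    }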

Shared-Memory Systems • Unbounded buffer: the consumer may have to wait for new items, but the producer can always produce new items • Bounded buffer: the consumer has to wait if the buffer is empty, and the producer has to wait if the buffer is full

Bounded Buffer

    #define BUFFER_SIZE 6

    typedef struct {
        . . .
    } item;

    item buffer[BUFFER_SIZE];
    int in = 0;
    int out = 0;

Bounded Buffer (Producer view)

    item next_produced;

    while (true) {
        /* produce an item in next_produced */
        while (((in + 1) % BUFFER_SIZE) == out)
            ; /* do nothing -- no free buffers */
        buffer[in] = next_produced;
        in = (in + 1) % BUFFER_SIZE;
    }

Bounded Buffer (Consumer view)

    item next_consumed;

    while (true) {
        while (in == out)
            ; /* do nothing -- nothing to consume */
        /* remove an item from the buffer */
        next_consumed = buffer[out];
        out = (out + 1) % BUFFER_SIZE;
        /* consume the item in next_consumed */
    }
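The full and empty tests used by the producer and consumer can be checked in isolation with a single-threaded sketch. The `Ring` type and the functions `ring_put` and `ring_get` are hypothetical names, and instead of busy-waiting they return `false` where the slide code would spin. Items are plain `int` values here for simplicity.

```c
#include <stdbool.h>

#define BUFFER_SIZE 6

typedef struct {
    int slots[BUFFER_SIZE];
    int in;    /* next free slot */
    int out;   /* next full slot */
} Ring;

/* Returns false where the producer would have to wait (buffer full). */
bool ring_put(Ring *r, int x)
{
    if ((r->in + 1) % BUFFER_SIZE == r->out)
        return false;
    r->slots[r->in] = x;
    r->in = (r->in + 1) % BUFFER_SIZE;
    return true;
}

/* Returns false where the consumer would have to wait (buffer empty). */
bool ring_get(Ring *r, int *x)
{
    if (r->in == r->out)
        return false;
    *x = r->slots[r->out];
    r->out = (r->out + 1) % BUFFER_SIZE;
    return true;
}
```

Because `in == out` is reserved to mean "empty", one slot always stays unused: with `BUFFER_SIZE` set to 6, the sixth consecutive `ring_put` fails and the buffer holds at most 5 items.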

Message-Passing Systems • A message passing facility provides at least two operations: send(message),receive(message)

Message-Passing Systems • If two processes want to communicate, a communication link must exist between them • The link can be implemented in several ways: • Direct or indirect communication • Synchronous or asynchronous communication • Automatic or explicit buffering

Message-Passing Systems • Direct communication • send(P, message): send a message to process P • receive(Q, message): receive a message from process Q • Properties: • A link is established automatically • A link is associated with exactly two processes • Between each pair of processes there exists exactly one link

Message-Passing Systems • Indirect communication: messages are sent to and received from a mailbox • send(A, message): send a message to mailbox A • receive(A, message): receive a message from mailbox A

Message-Passing Systems • Properties: • A link is established only if both members of the pair have a shared mailbox • A link may be associated with more than two processes • Between each pair of processes there may be a number of different links, one per shared mailbox

Message-Passing Systems • Synchronization: message passing may be synchronous or asynchronous • Blocking is considered synchronous • Blocking send has the sender block until the message is received • Blocking receive has the receiver block until a message is available

Message-Passing Systems • Non-blocking is considered asynchronous • Non-blocking send has the sender send the message and continue • Non-blocking receive has the receiver receive a valid message or null

MPI Program example

    #include "mpi.h"
    #include <math.h>
    #include <stdio.h>
    #include <stdlib.h>

    int main(int argc, char *argv[])
    {
        int id;  /* Process rank */
        int p;   /* Number of processes */
        int i;
        int array_size = 100;  /* assumed to be divisible by p */
        int array[array_size]; /* or *array and then use malloc or vector to increase the size */
        int local_array[array_size]; /* only the first array_size/p entries are used */
        int sum = 0;
        int total = 0;
        MPI_Status stat;

        MPI_Init(&argc, &argv); /* must precede any other MPI call */
        MPI_Comm_rank(MPI_COMM_WORLD, &id);
        MPI_Comm_size(MPI_COMM_WORLD, &p);

MPI Program example

        if (id == 0) {
            for (i = 0; i < array_size; i++)
                array[i] = i;  /* initialize array */
            for (i = 1; i < p; i++)
                MPI_Send(&array[i*array_size/p], /* Start from */
                         array_size/p,           /* Message size */
                         MPI_INT,                /* Data type */
                         i,                      /* Send to which process */
                         0,                      /* Message tag, matches MPI_Recv */
                         MPI_COMM_WORLD);
            for (i = 0; i < array_size/p; i++)
                local_array[i] = array[i];
        } else
            MPI_Recv(&local_array[0], array_size/p, MPI_INT, 0, 0, MPI_COMM_WORLD, &stat);

MPI Program example

        for (i = 0; i < array_size/p; i++)
            sum += local_array[i];
        /* send and receive buffers must differ, so reduce into total */
        MPI_Reduce(&sum, &total, 1, MPI_INT, MPI_SUM, 0, MPI_COMM_WORLD);
        if (id == 0)
            printf("%d ", total);
        MPI_Finalize();
        return 0;
    }

Chapter 4 • A thread is a basic unit of CPU utilization • A traditional (single-threaded) process has a single thread of control • A multithreaded process can perform more than one task at a time • Example: a word processor may have one thread for displaying graphics, another for responding to keystrokes, and a third for performing spelling and grammar checking

Multithreading Models • Support for threads may be provided either at the user level, for user threads, or by the kernel, for kernel threads • User threads are supported above kernel and are managed without kernel support • Kernel threads are supported and managed directly by the operating system

Multithreading Models • Ultimately, there must exist a relationship between user threads and kernel threads • User-level threads are managed by a thread library, and the kernel is unaware of them • To run on a CPU, a user-level thread must be mapped to an associated kernel-level thread

Many-to-one Model (figure: many user threads mapped to one kernel thread)

Many-to-one Model • It maps many user-level threads to one kernel thread • Thread management is done by the thread library in user space, so it is efficient • But the entire process blocks if one thread makes a blocking system call • Also, because only one thread can access the kernel at a time, multiple threads are unable to run in parallel on multiprocessors

One-to-one Model (figure: each user thread mapped to its own kernel thread)

One-to-one Model • It provides more concurrency than the many-to-one model by allowing another thread to run when a thread makes a blocking system call • It allows multiple threads to run in parallel on multiprocessors • The only drawback is that creating a user thread requires creating the corresponding kernel thread • Most implementations restrict the number of threads a user can create

Many-to-many Model (figure: many user threads multiplexed onto several kernel threads)

Many-to-many Model • Multiplexes many user-level threads onto a smaller or equal number of kernel threads • Users can create as many threads as they want • When a thread makes a blocking system call, the kernel can schedule another thread for execution

Chapter 5 Outline • Basic Concepts • Scheduling Criteria • Scheduling Algorithms

CPU Scheduler • Whenever the CPU becomes idle, the OS must select one of the processes in the ready queue to be executed • The selection process is carried out by the short-term scheduler

Dispatcher • The dispatcher module gives control of the CPU to the process selected by the short-term scheduler • It should work as fast as possible, since it is invoked during every process switch • Dispatch latency: the time it takes for the dispatcher to stop one process and start another running

Scheduling Criteria • CPU utilization: keep the CPU as busy as possible (from 0% to 100%) • Throughput: number of processes that complete their execution per time unit • Turnaround time: amount of time to execute a particular process • Waiting time: amount of time a process has been waiting in the ready queue • Response time: amount of time from when a request was submitted until the first response is produced

Scheduling Algorithms • First Come First Serve Scheduling • Shortest Job First Scheduling • Priority Scheduling • Round-Robin Scheduling • Multilevel Queue Scheduling • Multilevel Feedback-Queue Scheduling
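To make the waiting-time and turnaround-time criteria concrete, the sketch below computes both for First Come First Serve scheduling. It assumes that every process arrives at time 0, that processes run to completion in array order, and that no time is lost to dispatching. The function names and the burst lengths in the usage note are illustrative and do not come from the slides.

```c
/* FCFS with all arrivals at time 0: each process waits for the total
   burst time of the processes ahead of it in the queue. */
void fcfs_metrics(const int burst[], int n, int waiting[], int turnaround[])
{
    int clock = 0;
    for (int i = 0; i < n; i++) {
        waiting[i] = clock;          /* time spent in the ready queue */
        clock += burst[i];           /* process i runs to completion */
        turnaround[i] = clock;       /* submission (time 0) to completion */
    }
}

/* Arithmetic mean of n values. */
double average(const int v[], int n)
{
    double total = 0.0;
    for (int i = 0; i < n; i++)
        total += v[i];
    return total / n;
}
```

With burst times 24, 3 and 3, the waiting times are 0, 24 and 27 (average 17) and the turnaround times are 24, 27 and 30 (average 27). Running the short jobs first would lower both averages, which is the motivation for Shortest Job First.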