Convergence of Sequential Monte Carlo Methods for Bayesian Inference

140 likes | 275 Views

This document explores the convergence of Sequential Monte Carlo (SMC) methods in Bayesian inference, illustrating the relationship between prediction and observation processes. It establishes a framework where the signal follows specific evolution equations, and observations adhere to Bayes' recursion. Key steps involve sequential importance sampling, empirical measure formulation, and Markov Chain Monte Carlo (MCMC) techniques for optimizing particle selection based on importance weights. The findings highlight the mean square error convergence under both general and restrictive conditions, aiming to enhance SMC method robustness.

Convergence of Sequential Monte Carlo Methods for Bayesian Inference

E N D

Presentation Transcript

Convergence of Sequential Monte Carlo Methods Dan Crisan, Arnaud Doucet

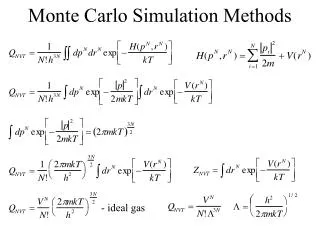

Problem Statement • X: signal, Y: observation process • X satisfies and evolves according to the following equation, • Y satisfies

Bayes’ recursion • Prediction • Updating

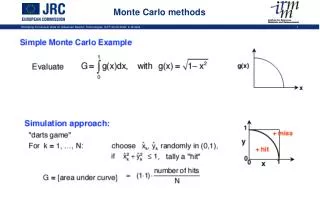

A Sequential Monte Carlo Methods • Empirical measure • Transition kernel • Importance distribution • : abs. continuous with respect to • : strictly positive Radon Nykodym derivative • Then is also continuous w.r.t. and

Algorithm • Step 1:Sequential importance sampling • sample: • evaluate normalized importance weights and let

Step 2: Selection step • multiply/discard particles with high/low importance weights to obtain N particles let assoc.empirical measure • Step 3: MCMC step • sample ,where K is a Markov kernel of invariant distribution and let

Convergence Study • denote • convergence to 0 of average mean square error under quite general conditions • Then prove (almost sure) convergence of toward under more restrictive conditions

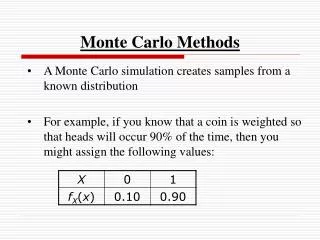

Bounds for mean square errors • Assumptions • 1.-A Importance distribution and weights • is assumed abs.continuous with respect to for all is a bounded function in argument define

There exists a constant s. t. for all there exists with s.t. • There exists s. t. and a constant s.t.

First Assumption ensures that • Importance function is chosen so that the corresponding importance weights are bounded above. • Sampling kernel and importance weights depend “ continuously” on the measure variable. • Second assumption ensures that • Selection scheme does not introduce too strong a “discrepancy”.

Lemma 1 • Let us assume that for any then after step 1, for any • Lemma 2 • Let us assume that for any then for any

Lemma 3 • Let us assume that for any then after step 2, for any • Lemma 4 • Let us assume that for any then for any

Theorem 1 • For all , there exists independent of s.t. for any