Evolving Insider Threat Detection

Evolving Insider Threat Detection. Pallabi Parveen; Dr. Bhavani Thuraisingham (Advisor). Dept. of Computer Science, University of Texas at Dallas. Funded by AFOSR. Outline: unsupervised learning and supervised learning.


Evolving Insider Threat Detection
Pallabi Parveen
Dr. Bhavani Thuraisingham (Advisor)
Dept. of Computer Science, University of Texas at Dallas
Funded by AFOSR

Outline
Evolving Insider Threat Detection
• Unsupervised learning
• Supervised learning

Evolving Insider Threat Detection
(Figure: system architecture. System logs and system traces pass through feature extraction and selection. Data gathered from week i is fed to the learning algorithm, which updates the ensemble of models (online learning, ensemble-based stream mining). Data from week i+1 is tested against the ensemble to decide whether instance j is an anomaly. Unsupervised: graph-based anomaly detection, GBAD. Supervised: one-class SVM, OCSVM.)

Insider Threat Detection Using Graph-Based Unsupervised Learning

Outline: Unsupervised Learning
• Insider Threat
• Related Work
• Proposed Method
• Experiments & Results

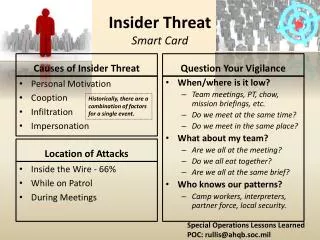

Definition of an Insider
An insider is someone who exploits, or intends to exploit, their legitimate access to assets for unauthorised purposes.

Insider Threat Is a Real Threat
Computer Crime and Security Survey 2001:
• $377 million in financial losses due to attacks
• 49% reported incidents of unauthorized network access by insiders

Insider Threat (continued)
Insider threat:
• Detection
• Prevention
Detection-based approach:
• Unsupervised learning: graph-based anomaly detection
• Ensemble-based stream mining

Related Work
• "Intrusion Detection Using Sequences of System Calls": supervised learning, by Hofmeyr et al.
• "Mining for Structural Anomalies in Graph-Based Data Representations (GBAD) for Insider Threat Detection": unsupervised learning, by W. Eberle and L. Holder
• All are static in nature and cannot learn from an evolving data stream

Why Unsupervised Learning?
• One approach to detecting insider threats is supervised learning, where models are built from labeled training data.
• Approximately 0.03% of the training data is associated with insider threats (the minority class).
• The remaining 99.97% of the training data is associated with non-threat activity (the majority class).
• Unsupervised learning is an alternative that does not depend on this heavily imbalanced labeling.
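
The imbalance figures above can be made concrete with a small calculation. The sketch below is illustrative only: the chunk size of 10,000 instances is an assumed number chosen so that 3 threats correspond to 0.03%. It shows that a classifier labeling everything as non-threat scores very high accuracy while detecting no threats at all.

```python
def trivial_classifier_metrics(total, threats):
    """Metrics for a classifier that labels every instance as non-threat."""
    true_negatives = total - threats      # every normal instance is "correct"
    accuracy = true_negatives / total
    recall = 0 / threats                  # no threat instance is ever flagged
    return accuracy, recall


# Assumed example size: 3 threats in 10,000 instances is 0.03%.
accuracy, recall = trivial_classifier_metrics(total=10_000, threats=3)
print(f"accuracy={accuracy:.4f}, threat recall={recall:.1f}")
```

High accuracy is therefore a poor indicator on this kind of data, which is part of the motivation for anomaly-based approaches.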

Why Stream Mining
Existing approaches are all static in nature and cannot learn from an evolving data stream.
(Figure: a data stream divided into data chunks, showing normal data, anomaly data, the previous and current decision boundaries, and the instances that fall victim to concept drift.)

Proposed Method
Graph-based anomaly detection (GBAD, unsupervised learning) [2] + ensemble-based stream mining

GBAD Approach
Determine the normative pattern S using the SUBDUE minimum description length (MDL) heuristic, which minimizes:
M(S,G) = DL(G|S) + DL(S)

Unsupervised Pattern Discovery
Graph compression and the minimum description length (MDL) principle:
• The best graphical pattern S minimizes the description length of S plus the description length of the graph G compressed with pattern S
• where the description length DL(S) is the minimum number of bits needed to represent S (SUBDUE)
• Compression can be based on inexact matches to the pattern
(Figure: a graph containing several instances of patterns S1 and S2.)
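
The selection rule M(S,G) = DL(G|S) + DL(S) can be sketched with a toy example. This is not SUBDUE's actual bit-level encoding: the `dl` function below is a hypothetical proxy that charges one unit per vertex and per edge, and the candidate patterns, instance counts, and graph size are made-up numbers. Compression is modeled by collapsing each non-overlapping pattern instance into a single vertex.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pattern:
    name: str
    vertices: int
    edges: int
    instances: int  # non-overlapping occurrences of the pattern in G


def dl(vertices, edges):
    # Hypothetical proxy for description length: one unit per vertex and edge.
    return vertices + edges


def mdl_score(p, g_vertices, g_edges):
    # DL(G|S): each instance collapses to one vertex and its edges disappear.
    compressed_v = g_vertices - p.instances * (p.vertices - 1)
    compressed_e = g_edges - p.instances * p.edges
    # M(S,G) = DL(G|S) + DL(S)
    return dl(compressed_v, compressed_e) + dl(p.vertices, p.edges)


# Made-up graph size and candidates, for illustration only.
G_VERTICES, G_EDGES = 30, 40
candidates = [Pattern("S1", 4, 4, 4), Pattern("S2", 2, 1, 3)]

best = min(candidates, key=lambda p: mdl_score(p, G_VERTICES, G_EDGES))
print(best.name, mdl_score(best, G_VERTICES, G_EDGES))
```

Under these assumptions S1 scores 50 and S2 scores 67, so S1 is chosen as the normative pattern because it compresses the graph more.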

Three Types of Anomalies
Three algorithms, one for each anomaly category, all using graph compression and the minimum description length (MDL) principle:
• GBAD-MDL finds anomalous modifications
• GBAD-P (Probability) finds anomalous insertions
• GBAD-MPS (Maximum Partial Substructure) finds anomalous deletions

(Figure: example of a graph with a normative structure and the different types of anomalies: GBAD-P (insertion), GBAD-MPS (deletion), GBAD-MDL (modification).)

Proposed Method
Graph-based anomaly detection (GBAD, unsupervised learning) + ensemble-based stream mining

Characteristics of a Data Stream
• Continuous flow of data
• Examples: network traffic, sensor data, call center records

Data Stream Classification
• Single-model incremental classification
• Ensemble-model-based classification
The ensemble-based approach is more effective than the incremental approach.

Ensemble of Classifiers
(Figure: an input (x, ?) is passed to classifiers C1, C2, and C3. Their individual outputs (+, +, -) are combined by voting into the ensemble output (+).)

Proposed Ensemble-Based Insider Threat Detection (EIT)
• Maintain K GBAD models
• q normative patterns
• Majority voting
• Updated ensembles
• Always maintain K models
• Drop the least accurate model

Ensemble-Based Classification of Data Streams (Unsupervised Learning, GBAD)
• Build a model (with q normative patterns) from each data chunk
• Keep the best K such models as the ensemble
• Example: for K = 3
(Figure: data chunks D1 to D6 and the models with normative patterns C1 to C5 built from them. The ensemble predicts on the testing chunk and is then updated.)

EIT-U pseudocode

Ensemble(Ensemble A, test graph t, chunk S)
LABEL/TEST THE NEW MODEL
  Compute new model with q normative substructures using GBAD from S
  Add new model to A
  For each model M in A
    For each class/normative substructure q in M
      Results1 ← Run GBAD-P with test graph t and q
      Results2 ← Run GBAD-MDL with test graph t and q
      Results3 ← Run GBAD-MPS with test graph t and q
      Anomalies ← ParseResults(Results1, Results2, Results3)
    End For
  End For
  For each anomaly N in Anomalies
    If more than half of the models agree
      Add N to AgreedAnomalies
      Add 1 to the incorrect values of the disagreeing models
      Add 1 to the correct values of the agreeing models
  End For
UPDATE THE ENSEMBLE
  Remove the model with the lowest correct/(correct + incorrect) ratio
End Ensemble
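
The voting and update steps of the pseudocode above can be sketched as follows. GBAD itself is not implemented here: each model's `detect` callable is a hypothetical stand-in for running GBAD-P, GBAD-MDL, and GBAD-MPS and parsing the results into a set of anomaly identifiers. The sketch also assumes that the correct/incorrect counters are updated only for anomalies that win the majority vote, and that a model with no votes yet has ratio 0; the slide does not settle either point.

```python
from dataclasses import dataclass
from typing import Callable, Hashable, Set


@dataclass
class Model:
    name: str
    detect: Callable[[object], Set[Hashable]]  # stand-in for the GBAD runs
    correct: int = 0
    incorrect: int = 0

    def ratio(self):
        total = self.correct + self.incorrect
        return self.correct / total if total else 0.0  # assumed default


def eit_u_step(ensemble, new_model, test_graph):
    """Add new_model, vote on anomalies, update counts, drop the worst model."""
    ensemble = ensemble + [new_model]
    reports = {m.name: m.detect(test_graph) for m in ensemble}
    candidates = set().union(*reports.values())
    agreed = set()
    for anomaly in candidates:
        voters = [m for m in ensemble if anomaly in reports[m.name]]
        if len(voters) > len(ensemble) / 2:      # more than half agree
            agreed.add(anomaly)
            for m in ensemble:
                if anomaly in reports[m.name]:
                    m.correct += 1
                else:
                    m.incorrect += 1
    worst = min(ensemble, key=Model.ratio)       # lowest correct ratio
    return [m for m in ensemble if m is not worst], agreed


# Made-up detectors that ignore the graph and report fixed anomaly IDs.
a = Model("a", lambda g: {1, 2})
b = Model("b", lambda g: {1})
c = Model("c", lambda g: {1, 3})
d = Model("d", lambda g: {2, 9})
ensemble, agreed = eit_u_step([a, b, c], d, test_graph=None)
print(agreed, [m.name for m in ensemble])
```

In this run only anomaly 1 is reported by more than half of the four models, so model d, which missed it, ends up with the lowest ratio and is dropped, keeping the ensemble at K = 3.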

Experiments
• 1998 MIT Lincoln Laboratory dataset
• 500,000+ vertices
• K = 1, 3, 5, 7, 9 models
• q = 5 normative substructures per model/chunk
• 9 weeks
• Each chunk covers 1 week

A sample system call record from the MIT Lincoln dataset:
header,150,2, execve(2),,Fri Jul 31 07:46:33 1998, + 652468777 msec
path,/usr/lib/fs/ufs/quota
attribute,104555,root,bin,8388614,187986,0
exec_args,1, /usr/sbin/quota
subject,2110,root,rjm,2110,rjm,280,272,0-0-172.16.112.50
return,success,0
trailer,150
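
As a rough illustration of how such a record could be turned into fields, the sketch below splits the sample into tokens and picks out a few values. The one-token-per-line layout and the field positions are assumptions read off this single sample, not a specification of the audit format; in particular, treating the first `subject` field as the user ID is a guess.

```python
RECORD = """header,150,2, execve(2),,Fri Jul 31 07:46:33 1998, + 652468777 msec
path,/usr/lib/fs/ufs/quota
attribute,104555,root,bin,8388614,187986,0
exec_args,1, /usr/sbin/quota
subject,2110,root,rjm,2110,rjm,280,272,0-0-172.16.112.50
return,success,0
trailer,150"""


def parse_record(text):
    """Map each token name (header, path, ...) to its list of fields."""
    tokens = {}
    for line in text.splitlines():
        name, _, rest = line.partition(",")
        tokens[name] = [field.strip() for field in rest.split(",")]
    return tokens


def extract_fields(tokens):
    """Pick out a few values; the positions are assumptions from the sample."""
    header, subject = tokens["header"], tokens["subject"]
    return {
        "time": header[4],
        "user": subject[0],                        # assumed: audit user ID
        "machine_ip": subject[-1].split("-")[-1],  # last part of terminal ID
        "command": header[2],
        "argument": tokens["exec_args"][-1],
        "path": tokens["path"][0],
        "return": tokens["return"][0],
    }


fields = extract_fields(parse_record(RECORD))
for key, value in fields.items():
    print(f"{key}: {value}")
```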

Performance: Total Ensemble Accuracy

Performance (continued)
• 0 false negatives
• Significant decrease in false positives as the number of models increases
• False positives decrease slowly after K = 3

Performance (continued): Distribution of False Positives

Performance (continued): Summary of Datasets A & B

Performance (continued)
(Figure panels:)
• The effect of q on TP rates for fixed K = 6 on dataset A
• The effect of q on FP rates for fixed K = 6 on dataset A
• The effect of q on runtime for fixed K = 6 on dataset A

Performance (continued)
(Figure panels:)
• True positives vs. number of normative substructures for fixed K = 6 on dataset A
• The effect of K on runtime for fixed q = 4 on dataset A
• The effect of K on TP rates for fixed q = 4 on dataset A

Outline: Supervised Learning
• Related Work
• Proposed Method
• Experiments & Results

Why One-Class SVM
• Insider threat data is the minority class.
• Traditional support vector machines (SVMs) trained on such an imbalanced dataset are likely to perform poorly on test data, especially on the minority class.
• One-class SVMs (OCSVMs) address the rare-class issue by building a model that considers only normal data (i.e., non-threat data).
• During the testing phase, test data is classified as normal or anomalous based on geometric deviations from the model.

Proposed Method
One-class SVM (OCSVM, supervised learning) + ensemble-based stream mining

One-Class SVM (OCSVM)
• Maps the training data into a high-dimensional feature space (via a kernel).
• Then iteratively finds the maximal-margin hyperplane that best separates the training data from the origin. This corresponds to the classification rule f(x) = <w,x> + b, where w is the normal vector and b is a bias term.
• For testing, if f(x) < 0 we label x as an anomaly; otherwise we label it as normal data.
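
The classification rule can be illustrated with a minimal sketch. It covers only the testing step, with a plain dot product in place of a kernel. The values of `w` and `b` are made-up numbers standing in for what training would produce; in practice they would come from an SVM library rather than being set by hand.

```python
def decision_value(w, x, b):
    """f(x) = <w, x> + b, using a plain dot product (no kernel)."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(w, x, b):
    """Label x as an anomaly when f(x) < 0, otherwise as normal."""
    return "anomaly" if decision_value(w, x, b) < 0 else "normal"


# Hypothetical trained parameters, for illustration only.
w = [0.5, 0.5]
b = -1.0

print(classify(w, [2.0, 2.0], b))  # f(x) = 1.0, on the normal side
print(classify(w, [0.5, 0.5], b))  # f(x) = -0.5, on the origin side
```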

Proposed Ensemble-Based Insider Threat Detection (EIT)
• Maintain K OCSVM (one-class SVM) models
• Majority voting
• Updated ensemble
• Always maintain K models
• Drop the least accurate model

Ensemble-Based Classification of Data Streams (Supervised Learning)
• Divide the data stream into equal-sized chunks
• Train a classifier from each data chunk
• Keep the best K OCSVM classifiers as the ensemble
• Example: for K = 3
• Addresses infinite length and concept drift
(Figure: data chunks D1 to D6, split into labeled chunks and the latest unlabeled chunk, and the classifiers C1 to C5 trained from them. The ensemble predicts on the unlabeled chunk.)
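
Dividing a stream into equal-sized chunks can be sketched with a small generator. The helper name `chunks` and the chunk size are illustrative; the experiments in this presentation use one week of data per chunk rather than a fixed instance count. The generator reads the stream lazily, which matters when the stream is unbounded.

```python
from itertools import islice


def chunks(stream, size):
    """Yield consecutive lists of `size` items; the last one may be shorter."""
    iterator = iter(stream)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


for index, chunk in enumerate(chunks(range(7), 3), start=1):
    print(f"D{index}: {chunk}")
```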

EIT-S pseudocode (testing)

Algorithm 1: Testing
Input: A ← Build-initial-ensemble(); Du ← latest chunk of unlabeled instances
Output: prediction/label of Du
  Fu ← Extract&Select-Features(Du)   // feature set for Du
  for each xj ∈ Fu do
    Results ← NULL
    for each model M in A
      Results ← Results ∪ Prediction(xj, M)
    end for
    Anomalies ← MajorityVoting(Results)
  end for
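
A minimal sketch of the testing loop follows. The ensemble members are hypothetical threshold functions used in place of trained OCSVM models, and the instances are single numbers rather than extracted feature vectors, so only the per-instance majority vote is being illustrated.

```python
from collections import Counter


def threshold_model(threshold):
    """Hypothetical stand-in for a trained OCSVM model."""
    def model(x):
        return "anomaly" if x > threshold else "normal"
    return model


def label_chunk(ensemble, instances):
    """Label every instance by majority vote over the ensemble's predictions."""
    labels = []
    for x in instances:
        results = [model(x) for model in ensemble]
        labels.append(Counter(results).most_common(1)[0][0])
    return labels


ensemble = [threshold_model(t) for t in (5, 10, 15)]
labels = label_chunk(ensemble, [3, 7, 12, 20])
print(labels)
```

With an odd number of models and two possible labels the vote cannot tie, which is one reason to prefer odd K.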

EIT-S pseudocode (updating)

Algorithm 2: Updating the classifier ensemble
Input: Dn: the most recently labeled data chunk; A: the current ensemble of best K classifiers
Output: an updated ensemble A
  for each model M ∈ A do
    Test M on Dn and compute its expected error
  end for
  Mn ← newly trained one-class SVM classifier (OCSVM) from data Dn
  Test Mn on Dn and compute its expected error
  A ← best K classifiers from Mn ∪ A based on expected error
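
The update step can be sketched in the same style. Expected error is approximated here as the misclassification rate on the labeled chunk, which is an assumption since the slide does not define it. The threshold models are hypothetical stand-ins for OCSVM classifiers, and training of the new model is not shown.

```python
def threshold_model(threshold):
    """Hypothetical stand-in for a trained OCSVM classifier."""
    def model(x):
        return "anomaly" if x > threshold else "normal"
    model.threshold = threshold  # kept only so the result is easy to inspect
    return model


def expected_error(model, labeled_chunk):
    """Fraction of (instance, label) pairs the model gets wrong."""
    wrong = sum(1 for x, y in labeled_chunk if model(x) != y)
    return wrong / len(labeled_chunk)


def update_ensemble(ensemble, new_model, labeled_chunk, k):
    """Keep the k classifiers with the lowest error on the labeled chunk."""
    candidates = ensemble + [new_model]
    ranked = sorted(candidates, key=lambda m: expected_error(m, labeled_chunk))
    return ranked[:k]


chunk = [(3, "normal"), (7, "normal"), (9, "anomaly"), (12, "anomaly")]
ensemble = [threshold_model(t) for t in (5, 10, 15)]
updated = update_ensemble(ensemble, threshold_model(8), chunk, k=3)
print([m.threshold for m in updated])
```

Here the new model makes no errors on the chunk and replaces the model with threshold 15, which misclassifies half of it.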

Feature Set Extracted
Time, userID, machine IP, command, argument, path, return
1 1:29669 6:1 8:1 21:1 32:1 36:0
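
The numeric line above looks like a sparse `label index:value` encoding of the kind commonly fed to SVM tools. That reading is an assumption, as is the helper name `parse_sparse`. Under it, the line can be parsed as follows.

```python
def parse_sparse(line):
    """Parse 'label index:value ...' into (label, {index: value})."""
    label, *pairs = line.split()
    features = {}
    for pair in pairs:
        index, value = pair.split(":")
        features[int(index)] = float(value)
    return int(label), features


label, features = parse_sparse("1 1:29669 6:1 8:1 21:1 32:1 36:0")
print(label, features)
```

Indices that do not appear in the line are treated as zero by convention in such sparse formats, which keeps records short when most features are absent.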

Performance (continued): Updating vs. Non-Updating Stream Approach

Performance (continued): Supervised (EIT-S) vs. Unsupervised (EIT-U) Learning, Summary of Dataset A

Conclusion & Future Work
Conclusion: evolving insider threat detection using
• Stream mining
• Unsupervised learning and supervised learning
Future work:
• Misuse detection in mobile devices
• Cloud computing for improving processing time

Publications
Conference papers:
• Pallabi Parveen, Jonathan Evans, Bhavani Thuraisingham, Kevin W. Hamlen, Latifur Khan, “Insider Threat Detection Using Stream Mining and Graph Mining,” in Proc. of the Third IEEE International Conference on Information Privacy, Security, Risk and Trust (PASSAT 2011), October 2011, MIT, Boston, USA (full paper acceptance rate: 13%).
• Pallabi Parveen, Zackary R Weger, Bhavani Thuraisingham, Kevin Hamlen, Latifur Khan, “Supervised Learning for Insider Threat Detection Using Stream Mining,” to appear in 23rd IEEE International Conference on Tools with Artificial Intelligence (ICTAI 2011), Nov. 7-9, 2011, Boca Raton, Florida, USA (acceptance rate: 30%).
• Pallabi Parveen, Bhavani M. Thuraisingham: Face Recognition Using Multiple Classifiers. ICTAI 2006, 179-186.
Journal:
• Jeffrey Partyka, Pallabi Parveen, Latifur Khan, Bhavani M. Thuraisingham, Shashi Shekhar: Enhanced geographically typed semantic schema matching. J. Web Sem. 9(1): 52-70 (2011).
Others:
• Neda Alipanah, Pallabi Parveen, Sheetal Menezes, Latifur Khan, Steven Seida, Bhavani M. Thuraisingham: Ontology-driven query expansion methods to facilitate federated queries. SOCA 2010, 1-8.
• Neda Alipanah, Piyush Srivastava, Pallabi Parveen, Bhavani M. Thuraisingham: Ranking Ontologies Using Verified Entities to Facilitate Federated Queries. Web Intelligence 2010: 332-337.

References
• W. Eberle and L. Holder, “Anomaly Detection in Data Represented as Graphs,” Intelligent Data Analysis, Volume 11, Number 6, 2007. http://ailab.wsu.edu/subdue
• W. Ling Chen, Shan Zhang, Li Tu: An Algorithm for Mining Frequent Items on Data Stream Using Fading Factor. COMPSAC (2) 2009: 172-177.
• S. A. Hofmeyr, S. Forrest, and A. Somayaji, “Intrusion Detection Using Sequences of System Calls,” Journal of Computer Security, vol. 6, pp. 151-180, 1998.
• M. Masud, J. Gao, L. Khan, J. Han, B. Thuraisingham, “A Practical Approach to Classify Evolving Data Streams: Training with Limited Amount of Labeled Data,” Int. Conf. on Data Mining, Pisa, Italy, December 2010.