Understanding Validity in Single System Designs: Challenges and Considerations

This text explores various factors impacting the validity of single-system designs in treatment evaluations. It discusses the role of extraneous events, maturation effects, testing biases, instrumentation issues, client dropout, statistical regression, diffusion of interventions, interaction effects, and practitioner style on outcomes. Each point is illustrated with relevant examples, highlighting how these elements can influence results and interpretations, making it essential for practitioners and researchers to consider these variables when conducting and assessing single-system interventions.


Presentation Transcript

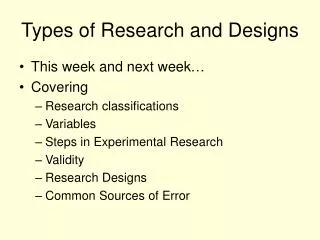

Internal Experimental Validity: Single System Designs

• History. Did any other events occur during the time of the practitioner-client contacts that may be responsible for the particular outcome? Since single-system designs by definition extend over time, there is ample opportunity for such extraneous events to occur. Example: During the course of marital therapy, Andrew loses his job while Ann obtains a better position than she had before. Such events, though independent of the therapy, may have as much or more to do with the outcome than did the therapy.

• Maturation. Did any psychological or physiological change occur within the client that might have affected the outcome during the time he or she was in a treatment situation? Example: Bobby was in a treatment program from the ages of 13 to 16. But when the program ended, it was impossible to tell if Bobby had simply matured as a teenager or if the program was effective.

• Testing. Taking a test or filling out a questionnaire the first time may sensitize the client so that subsequent scores are influenced. Example: The staff of the agency filled out the evaluation questionnaire the first week, but most staff members couldn’t help remembering what they said the first time so subsequent evaluations were biased by the first set of responses.

• Instrumentation. Do changes in the measurement devices themselves or changes in the observers or in the way the measurement devices are used have an impact on the outcome? Example: Phyllis, a public health nurse, was involved in evaluating a home health program in which she interviewed elderly clients every 4 months for a 3-year period. About halfway through, Phyllis made plans to be married and was preoccupied with this coming event. She thought she had memorized the questionnaire, but in fact she forgot to ask blocks of questions, and coders of the data complained to her that they couldn’t always make out which response she had circled on the sheets.

Continued

• Dropout. Are the results of an intervention program distorted because clients drop out of treatment perhaps due to anxiety, low motivation, and so on, leaving a different sample of persons being measured? Example: While studying the impact of relocation on nursing home patients, a researcher noticed that some patients were refusing to continue to participate in the research interview because they said that the questions made them very anxious and upset. This left the overall results dependent on only the remaining patients.

• Statistical regression. Statistically, any extreme score on an original test will tend to become less extreme upon retesting. Example: Hank was tested in the vocational rehabilitation office and received one of the lowest scores ever recorded there, a fact that attracted some attention from the counselors who worked with him. However, because of a bus strike, Hank couldn’t get to the center very often, and yet upon retesting, he scored much better than he had before. Had Hank been receiving counseling during that period, it might have appeared as though the counseling produced the changes in score.

• Diffusion or imitation of intervention. When intervention involves some type of informational program and when clients from different interventions or different practitioners communicate with each other about the program, clients may learn about information intended for others. Example: Harvey was seeing a practitioner for help with his depression at the same time as his wife Jean was seeing a practitioner for help with anger management. Although their intervention programs were different, their discussions with each other led them each to try aspects of each other’s program.
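The statistical regression threat described above can be illustrated with a small simulation. The sketch below is not from the original slides. It assumes a hypothetical model in which each observed score is a stable "true" score plus independent measurement noise, and all names and numbers (`simulate_retest`, the mean of 50, the standard deviations of 10) are illustrative assumptions. No intervention occurs between the two test administrations, yet the clients who scored lowest the first time score noticeably higher on average the second time.

```python
import random
import statistics


def simulate_retest(n_clients=10_000, extreme_fraction=0.05, seed=0):
    """Simulate two test administrations with no intervention in between."""
    rng = random.Random(seed)

    # Assumed model: observed score = stable true score + independent noise.
    true_scores = [rng.gauss(50, 10) for _ in range(n_clients)]
    first = [t + rng.gauss(0, 10) for t in true_scores]
    second = [t + rng.gauss(0, 10) for t in true_scores]

    # Pick the clients with the most extreme (lowest) first-test scores.
    k = int(n_clients * extreme_fraction)
    lowest = sorted(range(n_clients), key=lambda i: first[i])[:k]

    return {
        "first_mean_extreme": statistics.mean(first[i] for i in lowest),
        "second_mean_extreme": statistics.mean(second[i] for i in lowest),
        "overall_mean": statistics.mean(first),
    }


result = simulate_retest()
for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

Under these assumptions, the retest mean of the lowest-scoring group moves toward the overall mean even though nothing was done to the clients. This is the pattern in Hank's example: an improvement after an extreme first score is not, by itself, evidence that counseling worked.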

External Experimental Validity: Single System Designs

• Interaction between the intervention and other variables. Any intervention applied to new clients, in new settings, by new practitioners will be different from the original intervention in varying degrees, thus reducing the likelihood of identical results. Example: Esther’s friend Louise was much taken with her tales of using self-monitoring with adolescent outpatients, so she tried to adapt the technique to youngsters in her school who were having problems related to hyperactivity. Even though she followed Esther’s suggestions as closely as possible, the results did not come out as well as they did in the other setting.

• Practitioner effect. The practitioner’s style of practice influences outcomes, so different practitioners applying the same intervention may have different effects to some degree. Example: Arnold had been substitute teaching on many occasions over several years in Marie’s class and knew her course materials quite well. However, when he had to take over her class on a permanent basis because she resigned due to a serious illness, he was surprised that he wasn’t as effective in teaching her class as he had expected.

• Different dependent (target or outcome) variables. There may be differences in how the target variables are conceptualized and operationalized in different studies, thus reducing the likelihood of identical results. Example: Ernesto’s community action group defined “neighborhood solidarity” in terms of its effectiveness in obtaining municipal services, defined as trash removal, rat control, and the expansion of bus service during early morning hours. While Juan used the same term, neighborhood solidarity, with a similar ethnic group, the meaning of the term had to do with the neighbors presenting a united front in the face of the school board’s arbitrary removal of a locally popular principal.

Continued

• Interaction of history and intervention. If extraneous events occur concurrently with the intervention, they may not be present at other times, thus reducing generalizability of the results of the original study. Example: The first community mental health center opened amid the shock of recent suicides of several young unemployed men. It was forced to respond to the needs of persons facing socioeconomic pressures. However, when a new center opened in a nearby area, there was no similar recognition among the population of the need for this type of counseling, and considerably fewer persons went to the second center than to the first.

• Measurement differences. To the extent that differences exist between two evaluations regarding how the same process and outcome variables are measured, so too are the results likely to be different. Example: The standardized scales used by Lee with his majority group clients were viewed by several minority group members as being too offensive and asking questions that were too personal, so he had to devise ways of obtaining the same information in less obvious or offensive ways. The results continued to show considerable differences between majority and minority clients.

• Differences in clients. The characteristics of the client (age, sex, ethnicity, social class, and so on) strongly affect the extent to which results can be generalized. Example: Nick devised a counseling program that was very successful in decreasing dropouts in one school with mainly middle-class students. But when Nick tried to implement his program in a different school, one with largely low-income students, he found the dropout rate was hardly affected.

Continued

• Interaction between testing and intervention. The effects of the early testing (for example, the baseline observations) could sensitize the client to the intervention so that generalization could be expected to occur only when subsequent clients are given the same test prior to intervention. Example: All members of an assertion training group met for 3 weeks and role-played several situations in which they were not being assertive. The formal intervention of assertion training started the fourth week, but by then all group members were highly sensitized to what to expect.

• Reactive effects to evaluation. Simple awareness by clients that they are in a study could lead to changes in client performance. Example: All employees at a public agency providing services to the elderly were participants in a study comparing their work with work performed by practitioners in a private agency. Knowing they were in the study, and knowing they had to do their best, the public agency practitioners put in extra time, clouding the issue of whether their typical intervention efforts would produce similar results.

Best Design to Eliminate Alternative Explanations: Solomon Four-Group Design