Parallel distributed computing techniques

Parallel distributed computing techniques. Students: Lê Trọng Tín, Mai Văn Ninh, Phùng Quang Chánh, Nguyễn Đức Cảnh, Đặng Trung Tín. Advisor: Phạm Trần Vũ. Pipelined Computations. Embarrassingly Parallel Computations. Partitioning and Divide-and-Conquer Strategies.

Presentation Transcript

Parallel distributed computing techniques
Students: Lê Trọng Tín, Mai Văn Ninh, Phùng Quang Chánh, Nguyễn Đức Cảnh, Đặng Trung Tín
Advisor: Phạm Trần Vũ

Contents
• Motivation of Parallel Computing Techniques
• Message-passing computing
• Parallel Computing Techniques
  • Pipelined Computations
  • Embarrassingly Parallel Computations
  • Partitioning and Divide-and-Conquer Strategies
  • Synchronous Computations
  • Load Balancing and Termination Detection

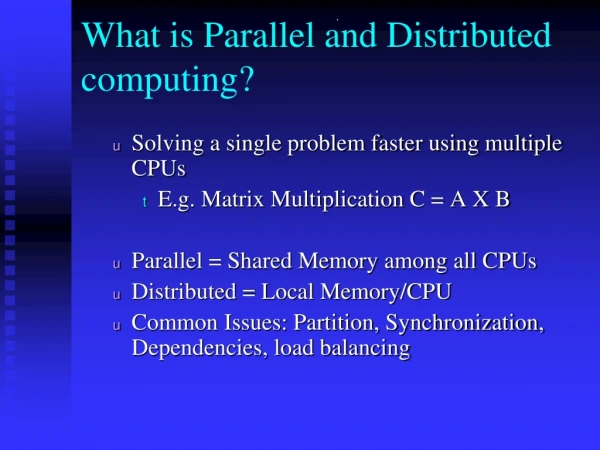

Motivation of Parallel Computing Techniques
Demand for Computational Speed
• Continual demand for greater computational speed from a computer system than is currently possible
• Areas requiring great computational speed include numerical modeling and simulation of scientific and engineering problems
• Computations must be completed within a “reasonable” time period

Message-Passing Computing
• Basics of message-passing programming using user-level message-passing libraries
• Two primary mechanisms needed:
  • A method of creating separate processes for execution on different computers
  • A method of sending and receiving messages

Message-Passing Computing
Static process creation (the basic MPI way)
[Figure: source files are compiled to suit each processor, producing executables that run on Processor 0 through Processor n-1.]

Message-Passing Computing
Dynamic process creation (the PVM way)
[Figure: timeline in which process 1, running on Processor 1, calls spawn(), which starts execution of process 2 on Processor 2.]

Message-Passing Computing Method of sending and receiving messages?

Pipelined Computation • Problem divided into a series of tasks that have to be completed one after the other (the basis of sequential programming). • Each task executed by a separate process or processor.

Pipelined Computation
Where pipelining can be used to good effect:
1. If more than one instance of the complete problem is to be executed
2. If a series of data items must be processed, each requiring multiple operations
3. If information to start the next process can be passed forward before the process has completed all its internal operations

Pipelined Computation Execution time = m + p - 1 cycles for a p-stage pipeline and m instances
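The cycle count above can be checked with a small simulation. The following is a minimal sketch, assuming every stage takes exactly one cycle and that at least one instance is processed; the function names `pipeline_cycles` and `simulate_pipeline` are hypothetical and do not come from the slides.

```c
/* Closed-form count from the slide: m instances through a p-stage pipeline. */
int pipeline_cycles(int m, int p)
{
    return m + p - 1;
}

/* Cycle-by-cycle check (hypothetical helper, assumes m >= 1 and p >= 1).
 * In cycle c (1-based), stage s (0-based) works on instance c - 1 - s,
 * so the last stage (s = p - 1) finishes instance c - p in that cycle. */
int simulate_pipeline(int m, int p)
{
    int completed = 0;
    int cycle;

    for (cycle = 1; ; cycle++) {
        int finishing = cycle - p;   /* instance leaving the last stage */
        if (finishing >= 0 && finishing < m)
            completed++;
        if (completed == m)
            return cycle;            /* all m instances are done */
    }
}
```

For example, 5 instances through a 3-stage pipeline take 7 cycles: the first result appears after 3 cycles, and one more result follows in each later cycle.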

Ideal Parallel Computation
• A computation that can obviously be divided into a number of completely independent parts
• Each part can be executed by a separate processor
• Each process can do its tasks without any interaction with the other processes

Ideal Parallel Computation • Practical embarrassingly parallel computation with static process creation and the master-slave approach

Ideal Parallel Computation • Practical embarrassingly parallel computation with dynamic process creation and the master-slave approach

Embarrassingly parallel examples • Geometrical Transformations of Images • Mandelbrot set • Monte Carlo Method

Geometrical Transformations of Images
• Operations are performed on the coordinates of each pixel to move the position of the pixel without affecting its value
• The transformation of each pixel is totally independent of the other pixels
• Some geometrical operations:
  • Shifting
  • Scaling
  • Rotation
  • Clipping

Geometrical Transformations of Images
• Partitioning into regions for individual processes
[Figure: a 640 × 480 image mapped onto processes in two ways: a square region of 80 × 80 pixels for each process, or a row region of 640 × 10 pixels for each process.]
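As an illustration of shifting with row regions, the sketch below moves every pixel by (dx, dy) and lets each "process" handle its own strip of rows. It is a sequential stand-in, not message-passing code: the loop over `k` plays the role of the separate processes, and the names `shift_rows`, `shift_image`, and `demo_shift` are hypothetical. Using 48 processes is an assumption derived from the figure (480 rows / 10 rows per region).

```c
#define IMG_WIDTH  640
#define IMG_HEIGHT 480

/* Shift rows [row_start, row_end) of src by (dx, dy) into dst.
 * Pixels that would land outside the image are dropped (clipping).
 * No pixel depends on any other, so each row region is independent. */
void shift_rows(const unsigned char *src, unsigned char *dst,
                int row_start, int row_end, int dx, int dy)
{
    int x, y;

    for (y = row_start; y < row_end; y++) {
        for (x = 0; x < IMG_WIDTH; x++) {
            int nx = x + dx;   /* new x coordinate */
            int ny = y + dy;   /* new y coordinate */
            if (nx >= 0 && nx < IMG_WIDTH && ny >= 0 && ny < IMG_HEIGHT)
                dst[ny * IMG_WIDTH + nx] = src[y * IMG_WIDTH + x];
        }
    }
}

/* Sequential stand-in for nproc processes; assumes nproc divides IMG_HEIGHT. */
void shift_image(const unsigned char *src, unsigned char *dst,
                 int nproc, int dx, int dy)
{
    int rows_per = IMG_HEIGHT / nproc;
    int k;

    for (k = 0; k < nproc; k++)   /* "process" k owns rows_per rows */
        shift_rows(src, dst, k * rows_per, (k + 1) * rows_per, dx, dy);
}

/* Usage example: one bright pixel at (200, 100) shifted by (5, 3). */
int demo_shift(void)
{
    static unsigned char src[IMG_WIDTH * IMG_HEIGHT];
    static unsigned char dst[IMG_WIDTH * IMG_HEIGHT];

    src[100 * IMG_WIDTH + 200] = 255;
    shift_image(src, dst, 48, 5, 3);
    return dst[103 * IMG_WIDTH + 205];   /* value now at (205, 103) */
}
```

In a real master-slave program each strip would be sent to a slave and the new pixel positions returned to the master; the per-pixel arithmetic would stay the same.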

Mandelbrot Set
• Set of points in a complex plane that are quasi-stable when computed by iterating the function $z_{k+1} = z_k^2 + c$, where $z_{k+1}$ is the (k + 1)th iteration of the complex number z = a + bi and c is a complex number giving the position of the point in the complex plane. The initial value for z is zero.
• Iterations continue until the magnitude of z is greater than 2 or the number of iterations reaches an arbitrary limit. The magnitude of z is the length of the vector, given by $z_{\text{length}} = \sqrt{a^2 + b^2}$.

Mandelbrot Set
Scaling display coordinates (x, y) to the complex plane:
    c.real = real_min + x * (real_max - real_min)/disp_width
    c.imag = imag_min + y * (imag_max - imag_min)/disp_height
• Static Task Assignment
  • Simply divide the region into a fixed number of parts, each computed by a separate processor
  • Not very successful because different regions require different numbers of iterations and time
• Dynamic Task Assignment
  • Have processors request regions after computing previous regions
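The per-pixel computation can be sketched as follows. This is a minimal illustration under stated assumptions: the type `complex_t` and the functions `pixel_to_c`, `cal_pixel`, and `row_cost` are hypothetical names, and `row_cost` assumes the displayed region is -2 to 2 on both axes. The magnitude test compares the squared length with 4, which is equivalent to comparing the length with 2.

```c
typedef struct { double real, imag; } complex_t;

/* Scale display pixel (x, y) to a point c in the complex plane. */
complex_t pixel_to_c(int x, int y, int disp_width, int disp_height,
                     double real_min, double real_max,
                     double imag_min, double imag_max)
{
    complex_t c;
    c.real = real_min + x * (real_max - real_min) / disp_width;
    c.imag = imag_min + y * (imag_max - imag_min) / disp_height;
    return c;
}

/* Iterate z = z*z + c from z = 0 until |z| > 2 or max_iter is reached.
 * Returns the number of iterations performed. */
int cal_pixel(complex_t c, int max_iter)
{
    complex_t z = {0.0, 0.0};
    double lengthsq;
    int count = 0;

    do {
        double temp = z.real * z.real - z.imag * z.imag + c.real;
        z.imag = 2.0 * z.real * z.imag + c.imag;
        z.real = temp;
        lengthsq = z.real * z.real + z.imag * z.imag;
        count++;
    } while (lengthsq <= 4.0 && count < max_iter);
    return count;
}

/* Total iterations needed for one display row (assumed region: -2..2). */
long row_cost(int y, int disp_width, int disp_height, int max_iter)
{
    long total = 0;
    int x;

    for (x = 0; x < disp_width; x++)
        total += cal_pixel(pixel_to_c(x, y, disp_width, disp_height,
                                      -2.0, 2.0, -2.0, 2.0), max_iter);
    return total;
}
```

Comparing `row_cost` for a row at the edge of the region with a row through the middle shows why a fixed division of the image balances poorly: rows that cross the set need far more iterations than rows that miss it.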

Monte Carlo Method
• Another embarrassingly parallel computation
• Monte Carlo methods make use of random selections
• Example: to calculate π
• A circle of unit radius is formed within a square, so that the square measures 2 × 2. The ratio of the area of the circle to the area of the square is given by $\frac{\pi (1)^2}{2 \times 2} = \frac{\pi}{4}$

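Monte Carlo Method
• One quadrant of the construction can be described by the integral $\int_0^1 \sqrt{1 - x^2}\,dx = \frac{\pi}{4}$
• Random pairs of numbers $(x_r, y_r)$ are generated, each between 0 and 1. A pair is counted as in the circle if $y_r \le \sqrt{1 - x_r^2}$; that is, $y_r^2 + x_r^2 \le 1$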
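The quadrant test can be sketched in a few lines. This is a minimal sequential version, assuming the C library's `rand()` is an adequate source of pseudorandom numbers; the function name `estimate_pi` and its `seed` parameter are hypothetical.

```c
#include <stdlib.h>

/* Estimate pi from n random points in the unit square.
 * The fraction of points with x*x + y*y <= 1 approximates pi/4. */
double estimate_pi(long n, unsigned int seed)
{
    long in_circle = 0;
    long i;

    srand(seed);
    for (i = 0; i < n; i++) {
        double xr = rand() / (RAND_MAX + 1.0);   /* in [0, 1) */
        double yr = rand() / (RAND_MAX + 1.0);   /* in [0, 1) */
        if (xr * xr + yr * yr <= 1.0)
            in_circle++;
    }
    return 4.0 * in_circle / n;
}
```

Because each sample is independent, the loop can be split across processes that each count their own hits and return the count to a master, provided the processes do not reuse the same random sequence.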

Monte Carlo Method
• Alternative method to compute an integral
• Use random values of x to compute f(x) and sum the values of f(x): $\text{area} = \int_{x_1}^{x_2} f(x)\,dx = \lim_{N \to \infty} \frac{1}{N} \sum_{r=1}^{N} f(x_r)\,(x_2 - x_1)$
• where $x_r$ are randomly generated values of x between $x_1$ and $x_2$
• Monte Carlo method very useful if the function cannot be integrated numerically (maybe having a large number of variables)

Monte Carlo Method
• Example: computing the integral [equation not preserved in this transcript]
• Sequential code [not preserved in this transcript]
• Routine randv(x1, x2) returns a pseudorandom number between x1 and x2
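A sequential Monte Carlo integration might look like the sketch below. The integrand f(x) = x² − 3x is an assumption chosen only for illustration, since the slide's own integral is not preserved here; `randv` is implemented on top of `rand()` as one possible realization of the routine named on the slide, and `mc_integrate` and `integrand` are hypothetical names.

```c
#include <stdlib.h>

/* Pseudorandom number in [x1, x2), built on rand() (an assumption). */
double randv(double x1, double x2)
{
    return x1 + (x2 - x1) * (rand() / (RAND_MAX + 1.0));
}

/* Illustrative integrand only; not taken from the slides. */
double integrand(double x)
{
    return x * x - 3.0 * x;
}

/* Average n random samples of the integrand and scale by the interval. */
double mc_integrate(double x1, double x2, long n, unsigned int seed)
{
    double sum = 0.0;
    long i;

    srand(seed);
    for (i = 0; i < n; i++)
        sum += integrand(randv(x1, x2));
    return (sum / n) * (x2 - x1);
}
```

For this integrand the exact value over [0, 3] is −4.5, so the estimate from a large sample should be close to that.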

Monte Carlo Method
• Parallel Monte Carlo integration
[Figure: slaves return partial sums to the master and send requests to a separate random-number process, which replies with random numbers.]

Partitioning and Divide-and-Conquer Strategies

Partitioning
• Partitioning simply divides the problem into parts.
• It is the basis of all parallel programming.
• Partitioning can be applied to the program data (data partitioning or domain decomposition) and to the functions of a program (functional decomposition).
• It is much less common to find concurrent functions in a problem, but data partitioning is a main strategy for parallel programming.

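Partitioning (cont)
A sequence of numbers, $x_0, \ldots, x_{n-1}$, is to be added (n: number of items, p: number of processors).
[Figure: partitioning a sequence of numbers into parts and adding them. The sequence is split into p parts, $x_0 \ldots x_{(n/p)-1}$, $x_{n/p} \ldots x_{2(n/p)-1}$, …, $x_{(p-1)n/p} \ldots x_{n-1}$; each part is added to give a partial sum, and the partial sums are added to give the final sum.]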
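The partitioned addition can be sketched sequentially as below, with the loop over `k` standing in for the p processors. The function names are hypothetical, and letting the last part absorb any remainder when p does not divide n is an assumption that the slide does not address.

```c
/* Add the items x[start] .. x[end - 1]: the work of one processor. */
long partial_sum(const int *x, int start, int end)
{
    long sum = 0;
    int i;

    for (i = start; i < end; i++)
        sum += x[i];
    return sum;
}

/* Split n items into p parts of n/p items, add each part, then add the
 * partial sums. The last part also takes any leftover items (assumption). */
long partitioned_sum(const int *x, int n, int p)
{
    int part = n / p;
    long total = 0;
    int k;

    for (k = 0; k < p; k++) {
        int start = k * part;
        int end = (k == p - 1) ? n : start + part;
        total += partial_sum(x, start, end);   /* "processor" k */
    }
    return total;
}

/* Usage example: add 0 + 1 + ... + 99 using 4 parts. */
long demo_partitioned_sum(void)
{
    int x[100];
    int i;

    for (i = 0; i < 100; i++)
        x[i] = i;
    return partitioned_sum(x, 100, 4);
}
```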

Divide and Conquer • Characterized by dividing problem into subproblems of same form as larger problem. Further divisions into still smaller sub-problems, usually done by recursion. • Recursive divide and conquer amenable to parallelization because separate processes can be used for divided parts. Also usually data is naturally localized.

Divide and Conquer (cont)
A sequential recursive definition for adding a list of numbers is:

    int add(const int *s, int n)       // add the n numbers in list s
    {
        if (n <= 2)                    // base case: at most two numbers left
            return (n > 0 ? s[0] : 0) + (n > 1 ? s[1] : 0);
        else {
            int n1 = n / 2;            // divide s into two parts, s1 and s2
            int part_sum1 = add(s, n1);            // recursive calls to add sublists
            int part_sum2 = add(s + n1, n - n1);
            return part_sum1 + part_sum2;
        }
    }

Divide and Conquer (cont)
[Figure: tree construction. The initial problem is divided repeatedly until the final tasks are reached.]
www.cse.hcmut.edu.vn

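Divide and Conquer (cont)
[Figure: dividing the original list $x_0 \ldots x_{n-1}$ among eight processes. P0 holds the initial problem; the problem is divided between P0 and P4, then among P0, P2, P4, and P6, and finally among P0 through P7, which perform the final tasks.]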
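The process assignment in the tree can be mimicked with a recursive function in which a process keeps the lower half of its list and hands the upper half to the process numbered half the remaining group size above it. This is a sequential sketch of that pattern, not message-passing code; `dc_sum` and `demo_dc_sum` are hypothetical names, and the number of processes is assumed to be a power of two.

```c
/* Add x[lo] .. x[hi - 1] using the processes proc .. proc + nprocs - 1.
 * With 8 processes, P0 first hands half of its list to P4, then P0 and P4
 * hand halves to P2 and P6, and so on down to single processes. */
long dc_sum(const int *x, int lo, int hi, int proc, int nprocs)
{
    if (nprocs == 1) {               /* final task: add locally */
        long sum = 0;
        int i;
        for (i = lo; i < hi; i++)
            sum += x[i];
        return sum;
    } else {
        int mid = lo + (hi - lo) / 2;
        int half = nprocs / 2;
        /* process proc keeps the lower half of the list */
        long left = dc_sum(x, lo, mid, proc, half);
        /* process proc + half receives the upper half */
        long right = dc_sum(x, mid, hi, proc + half, half);
        return left + right;         /* combine on the way back up */
    }
}

/* Usage example: 16 numbers, 1 .. 16, divided among 8 processes. */
long demo_dc_sum(void)
{
    int x[16];
    int i;

    for (i = 0; i < 16; i++)
        x[i] = i + 1;
    return dc_sum(x, 0, 16, 0, 8);
}
```

In a parallel version the two recursive calls would run on different processes at the same time, with the upper half sent as a message and the partial sum returned as a message.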

Partitioning/Divide and Conquer Examples
Many possibilities:
• Operations on sequences of numbers, such as simply adding them together
• Several sorting algorithms can often be partitioned or constructed in a recursive fashion
• Numerical integration
• N-body problem

Bucket sort
• One “bucket” assigned to hold numbers that fall within each region.
• Numbers in each bucket sorted using a sequential sorting algorithm.
• Sequential sorting time complexity: O(n log(n/m)).
• Works well if the original numbers are uniformly distributed across a known interval, say 0 to a - 1.
n: number of items, m: number of buckets
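A sequential bucket sort along these lines might look like the sketch below. It assumes integer keys in the range 0 to a - 1 and uses the C library's `qsort` as the sequential sorting algorithm inside each bucket; the names `bucket_of`, `bucket_sort`, and `demo_bucket_sort` are hypothetical.

```c
#include <stdlib.h>
#include <string.h>

static int cmp_int(const void *pa, const void *pb)
{
    int x = *(const int *)pa, y = *(const int *)pb;
    return (x > y) - (x < y);
}

/* Bucket index of value v, for values in [0, a) and m equal regions. */
static int bucket_of(int v, int a, int m)
{
    return (int)((long long)v * m / a);
}

/* Sort n integers in [0, a) using m buckets.
 * Returns 0 on success, -1 if memory could not be allocated. */
int bucket_sort(int *data, int n, int a, int m)
{
    int *start, *next, *tmp;
    int i, b;

    if (n <= 0)
        return 0;
    start = calloc((size_t)m + 1, sizeof *start);  /* bucket offsets */
    next = malloc((size_t)m * sizeof *next);       /* fill cursors */
    tmp = malloc((size_t)n * sizeof *tmp);         /* bucket storage */
    if (!start || !next || !tmp) {
        free(start); free(next); free(tmp);
        return -1;
    }
    for (i = 0; i < n; i++)                        /* count per bucket */
        start[bucket_of(data[i], a, m) + 1]++;
    for (b = 0; b < m; b++)                        /* counts -> offsets */
        start[b + 1] += start[b];
    for (b = 0; b < m; b++)
        next[b] = start[b];
    for (i = 0; i < n; i++) {                      /* place into buckets */
        b = bucket_of(data[i], a, m);
        tmp[next[b]++] = data[i];
    }
    for (b = 0; b < m; b++)                        /* sort each bucket */
        qsort(tmp + start[b], (size_t)(start[b + 1] - start[b]),
              sizeof *tmp, cmp_int);
    memcpy(data, tmp, (size_t)n * sizeof *data);
    free(start); free(next); free(tmp);
    return 0;
}

/* Usage example: returns 1 if the sample array ends up sorted. */
int demo_bucket_sort(void)
{
    int v[10] = {29, 3, 72, 44, 98, 10, 61, 3, 55, 87};
    int i;

    if (bucket_sort(v, 10, 100, 4) != 0)
        return 0;
    for (i = 1; i < 10; i++)
        if (v[i - 1] > v[i])
            return 0;
    return 1;
}
```

Because the buckets cover disjoint, ordered regions, concatenating the sorted buckets gives the sorted sequence, and the per-bucket sorts are independent of one another.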

Parallel version of bucket sort Simple approach • Assign one processor for each bucket.

Further Parallelization
• Partition sequence into m regions, one region for each processor.
• Each processor maintains p “small” buckets and separates the numbers in its region into its own small buckets.
• Small buckets then emptied into p final buckets for sorting, which requires each processor to send one small bucket to each of the other processors (bucket i to processor i).

Another parallel version of bucket sort • Introduces new message-passing operation - all-to-all broadcast.

“all-to-all” broadcast routine • Sends data from each process to every other process

“all-to-all” broadcast routine (cont)
• The “all-to-all” routine actually transfers the rows of an array to columns:
• It transposes a matrix.
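The row-to-column effect can be seen in a small sequential simulation. Here row i of `send` holds the items that process i wants to deliver, one per destination, and row j of `recv` holds what process j ends up with. The names `all_to_all`, `demo_all_to_all`, and `NPROCS` are hypothetical; in MPI the corresponding collective operation is `MPI_Alltoall`, which this sketch does not call.

```c
enum { NPROCS = 4 };   /* number of simulated processes (assumption) */

/* Process i sends send[i][j] to process j; process j stores the item
 * received from process i in recv[j][i]. The result is the transpose. */
void all_to_all(int send[NPROCS][NPROCS], int recv[NPROCS][NPROCS])
{
    int i, j;

    for (i = 0; i < NPROCS; i++)        /* sender */
        for (j = 0; j < NPROCS; j++)    /* destination */
            recv[j][i] = send[i][j];
}

/* Usage example: returns 1 if every item arrives where expected. */
int demo_all_to_all(void)
{
    int send[NPROCS][NPROCS], recv[NPROCS][NPROCS];
    int i, j;

    for (i = 0; i < NPROCS; i++)
        for (j = 0; j < NPROCS; j++)
            send[i][j] = 10 * i + j;    /* tag: sender i, destination j */
    all_to_all(send, recv);
    for (i = 0; i < NPROCS; i++)
        for (j = 0; j < NPROCS; j++)
            if (recv[j][i] != 10 * i + j)
                return 0;
    return 1;
}
```

In the bucket sort above, item (i, j) would be small bucket j of processor i, so after the exchange processor j holds every small bucket j and can sort them as its final bucket.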