Generalised linear models

Generalised linear models. Generalised linear model Exponential family Example: Log-linear model - Poisson distribution Example: logistic model- Binomial distribution Deviances Model selection R commands for generalised linear models. Shortcomings of general linear model.

Generalised linear models

E N D

Presentation Transcript

Generalised linear models • Generalised linear model • Exponential family • Example: Log-linear model - Poisson distribution • Example: logistic model- Binomial distribution • Deviances • Model selection • R commands for generalised linear models

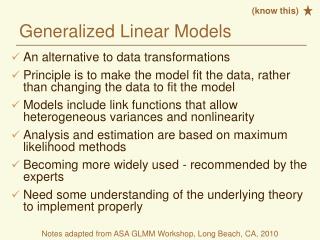

Shortcomings of general linear model One of the main assumptions for linear model is that errors are additive. I.e. observations are equal to their expectation value plus an error.. Another assumption used in test statistics for linear model is that distribution of observations is normal. What happens if these assumptions break down, e.g. errors are additive for some function of the expected value and distributions are not normal? There are class of problems that are widely being used in such fields as medicine, biosciences. They are especially important when observations are categorical, i.e. they have discrete values. This class of problems are usually dealt with using generalised linear models. Let us consider these problems. First let us consider generalised exponential family.

Generalised linear model Linear models are useful when the distributions of the observations are or can be approximated with normal distribution. Even if it is not the case, for large number of observations normal distribution is a safe assumption. However there are many cases when different model should be used. Generalised linear model is a way of generalising linear models to a wide range of distributions. If the distribution of the observations is the from the family of generalised exponential family and mean value (or some function of it) of this distribution is linear on the input parameters then generalised linear model can be used. Generalised exponential family has a form: Following distributions belong to the generalised exponential family (note that parameters we are considering are the mean values and for simplicity take S()=1). Other members of this family include: gamma, exponential and many others.

Generalised linear model: Exponential family Natural exponential family of distributions has a form: S() is a scale parameter. We can replace A() with by change of variables. In this case is called canonical parameter Many distributions including normal, binomial, Poisson, exponential distributions belong to this family. Moment generating function is: Then the first moment (mean value) and the second central moments are:

Generalised linear model If the distribution of observations is one of the distributions from the exponential family and some function of the expected value of the observations is a linear function of the parameters then generalised linear model can be used: Function g is called the link function. That is a function that links observations with parameters of interest. Or it links predictors with responses. Here is a list of the popular distribution and corresponding link functions: binomial - logit = ln(p/(1-p)) normal - identity Gamma - inverse Poisson - log All good statistical packages have implementation of several generalised linear models. To fit using generalised linear model, likelihood function is written

Link function and parameters Canonical link function for exponential families are equal to canonical parameter: For example for normal distribution it is identity function: For binomial distribution it is logit function: For Poisson distribution it is

Generalised linear model: maximum likelihood To estimate parameters in generalised linear models with maximum likelihood is used. Let us write it with canonical parameter with natural link function Here we assumed that the form of the distributions for different observations are the same but parameters are different. It is a non-linear optimisation problem. This type of problems are usually solved iteratively. One of he techniques used is iteratively weighted least-squares technique. Unfortunately closed form relations (unbiasedness of mean, equations for covariance estimator) that hold for linear models cannot be used here.

Poisson distribution: log-linear model If the distribution of the observations is Poisson then log-linear model could be used. Recall that Poisson distribution is from exponential family and the function A of the mean value is logarithm. It can be handled using generalised linear model. When log-linear model is appropriate: When outcomes are frequencies (expressed as integers) then log-linear model is appropriate. When we fit log-linear model then we can find estimated mean using exponential function: Example: Relation between gray hair and age Age gray hair under 40 over 40 yes 27 18 no 33 22

Binomial distribution: logistic model If the distribution of the results of experiment is binomial, i.e. outcomes are 0 or 1 (success or failure) then logistic model can be used. Recall that a function of mean value has the form: This function has a special name – logit. It has several advantages: If logit() has been estimated then we can find and it is between 0 and 1. If probability of success is larger than failure then this function is positive, otherwise it is negative. Changing places of success and failure changes only the sign of this function. This model can be used when outcomes are binary (0 and 1). If logit() is linear then we can find : For logistic model either grouped variables (fraction of successes) or individual items (every individual have success (1) or failure (0)) can be used. Ratio of the probability of success to the probability of failure is also called odds.

Tests for generalised linear models Tests applied for linear model are not easily extended to generalised linear models. In linear models such statistics as t.test, F.test are in common use. Validity of these tests are justified if the distributions of observations are normal. One of the general statistical tests that is used in many different applications is likelihood ratio test. What is the likelihood ratio test?

Likelihood ratio test Let us assume that we have a sample of size n (x=(x1,,,,xn)) and we want to estimate a parameter vector =(1,2). Both 1 and 2can also be vectors. We want to test null-hypothesis against alternative one: Let us assume that likelihood function is L(x| ). Then likelihood ratio test works as follows: 1) Maximise the likelihood function under null-hypothesis (I.e. fix parameter(s) 1 equal to 10 , find the value of likelihood at the maximum, 2)maximise the likelihood under alternative hypothesis (I.e. unconditional maximisation), find the value of the likelihood at the maximum, then find the ratio: w is the likelihood ratio statistic. Tests carried out using this statistic are called likelihood ratio tests. In this case it is clear that: If the value of w is small then null-hypothesis is rejected. If g(w) is the the density of the distribution for w then critical region can be calculated using:

Deviances In linear model, we maximise the likelihood with full model and under the hypothesis. The ratio of the values of the likelihood function under two hypotheses (null and alternative) is related to F-distribution. Interpretation is that how much variance would increase if we would remove part of the model (null hypothesis). In logisitc and log-linear models, again likelihood function is maximised under the null-and alternative hypotheses. Then logarithm (deviance) of ratio of the values of the likelihood under these two hypotheses asymptotically has chi-squared distribution: That is the difference between maximum achievable log-likelihood and the value of likelihood at the estimated paramters That is the reason why in log-linear and logistic regressions it is usual to talk about deviances and chi-squared statistics instead of variances and F-statistics. Analysis based on log-linear and logistic models (in general for generalised linear models) is usually called analyisis of deviances. Reason for this is that chi-squared is related to deviation of the fitted model and observations. Another test is based on Pearson’s chi-squared test. It approaches asympttically to chi-squared with n observtion minus n parameter degree of freedom.

Example Let us take the data esoph from R. data(esoph) That is a data set “from a case-control study of (o)esophageal cancer in Ile-et-Vilaine, France” attach(esoph) model1 = glm(cbind(ncases,ncontrols) ~ agegp + tobgp * alcgp,data = esoph, family = binomial()) summary(model1) gives all sort of information about each parameters. They meant to show significance of each etimated parameter. It also gives information about deviances. Null deviance corresponds to the fit with one parameter and residual deviance with all parameters.

R commands for log-linear model log-linear model can be analysed using generalised linear model. Once the factors, the data and the formula have been decided then we can use: result <- glm(data~formula,family=‘poisson’) It will give us fitted model. Then we can use plot(result) summary(result) Interpretation of the results is similar to that for linear model ANOVA tables. Degrees of freedom is defined similarly. Only difference is that instead of sum of squares deviances are used.

R commands for logistic regression Similar to log-linear model: Decide what are the data, the factors and what formula should be used. Then use generalised linear model to fit. result <- glm(data~formula,family=‘binomial’) then analyse using anova(result,test=“Chisq”) summary(result) plot(result)

Bootstrap There are different ways of applying bootstrap for these cases: • Sample the original observation with design matrix • Sample the residuals and add them to the fitted values (for each member of family and each link function it should be done differently) • Use estimated parameters and do parametric sampling. • fit the model (using glm and family of distributions) • For each cell in the design matrix find the parameters of the distribution • Sample using the distribution with this parameter • Fit the model again and save coefficients (or any other statistics of interest) • Repeat 3 and 4 B times • Build distributions and other properties

Model selection problem There are at least two techniques for model selection. The first one is well known Akaike’s Information Criterion (AIC). It has the form: AIC = 2p-2log(L) Where p is the number of parameters of the model and L is the value of the likelihood function at the maximum. AIC attempts to combine two conflicting factors. If we increase the number of parameters then likelihood function should not decrease. So AIC tries to tell if increase in the likelihood justifies the increase of the number of parameters. The second way for model selection is use of more general purpose cross-validation.

Model selection: Cross validation Cross validation would work as follows: • Select one of the models • Divide data randomly into K roughly equal sizes • For subset k=1,K fit the model using all data excluding k-th subset • Calculate prediction error for k-th subset • Repeat 3) and 4) for all subsets and calculate overall prediction error • Go to step 1) and do all steps for all the models under consideration • Select the model that gives lowest prediction error Note that calculating prediction error may not be a straightforward task

Exercise Exercise will be ready on Friday.

References • McCullagh P. and Nelder, J. A. (1989) Generalized Linear Models. London: Chapman and Hall. • Myers, RM, Montgomery, DC and Vining GG. Generalized linear models: with application in Engineering and the Sciences • McCullagh CP, Searle, (2001) Generalized, linear and mixed models