Efficient Sorting Methods and Analysis for Data Structures

Learn about insertion sort, red-black tree, heapsort, quicksort, analysis of running times, recurrence trees, randomized quicksort, and comparison models for sorting algorithms. Understand the complexity and strategies for efficient sorting.

Presentation Transcript

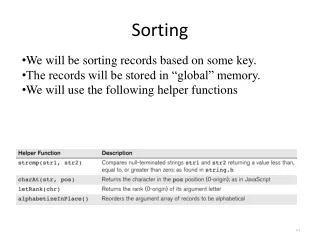

Sorting • We have already seen two efficient ways to sort:

A kind of “insertion” sort • Insert the elements into a red-black tree one by one • Traverse the tree in in-order and collect the keys • Takes O(nlog(n)) time
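The tree-based sort above can be sketched as follows. This is only an illustration under a loud assumption: to stay short it uses a plain, unbalanced binary search tree as a stand-in for the red-black tree, so the O(n log n) bound is not guaranteed by this code. The names `Node`, `insert`, `in_order`, and `tree_sort` are hypothetical.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None


def insert(root, key):
    """Insert key into the BST rooted at root; return the root.

    ASSUMPTION: no rebalancing is done here, unlike a red-black tree.
    """
    if root is None:
        return Node(key)
    node = root
    while True:
        if key < node.key:
            if node.left is None:
                node.left = Node(key)
                return root
            node = node.left
        else:
            if node.right is None:
                node.right = Node(key)
                return root
            node = node.right


def in_order(root, out):
    """Append the keys to out in sorted order (iterative in-order walk)."""
    stack, node = [], root
    while stack or node is not None:
        while node is not None:
            stack.append(node)
            node = node.left
        node = stack.pop()
        out.append(node.key)
        node = node.right


def tree_sort(keys):
    """Insert the keys one by one, then collect them with an in-order walk."""
    root = None
    for k in keys:
        root = insert(root, k)
    out = []
    in_order(root, out)
    return out
```

Swapping in a balanced tree would keep every insertion at O(log n) and give the stated total.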

Heapsort (Williams, Floyd, 1964) • Put the elements in an array • Make the array into a heap • Repeatedly do a deletemin and put the deleted element in the position at the end of the array that the shrinking heap has just freed

Put the elements in the heap • Q = 79 65 26 24 19 15 29 23 33 40 7 (array positions 0 to 10)

Make the elements into a heap • Call Heapify-down on positions 4, 3, 2, 1, 0 in turn; Q is shown after each call

Heapify-down(Q,4) • 19 moves below 7 • Q = 79 65 26 24 7 15 29 23 33 40 19

Heapify-down(Q,3) • 24 moves below 23 • Q = 79 65 26 23 7 15 29 24 33 40 19

Heapify-down(Q,2) • 26 moves below 15 • Q = 79 65 15 23 7 26 29 24 33 40 19

Heapify-down(Q,1) • 65 moves below 7, then below 19 • Q = 79 7 15 23 19 26 29 24 33 40 65

Heapify-down(Q,0) • 79 moves below 7, then below 19, then below 40 • Q = 7 19 15 23 40 26 29 24 33 79 65
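The heap-building steps traced above can be reproduced with a short sketch. It assumes a min-heap stored in a 0-based Python list, where the children of position i are at 2i+1 and 2i+2; the function names `heapify_down` and `build_heap` are illustrative, not taken from the slides.

```python
def heapify_down(q, i):
    """Sift q[i] down until neither child is smaller (min-heap, 0-based)."""
    n = len(q)
    while True:
        left, right = 2 * i + 1, 2 * i + 2
        smallest = i
        if left < n and q[left] < q[smallest]:
            smallest = left
        if right < n and q[right] < q[smallest]:
            smallest = right
        if smallest == i:
            return
        q[i], q[smallest] = q[smallest], q[i]
        i = smallest


def build_heap(q):
    """Heapify from the last internal node up to the root."""
    for i in range(len(q) // 2 - 1, -1, -1):
        heapify_down(q, i)


q = [79, 65, 26, 24, 19, 15, 29, 23, 33, 40, 7]
build_heap(q)
print(q)  # [7, 19, 15, 23, 40, 26, 29, 24, 33, 79, 65]
```

For 11 elements the loop starts at position 4, which matches the first Heapify-down call in the example.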

Summary • We can build the heap in linear time (we already did this analysis) • We still have to deletemin the elements one by one in order to sort, and that takes O(nlog(n)) time
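Putting both phases together, a minimal in-place heapsort might look like the sketch below. It assumes a min-heap in a 0-based list, so moving each deleted minimum to the last free position leaves the array in decreasing order; `heapify_down` and `heapsort_descending` are illustrative names.

```python
def heapify_down(q, i, size):
    """Sift q[i] down within the min-heap occupying q[0:size]."""
    while True:
        left, right = 2 * i + 1, 2 * i + 2
        smallest = i
        if left < size and q[left] < q[smallest]:
            smallest = left
        if right < size and q[right] < q[smallest]:
            smallest = right
        if smallest == i:
            return
        q[i], q[smallest] = q[smallest], q[i]
        i = smallest


def heapsort_descending(q):
    """Sort q in place into decreasing order using a min-heap."""
    n = len(q)
    # Phase 1: build the heap (linear time overall).
    for i in range(n // 2 - 1, -1, -1):
        heapify_down(q, i, n)
    # Phase 2: n - 1 deletemin steps, each O(log n).
    for last in range(n - 1, 0, -1):
        q[0], q[last] = q[last], q[0]  # the minimum goes to the last free slot
        heapify_down(q, 0, last)       # restore the heap on q[0:last]
    return q
```

To get increasing order, one could reverse the result or use a max-heap instead.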

quicksort • Input: an array A[p, r]

Quicksort(A, p, r)
    if (p < r) then
        q = Partition(A, p, r)   // q is the position of the pivot element
        Quicksort(A, p, q-1)
        Quicksort(A, q+1, r)

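Partition example on 2 8 7 1 3 5 6 4 (pivot x = A[r] = 4) • Start: 2 8 7 1 3 5 6 4 • j passes 2 (stays in place), then 8 and 7 (both larger than 4): 2 8 7 1 3 5 6 4 • j reaches 1, which is exchanged with 8: 2 1 7 8 3 5 6 4 • j reaches 3, which is exchanged with 7: 2 1 3 8 7 5 6 4 • j passes 5 and 6 (both larger than 4): 2 1 3 8 7 5 6 4 • Final exchange of A[i+1] with A[r]: 2 1 3 4 7 5 6 8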

Partition(A, p, r)
    x ← A[r]
    i ← p-1
    for j ← p to r-1 do
        if A[j] ≤ x then
            i ← i+1
            exchange A[i] ↔ A[j]
    exchange A[i+1] ↔ A[r]
    return i+1
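The Quicksort and Partition pseudocode translates almost line for line into Python. The sketch below assumes 0-based indices and inclusive bounds p and r, and runs Partition on the example array 2 8 7 1 3 5 6 4.

```python
def partition(a, p, r):
    """Partition a[p..r] around the pivot a[r]; return the pivot's final index."""
    x = a[r]
    i = p - 1
    for j in range(p, r):          # j runs over p .. r-1
        if a[j] <= x:
            i += 1
            a[i], a[j] = a[j], a[i]
    a[i + 1], a[r] = a[r], a[i + 1]
    return i + 1


def quicksort(a, p, r):
    """Sort a[p..r] in place."""
    if p < r:
        q = partition(a, p, r)     # q is the position of the pivot element
        quicksort(a, p, q - 1)
        quicksort(a, q + 1, r)


a = [2, 8, 7, 1, 3, 5, 6, 4]
q = partition(a, 0, len(a) - 1)
print(q, a)  # 3 [2, 1, 3, 4, 7, 5, 6, 8]
quicksort(a, 0, len(a) - 1)
print(a)     # [1, 2, 3, 4, 5, 6, 7, 8]
```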

Analysis • Running time is proportional to the number of comparisons • Each pair is compared at most once, so the running time is O(n²) • In fact, for each n there is an input of size n on which quicksort takes at least cn² time, so the worst case is Ω(n²)
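One way to see the quadratic worst case is to count comparisons on an already sorted input. The sketch below assumes the last-element pivot rule and uses an explicit stack instead of recursion so that large inputs do not hit Python's recursion limit; `count_comparisons` is a hypothetical helper name.

```python
def count_comparisons(a):
    """Quicksort a in place (pivot = last element); return the number of key comparisons."""
    comparisons = 0
    stack = [(0, len(a) - 1)]
    while stack:
        p, r = stack.pop()
        if p >= r:
            continue
        x, i = a[r], p - 1
        for j in range(p, r):
            comparisons += 1           # one comparison of a[j] with the pivot
            if a[j] <= x:
                i += 1
                a[i], a[j] = a[j], a[i]
        a[i + 1], a[r] = a[r], a[i + 1]
        stack.append((p, i))           # left part:  p .. q-1, where q = i+1
        stack.append((i + 2, r))       # right part: q+1 .. r
    return comparisons


n = 1000
print(count_comparisons(list(range(n))))  # 499500, which is n(n-1)/2
```

On sorted input the pivot is always the maximum, so each partition removes only one element and every pair ends up compared exactly once.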

But • Assume that the split is even in each iteration

T(n) = 2T(n/2) + bn • How do we solve recurrences like this? (read Chapter 4)

Recurrence tree • Root: bn, with two subtrees T(n/2) • Next level: two nodes of cost bn/2 each, with four subtrees T(n/4) • The tree has logn levels • In every level we do bn comparisons • So the total number of comparisons is O(nlogn)
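The level-by-level sum in the recurrence tree can also be written out by unrolling the recurrence. The derivation below assumes, beyond what the slide states, that n is a power of 2 and that T(1) is a constant.

```latex
\begin{align*}
T(n) &= 2\,T(n/2) + bn \\
     &= 4\,T(n/4) + 2 \cdot \frac{bn}{2} + bn = 4\,T(n/4) + 2bn \\
     &\;\;\vdots \\
     &= 2^{k}\,T(n/2^{k}) + k\,bn \\
     &= n\,T(1) + bn\log_2 n \qquad (k = \log_2 n) \\
     &= O(n \log n).
\end{align*}
```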

Observations • We can’t guarantee good splits • But intuitively on random inputs we will get good splits

Randomized quicksort • Use randomized-partition rather than partition

Randomized-partition(A, p, r)
    i ← random(p, r)
    exchange A[r] ↔ A[i]
    return Partition(A, p, r)
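A runnable sketch of randomized quicksort follows. It assumes that random(p, r) on the slide means a uniform choice from p..r with both ends included, which is what `random.randint` provides in Python.

```python
import random


def partition(a, p, r):
    """Partition a[p..r] around the pivot a[r]; return the pivot's final index."""
    x = a[r]
    i = p - 1
    for j in range(p, r):
        if a[j] <= x:
            i += 1
            a[i], a[j] = a[j], a[i]
    a[i + 1], a[r] = a[r], a[i + 1]
    return i + 1


def randomized_partition(a, p, r):
    i = random.randint(p, r)       # uniform over p..r, both ends inclusive
    a[r], a[i] = a[i], a[r]        # move the random pivot to the last position
    return partition(a, p, r)


def randomized_quicksort(a, p, r):
    """Sort a[p..r] in place using random pivots."""
    if p < r:
        q = randomized_partition(a, p, r)
        randomized_quicksort(a, p, q - 1)
        randomized_quicksort(a, q + 1, r)
```

Because the pivot no longer depends on the input order, a sorted input is no worse than any other input in expectation.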

On the same input we may get a different running time in each run! • So for one particular input, look at the average of the running times over all runs

Expected # of comparisons • Let X be the # of comparisons • This is a random variable • Want to know E(X)

Expected # of comparisons • Let z1, z2, ..., zn be the elements in sorted order • Let Xij = 1 if zi is compared to zj and 0 otherwise • So X is the sum of Xij over all pairs i < j

Consider Zij ≡ {zi, zi+1, ..., zj} • Claim: zi and zj are compared if and only if either zi or zj is the first pivot chosen from Zij • Proof: 3 cases for the first pivot chosen from Zij: • The pivot is zi: zi and zj are compared on this partition, and never again • The pivot is zj: the same • The pivot is some zk with i < k < j: zi and zj are not compared on this partition. The partition separates them, so no future partition uses both.

Pr{zi is compared to zj} = Pr{zi or zj is first pivot chosen from Zij} (just explained) = Pr{zi is first pivot chosen from Zij} + Pr{zj is first pivot chosen from Zij} (mutually exclusive possibilities) = 1/(j-i+1) + 1/(j-i+1) = 2/(j-i+1)

Simplify with a change of variable, k=j-i+1. Simplify and overestimate, by adding terms.
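The two simplification steps can be written out as follows. The formulas from the original slide are not present in this transcript, so this is a reconstruction of the standard argument from the definitions above (Xij, the probability 2/(j-i+1), and the change of variable k = j-i+1), not a copy of the slide.

```latex
\begin{align*}
E[X] &= E\Bigl[\sum_{i=1}^{n-1}\sum_{j=i+1}^{n} X_{ij}\Bigr]
      = \sum_{i=1}^{n-1}\sum_{j=i+1}^{n} \Pr\{z_i \text{ is compared to } z_j\} \\
     &= \sum_{i=1}^{n-1}\sum_{j=i+1}^{n} \frac{2}{j-i+1} \\
     &= \sum_{i=1}^{n-1}\sum_{k=2}^{n-i+1} \frac{2}{k} \qquad (k = j-i+1) \\
     &< \sum_{i=1}^{n-1}\sum_{k=1}^{n} \frac{2}{k} \qquad \text{(overestimate by adding terms)} \\
     &= \sum_{i=1}^{n-1} O(\log n) = O(n \log n).
\end{align*}
```

The last step uses the fact that the harmonic sum 1 + 1/2 + ... + 1/n is O(log n).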

A lower bound • Comparison model: we assume that comparisons are the only operations from which we deduce the order among keys • Then we prove that we need Ω(nlogn) comparisons in the worst case

Model the algorithm as a decision tree • (Figure: decision tree for sorting the three keys 1, 2, 3)

Important Observations • Every comparison-based sorting algorithm can be represented as a (binary) tree like this • Each root-to-leaf path corresponds to a run on some input • The worst case # of comparisons corresponds to the longest path

The lower bound • Let d be the length of the longest path • n! ≤ #leaves ≤ 2^d • So log2(n!) ≤ d

Lower Bound for Sorting • Any sorting algorithm based on comparisons between elements requires Ω(N log N) comparisons in the worst case.
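The step from log2(n!) ≤ d to an Ω(n log n) bound needs an estimate of log2(n!). One standard way to get it, shown here as a sketch rather than as the slides' own derivation, is to keep only the largest half of the factors:

```latex
\begin{align*}
\log_2(n!) &= \sum_{k=1}^{n} \log_2 k
  \;\ge\; \sum_{k=\lceil n/2 \rceil}^{n} \log_2 k
  \;\ge\; \frac{n}{2}\,\log_2\frac{n}{2}
  \;=\; \Omega(n \log n).
\end{align*}
```

The second inequality holds because the remaining sum has at least n/2 terms and each of them is at least log2(n/2).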

Beating the lower bound • We can beat the lower bound if we can deduce order relations between keys by means other than comparisons • Examples: • Counting sort • Radix sort
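As a small illustration of sorting without comparing keys to each other, here is a minimal counting sort sketch. It assumes the keys are integers in a known range 0..max_key (an assumption not stated on the slide); the `max_key` parameter and the function name are hypothetical.

```python
def counting_sort(keys, max_key):
    """Sort integers from the range 0..max_key in O(n + max_key) time.

    Keys are used as array indices, never compared with one another.
    """
    counts = [0] * (max_key + 1)
    for k in keys:
        counts[k] += 1
    out = []
    for value, c in enumerate(counts):
        out.extend([value] * c)    # emit each value as many times as it occurred
    return out


print(counting_sort([3, 1, 4, 1, 5, 9, 2, 6], 9))  # [1, 1, 2, 3, 4, 5, 6, 9]
```

The running time is linear only when max_key is O(n), which is why this does not contradict the comparison lower bound.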

Linear time sorting • Or assume something about the input: random, “almost sorted”

Sorting an almost sorted input • Suppose we know that the input is “almost” sorted • Let I be the number of “inversions” in the input: the number of pairs ai, aj such that i < j and ai > aj

Example • 1, 4, 5, 8, 3: I = 3 • 8, 7, 5, 3, 1: I = 10
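The two inversion counts in the example can be checked with a brute-force counter. The sketch also includes an insertion sort that counts its element shifts; that it performs exactly I shifts is a standard fact that this part of the transcript does not state, included here only as an illustration. Both function names are hypothetical.

```python
def count_inversions(a):
    """Count pairs (i, j) with i < j and a[i] > a[j] by brute force, O(n^2)."""
    n = len(a)
    return sum(1 for i in range(n) for j in range(i + 1, n) if a[i] > a[j])


def insertion_sort_shifts(a):
    """Return (sorted copy of a, number of element shifts performed)."""
    a = list(a)
    shifts = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        while j >= 0 and a[j] > key:
            a[j + 1] = a[j]        # each shift removes exactly one inversion
            j -= 1
            shifts += 1
        a[j + 1] = key
    return a, shifts


print(count_inversions([1, 4, 5, 8, 3]))       # 3
print(count_inversions([8, 7, 5, 3, 1]))       # 10
print(insertion_sort_shifts([1, 4, 5, 8, 3]))  # ([1, 3, 4, 5, 8], 3)
```

So on an input with few inversions, insertion sort does roughly n + I work, which is close to linear when I is small.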