A Simpler Minimum Spanning Tree Verification Algorithm

A Simpler Minimum Spanning Tree Verification Algorithm. Valerie King July 31,1995. Agenda. Abstract Introduction Boruvka tree property Komlós algorithm for full branching tree Implementation of Komlós’s algorithm Analysis. Abstract .

A Simpler Minimum Spanning Tree Verification Algorithm

E N D

Presentation Transcript

A Simpler Minimum Spanning Tree Verification Algorithm Valerie King July 31,1995

Agenda • Abstract • Introduction • Boruvka tree property • Komlós algorithm for full branching tree • Implementation of Komlós’s algorithm • Analysis

Abstract • The Problem considered here is that of determining whether a given spanning tree is a minimal spanning tree. • 1984, Komlós presented an algorithm which required only a linear number of comparisons, but nonlinear overhead to determine which comparisons to make. • This paper simplifies Komlós’s algorithm, and gives a linear time procedure( use table lookup functions ) for its implementation .

Introduction(1/5) • Paper research history • Robert E. Tarjan --Department of Computer SciencePrinceton University --Achievements in the design and analysisof algorithms and data structures. --Turing Award

Introduction(2/5) • DRT algorithm step1.Decompose--separates the tree into a large subtree and many “microtrees” step2.Vertify each root of adjacent microtrees has shortest path between them. step3.Find the MST in each microtrees • MST algorithm of KKT randomized algorithm

Introduction(3/5) • János Komlós --Department of Mathematics Rutgers, The State University of New Jersey • Komlós’s algorithm was the first to use a linear number of comparisons, but no linear time method of deciding which comparisons to make has been known.

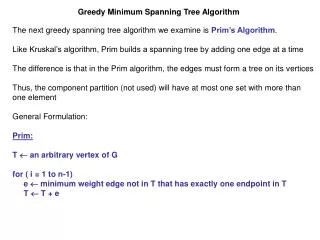

Introduction(4/5) • A spanning tree is a minimum spanning tree iff the weight of each nontree edge {u,v} is at least the weight of the heaviest edge in the path in the tree between u and v. • Query paths --”tree path” problem of finding the heaviest edges in the paths between specified pairs of nodes. • Full branching tree

Introduction(5/5) • If T is a spanning tree, then there is a simple O(n) algorithm to construct a full branching tree B with no more than 2n edges . • The weight of the heaviest edge in T(x,y) is the weight of the heaviest edge in B(x,y).

Boruvka Tree Property (1/12) • Let T be a spanning tree with n nodes. • Tree B is the tree of the components that are formed when Boruvka algorithm is applied to T. • Boruvak algorithm • Initially there are n blue trees consisting of the nodes of V and no edges. • Repeat edge contraction until there is one blue tree.

Boruvka Tree Property (2/12) • Tree B is constructed with node set W and edge set F by adding nodes and edges to B after each phase of Boruvka algorithm. • Initial phase • For each node v V, create a leaf f(v) of B.

Boruvka Tree Property (3/12) • Adding phase • Let A be the set of blue trees which are joined into one blue tree t in a phase i. • Add a new node f(t) to W and add edge to F.

Boruvka Tree Property (4/12) 1 3 8 2 3 11 10 4 4 5 6 6 7 9 7 8 9 1 2 3 6 4 7 8 5 9

Boruvka Tree Property (5/12) 1 3 8 2 3 11 10 4 t1 t2 t3 t4 4 5 6 3 4 6 7 9 3 4 9 6 7 6 9 7 8 9 1 2 3 6 4 7 8 5 9

Boruvka Tree Property (6/12) t t1 8 10 8 8 11 11 10 t3 t4 t2 t1 t2 t3 t4 3 4 6 7 9 3 4 9 6 1 2 3 6 4 7 8 5 9

Boruvka Tree Property (7/12) • The number of blue trees drops by a factor of at least two after each phase. • Note that B is a full branching tree • i.e., it is rooted and all leaves are on the same level and each internal node has at least two children.

Boruvka Tree Property (8/12) • Theorem 1 • Let T be any spanning tree and let B be the tree constructed as described above. • For any pair of nodes x and y in T, the weight of the heaviest edge in T(x,y) equals the weight of the heaviest edge in B(f(x), f(y)).

Boruvka Tree Property (9/12) • First, we prove that for every edge , there is an edge such that w(e’)≥w(e). • Let e = {a,b} and let a be the endpoint of e. • Then a = f(t) for some blue tree t which contains either x or y.

Boruvka Tree Property (10/12) • Let e’ be the edge in T(x,y) with exactly one endpoint inblue tree t. Since t had the option of selecting e’,w(e’)≥w(e). e B e’’ r a f(x) b Blue tree t f(y) e’ a f(x)

Boruvka Tree Property (11/12) • Claim: Let e be a heaviest edge in T(x,y). Then there is an edge of the same weight in B(f(x), f(y)). • If e is selected by a blue tree which contains x or y then an edge in B(f(x), f(y)) is labeled with w(e). • On the contrary, assume that e is selected by a blue tree which does not contain x or y. This blue tree contained one endpoint of e and thus one intermediate node on the path from x to y.

Boruvka Tree Property (12/12) • Therefore it is incident to at least two edges on the path. Then e is the heavier of two, giving a contradiction. y x i e t

Komlos’s Algorithm for a Full Branching Tree • The goal is to find the heaviest edge on the path between each pair • Break up each path into two half-paths extending from the leaf up to the lowest common ancestor of the pair and find the heaviest edge in each half-path • The heaviest edge in each query path is determined with one additional comparison per path

Komlos’s Algorithm for a Full Branching Tree (cont’d) • Let A(v) be the set of the paths which contain v and is restricted to the interval [root, v] intersecting the query paths • Starting with the root, descend level by level to determine the heaviest edge in each path in the set A(v)

Komlos’s Algorithm for a Full Branching Tree (cont’d) • Let p be the parent of v, and assume that the heaviest edge in each path in the set A(p) is known • We need only to compare w({v,p}) to each of these weights in order to determine the heaviest edge in each path in A(v) • Note that it can be done by binary search

Data Structure (1/4) • Node Labels • Node: DFS (leaves 0, 1, 2, …; internal longest all 0’s suffix) • lgn bits • Edge Tags • Edge: <distance(v), i(v)> • distance(v): v’s distance from the root. • i(v): the index of the rightmost 1 in v’s label. • Label Property • Given the tag of any edge e and the label of any node on the path from e to any leaf, e can be located in constant time.

Full Branching Tree 0(0000) <1, 0> <1, 4> 0(0000) 8(1000) <2, 0> <2, 2> <2, 4> <2, 2> <2, 3> 0(0000) 4(0100) 8(1000) 10(1010) 6(0110) <3, 2> <3, 3> <3, 1> <3, 1> <3, 4> <3, 1> <3, 1> <3, 2> <3, 0> <3, 1> <3, 2> <3, 1> 0(0000) 1(0001) 2(0010) 3(0011) 4(0100) 5(0101) 6(0110) 7(0111) 8(1000) 9(1001) 10(1010) 11(1011)

Data Structure (2/4) • LCA • LCA(v) is a vector of length wordsize whose ith bit is 1 iff there is a path in A(v) whose upper endpoint is at distance i from the root. • That is, there is a query path with exactly one endpoint contained in the subtree rooted at v, such that the lowest common ancestor of its two endpoints is at distance i form the root. • A(v)’s representation

Data Structure (3/4) • BigLists & SmallLists • For any node v, the ith longest path in A(v) will be denoted by Ai(v). • The weight of an edge e is denoted w(e). • V is big if |A(v)| > (wordsize/tagsize); O.W v is small. • For each big node v, we keep an ordered list whose ith element is the tag of the heaviest edge in Ai(v) for i = 1, …, |A(v)|. • This list is a referred to as bigList(v). • BigList(v) is stored in ┌|A(v) / (wordsize/tagsize)|┐

Data Structure (4/4) • BigLists & SmallLists • For each small v, let a be the nearest big ancestor of v. For each such v, we keep an ordered list, smallList(v), whose ith element is • either the tag of the heaviest edge e in Ai(v), • or if e is in the interval [a, root], then the j such that Ai(v|a) = Aj(a). • That is, j is a pointer to the entry of bigList(a) which contains the tag for e. • Once a tag appear in a smallList, all the later entries in the list are tags. (a pointer to the first tag) • SmallList is stored in a single word. (logn)

The Algorithm (1/6) • The goal is to generate bigList(v) or smallList(v) in time proportional to log|A(v)|, so that time spent implementing Komlos’s algorithm at each node does not exceed the worst case number of comparisons needed at each node. • We show that • If v is big O(loglogn) • If v is small O(1).

The Algorithm (2/6) • Initially, A(root) = Ø. We proceed down the tree, from the parent p to each of the children v. • Depending on |A(v)|, we generate either bigList(v|p) or smallList(v|p). • Compare w({v, p}) to the weights of these edges, by performing binary search on the list, and insert the tag of {v, p} in the appropriate places to form bigList(v) or smallList(v). • Continue until the leaves are reached.

The Algorithm (3/6) • selectr • Ex: select(010100, 110000) = (1,0) • selectSr • Ex: selectS((01), (t1, t2)) = (t2) • weightr • Ex: weight(011000) = 2 • indexr • Ex: index(011000) = (2, 3) (2, 3 are pointers) • subword1 • (00100100)

The Algorithm (4/6) • Let v be any node, p is its parent, and a its nearest big ancestor. To compute A(v|p): • If v is small • If p is small create smallList(v|p) from smallList(p) in O(1) time. • ex:Let LCA(v) = (01001000), LCA(p) = (11000000). Let smallList(p) be (t1, t2). Then L = select(11000000, 01001000) = (01); and smallList(v|p) = selectS((01), (t1, t2)) = (t2) • If p is big create smallList(v|p) from LCA(v) and LCA(p) in O(1) time. • ex:Let LCA(p) = (01101110), LCA(v) = (01001000). Then smallList(v|p) = index(select(01101110, 01001000)) = index(10100) = (1, 3).

The Algorithm (5/6) • If (v has a big ancestor) • create bigList(v|a) from bigList(a),LCA(v), and LCA(a) in time O(lglgn). • Ex: Let LCA(a) = (01101110), LCA(v) = (00100101), and let bigList(a) be (t1, t2, t3, t4, t5). Then L = (01010); L1 = (01), L2 = (01), and L3 = (0); b1 = (t1, t2), b2 = (t3, t4), b3 = (t5). Then (t2) = selectS((01), (t1, t2)); (t4) = selectS((01), (t3, t4)); and () = selectS(t5). Thus bigList = (t2, t4). • If (p != a) create bigList(v|p) from bigList(v|a) and smallList(p) in time O(lglgn). • Ex: bigList(v|a) = (t2, t4), smallList(p) = (2, t’). Then bigList(v|p) = (t2, t’). • If (v doesn’t have a big ancestor) • bigList(v|p)← smallList(p).

The Algorithm (6/6) • To insert a tag in its appropriate places in the list: • Let e = {v, p}, • And let i be the rank of w(e) compared with the heaviest edges of A(v|p). • Then we insert the tag for e in position i through |A(v)|, into our list data structure for v • in time O(1) if v is small, • or O(loglogn) if v is big. • Ex: Let smallList(v|p) = (1, 3). Then t is the tag of {v, p}. To put t into positions 1 to j = |A(v)| = 2, we compute t * subword1 = t * 00100100 = (t, t) followed some extra 0 bits, which are discarded to get smallList(v|p) = (t, t).