Introduction to Reinforcement Learning: Concepts and Applications

This course provides a comprehensive introduction to reinforcement learning (RL), focusing on learning through interaction with environments to achieve specific goals. Topics include Markov Decision Processes (MDPs), Partially Observable MDPs (POMDPs), and feedback control systems. Assessments consist of homework and exams, designed to enhance understanding of RL principles through practical applications such as robot navigation. This course is suitable for students with a background in mathematics and some exposure to machine learning.

Presentation Transcript

Reinforcement Learning: Introduction. Subramanian Ramamoorthy, School of Informatics, 17 January 2012

Admin Lecturer: Subramanian (Ram) Ramamoorthy IPAB, School of Informatics s.ramamoorthy@ed (preferred method of contact) Informatics Forum 1.41, x505119 Main Tutor: Majd Hawasly, M.Hawasly@sms.ed, IF 1.43 Class representative? Mailing list: Are you on it – I will use it for announcements! Reinforcement Learning

Admin
Lectures:
• Tuesday and Friday 12:10 - 13:00 (FH 3.D02 and AT 2.14)
Assessment: Homework/Exam 10+10% / 80%
• HW1: Out 7 Feb, Due 23 Feb
• Use MDP-based methods in a robot navigation problem
• HW2: Out 6 Mar, Due 29 Mar
• POMDP version of the previous exercise

Admin
Tutorials (M. Hawasly, K. Etessami, M. von Rossum), tentatively:
T1 [Warm-up: Formulation, bandits] - week of 30th Jan
T2 [Dyn. Prog.] - week of 6th Feb
T3 [MC methods] - week of 13th Feb
T4 [TD methods] - week of 27th Feb
T5 [POMDP] - week of 12th Mar
• We’ll assign questions (combination of pen & paper and computational exercises) – you attempt them before sessions.
• Tutor will discuss and clarify concepts underlying exercises
• Tutorials are not assessed; gain feedback from participation

Admin
Webpage: www.informatics.ed.ac.uk/teaching/courses/rl
• Lecture slides will be uploaded as they become available
Readings:
• R. Sutton and A. Barto, Reinforcement Learning, MIT Press, 1998
• S. Thrun, W. Burgard, D. Fox, Probabilistic Robotics, MIT Press, 2006
• Other readings: uploaded to web page as needed
Background: Mathematics, Matlab, Exposure to machine learning?

Problem of Learning from Interaction
• with environment
• to achieve some goal
• Baby playing. No teacher. Sensorimotor connection to environment.
– Cause – effect
– Action – consequences
– How to achieve goals
• Learning to drive a car, hold a conversation, etc.
• Environment’s response affects our subsequent actions
• We find out the effects of our actions later

Rough History of RL Ideas
• Psychology – learning by trial and error … actions followed by good or bad outcomes have their tendency to be reselected altered accordingly
• Selectional: try alternatives and pick good ones
• Associative: associate alternatives with particular situations
• Computational studies (e.g., credit assignment problem)
• Minsky’s SNARC, 1950
• Michie’s MENACE, BOXES, etc. 1960s
• Temporal Difference learning (Minsky, Samuel, Shannon, …)
• Driven by differences between successive estimates over time

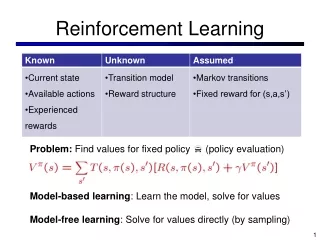

Rough History of RL, contd.
• In 1970-80, many researchers, e.g., Klopf, Sutton & Barto, …, looked seriously at issues of “getting results from the environment” as opposed to supervised learning (distinction is subtle!)
• Although supervised learning methods such as backpropagation were sometimes used, emphasis was different
• Stochastic optimal control (mathematics, operations research)
• Deep roots: Hamilton-Jacobi → Bellman/Howard
• By the 1980s, people began to realize the connection between MDPs and the RL problem as above…

What is the Nature of the Problem?
• As you can tell from the history, there are many ways to understand the problem – you will see this as we proceed through the course
• One unifying perspective: Stochastic optimization over time
• Given (a) Environment to interact with, (b) Goal
• Formulate cost (or reward)
• Objective: Maximize rewards over time
• The catch: Reward may not be rich enough as optimization is over time – selecting entire paths
• Let us unpack this through a few application examples…
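The catch about selecting entire paths can be illustrated with a toy problem. The sketch below is not from the lecture: the states, actions and reward values are hypothetical, chosen only so that the action with the best immediate reward leads to the worse path overall.

```python
# Hypothetical two-step decision problem (all names and numbers are illustrative).
# rewards[state][action] = (immediate reward, next state); next state None ends the episode.
rewards = {
    "start": {"left": (1.0, "dead_end"), "right": (0.0, "junction")},
    "dead_end": {"stay": (0.0, None)},
    "junction": {"go": (5.0, None)},
}


def path_return(state, actions):
    """Sum the rewards collected by following a fixed sequence of actions."""
    total = 0.0
    for action in actions:
        reward, state = rewards[state][action]
        total += reward
    return total


def best_return(state):
    """Search every path from `state` and return the maximum total reward."""
    if state is None:
        return 0.0
    return max(r + best_return(nxt) for r, nxt in rewards[state].values())


# Choosing the larger immediate reward first ...
greedy = path_return("start", ["left", "stay"])
# ... versus giving up reward now to collect more later.
planned = path_return("start", ["right", "go"])

print(greedy, planned, best_return("start"))
```

The immediate reward at "start" favours "left", yet the best total reward comes from the path through "right". This is why the optimization has to be over paths rather than single steps.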

Motivating RL Problem 1: Control

The Notion of Feedback Control
Compute corrective actions so as to minimise a measured error
Design involves the following:
• What is a good policy for determining the corrections?
• What performance specifications are achievable by such systems?

Feedback Control
• The Proportional-Integral-Derivative Controller Architecture
• ‘Model-free’ technique, works reasonably in simple (typically first & second order) systems
• More general: consider feedback architecture, u = -Kx
• When applied to a linear system, dx/dt = Ax + Bu, closed-loop dynamics: dx/dt = (A - BK)x
• Using basic linear algebra, you can study dynamic properties
• e.g., choose K to place the eigenvalues and eigenvectors of the closed-loop system
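A minimal sketch of the eigenvalue check mentioned above, assuming the linear system is written dx/dt = Ax + Bu, so that the feedback u = -Kx gives the closed-loop matrix A - BK. The double-integrator matrices and the gain values are illustrative assumptions, not taken from the slides, and the helper functions are hypothetical and limited to two states and one input.

```python
import cmath


def closed_loop_matrix(A, B, K):
    """Return A - B K for a 2-state, single-input system (B as a column, K as a row)."""
    return [[A[i][j] - B[i] * K[j] for j in range(2)] for i in range(2)]


def eigenvalues_2x2(M):
    """Eigenvalues of a 2x2 matrix, computed from its trace and determinant."""
    trace = M[0][0] + M[1][1]
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    root = cmath.sqrt(trace * trace - 4.0 * det)
    return (trace + root) / 2.0, (trace - root) / 2.0


# Assumed example: double integrator (position, velocity) driven by a force input.
A = [[0.0, 1.0], [0.0, 0.0]]
B = [0.0, 1.0]
K = [2.0, 3.0]  # illustrative gains

Acl = closed_loop_matrix(A, B, K)
poles = eigenvalues_2x2(Acl)
print(Acl, poles)
```

With these gains the closed-loop characteristic polynomial is s^2 + 3s + 2, so both eigenvalues have negative real part and the state decays to zero. Changing K moves the eigenvalues, which is the design freedom the slide refers to.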

The Optimal Feedback Controller
• Begin with the following:
• Dynamics: “Velocity” = f(State, Control)
• Cost: Integral involving State/Control squared (e.g., x’Qx)
• Basic idea: Optimal control actions correspond to a cost or value surface in an augmented state space
• Computation: What is the path equivalent of f’(x) = 0?
• In the special case of linear dynamics and quadratic cost, we can explicitly solve the resulting Riccati equation

The Linear Quadratic Regulator
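As a hedged illustration of the linear-quadratic case, the sketch below treats only a scalar system, where the Riccati equation has a closed-form solution. The notation is assumed rather than taken from the slides: dynamics dx/dt = a x + b u, cost equal to the integral of q x^2 + r u^2, value function p x^2, and feedback u = -k x with k = b p / r. The function name `scalar_lqr` is hypothetical.

```python
import math


def scalar_lqr(a, b, q, r):
    """Solve the scalar continuous-time Riccati equation 2*a*p - (b*p)**2/r + q = 0.

    Returns the positive root p and the feedback gain k = b*p/r.
    """
    p = r * (a + math.sqrt(a * a + b * b * q / r)) / (b * b)
    k = b * p / r
    return p, k


# Illustrative numbers: an unstable open-loop system (a > 0).
a, b, q, r = 1.0, 1.0, 3.0, 1.0
p, k = scalar_lqr(a, b, q, r)

# Substituting p back into the Riccati equation should give (numerically) zero.
riccati_residual = 2.0 * a * p - (b * p) ** 2 / r + q
# Closed-loop dynamics: dx/dt = (a - b*k) x.
closed_loop_pole = a - b * k
print(p, k, riccati_residual, closed_loop_pole)
```

The quadratic p x^2 is the scalar analogue of a value surface: it gives the optimal cost-to-go from state x, and the optimal control follows from it. For matrix-valued problems a numerical Riccati solver would be used instead of this closed form.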

Idea in Pictures
Main point to take away: notion of value surface

Connection between Reinforcement Learning and Control Problems
• RL has close connection to stochastic control (and OR)
• Main differences seem to arise from what is ‘given’
• Also, motivations such as adaptation
• In RL, we emphasize sample-based computation, stochastic approximation

Example Application 2: Inventory Control
• Objective: Minimize total inventory cost
• Decisions:
• How much to order?
• When to order?

Components of Total Cost
• Cost of items
• Cost of ordering
• Cost of carrying or holding inventory
• Cost of stockouts
• Cost of safety stock (extra inventory held to help avoid stockouts)

The Economic Order Quantity Model: How Much to Order?
• Demand is known and constant
• Lead time is known and constant
• Receipt of inventory is instantaneous
• Quantity discounts are not available
• Variable costs are limited to: ordering cost and carrying (or holding) cost
• If orders are placed at the right time, stockouts can be avoided

Inventory Level Over Time Based on EOQ Assumptions

EOQ Model Total Cost
At optimal order quantity (Q*): Carrying cost = Ordering cost
[Figure labels: Demand, Costs]
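The balance between carrying and ordering cost can be checked numerically with the standard EOQ formula Q* = sqrt(2 D S / H). The symbols are assumptions that do not appear on the slides: D is annual demand, S is the cost of placing one order, and H is the cost of holding one unit for a year. The demand and cost figures are illustrative only.

```python
import math


def eoq(demand, order_cost, holding_cost):
    """Economic order quantity under the standard EOQ assumptions."""
    return math.sqrt(2.0 * demand * order_cost / holding_cost)


def annual_costs(quantity, demand, order_cost, holding_cost):
    """Return (ordering cost, carrying cost) per year for a given order quantity."""
    ordering = (demand / quantity) * order_cost   # number of orders times cost per order
    carrying = (quantity / 2.0) * holding_cost    # average inventory times holding cost
    return ordering, carrying


# Illustrative numbers only.
D, S, H = 1000.0, 10.0, 0.5
q_star = eoq(D, S, H)
ordering, carrying = annual_costs(q_star, D, S, H)
total_at_q_star = ordering + carrying
total_at_150 = sum(annual_costs(150.0, D, S, H))
total_at_250 = sum(annual_costs(250.0, D, S, H))
print(q_star, ordering, carrying, total_at_150, total_at_250)
```

At the computed Q* the two cost components are equal, and ordering either less (150) or more (250) raises the total. The formula depends on every assumption in the EOQ model holding.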

Realistically, How Much to Order – If these Assumptions Didn’t Hold?
• Demand is known and constant
• Lead time is known and constant
• Receipt of inventory is instantaneous
• Quantity discounts are not available
• Variable costs are limited to: ordering cost and carrying (or holding) cost
• If orders are placed at the right time, stockouts can be avoided
The result may be the need for a more detailed stochastic optimization.

Example Application 3: Dialogue Mgmt. [S. Singh et al., JAIR 2002]

Dialogue Management: What is Going On?
• System is interacting with the user by choosing things to say
• The space of possible policies for things to say is huge, e.g., 2^42 in NJFun
Some questions:
• What is the model of dynamics?
• What is being optimized?
• How much experimentation is possible?
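To give a sense of how quickly the space of policies grows, the sketch below counts deterministic policies as the product of the number of available actions in each decision state. The figures used (42 decision states with two actions each) are an assumed illustration of how a count of 2^42 could arise; the transcript does not state the breakdown.

```python
def count_deterministic_policies(actions_per_state):
    """A deterministic policy picks one action per state, so the counts multiply."""
    total = 1
    for n_actions in actions_per_state:
        total *= n_actions
    return total


# Assumed illustration: 42 decision states, each offering a binary choice.
n_policies = count_deterministic_policies([2] * 42)
print(n_policies)
```

Even with only two choices per state, the count runs to trillions. Exhaustively trying policies on real users is therefore not an option, which motivates the question of how much experimentation is possible.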

The Dialogue Management Loop

Common Themes in these Examples
• Stochastic Optimization – make decisions! Over time; may not be immediately obvious how we’re doing
• Some notion of cost/reward is implicit in problem – defining this, and constraints to defining this, are key!
• Often, we may need to work with models that can only generate sample traces from experiments