Fast Multi-Threading on Shared Memory Multi-Processors

Fast Multi-Threading on Shared Memory Multi-Processors. Joseph Cordina B.Sc. Computer Science and Physics Year IV. Aims of Project. Implementation of MESH, a user level threads package, on to a shared memory multi-processor machine

Presentation Transcript

Fast Multi-Threading on Shared Memory Multi-Processors
Joseph Cordina
B.Sc. Computer Science and Physics, Year IV

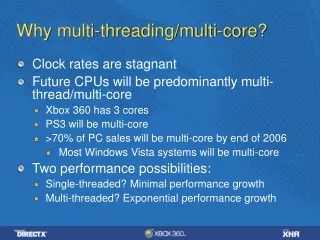

Aims of Project
• Implement MESH, a user level threads package, on a shared memory multi-processor machine
• Take advantage of concurrent processing while keeping the advantages of fine grain user level thread scheduling with low latency context switching
• Enable concurrent inter-process communication on the same machine and over an Ethernet network through the NIC

What Is MESH?
• A tightly coupled, fine grain, uni-processor user level thread scheduler for the C language
• Provides an environment in which to manage user level threads
• Uses inline active context switching, relying on the compiler's knowledge of the registers in use at any one time (minimum context switch time 55 ns)
• Direct hardware access to the NIC, close to the maximum theoretical limit when using jumbo frames
• Communication API supports message pools, ports and poolports

Concurrent Resource Access
• Scheduler entry points are explicit
• With more than one thread of execution, scheduler entry can occur concurrently
• Access to global data needs to be protected from concurrent access
• Concurrent data reads do not need to be protected
• Data writes cannot occur concurrently with data reads
• Resources are protected by spin-locks with small critical sections, keeping busy wait time to a minimum
• Spin on read to preserve the cache
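The spin-on-read idea above can be sketched with C11 atomics. This is only an illustration and not MESH's actual lock: the names `spin_lock`, `spin_acquire`, `spin_release` and `counter_add` are hypothetical. While the lock is held, waiters loop on a plain load that is served from their own cache, and only attempt the atomic exchange once the lock looks free.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical spin lock type; a zero-initialised lock is unlocked. */
typedef struct {
    atomic_bool locked;
} spin_lock;

void spin_init(spin_lock *l)
{
    atomic_init(&l->locked, false);
}

void spin_acquire(spin_lock *l)
{
    for (;;) {
        /* Spin on read: while the lock is held, only load the flag.
         * The line stays shared in the local cache and no write
         * traffic is generated. */
        while (atomic_load_explicit(&l->locked, memory_order_relaxed))
            ;
        /* The lock looked free: now try the atomic test-and-set. */
        if (!atomic_exchange_explicit(&l->locked, true,
                                      memory_order_acquire))
            return;
    }
}

void spin_release(spin_lock *l)
{
    atomic_store_explicit(&l->locked, false, memory_order_release);
}

/* Example of a small critical section around shared global data. */
spin_lock counter_lock;
long shared_counter;

void counter_add(long n)
{
    spin_acquire(&counter_lock);
    shared_counter += n;          /* keep the critical section short */
    spin_release(&counter_lock);
}
```

A lock of this kind only pays off when the critical section is a handful of instructions, as in `counter_add`; longer sections would keep other processors busy waiting.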

Scheduling in SMP-MESH
• A shared run queue stores user level thread descriptors at 32 levels of priority
• Multiple kernel level threads access it to retrieve threads and place new ones, which can lead to data corruption
• A lock-protected run queue forces synchronisation
• Fine thread granularity increases contention for the run queue lock
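A minimal sketch of such a lock-protected run queue follows. The structure layout and the names `uthread`, `run_queue`, `rq_put` and `rq_get` are assumptions made for illustration, as is the convention that level 0 is the highest priority; the slides do not describe MESH's real data structures.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>

#define NUM_PRIORITIES 32

/* Hypothetical user level thread descriptor. */
struct uthread {
    int id;
    struct uthread *next;
};

struct run_queue {
    atomic_bool lock;                      /* one lock for the whole queue */
    struct uthread *head[NUM_PRIORITIES];  /* one FIFO per priority level */
    struct uthread *tail[NUM_PRIORITIES];
};

void rq_init(struct run_queue *rq)
{
    atomic_init(&rq->lock, false);
    for (int p = 0; p < NUM_PRIORITIES; p++)
        rq->head[p] = rq->tail[p] = NULL;
}

static void rq_lock(struct run_queue *rq)
{
    while (atomic_exchange_explicit(&rq->lock, true, memory_order_acquire))
        ;
}

static void rq_unlock(struct run_queue *rq)
{
    atomic_store_explicit(&rq->lock, false, memory_order_release);
}

/* Append a thread to the FIFO of its priority level. */
void rq_put(struct run_queue *rq, struct uthread *t, int prio)
{
    assert(prio >= 0 && prio < NUM_PRIORITIES);
    t->next = NULL;
    rq_lock(rq);
    if (rq->tail[prio])
        rq->tail[prio]->next = t;
    else
        rq->head[prio] = t;
    rq->tail[prio] = t;
    rq_unlock(rq);
}

/* Remove the oldest thread of the highest non-empty priority level
 * (assumption: level 0 is highest). Returns NULL if the queue is empty. */
struct uthread *rq_get(struct run_queue *rq)
{
    struct uthread *t = NULL;
    rq_lock(rq);
    for (int p = 0; p < NUM_PRIORITIES; p++) {
        if (rq->head[p]) {
            t = rq->head[p];
            rq->head[p] = t->next;
            if (!rq->head[p])
                rq->tail[p] = NULL;
            break;
        }
    }
    rq_unlock(rq);
    return t;
}
```

Every `rq_put` and `rq_get` takes the same lock, which is why very fine grained threads, each visiting the queue after a short burst of work, contend heavily for it.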

Scheduling in SMP-MESH (2)
• Linux does not provide a private memory area for each kernel level thread, unlike SunOS LWPs
• Kernel level threads need to identify themselves, which is achieved by comparing stack space
• The number of kernel level threads should equal the number of processors for best utilization
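One way self identification by stack comparison could work is sketched below: each kernel level thread's stack range is recorded once, and a thread later finds its own index by checking which range contains the address of one of its local variables. The table and the names `klt_register`, `klt_id_of` and `klt_self` are hypothetical, and registration is assumed to finish before the threads start running concurrently.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MAX_KLTS 8

/* Stack range [base, base + size) of one kernel level thread. */
struct klt_stack {
    uintptr_t base;
    size_t size;
};

static struct klt_stack klt_stacks[MAX_KLTS];
static int klt_count;

/* Record a stack range; returns the identifier assigned to it.
 * Assumed to be called before the threads run concurrently. */
int klt_register(void *base, size_t size)
{
    assert(klt_count < MAX_KLTS);
    klt_stacks[klt_count].base = (uintptr_t)base;
    klt_stacks[klt_count].size = size;
    return klt_count++;
}

/* Map an address to the thread whose stack contains it, or -1. */
int klt_id_of(const void *addr)
{
    uintptr_t a = (uintptr_t)addr;
    for (int i = 0; i < klt_count; i++) {
        /* Unsigned subtraction wraps when a < base, so a single
         * comparison checks both ends of the range. */
        if (a - klt_stacks[i].base < klt_stacks[i].size)
            return i;
    }
    return -1;
}

/* Identify the calling thread from the address of a local variable,
 * which necessarily lives on the caller's own stack. */
int klt_self(void)
{
    char marker;
    return klt_id_of(&marker);
}
```

With one kernel level thread per processor the table stays tiny, so the linear scan costs only a few comparisons.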

Well Behaved Idling
• Upon finding the run queue empty, kernel level threads sleep in the kernel, giving up the processor for other applications to execute
• Sleeping on a semaphore removes the risk of a lost wakeup, unlike signals and message passing
• Upon re-awakening, new user level threads are passed directly to the sleeping thread without going through the run queue
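The idling scheme can be sketched with a POSIX semaphore per kernel level thread. The `klt` structure and the functions `klt_idle` and `klt_wake_with` are assumptions made for illustration; how MESH tracks which kernel level threads are idle is not described in the slides and is left out here. Because a semaphore counts its posts, a wakeup issued just before the thread goes to sleep is remembered rather than lost.

```c
#include <assert.h>
#include <errno.h>
#include <semaphore.h>
#include <stddef.h>

/* Hypothetical user level thread descriptor. */
struct uthread {
    int id;
};

/* Hypothetical per kernel level thread idle state. */
struct klt {
    sem_t wake;               /* the thread sleeps here when idle */
    struct uthread *handoff;  /* thread handed over directly by the waker */
};

int klt_init(struct klt *k)
{
    k->handoff = NULL;
    return sem_init(&k->wake, 0, 0);   /* initial count 0: wait blocks */
}

/* Called by a kernel level thread that found the run queue empty.
 * Sleeps in the kernel until another thread hands it work. */
struct uthread *klt_idle(struct klt *k)
{
    while (sem_wait(&k->wake) == -1 && errno == EINTR)
        ;   /* retry if the wait was interrupted by a signal */
    struct uthread *t = k->handoff;
    k->handoff = NULL;
    return t;
}

/* Called by a running kernel level thread that knows k is idle:
 * pass the new user level thread directly, bypassing the run queue. */
void klt_wake_with(struct klt *k, struct uthread *t)
{
    k->handoff = t;        /* published before the post */
    sem_post(&k->wake);    /* counted, so not lost if k is not asleep yet */
}
```

Calling `klt_wake_with` before the target has reached `klt_idle` is harmless: the later `sem_wait` returns immediately and the handed-over thread is picked up.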

Load Balancing
• No kernel level thread is idle while a user level thread is on the shared run queue
• The run queue's FIFO structure ensures that the oldest threads are executed first
• Cache consistency is not ensured when using a shared run queue

Communication in SMP-MESH
• Inter-thread communication on the same system and between different systems
• Every instance of a message pool, port or poolport has a private lock, allowing maximum concurrent communication
• Consecutive memory needs to be protected when creating messages
• Message transmission to the NIC uses a lock; reception from the NIC uses no lock

Results
• 500,000 context switches at differing thread granularity
• At fine thread granularity, contention for shared resources gives worse performance on an SMP machine than on a uni-processor machine

Conclusion
• SMP-MESH takes advantage of multi-processors for fine grain multi-threading
• Concurrency is encouraged in all areas unless a risk of data corruption exists
• Overheads relative to the uni-processor MESH are expected, yet are counterbalanced as the number of processors is increased
• Considerable speedup is available at minimum cost; the main disadvantage is the need for more careful synchronisation in application design