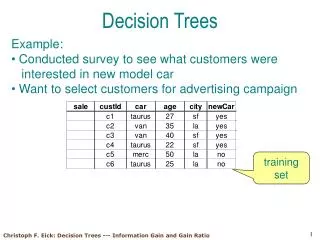

Decision Trees Tutorial: Information Gain and Pruning Techniques

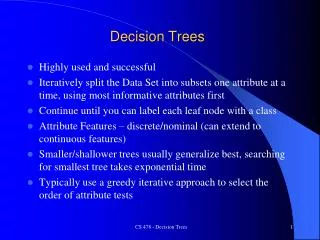

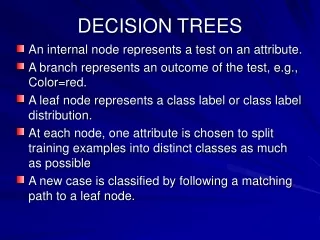

Learn about Decision Trees, Information Gain, and Node-based Pruning techniques for data analysis and classification. Understand logical expressions, entropy, and maximizing accuracy.

Presentation Transcript

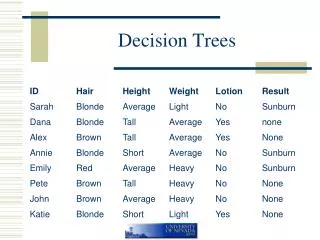

Decision Trees • 10-601 Recitation • 1/17/08 • Mary McGlohon • mmcgloho+10601@cs.cmu.edu

Announcements • HW 1 out: DTs and basic probability • Due Mon, Jan 28 at start of class • Matlab • High-level language, specialized for matrices • Built-in plotting software, lots of math libraries • Available on campus lab machines • Interest in a tutorial? • Smiley Award plug

AttendClass? Represent this tree as a logical expression. [Figure: decision tree for AttendClass. Root: Raining. Raining = False → Yes; Raining = True → Is10601. Is10601 = True → Yes; Is10601 = False → Material. Material = Old → No; Material = New → Before10. Before10 = True → No; Before10 = False → Yes.] • AttendClass = Yes if: (Raining = False) OR (Is10601 = True) OR (Material = New AND Before10 = False)
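As a quick check, here is a minimal Python sketch (the function names are hypothetical) that walks the AttendClass tree from the root and compares it with the logical expression on all 16 combinations of attribute values. Material is encoded as the string "New" or "Old"; the other attributes are booleans.

```python
from itertools import product


def attend_tree(raining: bool, is10601: bool, material: str, before10: bool) -> bool:
    """Follow the tree from the root (Raining) down to a leaf."""
    if not raining:
        return True           # Raining = False -> Yes
    if is10601:
        return True           # Raining = True, Is10601 = True -> Yes
    if material == "Old":
        return False          # Material = Old -> No
    return not before10       # Material = New: Before10 = True -> No, False -> Yes


def attend_expr(raining: bool, is10601: bool, material: str, before10: bool) -> bool:
    """(Raining = False) OR (Is10601 = True) OR (Material = New AND Before10 = False)."""
    return (not raining) or is10601 or (material == "New" and not before10)


combos = list(product([True, False], [True, False], ["New", "Old"], [True, False]))
mismatches = [c for c in combos if attend_tree(*c) != attend_expr(*c)]
print(f"checked {len(combos)} combinations, mismatches: {mismatches}")
```

An empty mismatch list confirms that the tree and the expression describe the same function.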

Split decisions • There are other, logically equivalent trees. • How do we know which one to use? • It depends on what is important to us.
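To make "logically equivalent" concrete, here is a hypothetical alternative tree that tests Material first. It is only an illustration, not a tree from the slides: it uses six internal tests instead of four, yet it agrees with the AttendClass expression on every input.

```python
from itertools import product


def attend_expr(raining: bool, is10601: bool, material: str, before10: bool) -> bool:
    """(Raining = False) OR (Is10601 = True) OR (Material = New AND Before10 = False)."""
    return (not raining) or is10601 or (material == "New" and not before10)


def material_first_tree(raining: bool, is10601: bool, material: str, before10: bool) -> bool:
    """Hypothetical equivalent tree whose root tests Material."""
    if material == "Old":
        if not raining:       # Old, Raining = False -> Yes
            return True
        return is10601        # Old, Raining = True -> answer depends on Is10601
    # Material = New
    if not before10:          # New, Before10 = False -> Yes
        return True
    if not raining:           # New, Before10 = True, Raining = False -> Yes
        return True
    return is10601            # New, Before10 = True, Raining = True -> Is10601


agree = all(
    material_first_tree(*c) == attend_expr(*c)
    for c in product([True, False], [True, False], ["New", "Old"], [True, False])
)
print("equivalent:", agree)
```

Both trees compute the same function, so the choice between them has to come from some other criterion, such as the expected number of bits discussed next.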

Information Gain • Classically, we rely on “information gain”, which is based on the principle that we want to use the fewest bits, on average, to get our message across. • Suppose I want to send a weather forecast with 4 possible outcomes: Rain, Sun, Snow, and Tornado. With a fixed-length code, 4 outcomes need 2 bits. • In Pittsburgh there’s Rain 90% of the time, Snow 5%, Sun 4.9%, and Tornado 0.1%. So if you assign Rain a 1-bit message, you rarely send more than 1 bit.
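The following sketch puts numbers on the forecast example. It assumes Tornado has probability 0.1%, so that the four probabilities sum to 1, and it uses a hypothetical prefix-free code (Rain = "0", Snow = "10", Sun = "110", Tornado = "111") in which the most likely outcome gets the shortest message. The entropy of the distribution is the lower bound on the average message length of any such code.

```python
import math

# Forecast probabilities; Tornado = 0.001 is an assumption so the total is exactly 1
forecast = {"Rain": 0.90, "Snow": 0.05, "Sun": 0.049, "Tornado": 0.001}

# Hypothetical prefix-free code: no codeword is a prefix of another
code = {"Rain": "0", "Snow": "10", "Sun": "110", "Tornado": "111"}

# Average message length with the variable-length code
expected_bits = sum(p * len(code[outcome]) for outcome, p in forecast.items())

# Entropy H = -sum p log2 p: the best achievable average length
entropy_bits = -sum(p * math.log2(p) for p in forecast.values())

print("fixed-length code: 2 bits per message")
print(f"variable-length code: {expected_bits:.3f} bits per message on average")
print(f"entropy: {entropy_bits:.3f} bits")
```

The variable-length code averages well under the 2 bits of a fixed-length code because the frequent outcome, Rain, is cheap to send.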

Entropy • Set S has 6 positive and 2 negative examples. • H(S) = -0.75 log2(0.75) - 0.25 log2(0.25) ≈ 0.8113
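A minimal sketch of the entropy computation from class counts (the function name is illustrative). Classes with a count of zero are skipped, following the usual convention that 0 · log2(0) = 0.

```python
import math


def entropy(counts):
    """H = -sum_i p_i log2 p_i, where p_i is the fraction of examples in class i."""
    total = sum(counts)
    h = 0.0
    for c in counts:
        if c > 0:              # an empty class contributes nothing
            p = c / total
            h -= p * math.log2(p)
    return h


print(round(entropy([6, 2]), 4))  # 0.8113
```

A 50/50 split such as `entropy([2, 2])` gives the maximum of 1 bit for two classes, and a pure set such as `entropy([4, 0])` gives 0.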

Conditional Entropy • “The average number of bits it would take to encode a message Y, given knowledge of X.” • H(Y | X) = sum over x of P(X = x) * H(Y | X = x)

Conditional Entropy • H(Attend | Rain) = H(Attend | Rain=T) * P(Rain=T) + H(Attend | Rain=F) * P(Rain=F) = 1 * 0.5 + 0 * 0.5 = 0.5 • (The Rain=T subset has entropy 1; the Rain=F subset has entropy 0.)
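Here is a sketch of H(Y | X) computed directly from paired observations. The 8-example dataset is a reconstruction, not given explicitly on the slides: it matches 6 Yes / 2 No overall, P(Rain=T) = 0.5, entropy 1 for the Rain=T subset (so 2 Yes / 2 No) and entropy 0 for the Rain=F subset (all Yes).

```python
import math
from collections import Counter


def entropy(labels):
    """Entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def conditional_entropy(xs, ys):
    """H(Y | X) = sum over x of P(X = x) * H(Y | X = x)."""
    n = len(xs)
    total = 0.0
    for x in set(xs):
        subset = [y for xi, y in zip(xs, ys) if xi == x]
        total += len(subset) / n * entropy(subset)
    return total


# Reconstructed data: 4 rainy days (2 Yes, 2 No) and 4 dry days (all Yes)
rain = [True, True, True, True, False, False, False, False]
attend = ["Yes", "Yes", "No", "No", "Yes", "Yes", "Yes", "Yes"]

print(conditional_entropy(rain, attend))
```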

Information Gain • IG(S, A) = H(S) - H(S | A) • “How much conditioning on attribute A increases our knowledge of S (decreases its entropy).”

Information Gain • IG(Attend, Rain) = H(Attend) - H(Attend | Rain) = 0.8113 - 0.5 = 0.3113
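The same number can be computed from class counts alone. In this sketch (function names are illustrative), the per-branch counts, 2 Yes / 2 No for Rain=T and 4 Yes / 0 No for Rain=F, are inferred from the subset entropies on the previous slide rather than stated on the slides.

```python
import math


def entropy(counts):
    """Entropy (in bits) of a list of class counts; zero counts are skipped."""
    total = sum(counts)
    return -sum(c / total * math.log2(c / total) for c in counts if c > 0)


def information_gain(parent_counts, children_counts):
    """IG(S, A) = H(S) - sum over values v of (|S_v| / |S|) * H(S_v)."""
    n = sum(parent_counts)
    remainder = sum(sum(child) / n * entropy(child) for child in children_counts)
    return entropy(parent_counts) - remainder


# Attend: 6 Yes / 2 No overall; Rain=T -> [2 Yes, 2 No], Rain=F -> [4 Yes, 0 No]
print(round(information_gain([6, 2], [[2, 2], [4, 0]]), 4))  # 0.3113
```

When growing a tree, the attribute with the largest gain at a node is the one chosen for the split there.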

What about this? For some dataset, could we ever build this DT? [Figure: a larger tree rooted at Material. Material = Old leads to a Raining test, Material = New leads to a Before10 test, and Raining and Is10601 are tested again deeper in the tree.] • What if you were taking 20 classes, and it rains 90% of the time? • If most information is gained from Material or Before10, we may never need to traverse down to the Is10601 test. So even a bigger tree (node-wise) may be “simpler” for some sets of data.

Node-based pruning • Until further pruning is harmful: • For each node n in the trained tree T: • Let Tn’ be T without n (and its descendants). Replace the removed node with a leaf holding the “best choice” under that traversal (the most common label among examples reaching it). • Record the error of Tn’ on the validation set. • Let T = Tk’, where Tk’ is the pruned tree with the best performance on the validation set.
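The loop above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the `Node` class is hypothetical, each internal node is assumed to already store the majority label of the training examples that reach it (used as the "best choice" when it is pruned), and ties in validation accuracy are resolved in favor of pruning, since the slide only stops when pruning becomes harmful.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Node:
    attr: Optional[str] = None     # attribute tested here; None marks a leaf
    children: Dict[object, "Node"] = field(default_factory=dict)
    label: object = None           # leaf prediction, or majority label at an internal node


def classify(node: Node, x: dict) -> object:
    while node.attr is not None:
        child = node.children.get(x[node.attr])
        if child is None:          # unseen value: fall back to the majority label
            return node.label
        node = child
    return node.label


def accuracy(tree: Node, data: List[dict]) -> float:
    return sum(classify(tree, x) == x["label"] for x in data) / len(data)


def internal_paths(node: Node, path: Tuple = ()):
    """Yield the branch-value path from the root to every internal node."""
    if node.attr is None:
        return
    yield path
    for value, child in node.children.items():
        yield from internal_paths(child, path + (value,))


def without_node(node: Node, path: Tuple) -> Node:
    """Copy of the tree with the node at `path` (and descendants) replaced by a leaf."""
    if not path:
        return Node(label=node.label)
    children = dict(node.children)
    children[path[0]] = without_node(node.children[path[0]], path[1:])
    return Node(attr=node.attr, children=children, label=node.label)


def prune(tree: Node, validation: List[dict]) -> Node:
    best_acc = accuracy(tree, validation)
    while True:
        candidates = [without_node(tree, p) for p in internal_paths(tree)]
        if not candidates:
            return tree                     # already a single leaf
        acc, pruned = max(((accuracy(t, validation), t) for t in candidates),
                          key=lambda pair: pair[0])
        if acc < best_acc:                  # further pruning is harmful: stop
            return tree
        tree, best_acc = pruned, acc


# Hypothetical tree and validation set (labels chosen only for illustration)
tree = Node("Raining", {
    False: Node(label="Yes"),
    True: Node("Is10601", {True: Node(label="Yes"), False: Node(label="No")}, label="Yes"),
}, label="No")
validation = [
    {"Raining": False, "Is10601": False, "label": "Yes"},
    {"Raining": True, "Is10601": False, "label": "Yes"},
    {"Raining": True, "Is10601": True, "label": "Yes"},
]
pruned_tree = prune(tree, validation)
print(accuracy(tree, validation), "->", accuracy(pruned_tree, validation))
```

Here removing the Is10601 test raises validation accuracy from 2/3 to 1, while removing the root as well would drop it to 0, so pruning stops after one step.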

Node-based pruning • For each node, record the validation-set performance of the tree without that node. • Suppose our initial tree has 0.7 accuracy on the validation set. • Let’s test the node reached by Material = New, Before10 = True. Suppose that most examples where Material = New and Before10 = True are “Yes”, so our new subtree has a “Yes” leaf there. [Figure: the larger tree with the subtree under Material = New, Before10 = True replaced by a “Yes” leaf.] • Now, test this tree! Suppose we get 0.73 accuracy on this pruned tree. • Repeat the test procedure by removing a different node from the original tree...

Node-based pruning • Try this tree (with a different node pruned)... [Figure: the original larger tree with an Is10601 test replaced by a “No” leaf.] Now, test this tree and record its accuracy. • Once we have tested all possible prunings, modify our tree T with the pruning that has the best performance. • Repeat the entire pruning-selection procedure on the new T, replacing T each time with the best-performing pruned tree, until we no longer gain anything by pruning.

Rule-based pruning [Figure: the larger tree rooted at Material.] • 1. Convert the tree to rules, one for each leaf: • IF Material=Old AND Raining=False THEN Attend=Yes • IF Material=Old AND Raining=True AND Is601=True THEN Attend=Yes • ...
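Step 1 can be sketched as a depth-first walk that records the attribute tests along each root-to-leaf path. The encoding is an assumption for illustration: a leaf is a label string and an internal node is a pair (attribute, {branch value: subtree}). The Material = Old branch follows the rules on the slide; the Material = New branch is collapsed to a single hypothetical "Yes" leaf to keep the example short.

```python
from typing import Dict, Tuple, Union

# Hypothetical encoding: a leaf is a label; an internal node is (attribute, branches)
Tree = Union[str, Tuple[str, Dict[object, "Tree"]]]


def tree_to_rules(tree: Tree, preconditions: tuple = ()) -> list:
    """Return one (preconditions, label) rule per leaf, in left-to-right order."""
    if isinstance(tree, str):
        return [(list(preconditions), tree)]
    attr, branches = tree
    rules = []
    for value, subtree in branches.items():
        rules += tree_to_rules(subtree, preconditions + ((attr, value),))
    return rules


def show(rule) -> str:
    conds, label = rule
    return "IF " + " AND ".join(f"{a}={v}" for a, v in conds) + f" THEN Attend={label}"


tree = ("Material", {
    "Old": ("Raining", {
        False: "Yes",
        True: ("Is601", {True: "Yes", False: "No"}),
    }),
    "New": "Yes",  # simplified placeholder for the Material = New subtree
})

for rule in tree_to_rules(tree):
    print(show(rule))
```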

Rule-based pruning • 2. Prune each rule. For instance, to prune this rule: • IF Material=Old AND Raining=F THEN Attend=T • test each version of the rule with one precondition removed on the validation set, and compare it with the original rule’s performance on that set: • IF Material=Old THEN Attend=T • IF Raining=F THEN Attend=T

Rule-based pruning • Suppose we got the following accuracy for each rule: • IF Material=Old AND Raining=F THEN Attend=T -- 0.6 • IF Material=Old THEN Attend=T -- 0.5 • IF Raining=F THEN Attend=T -- 0.7 • Then we would keep the best one (IF Raining=F THEN Attend=T) and drop the others.

Rule-based pruning • Repeat for the next rule, comparing the original rule with each version that has one precondition removed: • IF Material=Old AND Raining=T AND Is601=T THEN Attend=T -- 0.6 • IF Material=Old AND Raining=T THEN Attend=T -- 0.75 • IF Material=Old AND Is601=T THEN Attend=T -- 0.3 • IF Raining=T AND Is601=T THEN Attend=T -- 0.65 • If a shorter rule works better, we may also choose to prune it further at this step before moving on to the next leaf: • IF Material=Old AND Raining=T THEN Attend=T -- 0.75 • IF Material=Old THEN Attend=T -- 0.3 • IF Raining=T THEN Attend=T -- 0.2 • Well, maybe not this time! Neither shorter rule beats 0.75, so we keep IF Material=Old AND Raining=T THEN Attend=T.
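Step 2 can be sketched as a greedy loop: try every version of the rule with one precondition removed, keep the best version if it beats the current rule, and repeat until nothing improves. Two assumptions here are not from the slides. A rule's accuracy is taken to be the fraction of the validation examples it covers (those matching all preconditions) whose label matches the rule's conclusion, with 0 if it covers nothing. The loop also stops at a single precondition, as the slides do. The validation data is hypothetical.

```python
def rule_accuracy(rule, data):
    """Fraction of covered examples (all preconditions hold) whose label matches."""
    conds, label = rule
    covered = [x for x in data if all(x[a] == v for a, v in conds.items())]
    if not covered:
        return 0.0
    return sum(x["label"] == label for x in covered) / len(covered)


def prune_rule(rule, validation):
    """Greedily drop preconditions while that improves validation accuracy."""
    conds, label = rule
    best_acc = rule_accuracy(rule, validation)
    while len(conds) > 1:
        candidates = []
        for a in conds:
            shorter = {k: v for k, v in conds.items() if k != a}
            candidates.append((rule_accuracy((shorter, label), validation), shorter))
        acc, shorter = max(candidates, key=lambda c: c[0])
        if acc <= best_acc:        # no shorter rule does better: keep the current one
            break
        conds, best_acc = shorter, acc
    return (conds, label), best_acc


# Hypothetical validation examples
validation = [
    {"Material": "Old", "Raining": False, "label": "Yes"},
    {"Material": "Old", "Raining": False, "label": "No"},
    {"Material": "New", "Raining": False, "label": "Yes"},
    {"Material": "New", "Raining": False, "label": "Yes"},
    {"Material": "Old", "Raining": True, "label": "No"},
]
rule = ({"Material": "Old", "Raining": False}, "Yes")
print(prune_rule(rule, validation))
```

On this data the original rule scores 0.5, dropping Material gives 0.75, and dropping Raining gives 1/3, so the pruned rule is IF Raining=False THEN Attend=Yes.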

Rule-based pruning • Once we have done the same pruning procedure for each rule in the tree: • 3. Order the ‘kept rules’ by their accuracy, and do all subsequent classification with that priority: • IF Material=Old AND Raining=T THEN Attend=T -- 0.75 • IF Raining=F THEN Attend=T -- 0.7 • ... (and so on for the other pruned rules) • (Note that you may wind up with a differently structured DT than before, as discussed in class.)
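Step 3 amounts to a priority-ordered rule list: sort the kept rules by accuracy and let the first matching rule decide. In this sketch the default label used when no rule fires, and the third rule (IF Material=Old THEN Attend=F, 0.5), are hypothetical additions included only to show the priority ordering at work.

```python
def order_rules(scored_rules):
    """Sort ((preconditions, label), accuracy) pairs, most accurate first."""
    return sorted(scored_rules, key=lambda pair: pair[1], reverse=True)


def classify(ordered_rules, x, default):
    """Return the label of the first rule whose preconditions all hold."""
    for (conds, label), _acc in ordered_rules:
        if all(x.get(a) == v for a, v in conds.items()):
            return label
    return default  # hypothetical fallback when no rule fires


scored = [
    (({"Raining": False}, True), 0.7),
    (({"Material": "Old", "Raining": True}, True), 0.75),
    (({"Material": "Old"}, False), 0.5),  # hypothetical extra rule
]
rules = order_rules(scored)
print(classify(rules, {"Material": "Old", "Raining": True}, default=False))
```

The example matches both the 0.75 rule and the hypothetical 0.5 rule; the higher-priority rule wins, so the prediction is True.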

Adding randomness • What if you didn’t know whether you had new material? For instance, suppose you wanted to classify an example that reaches the Material node (Raining = True, Is10601 = False) but has no Material value. [Figure: the AttendClass tree with the Material node annotated “where to go?”.] • You could look at the training set and see that when Rain=T and 10601=F, a fraction p of the examples had new material. Then flip a p-biased coin and descend the appropriate branch. • But that might not be the best idea. Why not?
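Here is a sketch of the coin-flip idea, mainly to make the question concrete. The helper names and the training examples are hypothetical. `estimate_p_new` estimates p from training examples with Raining = True and Is10601 = False whose Material is known, and `attend` follows the AttendClass tree, flipping a p-biased coin only when Material is missing.

```python
import random


def estimate_p_new(training):
    """Fraction of rainy, non-10-601 training examples (Material known) with new material."""
    relevant = [x for x in training
                if x["Raining"] and not x["Is10601"] and x.get("Material") is not None]
    return sum(x["Material"] == "New" for x in relevant) / len(relevant)


def attend(x, p_new, rng):
    """Walk the AttendClass tree, flipping a p-biased coin if Material is unknown."""
    if not x["Raining"]:
        return "Yes"
    if x["Is10601"]:
        return "Yes"
    material = x.get("Material")
    if material is None:
        material = "New" if rng.random() < p_new else "Old"  # p-biased coin
    if material == "Old":
        return "No"
    return "No" if x["Before10"] else "Yes"


# Hypothetical training examples
training = [
    {"Raining": True, "Is10601": False, "Material": "New"},
    {"Raining": True, "Is10601": False, "Material": "New"},
    {"Raining": True, "Is10601": False, "Material": "New"},
    {"Raining": True, "Is10601": False, "Material": "Old"},
    {"Raining": False, "Is10601": True, "Material": "Old"},
]
p = estimate_p_new(training)
query = {"Raining": True, "Is10601": False, "Material": None, "Before10": False}
print(p, attend(query, p, random.Random(0)))
```

One drawback of this approach is that the same example can receive different labels on different runs, because the prediction depends on the coin flip rather than only on the data.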

Adding randomness • You may also have missing data in the training set. There are probability-based methods to deal with this too: “Well, 60% of the time when it is raining and the class is not 601, there’s new material (among the examples where we know the material). So we’ll just randomly select 60% of the rainy, non-601 examples where we don’t know the material and label them as new material.” [Figure: the AttendClass tree with “?” in place of the Material value.]
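A sketch of the imputation described above, under stated assumptions: the helper names and data are hypothetical, the number of examples to fill in as "New" is the known fraction times the number of missing values, rounded, and the rest of the missing values are filled in as "Old". Examples outside the rainy, non-601 group are left untouched.

```python
import random


def in_group(x):
    """The slide's condition: raining, and not 10-601."""
    return x["Raining"] and not x["Is10601"]


def impute_material(training, rng):
    known = [x for x in training if in_group(x) and x["Material"] is not None]
    p_new = sum(x["Material"] == "New" for x in known) / len(known)
    missing = [i for i, x in enumerate(training) if in_group(x) and x["Material"] is None]
    # Randomly choose round(p_new * #missing) of the unknown examples to be new material
    new_idx = set(rng.sample(missing, round(p_new * len(missing))))
    filled = []
    for i, x in enumerate(training):
        x = dict(x)  # copy so the caller's data is not modified
        if i in new_idx:
            x["Material"] = "New"
        elif in_group(x) and x["Material"] is None:
            x["Material"] = "Old"
        filled.append(x)
    return filled


# Hypothetical data: 3 of 5 known rainy, non-601 examples have new material (60%)
training = (
    [{"Raining": True, "Is10601": False, "Material": m}
     for m in ["New", "New", "New", "Old", "Old"]]
    + [{"Raining": True, "Is10601": False, "Material": None} for _ in range(5)]
    + [{"Raining": False, "Is10601": False, "Material": None}]
)
filled = impute_material(training, random.Random(0))
print([x["Material"] for x in filled[5:10]])
```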

Adventures in Probability • That approach tends to work well. Still, we may run into the following trouble. • What if there aren’t very many training examples where Rain = True and Is10601 = False? Wouldn’t we still want to use examples where Rain = False to estimate the missing value? • Well, it “depends”. Stay tuned for lecture next week!