Autonomous Agents


Autonomous Agents Overview

Topics • Theories: logic-based formalisms for the explanation, analysis, or specification of autonomous agents. • Languages: agent-based programming languages. • Architectures: integration of different components into a coherent control framework for an individual agent.

Topics • Multi-agent architectures: methodologies and architectures for groups of agents (possibly built on different architectures) • Agent modeling: modeling other agents’ behavior or mental state from the perspective of an individual agent • Agent capabilities • Agent testbeds and evaluation

Agent Theories, Languages, and Architectures Wooldridge & Jennings (ATAL 1994, LNAI 890)

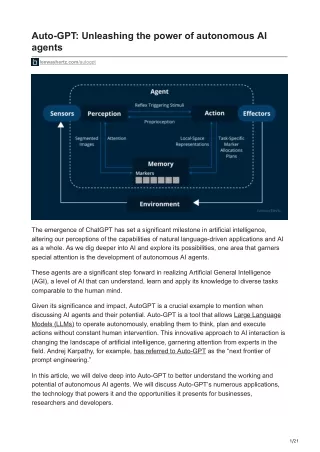

What is an agent? • Weak notion: • Autonomy • Social ability • Reactivity • Pro-activeness • Strong notion: • Mental properties such as knowledge, belief, intention, obligation • Emotional properties • Other attributes: mobility, veracity, benevolence, rationality

Agent Theories • How to conceptualize agents? • What properties should agents have? • How to formally represent and reason about agent properties?

Agent Theories • Definition: an agent theory is a specification for an agent, i.e. a formalism for representing and reasoning about agent properties • Starting point: an agent is an entity ‘which appears to be the subject of beliefs, desires, etc.’

Intentional system • An intentional system is one whose behavior can be predicted by attributing to it beliefs, desires, and rational acumen • It has been shown that almost anything can be described this way • Good as an abstraction tool for describing, explaining, and predicting the behavior of complex systems

Intentional system - Examples • One studies hard because one wants to get a good GPA. • One takes the course ‘cs579-robotic’ because one believes that it will be fun. • One takes the course ‘cs579-robotic’ because there is no 500-level course offered. • One takes the course ‘cs579-robotic’ because one believes that the course is easy.

Agent Attitudes • Information attitudes: related to the information that an agent has about the environment • Belief • Knowledge • Pro-attitudes: guide the agent’s actions • Desire • Intention • Obligation • Commitment • Choice • An agent should be represented in terms of at least one info-attitude and one pro-attitude. Why?
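To see why both kinds of attitude are needed, here is a minimal Python sketch. It assumes an agent whose only information attitude is a set of beliefs and whose only pro-attitude is an ordered list of intentions; the class and method names are hypothetical. Beliefs alone give such an agent nothing to pursue. Intentions alone give it no way to tell which of its goals are already satisfied.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentState:
    """Hypothetical minimal agent: one information attitude, one pro-attitude."""

    beliefs: set[str] = field(default_factory=set)  # information attitude
    intentions: list[str] = field(default_factory=list)  # pro-attitude

    def next_action(self) -> str | None:
        # The pro-attitude says what to pursue; the information attitude
        # says which intentions the agent already believes are achieved.
        for goal in self.intentions:
            if goal not in self.beliefs:
                return f"achieve({goal})"
        return None  # every intention is believed to be satisfied


agent = AgentState(beliefs={"door_open"}, intentions=["door_open", "light_on"])
print(agent.next_action())  # achieve(light_on)
```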

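Representing intentional notions • Representing ‘Jan believes Cronos is the father of Zeus’ • Naïve translation into FOL: Believe(Jan, Father(Zeus, Cronos)) • Problems: • Syntactic: Father(Zeus, Cronos) is a formula, and FOL does not allow a formula (a nested predicate) as the argument of a predicate • Semantic: since Zeus = Jupiter, substituting equals gives Believe(Jan, Father(Jupiter, Cronos)), which is wrong if Jan does not know that Zeus = Jupiter • Conclusion: FOL is not suitable, since intentional notions are context dependent (referentially opaque).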
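The substitution problem can be made concrete in a few lines. In the hypothetical Python sketch below, the extensional predicate `father` is evaluated on what names denote, so Zeus and Jupiter are interchangeable. Jan's beliefs are instead stored as sentences, a syntactic encoding assumed here only for illustration. Under that encoding, substituting an equal name does not preserve belief.

```python
# Hypothetical model of the actual world: two names denote the same god.
DENOTES = {"Zeus": "zeus", "Jupiter": "zeus", "Cronos": "cronos"}
FATHER = {("zeus", "cronos")}  # extension of Father(child, parent)

# Jan's beliefs, stored as sentences rather than as facts about referents.
JAN_BELIEFS = {"Father(Zeus, Cronos)"}


def father(child: str, parent: str) -> bool:
    """Extensional context: truth depends only on what the names denote."""
    return (DENOTES[child], DENOTES[parent]) in FATHER


def jan_believes(sentence: str) -> bool:
    """Opaque context: truth depends on the exact sentence believed."""
    return sentence in JAN_BELIEFS


print(father("Zeus", "Cronos"), father("Jupiter", "Cronos"))  # True True
print(jan_believes("Father(Zeus, Cronos)"))  # True
print(jan_believes("Father(Jupiter, Cronos)"))  # False
```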

Possible World Semantics • Hintikka (1962): an agent’s beliefs can be characterized as a set of possible worlds. • Example: • A door-opener robot: the door is closed and the lock must be unlocked, but the robot does not know whether the lock is currently locked or unlocked, so there are two possibilities: • {closed, locked} • {closed, unlocked} • Card player (poker): ? • UNIX Ping command: ?

Possible World Semantics • Each world represents a state that the agent believes it might be in, given what it knows. • Each such world is called an epistemic alternative. • The agent believes something if it is true in all of its epistemic alternatives. • Problem: logical omniscience – the agent believes all the logical consequences of its beliefs, which is unrealistic and impossible to compute for a real agent.
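The door-opener example can be sketched directly. The Python below is a minimal illustration under stated assumptions. A world is a truth assignment to two hypothetical propositions, `closed` and `locked`. The epistemic alternatives are the worlds consistent with what the robot knows. A formula is believed iff it holds in all of them. The last line also exhibits logical omniscience: a consequence of a belief is believed automatically.

```python
from __future__ import annotations

from itertools import product

PROPS = ("closed", "locked")  # propositions the door-opener robot reasons about


def epistemic_alternatives(known: dict[str, bool]) -> list[dict[str, bool]]:
    """All possible worlds that agree with everything the agent knows."""
    worlds = []
    for values in product([True, False], repeat=len(PROPS)):
        world = dict(zip(PROPS, values))
        if all(world[p] == v for p, v in known.items()):
            worlds.append(world)
    return worlds


def believes(worlds, formula) -> bool:
    """The agent believes a formula iff it is true in every epistemic alternative."""
    return all(formula(w) for w in worlds)


# The robot knows the door is closed but not whether it is locked.
alts = epistemic_alternatives({"closed": True})
print(len(alts))  # 2
print(believes(alts, lambda w: w["closed"]))  # True
print(believes(alts, lambda w: w["locked"]))  # False
print(believes(alts, lambda w: not w["locked"]))  # False
# Logical omniscience: any consequence of a belief is believed automatically.
print(believes(alts, lambda w: w["closed"] or w["locked"]))  # True
```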

Alternatives to PWS • Levesque – belief and awareness: distinguishes explicit belief (a small set) from implicit belief (a large set). • No nested beliefs • The notion of a situation is unclear • Under certain circumstances it makes unrealistic predictions • Konolige – the deduction model: models the beliefs of a symbolic AI system as a database of belief sentences plus an inference system. • Simple
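The deduction model can be sketched with a small set of rules. The Python below is a minimal illustration under stated assumptions. Beliefs are atom strings. The agent's inference system is a short list of hypothetical Horn-style rules. A `max_rounds` bound is added here to mimic limited resources; it is not taken from the slide. Because the agent believes only what its own rules derive, it is not logically omniscient.

```python
from __future__ import annotations

# A rule is (premises, conclusion); the agent's rule set may be incomplete.
Rule = tuple[frozenset[str], str]

RULES: list[Rule] = [  # hypothetical inference rules of one agent
    (frozenset({"raining"}), "ground_wet"),
    (frozenset({"ground_wet"}), "slippery"),
]


def deduce(base: set[str], rules: list[Rule], max_rounds: int) -> set[str]:
    """Close the belief base under the agent's own rules, for a bounded number of rounds."""
    beliefs = set(base)
    for _ in range(max_rounds):
        new = {c for premises, c in rules if premises <= beliefs and c not in beliefs}
        if not new:
            break  # fixed point: nothing more can be derived
        beliefs |= new
    return beliefs


print(sorted(deduce({"raining"}, RULES, max_rounds=1)))  # ['ground_wet', 'raining']
print(sorted(deduce({"raining"}, RULES, max_rounds=5)))  # ['ground_wet', 'raining', 'slippery']
```

With one round, the agent does not yet believe `slippery`, even though it follows from its beliefs. This is the kind of resource-bounded behavior that possible worlds semantics cannot express.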

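Others • Meta-language approach: a meta-language is one in which it is possible to represent the properties of another language • Problem: inconsistency • Pro-attitudes: goals and desires – adapting possible world semantics to model goals and desires • Problem: side effects (goals become closed under logical consequence, so an agent would desire every consequence of what it desires, including unwanted side effects)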

Theory of agency • A realistic agent model involves: • a combination of different components • dynamic aspects • Moore – knowledge and action: studies the problem of knowledge preconditions for actions • I need to know the telephone number of my friend Enrico in order to call him. • I can find the telephone number in the telephone book. • I need to know that the course is easy before I sign up for it.
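Moore's telephone example reduces to two ideas. Some actions have knowledge preconditions, and other actions produce the missing knowledge. The Python below is a hypothetical sketch. The action names and the encoding as (required, gained) sets are assumptions made for illustration; they are not Moore's logic.

```python
from __future__ import annotations

# Hypothetical actions: name -> (knowledge required, knowledge gained).
ACTIONS: dict[str, tuple[set[str], set[str]]] = {
    "call_enrico": ({"phone_number(enrico)"}, set()),
    "look_up_phone_book": (set(), {"phone_number(enrico)"}),
}


def can_perform(action: str, knowledge: set[str]) -> bool:
    """An action is executable only if its knowledge preconditions are known."""
    required, _ = ACTIONS[action]
    return required <= knowledge


def perform(action: str, knowledge: set[str]) -> set[str]:
    """Execute an action and return the agent's updated knowledge."""
    if not can_perform(action, knowledge):
        raise ValueError(f"knowledge precondition of {action!r} not satisfied")
    _, gained = ACTIONS[action]
    return knowledge | gained


knowledge: set[str] = set()
print(can_perform("call_enrico", knowledge))  # False
knowledge = perform("look_up_phone_book", knowledge)
print(can_perform("call_enrico", knowledge))  # True
```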

Theory of agency • Cohen and Levesque – belief and goal: originally developed as a prerequisite for a theory of speech acts, but proved very useful in the analysis of conflict and cooperation in multi-agent dialogue and of cooperative problem solving

Theory of agency • Rao and Georgeff – belief, desire, intention (BDI) architecture: a logical framework for agent theory based on BDI, using a branching model of time • Singh: logics for representing intention, belief, knowledge, know-how, and communication in a branching-time framework

Theory of agency • Werner: a general model of agency based on work in economics, game theory, situated automata, situation semantics, and philosophy. • Wooldridge: modeling multi-agent systems

Agent Architectures • Construction of computer systems that satisfy the properties specified by an agent theory. • Three well-known architectures: • Deliberative • Reactive • Hybrid

Deliberative architecture • Views the agent as a particular type of knowledge-based system, the approach known as symbolic AI • Contains an explicitly represented, symbolic model of the world • Decisions are made via logical reasoning (pattern matching, symbolic manipulation) • Properties: • Attractive from the logical point of view • High computational complexity (FOL is undecidable; with modalities added it is highly undecidable)

Deliberative architecture in picture • Sense: assimilate sensing results from the ENVIRONMENT • Reasoning: maintain a symbolic representation of the world and determine what to do next • Act: execute the action generated by the reasoning module on the ENVIRONMENT
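The sense, reason, act cycle of a deliberative agent can be sketched as follows. This is a minimal, hypothetical Python illustration using the door-opener robot. The world model is an explicit set of symbolic facts. The reasoning step matches symbolic conditions against it; this simple matching stands in for the theorem proving or planning a real deliberative agent would use.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DeliberativeAgent:
    """Hypothetical sense-reason-act agent with an explicit symbolic world model."""

    world_model: set[str] = field(default_factory=set)

    def sense(self, percepts: dict[str, bool]) -> None:
        # Assimilate sensing results: add facts observed true, drop those observed false.
        for fact, holds in percepts.items():
            if holds:
                self.world_model.add(fact)
            else:
                self.world_model.discard(fact)

    def reason(self) -> str:
        # Decide by matching symbolic conditions against the world model.
        if {"door_closed", "door_locked"} <= self.world_model:
            return "unlock_door"
        if "door_closed" in self.world_model:
            return "open_door"
        return "wait"

    def step(self, percepts: dict[str, bool]) -> str:
        self.sense(percepts)
        return self.reason()  # the returned action would be sent to the actuators


robot = DeliberativeAgent()
print(robot.step({"door_closed": True, "door_locked": True}))  # unlock_door
print(robot.step({"door_locked": False}))  # open_door
print(robot.step({"door_closed": False}))  # wait
```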

Deliberative architecture • Examples: • Planning agents: a planner is an essential component of any artificial agent • Main problem: intractability – addressed by techniques such as hierarchical and non-linear planning. • IRMA (Intelligent Resource-bounded Machine Architecture): explicit representations of BDI and a plan library, a reasoner, an opportunity analyser, a filtering process, and a deliberation process (main aim: reducing the time spent deliberating)

Deliberative architecture • HOMER: a prototype agent with linguistic, planning, and acting capabilities. • GRATE*: a layered architecture in which the behavior of an agent is guided by the mental attitudes of beliefs, desires, intentions, and joint intentions.

Reactive architecture • Proposed to overcome the weaknesses of symbolic AI • Main features: • does not include any kind of central symbolic world model • does not use complex reasoning

Reactive architecture in picture • Sense: assimilate sensing results from the ENVIRONMENT • Reasoning: determine what to do next • Act: execute the action generated by the reasoning module on the ENVIRONMENT

Reactive architecture • Brooks – behavior language: subsumption architecture • Hierarchy of task-accomplishing behaviors • Each behavior competes with the others • Lower layers represent more primitive tasks and have precedence over upper layers • Very simple • Demonstrated that such simple agents can do a lot • Multiple subsumption agents
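The precedence rule described for subsumption can be sketched as an ordered list of behaviors. The lowest layer that fires wins and inhibits everything above it. The Python below is a simplified, hypothetical illustration. The behaviors loosely follow a sample-collecting robot, and the names are assumptions. Brooks' actual implementation uses augmented finite-state machines wired together with suppression and inhibition links, which this priority list does not model.

```python
from __future__ import annotations

from typing import Callable, Optional

# A behavior maps a percept to an action, or None if it does not fire.
Behavior = Callable[[dict], Optional[str]]


def avoid_obstacle(p: dict) -> Optional[str]:
    return "change_direction" if p.get("obstacle") else None


def drop_sample(p: dict) -> Optional[str]:
    return "drop_sample" if p.get("carrying") and p.get("at_base") else None


def pick_up_sample(p: dict) -> Optional[str]:
    return "pick_up_sample" if p.get("sample_detected") else None


def move_randomly(p: dict) -> Optional[str]:
    return "move_randomly"  # always fires: the fallback behavior


# Ordered from the lowest layer (most primitive, highest precedence) upward.
LAYERS: list[Behavior] = [avoid_obstacle, drop_sample, pick_up_sample, move_randomly]


def select_action(percept: dict, layers: list[Behavior]) -> Optional[str]:
    """Return the action of the lowest layer whose behavior fires."""
    for behavior in layers:
        action = behavior(percept)
        if action is not None:
            return action  # this layer inhibits every layer above it
    return None


print(select_action({"obstacle": True, "sample_detected": True}, LAYERS))  # change_direction
print(select_action({"sample_detected": True}, LAYERS))  # pick_up_sample
print(select_action({}, LAYERS))  # move_randomly
```

No symbolic world model is kept. Each decision depends only on the current percept, which is the point of the reactive approach.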

Reactive architecture • Agre and Chapman – PENGI: most everyday activity is ‘routine’ • Once learned, a task becomes routine and can be executed with little or no modification • Routines can be compiled into a program, which is then updated from time to time (e.g. after new tasks are added)

Reactive architecture • Rosenschein and Kaelbling – situated automata • The agent is specified in declarative terms, which are then compiled into a digital machine • Correctness of the machine can be proved • The compiled machine does no symbol manipulation, hence it is efficient • Maes – agent network architecture: an agent is a network of competence modules

Hybrid architecture • Combines deliberative and reactive architectures to exploit the best of both • Georgeff and Lansky – Procedural Reasoning System (PRS): BDI and a plan library, with explicit symbolic representations of BDI • Beliefs are facts expressed in FOL • Desires are represented as system behaviors • Each plan in the plan library is associated with an invocation condition, which makes the system reactive • Intentions are the set of currently active plans

PRS in picture • Environment • System interpreter • Beliefs: FOL • Desires: system behaviors • Intentions: active plans (e.g. Pi with invocation condition Ii, Pj with invocation condition Ij) • Plan library: plans P1, ..., Pn with invocation conditions I1, ..., In
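A greatly simplified interpreter cycle in the spirit of PRS is sketched below in Python. Plans carry an invocation condition, here a goal or event name, and a context of required beliefs. Each cycle, applicable plans for new events are adopted as intentions, and one action of the current intention is executed. Several features are assumptions or omissions. Beliefs are atom strings rather than FOL facts. The first applicable plan is always chosen. Subgoaling and meta-level reasoning, which real PRS supports, are not modeled. All names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    invocation: str  # goal or event that can trigger the plan
    context: frozenset  # beliefs that must hold for the plan to apply
    body: list  # primitive actions, executed in order


def applicable(plans: list[Plan], trigger: str, beliefs: set) -> list[Plan]:
    """Plans whose invocation condition matches the trigger and whose context holds."""
    return [p for p in plans if p.invocation == trigger and p.context <= beliefs]


def interpreter_step(plans, beliefs, events, intentions):
    """One simplified PRS-style cycle: adopt plans for new events, then run one action."""
    for trigger in events:
        options = applicable(plans, trigger, beliefs)
        if options:
            intentions.append(list(options[0].body))  # adopt a copy of the first applicable plan
    events.clear()
    intentions[:] = [i for i in intentions if i]  # drop finished intentions
    return intentions[0].pop(0) if intentions else None


PLANS = [
    Plan("open_door", "goal:door_open", frozenset({"door_closed"}), ["grasp_handle", "pull"]),
    Plan("nothing_to_do", "goal:door_open", frozenset({"door_open"}), []),
]
beliefs, events, intentions = {"door_closed"}, ["goal:door_open"], []
print(interpreter_step(PLANS, beliefs, events, intentions))  # grasp_handle
print(interpreter_step(PLANS, beliefs, events, intentions))  # pull
print(interpreter_step(PLANS, beliefs, events, intentions))  # None
```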

Hybrid architecture • Ferguson – TOURINGMACHINES: • Perception and action subsystems – interact directly with the environment • Control framework: three control layers, each an independent, activity-producing, concurrently executing process • Reactive layer (responds to events that happen too quickly for the other layers to respond) • Planning layer (selects plans and actions to achieve goals) • Modeling layer (symbolic representation, used to resolve goal conflicts)

Hybrid architecture • Burmeister et al. – COSY: a hybrid BDI architecture with features of PRS and IRMA, for a multi-agent testbed called DASEDIS • Mueller et al. – INTERRAP: a layered architecture in which each layer is divided vertically into a knowledge part and a control part

Agent language • A system that allows one to program hardware and software computer systems in terms of some of the concepts developed by agent theorists. • Shoham – agent-oriented programming: • A logical system for defining the mental state of agents • An interpreted programming language for programming agents • An ‘agentification’ process, for compiling agent programs into low-level executable systems • Agent0 realizes the first two features
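In Agent0, an agent's mental state includes beliefs and commitments. Commitment rules relate an incoming message and a condition on the mental state to a new commitment. The Python below is a loose, hypothetical sketch of that idea, not Agent0 syntax. Agent0's time-indexed actions and capability checks are omitted. A mental condition is a belief template in which `{sender}` is filled in, an encoding chosen here for brevity.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CommitmentRule:
    """Simplified, hypothetical encoding of an Agent0-style commitment rule."""

    msg_type: str  # message condition: type of the incoming message
    requires: str  # mental condition: a belief template; "{sender}" is filled in


class Agent0Sketch:
    def __init__(self, rules: list[CommitmentRule]) -> None:
        self.beliefs: set[str] = set()
        self.commitments: list[tuple[str, str]] = []  # (requester, action)
        self.rules = rules

    def receive(self, sender: str, msg_type: str, content: str) -> None:
        """Update the mental state in response to an incoming message."""
        if msg_type == "INFORM":
            self.beliefs.add(content)  # an INFORM updates beliefs
        for rule in self.rules:
            if rule.msg_type == msg_type and rule.requires.format(sender=sender) in self.beliefs:
                self.commitments.append((sender, content))  # commit to the requested action

    def execute(self) -> list[str]:
        """Carry out and discharge all current commitments."""
        actions = [action for _, action in self.commitments]
        self.commitments.clear()
        return actions


agent = Agent0Sketch([CommitmentRule("REQUEST", "friend({sender})")])
agent.beliefs.add("friend(alice)")
agent.receive("alice", "REQUEST", "open_door")
agent.receive("mallory", "REQUEST", "delete_files")  # not a friend: no commitment
print(agent.execute())  # ['open_door']
```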

Agent language • Thomas – PLACA (PLAnning Communicating Agent language) • Fisher – Concurrent METATEM: correctness of agents with respect to their specification • IMAGINE project (ESPRIT) • General Magic, Inc. – TELESCRIPT • Connah and Wavish – ABLE