NCACHE

NCACHE is a fast web cache server based on nginx. It uses the aio, sendfile, and epoll modules and a self-sorting shared-memory hash index, aiming for high performance and large storage with low CPU cost and low iowait. It uses record locks instead of process locks and caches content without HTTP headers.

Presentation Transcript

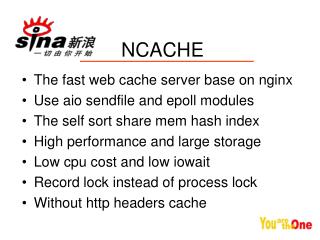

NCACHE
• A fast web cache server based on nginx
• Uses the aio, sendfile, and epoll modules
• Self-sorting shared-memory hash index
• High performance and large storage
• Low CPU cost and low iowait
• Record locks instead of process locks
• Caches content without HTTP headers

OVERVIEW
• Diagram components: F5, NGINX PROXY, NCACHE, and three BACKEND servers

STRUCTURE
• Nginx worker processes (two shown)
• Hash index: initialized by the nginx master process when nginx starts
• Disk files: read and written through the file system or a raw device
• Body filter (acting as proxy): gets the proxied content and saves it to disk using aio
• Backend servers (three shown)

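Logic Diagram
• A request arrives and ncache looks for the cached object in the index
• Not found: proxy to the backend
• Found: check whether the entry has timed out
• Timed out: proxy to the backend
• Not timed out: output with sendfile
• Proxy path: body filter, writev output, aio write, refresh the index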
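The decision flow in the logic diagram can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not ncache's code: the index is a plain dict, and `handle_request`, `fetch_backend`, and `ttl_seconds` are hypothetical names. The sendfile, writev, and aio write steps are reduced to returning the body and updating the dict.

```python
import time


def handle_request(url, index, fetch_backend, ttl_seconds, now=None):
    """Return (body, source) following the found / timed-out branches."""
    now = time.time() if now is None else now
    entry = index.get(url)
    if entry is not None:
        body, stored_at = entry
        if now - stored_at < ttl_seconds:
            # Found and not timed out: serve the cached copy.
            return body, "cache"
    # Not found, or timed out: proxy to the backend.
    body = fetch_backend(url)
    # Save the body and refresh the index entry.
    index[url] = (body, now)
    return body, "backend"
```

A caller would pass a function that fetches from the real backend; the `now` argument exists only so the timeout branch can be exercised without waiting.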

The self-sorting shared-memory hash index
• First floor of the hash index starts at Top: 0
• A list resolves hash conflicts; list entries are allocated with Hash_malloc
• Diagram labels: 1(6), 2(5), 3(4), 2(7); Index[1]+2 = 7
• Diagram markers: 16777216 and 33554432
• If allocation reaches the bottom of the shared memory, ncache returns to the 16777216 point and looks for entries that can be reused
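As a rough illustration of the layout described above (a first floor of hashed slots, lists for conflicts, and an allocator that wraps back to the start of the overflow area), here is a toy Python model. Everything in it is an assumption made for the sketch: the class name `TwoLevelIndex`, the use of CRC32 as the hash, and the rule that only empty slots are reused. It does not model the self-sorting behavior or real shared memory.

```python
import zlib


class TwoLevelIndex:
    """Toy index: hashed first-floor slots plus an overflow area for chains."""

    def __init__(self, first_level_size, overflow_size):
        self.first = first_level_size
        self.total = first_level_size + overflow_size
        self.keys = [None] * self.total
        self.values = [None] * self.total
        self.next = [-1] * self.total      # link to the next slot in a chain
        self.cursor = first_level_size     # next overflow slot to try

    def _bucket(self, key):
        return zlib.crc32(key.encode()) % self.first

    def _alloc(self):
        # Scan the overflow area once, wrapping back to its start at the end.
        for _ in range(self.total - self.first):
            slot = self.cursor
            self.cursor += 1
            if self.cursor == self.total:
                self.cursor = self.first
            if self.keys[slot] is None:
                return slot
        raise MemoryError("overflow area is full")

    def put(self, key, value):
        slot = self._bucket(key)
        if self.keys[slot] is None:
            self.keys[slot], self.values[slot] = key, value
            return
        while True:
            if self.keys[slot] == key:     # existing key: update in place
                self.values[slot] = value
                return
            if self.next[slot] == -1:
                break
            slot = self.next[slot]
        new = self._alloc()                # conflict: chain a new overflow slot
        self.keys[new], self.values[new] = key, value
        self.next[slot] = new

    def get(self, key):
        slot = self._bucket(key)
        while slot != -1 and self.keys[slot] is not None:
            if self.keys[slot] == key:
                return self.values[slot]
            slot = self.next[slot]
        return None
```

In the slide's terms, `first_level_size` would correspond to the 16777216 marker and the end of the overflow area to 33554432, though that mapping is an inference from the diagram labels.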

Record lock
• Diagram labels: Sync file, Read, Mem index, Worker process (three shown)
• No need to lock any worker process or request
• Mmap auto sync: does not cause a wait
• Write: does not cause a wait
• One worker process is labeled "cause wait"
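The slide does not say how ncache implements its record locks. One common way to lock a single record rather than a whole process or file on Unix is a POSIX byte-range lock, sketched below with Python's `fcntl.lockf`. The record layout and the helper names `write_record` and `read_record` are hypothetical, and whether ncache uses this mechanism is an assumption.

```python
import fcntl
import os
import struct

# Hypothetical fixed-size record: (key hash, file offset), 16 bytes.
RECORD = struct.Struct("<QQ")


def write_record(fd, slot, key_hash, offset):
    """Update one record while holding an exclusive lock on only its bytes."""
    start = slot * RECORD.size
    fcntl.lockf(fd, fcntl.LOCK_EX, RECORD.size, start, os.SEEK_SET)
    try:
        os.pwrite(fd, RECORD.pack(key_hash, offset), start)
    finally:
        fcntl.lockf(fd, fcntl.LOCK_UN, RECORD.size, start, os.SEEK_SET)


def read_record(fd, slot):
    """Read one record under a shared lock on only its bytes."""
    start = slot * RECORD.size
    fcntl.lockf(fd, fcntl.LOCK_SH, RECORD.size, start, os.SEEK_SET)
    try:
        return RECORD.unpack(os.pread(fd, RECORD.size, start))
    finally:
        fcntl.lockf(fd, fcntl.LOCK_UN, RECORD.size, start, os.SEEK_SET)
```

Because each lock covers only one record's byte range, two processes touching different slots do not wait on each other; only access to the same slot is serialized. The file descriptor must be opened for both reading and writing.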

Performance compared with SQUID (1)
• The first chart shows CPU and the last shows I/O; blue is ncache

Performance compared with SQUID (2)
• Charts labeled SQUID and NCACHE

Future
• The aio_sendfile function
• Compressed shared-memory hash index
• In-memory cache for the hottest data
• Raw device read and write
• Distributed storage system
• Aio queue with the lio_listio function

The end
• Google Code: http://code.google.com/p/ncache/
• Nginx wiki: http://wiki.codemongers.com/
• Our mail: pangfan@staff.sina.com.cn, shuiyang@staff.sina.com.cn