A Smart Direction Guide that Provides Users with Additional Destination-dependent Information




A Smart Direction Guide that Provides Users with Additional Destination-dependent Information
Angela Xian
December 12, 2002

Objective
• Based on the direction stored on a mobile device, predict the user’s actions from the destination (and the purpose of the trip, if available), and use that prediction to provide additional meaningful information
• The prediction uses common-sense reasoning; the common-sense knowledge may be entered by users

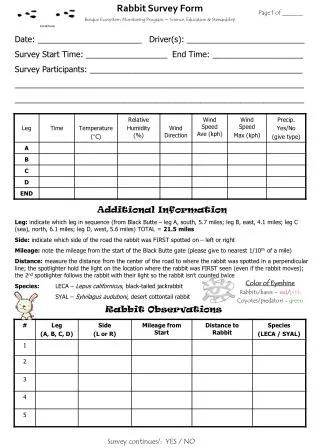

Major Components
[Diagram. Components: User, Direction Selector, Direction File Storage, Destination Extractor, Learning Engine, Reasoning Engine, Destination Record Storage, Output. Data-flow labels: direction, destination record input, destination, Destination Beans.]

A Sample Direction
The file direction0.txt contains the following text:
From home to Galleria
Start from 70 Pacific St going North
Turn right at Sidney St
Turn left at Mass Ave
Get to Central Square T station
Take Red line Inbound to Park St
Change to Green line
Take Green line to Museum of Science
Turn right at Cambridge St
Go Straight for two blocks
Galleria is on your left
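One way the Destination Extractor might pull the destination out of such a file is sketched below. It assumes the first non-empty line always has the form "From <origin> to <destination>", as in the sample. The function name `extract_destination` is hypothetical and not taken from the original project.

```python
import re
from typing import Optional

# Assumption: the header line looks like "From <origin> to <destination>".
_HEADER = re.compile(
    r"^\s*from\s+(?P<origin>.+?)\s+to\s+(?P<destination>.+?)\s*$",
    re.IGNORECASE,
)


def extract_destination(direction_text: str) -> Optional[str]:
    """Return the destination named in the header line, or None if it is missing."""
    for line in direction_text.splitlines():
        if not line.strip():
            continue  # skip leading blank lines
        match = _HEADER.match(line)
        # Only the first non-empty line is treated as the header.
        return match.group("destination") if match else None
    return None


sample = "From home to Galleria\nStart from 70 Pacific St going North\n"
print(extract_destination(sample))
```

A header-only parse keeps the sketch simple. A fuller extractor would probably also check the last step ("Galleria is on your left") as a fallback.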

Destination Bean Object
1) PURPOSE - the purpose of the trip, such as shopping, party at friend’s house, etc.
2) DESTINATION NAME - the name of the destination, such as “Cambridgeside Galleria” or “Logan”
3) DESTINATION TYPE - what kind of place the user is going to, e.g. shopping mall or airport
4) RELATED INFORMATION - the additional information related to the destination and the purpose of the trip that may be useful to the user, such as web URLs of the businesses that the user is going to
5) LIKELIHOOD - the ratio (on a scale of 1 to 10) that indicates how likely the related information is to be relevant when only the destination name, and not the purpose of the trip, is available
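The five fields above map naturally onto a small record type. The sketch below is illustrative only. The `suggest` lookup is a hypothetical stand-in for the reasoning engine, which the slides do not specify, and the example.com URLs are placeholders. It filters by purpose when the purpose is known and otherwise ranks by likelihood.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DestinationBean:
    purpose: str
    destination_name: str
    destination_type: str
    related_information: str
    likelihood: int  # 1 to 10, as described on the slide

    def __post_init__(self) -> None:
        if not 1 <= self.likelihood <= 10:
            raise ValueError("likelihood must be between 1 and 10")


def suggest(beans: List[DestinationBean], destination_name: str,
            purpose: Optional[str] = None) -> List[DestinationBean]:
    """Hypothetical lookup: match the destination, then use purpose or likelihood."""
    matches = [b for b in beans
               if b.destination_name.lower() == destination_name.lower()]
    if purpose is not None:
        # Purpose known: keep only records for that purpose.
        return [b for b in matches if b.purpose.lower() == purpose.lower()]
    # Purpose unknown: most likely records first.
    return sorted(matches, key=lambda b: b.likelihood, reverse=True)


# Illustrative records only; the URLs are placeholders.
beans = [
    DestinationBean("pick up a friend", "Logan", "airport",
                    "https://example.com/arrivals", 5),
    DestinationBean("catch a flight", "Logan", "airport",
                    "https://example.com/flight-status", 8),
    DestinationBean("shopping", "Cambridgeside Galleria", "shopping mall",
                    "https://example.com/store-directory", 9),
]
for bean in suggest(beans, "Logan"):
    print(bean.likelihood, bean.related_information)
```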

Areas for Improvement
• Use a real database to store Destination Bean objects instead of the sequential-access file used now (chosen because database software was unavailable)
• Add intelligence to the reasoning engine, such as updating the likelihood ratio
• Add a learning engine that learns user-specific common sense from the user’s input and behavior
• Add more functionality to the application, such as creating a new direction file and allowing multiple sources for the additional information
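As a rough illustration of the first improvement, Destination Bean records could be kept in an embedded database such as SQLite, which ships with Python's standard library. The table name, column names, and helper functions below are assumptions made for this sketch, not part of the original project.

```python
import sqlite3
from typing import List, Tuple


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a store with one table of Destination Bean records."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS destination_bean (
               id INTEGER PRIMARY KEY,
               purpose TEXT NOT NULL,
               destination_name TEXT NOT NULL,
               destination_type TEXT NOT NULL,
               related_information TEXT NOT NULL,
               likelihood INTEGER NOT NULL CHECK (likelihood BETWEEN 1 AND 10)
           )"""
    )
    # Lookups are by destination name, so index that column.
    conn.execute(
        "CREATE INDEX IF NOT EXISTS idx_bean_destination "
        "ON destination_bean (destination_name)"
    )
    return conn


def add_bean(conn: sqlite3.Connection, purpose: str, destination_name: str,
             destination_type: str, related_information: str,
             likelihood: int) -> None:
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO destination_bean (purpose, destination_name, "
            "destination_type, related_information, likelihood) "
            "VALUES (?, ?, ?, ?, ?)",
            (purpose, destination_name, destination_type,
             related_information, likelihood),
        )


def beans_for(conn: sqlite3.Connection,
              destination_name: str) -> List[Tuple[str, str, int]]:
    """Return (purpose, related_information, likelihood), most likely first."""
    cursor = conn.execute(
        "SELECT purpose, related_information, likelihood "
        "FROM destination_bean WHERE destination_name = ? "
        "ORDER BY likelihood DESC",
        (destination_name,),
    )
    return cursor.fetchall()
```

Unlike a sequential-access file, an indexed table allows lookup by destination name without scanning every record, and an `UPDATE` on the `likelihood` column would give the reasoning engine a place to revise the ratio.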

Existing Project: Providing Direction using Images and Speech Recognizer
• Take pictures of landmarks along a route while speaking the instruction to the handheld, e.g. image: picture of the sign “Kendall Square”; instruction: “Get off at the Kendall Square station.”
• The handheld processes the speech input and associates the instruction with the picture
• The user can also manually enter or correct the instructions
[Figure: a complete direction with image and text]

The question is: how can this software agent be made smarter?

One Possible Approach: Predict Users’ Actions according to the Destination
• A direction is packaged with some extra information that users might find useful
• The following rules can be added to Openmind:

User Scenario I
• Lisa goes to South Station to catch a train to NYC
• She has the direction from her apartment to her friend’s place in NYC (through South Station and Grand Central) on her handheld
• On her way to South Station, her handheld tells her that her train will be delayed by 20 minutes
• She now has time to stop by a gift store on the way and get a little present for her friend

User Scenario II
• Bob arrives at Logan at 8pm, with an empty stomach
• Using the direction stored on his handheld, he heads to the Hyatt
• On his way, he checks the Hyatt’s dinner menu (it comes with the direction)
• He does not like what the Hyatt provides, so he looks at the menus of nearby restaurants
• He picks the restaurant he likes and makes a reservation even before he arrives at the hotel