Machine Learning: Decision Trees Homework 4 assigned



Machine Learning: Decision Trees • Homework 4 assigned • Courtesy: Geoffrey Hinton, Yann LeCun, Tan, Steinbach, Kumar

What is machine learning? • It is very hard to write programs that solve problems like recognizing a face. • We don’t know what program to write because we don’t know how it’s done. • Even if we had a good idea about how to do it, the program might be horrendously complicated. • Instead of writing a program by hand, we collect lots of examples that specify the correct output for a given input. • A machine learning algorithm then takes these examples and produces a program that does the job. • The program produced by the learning algorithm may look very different from a typical hand-written program. It may contain millions of numbers. • If we do it right, the program works for new cases as well as the ones we trained it on.

Different types of learning • Supervised Learning: given training examples of inputs and corresponding outputs, produce the “correct” outputs for new inputs. Example: character recognition. • Unsupervised Learning: given only inputs as training, find structure in the world: discover clusters, manifolds, characterize the areas of the space to which the observed inputs belong • Reinforcement Learning: an agent takes inputs from the environment, and takes actions that affect the environment. Occasionally, the agent gets a scalar reward or punishment. The goal is to learn to produce action sequences that maximize the expected reward.

Applications • handwriting recognition, OCR: reading checks and zip codes, handwriting recognition for tablet PCs. • speech recognition, speaker recognition/verification • security: face detection and recognition, event detection in videos. • text classification: indexing, web search. • computer vision: object detection and recognition. • diagnosis: medical diagnosis (e.g. pap smears processing) • adaptive control: locomotion control for legged robots, navigation for mobile robots, minimizing pollutant emissions for chemical plants, predicting consumption for utilities... • fraud detection: e.g. detection of “unusual” usage patterns for credit cards or calling cards. • database marketing: predicting who is more likely to respond to an ad campaign. (...and the antidote) spam filtering. • games (e.g. backgammon). • Financial prediction (many people on Wall Street use machine learning).

Learning ≠ memorization • rote learning is easy: just memorize all the training examples and their corresponding outputs. • when a new input comes in, compare it to all the memorized samples, and produce the output associated with the matching sample. • PROBLEM: in general, new inputs are different from training samples. The ability to produce correct outputs or behavior on previously unseen inputs is called GENERALIZATION. • rote learning is memorization without generalization. • The big question of Learning Theory (and practice): how to get good generalization with a limited number of examples.
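To make the memorization point concrete, here is a minimal sketch of a rote learner. The class name `RoteLearner` and the toy inputs are assumptions made for illustration; they do not come from the slides. The learner answers correctly on inputs it has stored and has nothing to say about anything else.

```python
class RoteLearner:
    """Memorizes training pairs; performs no generalization."""

    def __init__(self):
        self.memory = {}

    def fit(self, inputs, outputs):
        # Store every training input together with its output.
        for x, y in zip(inputs, outputs):
            self.memory[tuple(x)] = y

    def predict(self, x):
        # Exact-match lookup: returns None for any input not seen in training.
        return self.memory.get(tuple(x))


learner = RoteLearner()
learner.fit([(0, 0), (1, 1)], ["a", "b"])   # hypothetical training set
print(learner.predict((1, 1)))  # seen during training
print(learner.predict((1, 0)))  # unseen input: no answer available
```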

Look ahead • Supervised learning • Decision trees • Linear models • Neural networks • Unsupervised learning • K-means clustering http://www-stat.stanford.edu/~tibs/ElemStatLearn/ Full text in PDF

Examples of Classification Task • Handwriting recognition • Face detection • Speech recognition • Object recognition

Different methods use different biases in predicting Russell’s waiting habits • Decision Trees: examples are used to learn topology and order of questions • K-nearest neighbors • Association rules: examples are used to learn support and confidence of association rules, e.g. If patrons=full and day=Friday then wait (0.3/0.7); If wait>60 and Reservation=no then wait (0.4/0.9) • SVMs • Neural Nets: examples are used to learn topology and edge weights • Naïve Bayes (Bayes net learning): examples are used to learn topology and CPTs

Decision Tree Classification Task

Apply Model to Test Data • Start from the root of the tree. • Refund = Yes → NO; Refund = No → test MarSt. • MarSt = Married → NO; MarSt = Single or Divorced → test TaxInc. • TaxInc < 80K → NO; TaxInc > 80K → YES. • Follow the branch matching the test record at each node until a leaf is reached; here the record reaches a NO leaf, so assign Cheat to “No”.
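The traversal on these slides (Refund at the root, then MarSt, then TaxInc) can be sketched as a plain function. The test record's values did not survive in the transcript, so the record below is a hypothetical one. The slides label the income branches "< 80K" and "> 80K"; sending exactly 80K to the YES side is an assumption.

```python
def classify(record):
    """Walk the example tree from the root and return the predicted Cheat label."""
    if record["Refund"] == "Yes":
        return "No"
    if record["MarSt"] == "Married":
        return "No"
    # Single or Divorced: test taxable income (in thousands).
    # Assumption: an income of exactly 80 falls on the YES side.
    return "No" if record["TaxInc"] < 80 else "Yes"


# Hypothetical test record (the original slide's values were lost).
test_record = {"Refund": "No", "MarSt": "Married", "TaxInc": 80}
print(classify(test_record))
```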


Decision Tree Induction • Many Algorithms: • Hunt’s Algorithm (one of the earliest) • CART • ID3, C4.5 (tutorial: http://www2.cs.uregina.ca/~dbd/cs831/notes/ml/dtrees/c4.5/tutorial.html) • SLIQ, SPRINT • Weka: http://www.cs.waikato.ac.nz/ml/weka/

Tree Induction • Greedy strategy. • Split the records based on an attribute test that optimizes a certain criterion. • Issues • Determine how to split the records • How to specify the attribute test condition? • How to determine the best split? • Determine when to stop splitting


How to Specify Test Condition? • Depends on attribute types • Nominal • Ordinal • Continuous • Depends on number of ways to split • 2-way split • Multi-way split

Splitting Based on Nominal Attributes • Multi-way split: Use as many partitions as distinct values, e.g. CarType → Family, Sports, Luxury. • Binary split: Divides values into two subsets. Need to find optimal partitioning, e.g. CarType → {Sports, Luxury} vs. {Family}, OR {Family, Luxury} vs. {Sports}.
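Finding the optimal binary partitioning means looking at every way of dividing the attribute's values into two non-empty subsets. A minimal sketch of that enumeration follows; the function name `binary_partitions` is an assumption made for illustration. For n distinct values it yields 2^(n-1) - 1 candidate splits.

```python
from itertools import combinations


def binary_partitions(values):
    """Yield each way to split nominal values into two non-empty subsets."""
    values = sorted(set(values))
    first, rest = values[0], values[1:]
    # Keep `first` on the left side so every partition is produced exactly once.
    for r in range(len(rest) + 1):
        for combo in combinations(rest, r):
            left = {first, *combo}
            right = set(values) - left
            if right:  # skip the split that leaves one side empty
                yield left, right


for left, right in binary_partitions(["Family", "Sports", "Luxury"]):
    print(sorted(left), "vs", sorted(right))
```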

Splitting Based on Continuous Attributes • Different ways of handling • Discretization to form an ordinal categorical attribute • Static – discretize once at the beginning • Dynamic – ranges can be found by equal interval bucketing, equal frequency bucketing (percentiles), or clustering. • Binary Decision: (A < v) or (A ≥ v) • considers all possible splits and finds the best cut • can be more compute intensive
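For the binary decision on a continuous attribute, "all possible splits" is usually taken to mean one candidate cut between each pair of neighboring distinct values. The sketch below uses midpoints as the candidate values of v, which is a common convention assumed here rather than something the slides state. The helper names and income figures are likewise hypothetical.

```python
def candidate_thresholds(values):
    """Midpoints between consecutive distinct values, in increasing order."""
    distinct = sorted(set(values))
    return [(a + b) / 2 for a, b in zip(distinct, distinct[1:])]


def split_by_threshold(records, attr, v):
    """Partition records into (A < v) and (A >= v)."""
    left = [r for r in records if r[attr] < v]
    right = [r for r in records if r[attr] >= v]
    return left, right


incomes = [60, 70, 70, 85]  # hypothetical taxable incomes, in thousands
print(candidate_thresholds(incomes))
```

Each candidate still has to be scored with an impurity measure to pick the best cut, which is where the extra computation comes from.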


How to determine the Best Split • Greedy approach: • Nodes with homogeneous class distribution are preferred • Need a measure of node impurity: Non-homogeneous, High degree of impurity Homogeneous, Low degree of impurity

Entropy • The entropy of a random variable V with values $v_k$, each with probability $P(v_k)$, is: $H(V) = -\sum_k P(v_k) \log_2 P(v_k)$

Splitting Criteria based on INFO • Entropy at a given node t: $\text{Entropy}(t) = -\sum_j p(j \mid t) \log_2 p(j \mid t)$ (NOTE: $p(j \mid t)$ is the relative frequency of class j at node t). • Measures the impurity of a node (0 for a fully homogeneous node). • Maximum ($\log_2 n_c$, where $n_c$ is the number of classes) when records are equally distributed among all classes, implying least information • Minimum (0.0) when all records belong to one class, implying most information

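How to Find the Best Split • Before splitting: the node has impurity M0. • Splitting on A? (Yes/No) gives Node N1 and Node N2 with impurities M1 and M2, combined into M12. • Splitting on B? (Yes/No) gives Node N3 and Node N4 with impurities M3 and M4, combined into M34. • Compare Gain = M0 – M12 vs. M0 – M34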

Examples for computing Entropy • P(C1) = 0/6 = 0, P(C2) = 6/6 = 1: Entropy = – 0 log2 0 – 1 log2 1 = – 0 – 0 = 0 • P(C1) = 1/6, P(C2) = 5/6: Entropy = – (1/6) log2 (1/6) – (5/6) log2 (5/6) = 0.65 • P(C1) = 2/6, P(C2) = 4/6: Entropy = – (2/6) log2 (2/6) – (4/6) log2 (4/6) = 0.92
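The three worked examples can be checked with a few lines of code. This is a minimal sketch: the function name `entropy` and the choice to pass class counts rather than probabilities are assumptions made for illustration. The term 0 log 0 is treated as 0, as in the first example.

```python
from math import log2


def entropy(counts):
    """Entropy (base 2) of a node, given the number of records in each class."""
    total = sum(counts)
    result = 0.0
    for c in counts:
        if c > 0:  # treat 0 * log2(0) as 0
            p = c / total
            result -= p * log2(p)
    return result


for counts in [(0, 6), (1, 5), (2, 4)]:
    print(counts, round(entropy(counts), 2))
```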

Splitting Based on INFO... • Information Gain: $\text{GAIN}_{\text{split}} = \text{Entropy}(p) - \sum_{i=1}^{k} \frac{n_i}{n} \text{Entropy}(i)$. Parent node p, with n records, is split into k partitions; $n_i$ is the number of records in partition i • Measures the reduction in entropy achieved because of the split. Choose the split that achieves the most reduction (maximizes GAIN) • Used in ID3 and C4.5
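Information gain is the parent's entropy minus the size-weighted entropy of the partitions. The following sketch computes it from class counts; the function names and the two toy splits are assumptions made for illustration. The first split separates the classes perfectly, and the second leaves every partition as mixed as the parent.

```python
from math import log2


def entropy(counts):
    """Entropy (base 2) of a node, given the number of records in each class."""
    total = sum(counts)
    return -sum(c / total * log2(c / total) for c in counts if c > 0)


def information_gain(parent_counts, partitions):
    """Parent entropy minus the weighted entropy of the k partitions."""
    n = sum(parent_counts)
    weighted = sum(sum(part) / n * entropy(part) for part in partitions)
    return entropy(parent_counts) - weighted


# Hypothetical node with 2 positive and 2 negative records.
print(information_gain((2, 2), [(2, 0), (0, 2)]))  # perfectly separating split
print(information_gain((2, 2), [(1, 1), (1, 1)]))  # uninformative split
```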

The Information Gain Computation • Given k mutually exclusive and exhaustive events $E_1, \dots, E_k$ whose probabilities are $p_1, \dots, p_k$, the “information” content (entropy) is defined as $\sum_i -p_i \log_2 p_i$. A split is good if it reduces the entropy. • Before the split, the node holds $N_+$ positive and $N_-$ negative examples: $P_+ = N_+/(N_+ + N_-)$, $P_- = N_-/(N_+ + N_-)$, and $I(P_+, P_-) = -P_+ \log_2 P_+ - P_- \log_2 P_-$. • Splitting on feature f gives k children with counts $N_{i+}$, $N_{i-}$ and entropies $I(P_{i+}, P_{i-})$; the residual information is $\sum_{i=1}^{k} \frac{N_{i+} + N_{i-}}{N_+ + N_-} I(P_{i+}, P_{i-})$. • The difference is the information gain. So pick the feature with the largest info gain, i.e. the smallest residual info.

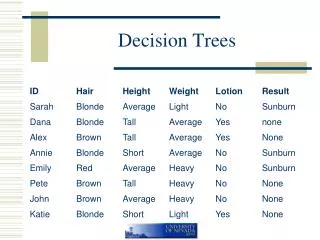

A simple example • I(1/2,1/2) = -1/2 * log2(1/2) - 1/2 * log2(1/2) = 1/2 + 1/2 = 1 • I(1,0) = -1 * log2(1) - 0 * log2(0) = 0 (taking 0 * log 0 = 0) • V(M) = 2/4 * I(1/2,1/2) + 2/4 * I(1/2,1/2) = 1 • V(A) = 2/4 * I(1,0) + 2/4 * I(0,1) = 0 • V(N) = 2/4 * I(1/2,1/2) + 2/4 * I(1/2,1/2) = 1 • So Anxious is the best attribute to split on: its residual information V(A) is the smallest. Once you split on Anxious, the problem is solved.

Decision Tree Based Classification • Advantages: • Inexpensive to construct • Extremely fast at classifying unknown records • Easy to interpret for small-sized trees • Accuracy is comparable to other classification techniques for many simple data sets


Underfitting and Overfitting • Underfitting: when the model is too simple, both training and test errors are large

Overfitting due to Noise • The decision boundary is distorted by a noise point

Notes on Overfitting • Overfitting results in decision trees that are more complex than necessary • Training error no longer provides a good estimate of how well the tree will perform on previously unseen records • Need new ways for estimating errors

How to Address Overfitting • Pre-Pruning (Early Stopping Rule) • Stop the algorithm before it becomes a fully-grown tree • Typical stopping conditions for a node: • Stop if all instances belong to the same class • Stop if all the attribute values are the same • More restrictive conditions: • Stop if the number of instances is less than some user-specified threshold • Stop if the class distribution of instances is independent of the available features (e.g., using a χ² test) • Stop if expanding the current node does not improve impurity measures (e.g., Gini or information gain).
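The simpler stopping conditions translate directly into a check that runs before a node is expanded. This sketch covers the two typical conditions plus the instance-count threshold. The function name `should_stop`, the parameter `min_instances`, and its default of 5 are assumptions made for illustration. The χ² and impurity-improvement checks are left out to keep it short.

```python
def should_stop(records, labels, min_instances=5):
    """Decide whether to stop splitting a node.

    records: list of attribute tuples; labels: the matching class labels.
    """
    if len(set(labels)) <= 1:
        return True  # all instances belong to the same class
    if len(set(records)) <= 1:
        return True  # all attribute values are the same
    if len(records) < min_instances:
        return True  # fewer instances than the user-specified threshold
    return False


# Hypothetical node: mixed classes, distinct attribute values, only 3 records.
print(should_stop([(1, "a"), (2, "b"), (3, "a")], ["+", "-", "+"]))
```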

How to Address Overfitting… • Post-pruning • Grow decision tree to its entirety • Trim the nodes of the decision tree in a bottom-up fashion • If generalization error improves after trimming, replace sub-tree by a leaf node. • Class label of leaf node is determined from majority class of instances in the sub-tree
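One common way to realize bottom-up trimming is reduced-error pruning, sketched below. The slides do not say how generalization error is estimated; using a held-out validation set is an assumption here. So are the nested-dict tree layout (`attr`, `children`, `majority`, `label`) and all function names.

```python
def predict(node, record):
    """Follow the record down the tree; fall back to the majority class."""
    while "label" not in node:
        child = node["children"].get(record[node["attr"]])
        if child is None:
            return node["majority"]
        node = child
    return node["label"]


def errors(node, data):
    """Number of misclassified (record, label) pairs."""
    return sum(predict(node, rec) != y for rec, y in data)


def prune(node, data):
    """Return a pruned copy of `node`, judged on held-out `data`."""
    if "label" in node:
        return node
    # Bottom-up: prune each child on the validation records routed to it.
    children = {}
    for value, child in node["children"].items():
        subset = [(rec, y) for rec, y in data if rec[node["attr"]] == value]
        children[value] = prune(child, subset)
    pruned = {"attr": node["attr"], "children": children,
              "majority": node["majority"]}
    # Replace the sub-tree by a majority-class leaf if that is no worse.
    leaf = {"label": node["majority"]}
    return leaf if errors(leaf, data) <= errors(pruned, data) else pruned


# Hypothetical tree and validation set.
tree = {"attr": "y", "majority": "+",
        "children": {"c": {"label": "-"}, "d": {"label": "+"}}}
validation = [({"y": "c"}, "+"), ({"y": "d"}, "+")]
print(prune(tree, validation))
```

Ties are resolved in favor of the leaf, so the simpler tree wins when pruning does not change the validation error.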

Decision Boundary • The border line between two neighboring regions of different classes is known as the decision boundary • The decision boundary is parallel to the axes because each test condition involves a single attribute at a time

Oblique Decision Trees • Example: the test condition x + y < 1 separates Class = + from the other class • Test condition may involve multiple attributes • More expressive representation • Finding optimal test condition is computationally expensive
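The difference between the two kinds of test condition is easy to see in code. In this sketch, an axis-parallel test compares one attribute against a threshold, while an oblique test compares a weighted sum of attributes, such as x + y < 1 with weights (1, 1). The function names and sample points are assumptions made for illustration.

```python
def axis_parallel_test(point, attr_index, threshold):
    """Test on a single attribute: boundary is parallel to an axis."""
    return point[attr_index] < threshold


def oblique_test(point, weights, threshold):
    """Test on a linear combination of attributes: boundary can be slanted."""
    return sum(w * x for w, x in zip(weights, point)) < threshold


# x + y < 1 expressed as an oblique test with weights (1, 1).
print(oblique_test((0.2, 0.3), (1, 1), 1))
print(oblique_test((0.8, 0.9), (1, 1), 1))
```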