Reliability and Validity


Criteria of Measurement Quality • How do we judge the relative success (or failure) in measuring various concepts? • Reliability – consistency of measurement • Validity – confidence in measures and design

Reliability and Validity • Reliability focuses on measurement • Validity also extends to: • Precision in the design of the study – ability to isolate causal agents while controlling other factors • (Internal Validity) • Ability to generalize from the unique and idiosyncratic settings, procedures and participants to other populations and conditions • (External Validity)

Reliability • Consistency of Measurement • Reproducibility over time • Consistency between different coders/observers • Consistency among multiple indicators • Estimates of Reliability • Statistical coefficients that tell us how consistently we measured something

Measurement Validity • Are we really measuring the concept we defined? • Is it a valid way to measure the concept? • Many different approaches to validation • Judgmental as well as empirical aspects

Key to Reliability and Validity • Concept explication • Thorough meaning analysis • Conceptual definition: • Defining what a concept means • Operational definition: • Spelling out how we are going to measure the concept

Four Aspects of Reliability: • 1. Stability • 2. Reproducibility • 3. Homogeneity • 4. Accuracy

1. Stability • Consistency across time • repeating a measure at a later time to examine the consistency • Compare time 1 and time 2
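One way to compare time 1 and time 2 is to correlate the two sets of scores. The sketch below assumes a Pearson correlation as the stability coefficient (the slides do not name a specific statistic), and the scores are made-up illustrative values, not data from the presentation.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(ss_x * ss_y)

# Hypothetical scores for the same six respondents measured twice.
time1 = [12, 15, 9, 20, 17, 11]
time2 = [13, 14, 10, 19, 18, 10]

stability = pearson_r(time1, time2)
print(f"test-retest correlation: {stability:.3f}")
```

A coefficient close to 1 suggests the measure is stable over time; a low value suggests either an unreliable measure or real change in the respondents between the two waves.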

2. Reproducibility • Consistency between observers • Equivalent application of measuring device • Do observers reach the same conclusion? • If we don’t get the same results, what are we measuring? • Lack of reliability can compromise validity
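Whether two observers reach the same conclusion can be checked numerically. The sketch below computes simple percent agreement and Cohen's kappa, which corrects agreement for chance. Kappa is a commonly used choice, but it is an assumption here (the slides do not say which coefficient to use), and the codes are hypothetical.

```python
from collections import Counter

def percent_agreement(coder_a, coder_b):
    """Share of units on which both coders assigned the same category."""
    return sum(a == b for a, b in zip(coder_a, coder_b)) / len(coder_a)

def cohens_kappa(coder_a, coder_b):
    """Agreement between two coders, corrected for chance agreement."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    categories = set(coder_a) | set(coder_b)
    # Chance agreement: probability both coders pick the same category at random.
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / n ** 2
    if expected == 1:
        return 1.0  # both coders used a single identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical codes assigned by two coders to the same ten news stories.
coder_a = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg", "pos", "pos"]
coder_b = ["pos", "pos", "neg", "pos", "pos", "neg", "pos", "neg", "neg", "pos"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"percent agreement: {agreement:.2f}, Cohen's kappa: {kappa:.2f}")
```

Kappa comes out lower than raw agreement because some matches would occur by chance alone.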

3. Homogeneity • Consistency between different measures of the same concept • Different items used to tap a given concept show similar results – ex. open-ended and closed-ended questions

4. Accuracy • Lack of mistakes in measurement • Increased by clear, defined procedures • Reduce complications that lead to errors • Observers must have sufficient: • Training • Motivation • Concentration

Increasing Reliability • General: • Training coders/interviewers/lab personnel • More careful concept explication (definitions) • Specification of procedures/rules • Reduce subjectivity (room for interpretation) • Survey measurement: • Increase the number of items in scale • Weeding out bad items from “item pool” • Content analysis coding: • Improve definition of content categories • Eliminate bad coders
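Why adding items to a scale helps can be illustrated with the Spearman-Brown formula, a classical result the slides do not mention by name. The sketch assumes the added items are of comparable quality to the existing ones; `spearman_brown` is a hypothetical helper name.

```python
def spearman_brown(reliability, length_factor):
    """Predicted reliability when a scale is lengthened by `length_factor`.

    Assumes the new items behave like the existing ones (parallel items).
    """
    return (length_factor * reliability) / (1 + (length_factor - 1) * reliability)

# A scale with reliability 0.60, doubled and then tripled in length.
doubled = spearman_brown(0.60, 2)
tripled = spearman_brown(0.60, 3)
print(f"doubled: {doubled:.2f}, tripled: {tripled:.2f}")
```

The gain shrinks with each addition, and it disappears if the new items are poor, which is why weeding out bad items matters as much as adding more.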

Indicators of Reliability • Test-retest • Make measurements more than once and see if they yield the same result • Split-half • If you have multiple measures of a concept, split items into two scales, which should then be correlated • Cronbach’s Alpha or Mean Item-total Correlation
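The split-half and Cronbach's alpha indicators can be sketched as follows. The responses are hypothetical, the odd/even split is only one of several possible ways to halve a scale, and the Spearman-Brown step-up applied to the split-half correlation is a common convention rather than something stated in the slides.

```python
from math import sqrt
from statistics import variance

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mean_x) ** 2 for a in x) *
                      sum((b - mean_y) ** 2 for b in y))

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])
    items = list(zip(*rows))                      # one tuple of scores per item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)

# Hypothetical answers of five respondents to a four-item scale (1-5 ratings).
responses = [
    [4, 5, 4, 5],
    [2, 3, 2, 2],
    [5, 5, 4, 4],
    [1, 2, 1, 2],
    [3, 3, 4, 3],
]

# Split-half: sum odd-numbered and even-numbered items into two half-scales.
half_a = [row[0] + row[2] for row in responses]
half_b = [row[1] + row[3] for row in responses]
split_half_r = pearson_r(half_a, half_b)
# Step the half-scale correlation up to the full scale length.
split_half_corrected = 2 * split_half_r / (1 + split_half_r)

alpha = cronbach_alpha(responses)
print(f"split-half r: {split_half_r:.3f}, corrected: {split_half_corrected:.3f}")
print(f"Cronbach's alpha: {alpha:.3f}")
```

Alpha uses all items at once, so it does not depend on how the scale happens to be split.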

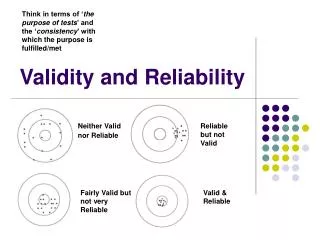

Reliability and Validity • Reliability is a necessary condition for validity • If it is not reliable it cannot be valid • Reliability is NOT a sufficient condition for validity • If it is reliable it may not necessarily be valid • Example: • Bathroom scale, old springs
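The bathroom scale example can be put in numbers. In this sketch the true weight and the readings are invented for illustration: the scale with old springs gives nearly identical readings every time (reliable) while being consistently off (not valid).

```python
from statistics import mean, stdev

true_weight = 70.0  # hypothetical true weight in kg

# Hypothetical repeated readings from a scale with worn springs.
readings = [65.1, 64.9, 65.0, 65.2, 64.8]

spread = stdev(readings)             # small spread: consistent readings
bias = mean(readings) - true_weight  # large offset: systematically wrong

print(f"spread of readings: {spread:.2f} kg")
print(f"average error: {bias:+.2f} kg")
```

A small spread with a large average error is the pattern of a measure that is reliable but not valid.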

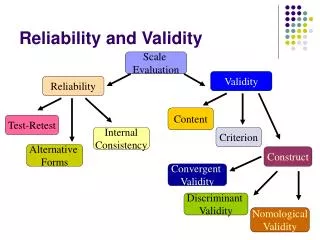

Types of Validity • 1. Face validity • 2. Content validity • 3. Pragmatic (criterion) validity • A. Concurrent validity • B. Predictive validity • 4. Construct validity • A. Testing of hypotheses • B. Convergent validity • C. Discriminant validity

Face Validity • Subjective judgment of experts about: • “what’s there” • Do the measures make sense? • Compare each item to conceptual definition • Does it represent the concept in question? • If not, it should be dropped • Is the measure valid “on its face”?

Content Validity • Subjective judgment of experts about: • “what is not there” • Start with conceptual definition of each dimension: • Is it represented by indicators at the operational level? • Are some over- or underrepresented? • If current indicators are insufficient: • develop and add more indicators • Example: Civic Participation questions: • Did you vote in the last election? • Do you belong to any civic groups? • Have you ever attended a city council meeting? • What about “protest participation” or “online organizing”?

Pragmatic Validity • Empirical evidence used to test validity • Compare measure to other indicators • 1. Concurrent validity • Does a measure predict a simultaneous criterion? • Validating a new measure by comparing it to an existing measure • E.g., Does a new intelligence test correlate with an established test? • 2. Predictive validity • Does a measure predict a future criterion? • E.g., SAT scores: Do they predict college GPA?

Construct Validity • Encompasses other elements of validity • Do measurements: • A. Represent all dimensions of the concept • B. Distinguish concept from other similar concepts • Tied to meaning analysis of the concept • Specifies the dimensions and indicators to be tested • Assessing construct validity • A. Testing hypotheses • B. Convergent validity • C. Discriminant validity

A. Testing Hypotheses • When measurements are put into practice: • Are theoretically derived hypotheses supported by observations? • If not, there is a problem with: • A. Theory • B. Research design (internal validity) • C. Measurement (construct validity?) • In seeking to examine construct validity: • Examine theoretical linkages of the concept to others • Must identify antecedents and consequences • What leads to the concept? • What are the effects of the concept?

B. Convergent Validity • Measuring a concept with different methods • If different methods yield the same results: • then convergent validity is supported • E.g., Survey items measuring Participation: • Voting • Donating money to candidates • Signing petitions • Writing letters to the editor • Civic group memberships • Volunteer activities

C. Discriminant (Divergent) Validity • Measuring a concept to discriminate that concept from other closely related concepts • E.g., Measuring Maternalism and Paternalism as distinct concepts
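Convergent and discriminant validity are often examined together by comparing correlations. The sketch below uses invented scores: two methods intended to measure the same concept should correlate strongly, while a measure of a related but distinct concept should correlate weakly. The variable names and the use of Pearson's r are assumptions for illustration.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mean_x) ** 2 for a in x) *
                      sum((b - mean_y) ** 2 for b in y))

# Hypothetical scores for six respondents.
method_a = [2, 4, 6, 8, 10, 12]      # concept measured with method A
method_b = [3, 4, 7, 8, 9, 13]       # same concept measured with method B
other_concept = [5, 9, 4, 8, 6, 7]   # a related but distinct concept

convergent_r = pearson_r(method_a, method_b)
discriminant_r = pearson_r(method_a, other_concept)

print(f"same concept, different methods: r = {convergent_r:.2f}")
print(f"different concept:               r = {discriminant_r:.2f}")
```

A high first correlation supports convergent validity; a clearly lower second correlation supports discriminant validity.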

Dimensions of Validity for Research Design • Internal • Validity of research design • Validity of sampling, measurement, procedures • External • Given the research design, how valid are • Inferences made from the conclusions • Implications for real world

Internal and External Validity in Experimental Design • Internal validity: • Did the experimental treatment make a difference? • Or is there an internal design flaw that invalidates the results? • External validity: • Are the results generalizable? • Generalizable to: • What populations? • What situations? • Without internal validity, there is no external validity