TGFs Table Generating Functions



Presentation Transcript

TGFs Table Generating Functions
Ali Ghodsi, Reynold Xin
UC Berkeley, Databricks

Unifying Platform: Spark
• Long-term goal
  • Single platform unifying big data frameworks
    • Spark
    • Shark
    • GraphX
    • Spark Streaming
    • MLlib

This talk
• Make SQL ↔ Spark easy
  • Shark (SQL) → Spark
    • Table Generating Functions (TGFs)
  • Spark ← Shark (SQL)
    • Better API (sqlRdd, saveAsTable)
• Future: Catalyst project for Spark

RDDs to Tables
• Save RDD as table
• Provide table name and column names
    val rdd = sc.parallelize((1 to 10).map(i => ("num" + i, i)))
    rdd.saveAsTable("myTableName", Seq("alpha", "num"))

Tables to RDDs
• Converting SQL tables to RDDs
• Uses Hive schema to convert to an RDD of tuples of primitive types
• Example: RDD[Tuple2[String, Int]]
    // sc.runSql("CREATE TABLE person (n string, a int)")
    val rdd = sc.sqlRdd[String, Int]("select * from table")
    val (name, age) = rdd.first
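The table-to-tuple conversion described on this slide can be sketched without Spark or Shark. This is only an illustration of the idea, not Shark's implementation: `Table` and `TableToTuples.toPairs` are hypothetical names, and a local `Seq` stands in for an RDD.

```scala
// Hypothetical stand-in for a SQL table: column names plus untyped rows.
final case class Table(columns: Seq[String], rows: Seq[Seq[Any]])

object TableToTuples {
  // Convert a two-column table into typed (String, Int) pairs,
  // failing loudly when a row does not match the expected types.
  def toPairs(table: Table): Seq[(String, Int)] = {
    require(table.columns.length == 2, "expected exactly two columns")
    table.rows.map {
      case Seq(n: String, a: Int) => (n, a)
      case other =>
        throw new IllegalArgumentException(s"row does not match (String, Int): $other")
    }
  }
}
```

With a table holding the rows `("alice", 30)` and `("bob", 25)`, `val (name, age) = TableToTuples.toPairs(table).head` destructures the first row in the same way as `rdd.first` on the slide.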

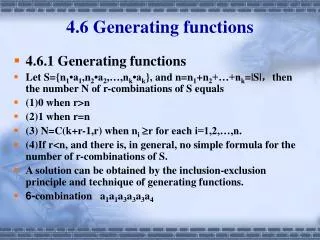

Table Generating Functions (TGFs)
• Scala functions that take parameters and output a table
    object myTGF {
      def apply(<parameters…>) = {
        <table>
      }
    }

Calling TGFs
• New SQL syntax to call TGF
• Returns the TGF's output (similar to a SELECT *)
• Save the output of the TGF in a SQL table
    GENERATE myTGF(input)
    GENERATE myTGF(input) AS my_table_name
    object myTGF {
      def apply(<parameters…>) = {
        <table>
      }
    }
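One way to picture the two GENERATE forms is as a lookup in a registry of functions, with an optional step that stores the result under a table name. The sketch below is a plain-Scala model under that assumption; `GenerateSketch`, `Result`, `register`, `generate`, and `table` are hypothetical names and say nothing about how Shark actually resolves TGFs.

```scala
import scala.collection.mutable

object GenerateSketch {
  // Hypothetical stand-in for a TGF result: a schema string plus rows.
  final case class Result(schema: String, rows: Seq[Seq[Any]])

  // In this model a TGF is a function from untyped arguments to a Result.
  type Tgf = Seq[Any] => Result

  private val tgfs = mutable.Map.empty[String, Tgf]
  private val tables = mutable.Map.empty[String, Result]

  // Make a TGF callable by name.
  def register(name: String)(f: Tgf): Unit = {
    tgfs.update(name, f)
  }

  // Models `GENERATE name(args)`; when saveAs is set it models
  // `GENERATE name(args) AS table` by also storing the result.
  def generate(name: String, args: Seq[Any], saveAs: Option[String] = None): Result = {
    val tgf = tgfs.getOrElse(name, throw new NoSuchElementException(s"unknown TGF: $name"))
    val result = tgf(args)
    saveAs.foreach(tableName => tables.update(tableName, result))
    result
  }

  // Look up a table saved by an earlier `generate(..., saveAs = Some(...))`.
  def table(name: String): Option[Result] = tables.get(name)
}
```

For example, after `GenerateSketch.register("upTo") { case Seq(n: Int) => GenerateSketch.Result("num int", (1 to n).map(i => Seq[Any](i))) }`, calling `GenerateSketch.generate("upTo", Seq(3))` only returns the rows, while passing `saveAs = Some("t")` also makes them available through `GenerateSketch.table("t")`.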

TGF inputs
• Any primitive types: ints, floats, etc.
• Any RDD[(_)], converted from tables
    object myTGF {
      def apply(inTable: RDD[(Int, String)], k: Int) = {
        <table>
      }
    }

TGF outputs
• Output must be RDDSchema
• Pair of RDD[(_)] and string with schema
    val output = RDDSchema(rdd, "name string, age int")
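The schema string in the example has the form "column type, column type". A minimal sketch of how such a string could be parsed and checked against the rows is shown below. It assumes that simple comma-separated form only; `SchemaSketch`, `Column`, `parseSchema`, and `LocalSchema` are hypothetical names, and `LocalSchema` is a local stand-in, not Shark's `RDDSchema`.

```scala
object SchemaSketch {
  final case class Column(name: String, sqlType: String)

  // Parse a schema string such as "name string, age int" into columns.
  def parseSchema(schema: String): Seq[Column] =
    schema.split(",").toSeq.map(_.trim).filter(_.nonEmpty).map { field =>
      field.split("\\s+") match {
        case Array(name, sqlType) => Column(name, sqlType.toLowerCase)
        case _ =>
          throw new IllegalArgumentException(s"malformed column definition: '$field'")
      }
    }

  // Hypothetical local stand-in for RDDSchema: rows paired with a schema string.
  final case class LocalSchema(rows: Seq[Seq[Any]], schema: String) {
    val columns: Seq[Column] = parseSchema(schema)
    // Every row needs exactly one value per declared column.
    require(rows.forall(_.length == columns.length),
      "every row must have one value per schema column")
  }
}
```

Checking the row width at construction time is a design choice of this sketch: a mismatch between the rows and the declared schema surfaces where the output is built rather than later, when the table is queried.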

Calling MLlib
    object MyTGF {
      import org.apache.spark.rdd.RDD
      import org.apache.spark.mllib.clustering._
      def apply(input: RDD[(String, Double)], k: Int) = {
        // Each value becomes a one-dimensional feature vector
        val data = input.map { case (_, x) => Array[Double](x) }
        val model = KMeans.train(data, k, 2, 2)
        // Pair each name with the cluster predicted for its value
        val rdd = input.map { case (n, x) => List(n, model.predict(Array(x))) }
        RDDSchema(rdd.asInstanceOf[RDD[Seq[_]]], "n string, d double")
      }
    }
    GENERATE MyTGF(myTable, 5) AS myTable2

Future
• Catalyst project in Spark
    val rdd2 = sql"SELECT * from $rdd"
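The `sql"…"` syntax on this slide relies on Scala's custom string interpolators, which are defined by adding a method to `StringContext`. The sketch below shows that mechanism in plain Scala. It is not Catalyst's implementation: `SqlInterpolatorSketch`, `NamedTable`, and `SqlHelper` are hypothetical, and the interpolator here only builds the query text, substituting a table name for each `NamedTable` argument.

```scala
object SqlInterpolatorSketch {
  // Hypothetical stand-in for a dataset that is registered under a table name.
  final case class NamedTable(name: String)

  implicit class SqlHelper(private val sc: StringContext) extends AnyVal {
    // Builds the query text: NamedTable arguments are replaced by their
    // table name, every other argument by its toString.
    def sql(args: Any*): String = {
      val rendered = args.map {
        case NamedTable(name) => name
        case other            => other.toString
      }
      // sc.parts always holds one more literal piece than there are arguments.
      val parts = sc.parts.iterator
      val sb = new StringBuilder(parts.next())
      rendered.foreach { arg => sb.append(arg).append(parts.next()) }
      sb.toString
    }
  }
}
```

After `import SqlInterpolatorSketch._` and `val rdd = NamedTable("people")`, the expression `sql"SELECT * from $rdd"` evaluates to the string `SELECT * from people`. A real implementation would presumably bind values rather than splice their text into the query, since plain substitution is open to SQL injection.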