Program Evaluation

Planning Programs for Adult Learners, Chapter 11: Formulating Evaluation Plans. Caffarella (2002). Program evaluation: a process used to determine whether the design and delivery of a program were effective and whether the proposed outcomes were met.

Presentation Transcript

Planning Programs for Adult Learners, Chapter 11: Formulating Evaluation Plans. Caffarella (2002)

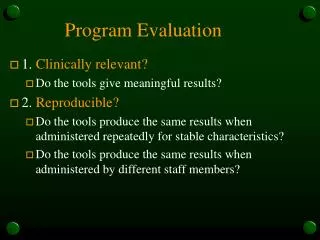

Definitions in Program Evaluation
• Program Evaluation: a process used to determine whether the design and delivery of a program were effective and whether the proposed outcomes were met.
• Formative Evaluation: evaluation done to improve or change a program while it is in progress.
• Summative Evaluation: evaluation that focuses on the results or outcomes of a program.

Formative vs. Summative
Formative
• Were changes in knowledge and skill levels occurring as participants went through the program?
• What context issues came up that might have changed the nature of the program?
Summative
• Were changes evident from pre/post data?
• How applicable would this program data be to other settings or contexts?
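As a rough illustration of the summative question about pre/post data, the sketch below computes each participant's gain between a pre-test and a post-test. The scores and the function name `pre_post_gains` are hypothetical and are not from Caffarella (2002); a real evaluation would also consider sample size and measurement quality.

```python
from statistics import mean


def pre_post_gains(pre, post):
    """Return per-participant gains (post minus pre) for paired scores."""
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same number of scores")
    return [after - before for before, after in zip(pre, post)]


# Hypothetical test scores for five participants (illustrative only).
pre_scores = [55, 60, 48, 70, 65]
post_scores = [68, 72, 50, 85, 64]

gains = pre_post_gains(pre_scores, post_scores)
mean_gain = mean(gains)
improved = sum(1 for g in gains if g > 0)

print(f"Mean gain: {mean_gain:.1f} points")
print(f"Participants who improved: {improved} of {len(gains)}")
```

A positive mean gain suggests change was evident, but on its own it does not show that the program caused the change.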

Goals of Program Evaluation
• Judge the value or worth of an education/training program (cost/benefit analysis).
• Demonstrate that program outcomes are tied to what happened in the program.
• Develop clear criteria for making judgments; these criteria can be changed as the program evolves.
• Be aware of the audience with which the program data will be shared and the population from which it will be collected. Each will have its own agenda.
• Program met the original objectives set forth by administration and/or participants.
• Program did not have any unintended consequences.
• Learners are able to transfer the information obtained to another setting.
• Usually specific to the program and less concerned with generalizability.
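To make the cost/benefit idea concrete, here is a minimal sketch of two commonly used summary figures, the benefit-cost ratio and return on investment. The dollar amounts and function names are hypothetical and are not taken from Caffarella (2002); putting a monetary value on program benefits is itself a judgment that the evaluation criteria need to justify.

```python
def benefit_cost_ratio(benefits, costs):
    """Benefits divided by costs; a value above 1 means benefits exceed costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return benefits / costs


def roi_percent(benefits, costs):
    """Net benefits as a percentage of costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs * 100


# Hypothetical figures for one training program (illustrative only).
program_costs = 20_000      # e.g. staff time, materials, facilities
program_benefits = 30_000   # e.g. estimated value of improved performance

bcr = benefit_cost_ratio(program_benefits, program_costs)
roi = roi_percent(program_benefits, program_costs)

print(f"Benefit-cost ratio: {bcr:.2f}")
print(f"ROI: {roi:.0f}%")
```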

Elements of a Systematic Evaluation
• Secure support for the evaluation from the stakeholders.
• Identify who will plan and oversee the evaluation.
• Define the purpose of the evaluation and the use of the results.
• Specify what is judged and formulate evaluation questions.
• Determine who supplies needed evidence and/or currently available data.
• Delineate the evaluation approach.
• Choose data collection and use plan.
• Indicate the analysis procedure.
• Stipulate how judgments are made.
• Determine timeline, budget, and needed resources.
• Monitor and complete the evaluation and make judgments.

How to Collect Data
• Observations
• Interviews
• Written Questionnaires
• Tests
• Surveys
• Product Reviews
• Performance Reviews
• Review of Records
• Portfolios
• Cost-benefit Analysis
• Focus Groups
• Self-Assessment

Levels of Evaluation Approach
Evaluate
• Participant reactions
• Participant learning
• Behavior change or use of new knowledge/skills
• Results or outcomes
Focus
• Primarily on participant reactions and changes

Accountability Planner Approach
Evaluate
• Skills/knowledge/attitudes
• Achievement of broad objectives
• Learning, tasks, and materials
• Anticipated changes
• Evidence of change
Focus
• Data collected at each level of program.
• What should you know or believe at the end?
• What were the techniques to reinforce the objectives?

Situated Evaluation Framework
Evaluate
• Review of program
• Reflection on criteria (who determines and how)
• Product Review
• Performance Review
Focus
• Programming (what and how)
• Valuing (who decides what is valuable and how)
• Knowledge Construction (what counts as evidence)
• Utilization of Evaluation Findings (for what ends and by whom)

Systems Evaluations
Evaluate
• Written Questionnaires
• Cost-benefit Analysis
Focus
• Educational unit function
• Efficiency of use of resources

Case Study Method
Evaluate
• Observations
• Interviews
• Review of Records
• Qualitative Data
Focus
• What does the program look like from the perspectives of the different individuals involved (participants, staff, sponsors, stakeholders)?
• How was it implemented and received?

Quasi-Legal Evaluation
Evaluate
• Interviews
• Product Reviews
• Tests
• Cost-benefit Analyses
• Review of Records
Focus
• Quality determined through adversarial hearings
• Panel of judges determines effectiveness based on presented evidence

Professional or Expert Review
Evaluate
• Interviews
• Review of Records
Focus
• Panel of experts evaluates based on a set of standards
• Often done for large education and training programs

Ethnography
Evaluate
• Qualitative focus
• Interviews, observations, storytelling
• Building of life histories and memoirs
Focus
• Enter into close, prolonged interaction with people in their everyday lives
• Purpose: placing actions and changes within a larger context