Statistical Inference

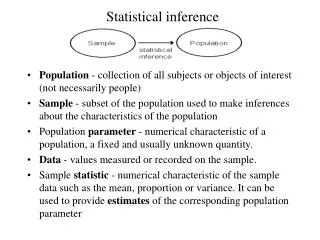

Statistical Inference. Estimation and Hypothesis Testing. Statistical Inference consists of two important components: Estimation Hypothesis testing. Estimation. Everyday Examples: There is a 60% chance that tomorrow’s rainfall will be 1.0 – 1.5 inches.

Statistical Inference

E N D

Presentation Transcript

Estimation and Hypothesis Testing • Statistical Inference consists of two important components: • Estimation • Hypothesis testing

Estimation • Everyday Examples: • There is a 60% chance that tomorrow’s rainfall will be 1.0 – 1.5 inches. • “I think I should be done between 3 and 3:30 pm.” • “If all the exams are like the one I just took, I’m pretty sure I should get an ‘A’ in this course.” • Estimation deals with the assessment or prediction of a population parameter based on a sample statistic. • It also typically places limits on such a prediction, together with a probability that the parameter lies within those limits.

Hypothesis Testing • Everyday Examples: • This coin has turned up Heads the last six times – I wonder if it is a fair coin. • Barry Bonds holds the record for the most home runs in a single season – 73 (2001). McGwire is next, with 70 (1998). Does this mean that Bonds is the bigger hitter? • Hypothesis testing deals with comparing an observed result with an expected one, or comparing two observed results. • It asks: what are the chances that any difference between the observed and expected results is entirely due to chance (i.e., not a real difference)?
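As a small worked example for the coin question, the sketch below computes the probability of six heads in six flips. It assumes the coin is fair and the flips are independent. This gives only the chance of that particular run. A formal test would also state its hypotheses and decide which outcomes count as "at least as extreme", which is beyond this sketch.

```python
from fractions import Fraction


def prob_all_heads(n_flips: int, p_heads: Fraction = Fraction(1, 2)) -> Fraction:
    """Probability that every one of n_flips independent flips comes up heads."""
    return p_heads ** n_flips


p = prob_all_heads(6)  # assumes a fair coin, P(heads) = 1/2
print(p, float(p))  # 1/64 0.015625
```

Exact fractions are used so the result (1/64, about 1.6%) carries no rounding error.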

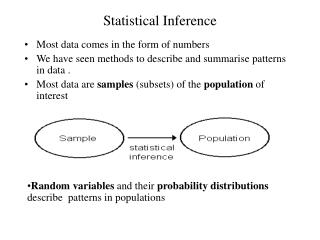

Estimation • Let us first look at Estimation. • As we just saw, this is the process of estimating the value of a parameter (because it is unknown). • For example, how much milk does a typical 2-year-old Jersey cow yield in a year? What we are looking for, of course, is the population mean, μ.

Estimation • μ can be estimated by obtaining a sample of 2-year-old Jersey cows, measuring each cow’s yield over one year, computing the mean yield (Y-bar) for that sample, and assuming that μ = Y-bar. • Of course, if you do the same with another sample, then that sample mean, Y-bar2, is likely to be at least slightly different from the one from the first sample (Y-bar1). So which Y-bar is μ equal to?
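The point that two samples give two different Y-bars can be seen in a short simulation. This is a minimal sketch that assumes a hypothetical normal population with mean 100 and standard deviation 15, chosen only for illustration. These are not real milk-yield figures. The sample size of 20 is also arbitrary.

```python
import random
import statistics


def sample_mean(population_mean: float, population_sd: float, n: int, rng: random.Random) -> float:
    """Draw n observations from a normal population and return their mean (Y-bar)."""
    sample = [rng.gauss(population_mean, population_sd) for _ in range(n)]
    return statistics.fmean(sample)


rng = random.Random(1)  # fixed seed so the run is reproducible
# Hypothetical population parameters and sample size, for illustration only.
MU, SIGMA, N = 100.0, 15.0, 20

y_bar1 = sample_mean(MU, SIGMA, N, rng)
y_bar2 = sample_mean(MU, SIGMA, N, rng)
print(round(y_bar1, 2), round(y_bar2, 2))  # two different estimates of the same mu
```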

Estimation • Therefore, rather than simply equating the parameter to the statistic, as above (called a point-estimate), we can estimate the value of the parameter within an interval (interval-estimate). • This interval clearly depends on the spread of the Y-bars, in other words, on the standard deviation of the Y-bars. • The standard deviation of a statistic is called a standard error.

Estimation • Equally obvious is the fact that the wider this interval, the greater our confidence that the parameter lies within it. • Think of darts – you are much more confident of hitting the board somewhere on its surface (OK, you are more confident of hitting the wall) than you are of hitting the bull’s eye.

Estimation • Thus, we can obtain an estimate of μ by taking the value of Y-bar and extending an interval on either side of it using the standard error. • How far to extend this interval depends on how confident you want to be in your inference, and this, in turn, is determined from the standard normal tables. • Remember, based on the standard normal tables, the probability that a normally distributed value lies within μ ± 1.96σ is 0.95.
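The interval described above, Y-bar ± 1.96 × standard error, can be sketched as follows. `statistics.NormalDist` stands in for the printed standard normal tables. The Y-bar of 50 and standard error of 2 are hypothetical numbers. The helper name `normal_ci` is also hypothetical and not taken from the slides.

```python
from statistics import NormalDist


def normal_ci(y_bar: float, standard_error: float, confidence: float = 0.95) -> tuple[float, float]:
    """Interval Y-bar +/- z * SE, with z looked up from the standard normal distribution."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    half_width = z * standard_error
    return (y_bar - half_width, y_bar + half_width)


std_normal = NormalDist()
# The "table lookup": probability that a standard normal value lies within +/- 1.96.
coverage = std_normal.cdf(1.96) - std_normal.cdf(-1.96)
low, high = normal_ci(y_bar=50.0, standard_error=2.0)  # hypothetical Y-bar and SE
print(f"P(-1.96 < Z < 1.96) = {coverage:.4f}")
print(f"95% interval: {low:.2f} to {high:.2f}")
```

With these inputs the interval runs from roughly 46.08 to 53.92. Raising `confidence` to 0.99 widens it, which is the darts trade-off described above.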

Estimation • Wait! • Why standard normal tables? • We are no longer talking about the standard deviation of the original variable, but about the standard error of the sample mean.

Estimation • This means that the sample mean Y-bar is our variable now, and not the original variable (milk yield of individual cows). • (Assume that you have many samples of a given size drawn from the population. Each sample has its own mean – this is our variable now.)

Estimation • So the question is: if the original variable follows the normal distribution (with mean μ and standard deviation σ), does it follow that the sample means also follow the normal distribution – and with what mean and standard error? • And what happens if the original variable does not follow the normal distribution?

Estimation • Let us find out. • Let us first plot the original variables.

Distribution of Wing Length and Milk Yield • Clearly, • Wing length appears normally distributed, while • Milk yield deviates strongly from normality.

Distribution of Wing Length and Milk Yield • Let us now take repeated samples from these two distributions, and check the distribution of the sample means. • First we take 1400 samples of size n = 5 each.

Distribution of Sample Means (Wing Length and Milk Yield, n = 5) • From the histograms and the normal quantile plots, it is clear that • When n = 5, Y-bar for wing length follows the normal distribution, while • When n = 5, Y-bar for milk yield does not follow the normal distribution.

Distribution of Sample Means (Wing Length and Milk Yield, n = 35) • Let us now see what happens if we increase the sample size, with n = 35.

Distribution of Sample Means (Wing Length and Milk Yield, n = 35) • From the histograms and the normal quantile plots, it is clear that when n = 35, • Y-bar for wing length continues to follow the normal distribution, but surprise, surprise… • Y-bar for milk yield now also follows the normal distribution.

What happened here? • The only difference between the two graphs for milk yield is the sample size. • So what does this tell us?

Take-home message • Means of samples from a normal distribution are normally distributed, irrespective of the sample size. • Means of samples from a positively skewed distribution will approach the normal distribution as the sample size increases.

The Central Limit Theorem • Indeed, means of samples from any distribution with a finite variance will approach the normal distribution as the sample size increases. • This is known as the central limit theorem. • Its importance is that the normal distribution tables can be used even when the original variable deviates strongly from normality, provided the sample is large enough.
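The wing-length and milk-yield experiment can be mimicked with simulated data, taking 1400 samples at n = 5 and n = 35 as in the slides. This sketch uses two assumed stand-ins, not the original data: a normal distribution for wing length and an exponential distribution for the positively skewed milk yield. Instead of histograms and quantile plots, it summarizes each set of means by its skewness. Skewness is near 0 for a symmetric, normal-looking distribution.

```python
import random
import statistics


def skewness(values: list[float]) -> float:
    """Moment-based skewness: mean cubed deviation divided by the cubed (population) SD."""
    m = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean([(v - m) ** 3 for v in values]) / sd ** 3


def sample_means(draw, n: int, n_samples: int) -> list[float]:
    """Means of n_samples independent samples of size n, each value produced by draw()."""
    return [statistics.fmean([draw() for _ in range(n)]) for _ in range(n_samples)]


rng = random.Random(42)  # fixed seed for reproducibility


def symmetric_draw() -> float:
    # Assumed stand-in for a normally distributed variable such as wing length.
    return rng.gauss(0.0, 1.0)


def skewed_draw() -> float:
    # Assumed stand-in for a positively skewed variable such as milk yield.
    return rng.expovariate(1.0)


results = {}
for n in (5, 35):
    results[n] = (
        skewness(sample_means(symmetric_draw, n, 1400)),
        skewness(sample_means(skewed_draw, n, 1400)),
    )
    print(f"n={n:>2}: skewness of means, symmetric = {results[n][0]:+.2f}, "
          f"skewed = {results[n][1]:+.2f}")
```

For exponential data, the theoretical skewness of the sample mean is 2/√n. That is about 0.89 at n = 5 and 0.34 at n = 35, so the means from the skewed population move toward symmetry as n grows. The means from the normal population stay near 0 at both sizes.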

Effect on Dispersion • Now let us see what effect the sample size, n, has on the dispersion of the distribution of sample means (that is, on its standard deviation).

Effect on Dispersion (figure: distributions of the sample means for sample size = 5 and sample size = 35)

Effect on Dispersion (figure: ranges of the individual observations and of the sample means) • What is happening here?

The range of the sample means is reduced when compared to the range of the individual observations. • The range of the sample means reduces as the sample size increases.

Dispersion is reduced (figure: standard deviation of the sample means)

Dispersion is reduced • The dispersion of the means • depends upon the dispersion in the original variable • reduces as the sample size increases
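Both observations above can be checked numerically. The range shrinks from individual observations to means, and the dispersion of the means grows with the dispersion of the original variable. This minimal sketch assumes hypothetical normal populations with mean 0 and SD 1 or 2, and uses 1400 draws of each kind to echo the slides. The helper names are illustrative only.

```python
import random
import statistics


def means_of_samples(sigma: float, n: int, n_samples: int, rng: random.Random) -> list[float]:
    """Means of n_samples samples of size n from a normal population (mean 0, SD sigma).

    With n = 1 each "mean" is just a single individual observation.
    """
    return [statistics.fmean([rng.gauss(0.0, sigma) for _ in range(n)]) for _ in range(n_samples)]


def value_range(values: list[float]) -> float:
    """Largest value minus smallest value."""
    return max(values) - min(values)


rng = random.Random(3)  # fixed seed for reproducibility
N_SAMPLES = 1400

individuals = means_of_samples(1.0, 1, N_SAMPLES, rng)
means_5 = means_of_samples(1.0, 5, N_SAMPLES, rng)
means_35 = means_of_samples(1.0, 35, N_SAMPLES, rng)
means_5_wide = means_of_samples(2.0, 5, N_SAMPLES, rng)  # population twice as dispersed

for label, values in [("individuals", individuals), ("means, n=5", means_5),
                      ("means, n=35", means_35), ("means, n=5, SD 2", means_5_wide)]:
    print(f"{label:>18}: range = {value_range(values):.2f}, SD = {statistics.stdev(values):.2f}")
```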

Expected Value • How good a statistic is as an estimator of a parameter depends upon how much variation there is in that statistic. • From the population of observations, one can obtain many samples of a given size, and thus, many values of a statistic (e.g., mean) – one from each sample. • Thus, the “population” of means can be approximated by taking a very large (“infinite”) number of samples from the population and obtaining the mean for each sample.

Expected Value • The average of all those infinitely many means is known as the expected value of the sample mean. The expected value of the sample mean is the population mean. • (This simply means that your sample mean belongs to a distribution whose mean is the population mean.) • The variation among those infinitely many means, measured as their standard deviation, is the standard error of the mean.

Expected Value of the standard deviation of sample means (figure: Sample #1, Sample #2, Sample #3, Sample #4, Sample #5, etc., each drawn from the POPULATION)

Expected Value of the standard deviation • This means that • While the expected value of Y-bar is the population mean, • The expected value of the standard deviation of the means is not equal to the population standard deviation; it equals σ/√n, which is smaller than σ whenever n > 1 • The larger the sample, the smaller the expected value of the standard deviation of the means.
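Repeated sampling of the kind pictured above can be approximated with many simulated samples. The average of the means should then land near μ, and their standard deviation near σ/√n rather than σ. This is a sketch under stated assumptions: a hypothetical normal population with μ = 50 and σ = 10, and 5000 samples as a finite stand-in for "infinitely many".

```python
import math
import random
import statistics

MU, SIGMA = 50.0, 10.0  # hypothetical population parameters
N_SAMPLES = 5000        # finite stand-in for "infinitely many" samples
rng = random.Random(11)  # fixed seed for reproducibility


def summarize_means(n: int) -> tuple[float, float]:
    """Return (mean, SD) of N_SAMPLES sample means, each computed from a sample of size n."""
    means = [statistics.fmean([rng.gauss(MU, SIGMA) for _ in range(n)]) for _ in range(N_SAMPLES)]
    return statistics.fmean(means), statistics.stdev(means)


for n in (5, 35):
    mean_of_means, sd_of_means = summarize_means(n)
    print(f"n={n:>2}: mean of means = {mean_of_means:.2f} (mu = {MU}), "
          f"SD of means = {sd_of_means:.2f} "
          f"(sigma/sqrt(n) = {SIGMA / math.sqrt(n):.2f}, sigma = {SIGMA})")
```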

Let’s be real now! • Of course, in reality we are rarely going to do repeated sampling. • However, it is good to know that if we increase our sample size, the sample mean will be more or less the same irrespective of which sample we take.

Let’s be real now! • In reality, what we usually have is one sample, and therefore: • one standard deviation, s, and • one sample size, n. • Therefore, we estimate the standard error of the mean by: $s_{\bar{Y}} = s/\sqrt{n}$
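With a single sample, the estimate s/√n takes only a couple of lines to compute. The five observations below are hypothetical. `statistics.stdev` uses the n − 1 denominator, the usual sample standard deviation s.

```python
import math
import statistics


def standard_error(sample: list[float]) -> float:
    """Estimated standard error of the mean, s / sqrt(n), with s using the n - 1 denominator."""
    return statistics.stdev(sample) / math.sqrt(len(sample))


sample = [4.0, 6.0, 5.0, 7.0, 3.0]  # hypothetical observations
print(statistics.fmean(sample), standard_error(sample))
```

Here Y-bar is 5.0 and s² = 10/4 = 2.5. The standard error is therefore √(2.5/5) = √0.5 ≈ 0.707.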

Expected Value of the standard deviation • There is one major difference between the standard deviation of individual observations (s) and the standard deviation of means (the standard error) • The value of s stabilizes as n increases but does not necessarily decrease in magnitude. • However, the standard error decreases as n increases.
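The contrast between s and the standard error shows up when both are computed on ever larger portions of one growing sample. This minimal sketch assumes a hypothetical normal population with σ = 10. The expected pattern is that s settles near 10 while s/√n keeps shrinking.

```python
import math
import random
import statistics

SIGMA = 10.0  # hypothetical population standard deviation
rng = random.Random(5)  # fixed seed for reproducibility
data = [rng.gauss(0.0, SIGMA) for _ in range(10_000)]

results = []
for n in (25, 100, 1000, 10_000):
    s = statistics.stdev(data[:n])   # standard deviation of the first n observations
    se = s / math.sqrt(n)            # estimated standard error of their mean
    results.append((n, s, se))
    print(f"n={n:>6}: s = {s:6.2f}, standard error = {se:6.3f}")
```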