Lecture 5 Support Vector Machines

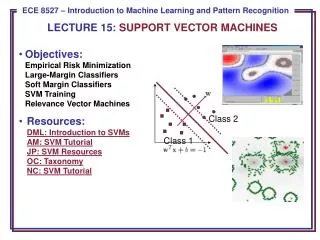

This lecture explores Support Vector Machines (SVMs), focusing on large-margin linear classifiers in both the separable and the non-separable case. It discusses the optimal separating hyperplane, the margin, and the role of slack variables when the data are not linearly separable. It then introduces the kernel trick, which enlarges the feature space to make the classifier more flexible without explicitly computing the high-dimensional representation. The computational part covers the Lagrange (primal and dual) functions and the role of support vectors, and the lecture closes with the VC dimension as a measure of classifier complexity.


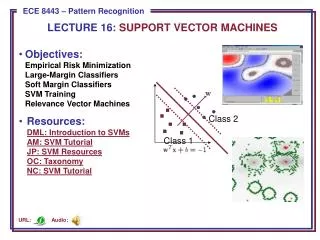

Lecture 5: Support Vector Machines. Outline: large-margin linear classifier; non-separable case; the kernel trick.

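Large-margin linear classifier: Assume first that the two classes are linearly separable. With the classifier $f(x) = w^T x + w_0$, the optimal separating hyperplane $\{x : f(x) = 0\}$ separates the two classes and maximizes the distance to the closest point from either class. This criterion has a unique solution and tends to give better performance on test samples.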

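Large-margin linear classifier: For any point $x$, $f(x) = w^T x + w_0 = r\,\|w\|$, where $r$ is the signed distance from $x$ to the hyperplane $f(x) = 0$. Hence $r = f(x)/\|w\|$: the value of $f$ is proportional to the distance from the hyperplane, and its sign tells which side the point is on.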
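A minimal sketch of the relation $r = f(x)/\|w\|$, assuming vectors are plain Python lists; the function names and the example hyperplane $3x_1 + 4x_2 - 5 = 0$ are illustrative choices, not from the slides.

```python
import math

def f(w, w0, x):
    """Linear decision function f(x) = w^T x + w0."""
    return sum(wi * xi for wi, xi in zip(w, x)) + w0

def signed_distance(w, w0, x):
    """Signed distance r from x to the hyperplane f(x) = 0, from f(x) = r * ||w||."""
    norm_w = math.sqrt(sum(wi * wi for wi in w))
    return f(w, w0, x) / norm_w

# Hyperplane 3*x1 + 4*x2 - 5 = 0, so ||w|| = 5.
w, w0 = [3.0, 4.0], -5.0
print(signed_distance(w, w0, [3.0, 4.0]))  # f = 20, so r = 20 / 5 = 4.0
print(signed_distance(w, w0, [1.0, 0.5]))  # point on the hyperplane: 0.0
```

The sign of `signed_distance` gives the predicted side; its magnitude is the Euclidean distance to the hyperplane.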

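Large-margin linear classifier: Let $\{x_1, \dots, x_n\}$ be our training dataset in $d$ dimensions, with class labels $y_i \in \{1, -1\}$. Write the hyperplane as $f(x) = x^T\beta + \beta_0$, where $\beta$ and $\beta_0$ play the roles of $w$ and $w_0$. Our goal: among all $f(x)$ with $\|\beta\| = 1$ such that $y_i f(x_i) \ge M$ for every $i$, find the optimal separating hyperplane, i.e. the one with the largest margin $M$:

$$\max_{\beta,\,\beta_0,\,\|\beta\|=1} M \quad \text{subject to} \quad y_i(x_i^T\beta + \beta_0) \ge M,\; i = 1, \dots, n.$$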

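Large-margin linear classifier: Every training point is at least a distance $M$ from the hyperplane, so the border of each class lies $M$ away from it; $M$ is called the "margin". To drop the $\|\beta\| = 1$ requirement, replace the constraints by $y_i(x_i^T\beta + \beta_0) \ge M\|\beta\|$. Rescaling $\beta$ and $\beta_0$ by a positive constant does not change the hyperplane, so we may set $\|\beta\| = 1/M$, i.e. $M = 1/\|\beta\|$. Maximizing $M$ then becomes the easier problem

$$\min_{\beta,\,\beta_0} \|\beta\| \quad \text{subject to} \quad y_i(x_i^T\beta + \beta_0) \ge 1,\; i = 1, \dots, n.$$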
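A minimal sketch of the rescaling argument, on a hypothetical three-point dataset: the geometric margin (distance from the hyperplane to the closest point) does not change when $(\beta, \beta_0)$ is scaled, and after rescaling so that the closest point has $y_i f(x_i) = 1$, the margin equals $1/\|\beta\|$. The helper names are illustrative.

```python
import math

def functional_margin(beta, beta0, X, y):
    """Smallest value of y_i * (x_i^T beta + beta0) over the training points."""
    return min(yi * (sum(b * v for b, v in zip(beta, xi)) + beta0) for xi, yi in zip(X, y))

def geometric_margin(beta, beta0, X, y):
    """Distance from the hyperplane to the closest point: functional margin / ||beta||."""
    return functional_margin(beta, beta0, X, y) / math.sqrt(sum(b * b for b in beta))

def canonical(beta, beta0, X, y):
    """Rescale so the closest point has y_i f(x_i) = 1 (assumes the data are separated)."""
    m = functional_margin(beta, beta0, X, y)
    return [b / m for b in beta], beta0 / m

# Toy separable data (illustrative): the boundary x1 = 2 separates the classes.
X = [[3.0, 0.0], [1.0, 0.0], [5.0, 0.0]]
y = [1, -1, 1]
beta, beta0 = [2.0, 0.0], -4.0                # the hyperplane x1 - 2 = 0, scaled by 2
print(geometric_margin(beta, beta0, X, y))    # 1.0
cb, cb0 = canonical(beta, beta0, X, y)
print(cb, cb0)                                # [1.0, 0.0] -2.0
print(1 / math.sqrt(sum(b * b for b in cb)))  # M = 1/||beta|| = 1.0
```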

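Non-separable case: When the two classes are not linearly separable, allow slack variables $\xi = (\xi_1, \dots, \xi_n)$ for the points on the wrong side of the border, modifying the constraints to

$$y_i(x_i^T\beta + \beta_0) \ge M(1 - \xi_i), \quad \xi_i \ge 0, \quad \sum_{i=1}^n \xi_i \le \text{constant}.$$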

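Non-separable case: With $M = 1/\|\beta\|$ as before, the optimization problem becomes

$$\min_{\beta,\,\beta_0} \|\beta\| \quad \text{subject to} \quad y_i(x_i^T\beta + \beta_0) \ge 1 - \xi_i,\; \xi_i \ge 0,\; \sum_{i=1}^n \xi_i \le \text{constant}.$$

Here $\xi_i = 0$ when the point is on the correct side of the margin; $0 < \xi_i < 1$ when the point is inside the margin but still on the correct side of the hyperplane; and $\xi_i > 1$ when the point has passed the hyperplane to the wrong side (it is misclassified).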
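For a fixed hyperplane, the smallest slack satisfying both $y_i(x_i^T\beta + \beta_0) \ge 1 - \xi_i$ and $\xi_i \ge 0$ is $\xi_i = \max(0,\, 1 - y_i f(x_i))$. A minimal sketch computing it and reading off the cases above; the data and the hyperplane $\beta = (1, 0)$, $\beta_0 = -2$ (boundary at $x_1 = 2$, margin edges at $x_1 = 1$ and $x_1 = 3$) are hypothetical.

```python
def slacks(beta, beta0, X, y):
    """Smallest xi_i with y_i f(x_i) >= 1 - xi_i and xi_i >= 0."""
    return [max(0.0, 1.0 - yi * (sum(b * v for b, v in zip(beta, xi)) + beta0))
            for xi, yi in zip(X, y)]

def describe(xi):
    """Position of a point relative to its margin and the hyperplane, given its slack."""
    if xi == 0.0:
        return "correct side of the margin"
    if xi < 1.0:
        return "inside the margin, correct side of the hyperplane"
    if xi == 1.0:
        return "exactly on the hyperplane"
    return "wrong side of the hyperplane (misclassified)"

beta, beta0 = [1.0, 0.0], -2.0
X = [[4.0, 0.0], [2.5, 0.0], [1.5, 0.0], [0.0, 0.0]]
y = [1, 1, 1, -1]
for xi in slacks(beta, beta0, X, y):
    print(xi, describe(xi))
# 0.0 correct side of the margin
# 0.5 inside the margin, correct side of the hyperplane
# 1.5 wrong side of the hyperplane (misclassified)
# 0.0 correct side of the margin
```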

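Non-separable case: When a point lies outside the margin on its correct side, $\xi_i = 0$. Such a point does not play a big role in determining the boundary: the fit is driven by the points near the boundary, so the method does not force any special assumption about the class distributions.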

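Computation: An equivalent formulation, in which the cost parameter $C$ replaces the constant, is

$$\min_{\beta,\,\beta_0} \tfrac{1}{2}\|\beta\|^2 + C\sum_{i=1}^n \xi_i \quad \text{subject to} \quad \xi_i \ge 0,\; y_i(x_i^T\beta + \beta_0) \ge 1 - \xi_i\;\; \forall i.$$

The separable case corresponds to $C = \infty$.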
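Since the smallest feasible slack for fixed $(\beta, \beta_0)$ is $\max(0, 1 - y_i f(x_i))$, the constrained problem above is equivalent to minimizing $\tfrac{1}{2}\|\beta\|^2 + C\sum_i \max(0, 1 - y_i f(x_i))$ with no constraints (the hinge-loss form). The sketch below minimizes that form by plain full-batch subgradient descent. This is a swapped-in technique for illustration, not the dual method developed on the following slides; the step-size schedule, epoch count, and toy data are assumptions.

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def primal_objective(beta, beta0, X, y, C):
    """1/2 ||beta||^2 + C * sum of hinge losses (the optimal slacks plugged in)."""
    hinge = sum(max(0.0, 1.0 - yi * (dot(beta, xi) + beta0)) for xi, yi in zip(X, y))
    return 0.5 * dot(beta, beta) + C * hinge

def train_subgradient(X, y, C, epochs=2000, lr0=0.1):
    """Full-batch subgradient descent on the primal objective (illustrative only)."""
    d = len(X[0])
    beta, beta0 = [0.0] * d, 0.0
    for t in range(1, epochs + 1):
        lr = lr0 / math.sqrt(t)                     # decreasing step size (assumed schedule)
        g, g0 = list(beta), 0.0                     # gradient of 1/2 ||beta||^2
        for xi, yi in zip(X, y):
            if yi * (dot(beta, xi) + beta0) < 1.0:  # hinge term is active for this point
                for k in range(d):
                    g[k] -= C * yi * xi[k]
                g0 -= C * yi
        beta = [b - lr * gk for b, gk in zip(beta, g)]
        beta0 -= lr * g0
    return beta, beta0

X = [[2.0, 2.0], [3.0, 3.0], [2.0, 3.0], [-2.0, -2.0], [-3.0, -3.0], [-2.0, -3.0]]
y = [1, 1, 1, -1, -1, -1]
beta, beta0 = train_subgradient(X, y, C=1.0)
preds = [1 if dot(beta, xi) + beta0 > 0 else -1 for xi in X]
print(primal_objective(beta, beta0, X, y, C=1.0), preds)
```

Subgradient descent is simple but converges slowly; practical solvers usually work with the dual problem derived next.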

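Computation: The Lagrange (primal) function is

$$L_P = \tfrac{1}{2}\|\beta\|^2 + C\sum_{i=1}^n \xi_i - \sum_{i=1}^n \alpha_i\big[y_i(x_i^T\beta + \beta_0) - (1 - \xi_i)\big] - \sum_{i=1}^n \mu_i \xi_i, \tag{12.9}$$

which is minimized with respect to $\beta$, $\beta_0$ and $\xi_i$. Taking derivatives with respect to $\beta$, $\beta_0$ and $\xi_i$ and setting them to zero gives

$$\beta = \sum_{i=1}^n \alpha_i y_i x_i, \tag{12.10}$$

$$0 = \sum_{i=1}^n \alpha_i y_i, \tag{12.11}$$

$$\alpha_i = C - \mu_i\;\; \forall i, \tag{12.12}$$

together with the positivity constraints $\alpha_i, \mu_i, \xi_i \ge 0\;\; \forall i$.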

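Computation: Substituting (12.10) through (12.12) into (12.9) gives the Lagrangian dual objective function

$$L_D = \sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i=1}^n\sum_{i'=1}^n \alpha_i \alpha_{i'} y_i y_{i'} x_i^T x_{i'},$$

which is maximized subject to $0 \le \alpha_i \le C$ and $\sum_{i=1}^n \alpha_i y_i = 0$. Besides these constraints, the Karush-Kuhn-Tucker conditions include, for $i = 1, \dots, n$,

$$\alpha_i\big[y_i(x_i^T\beta + \beta_0) - (1 - \xi_i)\big] = 0,$$

$$\mu_i \xi_i = 0,$$

$$y_i(x_i^T\beta + \beta_0) - (1 - \xi_i) \ge 0.$$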
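A small worked check of the dual, under stated assumptions: a hypothetical two-point dataset $x_1 = (1, 0)$ with $y_1 = +1$ and $x_2 = (-1, 0)$ with $y_2 = -1$, and a brute-force grid search standing in for a real quadratic-programming solver. The constraint $\sum_i \alpha_i y_i = 0$ forces $\alpha_1 = \alpha_2 = a$, so $L_D = 2a - 2a^2$, maximized at $a = 1/2$. Recovering $\beta$ through (12.10) gives the hard-margin solution $\beta = (1, 0)$, $\beta_0 = 0$, and $L_D$ equals the primal value $\tfrac{1}{2}\|\beta\|^2$ (no slack is needed here).

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def dual_objective(alpha, X, y):
    """L_D = sum_i alpha_i - 1/2 sum_i sum_i' alpha_i alpha_i' y_i y_i' x_i^T x_i'."""
    n = len(X)
    quad = sum(alpha[i] * alpha[j] * y[i] * y[j] * dot(X[i], X[j])
               for i in range(n) for j in range(n))
    return sum(alpha) - 0.5 * quad

def beta_from_alpha(alpha, X, y):
    """Stationarity condition (12.10): beta = sum_i alpha_i y_i x_i."""
    return [sum(a * yi * xi[k] for a, yi, xi in zip(alpha, y, X)) for k in range(len(X[0]))]

X, y, C = [[1.0, 0.0], [-1.0, 0.0]], [1, -1], 1.0
# sum_i alpha_i y_i = 0 forces alpha_1 = alpha_2 = a; scan a over a grid on [0, C].
grid = [k / 100 for k in range(101)]
best_a = max(grid, key=lambda a: dual_objective([a, a], X, y))
beta = beta_from_alpha([best_a, best_a], X, y)
print(best_a, beta)                                                    # 0.5 [1.0, 0.0]
print(dual_objective([best_a, best_a], X, y), 0.5 * dot(beta, beta))  # 0.5 0.5
```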

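Computation: From (12.10), the solution for $\beta$ has the form

$$\hat\beta = \sum_{i=1}^n \hat\alpha_i y_i x_i,$$

with nonzero coefficients $\hat\alpha_i$ only for those points $i$ for which the constraint $y_i(x_i^T\beta + \beta_0) - (1 - \xi_i) \ge 0$ is met exactly (by the first KKT condition). These points are called "support vectors". Some lie on the edge of the margin ($\hat\xi_i = 0$) and are characterized by $0 < \hat\alpha_i < C$; the remainder have $\hat\xi_i > 0$ and $\hat\alpha_i = C$, and they are on the wrong side of their margin.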
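A minimal sketch of reading off the support vectors from the dual coefficients and recovering $\beta_0$. For a margin support vector, $y_i(x_i^T\beta + \beta_0) = 1$ with $y_i \in \{\pm 1\}$ gives $\beta_0 = y_i - x_i^T\beta$; averaging over all margin support vectors is a common choice, assumed here. The toy data and the values $\alpha = (0.5, 0.5, 0)$, $C = 1$ are hypothetical, chosen by hand so that the dual constraints and KKT conditions hold; the tolerance `tol` is also an assumption.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def classify_points(alpha, C, tol=1e-8):
    """Split indices into non-SVs (alpha = 0), margin SVs (0 < alpha < C), bound SVs (alpha = C)."""
    non_sv, margin_sv, bound_sv = [], [], []
    for i, a in enumerate(alpha):
        if a <= tol:
            non_sv.append(i)
        elif a >= C - tol:
            bound_sv.append(i)
        else:
            margin_sv.append(i)
    return non_sv, margin_sv, bound_sv

def intercept(alpha, X, y, C):
    """beta = sum alpha_i y_i x_i; beta0 averaged over margin SVs (needs at least one)."""
    beta = [sum(a * yi * xi[k] for a, yi, xi in zip(alpha, y, X)) for k in range(len(X[0]))]
    _, margin_sv, _ = classify_points(alpha, C)
    return beta, sum(y[i] - dot(X[i], beta) for i in margin_sv) / len(margin_sv)

X = [[3.0, 0.0], [1.0, 0.0], [5.0, 0.0]]
y = [1, -1, 1]
alpha, C = [0.5, 0.5, 0.0], 1.0
print(classify_points(alpha, C))  # ([2], [0, 1], [])
print(intercept(alpha, X, y, C))  # ([1.0, 0.0], -2.0)
```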

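Computation: With a smaller $C$, slack is penalized less, the margin widens, and more points fall on or inside it and become support vectors. In the example on this slide, 85% of the points are support points.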

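Support Vector Machines: To make the procedure more flexible, enlarge the feature space using basis functions $h(x) = (h_1(x), h_2(x), \dots)$. Use the same procedure to construct the SV classifier $\hat f(x) = h(x)^T\hat\beta + \hat\beta_0$ in the enlarged space. The decision is made by $\hat G(x) = \operatorname{sign}(\hat f(x))$.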
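A minimal sketch of why enlarging the feature space helps, on hypothetical 1-D data labeled $+1$ when $|x| > 1$ and $-1$ otherwise. A brute-force search confirms that no 1-D linear rule $\operatorname{sign}(s(x - t))$ separates the classes, while with the assumed basis $h(x) = (x, x^2)$ the linear function $f(x) = x^2 - 1$ does. Here $\beta = (0, 1)$ and $\beta_0 = -1$ are picked by hand rather than produced by the SV procedure.

```python
def sign(v):
    return 1 if v > 0 else -1

def separable_by_threshold(xs, ys):
    """Brute force: does some 1-D linear rule sign(s * (x - t)) label every point correctly?"""
    pts = sorted(xs)
    thresholds = [pts[0] - 1.0] + [(a + b) / 2 for a, b in zip(pts, pts[1:])] + [pts[-1] + 1.0]
    return any(all(sign(s * (x - t)) == yv for x, yv in zip(xs, ys))
               for t in thresholds for s in (1, -1))

def h(x):
    """Hypothetical basis expansion h(x) = (x, x^2)."""
    return [x, x * x]

xs = [-2.0, -1.5, -0.5, 0.0, 0.5, 1.5, 2.0]
ys = [1 if abs(x) > 1 else -1 for x in xs]  # +1 outside [-1, 1], -1 inside
print(separable_by_threshold(xs, ys))       # False: no single threshold works

# In the enlarged space, beta = (0, 1), beta0 = -1 gives f(x) = x^2 - 1.
beta, beta0 = [0.0, 1.0], -1.0
preds = [sign(sum(b * v for b, v in zip(beta, h(x))) + beta0) for x in xs]
print(preds == ys)                          # True
```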

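SVM: Recall that in the original (linear) space, the dual objective and the solution function are

$$L_D = \sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i=1}^n\sum_{i'=1}^n \alpha_i\alpha_{i'}y_iy_{i'}\langle x_i, x_{i'}\rangle, \qquad f(x) = \sum_{i=1}^n \alpha_i y_i \langle x, x_i\rangle + \beta_0.$$

With the new basis, the inner products are taken between transformed features:

$$L_D = \sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i=1}^n\sum_{i'=1}^n \alpha_i\alpha_{i'}y_iy_{i'}\langle h(x_i), h(x_{i'})\rangle, \qquad f(x) = \sum_{i=1}^n \alpha_i y_i \langle h(x), h(x_i)\rangle + \beta_0.$$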

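SVM: $h(x)$ is involved ONLY in the form of inner products! So as long as we define a kernel function

$$K(x, x') = \langle h(x), h(x')\rangle,$$

which computes the inner product in the transformed space, we don't need to know what $h(x)$ itself is. This is the "kernel trick". Some commonly used kernels: the polynomial kernel of degree $q$, $K(x, x') = (1 + \langle x, x'\rangle)^q$; the radial basis kernel, $K(x, x') = \exp(-\gamma\|x - x'\|^2)$; and the neural network kernel, $K(x, x') = \tanh(\kappa_1\langle x, x'\rangle + \kappa_2)$.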
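A minimal sketch checking the kernel trick for the degree-2 polynomial kernel with two-dimensional inputs, using one explicit feature map (assumed here) $h(x) = (1, \sqrt2 x_1, \sqrt2 x_2, x_1^2, x_2^2, \sqrt2 x_1 x_2)$. Expanding $(1 + x_1x_1' + x_2x_2')^2$ term by term gives exactly $\langle h(x), h(x')\rangle$, so the kernel computed in the original 2-D space matches the inner product in the 6-D space. The radial basis function is included only to show the formula; its feature space is infinite-dimensional, so there is no finite $h$ to compare against.

```python
import math

def inner(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def h_poly2(x):
    """Explicit feature map for K(x, x') = (1 + <x, x'>)^2 with 2-D input."""
    x1, x2 = x
    r = math.sqrt(2.0)
    return [1.0, r * x1, r * x2, x1 * x1, x2 * x2, r * x1 * x2]

def k_poly(x, xp, q=2):
    """Polynomial kernel of degree q."""
    return (1.0 + inner(x, xp)) ** q

def k_rbf(x, xp, gamma=1.0):
    """Radial basis kernel exp(-gamma * ||x - x'||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, xp)))

x, xp = [1.0, 2.0], [3.0, -1.0]
print(k_poly(x, xp))                   # 4.0, computed in the original 2-D space
print(inner(h_poly2(x), h_poly2(xp)))  # approximately 4.0, computed in the 6-D space
```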

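SVM: Recall that $\alpha_i = 0$ for non-support vectors, so

$$f(x) = \sum_{i \in \mathcal{S}} \alpha_i y_i K(x, x_i) + \beta_0,$$

where $\mathcal{S}$ is the set of support vectors: $f(x)$ depends only on the support vectors.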
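A minimal sketch of the kernel decision function that sums only over support vectors. The toy data, $\alpha = (0.5, 0.5, 0)$ and $\beta_0 = -2$ are hypothetical values worked out by hand for this example (they satisfy $0 \le \alpha_i \le C$ and $\sum_i \alpha_i y_i = 0$); the linear kernel stands in for any function with the assumed signature `kernel(a, b)`, and `tol` is an assumed threshold for treating $\alpha_i$ as zero.

```python
def linear_kernel(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def decision(x, alpha, X, y, beta0, kernel, tol=1e-8):
    """f(x) = sum over support vectors of alpha_i y_i K(x, x_i) + beta0 (alpha_i = 0 terms skipped)."""
    return sum(a * yi * kernel(x, xi) for a, yi, xi in zip(alpha, y, X) if a > tol) + beta0

X = [[3.0, 0.0], [1.0, 0.0], [5.0, 0.0]]
y = [1, -1, 1]
alpha, beta0 = [0.5, 0.5, 0.0], -2.0  # the third point is not a support vector
fx = decision([4.0, 0.0], alpha, X, y, beta0, linear_kernel)
print(fx, 1 if fx > 0 else -1)        # 2.0 1
```

Each term $\alpha_i y_i K(x, x_i)$ weights the similarity of $x$ to one support vector, which is the interpretation given on the next slide.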

SVM: $K(x, x')$ can be seen as a similarity measure between $x$ and $x'$. The decision is made essentially by a weighted sum of the similarities of the new object to all the support vectors.

SVM: Using the kernel trick can take the feature space to a very high dimension, with very many parameters. Why doesn't the method suffer from the curse of dimensionality or overfitting? Vapnik argues that the number of parameters alone, or the dimension alone, is not a true reflection of how flexible the classifier is. Compare two functions in one dimension: $f(x) = \alpha + \beta x$ and $g(x) = \sin(\alpha x)$.

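SVM: $g(x) = \sin(\alpha x)$ is a very flexible classifier in one dimension, although it has only one parameter. $f(x) = \alpha + \beta x$ can only guarantee to separate two points, whatever their labels (three points on a line labeled $+, -, +$ cannot be separated), although it has one more parameter.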

SVM: Vapnik-Chervonenkis (VC) dimension: The VC dimension of a class of classifiers $\{f(x, \alpha)\}$ is defined to be the largest number of points (in some configuration) that can be shattered by members of $\{f(x, \alpha)\}$. A set of points is said to be shattered by a class of functions if, no matter how the class labels are assigned, a member of the class can separate them perfectly.

SVM: Linear classifiers are rigid: a hyperplane classifier has VC dimension $d + 1$, where $d$ is the feature dimension.
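A brute-force illustration of $d + 1 = 3$ for lines in the plane, under stated assumptions: the perceptron algorithm is swapped in as a convenient separability test and run on every labeling of a point set, with a hypothetical epoch budget. For linearly separable labelings the perceptron is guaranteed to converge, so a triangle is shattered. Three collinear points are not, which is why the definition says "in some configuration". The XOR labeling of a square's corners is not linearly separable, so this particular set of four points is not shattered either. Showing that no four points can be shattered needs an argument over all configurations; the square is only one example.

```python
import itertools

def perceptron_separates(points, labels, max_epochs=1000):
    """Run the perceptron on 2-D points; True if it finds a line with zero training errors."""
    w1, w2, b = 0.0, 0.0, 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for (x1, x2), y in zip(points, labels):
            if y * (w1 * x1 + w2 * x2 + b) <= 0:  # misclassified or on the line
                w1, w2, b = w1 + y * x1, w2 + y * x2, b + y
                mistakes += 1
        if mistakes == 0:
            return True
    return False  # no separating line found within the epoch budget

def shattered_by_lines(points):
    """True if every +/-1 labeling of the points is realized by some line."""
    return all(perceptron_separates(points, labels)
               for labels in itertools.product([-1, 1], repeat=len(points)))

triangle = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
collinear = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
square = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
print(shattered_by_lines(triangle))   # True: 3 = d + 1 points in d = 2 dimensions
print(shattered_by_lines(collinear))  # False: labeling (-1, 1, -1) is not separable
print(shattered_by_lines(square))     # False: the XOR labeling is not separable
```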

SVM: The class $\sin(\alpha x)$ has infinite VC dimension: by an appropriate choice of $\alpha$, any number of points can be shattered. The VC dimension of the nearest neighbor classifier is also infinite, since it always classifies the training data perfectly. For many classifiers it is difficult to compute the VC dimension exactly, but this doesn't diminish its value for theoretical arguments. The VC dimension measures the complexity of a class of functions by assessing how wiggly its members can be.
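The claim about $\sin(\alpha x)$ can be checked numerically with a classical construction (assumed here, not given on the slide): place the points at $x_i = 10^{-i}$ and, for labels $y_i \in \{-1, 1\}$, choose $\alpha = \pi\big(1 + \sum_j \tfrac{1 - y_j}{2} 10^j\big)$. In $\alpha x_i$, the terms with $j > i$ are even multiples of $\pi$, the $j = i$ term adds $\pi$ exactly when $y_i = -1$, and the remaining terms add a positive amount less than $\pi$, so $\sin(\alpha x_i)$ has the sign of $y_i$. The sketch checks all $2^5$ labelings of five points; because $\alpha$ grows like $10^n$, floating-point precision limits how far this check can go.

```python
import itertools
import math

def alpha_for(labels):
    """alpha = pi * (1 + sum_i c_i * 10**i), with c_i = 1 if label i is -1, else 0."""
    return math.pi * (1 + sum((1 - yl) // 2 * 10 ** i for i, yl in enumerate(labels, start=1)))

def predict(alpha, x):
    """Classifier sign(sin(alpha * x)), mapped to {-1, 1}."""
    return 1 if math.sin(alpha * x) > 0 else -1

n = 5
points = [10.0 ** -i for i in range(1, n + 1)]
ok = all([predict(alpha_for(lab), x) for x in points] == list(lab)
         for lab in itertools.product([-1, 1], repeat=n))
print(ok)  # True: every labeling of the 5 points is realized by some alpha
```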

SVM: Strengths of SVM: flexibility; it scales well to high-dimensional data; the trade-off between complexity and error can be controlled explicitly (through $C$); and as long as a kernel can be defined, non-traditional (non-vector) data such as strings and trees can be used as input. Weakness: how to choose a good kernel? (A low-degree polynomial or radial basis function kernel can be a good start.)