SAC 306 PROJECT REPORT.


A Time Series Analysis of the Apple Inc. Stock Price.

PRESENTED BY
• Malit Keldine Owande, I07/2930/2009
• June Agnes Njeri Mwangi, I07/28782/2009
• Omondi Joseph Ochieng, I07/2931/2009
• Kyalo Lydia Mukonyo, I07/29037/2009
• Kihuni Margaret Wanjiku, I07/28988/2009
PRESENTED TO: Dr. Ivivi Mwaniki
DATE: 11th May, 2012.

Table of contents
CHAPTER ONE: INTRODUCTION
• 1.1 Background of the study
• 1.2 Research Objectives
• 1.3 Significance of the study
• 1.4 Scope of the study
CHAPTER TWO: LITERATURE REVIEW
• 2.1 Introduction
• 2.2 Methodology
• 2.2.1 ARIMA Process
• 2.2.2 Stochastic Process
• 2.2.3 Spectral Density
• 2.2.4 Measures of central tendency
• 2.2.5 Autocorrelation function
• 2.2.6 Partial Autocorrelation Function
CHAPTER THREE: DATA ANALYSIS AND INTERPRETATION
• 3.1 Introduction
• 3.2 Basic Statistics
• 3.3 Data Cleaning
• 3.4 Time Series Analysis
• 3.5 The Normal Q-Q Plot
• 3.6 Kernel Density Estimation
• 3.7 Residuals
CHAPTER FOUR:
• CONCLUSION
• REFERENCES

CHAPTER ONE: INTRODUCTION
1.1 Background of the study
• In the financial field, as in any other field, information is key to the decision-making process. Financial analysts, fund managers and individual investors must decide, among many other things, what to invest in and when to invest. These decisions are complicated by the numerous social, political and economic factors that influence the investment market, so it is nearly impossible to predict market movements with 100% certainty. A detailed scientific study helps to reduce the uncertainty, so that investors can make well-informed decisions. In our study of Apple Inc.'s stock options and stock price movements, a regression and time series analysis helps to identify the main components of the series: trend, seasonality, cyclical behaviour, and the distribution of the random (irregular) component. From that we will be able to examine the put-call parity and predict future stock prices from past data.
• Apple Inc. is an American multinational corporation that designs and sells consumer electronics, computer software, and personal computers. The company's best-known hardware products are the Macintosh line of computers, the iPod, the iPhone and the iPad.
• As of May 2012, it is the largest publicly traded company in the world by market capitalization, as well as the largest technology company in the world by revenue and profit, with worldwide annual revenue of $108 billion in 2011.
• Apple Inc. is headquartered in California. Its stock is listed on the New York Stock Exchange and is part of the S&P 500 Index, the Russell 1000 Index and the Russell 1000 Growth Stock Index (NYSE, 2011).

1.2 Research Objectives
The main objectives of the study were as follows:
• Carry out a time series analysis on a historical set of data.
• Identify the trend and cyclicality, and compute summary statistics such as the mean, median, mode, variance and the correlations between the values in the data set.
• Investigate the residuals and determine whether they follow a normal or non-normal distribution.
• Fit a time series model, produce a graphical representation of the data being analyzed, and forecast future behaviour using the information and properties obtained from the analysis of the residuals.
1.3 Significance of the study
This study of the historical share prices will help in determining the trend of the stock and in forecasting share prices. This information, coupled with some knowledge of the stock market, will give an investor more confidence in deciding whether to buy or sell the stock.
1.4 Scope of the study
The study takes a sample of 3110 observations of the stock price of Apple Inc. trading on the New York Stock Exchange. The data was observed from 1st January 2000 to 10th May 2012. The stock price used is the adjusted closing price of the stock.

CHAPTER TWO: LITERATURE REVIEW
2.1 Introduction
We referred to a number of books and websites in the study of Apple Inc. stock prices and options. These include:
• Time Series Analysis: With Applications in R by Jonathan D. Cryer and Kung-Sik Chan, which provided relevant R code for the analysis of the process.
• CT6 Statistical Methods ActEd Notes, for an in-depth understanding of the various time series models.
• Practical Regression and ANOVA using R by Julian Faraway, to help us with the code used in analyzing and fitting the data.
• http://www.marketwatch.com/optionscenter, to help us understand the operation of stock options and for the provision of data.
• http://en.wikipedia.org/wiki/Apple_Inc. and www.apple.com, for information on Apple Inc. and its stock options.
• www.finance.yahoo.com, for the historical Apple Inc. share price data.

2.2 Methodology
• Studying the autocorrelation function (ACF) and partial autocorrelation function (PACF) in order to fit an ARMA model to the data. Autocorrelation describes the correlation between values of the process at different points in time, as a function of the two times or, if the process is stationary, as a function of the time difference.
• Differencing the data to eliminate its trend, whether linear, quadratic or nonlinear.
• Studying the properties of the residuals with the help of a Q-Q plot and a histogram.
• Smoothing the data by an analysis of the trend, seasonality, cyclicality, and noise through the use of the spragga command.
• Using visual aids such as histograms, box plots and line graphs to help in our analysis.
• Studying the frequency content of the data by making use of the spectral density.

2.2.1 ARIMA Process
• ARIMA processes are mathematical models used for forecasting. The acronym ARIMA stands for "Auto-Regressive Integrated Moving Average." The ARIMA approach to forecasting is based on the following ideas:
• 1. The forecasts are based on linear functions of the sample observations;
• 2. The aim is to find the simplest model that provides an adequate description of the observed data. This is sometimes known as the principle of parsimony.
• Each ARIMA process has three parts: the autoregressive (or AR) part; the integrated (or I) part; and the moving average (or MA) part. The models are often written in shorthand as ARIMA(p, d, q), where p describes the AR part, d describes the integrated part and q describes the MA part. (Pankratz, 1983)
• AR: This part of the model describes how each observation is a function of the previous p observations. For example, if p = 1, then each observation is a function of only one previous observation. That is,
$$Y_t = c + \phi_1 Y_{t-1} + e_t$$
where $Y_t$ represents the observed value at time t, $Y_{t-1}$ represents the previous observed value at time t − 1, $e_t$ represents some random error, and c and $\phi_1$ are both constants. Other observed values of the series can be included in the right-hand side of the equation if p > 1:
$$Y_t = c + \phi_1 Y_{t-1} + \phi_2 Y_{t-2} + \cdots + \phi_p Y_{t-p} + e_t$$
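To make the AR(1) equation above concrete, the following is a minimal Python sketch (the study itself used R) that simulates the recursion. The parameter values, the Gaussian error assumption and the function name `simulate_ar1` are illustrative choices, not taken from the study.

```python
import random

def simulate_ar1(c, phi, n, sigma=1.0, seed=0):
    """Simulate Y_t = c + phi * Y_{t-1} + e_t with Gaussian errors e_t."""
    rng = random.Random(seed)
    # Start at the stationary mean c / (1 - phi), which exists when |phi| < 1.
    y_prev = c / (1.0 - phi)
    series = []
    for _ in range(n):
        y_t = c + phi * y_prev + rng.gauss(0.0, sigma)
        series.append(y_t)
        y_prev = y_t
    return series

# Hypothetical parameters: the long-run mean should be near c / (1 - phi) = 4.
series = simulate_ar1(c=2.0, phi=0.5, n=5000)
sample_mean = sum(series) / len(series)
print(round(sample_mean, 2))
```

With |φ₁| < 1 the simulated series fluctuates around c / (1 − φ₁); values of φ₁ close to 1 produce the slowly wandering behaviour typical of price series.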

• I: This part of the model determines whether the observed values are modeled directly, or whether the differences between consecutive observations are modeled instead. If d = 0, the observations are modeled directly. If d = 1, the differences between consecutive observations are modeled. If d = 2, the differences of the differences are modeled. In practice, d is rarely more than 2.
• MA: This part of the model describes how each observation is a function of the previous q errors. For example, if q = 1, then each observation is a function of only one previous error. That is,
$$Y_t = c + \theta_1 e_{t-1} + e_t$$
Here $e_t$ represents the random error at time t and $e_{t-1}$ represents the previous random error at time t − 1. Other errors can be included in the right-hand side of the equation if q > 1.
• Combining these three parts gives the diverse range of ARIMA models.
2.2.2 Stochastic Process
• The sequence of random variables $\{Y_t : t = 0, \pm 1, \pm 2, \pm 3, \ldots\}$ is called a stochastic process and serves as a model for an observed time series. The complete probabilistic structure of such a process is determined by the set of distributions of all finite collections of the Y's.

2.2.3 Spectral Density
• For estimating the spectral densities of stationary seasonal time series processes, a kernel has been proposed whose shape is in harmony with the oscillating patterns of the autocorrelation functions of typical seasonal time series. Basic properties of the spectral density estimator, such as consistency and non-negativity, are vital. (Box, 1970)
2.2.4 Measures of central tendency
• These include the mean, mode and median. Other basic statistics are the skewness, kurtosis and variance.
• The mode is the most frequently appearing value in the population or sample.
• To find the median, we arrange the observations in order from the smallest to the largest value. If there is an odd number of observations, the median is the middle value. If there is an even number of observations, the median is the average of the two middle values.
• The mean of a sample or a population is computed by adding all of the observations and dividing by the number of observations.
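The definitions above can be checked on a small example. This is a minimal Python sketch using the standard `statistics` module; the six prices are hypothetical values chosen for illustration, not data from the study.

```python
import statistics

# Hypothetical prices (not the study's data); 40.98 appears twice.
prices = [40.98, 12.50, 40.98, 85.10, 23.75, 60.20]

mean_price = statistics.mean(prices)      # sum of observations / count
median_price = statistics.median(prices)  # average of the two middle values (even count)
mode_price = statistics.mode(prices)      # most frequently appearing value
variance = statistics.variance(prices)    # sample variance (divides by n - 1)

print(mean_price, median_price, mode_price, variance)
```

Because the sample has an even number of observations, the median is the average of the third and fourth sorted values, which here are both 40.98.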

2.2.5 Autocorrelation function
• In statistics, the autocorrelation of a random process describes the correlation between values of the process at different points in time, as a function of the two times or of the time difference. Let X be some repeatable process, and i be some point in time after the start of that process. (i may be an integer for a discrete-time process or a real number for a continuous-time process.) Then $X_i$ is the value (or realization) produced by a given run of the process at time i. Suppose that the process is further known to have defined values for the mean $\mu_i$ and variance $\sigma_i^2$ for all times i. Then the definition of the autocorrelation between times s and t is
$$R(s,t) = \frac{\operatorname{E}\left[(X_t - \mu_t)(X_s - \mu_s)\right]}{\sigma_t \sigma_s}$$
where E is the expected value operator.

2.2.6 Partial Autocorrelation Function
• Given a time series $z_t$, the partial autocorrelation of lag k is the autocorrelation between $z_t$ and $z_{t+k}$ with the linear dependence of $z_{t+1}$ through to $z_{t+k-1}$ removed; equivalently, it is the autocorrelation between $z_t$ and $z_{t-k}$ that is not accounted for by lags 1 to k − 1, inclusive.
• Partial autocorrelation plots are a commonly used tool for model identification in Box-Jenkins models. Specifically, partial autocorrelations are useful in identifying the order of an autoregressive model. The partial autocorrelation of an AR(p) process is zero at lag p + 1 and greater.
• An approximate test that a given partial correlation is zero (at a 5% significance level) is given by comparing the sample partial autocorrelations against the critical region with upper and lower limits given by $\pm 1.96/\sqrt{n}$, where n is the record length (number of points) of the time series being analyzed. This approximation relies on the assumption that the record length is moderately large (say n > 30) and that the underlying process has a multivariate normal distribution.
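The ACF and PACF described in sections 2.2.5 and 2.2.6 can be sketched in a few lines of Python. This is an illustration only: the study used R, the function names are hypothetical, and the PACF is computed here with the Durbin-Levinson recursion, which is one standard way to obtain it from the autocorrelations.

```python
import math

def sample_acf(x, max_lag):
    """Sample autocorrelations r_0..r_max_lag of the series x."""
    n = len(x)
    mean = sum(x) / n
    dev = [v - mean for v in x]
    c0 = sum(d * d for d in dev)
    return [sum(dev[t] * dev[t + k] for t in range(n - k)) / c0
            for k in range(max_lag + 1)]

def pacf_from_acf(rho):
    """Partial autocorrelations from autocorrelations (Durbin-Levinson)."""
    pacf = [1.0]
    phi_prev = []  # phi_prev[j] holds the coefficient phi_{k-1, j+1}
    for k in range(1, len(rho)):
        num = rho[k] - sum(phi_prev[j] * rho[k - 1 - j] for j in range(k - 1))
        den = 1.0 - sum(phi_prev[j] * rho[j + 1] for j in range(k - 1))
        phi_kk = num / den
        phi_prev = [phi_prev[j] - phi_kk * phi_prev[k - 2 - j]
                    for j in range(k - 1)] + [phi_kk]
        pacf.append(phi_kk)
    return pacf

def white_noise_limit(n):
    """Approximate 5% limit for a zero (partial) autocorrelation."""
    return 1.96 / math.sqrt(n)

# Theoretical ACF of an AR(1) process with phi = 0.6 is rho_k = 0.6 ** k.
ar1_rho = [0.6 ** k for k in range(5)]
ar1_pacf = pacf_from_acf(ar1_rho)

# A pure linear trend has a slowly decaying sample ACF.
trend_acf = sample_acf([float(t) for t in range(50)], 3)
print(ar1_pacf, trend_acf, white_noise_limit(3109))
```

For the AR(1) input the PACF equals 0.6 at lag 1 and is zero (up to rounding) afterwards, matching the cut-off property stated above, while the trending series shows autocorrelations that stay close to 1.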

CHAPTER THREE: DATA ANALYSIS AND INTERPRETATION
3.1 Introduction
• This section describes how the study was conducted. It explains the sample used, the time series analysis methods applied, and how the data was analyzed to produce the information and graphical representations required for the study.
3.2 Apple Inc.'s Stock Options: Put-call parity analysis
1. Results of the estimates using R for stock options with a maturity of less than 100 days

1.1 LINEAR MODEL (c = B0 + B1p + B2k)
Estimation of r
• Given that the estimate of B2 is -0.9983, the estimated r is 0.03653106.
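The slides do not state how r was obtained from B2. One reading consistent with put-call parity, c − p = S − K·e^(−rT), is that the coefficient on the strike price estimates −e^(−rT), so that r = −ln(−B2)/T. The Python sketch below illustrates that reading only; the maturity T of 17 days on a 365-day year is a hypothetical value that is not given in the source, and the function name is illustrative.

```python
import math

def implied_rate(beta2, maturity_years):
    """Solve beta2 = -exp(-r * T) for r (assumed put-call parity reading)."""
    return -math.log(-beta2) / maturity_years

# beta2 is the reported estimate; T = 17/365 is an assumption, not from the slides.
r_short = implied_rate(-0.9983, 17 / 365)
print(round(r_short, 4))
```

Under this assumed maturity the formula gives a value of about 0.0365, close to the reported estimate; a strike coefficient below −1, as in the longer-maturity sample later in the report, gives a negative r under the same formula.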

1.2 QUADRATIC MODEL (c = B0 + B1k + B2k²)
Residuals:
Coefficients:

• Residual standard error: 10.49 on 130 degrees of freedom
• Multiple R-squared: 0.9913
• Adjusted R-squared: 0.9912
• F-statistic: 7406 on 2 and 130 DF
• p-value: < 2.2e-16
• The resulting quadratic fit is $c = 707.0 - 1.876k + 0.001219k^2$
Figure 1.2 Plot of the quadratic model of call price against strike price

1.2.1 HYPOTHESIS TEST
• To test the hypothesis H0: B2 = 0 vs H1: B2 ≠ 0, we consider the p-value of the test statistic for B2.
• This p-value is very small (< 2.2e-16), as expected from the large corresponding t-value (54.44), so the test is significant and we reject H0. Rejecting H0 implies that B2 ≠ 0, i.e. the quadratic term is significant and it is better to use the quadratic fit than the linear fit.
1.2.2 COEFFICIENT OF DETERMINATION (R²)
• The adjusted R-squared is 0.9912, implying that 99.12% of the total variability is explained by the model and only 0.88% is left unexplained (error).
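As an illustration of the quadratic fit and the R-squared figures discussed above, here is a minimal Python sketch that fits c = B0 + B1k + B2k² by least squares through the normal equations. The strike and call values are synthetic (an exact quadratic, with strikes in hypothetical units), and all function names are illustrative; the study's own estimates came from R.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def fit_quadratic(k, c):
    """Least-squares estimates [b0, b1, b2] for c = b0 + b1*k + b2*k^2."""
    s = [sum(v ** p for v in k) for p in range(5)]                # sums of k^0..k^4
    t = [sum(ci * v ** p for v, ci in zip(k, c)) for p in range(3)]
    return solve_linear([[s[i + j] for j in range(3)] for i in range(3)], t)

def r_squared(k, c, beta):
    """Proportion of the variability in c explained by the fitted quadratic."""
    fitted = [beta[0] + beta[1] * v + beta[2] * v * v for v in k]
    mean_c = sum(c) / len(c)
    ss_res = sum((ci - fi) ** 2 for ci, fi in zip(c, fitted))
    ss_tot = sum((ci - mean_c) ** 2 for ci in c)
    return 1.0 - ss_res / ss_tot

def adjusted_r2(r2, n, p):
    """Adjusted R-squared for n observations and p predictors."""
    return 1.0 - (1.0 - r2) * (n - 1) / (n - p - 1)

# Synthetic data: an exact quadratic in hypothetical strike units.
strikes = [float(v) for v in range(1, 11)]
calls = [7.0 - 1.9 * v + 0.12 * v * v for v in strikes]
beta = fit_quadratic(strikes, calls)
r2 = r_squared(strikes, calls, beta)
print(beta, r2, adjusted_r2(r2, len(strikes), 2))
```

As a consistency check on the reported summary, 130 residual degrees of freedom with three coefficients implies n = 133, and `adjusted_r2(0.9913, 133, 2)` rounds to 0.9912.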

1.3 THE (Y = B0 + B1X1 + B2X2 + e) MODEL
Coefficients:
The summary is as follows:
• Residual standard error: 0.01843 on 130 degrees of freedom
• Multiple R-squared: 0.9913
• Adjusted R-squared: 0.9912
1.3.1 COEFFICIENT OF DETERMINATION (R²)
• The adjusted R-squared is 0.9912, implying that 99.12% of the total variability is explained by the model, which means that only 0.88% is not explained. Therefore our (Y = B0 + B1X1 + B2X2 + e) model is y2 = 1.2421119 + (-1.875733*x) + (0.0012193*x1)

Figure 1.3 Superimposed plot of call price against strike price comparing the quadratic model and the Y = B0 + B1X1 + B2X2 + e model
The above graph (call value against strike price) compares the (Y = B0 + B1X1 + B2X2 + e) model (red) with the quadratic model (blue).

1.4 BLACK-SCHOLES MODEL
Figure 1.4.1 Plot of the Black-Scholes model of call price against strike price

Figure 1.4.2 Superimposed plot of the Black-Scholes, quadratic, and Y = B0 + B1X1 + B2X2 + e models of call price against strike price
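The slides plot Black-Scholes call prices against strike price without showing the formula. For reference, a minimal Python sketch of the standard Black-Scholes price of a European call on a non-dividend-paying stock is given below. All input values are hypothetical, and the slides do not say which inputs (volatility, rate, maturity) the authors used.

```python
import math

def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def black_scholes_call(s, k, r, sigma, t):
    """European call price: spot s, strike k, rate r, volatility sigma, maturity t (years)."""
    d1 = (math.log(s / k) + (r + 0.5 * sigma ** 2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return s * norm_cdf(d1) - k * math.exp(-r * t) * norm_cdf(d2)

# Hypothetical inputs: call price as a function of strike, as in the figures.
call_at_the_money = black_scholes_call(s=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0)
call_by_strike = [black_scholes_call(100.0, k, 0.05, 0.2, 1.0) for k in (80.0, 100.0, 120.0)]
print(round(call_at_the_money, 4))  # approximately 10.45
```

The call price falls as the strike rises, which is the downward-sloping shape that the fitted linear and quadratic models are approximating.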

2. Results of the estimates using R for stock options with a maturity of more than 100 days
1.1 LINEAR MODEL (c = B0 + B1p + B2k)
Estimation of r
• Given that the estimate of B2 is -1.004, the estimated r is -0.007065742.

1.2 QUADRATIC MODEL (c = B0 + B1k + B2k²)
Residuals:
Coefficients:

• Residual standard error: 4.313 on 148 degrees of freedom
• Multiple R-squared: 0.9989
• Adjusted R-squared: 0.9989
• F-statistic: 7.004e+04 on 2 and 148 DF
• p-value: < 2.2e-16
• The resulting quadratic fit is $c = 617.2 - 1.47k + 0.0008762k^2$
Figure 1.2 Plot of the quadratic model of call price against strike price

1.2.1 HYPOTHESIS TEST
• To test the hypothesis H0: B2 = 0 vs H1: B2 ≠ 0, we consider the p-value of the test statistic for B2.
• This p-value is very small (< 2.2e-16), as expected from the large corresponding t-value (124.9), so the test is significant and we reject H0. Rejecting H0 implies that B2 ≠ 0, i.e. the quadratic term is significant and it is better to use the quadratic fit than the linear fit.
1.2.2 COEFFICIENT OF DETERMINATION (R²)
• The adjusted R-squared is 0.9989, implying that 99.89% of the total variability is explained by the model and only 0.11% is left unexplained (error).

1.3 THE (Y = B0 + B1X1 + B2X2 + e) MODEL
Coefficients:
The summary is as follows:
• Residual standard error: 0.01965 on 148 degrees of freedom
• Multiple R-squared: 0.9932
• Adjusted R-squared: 0.9931
1.3.1 COEFFICIENT OF DETERMINATION (R²)
• The adjusted R-squared is 0.9931, implying that 99.31% of the total variability is explained by the model, which means that only 0.69% is not explained. Therefore our (Y = B0 + B1X1 + B2X2 + e) model is y2 = 0.9496 + (-0.9011*x) + (4.449e-10*x1)

Figure 1.3 Superimposed plot of call price against strike price comparing the quadratic model and the Y = B0 + B1X1 + B2X2 + e model
The above graph (call value against strike price) compares the (Y = B0 + B1X1 + B2X2 + e) model (red) with the quadratic model (blue).

1.4 BLACK-SCHOLES MODEL
Figure 1.4.1 Plot of the Black-Scholes model of call price against strike price

Figure 1.4.2 Superimposed plot of the Black-Scholes, quadratic, and Y = B0 + B1X1 + B2X2 + e models of call price against strike price

3.4 Basic Statistics
• The stock prices fluctuated between a minimum price of $6.56 and a maximum price of $636.23. There are 3109 observations. Using R, we obtained the following information:
• The standard deviation of the data is 8.391015. The mode of the data is 40.98.
• Kurtosis is a measure of whether the data are peaked or flat relative to a normal distribution. The kurtosis of the data is -0.23948.
• Skewness is a measure of the symmetry of the data. The skewness of the data is 0.728988. This positive skewness shows that the tail on the right side is longer than that on the left and that most of the values lie on the left, as indicated on the histogram.
3.3 Data Cleaning
• Data cleaning refers to the process used to identify inaccurate, incomplete, or unreasonable data and then improve its quality through the correction of detected errors and omissions. The process may include format checks, completeness checks, reasonableness checks, limit checks, review of the data to identify outliers or other errors, and assessment of the data. These processes usually result in flagging, documenting and subsequent checking and correction of suspect records.
• Data cleaning was done by the use of histograms and box plots as shown below:

Figure 3.1 Histogram of the movement of prices for the past 13 years

As shown in the histogram, figure 3.1, most values lie on the left and the data has a positive skewness.
• The spread of the data can be better observed in the box plot.
• A box plot, or box-and-whisker diagram, is ideal for identifying any outliers. Since our data set is obtained from real observations of the stock market and all the recordings are genuine, we retain the outliers in the analysis. This is because many factors affect the stock market, and a sudden dip or peak in the market is a real effect that our analysis must consider.
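The skewness, kurtosis and box-plot checks described above can be sketched as follows. This is a minimal Python illustration on hypothetical data, not the study's R code; it uses moment-based skewness and excess kurtosis and the usual 1.5 × IQR box-plot fences. R packages differ slightly in their kurtosis and quantile conventions, so values need not match R output exactly.

```python
import statistics

def central_moment(x, order):
    """Central moment of the given order (divides by n)."""
    mean = sum(x) / len(x)
    return sum((v - mean) ** order for v in x) / len(x)

def skewness(x):
    """Moment-based skewness; positive means a longer right tail."""
    return central_moment(x, 3) / central_moment(x, 2) ** 1.5

def excess_kurtosis(x):
    """Kurtosis relative to the normal distribution (normal gives 0)."""
    return central_moment(x, 4) / central_moment(x, 2) ** 2 - 3.0

def boxplot_outliers(x):
    """Values beyond 1.5 * IQR from the quartiles, as flagged on a box plot."""
    q1, _, q3 = statistics.quantiles(x, n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [v for v in x if v < low or v > high]

# Hypothetical right-skewed sample with one extreme value.
sample = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 100.0]
print(skewness(sample), excess_kurtosis(sample), boxplot_outliers(sample))
```

In this sketch the flagged value is reported rather than deleted, in line with the decision above to keep genuine market observations in the analysis.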

3.4 Time Series Analysis
• Our data is obtained from real observations of the New York Stock Exchange, which trades Monday through Friday between 9.30am and 4.00pm, with holiday exceptions. The purpose of time series analysis is generally twofold: to understand or model the stochastic mechanism that gives rise to an observed series, and to predict or forecast the future values of a series based on the history of that series and, possibly, other related series or factors.
• Using R we were able to construct a time plot of our findings, as in figure 3.3.

Figure 3.3 Graph of the movement of the adjusted closing price over a 12-year period

The red line indicates the mean of the distribution of the prices. From the time series plot we can see the fluctuation of the stock price, which shows a general rise.
• The ACF and PACF of our time series are shown below.
Figure 3.4 The ACF of Apple Inc. stock price

The ACF declines gradually as the lag increases, indicating the presence of a trend. We can therefore infer that the observations are not independent and that the series is not stationary.
• The PACF, as shown below, is consistent with the existence of a trend.
Figure 3.5 PACF of Apple Inc. stock price

Before performing any further operations on our data we need to make sure it is stationary (a stationary time series has a mean and variance that are constant over time).
• A stationarized series is relatively easy to predict: we simply predict that its statistical properties will be the same in the future as they have been in the past. The predictions for the stationarized series can then be "untransformed," by reversing whatever mathematical transformations were previously used, to obtain predictions for the original series. Thus, finding the sequence of transformations needed to stationarize a time series often provides important clues in the search for an appropriate forecasting model.
• We begin by taking logarithms of our data to remove any non-linearity, and then obtain the first difference of the logged data.
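The log-and-difference transformation, and the "untransforming" step that recovers the original scale, can be sketched as follows. This is a minimal Python illustration with hypothetical prices and illustrative function names; the study performed these steps in R.

```python
import math

def difference(x):
    """First difference: x[t] - x[t-1]."""
    return [b - a for a, b in zip(x, x[1:])]

def log_diff(prices):
    """First difference of the logged prices (log returns)."""
    return difference([math.log(p) for p in prices])

def undo_log_diff(first_price, log_returns):
    """Reverse the transformation: cumulate log returns, then exponentiate."""
    level = math.log(first_price)
    prices = [first_price]
    for r in log_returns:
        level += r
        prices.append(math.exp(level))
    return prices

# Hypothetical prices growing by 10% per step.
prices = [10.0, 11.0, 12.1, 13.31]
returns = log_diff(prices)            # constant, about log(1.1)
second_diff = difference(returns)     # second difference of the logged data
recovered = undo_log_diff(prices[0], returns)
print(returns, second_diff, recovered)
```

Each differencing step shortens the series by one observation, and forecasts made on the differenced scale must be cumulated and exponentiated, as in `undo_log_diff`, before they can be read as prices.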

From our ACF and PACF it is evident that our data is not yet stationary, as the pattern in the ACF does not show gradual exponential decay. We therefore need to take the second difference of our logged data.
• The second difference gives us stationary data.

Figure 3.10 ACF of second differenced logged data (Stationary data)

Figure 3.11 PACF of second differenced logged data (Stationary data)

From our PACF it is evident that our data is now stationary, as shown by the exponential decay in the PACF correlogram and the spike at lag 1 in the ACF correlogram.
Figure 3.12 Histogram of stationary data

Figure 3.13 Box plot of detrended data
• The box plot of our new detrended data shows the spread of the stationary data.

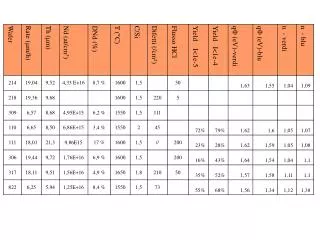

Fitting the ARIMA(p, d, q) model for the Apple Inc. stock price
• We then estimate the order of our ARIMA model by trying different numbers of terms (orders of the process) and determining which works best. One way of doing this is to choose the number of terms that produces the lowest AIC (Akaike Information Criterion) value.
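The selection rule described above can be sketched in Python. The AIC is 2k − 2 ln L, where k is the number of estimated parameters and L is the maximized likelihood. The log-likelihoods and parameter counts below are hypothetical placeholders, not the values from the study's table; in practice they would come from fitting each candidate model.

```python
def aic(log_likelihood, n_params):
    """Akaike Information Criterion: 2k - 2 ln L (lower is better)."""
    return 2 * n_params - 2 * log_likelihood

# Hypothetical fits: order (p, d, q) -> (maximized log-likelihood, parameter count).
candidates = {
    (1, 2, 0): (-105.0, 2),
    (0, 2, 1): (-103.5, 2),
    (0, 2, 3): (-100.0, 4),
    (2, 2, 2): (-99.8, 5),
}

aic_by_order = {order: aic(ll, k) for order, (ll, k) in candidates.items()}
best_order = min(aic_by_order, key=aic_by_order.get)
print(best_order, aic_by_order[best_order])
```

Note how the largest model has the highest log-likelihood but is not selected: the 2k penalty is what enforces the principle of parsimony.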

As shown in the table above, the best-fitting model, the one that gives the lowest variance, is the ARIMA(0, 2, 3) model.
• The results are as follows: