Multiplicative Weights Update Method

Boaz Kaminer, Andrey Dolgin. Based on: Arora S., Hazan E. and Kale S., “The Multiplicative Weights Update Method: a Meta Algorithm and Applications”. Unpublished, 2007.



Motivation • Meta-Algorithm • Many Applications • Useful Approximations Proofs

Content • Basic Algorithm – Weighted Majority • Generalized Weighted Majority Algorithm • Applications: • General Guidelines • Linear Programming Approximations • Zero-Sum Games Approximations • Machine Learning • Summary and Conclusions

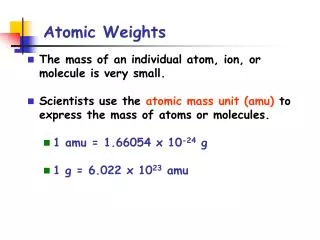

The Basic Algorithm – Weighted Majority • n ‘experts’, each giving its own prediction • At each iteration, the weight of each ‘expert’ is updated according to whether its prediction was correct. N. Littlestone and M. K. Warmuth. The Weighted Majority Algorithm. Information and Computation, 108(2):212–261, 1994.
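A minimal Python sketch of the weighted-majority scheme on this slide. The slide does not spell out the update factor, so the sketch assumes the rule used in the proof of Theorem #1 (a wrong expert's weight is multiplied by 1 − ε) and binary predictions. The name `weighted_majority` and its arguments are illustrative and do not come from the original slides.

```python
def weighted_majority(predictions, outcomes, eps=0.5):
    """Run weighted majority on binary predictions.

    predictions[t][i] in {0, 1} is expert i's prediction in round t,
    outcomes[t] in {0, 1} is the true answer of round t.
    Returns (mistakes of the algorithm, list of mistakes per expert).
    Assumption: a wrong expert's weight is multiplied by (1 - eps).
    """
    n = len(predictions[0])
    weights = [1.0] * n              # every expert starts with weight 1
    algo_mistakes = 0
    expert_mistakes = [0] * n
    for preds, truth in zip(predictions, outcomes):
        # Predict 1 if experts holding at least half the weight say 1.
        weight_for_one = sum(w for w, p in zip(weights, preds) if p == 1)
        guess = 1 if weight_for_one >= sum(weights) / 2 else 0
        if guess != truth:
            algo_mistakes += 1
        # Penalize every expert that was wrong in this round.
        for i, p in enumerate(preds):
            if p != truth:
                weights[i] *= (1 - eps)
                expert_mistakes[i] += 1
    return algo_mistakes, expert_mistakes
```

With this rule, each mistake of the algorithm means at least half of the total weight was on wrong experts, which is the fact the potential-function proof relies on.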

Approximation Theorem #1 • Theorem #1: After t steps, let $m_i^t$ be the number of mistakes of ‘expert’ i and $m^t$ the number of mistakes the entire algorithm has made. Then, with n the number of ‘experts’, the following bound holds for every i: $m^t \le \frac{2\ln n}{\varepsilon} + 2(1+\varepsilon)\, m_i^t$

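Proof of Theorem #1 • Induction: the weights satisfy $w_i^t = (1-\varepsilon)^{m_i^t}$ • Define the potential function: $\Phi^t = \sum_i w_i^t$, so $\Phi^1 = n$ • On each mistake of the algorithm, at least half of the total weight decreases by a factor of $1-\varepsilon$, so the potential function decreases by a factor of at least $1-\varepsilon/2$: $\Phi^{t+1} \le \Phi^t\left(\tfrac12 + \tfrac12(1-\varepsilon)\right) = \Phi^t(1-\varepsilon/2)$ • Induction: $\Phi^t \le n(1-\varepsilon/2)^{m^t}$ • Since $\Phi^t \ge w_i^t$ for every i: $(1-\varepsilon)^{m_i^t} \le n(1-\varepsilon/2)^{m^t}$ ⇒ $m^t \ln\frac{1}{1-\varepsilon/2} \le \ln n + m_i^t \ln\frac{1}{1-\varepsilon}$ • Using $x \le -\ln(1-x) \le x + x^2$ for $0 \le x \le \tfrac12$, this gives $m^t \le \frac{2\ln n}{\varepsilon} + 2(1+\varepsilon)\, m_i^t$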

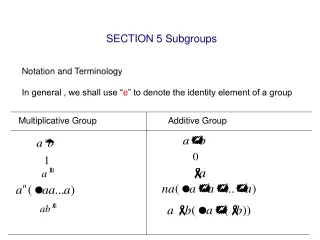

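The Generalized Algorithm • Denote by P the set of events/outcomes • Assume a matrix M, where M(i,j) is the penalty of ‘expert’ i for outcome j ∈ P, with $M(i,j) \in [-\ell, \rho]$ and $\ell \le \rho$ • Following Arora, Hazan and Kale, the weights start at $w_i^1 = 1$ and, after outcome $j^t$, are updated by $w_i^{t+1} = w_i^t (1-\varepsilon)^{M(i,j^t)/\rho}$ if $M(i,j^t) \ge 0$ and by $w_i^{t+1} = w_i^t (1+\varepsilon)^{-M(i,j^t)/\rho}$ if $M(i,j^t) < 0$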

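The Generalized Algorithm – cont. • The expected penalty for outcome $j^t$ under the distribution $D^t$ with $p_i^t = w_i^t / \sum_k w_k^t$: $M(D^t, j^t) = \sum_i p_i^t\, M(i,j^t) = \frac{\sum_i w_i^t M(i,j^t)}{\sum_i w_i^t}$ • The total loss after T rounds is $\sum_{t=1}^{T} M(D^t, j^t)$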
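A short Python sketch of the generalized algorithm, for orientation only. It assumes the update rule of the cited Arora, Hazan and Kale paper (multiply by $(1-\varepsilon)^{M/\rho}$ for non-negative penalties and by $(1+\varepsilon)^{-M/\rho}$ for negative ones) and penalties in $[-\rho, \rho]$. The function name, the list-of-lists matrix layout and the `events` argument are illustrative choices.

```python
def multiplicative_weights(M, events, eps=0.25, rho=1.0):
    """Multiplicative weights update over a fixed sequence of outcomes.

    M[i][j] is the penalty of expert i for outcome j, assumed in [-rho, rho].
    events is the sequence of outcome indices j^1, ..., j^T.
    Returns (total expected loss sum_t M(D^t, j^t), final weights).
    """
    n = len(M)
    w = [1.0] * n                                  # w_i^1 = 1
    total_expected = 0.0
    for j in events:
        s = sum(w)
        D = [wi / s for wi in w]                   # distribution D^t
        total_expected += sum(D[i] * M[i][j] for i in range(n))
        for i in range(n):
            m = M[i][j] / rho                      # scaled penalty in [-1, 1]
            if m >= 0:
                w[i] *= (1 - eps) ** m             # shrink penalized experts
            else:
                w[i] *= (1 + eps) ** (-m)          # grow rewarded experts
    return total_expected, w
```

The returned total can be compared with any single expert's accumulated penalty to check the bound of Theorem #2 on a concrete instance.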

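Approximation Theorem #2 • Theorem #2: Let $\varepsilon \le \tfrac12$ and let the penalties satisfy $M(i,j) \in [-\ell, \rho]$. After T rounds, for every ‘expert’ i: $\sum_{t=1}^{T} M(D^t,j^t) \le \frac{\rho \ln n}{\varepsilon} + (1+\varepsilon)\sum_{t:\,M(i,j^t)\ge 0} M(i,j^t) + (1-\varepsilon)\sum_{t:\,M(i,j^t)<0} M(i,j^t)$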

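Theorem #2 - Proof • Proof: • Define the potential function: $\Phi^t = \sum_i w_i^t$ • Based on convexity of the exponential function: • $(1-\varepsilon)^x \le 1-\varepsilon x$ for $x \in [0,1]$, • $(1+\varepsilon)^{-x} \le 1-\varepsilon x$ for $x \in [-1,0]$ • Hence $\Phi^{t+1} \le \sum_i w_i^t \left(1 - \varepsilon M(i,j^t)/\rho\right) = \Phi^t \left(1 - \varepsilon M(D^t,j^t)/\rho\right) \le \Phi^t e^{-\varepsilon M(D^t,j^t)/\rho}$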

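Proof of Theorem #2 – cont. • Theorem #2: for $\varepsilon \le \tfrac12$, after T rounds, for every ‘expert’ i: $\sum_t M(D^t,j^t) \le \frac{\rho\ln n}{\varepsilon} + (1+\varepsilon)\sum_{\ge 0} M(i,j^t) + (1-\varepsilon)\sum_{<0} M(i,j^t)$, where $\sum_{\ge 0}$ and $\sum_{<0}$ run over the rounds t with $M(i,j^t) \ge 0$ and $M(i,j^t) < 0$ respectively • Proof - cont: • After T rounds: $\Phi^{T+1} \le \Phi^1 e^{-\varepsilon \sum_t M(D^t,j^t)/\rho} = n\, e^{-\varepsilon \sum_t M(D^t,j^t)/\rho}$ • For every i: $\Phi^{T+1} \ge w_i^{T+1} = (1-\varepsilon)^{\sum_{\ge 0} M(i,j^t)/\rho}\,(1+\varepsilon)^{-\sum_{<0} M(i,j^t)/\rho}$ • Use: $\ln\frac{1}{1-\varepsilon} \le \varepsilon + \varepsilon^2$ and $\ln(1+\varepsilon) \ge \varepsilon - \varepsilon^2$ for $\varepsilon \le \tfrac12$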

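Corollaries: • Another possible weights update rule: • For the error parameter $\delta > 0$ • #3: With $\varepsilon \le \min\{\delta/(4\ell), 1/2\}$, after $T = 2\rho\ln(n)/(\varepsilon\delta)$ rounds, the following bound on the average expected loss holds for every i: $\frac{1}{T}\sum_t M(D^t,j^t) \le \delta + (1\pm\varepsilon)\,\frac{1}{T}\sum_t M(i,j^t)$, where the sign is + for rounds with $M(i,j^t) \ge 0$ and − for rounds with $M(i,j^t) < 0$ • #4: With $\varepsilon = \min\{\delta/(4\rho), 1/2\}$ and penalties in $[-\rho, \rho]$, after $T = 16\rho^2\ln(n)/\delta^2$ rounds, the following bound on the average expected loss holds for every i: $\frac{1}{T}\sum_t M(D^t,j^t) \le \delta + \frac{1}{T}\sum_t M(i,j^t)$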
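As a worked example of the arithmetic in the corollaries, the helper below computes the step size and the number of rounds. It assumes the parameter choice $\varepsilon = \min\{\delta/(4\rho), 1/2\}$ and $T = 16\rho^2\ln(n)/\delta^2$ from the cited paper; the function name `mw_parameters` is illustrative, and T is rounded up to an integer.

```python
import math

def mw_parameters(delta, rho, n):
    """Step size eps and round count T for additive error delta.

    Assumes penalties in [-rho, rho] and n experts.
    """
    eps = min(delta / (4 * rho), 0.5)
    T = math.ceil(16 * rho ** 2 * math.log(n) / delta ** 2)
    return eps, T
```

For instance, `mw_parameters(0.1, 1.0, 10)` gives ε = 0.025 and T = 3685, which shows how quickly the round count grows as δ shrinks.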

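Gains instead of losses: • In some situations, the entries of the matrix M may specify gains instead of losses. • Now our goal is to gain as much as possible in comparison to the gain of the best expert. • We can get an algorithm for this case simply by considering the matrix M’ = −M instead of M. • The weight of ‘expert’ i is then multiplied by $(1+\varepsilon)^{M(i,j)/\rho}$ when $M(i,j) \ge 0$ • and by $(1-\varepsilon)^{-M(i,j)/\rho}$ when $M(i,j) < 0$ • #5: After T rounds, for every ‘expert’ i: $\sum_t M(D^t,j^t) \ge -\frac{\rho\ln n}{\varepsilon} + (1-\varepsilon)\sum_{t:\,M(i,j^t)\ge 0} M(i,j^t) + (1+\varepsilon)\sum_{t:\,M(i,j^t)<0} M(i,j^t)$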

Applications • General guidelines • Approximately Solving Linear Programming • Approximately Solving Zero-Sum games • Machine Learning

General Guidelines for Applications • Let each ‘expert’ represent a constraint • The penalty for an ‘expert’ is proportional to how well its constraint is satisfied by the current event • As a result, the weights concentrate on ‘experts’ whose constraints are poorly satisfied

Linear Programming (LP) • Consider the following LP: $Ax \ge b$, $x \in P$ • Consider $c = \sum_i p_i A_i$, $d = \sum_i p_i b_i$ for some distribution $p_1, p_2, \dots, p_m$, and find $x \in P$ with $c^T x \ge d$ • (Lagrangian relaxation): solve $c^T x \ge d$ instead of $Ax \ge b$ • We only need to check the feasibility of one constraint rather than m! • Using this “oracle”, the following algorithm either: • yields an approximately feasible solution, i.e. $A_i x \ge b_i - \delta$ for some small $\delta > 0$ • or, failing that, proves that the system is infeasible. S. A. Plotkin, D. B. Shmoys, and E. Tardos. Fast approximation algorithm for fractional packing and covering problems. In Proceedings of the 32nd Annual IEEE Symposium on Foundations of Computer Science, 1991, pp. 495–504.

General Framework for LP • Each “expert” represents one of the m constraints. • The penalty of the “expert” corresponding to constraint i for event x is $A_i x - b_i$. • Assume that the oracle’s responses $x \in P$ satisfy $A_i x - b_i \in [-\rho, \rho]$ for all i, for some known parameter $\rho$. • Thus the penalties lie in $[-\rho, \rho]$. • Run the Multiplicative Weights Update algorithm for T steps, using $\varepsilon = \delta/(4\rho)$. • The answer is: $\bar{x} = \frac{1}{T}\sum_{t=1}^{T} x^t$

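Algorithm Results for LP • If the oracle returns a feasible x for $c^T x \ge d$ in every iteration, then for every i: $0 \le \frac{1}{T}\sum_t \sum_j p_j^t (A_j x^t - b_j) \le \delta + \frac{1}{T}\sum_t (A_i x^t - b_i) = \delta + A_i \bar{x} - b_i$, where $\bar{x} = \frac{1}{T}\sum_t x^t$ is the average of the oracle’s responses • Thus we have an approximately feasible solution: $A_i \bar{x} \ge b_i - \delta$ • Otherwise, in some iteration the oracle declares infeasibility of $c^T x \ge d$. In this case, we conclude that the original system $Ax \ge b$ is infeasible. • The number of iterations T is proportional to $\rho^2$.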
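The LP framework can be sketched in Python on a toy instance. To keep the oracle trivial, the sketch assumes P is the probability simplex, so maximizing $c^T x$ over P just picks the largest coordinate of c. That choice of P, the function name `mw_lp_feasibility`, and the width computed as $\max_{i,j} |A_{ij} - b_i|$ are all assumptions made for illustration. It also assumes the update rule and parameters of the cited paper.

```python
import math

def mw_lp_feasibility(A, b, delta):
    """Look for x in the simplex with A x >= b - delta (componentwise).

    Returns the averaged oracle answers, or None if some combined
    constraint c^T x >= d has no solution in the simplex.
    Assumes at least one entry A[i][j] differs from b[i] (so rho > 0).
    """
    m, n = len(A), len(A[0])
    rho = max(abs(A[i][j] - b[i]) for i in range(m) for j in range(n))
    eps = min(delta / (4 * rho), 0.5)
    T = max(1, math.ceil(16 * rho ** 2 * math.log(m) / delta ** 2))
    w = [1.0] * m                       # one expert per constraint
    x_sum = [0.0] * n
    for _ in range(T):
        s = sum(w)
        p = [wi / s for wi in w]
        # Single combined constraint c^T x >= d.
        c = [sum(p[i] * A[i][j] for i in range(m)) for j in range(n)]
        d = sum(p[i] * b[i] for i in range(m))
        # Oracle over the simplex: the best vertex maximizes c^T x.
        j_best = max(range(n), key=lambda j: c[j])
        if c[j_best] < d - 1e-12:
            return None                 # combined constraint is infeasible
        x_sum[j_best] += 1.0
        for i in range(m):
            pen = (A[i][j_best] - b[i]) / rho   # penalty A_i x - b_i, scaled
            w[i] *= (1 - eps) ** pen if pen >= 0 else (1 + eps) ** (-pen)
    return [v / T for v in x_sum]
```

On `A = [[1, 0], [0, 1]]`, `b = [0.5, 0.5]` the oracle alternates between the two vertices and the average approaches (0.5, 0.5); raising `b` to `[0.6, 0.6]` makes the very first combined constraint infeasible, so the function returns `None`.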

Packing and Covering Problems • Set packing: Suppose we have a finite set S and a list of subsets of S. The set packing problem asks whether some k subsets in the list are pairwise disjoint (in other words, no two of them intersect). Constraint: $\sum_i x_i \le 1$ (summed over the subsets containing a given element) • Set covering: Given a universe U and a family S of subsets of U, a cover C is a subfamily $C \subseteq S$ of sets whose union is U. Constraint: $\sum_i x_i \ge 1$ (summed over the subsets containing a given element)

Fractional Set Covering / Fractional Set Packing • For an error parameter $\varepsilon > 0$, a point x is an approximate solution to: • the packing problem if $Ax \le (1+\varepsilon)b$ • the covering problem if $Ax \ge (1-\varepsilon)b$ • Reminder: the running time depends on $\varepsilon^{-1}$ and on the width $\rho = \max_i \{\max_{x \in P} (a_i x / b_i)\}$

Multi-commodity Flow Problems • The multi-commodity flow problem is a network flow problem with multiple commodities (or goods) flowing through the network, with different source and sink nodes: • Capacity constraints • Flow conservation constraints • Demand satisfaction constraints [Figure: network with sources S1, S2 and sinks D1, D2]

Multi-commodity Flow Problems – cont. • Multi-commodity flow problems are represented by packing/covering LPs and thus can be approximately solved using the framework outlined above • LP formulation: $\max \sum_p f_p$, s.t. $\sum_{p \ni e} f_p \le c_e \;\; \forall e$ • Solving K shortest-path problems, one for each commodity, in each iteration • Unfortunately, the running time depends upon the edge capacities (as opposed to the logarithm of the capacities) and thus the algorithm is not even polynomial-time • A modification: • Weight the edges ($w_e$) • The “event” consists of routing only as much flow as is allowed by the minimum-capacity edge on the path ($c_{p^t}$) • The penalty incurred by edge e on the path is $M(e, p^t) = c_{p^t}/c_e$ • Start with $w_e^1 = \delta$, update $w_e^{t+1} = w_e^t (1+\varepsilon)^{c_{p^t}/c_e}$, and terminate when $w_e^T \ge 1$

Applications in Approximating Zero-Sum Game Solutions • Approximating the zero-sum game value $\lambda^* = \min_D \max_j M(D,j)$, where the row player pays M(i,j) to the column player: • Let $\delta > 0$ be an error parameter. We wish to find mixed row and column strategies $D_{final}$, $P_{final}$ such that: $\lambda^* - \delta \le \min_i M(i, P_{final})$ and $\max_j M(D_{final}, j) \le \lambda^* + \delta$ • Each “expert” corresponds to a single pure row strategy • Thus a distribution on the experts corresponds to a mixed row strategy. Y. Freund and R. E. Schapire. Adaptive game playing using multiplicative weights. Games and Economic Behavior, 29:79–103, 1999.

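Applications in Approximating Zero-Sum Game Solutions – cont. • Set: $\varepsilon = \delta/4$ • Run for $T = 16\ln(n)/\delta^2$ iterations, where in round t the column player answers $D^t$ with a best response $j^t$ • For any D (mixed strategy distribution): $\frac{1}{T}\sum_t M(D^t,j^t) \le \delta + \frac{1}{T}\sum_t M(D,j^t)$ • Specifically for the optimal $D^*$: $M(D^*,j) \le \lambda^*$ for any j • Thus you get a δ-approximation to the game value: $\lambda^* \le \frac{1}{T}\sum_t M(D^t,j^t) \le \lambda^* + \delta$ • For the mixed strategy that reached the best result, $D_{final} = D^{t^*}$ with $t^* = \arg\min_t M(D^t,j^t)$: $M(D_{final}, j) \le \lambda^* + \delta$ for any j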
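A Python sketch of this procedure, under stated assumptions: payoffs M[i][j] lie in [−1, 1] and are paid by the row player to the column player, the column player always answers with a best response, and the update rule is the one from the cited paper with ρ = 1. The function name `approx_game_value` is illustrative.

```python
import math

def approx_game_value(M, delta):
    """Approximate the value of a zero-sum game with payoffs in [-1, 1].

    Rows are the experts; the row player wants to minimize M.
    Returns (average payoff over the rounds, best row strategy found).
    """
    n, k = len(M), len(M[0])
    eps = min(delta / 4, 0.5)
    T = max(1, math.ceil(16 * math.log(n) / delta ** 2))
    w = [1.0] * n
    total = 0.0
    best_val, best_D = float("inf"), None
    for _ in range(T):
        s = sum(w)
        D = [wi / s for wi in w]                       # mixed row strategy D^t
        payoffs = [sum(D[i] * M[i][j] for i in range(n)) for j in range(k)]
        j = max(range(k), key=lambda col: payoffs[col])  # column best response
        total += payoffs[j]
        if payoffs[j] < best_val:                      # keep the best D^t seen
            best_val, best_D = payoffs[j], D
        for i in range(n):
            m = M[i][j]
            w[i] *= (1 - eps) ** m if m >= 0 else (1 + eps) ** (-m)
    return total / T, best_D
```

For matching pennies, `M = [[1, -1], [-1, 1]]`, the game value is 0, so with δ = 0.1 the returned average should land in [0, 0.1] and the returned strategy should be close to uniform.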

Applications in Machine Learning • Boosting: • A machine learning meta-algorithm for performing supervised learning. Boosting is based on the question posed by Kearns: can a set of weak learners create a single strong learner? • Adaptive Boosting (Boosting by Sampling): • Fixed training set of N examples: the “experts” correspond to samples in the training set • Repeatedly run the weak learning algorithm on different distributions defined on this set: “events” correspond to the set of all hypotheses that can be generated by the weak learning algorithm • The penalty for expert x is 1 if h(x) = c(x) and 0 otherwise • The final hypothesis has error at most ε under the uniform distribution on the training set. Y. Freund and R. E. Schapire. A decision-theoretic generalization of on-line learning and an application to boosting. Journal of Computer and System Sciences, 55(1):119–139, August 1997.
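The boosting-by-sampling idea can be sketched in Python as follows. This is a simplified fixed-ε variant of the scheme on the slide, not AdaBoost itself: examples are the experts, a correctly classified example is penalized by multiplying its weight by 1 − ε, and the final hypothesis is a majority vote. The threshold-stump weak learner, the 1-D data and the names `mw_boost` and `stump_learner` are assumptions made for illustration.

```python
def stump_learner(xs, labels, D):
    """Hypothetical weak learner: best threshold stump on 1-D inputs under D."""
    best = None
    for thr in sorted(set(xs)):
        for sign in (0, 1):
            # Stump predicts `sign` at or above the threshold, else the opposite.
            h = lambda x, thr=thr, sign=sign: sign if x >= thr else 1 - sign
            err = sum(d for x, y, d in zip(xs, labels, D) if h(x) != y)
            if best is None or err < best[0]:
                best = (err, h)
    return best[1]

def mw_boost(xs, labels, weak_learner, rounds, eps):
    """Combine weak hypotheses by majority vote using multiplicative weights."""
    w = [1.0] * len(xs)                  # one expert per training example
    hyps = []
    for _ in range(rounds):
        s = sum(w)
        D = [wi / s for wi in w]         # current distribution on examples
        h = weak_learner(xs, labels, D)
        hyps.append(h)
        for i, (x, y) in enumerate(zip(xs, labels)):
            if h(x) == y:                # penalty 1: the example was classified
                w[i] *= (1 - eps)        # correctly, so it loses weight

    def final(x):
        votes = sum(h(x) for h in hyps)
        return 1 if 2 * votes > len(hyps) else 0
    return final
```

The weight shifts toward examples the current hypotheses get wrong, so later weak hypotheses are forced to handle them. On an interval-shaped labeling such as `[0, 0, 1, 1, 1, 1, 0, 0]`, which no single stump fits, the majority vote can still classify every training point.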

Summary, Conclusions, Way-ahead • An approximate optimization algorithm for difficult constrained problems was presented • Several convergence theorems were presented • Several application areas were mentioned: • Combinatorial optimization • Game theory • Machine learning • We believe this methodology can be used for solving constrained optimization problems through the Cross Entropy method