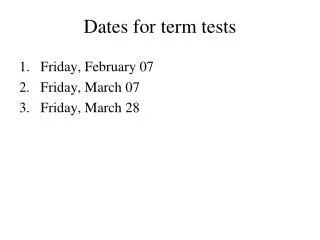

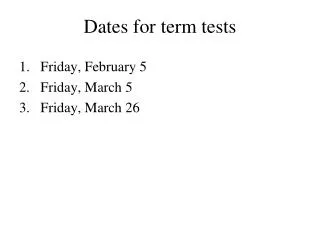

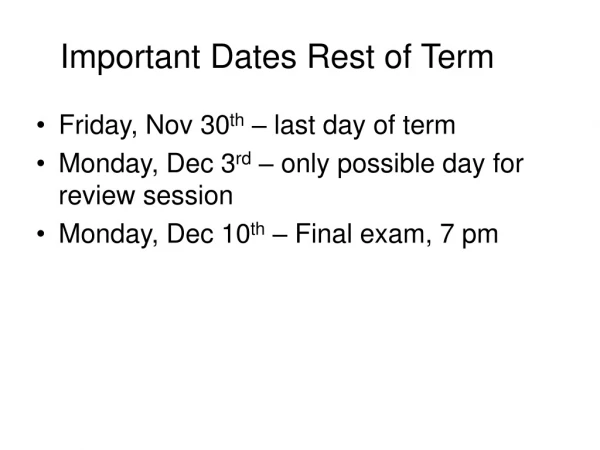

Dates for term tests


Presentation Transcript

Dates for term tests • Friday, February 07 • Friday, March 07 • Friday, March 28

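The Moving Average Time series of order q, MA(q)

Let $\{x_t \mid t \in T\}$ be defined by the equation

$$x_t = \alpha_0 u_t + \alpha_1 u_{t-1} + \alpha_2 u_{t-2} + \dots + \alpha_q u_{t-q} + \mu$$

where $\{u_t \mid t \in T\}$ denotes a white noise time series with variance $\sigma^2$. Then $\{x_t \mid t \in T\}$ is called a Moving Average time series of order q, denoted by MA(q).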

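The mean value for an MA(q) time series:

$$E(x_t) = \mu$$

The autocovariance function for an MA(q) time series:

$$\sigma(h) = \begin{cases} \sigma^2 \sum_{i=0}^{q-h} \alpha_i \alpha_{i+h} & 0 \le h \le q \\ 0 & h > q \end{cases}$$

The autocorrelation function for an MA(q) time series:

$$\rho(h) = \frac{\sigma(h)}{\sigma(0)} = \begin{cases} \dfrac{\sum_{i=0}^{q-h} \alpha_i \alpha_{i+h}}{\sum_{i=0}^{q} \alpha_i^2} & 0 \le h \le q \\ 0 & h > q \end{cases}$$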

Comment: The autocorrelation function for an MA(q) time series “cuts off” to zero after lag q.
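The cut-off after lag q can be seen numerically. The following is a minimal sketch, assuming the usual MA(q) autocorrelation formula (sum of products of weights h apart, divided by the sum of squared weights); the function name `ma_acf` and the example weights are hypothetical and not taken from the slides.

```python
import numpy as np

def ma_acf(alpha, max_lag):
    """Theoretical autocorrelation of an MA(q) series.

    alpha holds the weights alpha_0, ..., alpha_q applied to
    u_t, u_{t-1}, ..., u_{t-q}. The noise variance cancels in the ratio.
    """
    alpha = np.asarray(alpha, dtype=float)
    q = len(alpha) - 1
    denom = np.sum(alpha ** 2)
    rho = np.zeros(max_lag + 1)
    for h in range(max_lag + 1):
        if h <= q:
            # sum over i = 0..q-h of alpha_i * alpha_{i+h}
            rho[h] = np.sum(alpha[: q - h + 1] * alpha[h:]) / denom
        # for h > q the value stays at zero: the ACF has "cut off"
    return rho

# Illustrative MA(2) weights (assumed values)
rho = ma_acf([1.0, 0.5, 0.25], max_lag=5)
print(rho)
```

Every entry of `rho` beyond lag 2 is exactly zero here, which is the pattern used to recognise an MA(q) series from its autocorrelation function.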

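The Autoregressive Time series of order p, AR(p)

Let $\{x_t \mid t \in T\}$ be defined by the equation

$$x_t = \beta_1 x_{t-1} + \beta_2 x_{t-2} + \dots + \beta_p x_{t-p} + \delta + u_t$$

where $\{u_t \mid t \in T\}$ is a white noise time series with variance $\sigma^2$. Then $\{x_t \mid t \in T\}$ is called an Autoregressive time series of order p, denoted by AR(p).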

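The mean value of a stationary AR(p) series:

$$\mu = E(x_t) = \frac{\delta}{1 - \beta_1 - \beta_2 - \dots - \beta_p}$$

The Autocovariance function $\sigma(h)$ of a stationary AR(p) series satisfies the equations:

$$\sigma(0) = \beta_1 \sigma(1) + \beta_2 \sigma(2) + \dots + \beta_p \sigma(p) + \sigma^2$$

$$\sigma(h) = \beta_1 \sigma(h-1) + \beta_2 \sigma(h-2) + \dots + \beta_p \sigma(h-p) \quad \text{for } h > 0$$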

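The Autocorrelation function $\rho(h)$ of a stationary AR(p) series satisfies the equations:

$$\rho(h) = \beta_1 \rho(h-1) + \beta_2 \rho(h-2) + \dots + \beta_p \rho(h-p) \quad \text{for } h \ge 1$$

with $\rho(0) = 1$ and $\rho(-h) = \rho(h)$. The equations for $1 \le h \le p$ determine $\rho(1), \dots, \rho(p)$, and for $h > p$ the same recursion generates the remaining values.

or:

$$\rho(h) = c_1 \left(\frac{1}{r_1}\right)^h + c_2 \left(\frac{1}{r_2}\right)^h + \dots + c_p \left(\frac{1}{r_p}\right)^h$$

where $r_1, r_2, \dots, r_p$ are the roots (assumed distinct) of the polynomial $\beta(x) = 1 - \beta_1 x - \beta_2 x^2 - \dots - \beta_p x^p$ and $c_1, c_2, \dots, c_p$ are determined by using the starting values of the sequence $\rho(h)$.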

Conditions for stationarity Autoregressive Time series of order p, AR(p)

For an AR(p) time series, consider the polynomial

$$\beta(x) = 1 - \beta_1 x - \beta_2 x^2 - \dots - \beta_p x^p$$

with roots $r_1, r_2, \dots, r_p$. Then $\{x_t \mid t \in T\}$ is stationary if $|r_i| > 1$ for all i. If $|r_i| < 1$ for at least one i then $\{x_t \mid t \in T\}$ exhibits deterministic behaviour. If $|r_i| \ge 1$ for all i and $|r_i| = 1$ for at least one i then $\{x_t \mid t \in T\}$ exhibits non-stationary random behaviour.

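Since

$$\rho(h) = c_1 \left(\frac{1}{r_1}\right)^h + c_2 \left(\frac{1}{r_2}\right)^h + \dots + c_p \left(\frac{1}{r_p}\right)^h$$

and $|r_1| > 1, |r_2| > 1, \dots, |r_p| > 1$ for a stationary AR(p) series, then

$$\lim_{h \to \infty} \rho(h) = 0$$

i.e. the autocorrelation function, $\rho(h)$, of a stationary AR(p) series “tails off” to zero.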
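The root condition for stationarity can be checked numerically. The following is a minimal sketch, assuming the AR polynomial has the form 1 - b1*x - b2*x^2 - ... - bp*x^p and that NumPy is available; the helper names `ar_roots` and `is_stationary` are hypothetical.

```python
import numpy as np

def ar_roots(beta):
    """Roots of 1 - beta_1 x - beta_2 x^2 - ... - beta_p x^p."""
    beta = np.asarray(beta, dtype=float)
    # numpy.roots expects coefficients ordered from the highest power
    # down to the constant term: [-beta_p, ..., -beta_1, 1]
    coeffs = np.concatenate((-beta[::-1], [1.0]))
    return np.roots(coeffs)

def is_stationary(beta):
    """True when every root lies strictly outside the unit circle."""
    return bool(np.all(np.abs(ar_roots(beta)) > 1.0))

print(is_stationary([0.5]))        # AR(1) with coefficient 0.5
print(is_stationary([1.2]))        # AR(1) with coefficient 1.2
print(is_stationary([0.5, 0.3]))   # AR(2)
```

Parameter values that place a root on or very near the unit circle are sensitive to floating point error, so a check of this kind is best treated as a diagnostic rather than a proof.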

Special Cases: The AR(1) time series

Let $\{x_t \mid t \in T\}$ be defined by the equation

$$x_t = \beta_1 x_{t-1} + \delta + u_t$$

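Consider the polynomial $\beta(x) = 1 - \beta_1 x$ with root $r_1 = 1/\beta_1$.
• $\{x_t \mid t \in T\}$ is stationary if $|r_1| > 1$, or equivalently $|\beta_1| < 1$.
• If $|r_1| < 1$, or equivalently $|\beta_1| > 1$, then $\{x_t \mid t \in T\}$ exhibits deterministic behaviour.
• If $|r_1| = 1$, or equivalently $|\beta_1| = 1$, then $\{x_t \mid t \in T\}$ exhibits non-stationary random behaviour.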

Special Cases: The AR(2) time series

Let $\{x_t \mid t \in T\}$ be defined by the equation

$$x_t = \beta_1 x_{t-1} + \beta_2 x_{t-2} + \delta + u_t$$

Consider the polynomial $\beta(x) = 1 - \beta_1 x - \beta_2 x^2$, where $r_1$ and $r_2$ are the roots of $\beta(x)$.
• $\{x_t \mid t \in T\}$ is stationary if $|r_1| > 1$ and $|r_2| > 1$. This is true if $\beta_1 + \beta_2 < 1$, $\beta_2 - \beta_1 < 1$ and $\beta_2 > -1$. These inequalities define a triangular region for $\beta_1$ and $\beta_2$.
• If $|r_i| < 1$ for at least one i then $\{x_t \mid t \in T\}$ exhibits deterministic behaviour.
• If $|r_i| \ge 1$ for i = 1, 2 and $|r_i| = 1$ for at least one i then $\{x_t \mid t \in T\}$ exhibits non-stationary random behaviour.

Patterns of the ACF and PACF of AR(2) Time Series. In the shaded region the roots of the AR operator are complex. [Figure: region of the $(\beta_1, \beta_2)$ parameter plane; axis label $\beta_2$.]
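The triangular stationarity region for AR(2) can be compared against a direct root computation. The sketch below assumes the AR(2) polynomial 1 - b1*x - b2*x^2; the function names are hypothetical, and the complex-root test (negative discriminant, b1^2 + 4*b2 < 0) is the standard quadratic-formula condition rather than something stated on the slide.

```python
import numpy as np

def ar2_in_triangle(b1, b2):
    """Triangle conditions: b1 + b2 < 1, b2 - b1 < 1, b2 > -1."""
    return (b1 + b2 < 1) and (b2 - b1 < 1) and (b2 > -1)

def ar2_roots_outside_unit_circle(b1, b2):
    """Direct check: both roots of 1 - b1 x - b2 x^2 have modulus > 1."""
    roots = np.roots([-b2, -b1, 1.0])
    return bool(np.all(np.abs(roots) > 1.0))

def ar2_has_complex_roots(b1, b2):
    """Roots are complex when the discriminant b1^2 + 4 b2 is negative."""
    return b1 ** 2 + 4 * b2 < 0

# A few illustrative parameter pairs (assumed values, away from the boundary)
for b1, b2 in [(0.5, 0.3), (0.5, 0.6), (1.0, -0.5), (0.0, -1.2)]:
    print(b1, b2,
          ar2_in_triangle(b1, b2),
          ar2_roots_outside_unit_circle(b1, b2),
          ar2_has_complex_roots(b1, b2))
```

For points away from the edges of the triangle the two stationarity checks agree; on the boundary itself rounding error can make the root-based check unreliable.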

The Mixed Autoregressive Moving Average Time Series of order p,q: The ARMA(p,q) series

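The Mixed Autoregressive Moving Average Time Series of order p,q, ARMA(p,q)

Let $\beta_1, \beta_2, \dots, \beta_p$, $\alpha_1, \alpha_2, \dots, \alpha_q$, $\delta$ denote p + q + 1 numbers (parameters). Let $\{u_t \mid t \in T\}$ denote a white noise time series with variance $\sigma^2$:
• independent
• mean 0, variance $\sigma^2$.

Let $\{x_t \mid t \in T\}$ be defined by the equation

$$x_t = \beta_1 x_{t-1} + \beta_2 x_{t-2} + \dots + \beta_p x_{t-p} + \delta + u_t + \alpha_1 u_{t-1} + \alpha_2 u_{t-2} + \dots + \alpha_q u_{t-q}$$

Then $\{x_t \mid t \in T\}$ is called a Mixed Autoregressive-Moving Average time series, or ARMA(p,q) series.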
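The defining ARMA(p,q) equation can be turned directly into a generator. The following is a minimal sketch, assuming the form x_t = b1*x_{t-1} + ... + bp*x_{t-p} + d + u_t + a1*u_{t-1} + ... + aq*u_{t-q}; the function name `arma_generate` is hypothetical, and treating all pre-sample values of x and u as zero is a simplifying assumption made here, not part of the definition.

```python
import numpy as np

def arma_generate(beta, alpha, delta, u):
    """Generate x_t from a given noise sequence u using the ARMA recursion.

    beta  : AR coefficients beta_1..beta_p
    alpha : MA coefficients alpha_1..alpha_q (the weight on u_t is 1)
    delta : constant term
    Values of x and u before the start of the sample are taken as zero
    (an assumption made only to start the recursion).
    """
    p, q = len(beta), len(alpha)
    u = np.asarray(u, dtype=float)
    x = np.zeros(len(u))
    for t in range(len(u)):
        ar_part = sum(beta[i] * x[t - 1 - i] for i in range(p) if t - 1 - i >= 0)
        ma_part = sum(alpha[j] * u[t - 1 - j] for j in range(q) if t - 1 - j >= 0)
        x[t] = ar_part + delta + u[t] + ma_part
    return x

# Response of an ARMA(1,1) with beta_1 = 0.5, alpha_1 = 0.4 to a unit impulse
impulse = np.array([1.0, 0.0, 0.0, 0.0])
response = arma_generate([0.5], [0.4], 0.0, impulse)
print(response)
```

Feeding in a unit impulse rather than random noise makes the output deterministic, so each term of the recursion can be checked by hand.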

Mean value, variance, autocovariance function, autocorrelation function of an ARMA(p,q) series

Similar to an AR(p) time series, for certain values of the parameters $\beta_1, \dots, \beta_p$ an ARMA(p,q) time series may not be stationary. An ARMA(p,q) time series is stationary if the roots ($r_1, r_2, \dots, r_p$) of the polynomial $\beta(x) = 1 - \beta_1 x - \beta_2 x^2 - \dots - \beta_p x^p$ satisfy $|r_i| > 1$ for all i.

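Assume that the ARMA(p,q) time series $\{x_t \mid t \in T\}$ is stationary. Let $\mu = E(x_t)$. Taking expectations of both sides of the ARMA(p,q) equation, with $E(u_t) = 0$, gives

$$\mu = \beta_1 \mu + \beta_2 \mu + \dots + \beta_p \mu + \delta$$

or

$$\mu = \frac{\delta}{1 - \beta_1 - \beta_2 - \dots - \beta_p}$$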

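The Autocovariance function, $\sigma(h)$, of a stationary mixed autoregressive-moving average time series $\{x_t \mid t \in T\}$ can be determined from the equation:

$$x_t - \mu = \beta_1 (x_{t-1} - \mu) + \dots + \beta_p (x_{t-p} - \mu) + u_t + \alpha_1 u_{t-1} + \dots + \alpha_q u_{t-q}$$

Thus, multiplying both sides by $(x_{t-h} - \mu)$ and taking expectations,

$$\sigma(h) = \beta_1 \sigma(h-1) + \dots + \beta_p \sigma(h-p) + \sigma_{ux}(h) + \alpha_1 \sigma_{ux}(h-1) + \dots + \alpha_q \sigma_{ux}(h-q)$$

where $\sigma_{ux}(k) = E[u_{t+k}(x_t - \mu)]$, which is zero for $k > 0$ because $x_t$ does not depend on future noise, and $\sigma(-k) = \sigma(k)$.

The autocovariance function $\sigma(h)$ satisfies:

For h = 0, 1, … , q (with $\alpha_0 = 1$):

$$\sigma(h) = \sum_{i=1}^{p} \beta_i \sigma(h-i) + \sum_{j=h}^{q} \alpha_j \sigma_{ux}(h-j)$$

for h > q:

$$\sigma(h) = \sum_{i=1}^{p} \beta_i \sigma(h-i)$$

We then use the first (p + 1) equations to determine $\sigma(0), \sigma(1), \sigma(2), \dots, \sigma(p)$. We use the subsequent equations to determine $\sigma(h)$ for h > p.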

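Example: The autocovariance function, $\sigma(h)$, for an ARMA(1,1) time series $x_t = \beta_1 x_{t-1} + \delta + u_t + \alpha_1 u_{t-1}$. Here $E[u_t (x_t - \mu)] = \sigma^2$ and $E[u_{t-1}(x_t - \mu)] = (\alpha_1 + \beta_1)\sigma^2$.

For h = 0, 1:

$$\sigma(0) = \beta_1 \sigma(1) + \sigma^2 \left[1 + \alpha_1(\alpha_1 + \beta_1)\right]$$

$$\sigma(1) = \beta_1 \sigma(0) + \alpha_1 \sigma^2$$

for h > 1:

$$\sigma(h) = \beta_1 \sigma(h-1)$$

Substituting $\sigma(0)$ into the second equation we get:

$$\sigma(1) = \beta_1 \left\{ \beta_1 \sigma(1) + \sigma^2 \left[1 + \alpha_1(\alpha_1 + \beta_1)\right] \right\} + \alpha_1 \sigma^2$$

or

$$\sigma(1) = \sigma^2 \, \frac{(1 + \alpha_1 \beta_1)(\alpha_1 + \beta_1)}{1 - \beta_1^2}$$

Substituting $\sigma(1)$ into the first equation we get:

$$\sigma(0) = \sigma^2 \, \frac{1 + 2\alpha_1 \beta_1 + \alpha_1^2}{1 - \beta_1^2}$$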
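The ARMA(1,1) substitution can be cross-checked by solving the two equations for h = 0 and h = 1 as a linear system. This is a minimal sketch under the assumption that the series has the standard form x_t = b1*x_{t-1} + d + u_t + a1*u_{t-1}, for which the h = 0 and h = 1 equations are s(0) = b1*s(1) + v*(1 + a1*(a1 + b1)) and s(1) = b1*s(0) + a1*v, with v the noise variance; the function name and the parameter values are hypothetical.

```python
import numpy as np

def arma11_autocov(beta1, alpha1, sigma2, max_lag):
    """Autocovariances s(0..max_lag) of a stationary ARMA(1,1) series."""
    # Rearranged equations for h = 0 and h = 1:
    #    s0 - beta1*s1 = sigma2 * (1 + alpha1*(alpha1 + beta1))
    #   -beta1*s0 + s1 = sigma2 * alpha1
    A = np.array([[1.0, -beta1],
                  [-beta1, 1.0]])
    rhs = sigma2 * np.array([1.0 + alpha1 * (alpha1 + beta1), alpha1])
    s = list(np.linalg.solve(A, rhs))
    # For h > 1 the autocovariance follows s(h) = beta1 * s(h-1)
    for _ in range(2, max_lag + 1):
        s.append(beta1 * s[-1])
    return np.array(s[: max_lag + 1])

b1, a1, v = 0.5, 0.4, 1.0   # illustrative values (assumed)
s = arma11_autocov(b1, a1, v, max_lag=3)

# Closed forms obtained by substitution, for comparison
s0_closed = v * (1 + 2 * a1 * b1 + a1 ** 2) / (1 - b1 ** 2)
s1_closed = v * (1 + a1 * b1) * (a1 + b1) / (1 - b1 ** 2)
print(s, s0_closed, s1_closed)
```

Solving the system numerically and comparing with the closed forms is a quick way to catch algebra slips in the substitution.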

Consider the time series $\{x_t : t \in T\}$ and let $M$ denote the linear space spanned by the set of random variables $\{x_t : t \in T\}$ (i.e. all linear combinations of elements of $\{x_t : t \in T\}$ and their limits in mean square). $M$ is a vector space. Let $B$ be an operator on $M$ defined by $Bx_t = x_{t-1}$. $B$ is called the backshift operator.

Note:
• We can also define the operator $B^k$ with $B^k x_t = B(B(\dots Bx_t)) = x_{t-k}$.
• The polynomial operator $p(B) = c_0 I + c_1 B + c_2 B^2 + \dots + c_k B^k$ can also be defined by the equation

$$p(B)x_t = (c_0 I + c_1 B + c_2 B^2 + \dots + c_k B^k)x_t = c_0 I x_t + c_1 B x_t + c_2 B^2 x_t + \dots + c_k B^k x_t = c_0 x_t + c_1 x_{t-1} + c_2 x_{t-2} + \dots + c_k x_{t-k}$$

• The power series operator $p(B) = c_0 I + c_1 B + c_2 B^2 + \dots$ can also be defined by the equation

$$p(B)x_t = (c_0 I + c_1 B + c_2 B^2 + \dots)x_t = c_0 I x_t + c_1 B x_t + c_2 B^2 x_t + \dots = c_0 x_t + c_1 x_{t-1} + c_2 x_{t-2} + \dots$$

• If $p(B) = c_0 I + c_1 B + c_2 B^2 + \dots$ and $q(B) = b_0 I + b_1 B + b_2 B^2 + \dots$ are such that $p(B)q(B) = I$, i.e. $p(B)q(B)x_t = Ix_t = x_t$, then $q(B)$ is denoted by $[p(B)]^{-1}$.

Other operators closely related to B:
• $F = B^{-1}$, the forward shift operator, defined by $Fx_t = B^{-1}x_t = x_{t+1}$, and
• $\Delta = I - B$, the first difference operator, defined by $\Delta x_t = (I - B)x_t = x_t - x_{t-1}$.
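On a finite observed series the backshift and difference operators act like array shifts. The following is a minimal sketch, assuming the series is stored as a NumPy array indexed by t; the names `backshift` and `poly_operator` are hypothetical, and marking the undefined leading values as NaN is a design choice made for this illustration.

```python
import numpy as np

def backshift(x, k=1):
    """Return B^k x aligned with x; the first k entries are undefined (NaN)."""
    x = np.asarray(x, dtype=float)
    if k == 0:
        return x.copy()
    out = np.full_like(x, np.nan)
    out[k:] = x[:-k]
    return out

def poly_operator(coeffs, x):
    """Apply p(B) = c0*I + c1*B + ... + ck*B^k to the series x."""
    return sum(c * backshift(x, j) for j, c in enumerate(coeffs))

x = np.array([1.0, 4.0, 9.0, 16.0, 25.0])
first_diff = poly_operator([1.0, -1.0], x)   # (I - B) x_t = x_t - x_{t-1}
print(first_diff)
```

Apart from the undefined first entry, `first_diff` matches what `numpy.diff(x)` returns, which is the same first-difference operation without the alignment to the original time index.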

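The Equation for an MA(q) time series

$$x_t = \alpha_0 u_t + \alpha_1 u_{t-1} + \alpha_2 u_{t-2} + \dots + \alpha_q u_{t-q} + \mu$$

can be written

$$x_t = \alpha(B)u_t + \mu$$

where $\alpha(B) = \alpha_0 I + \alpha_1 B + \alpha_2 B^2 + \dots + \alpha_q B^q$.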

The Equation for an AR(p) time series

$$x_t = \beta_1 x_{t-1} + \beta_2 x_{t-2} + \dots + \beta_p x_{t-p} + \delta + u_t$$

can be written

$$\beta(B)x_t = \delta + u_t$$

where $\beta(B) = I - \beta_1 B - \beta_2 B^2 - \dots - \beta_p B^p$.

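The Equation for an ARMA(p,q) time series

$$x_t = \beta_1 x_{t-1} + \beta_2 x_{t-2} + \dots + \beta_p x_{t-p} + \delta + u_t + \alpha_1 u_{t-1} + \alpha_2 u_{t-2} + \dots + \alpha_q u_{t-q}$$

can be written

$$\beta(B)x_t = \alpha(B)u_t + \delta$$

where $\alpha(B) = \alpha_0 I + \alpha_1 B + \alpha_2 B^2 + \dots + \alpha_q B^q$ (with $\alpha_0 = 1$, since $u_t$ enters the equation with coefficient 1) and $\beta(B) = I - \beta_1 B - \beta_2 B^2 - \dots - \beta_p B^p$.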

Some comments about the Backshift operator B:
• It is a useful notational device, allowing us to write the equations for MA(q), AR(p) and ARMA(p,q) in a very compact form.
• It is also useful for making certain computations related to the time series described above.

The partial autocorrelation function A useful tool in time series analysis

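The partial autocorrelation function

Recall that the autocorrelation function of an AR(p) process satisfies the equation:

$$\rho_x(h) = \beta_1 \rho_x(h-1) + \beta_2 \rho_x(h-2) + \dots + \beta_p \rho_x(h-p)$$

For 1 ≤ h ≤ p these equations (Yule-Walker) become:

$$\begin{aligned}
\rho_x(1) &= \beta_1 + \beta_2 \rho_x(1) + \dots + \beta_p \rho_x(p-1) \\
\rho_x(2) &= \beta_1 \rho_x(1) + \beta_2 + \dots + \beta_p \rho_x(p-2) \\
&\;\;\vdots \\
\rho_x(p) &= \beta_1 \rho_x(p-1) + \beta_2 \rho_x(p-2) + \dots + \beta_p
\end{aligned}$$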

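In matrix notation:

$$\begin{bmatrix} 1 & \rho_x(1) & \cdots & \rho_x(p-1) \\ \rho_x(1) & 1 & \cdots & \rho_x(p-2) \\ \vdots & \vdots & \ddots & \vdots \\ \rho_x(p-1) & \rho_x(p-2) & \cdots & 1 \end{bmatrix} \begin{bmatrix} \beta_1 \\ \beta_2 \\ \vdots \\ \beta_p \end{bmatrix} = \begin{bmatrix} \rho_x(1) \\ \rho_x(2) \\ \vdots \\ \rho_x(p) \end{bmatrix}$$

These equations can be used to find $\beta_1, \beta_2, \dots, \beta_p$ if the time series is known to be AR(p) and the autocorrelation function $\rho_x(h)$ is known.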
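Solving the Yule-Walker system is a standard linear algebra task. The sketch below assumes the autocorrelations at lags 1 to p are known exactly and builds the symmetric matrix whose (i, j) entry is the autocorrelation at lag |i - j|; the function name `yule_walker_solve` and the AR(2) example values are hypothetical.

```python
import numpy as np

def yule_walker_solve(rho):
    """Solve the Yule-Walker equations for the AR coefficients.

    rho : autocorrelations [rho(1), ..., rho(p)]
    Returns the coefficients [beta_1, ..., beta_p].
    """
    rho = np.asarray(rho, dtype=float)
    p = len(rho)
    full = np.concatenate(([1.0], rho))   # rho(0), rho(1), ..., rho(p)
    # Matrix entry (i, j) is rho(|i - j|), with ones on the diagonal
    R = np.array([[full[abs(i - j)] for j in range(p)] for i in range(p)])
    return np.linalg.solve(R, rho)

# Autocorrelations of an AR(2) series with beta_1 = 0.5, beta_2 = 0.3
b1, b2 = 0.5, 0.3
rho1 = b1 / (1 - b2)
rho2 = b1 * rho1 + b2
beta_hat = yule_walker_solve([rho1, rho2])
print(beta_hat)
```

With sample autocorrelations in place of the true ones, the same call produces estimates of the coefficients rather than their exact values.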

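If the time series is not autoregressive the equations can still be used to solve for $\beta_1, \beta_2, \dots, \beta_p$, for any value of p ≥ 1. In this case $\beta_1, \beta_2, \dots, \beta_p$ are the values that minimize the mean square error:

$$E\left[\left( (x_t - \mu) - \sum_{i=1}^{p} \beta_i (x_{t-i} - \mu) \right)^2\right]$$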

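Definition: The partial autocorrelation function at lag k is defined to be $\Phi_{kk} = \beta_k^{(k)}$, the last coefficient in the solution of the Yule-Walker equations of order k. Using Cramer’s Rule:

$$\Phi_{kk} = \frac{\begin{vmatrix} 1 & \rho(1) & \cdots & \rho(k-2) & \rho(1) \\ \rho(1) & 1 & \cdots & \rho(k-3) & \rho(2) \\ \vdots & \vdots & & \vdots & \vdots \\ \rho(k-1) & \rho(k-2) & \cdots & \rho(1) & \rho(k) \end{vmatrix}}{\begin{vmatrix} 1 & \rho(1) & \cdots & \rho(k-2) & \rho(k-1) \\ \rho(1) & 1 & \cdots & \rho(k-3) & \rho(k-2) \\ \vdots & \vdots & & \vdots & \vdots \\ \rho(k-1) & \rho(k-2) & \cdots & \rho(1) & 1 \end{vmatrix}}$$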

Comment: The partial autocorrelation function, $\Phi_{kk}$, is determined from the autocorrelation function, $\rho(h)$. The partial autocorrelation function at lag k, $\Phi_{kk}$, is the last autoregressive parameter, $\beta_k^{(k)}$, if the series was assumed to be an AR(k) series. If the series is an AR(p) series then $\Phi_{kk} = 0$ for k > p, because an AR(p) series is also an AR(k) series for k > p, with the autoregressive parameters zero after p.

Some more comments:
• The partial autocorrelation function at lag k, $\Phi_{kk}$, can be interpreted as a corrected autocorrelation between $x_t$ and $x_{t-k}$, conditioning on the intervening variables $x_{t-1}, x_{t-2}, \dots, x_{t-k+1}$.
• If the time series is an AR(p) time series then $\Phi_{kk} = 0$ for k > p.
• If the time series is an MA(q) time series then $\rho_x(h) = 0$ for h > q.
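The cut-off of the partial autocorrelation function for an AR(p) series can be illustrated by solving the Yule-Walker equations of order k = 1, 2, ... and keeping the last coefficient each time. This is a minimal sketch of that direct approach (it is not a recursive formula); the function name `pacf_from_acf` and the AR(2) parameter values are hypothetical.

```python
import numpy as np

def pacf_from_acf(rho, max_lag):
    """Partial autocorrelations for lags 1..max_lag.

    rho : autocorrelations rho(0), rho(1), ..., with rho[0] == 1 and
          at least max_lag + 1 entries.
    For each k, the order-k Yule-Walker system is solved and its last
    coefficient is taken as the partial autocorrelation at lag k.
    """
    rho = np.asarray(rho, dtype=float)
    values = []
    for k in range(1, max_lag + 1):
        R = np.array([[rho[abs(i - j)] for j in range(k)] for i in range(k)])
        coeffs = np.linalg.solve(R, rho[1 : k + 1])
        values.append(coeffs[-1])
    return np.array(values)

# Autocorrelations of an AR(2) series with beta_1 = 0.5, beta_2 = 0.3,
# generated from the recursion rho(h) = b1*rho(h-1) + b2*rho(h-2)
b1, b2 = 0.5, 0.3
rho = [1.0, b1 / (1 - b2)]
for h in range(2, 6):
    rho.append(b1 * rho[h - 1] + b2 * rho[h - 2])

phi = pacf_from_acf(rho, max_lag=5)
print(np.round(phi, 6))
```

The value at lag 2 recovers the second autoregressive coefficient, and the values at lags 3 to 5 vanish up to rounding error, in contrast to the autocorrelations themselves, which only tail off.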

A General Recursive Formula for Autoregressive Parameters and the Partial Autocorrelation function (PACF)

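Let $\beta_1^{(k)}, \beta_2^{(k)}, \dots, \beta_k^{(k)}$ denote the autoregressive parameters of order k satisfying the Yule Walker equations:

$$\rho(h) = \beta_1^{(k)} \rho(h-1) + \beta_2^{(k)} \rho(h-2) + \dots + \beta_k^{(k)} \rho(h-k), \quad h = 1, 2, \dots, k$$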