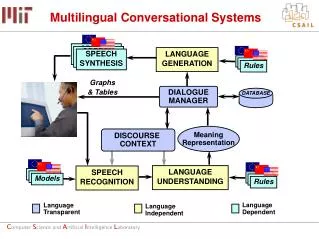

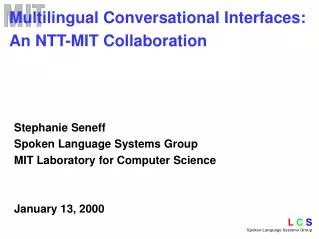

Multilingual Conversational Systems


[Figure: system architecture with speech recognition, language understanding, meaning representation, discourse context, dialogue manager, database, language generation, speech synthesis, and graphs & tables. Components are driven by models or rules and are classified as language independent, language transparent, or language dependent.]

Steps to Develop Language Learning System
• Begin with an existing mature system in English
• Develop English-to-Mandarin translation capability
• Induce a Mandarin corpus from the English corpus
• Train language model (LM) statistics for both recognizers from the corpora
• Develop a parsing grammar for Mandarin queries and generation rules for Mandarin responses
Not yet completed:
• Develop domain-specific user simulation capability
• Generate thousands of dialogues in both languages
• Train recognizers and users from simulated dialogues

Activities over the Last Nine Months
• Translation from English to Mandarin
  • Mainly focused on user queries (as contrasted with responses)
  • Integrating generation-based translation with an example-based approach
  • Exploring the use of statistical machine translation
    • Use the phrase-based statistical translation framework developed by Philipp Koehn
    • Utilized formal methods to generate a domain-specific parallel corpus in the weather query domain
    • Implemented a finite-state transducer version of the decoder and integrated it with Galaxy
• Translation from Mandarin to English
  • Use a statistical method to obtain Chinese-to-English translation capability
  • Explore grammar induction techniques to create a parsing grammar for Mandarin queries, toward developing formal methods for Mandarin-to-English translation

Activities over the Last Nine Months, Cont’d
• System development
  • Upgraded the weather harvesting process
  • Upgraded the database server to support Postgres in addition to Oracle
  • Improved dialogue management
    • Better handling of meta queries
  • Developed a new GUI that overcomes firewall limitations
    • Supports automatic checking and correction of typed tone errors
    • Better display of tones as diacritics
  • Developed a new concatenative speech synthesis capability, using Envoice, for high-quality translation of user queries spoken in English
  • Developed a batch-mode capability to process synthetic speech through dialogue interaction to aid system development

Activities over the Last Nine Months, Cont’d
Presentations:
• Three talks at the InSTIL workshop in Venice
  • Wang and Seneff: Translation
  • Seneff et al.: Language learning systems
  • Peabody et al.: Web-based interface for tone acquisition
• ISCSLP
  • Seneff et al.: Overview of the MuXing system
• SIGdial demo session
  • Wang and Seneff: Presentation and live demonstration
• One-hour seminar at Microsoft China’s speech group
• One-hour seminar at the Defense Language Institute in Monterey
• Demonstrated the system to Julian Wheatley, head of the Chinese department at MIT, and to Henry Jenkins, director of MIT Comparative Media Studies

Activities over the Last Nine Months, Cont’d
Data collection initiatives:
• Eight subjects have completed the Web-based exercise at MIT
• Two visits by Stephanie Seneff to the Defense Language Institute in Monterey, California
  • One successful class participation exercise
  • Another attempted but aborted due to a power outage
• Installed the Web-based exercise system on computers at the MIT Language Lab
• Julian Wheatley has agreed to support data collection initiatives with students in the MIT Chinese classes

Bilingual Recognizer Construction
[Figure: the English corpus is parsed into semantic frames, from which a Chinese corpus is generated; each corpus yields a recognizer language model, and the English and Chinese networks are combined into one bilingual recognizer.]
• Two languages compete in a common search space
• Automatically translate the existing English corpus into Mandarin
• Use the NL grammar to automatically induce language models for both the English and Mandarin recognizers
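To make "two languages compete in a common search space" concrete, here is a minimal sketch under heavy simplifying assumptions: each language gets an add-one-smoothed bigram model trained on a tiny hypothetical corpus, and whichever model scores a word string higher claims it. The corpora and the names `train_bigram`, `log_prob`, and `classify` are illustrative only; a real recognizer searches one combined network using acoustic scores too, rather than rescoring finished word strings.

```python
from collections import Counter
from math import log

def train_bigram(sentences):
    """Count bigram histories and bigrams, padding each sentence with <s> and </s>."""
    history, bigrams = Counter(), Counter()
    for s in sentences:
        words = ["<s>"] + s.split() + ["</s>"]
        history.update(words[:-1])
        bigrams.update(zip(words[:-1], words[1:]))
    vocab = {w for s in sentences for w in s.split()} | {"</s>"}
    return history, bigrams, len(vocab) + 1   # +1 reserves mass for unseen words

def log_prob(model, sentence):
    """Add-one smoothed bigram log probability of a word string."""
    history, bigrams, v = model
    words = ["<s>"] + sentence.split() + ["</s>"]
    return sum(log((bigrams[(a, b)] + 1) / (history[a] + v))
               for a, b in zip(words[:-1], words[1:]))

# Hypothetical toy corpora; per the slide, the Mandarin one would be
# induced by translating the English corpus.
ENGLISH = ["will it rain in boston tomorrow", "what is the temperature in boston"]
MANDARIN = ["bo1 shi4 dun4 ming2 tian1 hui4 xia4 yu3 ma5",
            "bo1 shi4 dun4 qi4 wen1 shi4 duo1 shao3"]
MODELS = {"english": train_bigram(ENGLISH), "mandarin": train_bigram(MANDARIN)}

def classify(sentence):
    """Let the two language models compete; the higher score claims the utterance."""
    return max(MODELS, key=lambda lang: log_prob(MODELS[lang], sentence))

print(classify("will it rain in boston"))          # english
print(classify("ming2 tian1 hui4 xia4 yu3 ma5"))   # mandarin
```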

Automatic Grammar Induction
[Figure: an English sentence is parsed into the interlingua and a Mandarin sentence is generated from it; the resulting corpus pairs feed grammar induction, which produces a Mandarin parsing grammar.]
• Once translation ability exists from English to the target language, the reverse system can be created almost effortlessly
• Utilizes the English parse tree and the Mandarin generation lexicon to induce a Mandarin parse tree
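The induction idea can be sketched as projecting an English parse tree onto Mandarin: leaves are replaced through a generation lexicon, constituents with no Mandarin realization are dropped, children are reordered by a target-language ordering rule, and context-free rules are then read off the result. The lexicon, ordering table, particle table, and tree encoding below are hypothetical stand-ins, not the system's actual induction procedure; the example mirrors the "Will it rain in Boston?" case.

```python
# A parse tree is (label, children); each child is a subtree or a word string.
# Hypothetical Mandarin generation lexicon: English word -> Mandarin word, None = not realized.
LEXICON = {"will": "会", "it": None, "rain": "下雨", "in": None, "boston": "波士顿"}
# Hypothetical Mandarin constituent order, keyed by parent label.
ORDER = {"verify": ["locative", "aux", "rain"]}
# Hypothetical constituents the Mandarin generator adds under a given label.
PARTICLES = {"verify": ("q_particle", ["吗"])}

def induce(tree):
    """Project an English parse tree onto a Mandarin tree that keeps the same labels."""
    label, children = tree
    out = []
    for child in children:
        if isinstance(child, str):
            word = LEXICON.get(child)
            if word is not None:
                out.append(word)
        else:
            sub = induce(child)
            if sub is not None:          # drop constituents with no Mandarin realization
                out.append(sub)
    if label in ORDER:
        rank = {lab: i for i, lab in enumerate(ORDER[label])}
        out.sort(key=lambda c: rank.get(c[0], len(rank)) if isinstance(c, tuple) else len(rank))
    if label in PARTICLES:
        out.append(PARTICLES[label])
    return (label, out) if out else None

def leaves(tree):
    """Yield the words of a tree from left to right."""
    for child in tree[1]:
        if isinstance(child, str):
            yield child
        else:
            yield from leaves(child)

def rules(tree, acc=None):
    """Read context-free rules (label -> child labels or words) off an induced tree."""
    acc = [] if acc is None else acc
    label, children = tree
    acc.append((label, tuple(c if isinstance(c, str) else c[0] for c in children)))
    for child in children:
        if not isinstance(child, str):
            rules(child, acc)
    return acc

english = ("verify", [("aux", ["will"]), ("subject", ["it"]), ("rain", ["rain"]),
                      ("locative", [("prep", ["in"]), ("city", ["boston"])])])
mandarin = induce(english)
print("".join(leaves(mandarin)))   # 波士顿会下雨吗
for rule in rules(mandarin):
    print(rule)
```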

Multilingual Spoken Translation Framework
[Figure: a common meaning representation, the semantic frame, links language-specific components for English, Chinese, Spanish, and Japanese: speech corpora, recognition models, NLU parsing rules, NLG generation rules, and synthesis.]

Challenges in Cross-language Generation for Translation
• Some expressions have very different syntactic structures in different languages
  • What is your name? 你(you) 叫(call) 什么(what) 名字(name)?
  • I like her. Ella me gusta. (Spanish; literally "she pleases me")
• Syntactic features are expressed in many different ways
  • Determiners (English but not Chinese): 附近(vicinity) 哪儿(where) 有(have) 银行(bank)? Where is a bank nearby?
  • Particles (Chinese but not English): that hotel → 那(that) 家(<particle>) 旅馆(hotel); I lost my key. → 我(I) 丢(lose) 了(<past tense>) 我的(my) 钥匙(key).
  • Gender (extensive in Spanish)

An Example: English/Chinese
How long does it take to take a taxi there?
• Function words disappear in Chinese:
  How long does it take to take a taxi there
  How long take take taxi there
  How long need take taxi there
  How long need take taxi go there
  (take taxi go there need how long)
  坐 出租车 去 那里 要 多久
• The two instances of “take” have different translations
• The verb “go” is omitted in English
• Sentence structure is very different

Semantic Frame for Example
[Figure: the same frame is derived from both the English and the Chinese input.]
  {c wh_question
     :aux "do"
     :phatic_pronoun "it"
     :pred {p take_time
              :trace "how_long"
              :aux "to_inf"
              :v_complement {p take_ride
                               :topic {q taxi
                                         :quantifier "indef" }
                               :pred {p destination
                                        :topic "there" } } } }
• The semantic frame is identical for both inputs, except for the missing function words in Mandarin
• Where necessary, constituent movement is invoked to render the same hierarchical structure
• English generation predicts the missing function words
• Mandarin generation infers “go” from the “destination” predicate
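A toy generator shows how one frame can yield both surface strings: per-language templates fix constituent order, the English templates supply the predicted function words, and the Mandarin `destination` template supplies 去 ("go"). The simplified frame, the `TEMPLATES` and `LEXICON` tables, and `generate` are hypothetical; the real system uses its own generation rules.

```python
# Hypothetical, simplified frame for "How long does it take to take a taxi there?"
# (function-word slots such as :aux "do" are omitted, as in the Mandarin input).
FRAME = {"name": "wh_question",
         "pred": {"name": "take_time", "trace": "how_long",
                  "v_complement": {"name": "take_ride", "topic": "taxi",
                                   "pred": {"name": "destination", "topic": "there"}}}}

# Hypothetical per-language templates: each fixes constituent order and
# supplies the words the frame does not carry.
TEMPLATES = {
    "en": {"wh_question": "{pred}",
           "take_time": "{trace} does it take to {v_complement}",  # predicts "does", "it", "to"
           "take_ride": "take a {topic} {pred}",
           "destination": "{topic}"},                             # "go" is not realized
    "zh": {"wh_question": "{pred}",
           "take_time": "{v_complement} 要 {trace}",               # trace realized at the end
           "take_ride": "坐 {topic} {pred}",                       # this "take" becomes 坐
           "destination": "去 {topic}"},                           # "go" inferred from destination
}
LEXICON = {"en": {"how_long": "how long", "taxi": "taxi", "there": "there"},
           "zh": {"how_long": "多久", "taxi": "出租车", "there": "那里"}}

def generate(node, lang):
    """Recursively realize a frame node in the target language."""
    if isinstance(node, str):
        return LEXICON[lang][node]
    slots = {key: generate(value, lang) for key, value in node.items() if key != "name"}
    return TEMPLATES[lang][node["name"]].format(**slots)

print(generate(FRAME, "en"))   # how long does it take to take a taxi there
print(generate(FRAME, "zh"))   # 坐 出租车 去 那里 要 多久
```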

Strategies for Achieving High Quality and Robustness
• Interlingua-based translation
  • Maintain consistency of the semantic frame representation across languages whenever possible
  • Seed grammar rules for each new language on the English grammar rules
  • Target-language-dependent generation rules specify constituent order
  • Word sense disambiguation achieved through semantic features
• Restricted conversational domains (lesson plans)
• Emphasis on mechanisms that enable rapid porting to new domains and languages
• Use parsability to assess the quality of translation outputs
  • Back off to the example-based method when the parse fails

Schematic of Generation into Mandarin
  {c verify
     :aux "will"
     :subject "it"
     :pred {p rain
              :pred {p locative
                       :prep "in"
                       :topic {q city
                                 :name "boston" } }
              :pred {p temporal
                       :topic {q weekday
                                 :quantifier "this"
                                 :name "weekend" } } } }
“will”, conditioned by “verify”, is pulled to the front:
• bo1 shi4 dun4 zhe4 zhou4 mo4 hui4 bu2 hui4 xia4 yu3 ? (Boston this weekend will-not-will rain ?)
• zhe4 zhou4 mo4 bo1 shi4 dun4 hui4 xia4 yu3 ma5 ? (this weekend Boston will rain <question-particle> ?)
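Both Mandarin outputs can be reproduced with a small sketch that realizes a `verify` clause either as an A-not-A question (hui4 bu2 hui4) or with the question particle ma5. The flattened frame, the pinyin lexicon, and the fixed place/time order per style are assumptions chosen to match the slide's two examples; the real generator conditions these choices through its own rules.

```python
# Hypothetical flattened frame for "Will it rain in Boston this weekend?"
FRAME = {"clause": "verify", "aux": "will", "subject": "it", "pred": "rain",
         "locative": "boston", "temporal": "this weekend"}
# Hypothetical pinyin lexicon; the dummy subject "it" has no Mandarin realization.
LEXICON = {"will": "hui4", "rain": "xia4 yu3", "boston": "bo1 shi4 dun4",
           "this weekend": "zhe4 zhou4 mo4"}

def a_not_a(aux):
    """Yes/no question by reduplicating a one-syllable auxiliary, e.g. hui4 -> hui4 bu2 hui4.

    The negator bu4 changes to bu2 before a fourth-tone syllable (tone sandhi).
    """
    neg = "bu2" if aux.endswith("4") else "bu4"
    return f"{aux} {neg} {aux}"

def generate_verify(frame, style):
    """Realize a verify clause in pinyin, with 'will' placed in front of the verb."""
    place, time = LEXICON[frame["locative"]], LEXICON[frame["temporal"]]
    aux, verb = LEXICON[frame["aux"]], LEXICON[frame["pred"]]
    if style == "a_not_a":
        return f"{place} {time} {a_not_a(aux)} {verb} ?"
    if style == "particle":
        return f"{time} {place} {aux} {verb} ma5 ?"
    raise ValueError(f"unknown style: {style}")

print(generate_verify(FRAME, "a_not_a"))    # bo1 shi4 dun4 zhe4 zhou4 mo4 hui4 bu2 hui4 xia4 yu3 ?
print(generate_verify(FRAME, "particle"))   # zhe4 zhou4 mo4 bo1 shi4 dun4 hui4 xia4 yu3 ma5 ?
```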

Generation-based Translation
[Figure: English input is parsed with the English grammar into a semantic frame; Chinese generation rules produce a Chinese sentence, which the Chinese grammar then tries to parse. Accepted sentences become the Chinese output; rejected ones are passed to example-based translation.]
• Semantic frame serves as the interlingua
• Translation is achieved by parsing and generation
• The Mandarin grammar is used to detect potential problems
• Rejected sentences are routed to example-based translation for a second chance
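The control flow of generation-based translation with a second chance can be sketched as one function over pluggable components. The component names are hypothetical placeholders for the English parser, Mandarin generator, Mandarin parser, and example-based translator. The sketch assumes that both methods start from the English semantic frame, as in the example-based procedure described in this section, so an unparsable English input yields NULL.

```python
from typing import Callable, Optional

Frame = dict

def translate(english: str,
              parse_en: Callable[[str], Optional[Frame]],
              generate_zh: Callable[[Frame], str],
              parse_zh: Callable[[str], Optional[Frame]],
              example_based: Callable[[Frame], Optional[str]]) -> Optional[str]:
    """Generation-based translation with a parsability check and example-based fallback."""
    frame = parse_en(english)
    if frame is None:
        return None                      # both methods start from the English frame
    chinese = generate_zh(frame)
    if parse_zh(chinese) is not None:    # accepted: the Mandarin grammar parses it
        return chinese
    return example_based(frame)          # rejected: second chance; None means NULL output

# Toy stand-ins for the real components (all hypothetical).
def toy_parse_en(s): return {"WEATHER": "rain"} if "rain" in s else None
def toy_generate_zh(frame): return "hui4 xia4 yu3 ma5"
def accept_all(s): return {"ok": True}
def reject_all(s): return None
def toy_example_based(frame): return "hui4 bu2 hui4 xia4 yu3?"

print(translate("will it rain", toy_parse_en, toy_generate_zh, reject_all, toy_example_based))
```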

Example-based Translation
• Requires translation pairs and a retrieval mechanism
• Corpus automatically obtained via the generation-based approach
• Retrieval based on lean semantic information
  • Encoded as key-value pairs
  • Obtained from the semantic frame via simple generation rules
  • Generalizes words to classes (e.g., city name, weekday) to overcome data sparseness

Example-based Translation Procedure
[Figure: English input “Is there any chance of rain in San Francisco?” → parser (English grammar) → semantic frame → generator (key-value rules) → KV string “WEATHER: rain CITY: San Francisco”, with the city masked as { <CITY> : San Francisco } → KV-Chinese table lookup → “<CITY> hui4 bu2 hui4 xia4 yu3?” → city restored as { <CITY> : jiu4 jin1 shan1 } → Chinese output.]
• Key-value string serves as the interlingua
• Translation achieved by parsing and table lookup
• City name masked during retrieval and recovered in the final surface string
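A minimal sketch of the key-value lookup with class masking, assuming a simplified flat frame, a fixed canonical key order, and one hypothetical table entry; the names `CITY_ZH`, `KV_TABLE`, `kv_string`, and `example_based` are illustrative, not the system's.

```python
# Hypothetical class lexicon for the masked <CITY> slot.
CITY_ZH = {"san francisco": "jiu4 jin1 shan1", "boston": "bo1 shi4 dun4"}
# Hypothetical KV-Chinese table, harvested from generation-based translations
# with city names replaced by their class.
KV_TABLE = {"WEATHER: rain CITY: <CITY>": "<CITY> hui4 bu2 hui4 xia4 yu3?"}
KEY_ORDER = ("WEATHER", "CITY")   # assumed canonical key order

def kv_string(frame):
    """Flatten a (simplified) frame into a key-value string in canonical key order."""
    return " ".join(f"{k}: {frame[k]}" for k in KEY_ORDER if k in frame)

def example_based(frame):
    """Mask the city, look the KV string up in the table, then restore the city."""
    city = frame.get("CITY")
    masked = dict(frame, CITY="<CITY>") if city else frame
    template = KV_TABLE.get(kv_string(masked))
    if template is None:
        return None                                   # no matching example
    if city is None:
        return template
    city_zh = CITY_ZH.get(city.lower())
    return template.replace("<CITY>", city_zh) if city_zh else None

print(example_based({"WEATHER": "rain", "CITY": "San Francisco"}))
# jiu4 jin1 shan1 hui4 bu2 hui4 xia4 yu3?
```

Because the city is masked, the single table entry also serves any other city in the class lexicon.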

Evaluation: English to Mandarin, Weather Domain
• Evaluation data
  • Drawn from the publicly available Jupiter weather system
  • Telephone recordings; conversational speech
  • Utterances unparsable by the English grammar were excluded
  • Total of 695 utterances, with 6.5 words per utterance on average
• System configuration
  • Text input or speech input
  • Recognizer achieved a 6.9% word error rate and a 19.0% sentence error rate
  • Generation-based method preferred over the example-based method
  • NULL output if both failed
• Evaluation criteria
  • Yield of each translation method
  • Human judgment of translation quality

Spoken Language Translation: Evaluation Results

| Method | Perfect | Adequate | Wrong | Failed | % of utterances |
|---|---|---|---|---|---|
| Rule (generation-based) | 550 | 34 | 8 | - | 85% |
| Example-based | 27 | 16 | 5 | 55 | 15% |
| Total | 577 (83%) | 50 (7%) | 13 (2%) | 55 (8%) | 100% |

• Recognizer WER was 6.9%
• A bilingual judge rated the translations
• Example-based translation increased yield by 6%
• Incorrect translations were produced only 2% of the time
  • Often due to recognition errors
  • The English paraphrase provides context for errors
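The percentages in the results can be checked against the 695-utterance total. This sketch assumes the rule row's blank "Failed" cell is zero (rejected outputs move on to the example-based method) and that the example row's 55 failures are the utterances for which both methods failed. Under those readings every row and column percentage matches, and the 6% yield gain corresponds to the 43 perfect or adequate example-based translations.

```python
TOTAL = 695   # utterances in the evaluation set

COUNTS = {
    # assumption: rule-based rejects are counted under the example-based row
    "rule":    {"perfect": 550, "adequate": 34, "wrong": 8, "failed": 0},
    # failed = both methods failed (NULL output)
    "example": {"perfect": 27,  "adequate": 16, "wrong": 5, "failed": 55},
}

def pct(n):
    return round(100 * n / TOTAL)

assert sum(sum(row.values()) for row in COUNTS.values()) == TOTAL

share = {method: pct(sum(row.values())) for method, row in COUNTS.items()}
by_grade = {g: pct(sum(row[g] for row in COUNTS.values()))
            for g in ("perfect", "adequate", "wrong", "failed")}
gain = 100 * (COUNTS["example"]["perfect"] + COUNTS["example"]["adequate"]) / TOTAL

print(share)            # {'rule': 85, 'example': 15}
print(by_grade)         # {'perfect': 83, 'adequate': 7, 'wrong': 2, 'failed': 8}
print(f"{gain:.1f}%")   # 6.2%
```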

Multilingual Weather Responses
English source: Some thunderstorms may be accompanied by gusty winds and hail
Semantic frame:
  clause: weather_event
  topic: precip_act, name: thunderstorm, num: pl, quantifier: some
  pred: accompanied_by, adverb: possibly
  topic: wind, num: pl, pred: gusty
  and: precip_act, name: hail
Spanish: Algunas tormentas posiblemente acompañadas por vientos racheados y granizo
Chinese: 一些雷雨可能會伴有陣風和冰雹
Japanese: (not preserved in the transcript)
• Frame indexed under wind, rain, storm, and hail (slide labels: rain/storm, wind, hail)
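One way to read "frame indexed under wind, rain, storm, and hail" is an inverted index from user topics to response frames, so that a question about any one of those topics retrieves this forecast sentence. The sketch assumes a hypothetical `TOPICS` map and one possible reading of the frame's nesting; neither is taken from the system.

```python
from collections import defaultdict

# Hypothetical map from frame vocabulary to the topics a user might ask about.
TOPICS = {"thunderstorm": {"rain", "storm"}, "wind": {"wind"}, "hail": {"hail"}}

# One reading of the slide's frame (the nesting is an assumption).
FRAME = {
    "clause": "weather_event",
    "topic": {"topic": "precip_act", "name": "thunderstorm", "num": "pl", "quantifier": "some"},
    "pred": {"pred": "accompanied_by", "adverb": "possibly",
             "topic": {"topic": "wind", "num": "pl", "pred": "gusty"},
             "and": {"topic": "precip_act", "name": "hail"}},
}

def index_terms(frame):
    """Collect the topics of every name/topic value anywhere in a nested frame."""
    terms = set()
    for key, value in frame.items():
        if isinstance(value, dict):
            terms |= index_terms(value)
        elif key in ("name", "topic") and value in TOPICS:
            terms |= TOPICS[value]
    return terms

def build_index(frames):
    """Inverted index: topic -> ids of the frames that mention it."""
    index = defaultdict(list)
    for frame_id, frame in frames.items():
        for term in sorted(index_terms(frame)):
            index[term].append(frame_id)
    return index

index = build_index({"forecast_1": FRAME})
print(sorted(index))   # ['hail', 'rain', 'storm', 'wind']
```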

Stage 1: Drill Exercises
• Web-based interface provides practice in typing queries in the weather domain
  • 10 weather scenarios to be solved using typed pinyin, e.g., “Boston, rain, tomorrow”
  • Student is given feedback on both query completeness and tone accuracy
• Separate recording sessions allow the user to practice both read and spontaneous spoken queries
  • Recordings will be used to train the system on accented speech
  • Recordings will also be assessed for tone quality
• The Defense Language Institute in Monterey conducted a successful experiment using this Web-based interface in a class of 30 students
• We are planning to introduce the exercise in the language laboratory at MIT

Lexical Tone Correction
• Character representation does not explicitly encode tone:
  • 洛杉矶星期一刮风吗? (Is it windy in Los Angeles on Monday?)
• Exploit pinyin to help the student acquire tonal knowledge:
  • Diacritic: luò shān jī xīng qī yī guā fēng ma?
  • Numeric: luo4 shan1 ji1 xing1 qi1 yi1 gua1 feng1 ma5?
• Hypothesis: errors in typed pinyin reflect inaccurate knowledge of tones
  • luo3 shan1 ji3 xing1 qi2 yi1 gua4 feng2 ma2?
• Provide explicit feedback about typed tone errors
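Numeric pinyin converts to diacritic form with the standard tone-mark placement rule (the mark goes on a or e; on the o of "ou"; otherwise on the last vowel), and typed tone errors can be flagged by a syllable-by-syllable comparison. The sketch assumes lowercase input with `v` standing in for ü; `to_diacritic` and `tone_errors` are hypothetical helpers, not the system's code.

```python
import unicodedata

# Combining diacritics for tones 1-4; tone 5 (neutral) is unmarked.
MARKS = {"1": "\u0304", "2": "\u0301", "3": "\u030c", "4": "\u0300"}

def to_diacritic(syllable):
    """Convert lowercase numeric pinyin such as 'luo4' to diacritic form such as 'luò'."""
    base, tone = syllable[:-1], syllable[-1]
    if tone not in "12345":
        return syllable                          # no tone number: leave as typed
    base = base.replace("v", "ü")
    if tone == "5":
        return base
    if "a" in base:
        i = base.index("a")
    elif "e" in base:
        i = base.index("e")
    elif "ou" in base:
        i = base.index("o")
    else:
        i = max(base.rfind(v) for v in "iouü")   # otherwise the last vowel
    if i < 0:
        return base                              # no vowel to mark
    return unicodedata.normalize("NFC", base[:i + 1] + MARKS[tone] + base[i + 1:])

def tone_errors(typed, expected):
    """(position, typed, expected) for every syllable that differs from the expected one."""
    return [(i, t, e) for i, (t, e) in enumerate(zip(typed.split(), expected.split())) if t != e]

expected = "luo4 shan1 ji1 xing1 qi1 yi1 gua1 feng1 ma5"
typed = "luo3 shan1 ji3 xing1 qi2 yi1 gua4 feng2 ma2"
print(" ".join(to_diacritic(s) for s in expected.split()))   # luò shān jī xīng qī yī guā fēng ma
print([i for i, _, _ in tone_errors(typed, expected)])       # [0, 2, 4, 6, 7, 8]
```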

Lexical Tone Correction
• Exploit some features of Chinese
  • Syllable lexicon is small, approximately 420 unique syllables
  • 5 tones (including the neutral tone)
• Exploit some abilities of the TINA NL system
  • Ability to parse a weighted word FST using probabilistic models
  • FST normally represents a list of recognizer hypotheses
  • A path through the FST represents the most likely correct parse
• Given some input
  • Generate an FST of the single sentence
  • Expand the tones on each syllable
  • Attempt to parse the FST
  • The selected path through the FST represents the corrected tones

FST Example: Step 1
Generate a simple FST.
Given: luo3 shan1 ji3 xing1 qi2 yi1 gua4 feng2 ma2

FST Example: Step 2
Assign the benefit of the doubt to items that appear in the lexicon; items that do not appear in the lexicon are removed.

FST Example: Step 3
Expand each syllable to its alternate tones. This is more compact than specifying every possible sentence variant.

FST Example: Step 4
The remaining probability is distributed uniformly among the alternate tones.

FST Example: Step 5
Parsing reveals the correct tones.
Given: luo3 shan1 ji3 xing1 qi2 yi1 gua4 feng2 ma2
Correct: luo4 shan1 ji1 xing1 qi1 yi1 gua1 feng1 ma5
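The five steps can be imitated with a small lattice and a word lexicon. In this sketch a dictionary of tone alternatives per syllable stands in for the weighted FST, a toy word list stands in for the TINA grammar, and a Viterbi search over word segmentations stands in for probabilistic parsing. The 0.5 benefit-of-doubt weight, both lexicons, and all function names are hypothetical.

```python
from math import inf, log

TONES = "12345"

# Hypothetical toned-syllable lexicon (the real one covers about 420 base syllables).
SYLLABLES = {
    "luo1", "luo2", "luo3", "luo4", "shan1", "shan3", "shan4", "ji1", "ji2", "ji3", "ji4",
    "xing1", "xing2", "xing3", "xing4", "qi1", "qi2", "qi3", "qi4", "yi1", "yi2", "yi3", "yi4",
    "gua1", "gua3", "gua4", "feng1", "feng2", "feng3", "feng4", "ma1", "ma2", "ma3", "ma4", "ma5",
}
# Hypothetical word lexicon standing in for the parsing grammar.
WORDS = {("luo4", "shan1", "ji1"): "洛杉矶", ("xing1", "qi1", "yi1"): "星期一",
         ("gua1", "feng1"): "刮风", ("ma5",): "吗"}

def expand(syllable, keep=0.5):
    """Steps 2-4: tone alternatives for one typed syllable, with probabilities."""
    base = syllable.rstrip(TONES)
    variants = [base + t for t in TONES if base + t in SYLLABLES]
    if syllable not in SYLLABLES:            # step 2: no benefit of the doubt
        return {v: 1 / len(variants) for v in variants}
    others = [v for v in variants if v != syllable]
    arcs = {syllable: keep if others else 1.0}
    for v in others:                          # step 4: spread the rest uniformly
        arcs[v] = (1 - keep) / len(others)
    return arcs

def correct(typed):
    """Step 5: the highest-scoring path through the lattice that the word lexicon accepts."""
    lattice = [expand(s) for s in typed.split()]
    n = len(lattice)
    best = [(-inf, None)] * (n + 1)           # (log probability, backpointer)
    best[0] = (0.0, None)
    for i in range(n):
        if best[i][0] == -inf:
            continue
        for word in WORDS:
            j = i + len(word)
            if j <= n and all(s in lattice[i + k] for k, s in enumerate(word)):
                score = best[i][0] + sum(log(lattice[i + k][s]) for k, s in enumerate(word))
                if score > best[j][0]:
                    best[j] = (score, (i, word))
    if n == 0 or best[n][1] is None:
        return None                           # no parse: nothing to correct with
    path, j = [], n
    while j > 0:
        i, word = best[j][1]
        path[:0] = word
        j = i
    return " ".join(path)

print(correct("luo3 shan1 ji3 xing1 qi2 yi1 gua4 feng2 ma2"))
# luo4 shan1 ji1 xing1 qi1 yi1 gua1 feng1 ma5
```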

Web interface: Practice Exercise
• Student is prompted for a city, time, and event (here: San Francisco, Tuesday, hot)

Web interface: Practice Exercise
Example input: Xing1 qi1 er3 jiu3 jin3 shan1 hui4 bu2 hui4 re1
• Student types in either:
  • A question concerning this topic in Mandarin, using pinyin, or
  • An English word or phrase, for translation

Web interface: Practice Exercise
• Student is given feedback
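Feedback on completeness and tone accuracy could be computed by locating each prompted slot in the typed pinyin while ignoring tones, then comparing tones within the matched span. The scenario table and `check` are hypothetical. On the example input "Xing1 qi1 er3 jiu3 jin3 shan1 hui4 bu2 hui4 re1", it finds all three slots and flags jiu3, jin3, er3, and re1, whose standard tones are jiu4, jin1, er4, and re4.

```python
# Hypothetical scenario from the practice exercise: slot -> expected toned pinyin.
SCENARIO = {"city": "jiu4 jin1 shan1",   # San Francisco
            "time": "xing1 qi1 er4",     # Tuesday
            "event": "re4"}              # hot

def strip_tone(syllable):
    return syllable.rstrip("12345")

def check(typed, scenario=SCENARIO):
    """Return (missing slots, [(typed, expected)] tone errors) for a typed pinyin query."""
    syls = typed.lower().split()
    bare = [strip_tone(s) for s in syls]
    missing, tone_errors = [], []
    for slot, expected in scenario.items():
        exp = expected.split()
        exp_bare = [strip_tone(s) for s in exp]
        for i in range(len(bare) - len(exp) + 1):
            if bare[i:i + len(exp)] == exp_bare:      # slot found, ignoring tones
                tone_errors += [(got, want) for got, want
                                in zip(syls[i:i + len(exp)], exp) if got != want]
                break
        else:
            missing.append(slot)                      # slot never mentioned
    return missing, tone_errors

print(check("Xing1 qi1 er3 jiu3 jin3 shan1 hui4 bu2 hui4 re1"))
```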

Spoken Conversational Interaction
• Weather information domain (rain, snow, wind, temperature, etc.)
• Initial version configured for Americans learning Mandarin
• Recognizer supports both English and Mandarin
  • Seamless language switching
  • English queries are translated into Mandarin
  • Mandarin queries are answered in Mandarin
  • User can ask at any time for an English translation of the response
• Uses the Mandarin synthesizer provided by DELTA Electronics for responses and the Envoice concatenative synthesizer for query translations
• System can be configured as telephone-only or as telephone augmented with a Web-based GUI

Illustration of Dialogue Interaction
User: Bo1 Shi4 Dun4 ming2 tian1 hui4 xia4 yu3 ma5? (Is it going to rain tomorrow in Boston?)
System: Tian1 qi4 yu4 bao4 ming2 tian1 Bo1 Shi4 Dun4 mei2 you3 yu3. (The forecast calls for no rain tomorrow in Boston.)
User: (in English) What is the temperature?
System: (translates) Qi4 wen1 shi4 duo1 shao3?
User: (emulates) Qi4 wen1 shi4 duo1 shao3?
System: Bo1 Shi4 Dun4 ming2 tian1 zui4 gao1 qi4 wen1 er4 she4 shi4 du4, ming2 tian1 ye4 jian1, zui4 di1 qi4 wen1 ling2 xia4 wu3 she4 shi4 du4.
User: Could you translate that?
System: In Boston tomorrow, high 2 degrees Celsius; tomorrow night, low -5 degrees Celsius.

Example Dialogue in Weather Domain
• “What is the forecast for San Francisco tomorrow?”
  • System paraphrases the request, then answers
• “Please translate”
  • High-quality synthesis for translation using MIT’s Envoice concatenative synthesis framework
• “Could you repeat that?”: system provides the translation
• User emulates in Mandarin and the system repeats its previous response
• “Will it rain in London?”
• “I’m sorry, I didn’t understand you.”: response given when the system fails to recognize or parse the user query

Video Clip Demo

Assessment
• Phonetic aspects
  • Expand phonological rules to support non-native realizations (e.g., /dh/ → /d/ substitution or schwa insertion)
  • Allow realizations of selected phones from the native language to compete in the recognizer search
• Tonal aspects (Mandarin)
  • Use a tone recognition system (Wang et al., 1998) to score tone productions; highlight the worst-scoring words
  • Tabulate frequencies of tone errors in typed inputs (pinyin)
  • Use phase-vocoder techniques (Tang et al., 2001) to repair the user’s tone productions by replacing the prosodic contour with native speech patterns
• Fluency measures
  • Word-by-word speaking rate (Chung & Seneff, 1999)
  • Percentage of the utterance containing pauses and disfluencies
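The two fluency measures could be approximated from a time-aligned transcription. This sketch is not the method of Chung & Seneff (1999): it assumes a hypothetical alignment format with per-word syllable counts and filler labels, and computes syllables per second for each word plus the percentage of utterance time spent in pauses and filled pauses.

```python
from dataclasses import dataclass

FILLERS = {"<pause>", "<uh>", "<um>"}   # hypothetical non-word labels in the alignment

@dataclass
class Segment:
    label: str
    start: float         # seconds
    end: float           # seconds
    syllables: int = 0   # 0 for fillers

def word_rates(segments):
    """Syllables per second for each spoken word."""
    return [(s.label, s.syllables / (s.end - s.start))
            for s in segments if s.label not in FILLERS and s.end > s.start]

def disfluent_percent(segments):
    """Percentage of utterance time spent in pauses and filled pauses."""
    if not segments:
        return 0.0
    total = segments[-1].end - segments[0].start
    bad = sum(s.end - s.start for s in segments if s.label in FILLERS)
    return 100 * bad / total if total > 0 else 0.0

utterance = [Segment("bo1 shi4 dun4", 0.0, 0.6, 3), Segment("<pause>", 0.6, 1.0),
             Segment("ming2 tian1", 1.0, 1.5, 2), Segment("<uh>", 1.5, 1.7),
             Segment("xia4 yu3", 1.7, 2.0, 2)]
print(word_rates(utterance))
print(round(disfluent_percent(utterance), 1))   # 30.0
```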

Tone analysis: Native vs. Non-Native Mandarin
• Creating pitch contours
  • F0 extracted using the algorithm in (Wang and Seneff, 2000)
  • Statistics of each pitch contour computed over each syllable, without regard to left or right context
• Normalization
  • Duration normalized by sampling at 10% intervals
  • Pitch normalized according to:
• Comparisons based on (Wang et al., 2003)
  • Include normalized F0 value, peak, valley, range, peak position, valley position, falling range, and rising range
• Corpus (from the Defense Language Institute)
  • 2065 utterances from 4 native speakers
  • 4657 utterances from 20 non-native speakers
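Duration normalization by sampling at 10% intervals, and the listed contour features, can be sketched as below. The pitch normalization formula is not reproduced in the transcript, so the input is assumed to be already normalized. The definitions of rising range (peak minus the lowest earlier value) and falling range (peak minus the lowest later value) are assumptions, not the definitions of the cited work.

```python
def resample(contour, points=11):
    """Duration normalization: F0 at 0%, 10%, ..., 100% of the syllable, by linear interpolation."""
    n = len(contour)
    if n == 0:
        raise ValueError("empty contour")
    if n == 1:
        return [float(contour[0])] * points
    out = []
    for k in range(points):
        x = k * (n - 1) / (points - 1)        # fractional frame index
        i = min(int(x), n - 2)
        frac = x - i
        out.append(contour[i] * (1 - frac) + contour[i + 1] * frac)
    return out

def features(contour):
    """Per-syllable contour statistics, ignoring left and right context."""
    f = resample(contour)
    last = len(f) - 1
    p = max(range(len(f)), key=f.__getitem__)   # first peak index
    v = min(range(len(f)), key=f.__getitem__)   # first valley index
    return {
        "mean": sum(f) / len(f),
        "peak": f[p], "valley": f[v], "range": f[p] - f[v],
        "peak_pos": p / last, "valley_pos": v / last,
        "rising_range": f[p] - min(f[:p + 1]),   # assumed definition
        "falling_range": f[p] - min(f[p:]),      # assumed definition
    }

print(features([300, 250, 200, 150, 100]))   # a falling (tone-4-like) contour
```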

Tonal averages over all syllables: Non-Native Example

Capturing Phonological Errors
• Leverage the phonological modeling capabilities of SUMMIT
  • Model typical pronunciation errors explicitly
  • Direct and intuitive mapping from linguistic rules
  • Support both within-language and cross-language substitutions
• Initial experiments completed on Koreans learning English (Kim et al., ICSLP 2004)
  • Phonological rules capture typical problems such as schwa insertion and /dh/ → /d/ confusions
  • Best path in the alignment used to detect errors
  • Verbal feedback given to the student
• Current research applies this to Americans learning Mandarin
  • Build a single recognizer to support both languages
  • Use data-driven approaches to discover the most likely cross-language phone substitution errors
  • Explicitly encode such errors in formal phonological rules
  • A side benefit may be improved recognition of English-accented Mandarin

Detecting Phonological Errors
    {CONSONANT} td {CONSONANT} => [tcl] [t] | tcl t [ax] ;
    // No CCC allowed in Korean
    {} dd {} => dcl [d [ax]] ;
    // A vowel may be inserted after a coda consonant (staccato rhythm)
    {} dh {} => dh | [dcl] d ;
    // Becomes an onset stop as in 'they'. No [dh] in Korean phonemes.
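Rules like these can be imitated by expanding a canonical phone sequence into rule-licensed variants and reporting which rule produced the variant that matches the recognizer's best path. This sketch implements only two simplified rules (/dh/ → /d/, and schwa insertion after a word-final stop), uses plain phone labels without closures, and is not SUMMIT's rule engine; all names are hypothetical.

```python
from itertools import product

STOPS = {"p", "t", "k", "b", "d", "g"}

def options(phone, word_final):
    """Realizations allowed for one canonical phone, each tagged with an error label (None = correct)."""
    opts = [((phone,), None)]
    if phone == "dh":
        opts.append((("d",), "/dh/ realized as /d/"))
    if word_final and phone in STOPS:
        opts.append(((phone, "ax"), f"schwa inserted after final /{phone}/"))
    return opts

def variants(canonical):
    """Yield (phones, error labels) for every rule-licensed pronunciation of a word."""
    per_phone = [options(p, i == len(canonical) - 1) for i, p in enumerate(canonical)]
    for combo in product(*per_phone):
        phones = [ph for realization, _ in combo for ph in realization]
        yield phones, [label for _, label in combo if label]

def detect(canonical, best_path):
    """Error labels for the variant matching the recognizer's best path, or None if none match."""
    for phones, labels in variants(canonical):
        if phones == best_path:
            return labels
    return None

print(detect(["dh", "ey"], ["d", "ey"]))                  # ['/dh/ realized as /d/']
print(detect(["b", "eh", "d"], ["b", "eh", "d", "ax"]))   # ['schwa inserted after final /d/']
```

An empty list means the canonical pronunciation was matched; None means the best path lies outside what the rules license.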

Future Plans
• Develop tools to rapidly port to new domains and languages
  • Automatic grammar induction
  • Generic dialogue modeling
  • Simulated dialogue interactions
• Develop various scoring algorithms for quality assessment of the student’s speech
• Develop high-quality synthesis capability for Mandarin translations, for multiple domains of knowledge
• Collect and transcribe data from language learners and evaluate both the system and the students
  • Begin with the weather domain, our most mature system
  • Extend to other domains once they are better developed
• Refine all aspects of the systems based on the collected data