Introduction to MPI

Class CS 775/875, Spring 2011
Introduction to MPI
Amit H. Kumar, OCCS, Old Dominion University

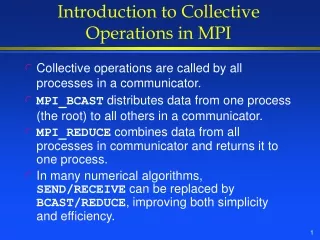

Take Away
• Motivation
• Basic differences between MPI and RPC
• Parallel Computer Memory Architectures
• Parallel Programming Models
• MPI Definition
• MPI Examples

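Motivation: Work Queues
• Work queues allow threads from one task to send processing work to another task in a decoupled fashion: producers (P) add work items to a shared queue and consumers (C) remove them, so neither side needs to know about the other or run in lockstep with it.
[Figure: producers (P) and consumers (C) connected through a shared queue]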
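A minimal sketch of the work-queue pattern, written in Python with only the standard library (`queue`, `threading`). The function names and the use of `None` as a stop marker are illustrative choices, not part of any MPI API. The producer and the consumers never call each other; they only touch the shared, bounded queue.

```python
import queue
import threading

STOP = None  # illustrative "no more work" marker


def producer(q: queue.Queue, items, n_consumers: int) -> None:
    """Put every work item on the queue, then one STOP marker per consumer."""
    for item in items:
        q.put(item)  # blocks while the bounded queue is full
    for _ in range(n_consumers):
        q.put(STOP)


def consumer(q: queue.Queue, results: list, lock: threading.Lock) -> None:
    """Take items off the queue and process them until a STOP marker arrives."""
    while True:
        item = q.get()
        if item is STOP:
            break
        with lock:
            results.append(item * item)  # the "processing work": squaring


def run_work_queue(items, n_consumers: int = 3) -> list:
    q: queue.Queue = queue.Queue(maxsize=4)
    results: list = []
    lock = threading.Lock()
    consumers = [
        threading.Thread(target=consumer, args=(q, results, lock))
        for _ in range(n_consumers)
    ]
    for t in consumers:
        t.start()
    producer(q, items, n_consumers)  # the main thread acts as the producer
    for t in consumers:
        t.join()
    return sorted(results)


if __name__ == "__main__":
    print(run_work_queue(range(6)))  # [0, 1, 4, 9, 16, 25]
```

The bounded queue is a design choice: a fast producer blocks instead of piling up unlimited work in memory.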

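Motivation …
• Make this work in a distributed setting, where producers and consumers run as separate processes connected through a shared network queue.
[Figure: producers (P) and consumers (C) connected through a shared network queue]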
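To move the pattern toward a distributed setting, this sketch runs the consumers as separate operating-system processes that share no memory with the producer. A `multiprocessing.Queue` stands in for the network queue; that is an assumption for illustration, since a real distributed queue would cross machines (for example, via MPI messages). It also assumes a POSIX system, because it requests the `fork` start method explicitly.

```python
import multiprocessing as mp


def worker(tasks, results) -> None:
    # Runs in its own process with its own memory: each work item arrives
    # as a serialized message, never as a shared object.
    for item in iter(tasks.get, None):  # None is the illustrative stop marker
        results.put((item, item * item))


def run_distributed(items, n_workers: int = 2) -> dict:
    # Assumption: a POSIX system, so the "fork" start method is available.
    ctx = mp.get_context("fork")
    tasks, results = ctx.Queue(), ctx.Queue()
    procs = [ctx.Process(target=worker, args=(tasks, results)) for _ in range(n_workers)]
    for p in procs:
        p.start()
    items = list(items)
    for item in items:
        tasks.put(item)
    for _ in procs:
        tasks.put(None)  # one stop marker per worker
    # Drain the results before joining, so no worker is left waiting to flush them.
    out = dict(results.get() for _ in items)
    for p in procs:
        p.join()
    return out


if __name__ == "__main__":
    print(sorted(run_distributed(range(5)).items()))
    # [(0, 0), (1, 1), (2, 4), (3, 9), (4, 16)]
```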

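MPI vs. RPC
• In simple terms, both are methods of Inter-Process Communication (IPC).
• Both fall between the Transport Layer and the Application Layer in the OSI model.
• They differ in abstraction: RPC presents a remote interaction as a local procedure call (a request followed by a reply), whereas MPI has cooperating processes explicitly send and receive messages.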
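The contrast between the two styles can be sketched in Python with a `multiprocessing.Pipe` and a server thread. Both are illustrative stand-ins, not MPI and not an RPC framework. `remote_square` is a hypothetical RPC-style stub that hides one request and one reply behind a function call; the message-passing half sends several requests explicitly before collecting the replies.

```python
import threading
from multiprocessing import Pipe


def server(conn) -> None:
    """Answer ("square", x) requests until the client closes its end."""
    while True:
        try:
            op, arg = conn.recv()
        except EOFError:  # the client closed the connection
            break
        conn.send(arg * arg if op == "square" else None)
    conn.close()


def remote_square(conn, x):
    """Hypothetical RPC-style stub: one request and one reply behind a call."""
    conn.send(("square", x))
    return conn.recv()


def demo():
    client, srv = Pipe()  # a duplex connection between two endpoints
    t = threading.Thread(target=server, args=(srv,))
    t.start()

    # RPC style: the remote work looks like an ordinary function call.
    rpc_result = remote_square(client, 7)

    # Message-passing style: explicit sends first, explicit receives later.
    for x in (1, 2, 3):
        client.send(("square", x))
    mp_results = [client.recv() for _ in range(3)]

    client.close()
    t.join()
    return rpc_result, mp_results


if __name__ == "__main__":
    print(demo())  # (49, [1, 4, 9])
```

An RPC system typically generates stubs like `remote_square` for you; MPI instead leaves the sends, the receives, and their ordering in the programmer's hands.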

Parallel Computing One-Liner
• Ultimately, parallel computing is an attempt to maximize the infinite but seemingly scarce commodity called time.

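Parallel Computer Memory Architectures
• Shared Memory: shared memory parallel computers vary widely, but generally have in common the ability for all processors to access all memory as a global address space.
  • Uniform Memory Access (UMA): every processor has equal access, with equal access time, to all memory. Example: symmetric multiprocessor (SMP) machines.
  • Non-Uniform Memory Access (NUMA): access time depends on where the memory sits, and access across the link is slower. Example: two or more SMPs physically linked.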
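A small Python sketch of the shared-memory idea, using threads as stand-ins for processors. Every thread updates the same `counter` because they all live in one address space, which is also why each update has to be protected by a lock. The names are hypothetical, and CPython threads illustrate the memory model here, not a speedup.

```python
import threading

counter = 0  # lives in the one address space that every thread can see
counter_lock = threading.Lock()


def add_many(n: int) -> None:
    global counter
    for _ in range(n):
        with counter_lock:  # all threads write the same memory, so serialize updates
            counter += 1


def run_shared(n_threads: int = 4, n: int = 10_000) -> int:
    global counter
    counter = 0
    threads = [threading.Thread(target=add_many, args=(n,)) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


if __name__ == "__main__":
    print(run_shared())  # 40000
```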

Parallel Computer Memory Architectures…
• Distributed Memory:
  • Like shared memory systems, distributed memory systems vary widely, but they share a common characteristic: they require a communication network to connect inter-processor memory.
  • Memory addresses in one processor do not map to another processor, so there is no concept of a global address space across all processors.
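Unlike threads, separate processes behave like distributed-memory nodes. In this sketch, which assumes a POSIX system with `fork`, the child changes its own copy of `data`, the parent's copy stays untouched, and the only way to get the change back is to send it as a message over a pipe. The function names are hypothetical.

```python
import multiprocessing as mp


def child(data: list, conn) -> None:
    data.append("changed in child")  # changes the child's private copy only
    conn.send(data)  # sharing the change requires an explicit message
    conn.close()


def run_distributed_memory():
    # Assumption: a POSIX system, so the "fork" start method is available.
    ctx = mp.get_context("fork")
    data = ["original"]
    parent_end, child_end = ctx.Pipe()
    p = ctx.Process(target=child, args=(data, child_end))
    p.start()
    received = parent_end.recv()  # the child's version, delivered as a message
    p.join()
    return data, received


if __name__ == "__main__":
    print(run_distributed_memory())
    # (['original'], ['original', 'changed in child'])
```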

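Parallel Computer Memory Architectures…
• Hybrid Distributed-Shared Memory:
  • The largest and fastest computers in the world today employ both shared and distributed memory architectures: each node is a shared-memory machine (for example, an SMP), and a network connects the nodes' separate memories.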
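A hybrid layout can be sketched by mixing both kinds of memory in one program: each hypothetical "node" is a process that runs a thread pool over its own memory, and the nodes combine their partial results only by sending messages. The round-robin chunking, the node count, and the `fork` start method (POSIX only) are assumptions for illustration.

```python
import multiprocessing as mp
from concurrent.futures import ThreadPoolExecutor


def node(chunk: list, results) -> None:
    # Within a node: threads share this process's memory (the shared-memory part).
    with ThreadPoolExecutor(max_workers=2) as pool:
        partial = sum(pool.map(lambda x: x * x, chunk))
    # Between nodes: no shared memory, so the partial sum travels as a message.
    results.put(partial)


def hybrid_sum_of_squares(values, n_nodes: int = 2) -> int:
    # Assumption: a POSIX system, so the "fork" start method is available.
    ctx = mp.get_context("fork")
    results = ctx.Queue()
    values = list(values)
    chunks = [values[i::n_nodes] for i in range(n_nodes)]  # round-robin split
    procs = [ctx.Process(target=node, args=(chunk, results)) for chunk in chunks]
    for p in procs:
        p.start()
    total = sum(results.get() for _ in procs)  # one message per node
    for p in procs:
        p.join()
    return total


if __name__ == "__main__":
    print(hybrid_sum_of_squares(range(10)))  # 285
```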

Parallel Programming Models
Parallel programming models exist as an abstraction above hardware and memory architectures. Several parallel programming models are in common use:
• Shared Memory: tasks share a common address space, which they read and write asynchronously.
• Threads: a single process can have multiple, concurrent execution paths. Example implementations: POSIX threads and OpenMP.
• Message Passing: tasks exchange data through communications, by sending and receiving messages. Examples: the MPI and MPI-2 specifications.
• Data Parallel: tasks perform the same operation on their own partition of the work. Example: High Performance Fortran (HPF), which supports data-parallel constructs.
• Hybrid: a combination of two or more of the above models, such as MPI combined with OpenMP.
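The data-parallel model can be sketched with `concurrent.futures.ThreadPoolExecutor`: the data is split into contiguous partitions and every task applies the same function to its own partition. `partition` and `scale` are hypothetical helper names; with CPython threads this shows the structure of the model rather than a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(data: list, n_parts: int) -> list:
    """Split data into n_parts contiguous chunks whose sizes differ by at most one."""
    size, extra = divmod(len(data), n_parts)
    chunks, start = [], 0
    for i in range(n_parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def scale(chunk: list, factor: int = 2) -> list:
    # The same operation that every task applies to its own partition.
    return [x * factor for x in chunk]


def data_parallel_scale(data: list, n_tasks: int = 3) -> list:
    with ThreadPoolExecutor(max_workers=n_tasks) as pool:
        # map keeps the partitions in order, so the pieces reassemble correctly.
        return [x for part in pool.map(scale, partition(data, n_tasks)) for x in part]


if __name__ == "__main__":
    print(partition(list(range(10)), 3))  # [[0, 1, 2, 3], [4, 5, 6], [7, 8, 9]]
```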

Define MPI
• MPI = Message Passing Interface
• In short:
  • MPI is a specification for the developers and users of message passing libraries. By itself, it is NOT a library, but rather the specification of what such a library should be.
  • A few implementations of MPI: MPICH, MPICH2, MVAPICH, MVAPICH2…

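MPI uses objects called communicators and groups to define which collection of processes may communicate with each other. Most MPI routines take a communicator as an argument; the predefined communicator MPI_COMM_WORLD contains all of the processes.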
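Communicators can also be divided into smaller ones. This sketch simulates, on one machine and without MPI, the grouping rule of `MPI_Comm_split`: processes that pass the same color end up in the same new communicator, ordered by the key they pass, with ties broken by their rank in the parent communicator. The helper name `comm_split` is hypothetical, and the sketch ignores the special `MPI_UNDEFINED` color.

```python
from collections import defaultdict


def comm_split(colors: list, keys: list) -> dict:
    """Simulate the grouping rule of MPI_Comm_split (hypothetical helper).

    colors[r] and keys[r] are the values passed by the process whose rank in
    the parent communicator is r. Returns {color: [parent ranks]}; the index
    of a parent rank in its list is that process's rank in the new communicator.
    """
    groups = defaultdict(list)
    for rank, color in enumerate(colors):
        groups[color].append(rank)
    # Order by key, breaking ties by the rank in the parent communicator.
    return {color: sorted(members, key=lambda r: (keys[r], r))
            for color, members in groups.items()}


if __name__ == "__main__":
    split = comm_split([r % 2 for r in range(6)], [0] * 6)
    print(split)  # {0: [0, 2, 4], 1: [1, 3, 5]}
    print(split[1].index(3))  # 1: parent rank 3 is rank 1 in the "odd" communicator
```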

Rank
• Within a communicator, every process has its own unique integer identifier, assigned by the system when the process initializes. A rank is sometimes also called a "task ID". Ranks are contiguous and begin at zero.
• Ranks are used by the programmer to specify the source and destination of messages, and are often used conditionally by the application to control program execution (if rank = 0 do this / if rank = 1 do that).
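The rank-based control flow described above can be simulated with threads. `SimComm` and `make_world` are hypothetical stand-ins for an MPI communicator: each thread gets its own view with a `rank` and a `size`, `send` takes a destination rank, and `recv` takes a source rank. Every thread runs the same `program`, and the rank decides whether it hands out work (rank 0) or does work (all other ranks).

```python
import queue
import threading


class SimComm:
    """Hypothetical, thread-based stand-in for one process's view of a communicator."""

    def __init__(self, rank: int, size: int, channels: dict):
        self.rank, self.size, self._channels = rank, size, channels

    def send(self, obj, dest: int) -> None:
        self._channels[(self.rank, dest)].put(obj)

    def recv(self, source: int):
        # Blocks until a message from `source` arrives; per-pair FIFOs keep order.
        return self._channels[(source, self.rank)].get()


def make_world(size: int) -> list:
    """Create one SimComm per rank, with a FIFO for every (source, dest) pair."""
    channels = {(s, d): queue.Queue() for s in range(size) for d in range(size)}
    return [SimComm(rank, size, channels) for rank in range(size)]


def program(comm: SimComm, out: dict) -> None:
    # Every "process" runs this same function; its rank decides its role.
    if comm.rank == 0:
        for dest in range(1, comm.size):
            comm.send(dest * 10, dest=dest)  # hand out one work item per rank
        out["total"] = sum(comm.recv(source=s) for s in range(1, comm.size))
    else:
        work = comm.recv(source=0)
        comm.send(work + comm.rank, dest=0)  # reply to rank 0


def run(size: int = 4) -> int:
    out: dict = {}
    threads = [threading.Thread(target=program, args=(c, out)) for c in make_world(size)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return out["total"]


if __name__ == "__main__":
    print(run(4))  # ranks 1..3 receive 10, 20, 30 and reply 11, 22, 33 -> 66
```

With a real MPI binding such as mpi4py, the same program would get its rank from `MPI.COMM_WORLD.Get_rank()` and use `comm.send(obj, dest=...)` and `comm.recv(source=...)`, with one process per rank started by the MPI launcher instead of one thread per rank.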

References
• References for the content in this presentation are available upon request.
• MPICH2 download: http://www.mcs.anl.gov/research/projects/mpich2/downloads/index.php?s=downloads
• MVAPICH2 download: http://mvapich.cse.ohio-state.edu/download/mvapich2/