CUDA - Based Sequence Alignment


CUDA - Based Sequence Alignment By: Galal and Sameh 2013

Outline
• Explore the architecture of the GPU
• Parallel programming using CUDA
• Sequence alignment algorithms
  • Needleman-Wunsch algorithm
  • Smith-Waterman algorithm
• Project plan

Graphics Processing Units (GPU)
• A GPU is a many-core processor optimized for graphics workloads
• Example: the NVIDIA GeForce GTX 280 GPU has 240 cores, each in-order, single-instruction, and heavily multithreaded
• Every 8 cores share control logic and an instruction cache
• GPU memory has high bandwidth in comparison with the CPU (about 10 to 1)
• GPUs and CPUs are combined because a CPU consists of a few cores optimized for serial processing, while a GPU consists of many cores (possibly thousands) optimized for parallel processing
Figure: CPU (multiple cores) vs. GPU (thousands of cores)

GPU Architecture
• Device architecture
• Many Streaming Multiprocessors (SMs)
• Each SM has up to 8 processors
• Has different types of memory
Ref: 3

Compute Unified Device Architecture (CUDA)
• CUDA (Compute Unified Device Architecture) is an extension of C/C++
• Supports scalable multi-threaded programs for CUDA-enabled GPUs
• Facilitates heterogeneous computing: CPU + GPU

CUDA Program
• Kernels:
  • Parallel portions of an application are executed on the device (GPU)
  • Invoked as a set of concurrently executing threads
• Threads are organized in a hierarchy consisting of thread blocks and grids
  • Grid: set of independent thread blocks
  • Thread block: set of concurrent threads
• Each thread has a unique ID (threadIdx, blockIdx) ∈ {0, ..., dimBlock-1} × {0, ..., dimGrid-1}
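The thread ID pair above can be flattened into a single index per thread. The following is a minimal Python sketch of that arithmetic for a one-dimensional launch; it only simulates the index space on the CPU, and the function name `global_thread_ids` is hypothetical, not part of CUDA.

```python
def global_thread_ids(dim_grid, dim_block):
    """Enumerate (blockIdx, threadIdx, global id) for a simulated 1-D launch."""
    ids = []
    for block_idx in range(dim_grid):        # blockIdx in {0, ..., dimGrid-1}
        for thread_idx in range(dim_block):  # threadIdx in {0, ..., dimBlock-1}
            # Same arithmetic a kernel would use:
            # blockIdx.x * blockDim.x + threadIdx.x
            global_id = block_idx * dim_block + thread_idx
            ids.append((block_idx, thread_idx, global_id))
    return ids


ids = global_thread_ids(dim_grid=3, dim_block=4)
print(ids[5])  # thread 1 of block 1
```

Each (blockIdx, threadIdx) pair maps to a distinct global index, which is what lets every thread pick its own piece of the data.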

CUDA Programming Model: CPU (host) and GPU (device)

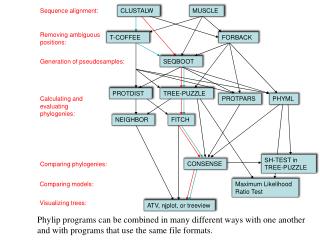

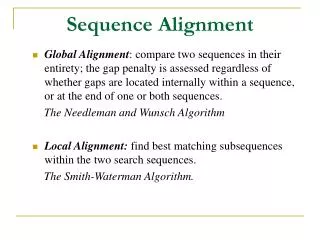

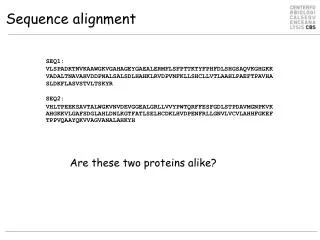

Sequence Alignment
• In bioinformatics, a sequence alignment is a way of arranging sequences of DNA, RNA, or protein to identify regions of similarity that may be a consequence of functional, structural, or evolutionary relationships between the sequences.
• There are two main types of sequence alignment:
  • Global: aligns the sequences over their whole length; used when the two sequences have almost the same size.
  • Local: aligns only the regions of high similarity; used to compare two sequences of different sizes.

Local alignment / Global alignment
Global alignment: the sequences are aligned over their whole length
  G G C T G A C C A C C - T T
  |     |   | |     | |   |
  G A - T C A C T T C C A T G
Local alignment: only the regions of high similarity are aligned
  G A C C A C C T T
  | |   | | |   | |
  G A T C A C - T T
(The two rows in each alignment are Sequence A and Sequence B.)
• Optimal global pair-wise alignment: Needleman and Wunsch, 1970
• Optimal local pair-wise alignment: Smith and Waterman, 1981

Why?
• Sequence alignment is a computationally intensive task whose cost grows in proportion to the database size.
• The problem has been addressed using iterative methods with PSSMs (Position-Specific Scoring Matrices) instead of Hamming distance, for simplicity.
• There have been some remarkable efforts to reduce execution time on traditional computing hardware using heuristic techniques such as BLAST and FASTA.
• High computing capabilities are nowadays handy and affordable. For instance, my laptop has some GPU power (Nvidia® Tesla GeForce 315M).

Sequence Alignment
• The Needleman-Wunsch (NW) algorithm has three main steps:
  • Initialization
  • Fill
  • Trace back
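The three steps can be sketched in a few lines of sequential Python. This is a minimal illustration, not the implementation from any of the cited papers; the scoring values (match = 1, mismatch = -1, gap = -1) and the function name `needleman_wunsch` are assumptions chosen for the example.

```python
def needleman_wunsch(a, b, match=1, mismatch=-1, gap=-1):
    """Global alignment of a and b; returns (score, aligned_a, aligned_b)."""
    n, m = len(a), len(b)

    # 1) Initialization: first row and column hold cumulative gap penalties.
    H = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        H[i][0] = i * gap
    for j in range(1, m + 1):
        H[0][j] = j * gap

    # 2) Fill: each cell depends on its diagonal, left, and upper neighbours.
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            s = match if a[i - 1] == b[j - 1] else mismatch
            H[i][j] = max(H[i - 1][j - 1] + s,  # diagonal
                          H[i][j - 1] + gap,    # left
                          H[i - 1][j] + gap)    # up

    # 3) Trace back: walk from the bottom-right cell to the top-left cell.
    out_a, out_b = [], []
    i, j = n, m
    while i > 0 or j > 0:
        s = match if i > 0 and j > 0 and a[i - 1] == b[j - 1] else mismatch
        if i > 0 and j > 0 and H[i][j] == H[i - 1][j - 1] + s:
            out_a.append(a[i - 1]); out_b.append(b[j - 1]); i -= 1; j -= 1
        elif i > 0 and H[i][j] == H[i - 1][j] + gap:
            out_a.append(a[i - 1]); out_b.append("-"); i -= 1
        else:
            out_a.append("-"); out_b.append(b[j - 1]); j -= 1
    return H[n][m], "".join(reversed(out_a)), "".join(reversed(out_b))


score, row_a, row_b = needleman_wunsch("ACGT", "AGT")
print(score, row_a, row_b)  # 2 ACGT A-GT
```

The "Fill" loop touches every cell of the (n+1) x (m+1) matrix, which is why it dominates the running time and is the usual target for parallelization.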

Siriwardena and Ranasinghe
Paper: Accelerating Global Sequence Alignment Using CUDA Compatible Multi-core GPU
• Their motivations were:
  • Global sequence alignment is more resource-consuming than local alignment.
  • Very few studies have been conducted on global sequence alignment.
• Their research goal is to evaluate different levels of memory access strategies and different block sizes.
• They parallelized the "Fill" step only (the computationally intensive step).

Siriwardena and Ranasinghe
• Despite the data dependency in the "Fill" step, the algorithm shows a pattern.
• Scores in the anti-diagonal locations are independent of each other.
• Memory access has been planned to minimize communication between device and host main memories.
  • Intra-block
  • Inter-block
• Implementation:
  • Without blocking strategy:
    • The host is responsible for copying the data to and from device memory
    • The device only performs the computation that decides the score of the current cell
  • Blocking strategy:
    • Global memory based strategy (data is copied to main memory; each SP loads a block into shared memory and, once finished, copies it back to global memory, with a barrier implementation)
    • Shared memory based strategy (explicit synchronization)
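The anti-diagonal pattern can be checked with a small sequential sketch: visiting the matrix one anti-diagonal at a time gives the same result as the usual row-by-row fill, because every cell on diagonal d reads only cells from diagonals d-1 and d-2. The code below is a CPU-only Python illustration under assumed scoring values (match = 1, mismatch = -1, gap = -1); the function names are hypothetical and the inner loop stands in for what would be one GPU thread per cell.

```python
def init_matrix(n, m, gap=-1):
    """Score matrix with the first row and column set to gap penalties."""
    H = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        H[i][0] = i * gap
    for j in range(1, m + 1):
        H[0][j] = j * gap
    return H


def cell(H, a, b, i, j, match=1, mismatch=-1, gap=-1):
    """Score of cell (i, j) from its diagonal, left, and upper neighbours."""
    s = match if a[i - 1] == b[j - 1] else mismatch
    return max(H[i - 1][j - 1] + s, H[i][j - 1] + gap, H[i - 1][j] + gap)


def fill_row_major(a, b):
    n, m = len(a), len(b)
    H = init_matrix(n, m)
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            H[i][j] = cell(H, a, b, i, j)
    return H


def fill_by_antidiagonals(a, b):
    n, m = len(a), len(b)
    H = init_matrix(n, m)
    for d in range(2, n + m + 1):  # d = i + j identifies one anti-diagonal
        # All cells in this inner loop are independent of each other,
        # so on a GPU each could be computed by a separate thread.
        for i in range(max(1, d - m), min(n, d - 1) + 1):
            j = d - i
            H[i][j] = cell(H, a, b, i, j)
    return H


same = fill_by_antidiagonals("GATTACA", "GCATGCU") == fill_row_major("GATTACA", "GCATGCU")
print(same)  # True
```

The amount of available parallelism varies along the sweep: diagonals near the corners hold only a few cells, while the middle diagonals hold up to min(n, m) cells.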

Siriwardena and Ranasinghe: Evaluation
• Specifications (CPU):
  • 2.4 GHz Intel quad-core processor
  • 3 GB RAM
  • Linux OS
• Specifications (GPU):
  • Nvidia GeForce 8800 GT GPU
  • 512 MB graphics memory
  • 114 cores and 16 KB of shared memory per block
  • CUDA version 2.3

Che et al.
Paper: A Performance Study of General-purpose Applications on Graphics Processors Using CUDA
• They investigated the performance of GPUs in different application fields that need more speed.
• The GPU implementation was compared with single-core and multi-core CPU implementations (CUDA vs. OpenMP, CUDA vs. serial implementation).
• They reported that the multi-core CPU implementation outperformed the GPU implementation (CUDA 1.1).

Che et al.
• Only the "Fill" step was parallelized.
• They identified two levels of parallelism:
  • Thread-level parallelism
  • Block-level parallelism
• They reported a 2.9x speedup of the CUDA implementation over the single-CPU implementation.

Zheng et al.
Paper: Accelerating Biological Sequence Alignment Algorithm on GPU with CUDA
• The Smith-Waterman algorithm is used
• Computing the scoring matrix is parallelized
• 32 threads are used to process the sub-matrices in parallel
• Threads in one warp are synchronized
• Maximum score within a block -> shared memory
• Global maximum score -> global memory
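The last two bullets describe a two-level maximum: each block first finds its own best score, then the per-block results are combined into one global best. A minimal Python sketch of that idea follows; it runs sequentially on the CPU, the block size and score values are made up for illustration, and it is not taken from the paper's code.

```python
def block_maxima(scores, block_size):
    """Per-block maxima; on a GPU each would be kept in shared memory."""
    return [max(scores[k:k + block_size])
            for k in range(0, len(scores), block_size)]


def global_maximum(scores, block_size):
    """Combine the per-block maxima; on a GPU this would be in global memory."""
    return max(block_maxima(scores, block_size))


scores = [3, 0, 7, 2, 5, 9, 1, 4]  # hypothetical cell scores
print(block_maxima(scores, 4))     # [7, 9]
print(global_maximum(scores, 4))   # 9
```

Splitting the search this way keeps most comparisons in fast per-block storage and sends only one value per block to the slower shared level.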

Zheng et al.
• NVIDIA GeForce 9600 GT
• 8 SMs with 8 SPs each
• 8192 registers
• 16 KB shared memory
• 64 KB constant memory in one SM
• 768 MB global memory
• 256 threads to be executed concurrently

Zheng et al.
• Swiss-Prot protein sequence database
• Query sequence length: 64 to 2048 amino acids
• Speedup: 19x compared with the CPU implementation

CUDASW++
Paper: CUDASW++: Optimizing Smith-Waterman Sequence Database Searches for CUDA-enabled Graphics Processing Units
• Based on the Smith-Waterman algorithm
• Inter-task and intra-task parallelization
• Inter-task:
  • Each task is assigned to exactly one thread
  • dimBlock tasks are performed in parallel by different threads in a thread block
Figure: scoring matrix (axes: subject, query)

CUDASW++
• Intra-task parallelization:
  • Each task is assigned to one thread block
  • All dimBlock threads in the thread block cooperate to perform the task in parallel
  • Exploits the parallel characteristics of cells in the minor diagonals
Figure: scoring matrix (axes: subject, query)
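The difference between the two schemes is in how work is mapped to threads. The Python sketch below shows one possible mapping for each; the function names and the strided distribution of diagonal cells are assumptions made for illustration, not the scheme documented in the CUDASW++ paper.

```python
def inter_task_assignment(num_tasks, dim_block):
    """Inter-task: one alignment per thread. Returns task -> (block, thread)."""
    return {t: (t // dim_block, t % dim_block) for t in range(num_tasks)}


def intra_task_assignment(diagonal_length, dim_block):
    """Intra-task: one alignment per block. The dim_block threads share the
    cells of one minor diagonal. Returns thread -> list of cell positions."""
    return {t: list(range(t, diagonal_length, dim_block))
            for t in range(dim_block)}


print(inter_task_assignment(num_tasks=6, dim_block=4)[5])         # (1, 1)
print(intra_task_assignment(diagonal_length=10, dim_block=4)[1])  # [1, 5, 9]
```

Inter-task mapping keeps every thread busy on its own sequence pair, while intra-task mapping spreads a single long alignment across a whole block.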

CUDASW++
• Database: Swiss-Prot release 56.6
• Number of query sequences: 25
• Query length: 144 to 5,478
• Single-GPU: NVIDIA GeForce GTX 280 (30 SMs, 240 cores, 1 GB RAM)
• Multi-GPU: NVIDIA GeForce GTX 295 (60 SMs, 480 cores, 1.8 GB RAM)

Our Plan
• Develop and execute the two main algorithms using CUDA as well as OpenMP.
• Study different parallelization scenarios and examine different memory strategies.
• Parallelize the "Fill" step as well as the "Trace back" step, if applicable.
• Performance criteria:
  • The GPU implementation will be compared against a sequential (CPU) implementation.
  • The GPU performance will be compared with previous work that parallelized only the "Fill" step.
  • OpenMP vs. CUDA will be investigated.

Parallelism possibilities
• Block-level parallelism: different block sizes will be evaluated (such as 4x4, 8x8, 16x16, 32x32); the parallel parts will be along the anti-diagonal directions.
• Another possibility is to split the data into column-wise portions and carry out execution in the row-wise direction.
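For the block-level option, the anti-diagonal idea applies to whole tiles rather than single cells: tiles on the same tile anti-diagonal have no dependencies on each other and can be processed together. The Python sketch below lists that schedule; the function name `tile_wavefronts` and the example sizes are hypothetical.

```python
import math


def tile_wavefronts(n_rows, n_cols, tile):
    """Group tile coordinates (tile_row, tile_col) into waves.
    Tiles within one wave lie on the same anti-diagonal and are independent."""
    tile_rows = math.ceil(n_rows / tile)
    tile_cols = math.ceil(n_cols / tile)
    waves = []
    for d in range(tile_rows + tile_cols - 1):  # d = tile_row + tile_col
        first = max(0, d - tile_cols + 1)
        last = min(tile_rows - 1, d)
        waves.append([(r, d - r) for r in range(first, last + 1)])
    return waves


for wave in tile_wavefronts(n_rows=8, n_cols=8, tile=4):
    print(wave)
# [(0, 0)]
# [(0, 1), (1, 0)]
# [(1, 1)]
```

Smaller tiles give more tiles per wave but more synchronization points, which is the trade-off the planned block-size comparison would measure.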

References
1. GPU Gems 2: Chapter 30 (https://developer.nvidia.com/content/gpu-gems-2-chapter-30-geforce-6-series-gpu-architecture)
2. What is GPU computing (http://www.nvidia.com/object/what-is-gpu-computing.html)
3. D. Kirk and W. Hwu, Programming Massively Parallel Processors: A Hands-on Approach. Burlington, Massachusetts: Morgan Kaufmann Elsevier, 2010.
4. T. R. P. Siriwardena and D. N. Ranasinghe, "Accelerating Global Sequence Alignment Using CUDA Compatible Multi-core GPU," in 5th International Conference on Information and Automation for Sustainability (ICIAFs), pp. 201–206, 2010.
5. S. Che, M. Boyer, J. Meng, D. Tarjan, J. W. Sheaffer, and K. Skadron, "A Performance Study of General-purpose Applications on Graphics Processors Using CUDA," J. Parallel Distrib. Comput., vol. 68, no. 10, pp. 1370–1380, Oct. 2008.
6. F. Zheng et al., "Accelerating Biological Sequence Alignment Algorithm on GPU with CUDA," IEEE International Conference on Computational and Information Sciences (ICCIS), 2011.
7. Y. Liu, D. L. Maskell, and B. Schmidt, "CUDASW++: Optimizing Smith-Waterman Sequence Database Searches for CUDA-enabled Graphics Processing Units," BMC Research Notes, vol. 2, no. 1, p. 73, 2009.

Needleman-Wunsch vs. Smith-Waterman
• Needleman-Wunsch
  • Global sequence alignment
  • Matches the whole sequence
  • No gap penalty required
  • $H_{i,j} = \max\{H_{i-1,j-1} + s,\; H_{i,j-1} + e,\; H_{i-1,j} + e\}$ (the diagonal, left, and upper neighbours)
  • Score cannot decrease between two cells of a pathway
• Smith-Waterman
  • Local sequence alignment
  • Part of the sequence could be matched
  • Requires a gap penalty to work effectively
  • Score can increase, decrease, or stay level between two cells of a pathway
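For contrast with the global recurrence, a local (Smith-Waterman style) fill clamps every cell at zero and reports the best cell anywhere in the matrix. The sketch below is a minimal Python version with a linear gap penalty; the scoring values (match = 2, mismatch = -1, gap = -1) and the function name are assumptions for this example only.

```python
def smith_waterman_score(a, b, match=2, mismatch=-1, gap=-1):
    """Best local alignment score between a and b (linear gap penalty)."""
    n, m = len(a), len(b)
    # First row and column stay at zero: a local alignment may start anywhere.
    H = [[0] * (m + 1) for _ in range(n + 1)]
    best = 0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            s = match if a[i - 1] == b[j - 1] else mismatch
            H[i][j] = max(0,                    # restart the alignment here
                          H[i - 1][j - 1] + s,  # diagonal
                          H[i][j - 1] + gap,    # left
                          H[i - 1][j] + gap)    # up
            best = max(best, H[i][j])           # the optimum can be in any cell
    return best


print(smith_waterman_score("TTTACGTTT", "GGACGGG"))  # 6, from the shared "ACG"
```

Because the dependency pattern (diagonal, left, up) is the same as in the global case, the same anti-diagonal parallelization applies to both algorithms.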

GPU Properties
TotalMemory: 6.4420e+09
FreeMemory: 6.3484e+09
MultiprocessorCount: 16
ClockRateKHz: 1301000
ComputeMode: 'Default'
GPUOverlapsTransfers: 1
KernelExecutionTimeout: 0
CanMapHostMemory: 1
DeviceSupported: 1
DeviceSelected: 1
Name: 'Quadro 7000'
Index: 1
ComputeCapability: '2.0'
SupportsDouble: 1
DriverVersion: 5
MaxThreadsPerBlock: 1024
MaxShmemPerBlock: 49152
MaxThreadBlockSize: [1024 1024 64]
MaxGridSize: [65535 65535]
SIMDWidth: 32