Parameterized Unit Testing: Principles, Techniques, and Applications in Practice

Tutorial by Nikolai Tillmann, Peli de Halleux, Wolfram Schulte (Microsoft Research) and Tao Xie (North Carolina State University).


Outline • Unit Testing • Parameterized Unit Testing • Input Generation with Pex • Patterns for Parameterized Unit Testing • Parameterized Models • Wrapping up

About the tool… • We will use the tool Pex as part of the exercises • To install it, you need • Windows XP or Vista • .NET 2.0 • Download Pex from http://research.microsoft.com/en-us/projects/pex/downloads.aspx • Two variants: • pex.devlabs.msi: requires Visual Studio 2008 Team System • pex.academic.msi: does not require Visual Studio, but is for non-commercial use only • To run the samples, you need VS Team System

Unit Testing Introduction

Unit Testing • A unit test is a small program with assertions • Test a single (small) unit of code

    void AddTest() {
        var list = new List<int>();
        list.Add(3);
        Assert.AreEqual(1, list.Count);
    }

Unit Testing: Good/Bad • Good: • Design and specification by example • Documentation • Short feedback loop • Code coverage and regression testing • Extensive tool support for test execution and management • Bad: • Happy path only • Hidden integration tests • Touch the database or web services, require multiple machines, etc. • New code with old tests • Redundant tests

Unit Testing: Measuring Quality • Coverage: Are all parts of the program exercised? • statements • basic blocks • explicit/implicit branches • … • Assertions: Does the program do the right thing? • test oracle Experience: • High coverage alone, or a large number of assertions alone, is not a good quality indicator. • Only both together are!

Exercise: “TrimEnd” (Unit Testing) Goal: Implement and test TrimEnd: • Open Visual Studio • Create CodeUnderTestProject, and implement TrimEnd • File -> New -> Project -> Visual C# -> Class Library • Add new StringExtensions class • Implement TrimEnd • Create TestProject, and test TrimEnd • File -> New -> Project -> C# -> Test -> Test Project • Delete ManualTest.mht, UnitTest1.cs, … • Add new StringExtensionsTest class • Implement TrimEndTest • Execute test • Test -> Windows -> Test View, execute test, set up and inspect Code Coverage

    // trims occurrences of the ‘suffix’ from ‘target’
    string TrimEnd(string target, string suffix);

Exercise: “TrimEnd” (Unit Testing)

    // CodeUnderTestProject: StringExtensions.cs
    public class StringExtensions {
        public static string TrimEnd(string target, string suffix) {
            if (target.EndsWith(suffix))
                target = target.Substring(0, target.Length - suffix.Length);
            return target;
        }
    }

    // TestProject: StringExtensionsTest.cs
    using Microsoft.VisualStudio.TestTools.UnitTesting;

    [TestClass]
    public class StringExtensionsTest {
        [TestMethod]
        public void TrimEndTest() {
            var target = "Hello World";
            var suffix = "World";
            var result = StringExtensions.TrimEnd(target, suffix);
            Assert.AreEqual("Hello ", result);
        }
    }

Parameterized Unit Testing Introduction

The Recipe of Unit Testing • Three essential ingredients: • Data • Method Sequence • Assertions

    void Add() {
        int item = 3;
        var list = new List<int>();
        list.Add(item);
        var count = list.Count;
        Assert.AreEqual(1, count);
    }

The (problem with) Data: list.Add(3); • Which value matters? • Bad choices cause redundant or, worse, incomplete test suites. • Hard-coded values get stale when the product changes. • Why pick a value if it doesn’t matter?

Parameterized Unit Testing • Parameterized Unit Test = Unit Test with Parameters • Separation of concerns • Data is generated by a tool • Developer can focus on functional specification

    void Add(List<int> list, int item) {
        var count = list.Count;
        list.Add(item);
        Assert.AreEqual(count + 1, list.Count);
    }
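The following framework-free C# sketch makes the separation of concerns concrete without any tool. The Add specification from this slide becomes an ordinary method. A hand-written, hypothetical Inputs() list takes the place of a generator such as Pex, and only that data source would change if a tool supplied the inputs.

```csharp
using System;
using System.Collections.Generic;

public static class ListAddSpec
{
    // Parameterized unit test: for ANY list and ANY item,
    // Add must increase Count by exactly one.
    public static void Add(List<int> list, int item)
    {
        var count = list.Count;
        list.Add(item);
        if (list.Count != count + 1)
            throw new Exception($"Count is {list.Count}, expected {count + 1}");
    }

    // Hypothetical hand-picked inputs; a tool would choose these instead.
    public static IEnumerable<(List<int> List, int Item)> Inputs()
    {
        yield return (new List<int>(), 0);
        yield return (new List<int> { 1, 2, 3 }, -7);
        yield return (new List<int>(capacity: 1) { 42 }, int.MaxValue);
    }

    // Checks the specification on every input; returns the number of runs.
    public static int RunAll()
    {
        var runs = 0;
        foreach (var (list, item) in Inputs())
        {
            Add(list, item);
            runs++;
        }
        return runs;
    }
}
```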

Parameterized Unit Tests are Algebraic Specifications • A Parameterized Unit Test can be read as a universally quantified, conditional axiom.

    void ReadWrite(string name, string data) {
        Assume.IsTrue(name != null && data != null);
        Write(name, data);
        var readData = Read(name);
        Assert.AreEqual(data, readData);
    }

$$\forall\, \mathit{name}, \mathit{data} : \mathtt{string}.\;\; \mathit{name} \neq \mathtt{null} \wedge \mathit{data} \neq \mathtt{null} \Rightarrow \mathrm{equals}\big(\mathrm{ReadResource}(\mathit{name}, \mathrm{WriteResource}(\mathit{name}, \mathit{data})), \mathit{data}\big)$$
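Here is a minimal C# sketch of checking this axiom for concrete inputs. It assumes a hypothetical in-memory ResourceStore whose Write/Read methods stand in for the operations on the slide; they are not a real API. Inputs that violate the precondition are skipped rather than failed, mirroring Assume.IsTrue.

```csharp
#nullable enable
using System;
using System.Collections.Generic;

// Hypothetical in-memory store standing in for Write/Read (not a real API).
public sealed class ResourceStore
{
    private readonly Dictionary<string, string> _resources = new();

    public void Write(string name, string data) => _resources[name] = data;

    public string Read(string name) => _resources[name];
}

public static class ResourceStoreSpec
{
    // The axiom, checked for one (name, data) pair:
    //   name != null && data != null  =>  Read(name) after Write(name, data) == data
    // Returns false when the precondition does not hold: such inputs are
    // outside the axiom and are skipped, not reported as failures.
    public static bool ReadWrite(ResourceStore store, string? name, string? data)
    {
        if (name == null || data == null)
            return false;
        store.Write(name, data);
        var readData = store.Read(name);
        if (readData != data)
            throw new Exception($"Read returned \"{readData}\", expected \"{data}\"");
        return true;
    }
}
```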

Parameterized Unit Testing is going mainstream • Parameterized Unit Tests (PUTs) are commonly supported by various test frameworks • .NET: Supported by .NET test frameworks • http://www.mbunit.com/ • http://www.nunit.org/ • … • Java: Supported by JUnit 4.X • http://www.junit.org/ • Generating test inputs for PUTs is supported by tools • .NET: Supported by Microsoft Research Pex • http://research.microsoft.com/Pex/ • Java: Supported by Agitar AgitarOne • http://www.agitar.com/

Exercise: “TrimEnd” (Manual Parameterized Unit Testing) Goal: Create a parameterized unit test for TrimEnd. • Refactor: Extract Method

    [TestMethod]
    public void TrimEndTest() {
        var target = "Hello World";
        var suffix = "World";
        var result = ParameterizedTest(target, suffix);
        Assert.AreEqual("Hello ", result);
    }

    static string ParameterizedTest(string target, string suffix) {
        var result = StringExtensions.TrimEnd(target, suffix);
        return result;
    }
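After the extract-method refactoring, a natural next step is to move the assertion into the parameterized method. That replaces the single hard-coded expected value with a property meant to hold for all inputs. The C# sketch below shows one possible choice, not the tutorial's solution. It copies TrimEnd unchanged, and its oracle encodes the current behaviour of removing at most one suffix. The oracle compares ordinally, while TrimEnd calls the culture-sensitive EndsWith(string), so the two may disagree on some unusual strings. That is exactly the kind of input a tool could search for.

```csharp
using System;

public static class StringExtensions
{
    // Copied unchanged from the exercise so this sketch is self-contained.
    public static string TrimEnd(string target, string suffix)
    {
        if (target.EndsWith(suffix))
            target = target.Substring(0, target.Length - suffix.Length);
        return target;
    }
}

public static class TrimEndSpec
{
    // Parameterized test with a general oracle instead of one expected value:
    // if target ends with suffix (ordinal), exactly that suffix is removed;
    // otherwise target is returned unchanged.
    public static string ParameterizedTest(string target, string suffix)
    {
        var result = StringExtensions.TrimEnd(target, suffix);
        var expected = target.EndsWith(suffix, StringComparison.Ordinal)
            ? target.Substring(0, target.Length - suffix.Length)
            : target;
        if (result != expected)
            throw new Exception($"TrimEnd(\"{target}\", \"{suffix}\") returned \"{result}\", expected \"{expected}\"");
        return result;
    }
}
```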

Data Generation Challenge Goal: Given a program with a set of input parameters, automatically generate a set of input values that, upon execution, will exercise as many statements as possible. Observations: • Reachability is not decidable, but approximations are often good enough • Functional correctness checks are encoded as assertions that reach an error statement on failure

Assumptions and Assertions

    // Conceptually (simplified):
    public static class PexAssume {
        public static void IsTrue(bool c) {
            if (!c) throw new AssumptionViolationException();
        }
    }
    public static class PexAssert {
        public static void IsTrue(bool c) {
            if (!c) throw new AssertionViolationException();
        }
    }

• Assumptions and assertions induce branches • Executions that cause assumption violations are ignored: they are not reported as errors or as test cases
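The filtering rule fits in a few lines of C#. Assume, Assert and Driver below are hypothetical stand-ins for illustration, not the Pex API. The driver runs a one-argument PUT on each input and silently drops runs that throw AssumptionViolationException. Assertion violations count as failures.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-ins; the real PexAssume/PexAssert live in Microsoft.Pex.Framework.
public class AssumptionViolationException : Exception { }
public class AssertionViolationException : Exception { }

public static class Assume
{
    public static void IsTrue(bool c) { if (!c) throw new AssumptionViolationException(); }
}

public static class Assert
{
    public static void IsTrue(bool c) { if (!c) throw new AssertionViolationException(); }
}

public static class Driver
{
    // Runs a one-argument PUT on each input. Runs that violate an assumption
    // are dropped; every other run is a test case, and assertion violations
    // are counted as failures. Other exceptions propagate, since this sketch
    // has no exception annotations.
    public static (int Tests, int Failures) Run<T>(Action<T> put, IEnumerable<T> inputs)
    {
        int tests = 0, failures = 0;
        foreach (var input in inputs)
        {
            try
            {
                put(input);
                tests++;
            }
            catch (AssumptionViolationException)
            {
                // Ignored: neither an error nor a test case.
            }
            catch (AssertionViolationException)
            {
                tests++;
                failures++;
            }
        }
        return (tests, failures);
    }
}
```

Take a PUT that assumes x >= 0 and asserts x / 2 <= x, run on the inputs -5, 0 and 7. It yields two test cases and no failures, because the run with -5 is dropped.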

Data Generation • Human • Expensive, incomplete, … • Brute force • Pairwise, predefined data, etc. • Semi-random: QuickCheck • Cheap, fast • “It passed a thousand tests” feeling • Dynamic Symbolic Execution: Pex, CUTE, EXE • Automated white-box • Not random: constraint solving
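The C# sketch below shows why semi-random generation struggles with code like the CoverMe example. It feeds semi-random arrays to a copy of CoverMe's branch structure and counts which path each input reaches. The null rate, length bound and seed are arbitrary choices for this sketch. A non-empty array reaches the "bug" branch only if a[0] equals one specific 32-bit value, so even 100,000 trials are expected to hit it essentially never. Constraint solving, by contrast, derives such a value directly.

```csharp
#nullable enable
using System;

public static class RandomCoverMe
{
    // CoverMe's branch structure, returning the path taken instead of
    // throwing: 0 = null, 1 = empty array, 2 = a[0] != 1234567890, 3 = "bug".
    public static int CoverMePath(int[]? a)
    {
        if (a == null) return 0;
        if (a.Length > 0)
            return a[0] == 1234567890 ? 3 : 2;
        return 1;
    }

    // Semi-random generation: roughly 10% nulls, lengths 0..3, and
    // uniformly random element values. Returns the number of hits per path.
    public static int[] Histogram(int trials, int seed)
    {
        var rng = new Random(seed);
        var hits = new int[4];
        for (var i = 0; i < trials; i++)
        {
            int[]? a = null;
            if (rng.Next(10) != 0)
            {
                a = new int[rng.Next(0, 4)];
                for (var j = 0; j < a.Length; j++)
                    a[j] = rng.Next(int.MinValue, int.MaxValue);
            }
            hits[CoverMePath(a)]++;
        }
        return hits;
    }
}
```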

Dynamic Symbolic Execution • Loop: choose next path → solve → execute & monitor • Code to generate inputs for:

    void CoverMe(int[] a) {
        if (a == null) return;
        if (a.Length > 0)
            if (a[0] == 1234567890)
                throw new Exception("bug");
    }

Each iteration negates a condition of an already observed path (a==null, a.Length>0, a[0]==123…), solves the resulting constraints, and runs CoverMe on the data:

| Constraints to solve | Data | Observed constraints |
|---|---|---|
| (none) | null | a==null |
| a!=null | {} | a!=null && !(a.Length>0) |
| a!=null && a.Length>0 | {0} | a!=null && a.Length>0 && a[0]!=1234567890 |
| a!=null && a.Length>0 && a[0]==1234567890 | {123…} | a!=null && a.Length>0 && a[0]==1234567890 |

Done: There is no path left.
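The loop in the table can be imitated by a small, self-contained C# toy, under simplifying assumptions. CoverMe is instrumented by hand through the hypothetical Branch calls. Solve is a hard-coded stand-in that only understands CoverMe's three conditions, whereas Pex instruments IL automatically and asks Z3. The toy starts from no constraints and negates each branch of every newly observed path. It regenerates the inputs from the table, in the same order: null, {}, {0} and {1234567890}.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Linq;

// The three branch conditions of CoverMe; a literal is a condition plus its outcome.
public enum Cond { IsNull, LengthPositive, FirstIsMagic }
public record Literal(Cond Condition, bool Value);

public static class ToyDse
{
    const int Magic = 1234567890;

    // CoverMe, instrumented by hand: each branch reports (condition, outcome).
    static void CoverMe(int[]? a, List<Literal> path)
    {
        bool Branch(Cond c, bool v) { path.Add(new Literal(c, v)); return v; }

        if (Branch(Cond.IsNull, a == null)) return;
        var arr = a!; // not null: the branch above returned otherwise
        if (Branch(Cond.LengthPositive, arr.Length > 0))
            if (Branch(Cond.FirstIsMagic, arr[0] == Magic))
                throw new Exception("bug");
    }

    // Stand-in for the constraint solver, limited to the three conditions
    // above. Unconstrained choices default to null, empty, or 0.
    static int[]? Solve(IReadOnlyList<Literal> constraints)
    {
        bool Wants(Cond c, bool v) => constraints.Contains(new Literal(c, v));
        if (!Wants(Cond.IsNull, false)) return null;
        if (!Wants(Cond.LengthPositive, true)) return Array.Empty<int>();
        return new[] { Wants(Cond.FirstIsMagic, true) ? Magic : 0 };
    }

    // Solve, execute and monitor, then negate each branch of a new path to
    // choose the next paths. Returns the generated inputs in order.
    public static List<int[]?> Explore()
    {
        var inputs = new List<int[]?>();
        var seenPaths = new HashSet<string>();
        var work = new Queue<List<Literal>>();
        work.Enqueue(new List<Literal>()); // no constraints yet
        while (work.Count > 0)
        {
            var input = Solve(work.Dequeue());
            var path = new List<Literal>();
            try { CoverMe(input, path); }
            catch (Exception) { /* the "bug" path was reached */ }
            if (!seenPaths.Add(string.Join(" && ", path))) continue;
            inputs.Add(input);
            for (var i = 0; i < path.Count; i++)
            {
                var next = path.Take(i).ToList();
                next.Add(path[i] with { Value = !path[i].Value });
                work.Enqueue(next);
            }
        }
        return inputs;
    }
}
```

This naive worklist re-solves some constraints whose paths are already covered and discards them afterwards. A real tool would avoid that redundant work.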

Exercise: “CoverMe” (Dynamic Symbolic Execution with Pex) • Open the CoverMe project in Visual Studio. • Right-click on the CoverMe method, and select “Run Pex”. • Inspect the results in the table.

    public static void CoverMe(int[] a) {
        if (a == null) return;
        if (a.Length > 0)
            if (a[0] == 1234567890)
                throw new Exception("bug");
    }

Motivation: Unit Testing Hell (ResourceReader) • How to test this code? (Actual code from the .NET base class libraries)

Exercise: “ResourceReaderTest” (ResourceReader)

    using System;
    using System.IO;
    using System.Resources;
    using Microsoft.Pex.Framework;
    using Microsoft.Pex.Framework.Validation;
    using Microsoft.VisualStudio.TestTools.UnitTesting;

    [PexClass, TestClass]
    [PexAllowedException(typeof(ArgumentNullException))]
    [PexAllowedException(typeof(ArgumentException))]
    [PexAllowedException(typeof(FormatException))]
    [PexAllowedException(typeof(BadImageFormatException))]
    [PexAllowedException(typeof(IOException))]
    [PexAllowedException(typeof(NotSupportedException))]
    public partial class ResourceReaderTest {
        [PexMethod]
        public unsafe void ReadEntries(byte[] data) { // requires a project that allows unsafe code
            PexAssume.IsTrue(data != null);
            fixed (byte* p = data)
            using (var stream = new UnmanagedMemoryStream(p, data.Length)) {
                var reader = new ResourceReader(stream);
                foreach (var entry in reader) { /* reading entries */ }
            }
        }
    }

When does a test case fail? • If the test does not throw an exception, it succeeds. • If the test throws an exception, • assumption violations are filtered out, • assertion violations are failures, • for all other exceptions, it depends on further annotations. • Annotations • Short form of common try-catch-assert test code • [PexAllowedException(typeof(T))] • [PexExpectedException(typeof(T))]
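These rules can be summarised as a small C# classifier. The allowed and expected parameters play the role of the two annotations, and the exception types are hypothetical stand-ins. Two further points are assumptions of this sketch rather than documented Pex behaviour: exception types must match exactly, and an expected exception that is not thrown counts as a failure.

```csharp
#nullable enable
using System;

// Hypothetical stand-ins for the exceptions raised on violations.
public class AssumptionViolationException : Exception { }
public class AssertionViolationException : Exception { }

public enum Outcome { Ignored, Passed, Failed }

public static class OutcomeRules
{
    // `allowed` ~ [PexAllowedException(typeof(T))],
    // `expected` ~ [PexExpectedException(typeof(T))].
    public static Outcome Classify(Action test, Type[] allowed, Type? expected = null)
    {
        try
        {
            test();
        }
        catch (AssumptionViolationException)
        {
            return Outcome.Ignored;   // filtered out, not a test case
        }
        catch (AssertionViolationException)
        {
            return Outcome.Failed;    // assertion violations are failures
        }
        catch (Exception e)
        {
            var type = e.GetType();
            if (type == expected || Array.IndexOf(allowed, type) >= 0)
                return Outcome.Passed;
            return Outcome.Failed;    // exception not covered by an annotation
        }
        return expected == null ? Outcome.Passed : Outcome.Failed;
    }
}
```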

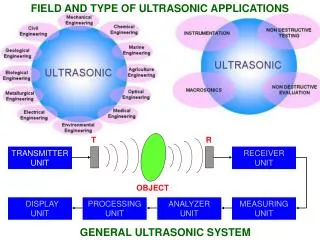

Dynamic Symbolic Execution: Pex Architecture • A brief overview

Code Instrumentation • Instrumentation framework “Extended Reflection” • Instruments .NET code at runtime • Precise data flow and control flow analysis [Diagram: Pex, Code Under Test, Z3, ExtendedReflection]

Dynamic Symbolic Execution • Pex listens to monitoring callbacks • Symbolic execution along concrete execution

Constraint Solving • Constraint solving with SMT solver Z3 • Computes concrete test inputs

Test Generation • Execute with test inputs from constraint solver • Emit test as source code if it increases coverage

Components • ExtendedReflection: Code Instrumentation • Z3: SMT constraint solver • Pex: Dynamic Symbolic Execution

Code Instrumentation by Example

Source:

    class Point {
        int X;
        static int GetX(Point p) {
            if (p != null) return p.X;
            else return -1;
        }
    }

Instrumented IL (the real C# compiler output is actually more complicated):

    ldtoken Point::GetX
    call __Monitor::EnterMethod
    brfalse L0
    ldarg.0
    call __Monitor::Argument<Point>
    L0: .try {
      .try {
        call __Monitor::LDARG_0
        ldarg.0
        call __Monitor::LDNULL
        ldnull
        call __Monitor::CEQ
        ceq
        call __Monitor::BRTRUE
        brtrue L1
        call __Monitor::BranchFallthrough
        call __Monitor::LDARG_0
        ldarg.0
        …
        ldtoken Point::X
        call __Monitor::LDFLD_REFERENCE
        ldfld Point::X
        call __Monitor::AtDereferenceFallthrough
        br L2
    L1: call __Monitor::AtBranchTarget
        call __Monitor::LDC_I4_M1
        ldc.i4.m1
    L2: call __Monitor::RET
        stloc.0
        leave L4
      } catch NullReferenceException {
        call __Monitor::AtNullReferenceException
        rethrow
      }
    L4: leave L5
    } finally {
      call __Monitor::LeaveMethod
      endfinally
    }
    L5: ldloc.0
    ret

• Prologue (EnterMethod, Argument): records concrete values, so that all information is available when this method is called with no proper context • The calls before ldarg.0, ldnull and ceq perform the symbolic computation (p, null, p == null) • The calls at the branch (BRTRUE, BranchFallthrough, AtBranchTarget) and at the field dereference build the path condition • Epilogue (LeaveMethod)
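At source level, the effect of the instrumentation on GetX can be pictured with the following C# sketch. PathMonitor is a hypothetical, greatly simplified stand-in for __Monitor that only records the decision at the explicit if. The real callbacks also track every value symbolically and cover the implicit null check of the field access. For brevity, X is a public field and GetX lives in a separate class here.

```csharp
#nullable enable
using System.Collections.Generic;

public class Point
{
    public int X;
}

// Hypothetical, greatly simplified stand-in for the __Monitor callbacks:
// it records the outcome of each explicit branch (the path condition).
public static class PathMonitor
{
    public static readonly List<string> PathCondition = new();

    // Records the branch outcome and returns it unchanged, so the
    // instrumented code keeps its original control flow.
    public static bool Branch(string condition, bool taken)
    {
        PathCondition.Add(taken ? condition : $"!({condition})");
        return taken;
    }
}

public static class InstrumentedPoint
{
    // GetX with a monitoring callback around its only explicit branch.
    public static int GetX(Point? p)
    {
        if (PathMonitor.Branch("p != null", p != null))
            return p!.X; // p is non-null on this branch
        else
            return -1;
    }
}
```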

Symbolic State Representation • Pex’s representation of symbolic values and state is similar to the ones used to build verification conditions in ESC/Java, Spec#, … • Terms for • Primitive types (integers, floats, …), constants, expressions • Struct types by tuples • Instance fields of classes by mutable “mapping of references to values” • Elements of arrays, memory accessed through unsafe pointers by mutable “mapping of integers to values” • Efficiency by • Many reduction rules, including reduction of ground terms to constants • Sharing of syntactically equal sub-terms • BDDs over if-then-else terms to represent logical operations • Patricia trees to represent AC1 operators (including parallel array updates)
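Here is a minimal C# sketch of two of the efficiency techniques listed above, limited to integer constants, variables and addition. A factory reduces ground terms to constants. It also shares syntactically equal sub-terms by interning them (hash-consing), relying on the structural equality of C# records. This is an illustration only, not Pex's implementation.

```csharp
using System.Collections.Generic;

// Symbolic terms as immutable records (structural equality for free).
public abstract record Term { }
public sealed record Const(long Value) : Term;
public sealed record Var(string Name) : Term;
public sealed record Add(Term Left, Term Right) : Term;

public sealed class TermFactory
{
    // Maps each term to its unique shared instance (hash-consing).
    private readonly Dictionary<Term, Term> _table = new();

    private Term Intern(Term t)
    {
        if (_table.TryGetValue(t, out var shared)) return shared;
        _table[t] = t;
        return t;
    }

    public Term Constant(long value) => Intern(new Const(value));
    public Term Variable(string name) => Intern(new Var(name));

    public Term Plus(Term a, Term b)
    {
        // Reduction of ground terms: c1 + c2 becomes a single constant.
        if (a is Const x && b is Const y) return Constant(x.Value + y.Value);
        // Another simple reduction rule: t + 0 = 0 + t = t.
        if (b is Const { Value: 0 }) return a;
        if (a is Const { Value: 0 }) return b;
        return Intern(new Add(a, b));
    }

    // Number of distinct terms created so far.
    public int DistinctTerms => _table.Count;
}
```

Building x + (2 + 3) and later x + 5 with the same factory therefore returns the very same object.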

Constraint Solving: Z3 • SMT solver (“Satisfiability Modulo Theories”) • Decides first-order logical formulas with respect to theories • SAT solver for Boolean structure • Decision procedures for relevant theories: uninterpreted functions with equalities, linear integer arithmetic, bitvector arithmetic, arrays, tuples • Model generation for satisfiable formulas • Models used as test inputs • Limitations • No decision procedure for floating point arithmetic • http://research.microsoft.com/z3
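To illustrate what the solver receives and returns, here is the path condition for reaching the "bug" branch of CoverMe, together with one possible model. Modelling the array with a length function len and the array-theory select over its elements is an assumption of this sketch, not necessarily Pex's exact encoding.

```latex
\[
\begin{aligned}
\varphi \;&\equiv\; a \neq \mathit{null} \;\wedge\; \mathit{len}(a) > 0 \;\wedge\; \mathit{select}(\mathit{elems}(a), 0) = 1234567890\\
M \;&=\; \{\, a \mapsto r,\;\; \mathit{len}(r) = 1,\;\; \mathit{select}(\mathit{elems}(r), 0) = 1234567890 \,\}, \qquad M \models \varphi
\end{aligned}
\]
```

Such a model corresponds to the concrete test input new int[] { 1234567890 }. Any length greater than 0 would also satisfy the formula.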

Pex in Visual Studio (Parameterized Unit Testing)

Test Generation Workflow [Diagram: Code-Under-Test Project, Test Project with Parameterized Unit Tests, Pex, Generated Tests]

Creating a (Parameterized) Test Project • Right-click on the code-under-test project in the Solution Explorer • Select “Pex” • Select “Create Parameterized Unit Test Stubs”

(Parameterized) Test Project • The generated test project references Microsoft.Pex.Framework (and more) • References VisualStudioUnitTestFramework • Contains source files with generated parameterized unit test stubs

Running Pex from the Editor • Right-click on a parameterized unit test • For now, it just calls the implementation, but you should edit it, e.g. to add assertions • Select “Run Pex Explorations”

Exploration Result View • Current parameterized unit test • Exploration status • Issue bar: important messages here! • Input/output table: each row is a generated test; each column is a parameterized test input or output

Input/Output Table • Test outcome filtering • Failing test • New test • Fix available • Passing test

Input/Output Table • Exception stack trace • Review test • Allow exception

Pex Explorer Pex Explorer makes it easy to digest the results of many Parameterized Unit Tests, and many generated test inputs

Pex from the Command Line • pex.exe

HTML Reports • Rich information, used by Pex developers • Full event log history • Parameter table • Code coverage • etc. • HTML is rendered from an XML report file: it is easy to extract information programmatically!

Annotations: Code Under Test • Tell Pex which type you are testing • Code coverage • Exception filtering • Search prioritization

    [PexClass(typeof(Foo))]
    public class FooTest { …

Code Coverage Reports • 3 domains • UserCodeUnderTest, e.g. public void CodeUnderTest() { … } • UserOrTestCode, e.g. [PexClass] public class FooTest { [PexMethod] public void ParamTest(…) { … • SystemCode, e.g. public class String { public bool Contains(string value) { …

Command Line: Filters • Namespace, type, method filters • pex.exe test.dll /tf:Foo /mf:Bar • Partial match, case-insensitive • Full match: add “!”: /tf:Foo! • Prefix: add “*”: /tf:Foo* • Many other filters • e.g. to select a “suite”: /sf=checkin • For more information: pex.exe help filtering
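The three matching forms can be expressed as a C# predicate. This is an assumed reading of the rules above, not Pex's code. Applying case-insensitivity (OrdinalIgnoreCase) to the full-match and prefix forms as well is also an assumption.

```csharp
using System;

public static class NameFilter
{
    // Assumed reading of the filter rules (not Pex's code):
    //   "Foo"  -> name contains "Foo"      (partial match)
    //   "Foo!" -> name equals "Foo"        (full match)
    //   "Foo*" -> name starts with "Foo"   (prefix match)
    // All forms ignore case; for "!" and "*" that is an assumption.
    public static bool Matches(string filter, string name)
    {
        const StringComparison IgnoreCase = StringComparison.OrdinalIgnoreCase;
        if (filter.EndsWith('!'))
            return string.Equals(name, filter[..^1], IgnoreCase);
        if (filter.EndsWith('*'))
            return name.StartsWith(filter[..^1], IgnoreCase);
        return name.Contains(filter, IgnoreCase);
    }
}
```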

Exercise: Data Generation Goal: Explore input generation techniques with TrimEnd • Write or discuss a random input generator for TrimEnd • Adapt your parameterized unit tests for Pex • Start over with “Create Parameterized Unit Test Stubs”, or… • Add a reference to Microsoft.Pex.Framework • Add using Microsoft.Pex.Framework; • Add [PexClass(typeof(StringExtensions))] on StringExtensionsTest • Add [PexMethod] on your parameterized tests • Right-click in the test and select “Run Pex Explorations” • Compare test suites
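For the first bullet, one possible random input generator for TrimEnd is sketched below in C#. The two-letter alphabet and the length bounds are arbitrary choices that make suffix matches frequent. With a full character range, a non-empty random suffix would almost never match. TrimEnd is copied unchanged from the exercise. The oracle compares ordinally, which is safe here because the alphabet is plain ASCII.

```csharp
using System;
using System.Collections.Generic;

public static class StringExtensions
{
    // Copied unchanged from the exercise.
    public static string TrimEnd(string target, string suffix)
    {
        if (target.EndsWith(suffix))
            target = target.Substring(0, target.Length - suffix.Length);
        return target;
    }
}

public static class RandomTrimEndInputs
{
    // Random string over a two-letter alphabet, length 0..maxLength.
    static string RandomString(Random rng, int maxLength)
    {
        const string alphabet = "ab";
        var chars = new char[rng.Next(maxLength + 1)];
        for (var i = 0; i < chars.Length; i++)
            chars[i] = alphabet[rng.Next(alphabet.Length)];
        return new string(chars);
    }

    // Yields `count` random (target, suffix) pairs; a fixed seed makes runs repeatable.
    public static IEnumerable<(string Target, string Suffix)> Generate(int count, int seed)
    {
        var rng = new Random(seed);
        for (var i = 0; i < count; i++)
            yield return (RandomString(rng, 6), RandomString(rng, 3));
    }

    // Checks TrimEnd on each pair and counts which branch each pair took.
    public static (int Trimmed, int Unchanged) Run(int count, int seed)
    {
        int trimmed = 0, unchanged = 0;
        foreach (var (target, suffix) in Generate(count, seed))
        {
            var result = StringExtensions.TrimEnd(target, suffix);
            if (target.EndsWith(suffix, StringComparison.Ordinal))
            {
                if (result + suffix != target)
                    throw new Exception($"TrimEnd(\"{target}\", \"{suffix}\") returned \"{result}\"");
                trimmed++;
            }
            else
            {
                if (result != target)
                    throw new Exception($"TrimEnd(\"{target}\", \"{suffix}\") returned \"{result}\"");
                unchanged++;
            }
        }
        return (trimmed, unchanged);
    }
}
```

Comparing the branch counts from this generator with the test suite Pex produces is one way to approach the last bullet, "Compare test suites".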